Internet users across South Asia, the Gulf and parts of the Middle East endured slow, jittery connections and higher cloud latency after multiple undersea fiber‑optic cables in the Red Sea were reported cut, forcing major carriers and cloud operators to reroute traffic, rebalance capacity and warn customers that degraded performance could persist while repairs and diagnostics continue.

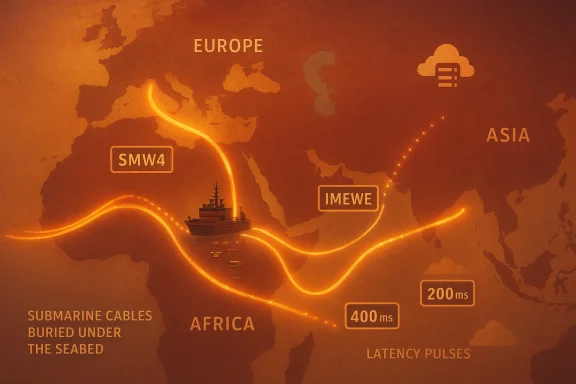

Source: AOL.com https://www.aol.com/internet-disrupted-across-asia-middle-095432371.html

Background / Overview

The modern global Internet depends on a dense, physical web of submarine fiber‑optic cables laid across ocean floors. These cables, not satellites or wireless links, carry roughly 95% of intercontinental data: everything from stock trades and banking transactions to streaming video and cloud backups. A handful of maritime corridors concentrate enormous east‑west capacity, and one of the most critical of those corridors runs through the Red Sea and the approaches to the Suez. Damage in that narrow corridor translates into measurable latency and reachability problems across continents.

On 6 September 2025, monitoring systems and operator telemetry flagged anomalous routing and degraded throughput beginning around 05:45 UTC. Microsoft’s Azure service health advisory confirmed that traffic traversing the Middle East and connecting Asia, Europe and the Gulf could experience increased latency as traffic was rerouted away from the damaged paths. Independent network monitors, including NetBlocks, attributed the incident to failures on the SEA‑ME‑WE‑4 (SMW4) and IMEWE systems near Jeddah, Saudi Arabia. Several regional ISPs and national carriers subsequently warned customers to expect slower performance during peak hours while alternate capacity was provisioned.

What happened: timeline and immediate impact

Early detection and public advisories

- 05:45 UTC, 6 September 2025 — Monitoring systems began flagging BGP route changes, higher round‑trip times and packet loss patterns consistent with physical cable faults. Microsoft posted a Service Health advisory shortly after, warning of increased latency for traffic traversing the Middle East corridor.

- NetBlocks and other internet observatories reported measurable degradations in connectivity across India, Pakistan, the United Arab Emirates and neighboring countries. Those reports identified degraded segments on the SMW4 and IMEWE systems near Jeddah.

- Regional carriers and national telecom firms publicly warned subscribers and said they were coordinating with international partners to secure alternative bandwidth, lease transit, and prepare for ship‑borne cable repair operations. Pakistan Telecom explicitly warned of degraded service during peak hours while alternate capacity was arranged.

Scope and measurable effects

The disruption was not a wholesale national blackout in most places; rather, it manifested as higher latency, intermittent access, slow websites, longer API response times and degraded performance for latency‑sensitive services (VoIP, financial trading, live video). Cloud customers, most visibly some Microsoft Azure customers, observed elevated RTTs and timeouts for cross‑region traffic routed through the affected corridor. Because large cloud providers rely on global backbone diversity and on interconnect relationships, the event primarily translated into performance hits rather than complete service loss for most major cloud platforms.

The submarine systems involved: SMW4 and IMEWE

Why these cables matter

SEA‑ME‑WE‑4 (SMW4) and India‑Middle East‑Western Europe (IMEWE) are legacy but high‑capacity systems that bridge Europe, the Middle East and South/Southeast Asia. They are among several systems carrying convergent traffic through the Red Sea corridor. A failure or cut on these systems forces traffic onto longer, often more congested detours around Africa (e.g., Cape of Good Hope routes) or through alternative eastern routes, increasing latency and consuming spare capacity on other links.

Physical geography makes the Red Sea a chokepoint

The Red Sea narrows and channels both maritime traffic and subsea cables through a constrained geography. The approaches to the Suez, Bab al‑Mandeb and adjacent Saudi and Egyptian coasts concentrate cable landings and crossings, so a clustered failure can affect multiple systems simultaneously. This physical concentration is why an incident near Jeddah rippled across multiple underwater systems and produced wide‑area impacts.

Repair logistics: why undersea cuts take time

Repairing buried or broken subsea fiber is complex, expensive and slow. Cable owners must:

- Diagnose exact fault locations using optical time‑domain reflectometry (OTDR) and BGP/telemetry signals.

- Mobilize a cable repair ship equipped with ROVs (remotely operated vehicles) or grapnels, depending on depth and bottom conditions.

- Recover the cable, splice in new fiber segments and retest. This often takes days to weeks, and longer when multiple cuts are involved or when work is complicated by weather, security risks or port permissions.
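The time‑to‑distance arithmetic behind the first step can be sketched in a few lines. This is a minimal illustration, assuming a single unamplified fiber span and a group index of about 1.468 (a typical figure for standard single‑mode fiber, not a value from any of the affected systems). Long repeatered subsea systems generally rely on specialized coherent OTDR equipment, but the underlying conversion from echo delay to distance follows the same idea. The function name `distance_to_fault_km` and the sample echo delay are hypothetical.

```python
# Minimal sketch: convert an OTDR echo delay into distance along the fiber.
# Assumed (not from the article): group index ~1.468, single unamplified span.

SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # vacuum speed of light


def distance_to_fault_km(echo_delay_s: float, group_index: float = 1.468) -> float:
    """Return the fiber distance from the test point to a reflective event.

    The light pulse travels out to the fault and back, so the one-way
    distance is half of (speed of light in fiber * measured delay).
    """
    if echo_delay_s < 0:
        raise ValueError("echo delay must be non-negative")
    speed_in_fiber_km_per_s = SPEED_OF_LIGHT_KM_PER_S / group_index
    return speed_in_fiber_km_per_s * echo_delay_s / 2


if __name__ == "__main__":
    # Hypothetical reading: a reflection arriving 2.45 ms after the launch pulse.
    km = distance_to_fault_km(2.45e-3)
    print(f"Fault is roughly {km:.1f} km of fiber from the test point")
```

With the hypothetical 2.45 ms echo, the sketch places the fault roughly 250 km of fiber from the test point. Because cables are laid with slack along the seabed, that fiber distance still has to be mapped onto the as‑laid route before a repair ship can be sent to a position.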

Cause: accident, sabotage, or attrition?

The immediate question for operators, national authorities and governments is how the cuts occurred. There are three broad explanations, each with different operational and policy implications.

1) Accidental damage (shipping, anchors, trawling)

Commercial shipping and anchoring are historically the most common causes of subsea cable faults, especially in shallower, busy sea lanes. Heavy anchors or dragging trawl nets can damage cables. Several technical reports from past incidents conclude that a significant fraction of cable faults are attributable to maritime activity rather than deliberate attacks. Recent reporting after this incident suggested commercial ships could plausibly have caused some of the damage, and some analysts urged caution before attributing blame.

2) Deliberate sabotage (state or non‑state actors)

Given the intensifying regional conflict dynamics in the Red Sea and surrounding littoral states, deliberate targeting cannot be dismissed. In 2024 and 2025, political actors publicly discussed the strategic leverage of undersea cables, and some commentators and officials warned that militant or state proxies could target cables to apply pressure. Houthi rebels in Yemen have been accused in the past of maritime harassment; they have denied responsibility for attacks on cables. As of the initial reporting of the September 2025 incident, operators and authorities had not published conclusive attribution. Attributing undersea damage requires careful technical and forensic work, and public allegations without corroboration should be treated cautiously.

3) Natural or equipment failure

Although rarer, aging cable infrastructure, internal faults, or damage from seismic events can break fiber pairs. These possibilities are considered during the initial diagnostics; OTDR signatures and route stability patterns help engineers distinguish external mechanical damage from internal equipment failure.

Bottom line: multiple credible hypotheses exist, and early public statements did not present definitive technical attribution. Analysts and governments will want a full repair‑log and OTDR trace analysis to reach confident conclusions.

Who was affected — geography and services

Countries and regions with noticeable effects

- India and Pakistan: Users and ISPs reported slower service and degraded international reachability during peak hours. Pakistan Telecom warned customers of expected degradation while alternate bandwidth was found.

- United Arab Emirates and the Gulf: Major operators experienced congested routes and intermittent slowdowns, particularly for Asia‑Europe transit.

- Parts of Europe and East Africa: Indirectly affected as rerouted traffic increased load on other Atlantic and African corridors, producing higher latency for certain cross‑region flows.

Services hit hardest

- Cloud services (cross‑region replication, APIs): Customers using Azure (and, to varying degrees, other cloud providers whose traffic transited the affected corridor) observed higher latency and occasional timeouts.

- Real‑time communications: VoIP, video conferencing, and live streaming saw increased jitter and packet drops.

- Financial services: Low‑latency trading and some interbank messages can be sensitive to even modest increases in RTT; operators advised traders to prepare for intermittent slowness on affected routes.

- Consumer web and streaming: End users noticed slower page loads and buffering, especially for international content served across the affected corridor.

Cloud resilience and what this incident exposes

This episode was a practical reminder that cloud providers’ layered redundancy does not make the underlying physical network irrelevant.

Strengths demonstrated

- Traffic engineering and rapid rerouting: Major cloud providers and transit carriers can reroute flows within minutes to hours, preserving reachability for most customers despite physical faults. Microsoft’s ability to rebalance and route traffic away from the Red Sea corridor prevented more serious outages.

- Monitoring and observability: BGP telemetry, active probes and third‑party monitors like NetBlocks helped produce situational awareness quickly, enabling operational decisions.

Fragilities exposed

- Geographic concentration: Too much capacity still runs through the same narrow corridors. That concentration creates single points of failure that can translate into systemic slowdowns when multiple systems in the same corridor fail at once.

- Dependency asymmetry: Not all countries, ISPs or enterprises enjoy the same routing diversity; regional operators that rely heavily on a small set of transit paths are particularly exposed.

- Repair bottlenecks: Cable repair capacity (repair ships, specialized crews) and geopolitical constraints (port permissions, security) can extend outage windows and complicate recovery planning.

Practical recommendations for enterprises and network operators

For cloud customers and IT teams

- Assume network variability: Design applications to tolerate latency spikes — use retries with exponential backoff, idempotent APIs, and client‑side timeouts suitable for degraded conditions.

- Multi‑region and multi‑cloud strategy: Where business impact is high, combine multi‑region replication with multi‑cloud diversity so a single corridor failure does not degrade all copies of critical services.

- Use CDN and edge caching: Cache static assets and take advantage of edge services to reduce cross‑region round trips during transit disruptions.

- Observe and alert on RTT, packet loss and BGP changes: Integrate network observability into SRE runbooks and test failover procedures regularly.
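The first recommendation in this list (retries with exponential backoff around idempotent calls) can be sketched as a small wrapper. This is a hedged example rather than a prescribed implementation: the helper `call_with_retries`, its default limits, and the choice of "full jitter" (a random wait up to a capped exponential bound) are illustrative assumptions. It should only wrap idempotent calls, and the wrapped call should carry its own client‑side timeout so a stalled connection surfaces as an exception the wrapper can retry.

```python
from __future__ import annotations

import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RetriesExhausted(Exception):
    """Raised when every attempt failed; the last error is chained as __cause__."""


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 0.2,
    max_delay_s: float = 10.0,
    retry_on: tuple[type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `operation`, retrying transient failures with capped exponential backoff.

    Each wait is drawn uniformly from [0, min(max_delay_s, base_delay_s * 2**attempt)]
    ("full jitter"), so many clients recovering at once do not retry in lockstep.
    Exceptions not listed in `retry_on` propagate immediately.
    Only use this for idempotent operations.
    """
    last_error: BaseException | None = None
    for attempt in range(max_attempts):
        try:
            return operation()
        except retry_on as exc:
            last_error = exc
            if attempt == max_attempts - 1:
                break  # no point sleeping after the final attempt
            backoff_cap = min(max_delay_s, base_delay_s * (2 ** attempt))
            sleep(random.uniform(0, backoff_cap))
    raise RetriesExhausted(f"gave up after {max_attempts} attempts") from last_error


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky_cross_region_call() -> str:
        # Hypothetical stand-in for an API call over a congested detour:
        # times out twice, then succeeds.
        calls["n"] += 1
        if calls["n"] < 3:
            raise TimeoutError("simulated slow response")
        return "ok"

    print(call_with_retries(flaky_cross_region_call, sleep=lambda s: None))
```

To wrap a real HTTP call, pass the exception types your client raises for timeouts and connection failures through `retry_on`. The injectable `sleep` parameter exists mainly so the backoff behavior can be tested without actually waiting.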

For carriers and ISPs

- Increase route diversity and strategic peering: Negotiate alternative transit and peer directly where possible to avoid single‑corridor reliance.

- Capacity contingency planning: Arrange standby transit agreements and cloud caching relationships to smooth short‑term congestion.

- Public communications: Timely, transparent advisories help manage customer expectations and reduce false attribution or panic. Pakistan Telecom’s statement during the incident is an example of clear messaging that mitigates user confusion.

For policymakers and national security planners

- Prioritize strategic redundancy: Encourage or fund additional landings and alternative routes to reduce concentration risk, including west‑to‑east land routes and diversified submarine paths.

- Coordinate cable protection measures: Improve maritime enforcement, establish no‑anchoring zones near cable landings and invest in coastal surveillance to detect suspicious activity.

- Plan for cooperative repair logistics: Streamline port access and cross‑border permissions for cable repair vessels to reduce non‑technical delays.

Industry and policy implications

The September 2025 Red Sea incident, even in its initial, not‑fully‑attributed form, has renewed calls for policy and infrastructure changes.

- Governments in affected regions will likely accelerate efforts to diversify landing sites and to negotiate mutual‑aid agreements for rapid repairs.

- International bodies and consortiums that own cable systems may re‑examine route diversity in contract negotiations and investor planning.

- Greater investment in coastal and port security could be justified on grounds of protecting critical communications infrastructure from both accidental and deliberate threats.

- Financial regulators and critical infrastructure authorities may tighten SLAs, require contingency testing for low‑latency markets, and demand disclosure of routing dependencies for critical services.

Technical deep dive: how rerouting actually works and why latency rose

When a subsea system becomes unavailable, the BGP (Border Gateway Protocol) routes that depended on it are withdrawn and alternative paths are advertised. Traffic then follows the best remaining path according to the criteria routers apply (AS‑path length, local policy, commercial relationships), not according to measured latency. Two main effects cause user‑visible latency increases:

- Longer physical paths: Rerouting around Africa or through different subsea systems increases distance and therefore RTT.

- Capacity concentration on alternatives: Traffic suddenly shifts onto links designed for lower utilization, causing congestion, queuing, retransmissions and packet loss that further degrade throughput and application performance.

Operators can soften these effects by:

- Pre‑establishing multiple peering and transit arrangements.

- Using traffic engineering to shift flows gradually rather than in one abrupt wave.

- Leveraging WAN optimizers, compression and application‑level retransmission controls.
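The rerouting mechanism and the distance effect described in this section can be illustrated together. The sketch below assumes a heavily simplified subset of BGP's decision process (highest local preference, then shortest AS path, then a name tie‑break; real routers apply more steps) and a fiber group index of 1.468. The route names, preferences and distances are hypothetical round numbers, not measurements of the affected systems. The point it makes is that route selection never consults latency, so when the preferred corridor is withdrawn the surviving path wins even if it is far longer.

```python
from __future__ import annotations

from dataclasses import dataclass

SPEED_OF_LIGHT_KM_PER_S = 299_792.458
GROUP_INDEX = 1.468  # assumed typical value for single-mode fiber


@dataclass(frozen=True)
class Route:
    name: str
    local_pref: int    # operator policy / commercial preference (higher wins)
    as_path_len: int   # number of ASes in the path (shorter wins)
    fiber_km: float    # physical length; the selection logic never looks at it


def best_route(routes: list[Route]) -> Route | None:
    """Pick a route with a simplified subset of BGP's decision process:
    highest local preference, then shortest AS path, then name as tie-break."""
    if not routes:
        return None
    return min(routes, key=lambda r: (-r.local_pref, r.as_path_len, r.name))


def propagation_rtt_ms(fiber_km: float) -> float:
    """Round-trip propagation delay only (no queuing or serialization delay)."""
    one_way_s = fiber_km / (SPEED_OF_LIGHT_KM_PER_S / GROUP_INDEX)
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    # Hypothetical routing table; distances are illustrative round numbers.
    table = [
        Route("red-sea-system-a", local_pref=200, as_path_len=3, fiber_km=8_000),
        Route("red-sea-system-b", local_pref=200, as_path_len=4, fiber_km=8_500),
        Route("cape-of-good-hope", local_pref=100, as_path_len=4, fiber_km=20_000),
    ]
    before = best_route(table)
    # Both Red Sea systems fail, so their routes are withdrawn.
    after = best_route([r for r in table if not r.name.startswith("red-sea")])
    for label, route in (("before cut", before), ("after cut", after)):
        if route is not None:
            print(f"{label}: {route.name}, ~{propagation_rtt_ms(route.fiber_km):.0f} ms RTT")
```

With these illustrative figures, withdrawing both Red Sea routes moves traffic onto the Cape route and raises propagation delay alone from roughly 78 ms to roughly 196 ms round trip, before any congestion on the alternative links is counted.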

Assessing risk: should businesses be worried?

Short answer: it depends on exposure.

- Businesses with localized operations and limited cross‑border traffic will likely see modest effects, especially where local ISPs provide resilient domestic routes.

- Enterprises that rely on low‑latency cross‑region replication (financial trading, real‑time bidding, multiplayer games) face significant operational risk and should treat such incidents as plausible, not exceptional.

- Large cloud customers should validate that their failover arrangements include geographic and provider diversity, and test them under realistic network stress conditions.
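One way to gauge exposure for cross‑region replication is the well‑known Mathis approximation for a single TCP flow, in which throughput falls in proportion to RTT and to the square root of packet loss. This sketch assumes Reno‑style congestion control, a 1460‑byte MSS and the constant sqrt(3/2) from the model's basic derivation; modern algorithms such as CUBIC or BBR behave differently, so treat the output as an order‑of‑magnitude check rather than a prediction. The function name and the RTT and loss values are hypothetical.

```python
import math


def mathis_throughput_mbps(mss_bytes: int, rtt_ms: float, loss_rate: float) -> float:
    """Approximate per-flow TCP throughput ceiling (Mathis et al. model).

    throughput ~= (MSS / RTT) * sqrt(3/2) / sqrt(p), returned in Mbit/s.
    Assumes Reno-style congestion control.
    """
    if rtt_ms <= 0 or not 0 < loss_rate < 1:
        raise ValueError("need rtt_ms > 0 and 0 < loss_rate < 1")
    bits_per_segment = mss_bytes * 8
    rtt_s = rtt_ms / 1000
    bits_per_second = (bits_per_segment / rtt_s) * math.sqrt(1.5) / math.sqrt(loss_rate)
    return bits_per_second / 1e6


if __name__ == "__main__":
    # Hypothetical before/after conditions on one replication path.
    for label, rtt_ms, loss in (("normal", 80, 1e-4), ("rerouted", 200, 1e-3)):
        print(f"{label}: ~{mathis_throughput_mbps(1460, rtt_ms, loss):.1f} Mbit/s per flow")
```

Under these assumptions, moving from 80 ms RTT with 0.01% loss to 200 ms with 0.1% loss drops the per‑flow ceiling from about 17.9 Mbit/s to about 2.3 Mbit/s, which is why replication jobs that fit comfortably in normal conditions can fall behind during a corridor cut.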

Strengths and weaknesses in the response to the incident

Notable strengths

- Rapid operational communication by cloud and monitoring firms: Microsoft’s service health advisory and public telemetry from NetBlocks provided early situational awareness. This allowed enterprises to trigger contingency plans promptly.

- Immediate traffic engineering: Carriers and cloud backbone teams quickly rerouted traffic and rebalanced capacity, preventing many services from becoming unavailable.

Potential weaknesses and risks

- Overreliance on nominal diversity: The incident underscored how multiple cable systems that share the same geographic corridor can suffer related damage, producing correlated failure modes.

- Attribution ambiguity: Without clear, transparent forensic reports, public discourse can drift to politicized attributions, which complicates cooperative repair and regional diplomacy.

- Repair capacity shortfalls: A finite global fleet of cable ships and qualified crews means multiple simultaneous incidents or politically constrained ports could materially extend outage windows.

What to watch next

- Official forensic reports: Cable owners and national authorities are expected to publish OTDR analyses and repair logs that should help determine the cause. Those reports are the key to separating accident from malice.

- Repair ship movements and estimated time to repair (ETR): Watch for ship dispatch and port reports; these set the likely recovery timeline once permissions are granted.

- Policy moves: Expect announcements around coastal protection, additional landings, or fast‑track repair agreements between affected nations and cable consortiums.

- Cloud provider follow‑ups: Microsoft and other cloud providers will likely publish post‑incident summaries outlining mitigation steps and any changes to routing or peering arrangements they will implement.

Final analysis: resilience is technical, logistical and political

The Red Sea cable cuts were a blunt reminder that the Internet’s resilience is not only a matter of routing tables and redundant datacenters. It is also a function of undersea engineering, maritime policy, repair logistics and geopolitical stability. The good news is that cloud operators and carriers have tools and playbooks to limit the immediate damage: traffic engineering, peering, leased capacity and edge caching all cushion the blow.

The uncomfortable truth is that these operational band‑aids do not remove the fundamental geographic clustering of critical infrastructure. Reducing that risk will require coordinated investment: more diverse cable routes, stronger coastal protections, faster cross‑border repair procedures and realistic planning by critical enterprises for degraded network states. Until those systemic changes are made, similar incidents will continue to pose meaningful operational risk to latency‑sensitive services across the globe.

Practical checklist for IT leaders (ready actions)

- Verify your application SLAs versus realistic RTT and packet loss thresholds.

- Confirm multi‑region and multi‑cloud replication actually provides path diversity (not just logical copies within the same corridor).

- Ensure CDNs and edge caches are used for user‑facing static content.

- Run a network disaster tabletop that simulates an undersea corridor cut and validate failover procedures.

- Monitor BGP and RTT baselines; configure alerts for sustained deviations indicative of regional cable faults.
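The last checklist item can be sketched as a small detector fed by whatever probe data a team already collects. The class name `SustainedRttAlert` and its thresholds (a rolling median baseline, a 1.5× multiplier, several consecutive breaches) are hypothetical choices for illustration; real deployments would tune them per path and correlate them with BGP change feeds.

```python
from __future__ import annotations

from collections import deque
from statistics import median


class SustainedRttAlert:
    """Flag sustained RTT increases against a rolling median baseline.

    Hypothetical policy: alert once `consecutive` samples in a row exceed
    `factor` times the baseline median. Breaching samples are kept out of the
    baseline so a long outage does not become the new normal.
    """

    def __init__(self, baseline_size: int = 60, factor: float = 1.5, consecutive: int = 5):
        self.baseline: deque[float] = deque(maxlen=baseline_size)
        self.factor = factor
        self.consecutive = consecutive
        self.streak = 0

    def observe(self, rtt_ms: float) -> bool:
        """Record one probe result; return True while the alert condition holds."""
        if len(self.baseline) < self.baseline.maxlen:
            # Still learning what "normal" looks like, so never alert yet.
            self.baseline.append(rtt_ms)
            return False
        threshold = self.factor * median(self.baseline)
        if rtt_ms > threshold:
            self.streak += 1
        else:
            self.streak = 0
            self.baseline.append(rtt_ms)  # oldest sample drops out automatically
        return self.streak >= self.consecutive


if __name__ == "__main__":
    detector = SustainedRttAlert(baseline_size=30, factor=1.5, consecutive=5)
    # Hypothetical probe series: a steady path, then a long detour after a cut.
    samples = [80.0 + (i % 5) for i in range(40)] + [190.0] * 10
    for i, rtt in enumerate(samples):
        if detector.observe(rtt):
            print(f"sample {i}: sustained RTT increase to {rtt:.0f} ms")
            break
```

Requiring several consecutive breaches filters out one‑off spikes, and keeping breaching samples out of the baseline stops a multi‑day repair window from quietly becoming the new normal.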

The September Red Sea incident should be treated as an urgent, actionable case study: the physical skeleton of the Internet matters, and resilience planning that ignores maritime cables is incomplete.
