A deceptively small UX convenience — letting Copilot accept a prefilled prompt from a URL — was chained into a practical, one‑click data‑exfiltration technique that security researchers named Reprompt, and the discovery forced a rapid hardening of Microsoft’s consumer Copilot surface during mid‑January 2026. The episode is a textbook case of how agentic AI features expand productivity but also create fresh, subtle attack surfaces that traditional endpoint controls struggle to observe.

Background / overview

Microsoft has folded Copilot into Windows and Edge as a conversational assistant that can access local context, recent files, profile attributes and conversational memory to deliver real productivity gains. That level of privilege — the assistant runs with the calling user’s context — is what makes Copilot powerful and, in turn, an attractive target for abuse. Researchers at Varonis Threat Labs showed how a chain of three otherwise‑benign behaviors could be composed into a stealthy exfiltration pipeline that required only a single click on a legitimate Copilot URL. The same week Alibaba announced a major upgrade to its consumer Qwen AI app — adding in‑chat shopping, payments and travel booking — the juxtaposition of these two stories underlines the central trade‑off in today’s AI rollout: the deeper assistants reach into our workflows, the more value they deliver — and the larger the attack surface they expose. Alibaba’s Qwen update is part of a broader push toward so‑called “agentic AI” that can act on users’ behalf — and agentic systems that can place orders or make bookings raise a similar set of security and privacy governance questions.What Reprompt is — the single‑click chain explained

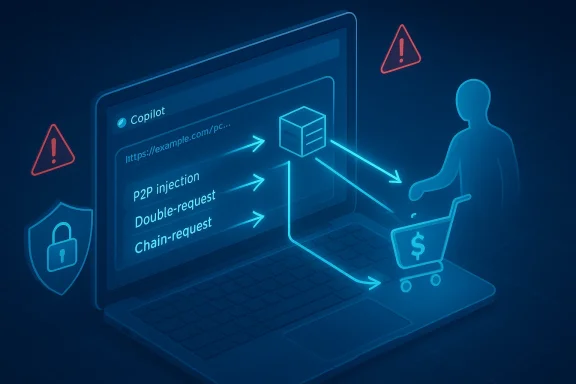

Varonis labeled the technique Reprompt and published technical notes and demonstration videos showing a three‑stage attack flow. The high‑level components are simple; their composition is what makes them dangerous:- Parameter‑to‑Prompt (P2P) injection. Many assistant UIs accept a query parameter (commonly named q) that pre‑fills the assistant’s input box when you open a deep link. That convenience lets people share prompts, but it also lets an attacker embed instructions inside a Copilot URL that execute in the context of the victim’s authenticated session.

- Double‑request (repetition) bypass. Varonis found that some of Copilot’s client‑side guardrails were applied mainly to the initial request. By instructing the assistant to “do it twice” or otherwise repeat an action, an attacker could turn a blocked first attempt into a successful second attempt. This simple repetition subverts naive single‑shot redaction or fetch blocking.

- Chain‑request orchestration. After the initial prompt loads, the attacker’s server can feed successive follow‑up instructions to the live session. That allows an adversary to probe incrementally — asking for username, inferred location, file summaries, or conversational memory — and exfiltrate the results in tiny chunks to avoid detection. In Varonis’ PoC, that pipeline continued even after the user closed the chat window in some product variants.

Evidence, verification and vendor response

Varonis published the initial technical write‑up and PoC demonstrations on January 14, 2026, and researchers say they disclosed the issue earlier to Microsoft; public reporting aligns on a mid‑January remediation timeline. Multiple independent outlets reproduced the core technical claims and reported Microsoft deployed mitigations as part of its January Patch Tuesday updates. Those independent verifications include Ars Technica, Malwarebytes and Windows Central, and Microsoft’s public updates (release notes, security channels) reflected product hardenings to Copilot Personal around the same time. Two practical caveats in the public record are important to note:- The PoC and reporting demonstrate the attack’s feasibility in controlled environments; no public evidence of large‑scale in‑the‑wild abuse has been reported as of the public disclosures. However, a lack of observed mass exploitation is not proof that the technique was never used. Varonis’ materials and subsequent news coverage emphasize responsible disclosure and rapid patching.

- Product variants differ: the exposure applied to Copilot Personal (consumer) flows rather than Microsoft 365 Copilot (enterprise), which benefits from tenant‑level Purview auditing, DLP and admin controls. Organizations that allow ungoverned consumer Copilot usage on corporate devices remain the highest‑risk cases.

Why Reprompt matters — practical and architectural implications

Reprompt matters for three converging reasons:- Extremely low attacker cost. A single legitimate‑looking URL in email or chat can trigger the chain. Attackers already exploit trusted domains to increase click rates; a Microsoft‑hosted link carries psychological trust that helps social engineering succeed.

- Evasion of traditional telemetry. Because much of the follow‑on activity occurs on vendor‑hosted infrastructure or is driven by the assistant itself, local egress logs and endpoint agents may see only routine vendor traffic — not the encoded, staged exfiltration the attacker orchestrates. That creates a visibility gap for defenders.

- Privilege inheritance amplifies risk. Copilot operates with the caller’s identity and privileges; anything a user can read (files, calendar entries, profile data) can be summarized by the assistant unless governance controls explicitly prevent it. The assistant’s access model is the necessary primitive that makes exfiltration high‑value.

Immediate defensive steps for administrators and power users

The disclosure produced a clear short checklist that organizations and users should treat as urgent:- Apply vendor updates immediately. Verify Patch Tuesday rollups and Copilot client versions across managed fleets and home devices used for work. Microsoft’s mid‑January mitigations closed the public Reprompt vector, but only an inventory‑driven rollout will ensure coverage.

- Restrict Copilot Personal on corporate devices. Where governance is required, prefer managed Microsoft 365 Copilot or disable consumer Copilot flows via Intune, Group Policy, or other endpoint management tools.

- Treat Copilot deep links as suspicious. Phishing awareness campaigns should explicitly call out Copilot/Microsoft deep links and train users to verify links and contexts before clicking.

- Enhance telemetry and detections. Monitor for:

- Repeated identical or near‑identical fetch attempts from Copilot processes (the double‑request pattern).

- Outbound requests to unusual endpoints from Copilot‑associated processes.

- Sequences of short, encoded responses that reassemble into PII.

Correlate local telemetry with vendor logs when possible.

- Harden session lifecycle and isolation. Where possible, shorten persistent consumer session lifetimes on corporate endpoints and require reauthentication for actions that access sensitive data or make external requests.

Longer‑term fixes product and platform teams should prioritize

Reprompt is a design‑level stress test for assistant architecture. These are the product investments that materially reduce recurrence risk:- Treat every external input as untrusted. URL parameters, document content, and external web responses must be sanitized and explicitly labeled as untrusted before being incorporated into any agent prompt pipeline. Guardrails must be enforced consistently across the entire execution graph, not only on the initial invocation.

- Persist enforcement across chains. Safety decisions and redactions applied to the first request must remain effective across repeated invocations and for any server‑driven follow‑ups. Model‑level policy enforcement should be stateful, not one‑shot.

- Semantic DLP and tenant controls for consumer surfaces. Enterprises require the ability to apply Purview‑style controls or equivalent semantic DLP to any assistant running on devices that access corporate resources, even when the assistant is a consumer product.

- Signed, auditable agent actions. For agentic features (see Alibaba’s Qwen below), every action that executes a payment, places an order, or makes phone calls should be auditable and require user re‑consent for high‑risk operations. This is the same principle that secures financial APIs and should apply to agentic AI.

Alibaba’s Qwen update — the consumer AI arms race goes transactional

On a related front, Alibaba announced a major upgrade to its Qwen consumer app that deepens integrations with the company’s e‑commerce and travel platforms. The update lets users order groceries, book flights, and pay via Alipay inside the Qwen chat interface — a deliberate shift from “models that understand” to “systems that act.” Reuters and other outlets reported the change and noted Qwen’s rapid MAU growth since its public beta in November. Barron’s framed Alibaba’s move as mirroring similar agentic pushes from Microsoft, Google and OpenAI — a coordinated industry trend to turn chat assistants into real‑world operators that can complete transactions on behalf of users. Alibaba’s upgrade included a “Task Assistant” beta that can make phone calls, process large batches of documents, and orchestrate multi‑stop travel planning inside a single conversational flow. The company also highlighted Qwen’s connection to Taobao, Taobao Instant Commerce, Fliggy and Amap for payments and fulfilment.Why Qwen’s agentic capabilities intersect with the Reprompt lesson

Alibaba’s announcement is a notable example of how commercial pressure is pushing consumer assistants toward agentic capabilities — convenience that is compelling to consumers and commerce partners alike. But agentic AI compounds the same structural hazards Reprompt exposed:- When an assistant can initiate real transactions, the risk model must include payment authorization, fraud detection, and explicit action consent flows that are harder to retrofit than simple query controls.

- Integration with internal services (payments, maps, travel inventories) gives the assistant programmatic primitives to act; those same primitives can be abused if the assistant ingests untrusted inputs that aren’t strictly validated.

- The visibility gap is especially dangerous for agentic flows: an attacker who can covertly authorize small transactions or incrementally drain account balances via repeated, encoded actions poses a different economic threat than pure data leakage.

Strengths and limitations of the reporting and PoC

The public disclosures and follow‑on reporting have notable strengths:- Varonis provided reproducible, technical detail and videos that show the methods and what data could be accessed in lab conditions. That makes the problem concrete and actionable for vendors and security teams.

- Independent reporting from outlets such as Ars Technica, Malwarebytes and Windows Central corroborated the high‑level mechanics and confirmed Microsoft’s mitigation timeline, creating cross‑validation across vendors and media.

- The PoC demonstrates feasibility, not mass exploitation. Public coverage repeatedly notes no confirmed wide‑scale in‑the‑wild abuse at the time of disclosure; defenders should treat absence of evidence as a temporary observation, not proof of safety.

- Vendor advisories intentionally omit exploit‑level details to limit short‑term copycats. That responsible‑disclosure practice means security teams must verify fixes in their specific environments rather than rely solely on media summaries.

- Variants across client builds, platforms and regional deployments matter. A mitigation that shipped in a mid‑January update may reach different device populations at different times; naive assumptions about universal patching are risky.

Practical checklist for Windows administrators (prioritized)

- Confirm Copilot client versions and apply January 2026 updates where applicable. Validate patch installations via enterprise update tools.

- Apply Copilot‑related Group Policies or Intune configuration to block Copilot Personal on managed devices until you can validate mitigations.

- Update phishing awareness materials immediately to include AI deep links and Copilot‑branded lures. Encourage users to hover over links and verify the context before clicking.

- Correlate vendor cloud logs with endpoint telemetry for suspicious Copilot activity (repeated fetches, unusual outbound URLs).

- For organizations enabling agentic assistants (ordering, payments), require step‑up authentication for any action that moves money or changes account state.

Conclusion — what Reprompt teaches us about the future of assistant security

Reprompt is not merely another vulnerability to patch; it is a structural lesson in how assistant convenience, session context and untrusted inputs interact to create emergent risks. The fix Microsoft rolled out in mid‑January 2026 stops the specific PoC, but the underlying pattern — reliance on implicit trust for URL parameters and one‑shot enforcement models — will reappear unless product teams adopt systemic controls: treat external inputs as untrusted, apply persistent enforcement across chains of interaction, and extend enterprise governance to any assistant that runs on devices which touch corporate data. Simultaneously, the industry’s race to agentic AI — exemplified by Alibaba’s Qwen moving into in‑chat commerce and travel bookings — makes these changes more urgent. When assistants can place orders and move money, security must be baked into authorization, audit and consent flows from day one. The productivity upside of assistants is real; realizing it safely requires hardening the plumbing beneath the convenience.For Windows administrators, the immediate job is clear: patch, inventory, restrict unmanaged Copilot usage on corporate endpoints, and harden detection for the novel behavioral patterns Reprompt exploited. For product teams, the heavier work is structural: evolve assistant architectures so they treat every external input as explicitly untrusted and make safety persistent, auditable and enforceable across repeated and chained operations. Only then will the promise of AI assistants be matched by the operational controls needed to keep them safe.

Source: MSN http://www.msn.com/en-us/news/techn...jS-SFy8B8MvvxidPZp799MAy0gWaeQ6lPzohmsGMLg==]