Samsung has quietly begun testing Perplexity’s answer engine inside Bixby as part of the One UI 8.5 beta, a development that turns Samsung’s long-struggling assistant from a device-control utility into a research-capable conversational agent — and signals a deliberate, multi‑vendor AI strategy across phones, TVs and appliances.

Background / Overview

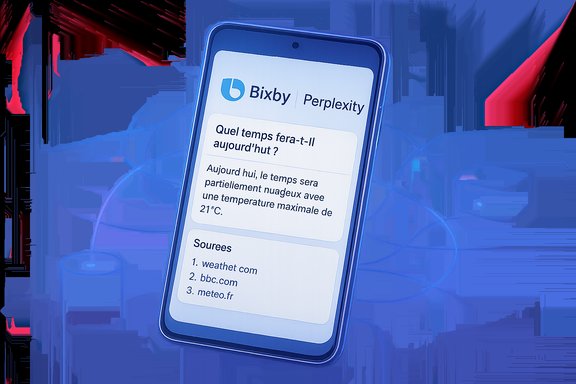

Samsung’s Vision AI strategy for 2025 and beyond centers on a multi‑agent approach: instead of depending on a single large language model, the company is building an orchestration layer that routes different queries to the partner best suited for the task (for example, Microsoft Copilot for conversational discovery and Perplexity for citation‑driven retrieval). This Vision AI Companion concept was first formalized for Samsung TVs and Smart Monitors and bundled multiple partners as selectable agents.

The recent beta sightings show that Samsung is extending this multi‑agent philosophy to mobile, adding Perplexity inside Bixby on One UI 8.5 beta builds running on Galaxy S25 hardware. Early screenshots from public beta testers show Perplexity’s icon within Bixby responses and web‑sourced answers with sourcing metadata, a major jump from Bixby’s previous focus on local actions and basic replies.
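The orchestration idea described in this section can be illustrated with a minimal sketch. Samsung has not published how its routing works, so everything below is an assumption for illustration: the agent labels (`on_device`, `perplexity`, `copilot`), the keyword heuristics and the `route` function are hypothetical, and a production orchestrator would more plausibly use a trained intent classifier than keyword matching.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical keyword lists; chosen only to make the example concrete.
DEVICE_PREFIXES = ("turn on", "turn off", "open", "set alarm", "volume")
RESEARCH_WORDS = {"why", "how", "compare", "who", "what", "weather"}


def is_device_command(query: str) -> bool:
    """True when the query starts like a local device action."""
    return query.lower().startswith(DEVICE_PREFIXES)


def is_research(query: str) -> bool:
    """True when any whole word in the query is a research cue."""
    words = set(re.findall(r"[a-z']+", query.lower()))
    return bool(words & RESEARCH_WORDS)


@dataclass(frozen=True)
class Agent:
    name: str
    handles: Callable[[str], bool]  # predicate: does this agent suit the query?


# Order matters: local commands are checked before web-backed research.
AGENTS = [
    Agent("on_device", is_device_command),
    Agent("perplexity", is_research),
]
FALLBACK = Agent("copilot", lambda _query: True)  # illustrative default only


def route(query: str) -> str:
    """Return the name of the first agent whose predicate accepts the query."""
    for agent in AGENTS:
        if agent.handles(query):
            return agent.name
    return FALLBACK.name
```

In this sketch, a request such as "Turn on the living room lights" stays on the device, while a question containing a research cue is handed to the retrieval agent. The ordering of `AGENTS` encodes the design choice that latency-sensitive local actions take priority.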

What the beta screenshots reveal

Key UI and behaviour changes

- A Perplexity icon appears at the bottom of Bixby answer cards, indicating that Bixby has routed the query to Perplexity’s answer engine and is surfacing the partner as the source.

- The examples shown include nontrivial, language‑specific queries (a weather question asked in French) that return multi‑sentence, contextualized answers — including practical recommendations (e.g., clothing choices) — plus a list of sources. This is unlike the short, transactional replies Bixby typically produced.

- The integration appears in Bixby app version 4.0.50.4 on devices running the One UI 8.5 beta, according to beta reports. Availability in the wild looks limited to some S25 testers so far.

Why this matters for users

Bixby has historically excelled at phone-level automation (launching apps, toggling settings, SmartThings control) but performed poorly on open‑ended research queries. Routing complex questions to Perplexity gives Samsung a way to combine local control with web‑backed knowledge and citations, raising Bixby from utility to conversational research assistant. Early screenshots show answers that are clearly web‑sourced and phrased for conversational follow‑up, closing an important capability gap versus competitors.

Technical context: One UI 8.5, Bixby 4.x and ecosystem continuity

Samsung’s One UI 8.5 beta rollout is already in motion for Galaxy S25 owners; the OS update was presented as a key staging ground for next‑generation AI features on mobile. One UI 8.5 also powers the Vision AI Companion features on Samsung displays, which clarifies why the company is piloting similar agent routing across devices.

From a technical standpoint, Samsung’s architecture is consistent: keep latency‑sensitive perception on‑device (vision, wake‑word detection, basic on‑phone commands) and route long‑context web retrieval and multi‑turn reasoning to cloud agents. That hybrid model is already implemented for TVs (Copilot + Perplexity) and now appears to be used on phones via Bixby’s updated app shell.

Perplexity’s role and capabilities

Perplexity differs from many LLMs in emphasis: it is an “answer engine” that performs web retrieval, synthesizes results and links to sources. Its strengths include:

- Web retrieval with visible sourcing and traceable citations.

- Multi‑modal and multi‑model responses that can blend different backend tools.

- A retrieval‑first posture aimed at research‑style queries rather than purely generative chatter.
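To make the "retrieve, synthesize, cite" pattern concrete, here is a minimal sketch of an answer object that carries its sources. It does not reflect Perplexity's actual API or data model; the `Source`, `CitedAnswer` and `synthesize` names are hypothetical, and the synthesis step is reduced to joining already-retrieved sentences with numbered markers.

```python
from dataclasses import dataclass, field


@dataclass
class Source:
    title: str
    url: str


@dataclass
class CitedAnswer:
    text: str
    sources: list[Source] = field(default_factory=list)

    def render(self) -> str:
        """Return the answer followed by a numbered source list."""
        lines = [self.text]
        for number, source in enumerate(self.sources, start=1):
            lines.append(f"[{number}] {source.title} - {source.url}")
        return "\n".join(lines)


def synthesize(snippets: list[tuple[Source, str]]) -> CitedAnswer:
    """Join retrieved sentences, tagging each with a citation marker.

    A real answer engine would summarize with a language model; this
    stand-in only shows how text and citations stay linked.
    """
    parts = []
    sources = []
    for number, (source, sentence) in enumerate(snippets, start=1):
        parts.append(f"{sentence} [{number}]")
        sources.append(source)
    return CitedAnswer(" ".join(parts), sources)
```

Keeping the source list on the answer object, rather than embedding URLs in the text, is what lets a UI such as an answer card show a separate, tappable list of sources.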

Timeline and rollout expectations

Public reporting and beta sightings indicate:

- One UI 8.5 beta builds with Perplexity routing are appearing on Galaxy S25 devices in select markets today.

- Samsung is widely expected to deliver a stable One UI 8.5 release — and broader Perplexity support — in conjunction with the Galaxy S26 launch window (industry reporting points to an Unpacked event in late January with retail timing around February 2026). That gives Samsung roughly six months from these beta sightings to refine the integration.

How this mirrors and diverges from Apple and Google strategies

Apple’s recent strategy to let Siri call external AI providers (notably ChatGPT) demonstrates a similar pattern: keep the platform assistant but offer optional integrations with specialized external models for improved world knowledge and reasoning. Apple’s Siri‑ChatGPT integration is set up as an extension of Apple Intelligence, accessible via user preferences and tightly gated with privacy controls.

Samsung is following a comparable diversification path but with a key difference: Samsung is explicitly packaging a multi‑agent ecosystem. Instead of choosing a single external partner, Samsung’s Vision AI Companion (and now Bixby experiments) orchestrates between Copilot, Perplexity and its own on‑device systems. That gives Samsung flexibility and redundancy, an advantage when partners’ availability, pricing or capabilities change, but introduces UX complexity around answer consistency and account linking.

Google remains a central partner for Samsung (Gemini and the broader Google ecosystem remain integrated across Galaxy devices), but Samsung’s Perplexity test shows the company is hedging: offering consumers choice between multiple AI engines and keeping control of the assistant activation points (for example, the AI button and power‑button quick access). Early speculation even suggests Samsung could let users choose Bixby (with Perplexity) or Google Gemini for quick‑access assistance, though exact product choices have not been confirmed.

Business and product strategy: why Samsung is doing this

- Competitive differentiation: Bixby has lagged behind Google Assistant and Apple’s assistant; adding Perplexity brings a factual, web‑aware backbone that could help Bixby reclaim relevance.

- Ecosystem cohesion: Samsung already offers Perplexity on TVs and select appliances; integrating Perplexity into phones creates a unified, cross‑device AI experience. That consistency reduces friction for users who move between TV and phone contexts.

- Vendor risk management: A pluralistic agent strategy reduces single‑vendor lock‑in and lets Samsung switch or add partners as technologies evolve or commercial terms change.

UX, privacy and trust considerations

UX advantages

- Cited answers: Showing sources strengthens trust for factual queries and gives users ways to dig deeper.

- Contextual, multi‑turn sessions: Perplexity’s retrieval approach supports follow‑ups that remain grounded in web evidence — useful for research tasks, travel planning, or language‑specific queries.

- Unified visuals across devices: Samsung’s card‑based, large‑screen design philosophy for TVs is now influencing mobile Bixby UI — clearer cards, actionable suggestions and visible sourcing.

Privacy and data‑flow questions (flagged for scrutiny)

The hybrid architecture necessarily routes certain user prompts and content to partner backends. While Samsung’s TV materials describe privacy choices and QR sign‑in flows for personalization, the precise telemetry, retention and partner‑access boundaries for phone queries are not fully public. Users should expect:

- Some queries will be routed off‑device to Perplexity’s servers and may be subject to Perplexity’s data practices.

- Account linking (to access Pro features or personalization) will change data governance; signed‑in modes often subject data to partner terms.

- Different partners may retain different logs or may use data for service improvement unless explicitly restricted.

Potential pitfalls and operational risks

- Answer consistency: Multi‑agent routing can produce conflicting answers for the same query, depending on which agent is used. Users will need clear cues and fallback rules to avoid confusion.

- Reliability on mobile networks: Phone users expect instantaneous answers; calls to cloud agents can suffer latency or fail offline. On‑device fallbacks will be essential for robust UX.

- Privacy complexity: Multiple parties (Samsung, Perplexity, Microsoft, Google) may be involved in a single interaction; transparency and granular controls will determine user acceptance.

- Commercial terms and feature fragmentation: Bundled offers (e.g., Perplexity Pro promotions on TVs or Galaxy devices) may lead to regional differences in capability and experience, complicating support and expectations.
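The on-device fallback mentioned under "Reliability on mobile networks" can be sketched as a cloud call guarded by a timeout. This is an illustration under stated assumptions, not Samsung's implementation: `ask_cloud_agent` and `answer_on_device` are hypothetical stand-ins, and network latency is simulated with `time.sleep`.

```python
import concurrent.futures
import time


def ask_cloud_agent(query: str, delay_s: float) -> str:
    """Hypothetical stand-in for a network call to a partner backend."""
    time.sleep(delay_s)  # simulated round-trip latency
    return f"cloud answer for: {query}"


def answer_on_device(query: str) -> str:
    """Hypothetical stand-in for a fast local reply."""
    return f"on-device answer for: {query}"


def answer_with_fallback(query: str, timeout_s: float, simulated_delay_s: float) -> str:
    """Prefer the cloud answer, but fall back locally if it is too slow."""
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(ask_cloud_agent, query, simulated_delay_s)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        return answer_on_device(query)
    finally:
        # Do not block the caller on a slow request that is still running.
        pool.shutdown(wait=False)
```

The timeout value is the key design parameter here: set too low, web-backed answers are rarely shown; set too high, the assistant feels unresponsive on poor connections.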

Industry significance: what this means for assistant competition

- For Samsung: A successful Perplexity‑powered Bixby could restore Bixby as a meaningful, differentiating on‑device assistant rather than a phone‑only utility, while preserving partnerships with Google and Microsoft.

- For users: More choice — the ability to pick which AI engine answers different classes of questions — can be empowering provided Samsung makes agent routing transparent and easy to control.

- For competitors: Samsung’s multi‑agent orchestration is a potential roadmap for other device makers who want to avoid being dependent on a single LLM provider.

Short‑term recommendations for power users and IT pros

- If enrolled in One UI 8.5 beta: test Perplexity answers in controlled scenarios and note which queries are routed off‑device. Evaluate latency and whether answers include clear sources.

- If privacy is a concern: delay linking any Perplexity account or signing into Pro tiers until Samsung publishes explicit privacy and telemetry documentation.

- If you manage a fleet of Galaxy devices: prepare user guidance about multi‑agent AI behavior and potential differences across regions or builds.

What remains unverified and needs clearer confirmation

- Exact rollout schedule for Perplexity inside Bixby across regions and device models. Beta sightings point to S25 testers and a broader release likely timed with Galaxy S26, but official Samsung confirmation on phone timing is absent. Treat specific dates as provisional.

- Full privacy, telemetry and retention policies for phone‑side Perplexity queries — corporate materials for TV deployments are more explicit than what’s public for mobile. Samsung must publish phone‑specific technical privacy documentation before a stable release.

- Precise UX choices for default assistant selection (whether the quick‑access assistant will be Bixby+Perplexity or allow a user to pick Gemini or another engine) are not confirmed and should be considered a potential feature rather than an announced one.

Final analysis: strengths, strategic risks, and likely outcomes

Samsung’s Perplexity experiments inside Bixby are strategically smart: they leverage an existing multi‑agent approach, reuse investments from the TV Vision AI effort, and give the company both competitive parity and optionality against Google and Apple. If executed well, this could make Bixby into a uniquely flexible assistant that blends local device control with cited, web‑backed reasoning.

Strengths:

- Rapid capability lift for Bixby on research and web‑facing queries.

- Ecosystem consistency across TVs, phones and appliances, improving cross‑device continuity.

- Business optionality: multi‑agent routing reduces dependence on any single AI provider.

Risks:

- Privacy and data governance complexity must be resolved and clearly communicated before mass rollout.

- UX fragmentation and inconsistent answers across agents can harm user trust unless Samsung provides clear routing logic and fallback behavior.

- Regional fragmentation due to promotional Perplexity Pro offers and partner account linking may leave users in different markets with materially different experiences.

Likely outcome:

- A staged beta → stable release flow aligned with One UI 8.5 and Galaxy S26 debut is the most probable path: Perplexity shows up in the S25 beta now, and a polished, broadly distributed integration will appear when Samsung ships S26 and One UI 8.5 to a wider audience. This gives Samsung time to firm up privacy controls, latency fallbacks and UX polish.

Samsung’s Bixby + Perplexity experiment is more than a headline: it’s an operational test of Samsung’s multi‑agent thesis and a concrete signal that device makers will increasingly treat assistants as customizable orchestration layers rather than single‑vendor monopolies. The success of the approach will depend less on raw model ability and more on clarity: transparent routing, robust privacy controls, consistent UX fallbacks, and a clear explanation for enterprise and consumer users about when queries are handled on‑device and when they cross external partners’ servers.

If Samsung gets those elements right, Bixby could go from a neglected in‑phone assistant to a credible, cross‑device AI companion that competes on the same ground as Apple’s Siri‑ChatGPT and Google’s Gemini integrations — but with a uniquely Samsung approach: one that embraces multiple specialist partners rather than betting everything on a single LLM.

Source: Technobezz, “Samsung Tests Perplexity AI Integration in Bixby Assistant Beta”