The era of passive applications is ending: AI agents are already reasoning, deciding, invoking tools, and acting across cloud and endpoint environments, and that shift demands a fundamentally different security posture than anything most organizations have prepared for.

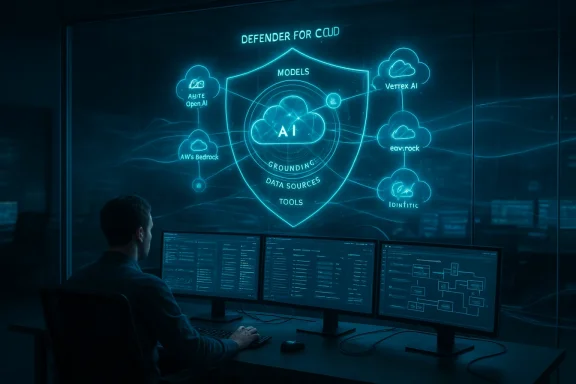

Background: why agents change the security equation

AI agents are not merely “smarter scripts.” They are composite runtimes that bind models, toolkits, data grounding, identities, and orchestration logic into autonomous actors that can execute multi-step workflows with minimal human intervention. This makes agent security a cross-cutting problem: an agent’s risk comes not just from the model it uses, but from the tools it can call, the data it can access, and the people and other agents it interacts with.

Microsoft’s recent security messaging positions the problem as one of posture management: discover every AI asset (the “AI Bill of Materials”), map how agents are grounded in data and tools, and apply focused mitigations before misuse or compromise becomes an incident. Defender for Cloud’s AI security posture features, currently previewed under the Defender CSPM plan, are explicitly designed to find agents and AI workloads across Azure AI Foundry, Azure OpenAI, AWS Bedrock, and GCP Vertex AI, and to produce contextual recommendations and attack-path analysis.

What’s new, at a glance

- Microsoft has extended Defender for Cloud with AI Security Posture Management (AI-SPM) that inventories generative AI applications and agents, provides recommendations, and identifies attack paths across multi-cloud environments.

- Microsoft has also introduced administrative tooling and a control plane for agents — widely described as Agent 365 — intended to register, monitor, and govern agents using identity, telemetry, and policy controls. Independent coverage and Microsoft messaging both describe Agent 365 as part of a larger “agent factory” strategy.

- Defender and Security Copilot are adding specialized security agents and detection logic to triage threats, find risky configurations, and reduce mean time to remediate for AI workloads. These additions are already rolling through preview programs and early-access channels.

The multi-layer attack surface: how agents expand risk

AI agents expose a broad, layered attack surface. To rationalize defenses, it helps to think through the layers:

- Models and inference environments: potential for model-specific attacks (jailbreaks) and misuse of privileged model capabilities.

- Grounding and knowledge sources: retrieval or fine-tuning data that can be poisoned or used to inject malicious instructions.

- Tooling and connectors: browser tools, file-system actions, database connectors, and third-party APIs agents can call. These are the most consequential escalation paths.

- Identity and lifecycle: agent identities, credential management, and revocation mechanics determine whether a compromised agent becomes a persistent foothold.

- Orchestration and coordination: “coordinator” agents that delegate to sub-agents create high-value control nodes whose compromise cascades across workflows.
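One way to make these layers concrete is to record them per agent in an inventory entry. The sketch below is illustrative only: `AgentRecord`, its field names, and `exposed_layers` are hypothetical and do not correspond to any Defender for Cloud schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Hypothetical inventory entry with one field per attack-surface layer."""
    name: str
    model: str                                                   # models and inference
    grounding_sources: list[str] = field(default_factory=list)   # grounding and knowledge
    tools: list[str] = field(default_factory=list)               # tooling and connectors
    identity: str = ""                                           # identity and lifecycle
    sub_agents: list[str] = field(default_factory=list)          # orchestration


def exposed_layers(agent: AgentRecord) -> list[str]:
    """Return the layers on which this agent has any recorded surface."""
    layers = ["model"]  # every agent runs on some model
    if agent.grounding_sources:
        layers.append("grounding")
    if agent.tools:
        layers.append("tooling")
    if agent.identity:
        layers.append("identity")
    if agent.sub_agents:
        layers.append("orchestration")
    return layers
```

An agent with tools and sub-agents but no grounding would report `model`, `tooling`, and `orchestration`, which gives reviewers a quick signal of where to look first.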

Case study: agents connected to sensitive data

Agents often need context to be useful, and that context frequently comes from sensitive sources: customer PII, financial ledgers, internal documents. When an agent is grounded to those sources, a compromise of the agent can functionally equal a data breach producing the same telemetry signals as a direct database extraction.

Microsoft Defender’s attack-path analysis is designed to show precisely how an Internet-exposed API or misconfigured storage account can become an exploit path into an agent that is grounded with sensitive data. That visualization, which ties the agent to the source of its grounding and displays remediation steps, is a practical example of the “contextualized visibility” defenders need to prioritize response.

Key controls defenders should prioritize here:

- Inventory every data grounding used by agents and apply least-privilege access.

- Treat agent data accesses like service accounts: require managed identities, short-lived tokens, and auditable request flows.

- Force human-in-the-loop approval for any agent action that could export or disseminate sensitive data.
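The three controls above can be combined into a single authorization check. This is a minimal sketch under stated assumptions: the `DataAccessRequest` shape, the `authorize` function, the per-agent grant map, and the one-hour lifetime limit are all hypothetical and chosen for illustration, not taken from Microsoft guidance.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

MAX_TOKEN_LIFETIME = timedelta(hours=1)  # assumed policy value


@dataclass
class DataAccessRequest:
    agent_id: str
    source: str                  # the data grounding being accessed
    action: str                  # e.g. "read" or "export"
    token_issued_at: datetime
    token_expires_at: datetime
    human_approved: bool = False


def authorize(request, allowed_sources, now=None):
    """Return (allowed, reason) for one agent data access."""
    now = now or datetime.now(timezone.utc)
    # Least privilege: the agent may only touch sources explicitly granted to it.
    if request.source not in allowed_sources.get(request.agent_id, set()):
        return False, "source not granted to this agent"
    # Short-lived tokens: reject expired or overly long-lived credentials.
    if request.token_expires_at <= now:
        return False, "token expired"
    if request.token_expires_at - request.token_issued_at > MAX_TOKEN_LIFETIME:
        return False, "token lifetime exceeds policy"
    # Human-in-the-loop: exports never proceed on the agent's say-so alone.
    if request.action == "export" and not request.human_approved:
        return False, "export requires human approval"
    return True, "ok"
```

Returning a reason string alongside the decision keeps the request flow auditable: each denial can be logged with the rule that triggered it.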

Case study: Indirect Prompt Injection (XPIA) and tool abuse

A particularly stealthy class of attacks is indirect prompt injection (sometimes called XPIA or cross-prompt injection), where the malicious input flows through an otherwise trusted data source: a fetched web page, a PDF an agent summarizes, or a third-party API the agent calls. Because agents operate autonomously and may treat retrieved content as instructions, these inputs can silently change agent behavior and trigger dangerous operations.

Microsoft’s posture tooling analyzes an agent’s toolchain and configuration to determine indirect prompt-injection exposure and surfaces targeted recommendations (for example, adding guardrails or human approvals for high-impact operations) while assigning a Risk Factor to help prioritize remediation. This is a necessary evolution beyond simple input sanitization: you must evaluate the combination of sources, tools, and agent autonomy.

Practical defenses against XPIA:

- Deny-by-default external content execution; require explicit whitelisting.

- Add prompt shields and strict parsing/validation for retrieved documents.

- Use rate-limiting and stepwise approvals for actions that change state (database writes, exec calls).

- Ensure agents attach metadata for every retrieved artifact used in decisioning.
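Two of the defenses above, deny-by-default fetching and stepwise approval for state-changing actions, can be sketched as small policy functions. The host allowlist, the tool names, and the function names below are hypothetical placeholders; a real deployment would source them from its own policy store.

```python
from urllib.parse import urlparse

ALLOWED_HOSTS = {"docs.example.com"}        # assumed allowlist, deny by default
STATE_CHANGING_TOOLS = {"db_write", "exec"}  # assumed names for risky tools


def may_fetch(url: str) -> bool:
    """Allow retrieval only from hosts that are explicitly allowlisted."""
    host = urlparse(url).hostname
    return host is not None and host in ALLOWED_HOSTS


def gate_tool_call(tool: str, untrusted_context: bool, human_approved: bool) -> str:
    """Decide whether a tool call may run now.

    State-changing calls made while untrusted retrieved content is in the
    agent's context are held for approval; everything else is allowed.
    """
    if tool in STATE_CHANGING_TOOLS and untrusted_context and not human_approved:
        return "hold_for_approval"
    return "allow"
```

Matching on the exact parsed hostname, rather than a substring of the URL, avoids lookalike hosts such as `docs.example.com.evil.net` slipping through the allowlist.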

Case study: coordinator agents and blast radius

Not all agents are equal. In multi-agent systems, coordinator agents orchestrate sub-agents and issue high-level commands; their compromise produces a multiplicative effect. Defender’s posture logic explicitly models agent roles and flags coordinator agents as higher-risk entities, recommending extra controls: stronger identity templates, elevated telemetry retention, and mandatory review gates for destructive commands. Visual attack-path tools can show how a single coordinator exposed via a misrouted API could enable broad lateral actions.

Operational rules to limit blast radius:

- Apply stricter lifetime and revocation rules for coordinator agent credentials.

- Enforce separation-of-duty: coordinators cannot perform direct data exfiltration without separate approvals.

- Instrument coordinators with behavior-based anomaly detections tuned to agent-to-agent communications.
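The first two rules above lend themselves to simple, testable checks. The sketch below is an assumption-laden illustration: the role names, the lifetime limits, and both function names are hypothetical, and it models only the coordinator rule, leaving worker export policy out of scope.

```python
from datetime import timedelta
from typing import Optional

# Assumed policy: coordinators get much shorter-lived credentials than workers.
MAX_CREDENTIAL_LIFETIME = {
    "coordinator": timedelta(minutes=15),
    "worker": timedelta(hours=1),
}


def credential_lifetime_ok(role: str, lifetime: timedelta) -> bool:
    """Check a credential's lifetime against the limit for the agent's role."""
    return lifetime <= MAX_CREDENTIAL_LIFETIME[role]


def export_allowed(role: str, requester: str, approver: Optional[str]) -> bool:
    """Separation of duty: a coordinator's export needs a distinct approver."""
    if role != "coordinator":
        return True  # non-coordinator policy is not modeled in this sketch
    return approver is not None and approver != requester
```

Requiring the approver to differ from the requester means a compromised coordinator cannot approve its own export.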

Hardening agents: practical, prioritized steps

Microsoft’s Defender guidance aggregates a broad set of recommendations; below is a prioritized, practical hardening checklist aligned to that guidance and to standard enterprise controls.

- Inventory and classify (Discover): build a continuous inventory of models, agents, and tool configuration data across clouds. Use automated discovery where possible.

- Map attack paths: run attack-path visualizations that connect exposed internet endpoints, identity privileges, and data groundings to agents. Prioritize fixes with the greatest risk-reduction per unit effort.

- Apply least privilege (Identify & Restrict): use managed identities and minimal scopes, rotate credentials, and avoid embedding long-lived secrets in agent manifests.

- Guardrails & human controls: require human-in-the-loop for high-risk operations; implement stepwise approval templates for potentially destructive actions.

- Monitor agent behavior: forward agent telemetry to SIEM/XDR; add rules for anomalous tool usage, new external grounding sources, and unusual inter-agent messaging.

- Test and red-team: run prompt-injection exercises, data-poisoning drills, and full compromise simulations that attempt to abuse agent toolchains.
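The attack-path mapping step in the checklist above amounts to a reachability search over a graph of resources. The following is a rough sketch, not how Defender for Cloud computes attack paths: the graph format, the node names, and `find_attack_paths` are hypothetical, and breadth-first search is used simply because it returns a shortest path per entry point.

```python
from collections import deque


def find_attack_paths(edges, entry_points, targets):
    """Return one shortest path from each entry point to each reachable target.

    edges: dict mapping a node to the nodes it can reach (e.g. via network
    exposure or identity privileges). entry_points: internet-exposed nodes.
    targets: agents grounded in sensitive data.
    """
    paths = []
    for entry in entry_points:
        parent = {entry: None}          # also serves as the visited set
        queue = deque([entry])
        while queue:
            node = queue.popleft()
            for nxt in edges.get(node, []):
                if nxt not in parent:
                    parent[nxt] = node
                    queue.append(nxt)
        for target in targets:
            if target in parent and target != entry:
                # Walk parent links back to the entry point, then reverse.
                path, cur = [], target
                while cur is not None:
                    path.append(cur)
                    cur = parent[cur]
                paths.append(path[::-1])
    return paths
```

Shorter paths are usually the cheaper ones for an attacker, so sorting the result by length is one plausible way to prioritize fixes.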

Cross-checking the claims: what’s verifiable and what needs scrutiny

A responsible posture piece must separate documented capabilities from vendor narrative:

- Verifiable: Defender for Cloud’s AI-SPM discovery across Azure AI Foundry, Azure OpenAI, AWS Bedrock, and GCP Vertex AI is documented in Microsoft Learn and release notes; attack-path analysis and inventory capabilities for AI workloads are explicitly described. Microsoft has publicly announced Agent 365 and an expanded agent strategy (Copilot Studio, Foundry, Agent 365) and early-access programs (Frontier). Independent reporting from major technology outlets corroborates the high-level product direction.

- Needs cautious interpretation: market projections (for example, an IDC figure frequently quoted by Microsoft that projects ~1.3 billion agents by 2028) are vendor-cited and originate from sponsored research; they are useful as directional indicators but should be treated as no more than that.

Source: A new era of agents, a new era of posture | Microsoft Security Blog