Structured data has quietly become the single most practical lever brands can pull to show up not just in blue links, but inside the answers that people now trust: the AI-generated, citation-backed responses powering tools such as ChatGPT, Gemini, Perplexity, and Copilot. The implications for traffic, trust, and legal risk are already profound.

Background / Overview

Search used to be a simple funnel: user query → ranked links → clicks. That funnel is fragmenting. Conversational and generative systems increasingly synthesize information from multiple web sources and present a single, consolidated answer, often with an attached set of citations or an explicit “source” list. When those systems surface your content inside the answer itself, they create a new class of visibility that combines the reach of organic ranking with the immediacy of a featured snippet and the implicit authority of a cited source.

This new discovery surface is not hypothetical. Industry audits and academic investigations show that structured page-level signals (metadata, schema markup, and consistent entity identifiers) strongly correlate with whether a page is selected and cited by AI answer engines. In observational corpora, pillars related to structured data and semantic HTML were among the strongest predictors of citation. That correlation does not mean schema guarantees a citation, but it does suggest that structured data meaningfully improves your eligibility for being cited.

At the same time, platforms vary. Some AI systems explicitly expose citation telemetry and rely on retrieval layers that parse structured data; others use looser retrieval strategies that emphasize visible text. The net effect for brands: structured data is necessary but not sufficient — and it must be implemented with attention to context, provenance, and cross-platform consistency.

Why LLM citations matter for brands

- Visibility inside the answer flow. Being cited means your content appears within the conversational reply, often before a user would scroll or click through to a traditional result. That first impression matters and can redirect decision journeys.

- Authority and trust signals. A citation inside an AI answer functions like a micro-endorsement: it tells users (and downstream systems) that your content was judged by that system as relevant and verifiable.

- High-intent referral traffic. Users who click citations or expand sources tend to be further along the funnel — they want to verify, dive deeper, or transact. For publishers and merchants, that traffic is valuable and measurable.

- Brand recall in non-linear discovery. As agents and assistants become top-of-channel entry points, having your brand appear in answers builds recall outside traditional SERP positions.

The link between structured data and LLM understanding

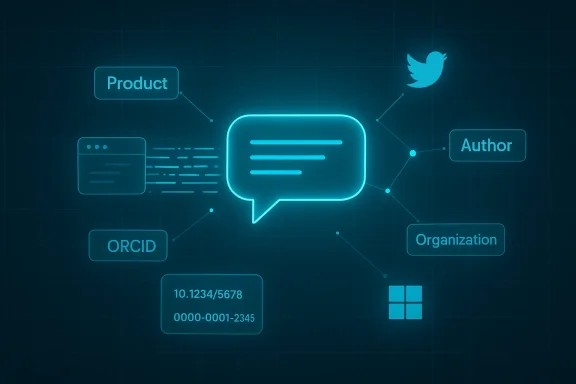

Large language models do two things in modern answer engines: they retrieve relevant content, and they synthesize that content into fluent answers. Structured data primarily helps the retrieval layer and the verification/provenance layer. It does this in three practical ways:

- Clear entity signals. Schema markup exposes the page’s core entities (product, price, author, organization, dates) in machine-readable fields. Retrieval systems and knowledge graphs use those fields to disambiguate similarly named entities and to prefer pages that explicitly declare authoritative attributes.

- Provenance and sourcing. Structured properties such as publisher, author, datePublished, isBasedOn, and sameAs help a system trace where a claim came from and how fresh it is. For dataset and research content, explicit citation and identifier fields (DOI, ORCID, ROR) are especially important.

- Extractability and precision. When an AI retrieval index can read facts as discrete fields rather than free-form prose, it can extract precise answers without hallucinating. That increases the chance your pages will be used as a direct quote or as the factual basis for a synthesized answer.
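To make the extractability point concrete, here is a minimal sketch of how a retrieval layer could read facts as discrete fields. It assumes the page embeds JSON-LD in a `<script type="application/ld+json">` tag; the class name `JsonLdExtractor`, the helper `extract_json_ld`, and the sample page are all hypothetical, and real answer engines may parse pages quite differently.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content may arrive in several chunks, so accumulate it.
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed markup is skipped rather than guessed at


def extract_json_ld(html):
    """Return every JSON-LD object found in an HTML string."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.blocks


# Hypothetical sample page: the price exists both as a field and as prose.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product",
 "name": "Example Widget",
 "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "USD"}}
</script>
</head><body><h1>Example Widget</h1><p>Now $19.99.</p></body></html>
"""

product = extract_json_ld(SAMPLE_HTML)[0]
print(product["name"], product["offers"]["price"], product["offers"]["priceCurrency"])
```

The price and currency come back as typed fields with no need to interpret the sentence "Now $19.99.", which is the practical difference between structured and free-form facts.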

Which schema types matter (and when)

Different page goals require different schema types. The pragmatic approach is to choose the schema type that reflects the page’s primary intent, and to populate both required and recommended properties with accurate, verifiable values.

- Article / NewsArticle
  - Use for editorial and thought-leadership pieces.
  - Populate author, datePublished, dateModified, headline, publisher (with logo), and mainEntityOfPage.
  - Why it matters: author and publication metadata are core provenance signals for AI summaries and news overviews.

- FAQPage and HowTo
  - Use for pages that directly answer user questions or provide step-by-step instructions.
  - Why it matters: these markups map naturally to conversational prompts and are highly extractable for assistants that present bulleted advice or step lists.

- Product, Offer, AggregateRating, Review
  - Use for ecommerce pages, product comparisons, and review hubs.
  - Why it matters: shopping-focused answer engines and comparison features look for structured price, availability, and rating data to ground recommendations.

- Organization / LocalBusiness
  - Use for brand pages and local listings.
  - Key fields: name, address, geo, sameAs (link to Wikidata/LinkedIn), contactPoint, openingHours.
  - Why it matters: local and brand attribution heavily rely on consistent NAP (name, address, phone) and canonical identifiers.

- Person (Author)
  - Use for author pages and expert bios.
  - Include sameAs (ORCID, LinkedIn), affiliation, and credentials.
  - Why it matters: author reputation and verifiable identity are inputs into model trust heuristics.

- Dataset
  - Use for research data and reproducible artifacts.
  - Include identifier, license, citation, and isBasedOn when appropriate.
  - Why it matters: AI systems that surface technical or academic answers prefer pages that expose datasets with machine-readable provenance.
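As one illustration of the Article properties listed above, the following sketch builds the markup as a plain Python dict and renders it as a JSON-LD script tag. The function names, the `#article` fragment used for `@id`, and every example.com URL are assumptions made for the example, not a prescribed template.

```python
import json


def build_article_json_ld(url, headline, author_name, author_same_as,
                          publisher_name, publisher_logo,
                          date_published, date_modified):
    """Return a schema.org Article as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "@id": url + "#article",  # assumed convention: canonical URL plus fragment
        "mainEntityOfPage": url,
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "sameAs": author_same_as,
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "logo": {"@type": "ImageObject", "url": publisher_logo},
        },
    }


def render_script_tag(data):
    """Serialize a dict into a JSON-LD <script> tag for the page template."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # "\/" is a valid JSON escape for "/", so this keeps the JSON equivalent
    # while preventing a stray "</script>" in a value from closing the tag.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Placeholder values for illustration only.
article = build_article_json_ld(
    url="https://example.com/blog/structured-data",
    headline="Why structured data matters",
    author_name="Jane Doe",
    author_same_as=["https://example.org/profiles/jane-doe"],
    publisher_name="Example Media",
    publisher_logo="https://example.com/logo.png",
    date_published="2024-05-01",
    date_modified="2024-06-15",
)
print(render_script_tag(article))
```

Generating the markup from the same values the template displays (headline, byline, dates) is one way to keep the JSON-LD and the visible page from drifting apart.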

Practical audit checklist: make your site AI-citable

Structured data is about correctness and consistency as much as it is about presence. Use this checklist as a practical playbook.

- Audit with the right tools:
  - Run Google’s Rich Results Test and the Schema Markup Validator to catch syntax and common semantic errors.

- Match schema to intent:
  - Don’t force a schema type that doesn’t match the visible content. If it’s a tutorial, use HowTo/FAQ; if it’s editorial, use Article. Misuse reduces eligibility.

- Mirror visible content:
  - Every fact declared in JSON-LD must be visible on the page. Hidden or contradictory metadata is a red flag and can nullify the benefit.

- Normalize your entity signals:
  - Use canonical URLs for @id, populate sameAs with authoritative identifiers (Wikidata, official social profiles, ROR/ORCID where relevant), and keep names and bylines consistent across pages and third-party platforms.

- Keep timestamps honest:
  - Populate datePublished and dateModified correctly; many answer engines favor fresh content for topical queries.

- Provide provenance links:
  - For claims that rely on external data, use isBasedOn, citation, or references fields to indicate original sources. This is particularly important for datasets and research.

- Consolidate and deduplicate:
  - Avoid multiple conflicting JSON-LD blocks for the same entity on a single page. Use a single well-structured block per canonical resource.

- Monitor citation telemetry:
  - Track referral clicks from AI tools where possible, and use brand-monitoring tools that attempt to surface AI citations and answer-engine visibility.
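Several of the checklist items can be approximated with a small script that runs alongside the validators. The sketch below is one hypothetical way to do it: `audit_blocks` takes JSON-LD blocks that have already been parsed into dicts plus the page's visible text, and the specific rules (flagging query strings in @id, comparing only the date portion of timestamps, checking just headline and name against visible text) are simplifying assumptions rather than any platform's actual criteria.

```python
from datetime import date
from urllib.parse import urlsplit


def audit_blocks(blocks, visible_text):
    """Return human-readable warnings for a page's parsed JSON-LD blocks."""
    warnings = []
    seen = {}
    text = visible_text.lower()
    for block in blocks:
        # Normalize entity signals: @id should be a stable absolute URL.
        entity_id = block.get("@id")
        if entity_id:
            parts = urlsplit(entity_id)
            if not parts.scheme or not parts.netloc:
                warnings.append(f"@id is not an absolute URL: {entity_id}")
            if parts.query:
                warnings.append(f"@id contains a query string: {entity_id}")
            # Consolidate and deduplicate: same @id, different content.
            if entity_id in seen and seen[entity_id] != block:
                warnings.append(f"conflicting blocks share @id: {entity_id}")
            seen.setdefault(entity_id, block)

        # Keep timestamps honest. Assumes ISO 8601 values; a malformed
        # date raises ValueError instead of being silently accepted.
        published = block.get("datePublished")
        modified = block.get("dateModified")
        if published and modified:
            if date.fromisoformat(modified[:10]) < date.fromisoformat(published[:10]):
                warnings.append("dateModified is earlier than datePublished")

        # Mirror visible content: a simple case-insensitive substring check.
        for key in ("headline", "name"):
            value = block.get(key)
            if isinstance(value, str) and value.lower() not in text:
                warnings.append(f"{key} not found in visible text: {value}")
    return warnings


# Deliberately flawed, made-up input to show each warning type.
blocks = [
    {"@type": "Article", "@id": "https://example.com/post?utm_source=x",
     "headline": "Structured data basics",
     "datePublished": "2024-05-01", "dateModified": "2024-04-01"},
    {"@type": "Article", "@id": "https://example.com/post?utm_source=x",
     "headline": "A different headline"},
]
for warning in audit_blocks(blocks, "Structured data basics. Published May 1, 2024."):
    print(warning)
```

A check like this does not replace the Rich Results Test or the Schema Markup Validator; it only catches consistency problems that syntax validators are not designed to see.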

Implementation patterns and technical tips

JSON-LD as the default format

Google recommends JSON-LD where a site’s setup allows it, describing it as the easiest format to implement and maintain at scale; it decouples markup from visible HTML and reduces breakage risk during template changes. Use a single JSON-LD block in the head or near the top of the body for each page, and avoid injecting isolated microdata fragments through multiple plugins.

Stable @id and canonicalization

Use absolute canonical URLs for @id values. Avoid query strings, session tokens, or ephemeral IDs. The same @id should always refer to one entity; inconsistency causes knowledge-graph fragmentation.

sameAs and external identifiers

Populate sameAs with links to authoritative external identities (Wikidata, LinkedIn, ORCID, ROR) where appropriate. For datasets and scholarly work, include DOIs in identifier fields. These external identifiers are powerful disambiguation signals for AI systems building entity graphs.

Dates and freshness signals

Explicitly provide datePublished and dateModified. For time-sensitive queries (news, product recalls, events), freshness is often a top selection criterion for citation. Some platforms weight recency heavily in their retrieval ranking.

Don’t make schema the only place you store facts

If a fact is meaningful, it should appear as readable text. Experiments suggest that systems which do not integrate structured data into the retrieval pipeline may ignore facts stored only in JSON-LD. Put the same facts in clear headings and first-paragraph sentences so retrieval layers that prioritize visible text still surface the content.

Measurement and ROI: how to know it’s working

- Establish baseline metrics for organic referral clicks, brand SERP prominence, and conversions.

- Instrument pages with UTM parameters if you can (for landing pages targeted by answer-engine users), and use event tracking to capture clicks from “source” or “learn more” actions inside AI interfaces when they are exposed.

- Use AEO/GEO platforms and publisher tools that report answer-engine citation shares; these platforms are emerging but offer early visibility into how often your content is referenced.

- Perform controlled A/B tests: deploy enriched schema on a set of pages and compare citation and referral outcomes against a matched control group.

- Not every AI tool surfaces click-level referrals; some keep users inside the assistant. Track downstream metrics (brand searches, direct traffic spikes, branded queries) alongside click metrics.

- Expect platform-specific variance: a page that gets cited by one answer engine may not be chosen by another. Don’t optimize to one tool unless your audience is concentrated there.
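For the controlled test described above, one simple way to compare the enriched pages against the control group is a two-proportion z-test on citation rates. This is a sketch under stated assumptions: each page counts as "cited" if it was observed as a source at least once during the test window, both groups are non-empty, and the counts in the usage example are invented for illustration. The function name `two_proportion_z_test` is hypothetical, and other statistical methods may suit small samples better.

```python
import math


def two_proportion_z_test(cited_a, total_a, cited_b, total_b):
    """Compare citation rates of two page groups.

    Returns (rate_a, rate_b, z, p_value) where p_value is two-sided.
    """
    rate_a = cited_a / total_a
    rate_b = cited_b / total_b
    # Pooled rate under the null hypothesis that both groups behave the same.
    pooled = (cited_a + cited_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Every page cited, or none: no difference can be measured.
        return rate_a, rate_b, 0.0, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Illustrative counts only: 200 pages per group, not real measurements.
rate_enriched, rate_control, z, p_value = two_proportion_z_test(30, 200, 18, 200)
print(f"enriched={rate_enriched:.1%} control={rate_control:.1%} "
      f"z={z:.2f} p={p_value:.3f}")
```

Because citation behavior varies by platform, it is probably more informative to run the comparison separately for each answer engine than to pool them.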

Risks, legal issues, and ethical considerations

Structured data helps visibility, but AI-driven citation practices have also provoked legal and ethical pushback.

- Copyright and republishing disputes. Publishers have brought suits against AI answer engines that allegedly ingest and reproduce premium content without permission. Lawsuits and publisher demands can change how platforms access and cite content, which in turn changes the citation dynamics brands rely on. Monitor rights and licensing considerations for your content model.

- Over-reliance on opaque systems. A citation gives perceived authority, but the model’s internal weighting and whether it extracted facts correctly remains opaque. Don’t treat AI citations as a substitute for audited backlinks or verified authority signals. Maintain the fundamentals of editorial quality and transparency.

- Mismatched metadata risks. Conflicting dates, authorship, or identity claims can harm both SEO and legal standing. If your JSON-LD misrepresents authorship or ownership, you could face search penalties or credibility loss. Google explicitly warns against misleading or hidden markup.

- Privacy and scraped data. If your pages expose user data in schema (don’t), you risk privacy violations. Only include public, non-sensitive data in structured markup.

What publishers and brands should prioritize this quarter

- Audit top-converting pages first. Those pages already have traffic and conversions; making them AI-citable maximizes ROI.

- Add or refine Article, FAQ, Product, and Organization schema where appropriate, ensuring visible text mirrors JSON-LD facts.

- Add authoritative external identifiers (Wikidata, ORCID) to organization and author objects.

- Add provenance fields to research and data assets (citation, isBasedOn, identifier).

- Run a controlled test: enrich schema on a segment of pages, monitor citation and referral patterns across multiple answer engines, and iterate.

Looking ahead: structured data as the language of discovery

Structured data is evolving from a search-engine nicety into a lingua franca for AI-powered discovery. Expect three converging trends:

- Richer entity relationships. Schema will expand beyond flat attributes to describe networks of people, organizations, and resources: the kind of graph data that powers explainable, traceable answers.

- LLM-specific markups. New vocabularies and properties could appear that are designed for retrieval-augmented generation and “explainable” outputs — fields that indicate confidence, evidence, and provenance in machine-readable ways. Early entrants and standards projects are experimenting with these primitives.

- Auto-generated, CMS-native schema. Expect major CMSs and SEO tools to bake richer JSON-LD generation into templates and to surface validation and provenance gates natively to editors. Automation reduces errors but also increases the importance of auditing defaults and customization.

Conclusion

Structured data no longer sits in the domain of technical specialists as an optional SEO trick; it’s a strategic asset for brands that want to own how they appear inside AI answers. Correctly implemented schema improves the precision of retrieval, surfaces provenance for verification, and helps answer engines select your content as a cited source. But implementation matters: JSON-LD is the recommended format, metadata must mirror visible content, external identifiers and provenance fields raise trust, and platform behavior varies, so test and measure.

Importantly, the landscape is dynamic. Legal disputes over content use, shifting platform behaviors, and evolving LLM pipelines mean that citation strategies must be monitored continuously. Brands that combine rigorous structured data practice with editorial quality, clear provenance, and measurement will gain the advantage, securing not just clicks, but citation share and the implicit authority that comes with being the source inside the answer.

For brands and publishers, the immediate playbook is straightforward: audit your highest-value pages, deploy accurate JSON-LD that reflects the visible content, add authoritative identifiers, and measure citation outcomes across multiple AI platforms. Done well, that work turns metadata into measurable business value — and keeps your brand visible in the answers people trust.

Source: Tri-City Herald https://www.tri-cityherald.com/news/business/article314865217.html