Symmetry Systems’ new Symmetry AIGuard brings a single-pane-of-glass approach to a problem that has become painfully obvious across enterprises in 2026: AI is proliferating faster than organizations can govern it, and shadow models, internal services, enterprise copilots, and autonomous “agentic” identities are introducing new, intertwined data-and-identity risks.

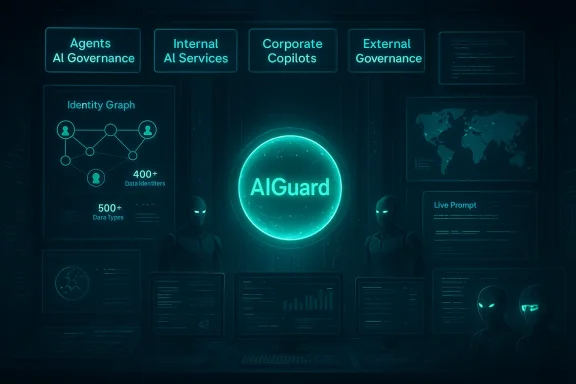

Background / Overview

Symmetry AIGuard, announced Feb. 25, 2026, is a standalone product spun out of Symmetry Systems’ Data+AI security portfolio. It promises unified visibility, governance, and enforcement across four pillars: agentic AI governance, internal AI services, corporate copilot security, and external LLM governance. The product builds on Symmetry’s established data-and-identity graph (the same foundation behind Symmetry DataGuard), which the vendor says includes more than 400 sensitive data identifiers and 500 semantic data types, to map what AI systems can reach and what that access actually means.

At a high level, Symmetry AIGuard claims to answer the core questions executives and security leaders now face: What AI is running? Who created or uses it? What sensitive data can it access? Is it sanctioned? And, crucially, what actions can we take immediately to reduce exposure? The platform emphasizes treating agent identities (autonomous AI agents and bots) as first-class security principals, applying identity governance, lifecycle sanctioning, and permission blast-radius mapping to both machines and humans.

This article unpacks what Symmetry AIGuard brings to market, why its approach matters for modern AI risk, the practical strengths of the product, potential gaps and risks organizations should weigh, and how security teams can operationalize these new controls without slowing innovation to a crawl.

Why an AI-specific governance layer is now table stakes

Adoption of generative AI and automation stacks has created multiple overlapping risk surfaces:

- Rapid adoption of external LLMs and hosted chat services by knowledge workers without central approval (shadow AI).

- Internal model deployments and Retrieval-Augmented Generation (RAG) pipelines that introduce new data paths between sensitive data stores and generative models.

- Enterprise copilots (vendor-built or in-house) that have broad data access yet are often configured inconsistently.

- Emerging agentic systems — scripted, chained, or autonomous agents that act on behalf of users — which can execute multi-step workflows with permissions that could be destructive.

What Symmetry AIGuard actually does — product breakdown

Agentic AI governance: identities, lifecycle, and blast radius

Symmetry AIGuard treats agents as security principals with the same scrutiny applied to human and service accounts. The capabilities Symmetry highlights include:

- Inventory of agents by type (Copilot Studio outputs, Microsoft Copilot platform agents, custom agent frameworks).

- Classification and registry metadata (who created the agent, purpose, ownership).

- Permission mapping and blast-radius analysis to determine what each agent can reach.

- Built-in sanctioning workflows that track approval state and lifecycle (approved, revoked, orphaned, dormant).

- Automated risk surfacing for high-risk agents (over-privileged, destructive capabilities, orphaned or dormant accounts).
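The lifecycle states and risk signals in the list above can be sketched as a small triage function. This is an illustration only, not AIGuard's API: the `Agent` record, its field names, the 90-day dormancy threshold, and the `DESTRUCTIVE_ACTIONS` set are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical values chosen for illustration only.
DESTRUCTIVE_ACTIONS = {"delete", "write"}
DORMANT_AFTER = timedelta(days=90)


@dataclass
class Agent:
    name: str
    owner: Optional[str]  # None means no active owner
    last_active: datetime
    # Maps a data store name to the actions the agent may perform on it.
    permissions: dict[str, set[str]] = field(default_factory=dict)


def blast_radius(agent: Agent, sensitive_stores: set[str]) -> set[str]:
    """Return the sensitive stores the agent can reach with any permission."""
    return {store for store in agent.permissions if store in sensitive_stores}


def risk_flags(agent: Agent, sensitive_stores: set[str], now: datetime) -> list[str]:
    """Collect the risk signals that apply to a single agent."""
    flags = []
    if agent.owner is None:
        flags.append("orphaned")
    if now - agent.last_active > DORMANT_AFTER:
        flags.append("dormant")
    for store in sorted(blast_radius(agent, sensitive_stores)):
        if agent.permissions[store] & DESTRUCTIVE_ACTIONS:
            flags.append(f"destructive-access:{store}")
    return flags


now = datetime(2026, 3, 1)
agent = Agent(
    name="marketing-bot",
    owner=None,
    last_active=datetime(2025, 9, 1),
    permissions={"crm-exports": {"read", "delete"}, "public-assets": {"read"}},
)
print(risk_flags(agent, sensitive_stores={"crm-exports"}, now=now))
# prints ['orphaned', 'dormant', 'destructive-access:crm-exports']
```

A real inventory would draw ownership and permission data from identity providers and cloud APIs; the point here is only that each flag is a simple, auditable predicate over identity and data context.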

Internal AI services security & governance

For internal AI services (the models and pipelines organizations build themselves), AIGuard aims to:

- Discover and inventory services by purpose (predictive, generative, RAG), environment, and instance.

- Map data dependencies and identify overexposure, residency, and regulatory issues.

- Provide enforcement actions from the same interface, reducing the need for triage tickets.
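The data-dependency and residency checks described above can be illustrated with a short sketch. It assumes a hypothetical inventory in which each service lists its data sources and each source carries classification labels; the `ALLOWED_REGIONS` policy table, the label names, and the region names are invented for the example and do not reflect Symmetry's taxonomy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataSource:
    name: str
    classifications: frozenset[str]  # labels attached by a classifier


@dataclass(frozen=True)
class AIService:
    name: str
    purpose: str  # e.g. "predictive", "generative", "rag"
    serving_region: str
    sources: tuple[DataSource, ...]


# Hypothetical policy: classification label -> regions where it may be processed.
ALLOWED_REGIONS: dict[str, set[str]] = {
    "export-controlled": {"us-east"},
    "pii-eu": {"eu-west"},
}


def residency_violations(service: AIService) -> list[tuple[str, str]]:
    """Return (source, label) pairs whose label forbids the serving region."""
    violations = []
    for source in service.sources:
        for label in sorted(source.classifications):
            allowed = ALLOWED_REGIONS.get(label)
            if allowed is not None and service.serving_region not in allowed:
                violations.append((source.name, label))
    return violations


contracts = DataSource("contracts-index", frozenset({"export-controlled"}))
wiki = DataSource("public-wiki", frozenset())
legal_rag = AIService("legal-assistant", "rag", "eu-west", (contracts, wiki))
print(residency_violations(legal_rag))
# prints [('contracts-index', 'export-controlled')]
```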

Corporate copilot security

Symmetry expands on its prior work securing Microsoft Copilot to support governance across enterprise copilots more broadly. Key operational questions it answers:

- Who has active copilot licenses and how are users engaging with copilots?

- What data is accessible to each copilot and does that access exceed policy intent?

- Are there geographic or compliance gaps (data residency, export controls) to address?

External LLM governance and shadow AI detection

AIGuard monitors both sanctioned and unsanctioned external LLM usage via proxy integrations. It claims to identify:

- Which models users are calling (ChatGPT, Gemini, Claude, etc.).

- Who is using them and which prompts contain sensitive material.

- Immediate policy violations, surfaced through real-time prompt and response monitoring.
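To make prompt inspection concrete, here is a minimal sketch of what a proxy-side check might look like. The two regular expressions stand in for the vendor's much larger identifier set, which is not reproduced here, and the `decide` policy (notify on sanctioned tools, block on unsanctioned ones) is an assumption for illustration rather than documented product behavior.

```python
import re

# Two illustrative detectors; a production system would use many more,
# plus contextual validation to limit false positives.
DETECTORS = {
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_prompt(prompt: str) -> dict[str, int]:
    """Count matches per detector, omitting detectors with no hits."""
    findings = {}
    for label, pattern in DETECTORS.items():
        count = len(pattern.findall(prompt))
        if count:
            findings[label] = count
    return findings


def decide(findings: dict[str, int], sanctioned: bool) -> str:
    """Hypothetical policy: allow clean prompts, otherwise notify or block."""
    if not findings:
        return "allow"
    return "notify" if sanctioned else "block"


prompt = "Summarize this record: jane.doe@example.com, SSN 123-45-6789"
findings = scan_prompt(prompt)
print(findings)                            # {'email': 1, 'us_ssn': 1}
print(decide(findings, sanctioned=False))  # block
```

Pattern matching of this kind catches well-formed identifiers but not paraphrased or contextual leaks, which is why semantic classification and human review remain part of the picture.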

Data-and-identity foundation

AIGuard is built on Symmetry’s data-and-identity graph, which aggregates sensitive-data detection (400+ identifiers) and semantic classifications (500+ data types). This taxonomy and mapping lets AIGuard answer not just “an AI accessed something,” but what was accessed and how sensitive the underlying asset is, enabling risk-prioritized responses.

Strengths: where Symmetry AIGuard can move the needle

- Data-to-identity context at scale. The product explicitly ties identities (human, service, agent) to sensitive data access — a core deficiency in many DSPM and DLP solutions today. This combination is the critical signal set for modern AI risk.

- Agent-first governance model. Treating autonomous agents as first-class identities reflects the operational reality of agentic AI and allows lifecycle controls, which many organizations lack today.

- Unified remediation workflows. Moving from detection to action inside the same platform reduces Mean Time To Remediation (MTTR), especially important when risky prompts or agent behavior must be stopped quickly.

- Pre-built semantic taxonomy. Having a broad, vendor-curated set of sensitive-data identifiers and semantic types accelerates deployment and policy creation for teams that lack a mature data taxonomy.

- Copilot and external LLM coverage. The product explicitly addresses corporate copilots and shadow LLM usage, both top-of-mind for CISOs worried about data exfiltration via conversational interfaces.

- Operational alignment for multiple stakeholders. Symmetry frames AIGuard as a tool for CTOs, CISOs, Legal, and Data leaders — necessary for cross-functional ownership of AI risk.

Potential gaps, limitations, and risks to consider

While the product addresses real problems, organizations will need to weigh several operational realities before assuming AIGuard is a silver bullet.

1. Visibility blindspots: encrypted channels and endpoint bypass

Proxy-based monitoring and prompt/response inspection can be effective for traffic that routes through managed proxies, but modern work patterns and third-party tooling often use encrypted channels, private browsers, or unmanaged endpoints. Users can also call LLMs via mobile devices or third-party connectors that bypass enterprise network controls. Achieving complete external LLM visibility will remain a cat-and-mouse game unless organizations combine proxy controls with endpoint agents, browser extensions, and strict policy enforcement.

2. False positives and semantic nuance

Mapping prompts and RAG queries to sensitive data types requires strong natural-language understanding and context. Enterprises that rely on aggressive classifiers may see elevated false positives, creating alert fatigue. Conversely, permissive classifiers risk missing subtle but high-impact data leaks embedded in paraphrased prompts. Successful deployments will need careful calibration, human review, and policies attuned to business context.

3. Integration and operational overhead

Delivering identity-and-data enforcement across clouds, SaaS, on-prem systems, and airgapped environments is technically demanding. Enterprises should prepare for integration work, especially where custom or homegrown AI frameworks and pipelines are in use. The promise of “single pane” control can mask substantial implementation complexity.

4. Governance, legal, and privacy implications

Real-time prompt/response monitoring captures potentially sensitive employee communications. Legal and HR teams must be engaged early to define acceptable monitoring practices, employee notice, and retention policies. Overly broad surveillance can create regulatory and morale issues, especially across jurisdictions with strong privacy protections.

5. Model behavior and non-determinism

Agentic systems and LLMs can behave unpredictably. Governance controls that revoke or alter privileges must account for non-deterministic outcomes and ensure safe fallbacks. For example, disabling an agent mid-run could leave partial state changes or orphaned processes. Lifecycle workflows must support safe decommissioning.

6. Vendor concentration and lock-in

Relying on a single vendor for both detection and enforcement increases dependency. Organizations should plan for data portability, exportability of policies, and integration with open standards to avoid lock-in.

Operational playbook: how organizations should evaluate and deploy AIGuard (or similar solutions)

Adopting an AI governance platform is as much process as it is technology. Below is a practical sequence IT and security teams can use.

- Convene a cross-functional steering group with Security, Legal/Compliance, Data Governance, and Dev/ML Engineering.

- Perform an initial AI inventory: catalog known copilots, internal models, RAG pipelines, and any agent frameworks in use.

- Conduct a controlled preview: deploy AIGuard in a non-production environment or with a pilot business unit to validate taxonomy matches and false-positive rates.

- Tune classifiers and policies iteratively with stakeholder feedback, focusing first on high-sensitivity assets and high-risk agents.

- Integrate enforcement actions with SOC workflows: SIEM, SOAR, ticketing, and incident response playbooks.

- Define lifecycle and approval workflows for agent creation and privileged capabilities; require human sponsorship for agents that request sensitive permissions.

- Establish continuous monitoring and auditing for sanctioned models and agents, plus a rapid deprovisioning path for orphaned or over-privileged identities.

- Document privacy disclosures and retention policies for prompt/response logging, and obtain legal signoff for monitoring policies across jurisdictions.
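For the pilot and tuning steps above, it helps to agree up front on how false-positive rates will be measured. The sketch below assumes reviewers label a sample of pilot traffic by hand; the `Verdict` record and the sample counts are hypothetical, and the formulas are the standard precision, recall, and false-positive-rate definitions rather than anything specific to AIGuard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    flagged: bool    # the classifier raised an alert
    sensitive: bool  # a human reviewer confirmed sensitive content


def pilot_metrics(verdicts: list[Verdict]) -> dict[str, float]:
    """Compute precision, recall, and false-positive rate from reviewed samples."""
    tp = sum(v.flagged and v.sensitive for v in verdicts)
    fp = sum(v.flagged and not v.sensitive for v in verdicts)
    fn = sum(not v.flagged and v.sensitive for v in verdicts)
    tn = sum(not v.flagged and not v.sensitive for v in verdicts)

    def ratio(numerator: int, denominator: int) -> float:
        return numerator / denominator if denominator else 0.0

    return {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "false_positive_rate": ratio(fp, fp + tn),
    }


# Hypothetical review sample: 3 true alerts, 1 false alert, 1 miss, 5 clean.
sample = (
    [Verdict(True, True)] * 3
    + [Verdict(True, False)]
    + [Verdict(False, True)]
    + [Verdict(False, False)] * 5
)
metrics = pilot_metrics(sample)
print(metrics["precision"], metrics["recall"])  # 0.75 0.75
```

Tracking these numbers per data type across tuning iterations gives the steering group an objective basis for deciding when a policy is ready to move from alerting to blocking.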

Practical scenarios: how AIGuard would function in the wild

Scenario A: Shadow LLM prompt leakage

An analyst uses a public LLM in a browser to summarize sensitive customer data and pastes a customer dataset into the prompt. AIGuard’s proxy monitoring flags the prompt as containing PII; the platform correlates the prompt to a classified dataset in the DataGuard taxonomy and raises an alert to the security and data governance teams. Depending on policy, AIGuard can (a) notify the user in-line, (b) block the outbound request, or (c) create an incident for manual review.

Scenario B: Orphaned autonomous agent with cloud privileges

A marketing automation agent built six months ago is still active, with permissions to delete objects in an S3-equivalent store. AIGuard’s inventory detects the agent as orphaned (no active owner), calculates blast radius using identity-to-data mapping, and marks the agent as high-risk. The platform triggers a lifecycle action to disable the agent pending owner verification and issues a remediation ticket to the cloud team.

Scenario C: RAG pipeline exposing regulated documents

A RAG pipeline used in legal workflows indexes contracts containing export-controlled terms. AIGuard maps the RAG data sources to semantic classifications indicating regulatory sensitivity and flags the pipeline for misconfigured residency settings. Security can then apply policy changes to restrict training/serving to compliant regions or require data redaction.

Competitive and market positioning

Symmetry’s approach emphasizes the marriage of identity governance and data classification specifically for AI workloads, which differentiates it from vendors who focus on either DSPM (data security posture management) or traditional IAM alone. This data+identity strategy is aligned with the direction of enterprise risk teams that now see AI risk as neither purely a data nor a model problem but an intersectional one.

However, success depends on execution: the breadth and accuracy of connectors, the fidelity of semantic detection, and the reliability of lifecycle enforcement across heterogeneous environments. Enterprises should assess these capabilities relative to their own AI footprint, cloud mix, and regulatory landscape.

Governance and policy recommendations for enterprise leaders

- Treat agent identities like privileged human users: require sponsorship, just-in-time access, and automated expiration.

- Apply least privilege to all AI systems, not just human accounts; map and restrict model access to the minimum data necessary.

- Implement real-time monitoring for external LLM use, but pair it with employee education and clear acceptable-use policies.

- Ensure legal and HR review of monitoring to avoid privacy violations and to comply with local laws.

- Use RAG hygiene: filter, redact, or transform sensitive inputs before they reach any external or internal model used for generative tasks.

- Maintain exportable policies and audit logs so controls and decisions are defensible in compliance or incident response reviews.
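The first recommendation (sponsorship, just-in-time access, and automated expiration) can be sketched as a time-boxed grant. The `Grant` record, the one-hour default lifetime, and the function names are assumptions for illustration; real deployments would enforce this in the identity provider or cloud IAM layer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Grant:
    agent: str
    resource: str
    sponsor: str          # accountable human
    expires_at: datetime  # access lapses automatically after this time


def issue_grant(agent: str, resource: str, sponsor: str, now: datetime,
                ttl: timedelta = timedelta(hours=1)) -> Grant:
    """Issue a short-lived grant; refuse if no human sponsor is named."""
    if not sponsor:
        raise ValueError("a human sponsor is required")
    return Grant(agent, resource, sponsor, now + ttl)


def is_allowed(grants: list[Grant], agent: str, resource: str, now: datetime) -> bool:
    """Access is allowed only while a matching, unexpired grant exists."""
    return any(
        g.agent == agent and g.resource == resource and now < g.expires_at
        for g in grants
    )


start = datetime(2026, 3, 1, 9, 0)
grants = [issue_grant("report-bot", "finance-db", "alice", start)]
print(is_allowed(grants, "report-bot", "finance-db", start + timedelta(minutes=30)))  # True
print(is_allowed(grants, "report-bot", "finance-db", start + timedelta(hours=2)))     # False
```

Because expiry is checked at access time, no cleanup job is needed to revoke a stale grant; forgetting to renew is the safe default.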

Where Symmetry AIGuard could evolve next

AIGuard’s initial capabilities map well to the immediate gaps enterprises face, but the product roadmap to reach broader enterprise maturity could include:

- Agent-level behavioral analytics: detect anomalous agent decision-making, not just static permission risks.

- Tight integration with model governance registries: link model training data provenance, drift metrics, and model-card metadata to enforcement decisions.

- Standardized policy export/import: interoperate with third-party governance frameworks and open taxonomies to reduce vendor lock-in.

- Expanded endpoint and browser protections to reduce blindspots from unmanaged devices.

- Privacy-preserving detection techniques to minimize retention of user prompt contents while still surfacing risk.
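As one possible reading of the last item, a monitoring pipeline could log a keyed hash of each prompt plus detection metadata instead of the prompt text itself. This is a generic technique, not something Symmetry has announced; the record fields and the single SSN-style detector are assumptions for illustration.

```python
import hashlib
import hmac
import re

# Single illustrative detector; real systems would use a broader set.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def audit_record(prompt: str, user: str, key: bytes) -> dict:
    """Build a log entry that omits the raw prompt text.

    The keyed HMAC lets investigators confirm whether a known prompt was
    sent (by recomputing the digest) without storing its contents.
    """
    digest = hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()
    return {
        "user": user,
        "prompt_hmac": digest,
        "prompt_chars": len(prompt),
        "ssn_matches": len(SSN_PATTERN.findall(prompt)),
    }


key = b"rotate-me-regularly"  # hypothetical secret held by the security team
record = audit_record("Customer SSN is 123-45-6789", "analyst-7", key)
print(record["ssn_matches"], record["prompt_chars"])  # 1 27
```

The trade-off is that reviewers cannot read the flagged prompt after the fact, so this approach suits alerting and audit trails better than detailed incident investigation.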

Final assessment: practical, necessary, but not a turnkey cure

Symmetry AIGuard addresses an urgent market need with a sensible premise: to govern AI effectively, you must connect identities, data, and AI behavior. Its emphasis on treating agents as first-class identities and combining that with a rich semantic data taxonomy is a meaningful advance beyond the piecemeal tooling many organizations currently stitch together.

That said, organizations should adopt AIGuard (or any similar platform) with realistic expectations. Achieving comprehensive coverage will require integrations across networking, endpoints, cloud platforms, and developer toolchains; policies must be tuned to business context to avoid excessive false positives; and legal/privacy impacts must be managed deliberately. When deployed as part of a cross-functional governance program, with Dev/ML, Security, Legal, and Data teams aligned, Symmetry AIGuard looks positioned to materially reduce the most acute AI-driven data risks while enabling responsible adoption.

For security teams racing to tame agentic AI and shadow LLMs, platforms that bring together identity and data context are no longer optional. They are foundational tools for safe innovation — provided they are implemented thoughtfully, transparently, and in coordination with the broader organization.

Source: Bolsamania Symmetry Systems Launches Symmetry AIGuard: The Industry's Most Comprehensive AI Security and Governance Platform