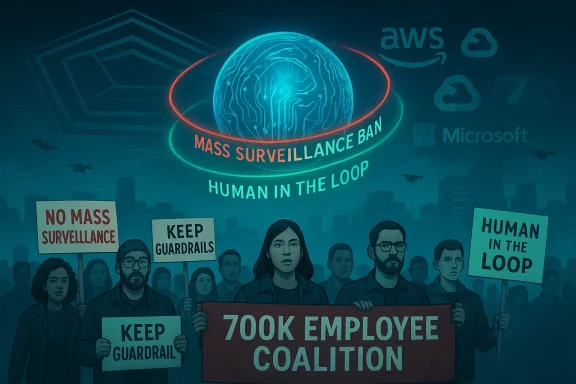

The short, sharp clash between the Pentagon and Anthropic has now spilled into boardrooms and cafeterias across Big Tech — and a coalition of worker groups claiming to represent roughly 700,000 employees has publicly asked Amazon, Google and Microsoft to refuse any Pentagon demands that would strip AI systems of guardrails against mass domestic surveillance and fully autonomous lethal systems.

Background / Overview

For more than a year the U.S. Department of Defense (DoD) has quietly accelerated efforts to put frontier AI models to use in classified operations. That effort ran headlong into Anthropic, the developer of the Claude family of models, when defense officials pressed the company to remove two explicit constraints from its model use policy: (1) a ban on using the model for mass domestic surveillance of Americans, and (2) a ban on allowing the model to operate as the decision-maker in fully autonomous weapons that can apply lethal force without human oversight. Anthropic’s leadership, led publicly by CEO Dario Amodei, has resisted those specific requests and framed them as fundamental, ethical red lines.

The Pentagon responded with unusually harsh steps for a government‑supplier dispute: it publicly designated Anthropic a “supply‑chain risk” and ordered federal agencies to cease using Claude in classified missions while signaling it might compel action through authorities such as the Defense Production Act. That escalation converted a behind‑the‑scenes procurement disagreement into a national policy crisis about who decides the permissible uses of advanced AI. Independent reporting and multiple official briefings confirm both the nature of the DoD’s demands and the Pentagon’s follow‑through on restrictions.

In direct response, worker organizations and unions across the technology sector issued a joint statement — organized and published by activists including the No Tech For Apartheid campaign — urging their employers to reject any similar Pentagon demands and to increase transparency around government contracts that touch surveillance and immigration enforcement. The organizers say the collective voice aggregates groups from Amazon, Google and Microsoft, as well as several unions and worker campaigns, and they peg the combined constituency at roughly 700,000 employees. The letter names specific concerns about contracts with agencies such as the Department of Homeland Security, Customs and Border Protection and Immigration and Customs Enforcement — and it explicitly frames the Anthropic dispute as a threshold test for whether companies will keep internal guardrails in place.

What the worker coalition is asking for

The joint worker statement contains three core requests:

- Executive leadership at Amazon, Google and Microsoft should refuse to accede to Pentagon demands that would remove safeguards against mass domestic surveillance and fully autonomous lethal systems.

- Companies should publish more transparency about existing and proposed agreements with federal agencies — especially those connected to immigration enforcement and domestic policing — and disclose the scope of data and capabilities provided through cloud and AI services.

- Workers urge broader public and legislative action: they call for federal regulation to restrict the use of AI in mass surveillance and autonomous weapons and ask employees across firms to organize to prevent their labor from being co‑opted into these outcomes.

The Pentagon’s position and legal tools

Senior Pentagon officials have argued publicly that companies should tune guardrails to permit lawful military uses; the point of friction is whether vendor-set limits constitute an unacceptable operational constraint during national‑security contingencies. According to reporting and interviews with Pentagon staffers, the DoD pressed Anthropic to modify terms that would otherwise prevent the military from deploying Claude in certain scenarios, including high‑tempo autonomous systems or domestic operations that the Pentagon characterizes as lawful surveillance.

Two legal levers have been discussed in public reporting:

- Supply‑chain designations — labeling a vendor a “supply‑chain risk” can dissuade other government customers from using that vendor and create practical barriers to federal contracting.

- The Defense Production Act (DPA) — while rarely invoked in this manner, executives and legal analysts have debated whether the DPA could be used to compel access to a supplier’s technical capabilities in the name of national defense if a vendor refuses to cooperate. Analysts caution that the legal fit is imperfect and politically contentious, but the DoD has publicly threatened both tools during this dispute.

Why the two guardrails matter (technical and ethical dimensions)

Mass domestic surveillance: technical reality and civil‑liberties risk

Mass domestic surveillance is not an abstract risk: AI models enhance the scale and speed of pattern finding across telephony, social media, imagery, and sensor networks. When models are fine‑tuned and integrated into real‑time pipelines, they can accelerate identifying, classifying and predicting individuals’ locations, affiliations and even emotional states. Vendors and civil‑liberties advocates argue that explicit vendor policy prohibiting such uses is a practical mitigation; defense officials counter that lawful intelligence and force‑protection activities sometimes require broad data access. The core dispute is whether vendor‑imposed use restrictions should bind government actors who claim lawful authority.

Fully autonomous lethal systems: the human‑in‑the‑loop debate

The second guardrail, prohibiting AI systems from making final lethal decisions without human oversight, sits at the heart of decades‑old debates about lethal autonomous weapons systems (LAWS). Technically, “human‑in‑the‑loop” can mean anything from a human making the final trigger decision after AI identifies a target, to a human supervising behavior at a system‑of‑systems level. The Pentagon’s interest in more autonomous systems is driven by perceived needs for speed, scale and survivability in contested environments, especially where peer adversaries are reportedly pursuing autonomy. Ethicists, engineers and many tech workers argue that delegating kill decisions to algorithms creates unacceptable risk of misidentification, escalation and legal liability. Anthropic’s refusal to accept a blanket removal of this guardrail was therefore pitched as an ethical boundary rather than a narrow contractual tweak.

Corporate and market fault lines

Major cloud providers and model developers occupy overlapping but distinct roles in the ecosystem:

- Cloud hosts (Amazon Web Services, Google Cloud, Microsoft Azure) operate the infrastructure that stores data and runs models. Federal contracts often depend on certified government cloud regions and compliance postures.

- Model creators (Anthropic, OpenAI, others) supply the models and, in many contracts, retain control over usage policies and model governance.

- Integrators and contractors stitch services into operational products used by agencies.

The worker movement’s precedent and leverage

Employee activism in tech is now an established force. Over recent years, campaigns such as No Tech For Apartheid, No Azure for Apartheid, Alphabet Workers Union and others have successfully pressured companies over Project Nimbus contracts, Israel‑related cloud deals, and internal policies. Those campaigns have demonstrated several mechanisms of influence:

- Public pressure and reputational risk: employee protests and open letters attract media attention and customer scrutiny.

- Collective bargaining and unionization: unions such as the Communications Workers of America (CWA) and company‑specific unions can leverage contractual tools where present.

- Operational friction: employee refusals to work on specific projects or internal whistleblowing create logistical and reputational costs for management.

Risks, trade‑offs and unintended consequences

National‑security risks of a vendor lockdown

If vendors uniformly refuse to adapt policy to DoD demands, the Pentagon faces a set of hard choices: continue negotiating, switch vendors (including foreign or emergent suppliers), build in‑house capabilities, or seek statutory compulsion. Each path carries operational costs and political implications. A vendor blacklist can create capability gaps and could push the DoD to accelerate in‑house tech development, a reasonable defense outcome in some scenarios, but one that could reduce the role of ethical guardrails enforced by commercial firms.

Corporate risk of capitulation

If companies accept Pentagon terms that strip the two guardrails, they risk significant reputational damage, employee unrest, regulatory scrutiny and potential legal exposure. The market reaction could include customer churn, worker resignations, and activist investor pressure. Past episodes (for example, controversy over cloud deals in conflict zones) show that public backlash can force rapid reversals or product removals. For companies, the calculus is not merely legal compliance but the long‑term social license to operate.

The legal and constitutional minefield

Forcing companies to change usage policies raises questions about compelled speech, procurement fairness, and due process. The DPA has never been widely used to require product‑use policy changes, and a test case here could create extended litigation and uncertain constitutional outcomes. That legal uncertainty amplifies the strategic risk for both sides. Observers have noted that there is no neat legal blueprint for compelling an American company to remove ethical safeguards from a product it controls.

What independent reporting confirms (verification of key claims)

I cross‑checked the most consequential claims against multiple reputable outlets and primary documents:

- The Pentagon did pressure Anthropic to alter guardrails related to mass surveillance and autonomous weapons, and DoD officials publicly discussed the dispute. This reporting has been confirmed in both AP and The Guardian coverage, as well as detailed reporting in several national outlets.

- The Pentagon labeled Anthropic a supply‑chain risk and ordered agencies to suspend use of Claude pending resolution. Multiple outlets reported the DoD’s designation and the order to agencies.

- The worker coalition’s joint statement — organized by No Tech For Apartheid and published on Medium — calls on Amazon, Google and Microsoft to refuse similar Pentagon demands and asserts a combined constituency of roughly 700,000 employees; the letter text and the claim are publicly available and have been republished by several press outlets. Independent journalists have reproduced the full text and signatory list.

Strategic analysis: strengths and weaknesses of the worker coalition’s approach

Strengths

- Rapid narrative framing: By naming clear, emotionally resonant guardrails — no mass domestic surveillance and no autonomous killing machines — the coalition turned a complex procurement dispute into a public‑facing moral dilemma that ordinary readers can grasp.

- Coalition breadth: Combining unionized workers with activist campaigns amplifies reach across media, policy advocates and internal stakeholders.

- Leverage on reputation and hiring: Big Tech firms rely on engineering talent and reputation. Sustained internal dissent raises real business risks that boardrooms must weigh against government pressure.

Weaknesses and operational limits

- Legal and procurement constraints: Worker demands are powerful politically but do not create a legal bar to DoD action. If the government chooses to escalate, companies could face binding orders or legal pressure.

- Coalition heterogeneity: The coalition aggregates many organizations; tactical unity may fray under sustained negotiation or if companies offer partial concessions.

- Potential for escalation: A hardline refusal by multiple major vendors could prompt the DoD to accelerate in‑house development or use other procurement pathways, lowering the influence of vendor ethics. That tactical shift could lead to less transparent development routes for defense AI.

Practical steps for companies, workers and policymakers

For companies (Amazon, Google, Microsoft, others)

- Publish clearer, machine‑readable summaries of government contracts touching surveillance and law enforcement so employees and the public can independently assess risk.

- Establish independent external review boards for defense contracts that include civil‑liberties experts, technical safety researchers and worker representatives.

- Maintain a public register of concessions sought or granted in national‑security negotiations to reduce opaque back‑channel concessions that provoke reputational backlash.
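As a rough illustration of what a machine‑readable contract summary and concessions register might look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ContractSummary` fields, the vendor and agency names, and the idea of flagging entries by two booleans are hypothetical and do not come from any company's actual disclosure format.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class ContractSummary:
    """Hypothetical public summary of one government contract."""
    vendor: str
    agency: str
    services: list[str]
    touches_surveillance: bool
    touches_law_enforcement: bool
    # Concessions sought or granted in negotiation (hypothetical free-text field).
    concessions: list[str] = field(default_factory=list)


def to_json(summaries: list[ContractSummary]) -> str:
    """Serialize with sorted keys so successive releases diff cleanly."""
    return json.dumps([asdict(s) for s in summaries], indent=2, sort_keys=True)


def flagged(summaries: list[ContractSummary]) -> list[ContractSummary]:
    """Return entries that touch surveillance or law enforcement."""
    return [s for s in summaries
            if s.touches_surveillance or s.touches_law_enforcement]


# Placeholder data only; these are not real vendors, agencies, or contracts.
example = [
    ContractSummary("ExampleCloud", "Example Agency A",
                    ["object storage"], False, False),
    ContractSummary("ExampleCloud", "Example Agency B",
                    ["image analysis"], True, True,
                    concessions=["usage-policy exception requested"]),
]

if __name__ == "__main__":
    print(to_json(flagged(example)))
```

A stable, sorted JSON output is one possible design choice here: it lets employees or outside reviewers compare two published versions of the register with ordinary diff tools rather than relying on prose summaries.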

For workers and unions

- Prioritize concrete bargaining objectives that translate moral demands into enforceable workplace protections or procurement transparency clauses.

- Coordinate with civil‑society groups and privacy advocates to translate internal pressure into public policy proposals, such as narrowly scoping acceptable defense uses of AI by statute.

- Use measured escalation tactics that preserve long‑term credibility: document specific harms and operational worries rather than rely solely on moral framing.

For policymakers

- Draft legislative guardrails that address mass‑surveillance capabilities and lethal autonomous weapons, making clear where federal agencies may and may not deploy third‑party AI systems.

- Clarify the limits on invoking the Defense Production Act for forcing model‑use policy changes to avoid ad‑hoc precedents that create chilling effects for civil‑liberties protections.

- Fund independent technical audits of AI systems used in defense contexts and create a statutory process for adjudicating disputes between vendors and the government.

What’s likely to happen next (plausible scenarios)

- Negotiated compromise: Anthropic holds the line and the DoD and Anthropic reach a narrowly framed compromise that preserves certain guardrails while permitting limited technical collaboration for specific defense use cases. This would de‑escalate public conflict but leave unresolved questions about future exigencies.

- Vendor capitulation: One or more major vendors acquiesce under pressure, changing their policies and setting precedent — likely triggering employee resignations, protests and regulatory scrutiny.

- Statutory escalation: The DoD attempts to compel access under the DPA or similar authorities. This would almost certainly prompt court challenges, prolonged litigation and a broader national debate around government power vs. corporate ethics.

- Fragmented market response: The Pentagon turns to alternate suppliers (domestic or foreign) or builds capabilities in‑house, reducing the leverage of any single model provider and creating a less transparent procurement path.

The verification caveats and unverifiable claims

- The 700,000 figure: it is the coalition’s stated combined membership across listed organizations; while it is repeatedly reported by news outlets and appears in the joint statement, independent auditing to confirm unique, active supporters was not available in the public record I reviewed. Treat the figure as the coalition’s claimed reach, not as an independently verified single‑source headcount.

- Closed‑door negotiation details: some reporting describes private moments and hypotheticals from DoD‑Anthropic talks (for example, references to hypothetical nuclear scenarios). Those items come from sources briefed on private conversations; they are appropriately included in public reporting but cannot be fully corroborated from public documents. Such reports should be labeled as sourced to participants or officials, rather than as independently verifiable facts.

- Future use cases: claims about how a model would behave in a mass‑surveillance pipeline depend on integration details, data flows and model fine‑tuning that are often proprietary and not publicly auditable. Technical risk descriptions here are grounded in normative and technical risk literature but not reducible to binary outcomes without system‑level audits.
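The distinction between a combined membership figure and a count of unique supporters can be shown with a toy calculation. The rosters below are entirely hypothetical and say nothing about the coalition's actual numbers; the sketch only shows why a sum across organizations and a deduplicated headcount can differ when people belong to more than one group.

```python
# Hypothetical rosters: each string stands in for one individual member.
rosters = {
    "union_a": {"ana", "ben", "chen", "dee"},
    "campaign_b": {"chen", "dee", "eli"},
    "campaign_c": {"dee", "fay"},
}


def summed_membership(rosters: dict[str, set[str]]) -> int:
    """Add up each organization's headcount, counting overlaps repeatedly."""
    return sum(len(members) for members in rosters.values())


def unique_membership(rosters: dict[str, set[str]]) -> int:
    """Count each individual once, however many groups they belong to."""
    return len(set().union(*rosters.values()))


if __name__ == "__main__":
    print("summed:", summed_membership(rosters))   # 4 + 3 + 2 = 9
    print("unique:", unique_membership(rosters))   # 6 distinct names
```

Without access to the underlying rosters, an outside reader cannot tell how large any such gap is, which is why the figure is best read as claimed reach.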

Conclusion — a new leverage point in the AI governance debate

The Anthropic–Pentagon confrontation has crystallized a broader governance question that will define AI policy for years: who gets to decide how state actors may use privately created intelligence? Worker collectives have seized a narrow tactical moment and converted it into a forward‑leaning public demand that tests corporate ethics against national‑security pressure. That interplay exposes the limits of soft governance (company policies and volunteer ethics) and the risks of hard compulsion (statutory orders that reconfigure procurement norms).

Companies now face a strategic choice that cannot be resolved purely by legal counsel or PR messaging. They must weigh operational obligations to government customers against long‑term social license and workforce cohesion. Policymakers face an equally hard choice: either legislate clearer boundaries for defense AI, a politically difficult but stabilizing move, or accept an ad‑hoc, case‑by‑case contest between the Pentagon, vendors and activist coalitions.

For workers, the current coalition demonstrates leverage: public moral claims backed by organized groups can reshape corporate calculations. But without clearer legal protections or statutory guardrails, worker influence risks being episodic rather than structural.

This dispute will not be settled by a single headline. It is a pivotal moment in which corporate governance, national security, labor power and civil liberties intersect. The decisions companies, workers and the DoD make in the coming weeks will set precedents that ripple through procurement law, the architecture of cloud and model governance, and the ethical contours of technological power.

Source: Moneycontrol.com https://www.moneycontrol.com/techno...ect-pentagon-ai-demands-article-13854485.html