Teramind’s latest announcement promises to give enterprises a single control plane for the messy, fast-moving world of autonomous AI agents — a commercial attempt to turn the “agentic enterprise” from a risk-prone experiment into an auditable, enforceable platform. According to a report published by 01net, Teramind positions its new offering as an AI governance platform built specifically to discover, inventory, and govern agentic AI — treating agents as first-class identities with lifecycle, data-access, and policy controls that mirror how enterprises manage service accounts and applications today.

Source: 01net Teramind Launches the First AI Governance Platform for the Agentic Enterprise

Background / Overview

Agentic AI (software systems that do more than answer a prompt and instead act autonomously, chaining calls, invoking APIs, modifying records, and initiating transactions) moved from research buzzword to enterprise priority in 2024–2026. That shift has created a clear marketplace: vendors racing to deliver capabilities for discovery, identity, access governance, observability, and policy enforcement tailored to agents rather than traditional ML models. Multiple vendors and platform providers now pitch purpose-built governance controls for fleets of agents, reflecting a widespread recognition that organizations cannot secure what they cannot see or control.

The regulatory and standards context amplifies those operational requirements. The NIST AI Risk Management Framework (AI RMF) and companion playbooks have set a widely accepted, risk-based structure (Govern, Map, Measure, Manage) that many vendors use as a blueprint for product design and compliance workflows. Enterprises are increasingly demanding tools that generate verifiable artifacts for these frameworks: inventories, lineage, testing evidence, and lifecycle records that auditors and legal teams can consume.

What Teramind says it is shipping

Product positioning and core claims

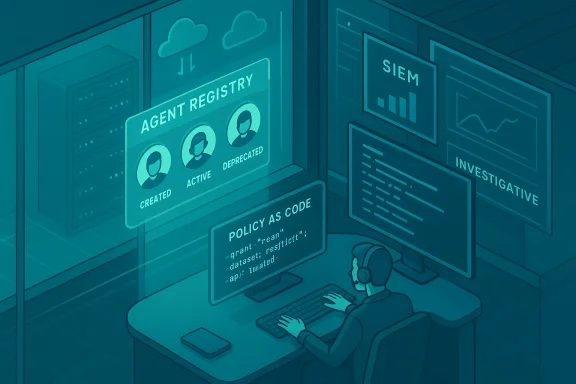

According to the 01net piece summarizing Teramind’s release, the new platform is built to:

- Discover and inventory agentic workloads across clouds, Copilot platforms, and custom agent frameworks.

- Treat agents as identities with attributes, owners, and lifecycle states (created, approved, active, deprecated).

- Provide policy-as-code controls for data access, escalation, and allowed actions, with enforcement points and audit trails.

- Offer observability and behavioral analytics that surface anomalous agent decisions, data access patterns, and policy violations.

- Integrate with existing security stacks (SIEM, IAM, DLP) so that agent activity becomes another auditable telemetry source.
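To make the "agents as identities" idea concrete, here is a minimal Python sketch of an agent record whose lifecycle moves through the four states named above. It is an illustration only, not Teramind's data model: the `AgentIdentity` class, its field names, and the transition rules in `ALLOWED_TRANSITIONS` are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class LifecycleState(Enum):
    CREATED = "created"
    APPROVED = "approved"
    ACTIVE = "active"
    DEPRECATED = "deprecated"


# Hypothetical transition rules; a real deployment would define its own.
ALLOWED_TRANSITIONS = {
    LifecycleState.CREATED: {LifecycleState.APPROVED, LifecycleState.DEPRECATED},
    LifecycleState.APPROVED: {LifecycleState.ACTIVE, LifecycleState.DEPRECATED},
    LifecycleState.ACTIVE: {LifecycleState.DEPRECATED},
    LifecycleState.DEPRECATED: set(),
}


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str
    attributes: dict = field(default_factory=dict)
    state: LifecycleState = LifecycleState.CREATED
    history: list = field(default_factory=list)

    def transition(self, new_state: LifecycleState, approved_by: str) -> None:
        """Move to new_state if the rules allow it, recording who approved the change."""
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        self.history.append((self.state.value, new_state.value, approved_by))
        self.state = new_state


# Example: a made-up agent moves from created to approved to active.
agent = AgentIdentity("invoice-bot", owner="finance-ops", attributes={"platform": "custom"})
agent.transition(LifecycleState.APPROVED, approved_by="ciso-office")
agent.transition(LifecycleState.ACTIVE, approved_by="finance-ops")
```

Recording every transition alongside the approver is what turns a registry entry into a lifecycle record that an auditor could later review.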

Feature highlights reported

01net’s coverage identifies several practical feature areas Teramind emphasizes:

- An agent registry with ownership metadata and risk classification.

- A policy engine capable of enforcing least-privilege access to datasets, systems, and APIs.

- Behavioral baselines for agents to enable anomaly detection when an agent deviates from expected workflows.

- Approval and sanctions workflows (human-in-the-loop gating for high-risk actions).

- Read-only investigative tools for security and HR teams so analysts can audit agent decisions without enabling further automation.
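The behavioral-baseline item in the list above can be illustrated with a deliberately simple Python sketch: record which tool and resource pairs an agent used during a trusted observation window, then flag any later event that falls outside that set. The event shape (`tool`, `resource`) and both function names are assumptions made for illustration; a production system would likely use richer statistical baselines.

```python
def build_baseline(events):
    """Return the set of (tool, resource) pairs seen during a trusted observation window."""
    return {(e["tool"], e["resource"]) for e in events}


def find_deviations(baseline, events):
    """Return events whose (tool, resource) pair never appeared in the baseline."""
    return [e for e in events if (e["tool"], e["resource"]) not in baseline]


# Hypothetical events for a single agent.
trusted = [
    {"tool": "sql.query", "resource": "invoices"},
    {"tool": "email.send", "resource": "vendor-contacts"},
]
observed = [
    {"tool": "sql.query", "resource": "invoices"},
    {"tool": "sql.query", "resource": "payroll"},
]

baseline = build_baseline(trusted)
deviations = find_deviations(baseline, observed)
print(deviations)  # [{'tool': 'sql.query', 'resource': 'payroll'}]
```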

How Teramind’s entry fits the market

Market dynamics: many vendors, one common problem

Teramind’s launch arrives at a moment when several companies have publicly released agent governance or control-plane products. Startups and established security vendors alike are shipping agent-aware inventories, policy enforcement points, and agent risk scoring; examples include vendor launches specifically addressing agentic governance and agent discovery on cloud marketplaces. The pattern is consistent: visibility first, then policy, then automated remediation.

Large observability and operations vendors are also folding agentic controls into their stacks. Platforms that already gather telemetry and perform causal analysis are announcing “agentic” capabilities to act and govern autonomously within operational contexts. That means customers who already invest in observability or security telemetry may get overlapping functionality from multiple suppliers, a strategic integration challenge IT leaders must plan for.

Distinguishing claims and credible differentiators

Teramind’s credibility rests on three practical differentiators if the platform delivers as described:

- DLP and insider-risk lineage: Teramind’s background in workforce intelligence should give it a data- and behavior-focused lens that is immediately valuable when agents interact with sensitive information. This background can translate into more precise detection of risky agent access patterns than vendors that approach governance purely from an identity perspective.

- Read-only investigative posture: Positioning investigative workflows as read-only by default reduces the risk of governance tooling inadvertently introducing automated changes or escalation paths — an operationally sensible design choice for early adopters.

- Policy-as-code + lifecycle controls: If the policy engine can enforce least-privilege access at runtime and produce verifiable lifecycle records, Teramind can meet auditors’ and regulators’ need for reproducible evidence. That aligns well with NIST AI RMF expectations for continuous governance.

Technical analysis — what enterprises should expect (and verify)

Agent discovery and identity binding

A credible agent governance platform must reliably find agents across multiple vectors: cloud-native orchestrations, embedded Copilot/assistant platforms, scheduled tasks, CI/CD pipelines, and user-facing chat integrations. Detection is non-trivial because agents may hide inside scripts, serverless functions, or third-party integrations and may assume human service-account credentials.

- Expect discovery to use a mix of telemetry connectors, API scans, and heuristics that flag agent-like behavior. Enterprises should verify detection coverage by testing simulated agents and measuring false negatives (missed agents) and false positives (human workflows misclassified).
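The coverage test described above reduces to simple set arithmetic. The following Python sketch assumes you planted a known set of simulated agents, know which workloads are ordinary human workflows, and have the set of workloads the discovery tool flagged; all identifiers are made up for illustration.

```python
def detection_rates(simulated_agents, human_workflows, flagged):
    """Compute miss and misclassification rates; every argument is a set of workload ids."""
    missed = simulated_agents - flagged          # false negatives: agents not found
    misclassified = human_workflows & flagged    # false positives: humans flagged as agents
    fn_rate = len(missed) / len(simulated_agents) if simulated_agents else 0.0
    fp_rate = len(misclassified) / len(human_workflows) if human_workflows else 0.0
    return {
        "false_negative_rate": fn_rate,
        "false_positive_rate": fp_rate,
        "missed": sorted(missed),
        "misclassified": sorted(misclassified),
    }


# Hypothetical proof-of-concept results.
simulated = {"sim-1", "sim-2", "sim-3", "sim-4"}
humans = {"hr-export", "nightly-backup"}
flagged = {"sim-1", "sim-2", "sim-3", "nightly-backup"}

report = detection_rates(simulated, humans, flagged)
print(report["false_negative_rate"], report["false_positive_rate"])  # 0.25 0.5
```

Keeping the lists of missed and misclassified workloads, not just the rates, gives the vendor something specific to investigate.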

Policy enforcement surface and timing

Policy-as-code is the right model: policies written in executable form that map to access controls, API call filters, and escalation requirements. But enforcement must be carefully placed:

- Enforcement at the data-access layer (gateway, database proxy, or DLP hook) prevents exposure at the source but can create latency and availability concerns.

- Enforcement at orchestration layers (agent orchestration platform, runtime container) offers behavioral control but may not prevent direct data exfiltration if an agent has separate credentials.
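As a rough illustration of what an executable policy might look like wherever it is enforced, the Python sketch below evaluates an agent's request against a rule list with a default-deny posture and an escalation path for high-risk actions. The `Rule` shape, the effect names, and the resource naming scheme are assumptions, not a description of any vendor's policy language.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    agent: str      # agent id pattern, e.g. "invoice-*"
    action: str     # e.g. "read" or "write"
    resource: str   # resource pattern, e.g. "db/invoices/*"
    effect: str     # "allow" or "require_approval"


def evaluate(rules, agent, action, resource):
    """Return 'allow', 'require_approval', or 'deny' (the default when nothing matches)."""
    decision = "deny"
    for rule in rules:
        matches = (
            fnmatchcase(agent, rule.agent)
            and action == rule.action
            and fnmatchcase(resource, rule.resource)
        )
        if not matches:
            continue
        if rule.effect == "require_approval":
            return "require_approval"  # escalation takes precedence over a plain allow
        decision = "allow"
    return decision


# Hypothetical least-privilege policy for one agent.
rules = [
    Rule("invoice-bot", "read", "db/invoices/*", "allow"),
    Rule("invoice-bot", "write", "db/payments/*", "require_approval"),
]

print(evaluate(rules, "invoice-bot", "read", "db/invoices/2024"))   # allow
print(evaluate(rules, "invoice-bot", "write", "db/payments/run-7"))  # require_approval
print(evaluate(rules, "invoice-bot", "read", "db/hr/salaries"))      # deny
```

The same `evaluate` call could sit behind a data-access proxy or inside an orchestration runtime; the placement trade-offs listed above apply regardless of how the rules are written.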

Observability, explainability, and auditability

Agentic governance is only useful if investigative teams can reconstruct what an agent decided and why. That implies:

- Action-level logs with inputs, prompt context, tool/API calls, and resulting outputs.

- Model, prompt, and data provenance to trace decisions to their sources.

- Tamper-resistant audit trails that can be exported for legal review.
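One common way to make an audit trail tamper-evident is to chain each entry to the hash of the previous one, so that altering any record invalidates everything after it. The Python sketch below shows the idea using only the standard library; the record fields are hypothetical, and a real system would also need to protect the latest hash itself, for example by anchoring it in write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash, record):
    """Hash the previous entry's hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log):
    """Recompute the chain and return False if any entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical action-level records for one agent.
log = []
append_entry(log, {"agent": "invoice-bot", "tool": "sql.query", "input": "SELECT ...", "output": "42 rows"})
append_entry(log, {"agent": "invoice-bot", "tool": "email.send", "input": "summary", "output": "sent"})

intact = verify(log)              # True while nothing has been modified
exported = json.dumps(log)        # the export carries the hashes, so it can be re-verified
log[0]["record"]["output"] = "0 rows"
tampered_ok = verify(log)         # False after the first record is edited
```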

Integration with existing security controls

The governance platform must interoperate with IAM, SIEM, DLP, and incident response tooling. The practical work here is mapping agent identities to existing account management constructs and ensuring policies can be expressed in terms security teams already use (roles, permissions, conditional access). Ask for concrete integration guides and evidence from proof-of-concept runs.

Strengths and potential benefits

- Rapid visibility into agent sprawl. Organizations often have ad-hoc agents in Slack bots, automation scripts, and RPA workflows; a registry helps centralize ownership and accountability.

- Reduced data-exfiltration risk. Applying least-privilege and DLP-aware policies specifically to agents cuts a high-risk vector for sensitive information leaks.

- Audit-ready evidence for risk frameworks. If the platform delivers lifecycle records and immutable logs, it simplifies compliance with frameworks like NIST AI RMF and obligations under emerging laws such as the EU AI Act.

- Operational containment without halting innovation. Human-in-the-loop gating and read-only investigative modes let teams experiment while containing high-risk actions until policies are proven effective.

Risks, limitations, and open questions

Discovery coverage and blind spots

No discovery tool is perfect. Agents that execute outside monitored cloud environments or that use stolen credentials remain a gap. Enterprises should plan for layered detection (host-based telemetry, network egress controls, and behavior analytics) rather than relying on a single product as a silver bullet.

Enforcement ambiguity and runtime fragility

Policy enforcement for improvisational agents can be brittle. A policy that blocks a legitimate multi-step workflow may cause business outages. Conversely, overly permissive policies fail to stop misuse. Expect an iterative policy tuning period with robust rollback and observability during enforcement rollouts.

False sense of security

Products that focus on inventory and alerts can create a surveillance mindset that substitutes detection for remediation. True governance requires processes: defined owners, lifecycle approvals, incident playbooks, and organizational accountability. Vendors that present product capabilities without operational playbooks risk leaving customers exposed.

Vendor trust, model risk, and supply chain issues

Many agentic stacks rely on third-party models and managed copilots. Governance platforms must surface not only agent behavior but the model and data sources those agents use. If a governance tool cannot trace agent decisions back to model versions and dataset lineage, it will struggle to produce defensible evidence in audits.

Practical adoption checklist for CIOs and CISOs

- Map current agent footprint. Run discovery across clouds, chat platforms, CI/CD pipelines, and RPA consoles to establish a baseline.

- Prioritize high-risk agents by access scope. Classify by data sensitivity, ability to perform high-impact actions, and production footprint.

- Run a limited PoC focused on detection fidelity. Verify false positive and false negative rates with red-team simulations.

- Validate enforcement paths. Test policies in monitor-only mode first, then phased enforcement with rollback capabilities.

- Integrate with existing GRC workflows. Ensure evidence artifacts feed automatically into audit, legal, and compliance systems.

- Define ownership, lifecycle rules, and approval workflows. Agents need named owners and lifecycle SLAs just like other enterprise services.

- Train incident responders on agent-specific failure modes. Agents introduce unique failure and exploitation patterns that IR teams must rehearse.
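The "monitor-only first, then phased enforcement with rollback" step in the checklist above can be sketched as a small gate that always logs the policy decision but only blocks when that policy has been switched to enforce mode. The policy ids, mode names, and function below are assumptions for illustration only.

```python
def apply_decision(policy_id, decision, modes, audit_log):
    """Return True if the action may proceed.

    decision is 'allow' or 'deny'; modes maps policy ids to 'monitor' or 'enforce'.
    Unknown policies default to 'monitor' so a new policy never blocks on day one.
    """
    mode = modes.get(policy_id, "monitor")
    blocked = decision == "deny" and mode == "enforce"
    audit_log.append({"policy": policy_id, "decision": decision, "mode": mode, "blocked": blocked})
    return not blocked


# Hypothetical rollout of a single policy.
modes = {"payments-write": "monitor"}
audit_log = []

first = apply_decision("payments-write", "deny", modes, audit_log)   # monitor: logged, not blocked
modes["payments-write"] = "enforce"                                   # phase 2: turn enforcement on
second = apply_decision("payments-write", "deny", modes, audit_log)  # enforce: blocked
modes["payments-write"] = "monitor"                                   # rollback is a one-line change
third = apply_decision("payments-write", "deny", modes, audit_log)   # monitor again: not blocked
```

Because the would-be denials are logged in monitor mode, the audit log doubles as evidence of how many legitimate workflows a policy would have broken before it is enforced.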

Regulatory and compliance implications

NIST’s AI RMF remains the most pragmatic, vendor-neutral framework for operationalizing governance outcomes (Govern, Map, Measure, Manage), and platforms that align their outputs to those functions will be easier to adopt across regulated enterprises. Demonstrable mapping between platform artifacts (agent inventory, policy enforcement logs, test results) and RMF functions is essential for audit readiness.

The EU AI Act and similar laws further elevate the importance of lifecycle controls, high-risk system registries, and incident disclosure obligations. Tools that can produce verifiable artifacts (model provenance, dataset lineage, risk assessments) will materially reduce compliance effort. But vendors and customers must be explicit: a governance platform alone does not equal compliance; documented processes and accountable roles do.

Competitive context — who else is shipping similar capabilities?

- Arthur launched an Agent Discovery & Governance platform on cloud marketplaces, emphasizing discovery and in-cloud governance workflows. This illustrates an emerging preference for marketplace-deployable governance that runs within a customer’s cloud tenancy.

- Symmetry Systems recently announced an AI security governance offering that treats agent identities as first-class principals, mapping permissions and blast radius in ways familiar to identity governance teams.

- Observability vendors are also entering the space: Dynatrace and others have integrated agentic controls into their telemetry-driven platforms to enable actions and governance informed by real-time operational context.

- Niche governance vendors and open-source projects are producing RMF-aligned control planes and playbooks for agentic AI; enterprises will have to weigh the benefits of single-vendor simplicity against best-of-breed composability.

Independent validation — what to ask Teramind before you buy

- Can you provide customer case studies showing reduced agent-driven incidents and measurable ROI from governance automation?

- What is the platform’s detection coverage matrix (cloud providers, Copilot studios, RPA frameworks, on-prem systems) and how is it updated?

- Where are enforcement points placed, and what latency or availability impacts have been measured under production loads?

- Can the platform produce immutable, exportable audit artifacts (action logs, prompt/response context, model IDs, dataset references) that map to NIST AI RMF functions?

- What mechanisms prevent the governance tool itself from becoming an automation vector (e.g., preventing policy loops, ensuring read-only investigative modes by default)?

Final assessment

Teramind’s announcement, as reported by 01net, is a sensible, market-timed product move: enterprises urgently need tools to manage agentic AI, and platforms that can tie discovery, policy, and auditability together address a real operational gap. The vendor’s DLP and insider-risk background gives it a plausible differentiation: contextual behavioral detection where many identity-first solutions may lack nuance.

Yet the product’s ultimate value will be determined in the field. Key success factors include discovery completeness, safe enforcement patterns, verifiable audit artifacts, and documented operational playbooks that integrate governance into day-to-day change management. Enterprises should treat these platforms as critical infrastructure that requires a disciplined rollout: proof-of-concept with measurable risk-reduction goals, phased enforcement, and explicit legal/compliance sign-off. The market also remains crowded, with observability, security, and governance vendors converging on similar feature sets; interoperability and clear integration playbooks will separate winners from also-rans.

As agentic AI moves from pilot to production, governance platforms like Teramind’s will be necessary but not sufficient. Governance succeeds when technology, process, and accountability align — the platform supplies the “how,” but organizations must supply the “who” and the discipline to act on what the platform reveals.

Quick practical takeaway for WindowsForum readers

- Start with discovery: run a controlled agent discovery exercise this quarter and baseline your agent count and data access scopes.

- Prioritize high-sensitivity data and high-impact agents for immediate lifecycle controls.

- Use governance products in monitor-only mode first, validate artifacts against NIST AI RMF requirements, then adopt phased enforcement.

- Require vendors to demonstrate verifiable audit exports and integrations with your existing SIEM, IAM, and DLP stacks before procurement.
