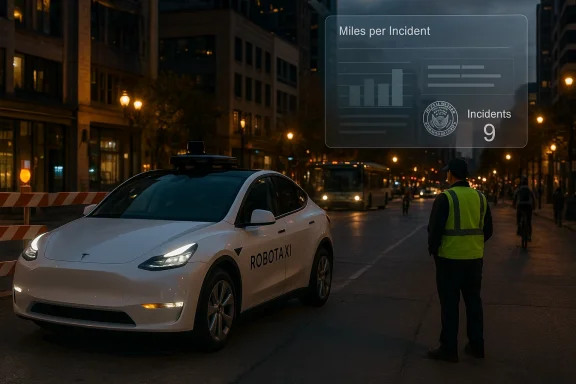

New federal incident filings and Tesla’s own disclosures paint a stark picture: between July and November 2025, Tesla’s experimental robotaxi fleet in Austin was listed in nine NHTSA reportable crashes across roughly 500,000 cumulative miles. Taken at face value, that incident frequency is materially higher than widely used human-driver benchmarks and far above the performance shown by leading robotaxi operators. The gap is not just statistical; it exposes deep questions about testing methods, reporting transparency, and whether Tesla’s “Robotaxi” promise is ready for public streets at scale.

Background

Tesla’s robotaxi effort, built on its Full Self-Driving (FSD) stack and deployed in modified Tesla Model Y vehicles, moved into public view in mid‑2025 with invite-only service in Austin, Texas, and supervised pilot operations in other regions. As required under the National Highway Traffic Safety Administration’s (NHTSA) Standing General Order for automated driving systems, incidents in Austin where autonomous mode was engaged have been reported to the agency.

During the first months of operations a cluster of incidents was recorded and publicly disclosed through those NHTSA filings. At roughly the same time Tesla began publishing cumulative robotaxi mileage in investor materials. Journalists and independent trackers combined the two datasets to produce a simple metric: miles-per-incident (MPI). Using that approach, the Tesla Austin program’s MPI through November 2025 works out to about one reportable crash every 55,000 miles, substantially worse than commonly cited human-driver baselines and behind the performance of mature robotaxi operators that publish their results.

Before accepting that headline number at face value, it’s essential to unpack how those figures are built, what they actually mean, and where the analysis becomes fragile.

The raw numbers and what they claim

- Tesla-reported incidents (NHTSA SGO filings): 9 reportable crashes tied to the Austin robotaxi program between July and November 2025.

- Tesla-disclosed cumulative robotaxi miles (Tesla Q4 2025 investor materials): ~500,000 miles as of November 2025.

- Simple MPI calculation: ~500,000 miles / 9 incidents = ~55,000 miles per incident.

- Common human-driver benchmark (police‑reported crashes): ~1 police-reported crash per ~500,000 miles.

- Adjusted human-driver estimate (including non‑police-reported minor collisions): frequently cited ~1 crash per ~200,000 miles.
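
The arithmetic behind the headline comparison can be reproduced in a few lines. The sketch below uses only the figures listed above; the function and variable names are illustrative choices, not part of any published methodology.

```python
# Figures quoted in the list above (all approximate).
MILES = 500_000      # Tesla-disclosed cumulative robotaxi miles
INCIDENTS = 9        # NHTSA SGO reportable crashes, July-November 2025

# Human-driver baselines quoted above, expressed as miles per crash.
BASELINES = {
    "police-reported crashes": 500_000,
    "including unreported minor collisions": 200_000,
}


def miles_per_incident(miles: float, incidents: int) -> float:
    """Return the simple MPI: miles driven divided by incident count."""
    if incidents <= 0:
        raise ValueError("MPI is undefined without at least one incident")
    return miles / incidents


def rate_ratio(baseline_mpi: float, fleet_mpi: float) -> float:
    """How many times more frequent fleet incidents are than the baseline."""
    return baseline_mpi / fleet_mpi


mpi = miles_per_incident(MILES, INCIDENTS)
print(f"Fleet MPI: {mpi:,.0f} miles per incident")
for label, baseline in BASELINES.items():
    ratio = rate_ratio(baseline, mpi)
    print(f"vs. {label}: {ratio:.1f}x the incident frequency")
```

Against the police-reported baseline the ratio comes out at 9, and against the adjusted estimate at 3.6. The choice of baseline alone moves the headline multiple by a factor of 2.5.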

However, the headline comparison obscures many crucial caveats—some methodological, some technical, and some legal—that must be examined before concluding that the program is categorically unsafe.

Timeline and nature of the Austin incidents

What the NHTSA filings reveal

The nine NHTSA entries covering July–November 2025 include a mix of low-speed and higher-speed events. Reported types include:

- Low-speed contact with stationary objects in parking lots.

- Collisions with other SUVs during turns or in construction zones.

- A collision involving a cyclist.

- An animal strike at a reported speed above 27 mph.

- A wrong-way right turn that resulted in a collision.

Observers, supervisors, and the “safety monitor” factor

During much of this period the Austin robotaxis were accompanied by human safety monitors, employees whose role was to watch the trip and take control if needed. The presence of a trained monitor in the vehicle should in principle reduce crash frequency versus a completely unsupervised vehicle, because the human can intervene when the system misbehaves.

Two operational shifts matter here:

- Tesla publicly announced phasing out onboard safety monitors in parts of Austin and moving toward unsupervised operation in certain geofenced areas.

- Independent observers and local bloggers reported that Tesla began using companion/chase vehicles or insurance arrangements to accompany prototypes; this claim is not consistently documented in public filings and remains partly anecdotal.

Why the simple MPI comparison is misleading (methodology concerns)

A direct miles-per-incident comparison between Tesla’s early Austin data and national human-driver averages treats heterogeneous datasets as if they were apples-to-apples. They are not. Key methodological problems:

- Differences in reporting thresholds: NHTSA SGO requires ADS operators to report crashes where the autonomous system was engaged. That includes any crash meeting the threshold, even low-speed contact that a human driver might not report to police. Human-driver national averages (the “1 per ~500,000 miles” figure) refer to police‑reported crashes, which are a different, typically higher-severity class.

- Denominator mismatch and timing: Tesla’s mileage figure is cumulative and presented as of November 2025, whereas the NHTSA reports are discrete entries with variable reporting lags. Counting miles up through a given month while the incident reports for that month show up later can distort MPI. Early-stage fleets also have highly variable utilization rates (few vehicles, concentrated operation), which creates noisy per‑mile stats.

- Small-sample statistics: Nine incidents across a nascent, likely small fleet is an extremely small sample. Early months for any new mobility program tend to produce higher incident rates as the system encounters edge cases. Small fleets produce volatile MPI numbers that can swing dramatically with each new incident or with slightly different mileage assumptions.

- Fault attribution: NHTSA SGO reports list incidents, but they do not always assign causation or fault in a way that’s directly comparable to human traffic-safety statistics. A robotaxi being struck by another vehicle is very different from a robotaxi making an unsafe maneuver; counting both as identical “incidents” hides crucial nuance.

- Geographic and operational domain differences: Tesla’s Austin robotaxis operate in a limited geofence that may contain dense urban interactions, school zones, cyclists, and construction—conditions that can produce higher incident density than the national driving mix, which includes safer highway miles and rural driving.

- Redactions and missing narrative context: Tesla claimed confidential business information (CBI) protection for parts of some NHTSA narratives. When narrative context is redacted, analysts cannot determine whether an incident was a system failure, another driver’s fault, or an unavoidable occurrence.
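
The small-sample concern can be made concrete with a rough uncertainty estimate. The sketch below assumes, purely for illustration, that crashes arrive as a Poisson process over the disclosed mileage, and computes an exact (Garwood-style) 95% interval for the expected crash count by bisection using only the standard library. The Poisson model and all function names are assumptions of this sketch, not something the filings or Tesla's materials specify.

```python
import math

MILES = 500_000   # Tesla-disclosed cumulative miles (approximate)
INCIDENTS = 9     # NHTSA SGO reportable crashes


def poisson_cdf(k: int, mean: float) -> float:
    """P(X <= k) for X ~ Poisson(mean)."""
    if k < 0:
        return 0.0
    term = math.exp(-mean)
    total = term
    for i in range(1, k + 1):
        term *= mean / i
        total += term
    return total


def solve_decreasing(f, target: float, lo: float, hi: float) -> float:
    """Bisection for f(x) == target, where f is decreasing on [lo, hi]."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if f(mid) > target:
            lo = mid   # f still too large, so the root lies to the right
        else:
            hi = mid
    return (lo + hi) / 2


def exact_poisson_interval(k: int, alpha: float = 0.05) -> tuple[float, float]:
    """Exact two-sided interval for the Poisson mean given k observed events."""
    ceiling = 10 * k + 50  # generous search ceiling for the mean
    lower = 0.0
    if k > 0:
        # Smallest mean for which seeing k or more events is not too unlikely.
        lower = solve_decreasing(
            lambda m: poisson_cdf(k - 1, m), 1 - alpha / 2, 0.0, ceiling
        )
    # Largest mean for which seeing k or fewer events is not too unlikely.
    upper = solve_decreasing(lambda m: poisson_cdf(k, m), alpha / 2, 0.0, ceiling)
    return lower, upper


lower, upper = exact_poisson_interval(INCIDENTS)
print(f"Expected crashes, 95% interval: {lower:.2f} to {upper:.2f}")
print(f"MPI range: {MILES / upper:,.0f} to {MILES / lower:,.0f} miles")

# Sensitivity of the point estimate to a single incident more or less.
for k in (INCIDENTS - 1, INCIDENTS, INCIDENTS + 1):
    print(f"{k} incidents -> MPI {MILES / k:,.0f}")
```

Under these assumptions the interval spans roughly 4.1 to 17.1 expected crashes, or about 29,000 to 121,000 miles per incident. That is a fourfold spread around the 55,000-mile headline, and it is before any of the reporting-threshold, timing, or fault-attribution mismatches listed above are taken into account.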

How the industry benchmark (Waymo and peers) compares

Waymo, Alphabet’s robotaxi subsidiary, operates at a much larger scale and publishes detailed safety and operational data. Waymo’s reported cumulative fully autonomous miles reached into the tens or hundreds of millions across multiple U.S. cities by late 2025, and its local safety publications show a markedly lower incident rate on comparable metrics. Where Waymo’s operations have been scrutinized, the company generally provides richer incident narratives and routine safety reporting that distinguish between at-fault events and incidents caused by external actors.

Key contrasts with Waymo:

- Scale: Waymo’s fleet has logged orders of magnitude more autonomous miles than Tesla’s early Austin deployment, which provides statistical robustness and faster learning from edge cases.

- Transparency: Waymo voluntarily publishes detailed safety reports by city and includes narrative analysis; Tesla’s NHTSA filings sometimes omit narrative details due to CBI claims.

- Operational model: Waymo’s early commercial services were built from driverless architecture and controlled geofences, often combined with extensive simulation and human-in-the-loop verification processes prior to full public deployment.

What the federal regulator and others are doing

NHTSA enforces reporting rules under its Standing General Order governing automated driving systems. The SGO requires operators to report qualifying crashes and gives the agency tools to follow up. NHTSA has been in contact with Tesla about robotaxi incidents and has previously opened broader inquiries into Tesla’s FSD reporting practices.

Key regulatory and public responses include:

- NHTSA collection of SGO incident reports and the capacity to audit reporting completeness.

- Public and local scrutiny in Austin, where residents and advocacy groups have documented robotaxi interactions on city dashboards and on social media.

- Media and independent trackers flagging redactions and pushing for more narrative transparency.

- Broader regulatory focus on whether safety monitors can be removed while fleet reliability remains unproven.

Tesla’s operational choices: progress, risk, and opacity

Tesla’s strategic moves in Austin reveal a tension between rapid deployment and conservative risk management.

- Removing safety monitors: Tesla announced the removal of onboard observers in some Austin operations, signaling an operational confidence shift. Removing safety monitors reduces operational cost and is consistent with a business case for scaled robotaxi service, but it also eliminates the last human safety net in the vehicle and raises the stakes of each software decision.

- Redacting incident narratives: Tesla has used the CBI clauses in SGO filings to redact narrative fields. The SGO expressly permits confidential business claims for certain data fields, but the practice increases public and regulator distrust when it obscures whether incidents are systemic or isolated.

- Fleet utilization and insurance: Independent observers reported evidence (bloggers, on‑street sightings) suggesting Tesla uses companion or chase vehicles and specific insurance arrangements for prototypes; this appears to be at least partially anecdotal and is not consistently documented in regulatory filings. Where it is true, such practices are operationally sensible but also raise questions about real-world independence of the tests.

Technical analysis: why these incidents matter for autonomy

A handful of recurring technical failure modes explain why nascent autonomous fleets often show higher early incident rates:

- Perception edge cases: Urban objects (e.g., temporary construction cones, oddly shaped vehicles, animals, cyclists at oblique angles) can trigger sensor misclassification or failure to detect. Some Austin incidents involved fixed objects or a cyclist, both classic perception stressors.

- Decision-making and prediction: Autonomous stacks must predict intent of other road users. Wrong‑time or wrong‑direction turns and failure to anticipate a cyclist’s trajectory are failures in prediction and planning modules.

- Localization drift and map updates: If HD mapping or localization does not match a temporarily modified road (construction zones, lane closures), the planner can choose unsafe maneuvers.

- Human-machine handoffs and monitoring: With safety monitors seated out of the driver’s seat (or removed entirely), latency for human intervention grows. Even a vigilant monitor cannot always compensate for sudden system errors.

- Edge-case exposure: Small pilot fleets are exposed to rare scenarios more slowly; only by scaling do systems collect enough examples to robustly learn to handle corner cases—provided the company is transparent and shares anonymized data for broader validation.

Strengths and credible signals of progress

It would be unfair to portray Tesla’s program as only regressive. There are areas of progress and legitimate operational strengths:

- Rapid iteration and data collection: Tesla has demonstrated a capacity for fast software updates and frequent fleet learning cycles, which can compress the time to address systemic faults.

- Public mileage disclosure: Including robotaxi mileage in investor materials is a notable step toward openness about usage and exposure.

- Moving to unsupervised operation in carefully selected geofences can accelerate learning in real traffic without relying on contrived testing environments.

- Competition drives improvement: The presence of multiple operators (Waymo, Zoox, Tesla, others) in the same urban market accelerates comparative learning and forces better safety practices.

Risks and wider implications

- Public safety risk: Even if a fraction of the incidents were not the autonomous system’s fault, higher incident frequency in early deployments increases the chance of serious injuries as scale increases.

- Reputational and market risk: Growing media narratives showing Tesla behind competitors on basic safety metrics can erode public trust and investor confidence.

- Regulatory clampdown: Patterned concerns could trigger stricter reporting and operational constraints from NHTSA or local authorities, slowing expansion.

- Insurance and liability complexities: Redacted narratives and novel operational models complicate determination of liability and insurance underwriting for robotaxi fleets.

- Data opacity: If operators withhold narrative details, independent researchers cannot evaluate systemic risk or propose fixes, slowing collective progress for the industry.

Recommendations — what regulators, Tesla, and the industry should do

- For regulators:

    - Standardize incident categorization so that comparisons across operators and between AVs and human drivers are meaningful.

    - Require time-bound justification for CBI redactions and publish sanitized narratives that preserve IP while allowing public safety assessment.

    - Mandate phased removal of onboard safety monitors only after verifiable performance thresholds are met and audited.

- For Tesla:

    - Publish sanitized incident narratives and an explicit methodology for MPI and other safety metrics to enable independent scrutiny.

    - Publicly commit to target thresholds (e.g., MPI targets, reductions in at-fault incidents) that must be met before scaling unsupervised operations.

    - Share anonymized data about edge cases with a consortium of academic and industry partners to accelerate research and remediation.

- For the AV industry:

    - Agree on common safety reporting standards that harmonize definitions (reportable crash vs. police‑reported vs. non‑reportable contact).

    - Collaborate on shared simulation datasets for rare edge cases (animals, odd construction geometries, school bus interactions).

    - Create a neutral, third-party incident review board for early deployments to adjudicate causes and recommend fixes.

Conclusion

Tesla’s Austin robotaxi dataset (nine NHTSA‑reported incidents across roughly 500,000 cumulative miles through November 2025) is an unmistakable red flag that demands rigorous analysis. The headline MPI metrics raise real concerns, especially given Tesla’s removal of onboard safety monitors and the company’s reluctance to fully disclose incident narratives. But headline arithmetic alone cannot settle the question of system readiness. The data are noisy, the sample small, and fundamental methodological mismatches complicate direct comparisons to national human-driver averages or to other operators.

The most constructive path forward is transparent measurement, independent verification, and conservative operational gating. If Tesla and regulators treat these early incidents as diagnostic rather than declarative (documenting causes, publishing sanitized incident descriptions, and agreeing on clear safety thresholds), then the company’s rapid engineering cycles can still translate into safer, more reliable autonomy. If, instead, deployment outpaces verifiable safety improvements and opacity persists, regulators and the public will rightly demand slower, more accountable progress.

Autonomy’s promise—fewer crashes, lower congestion, and new mobility choices—remains within reach, but delivering on it will require humility in public claims, rigor in safety reporting, and cooperation among companies, regulators, and researchers. The Austin incidents are not an indictment of the entire industry, but they are a timely reminder that real-world driving is unforgiving, and that safety must be proven in the open, not asserted in investor decks.

Source: fakti.bg Teslas robo-taxis are involved in accidents nine times more often than regular driverless cars