Tshwane University of Technology’s Faculty of Information and Communication Technology (FoICT) used a hands‑on Microsoft Copilot workshop to give first‑year ICT students a practical introduction to generative AI across research, writing, coding and productivity — an experience designed to bridge classroom theory and workplace practice while pointing to the governance and ethical questions every campus must now answer. The session, organised with industry partner Scadco and delivered by educational consultant Ryan Gallus, combined demos, a team “Promptathon” challenge and guided practice with Microsoft learning resources to help students learn how to use AI tools effectively, and why thoughtful rules and pedagogy are required as these tools enter everyday academic work.

Background: TUT, industry partners and the rise of Copilot in higher education

Tshwane University of Technology has been steadily expanding its AI and digital‑skills activity in recent years, from AI Weeks and short courses to formal partnerships aimed at closing the skills gap between graduates and employers. The university’s engagement with private-sector training and Microsoft initiatives reflects a broader trend: universities are increasingly treating practical AI literacy as core to the curriculum rather than optional enrichment. This workshop, part of those efforts, brought a vendor‑aligned, practice‑led format into a first‑year ICT cohort to accelerate basic competence and confidence with widely used productivity AI.

Scadco’s education and skills teams are active across South Africa in AI training and Copilot‑focused classroom activities. The company’s portfolio shows dedicated roles in curriculum and workshop design, confirming the presence of education consultants who design prompt engineering and Copilot exercises for campus workshops. That industry presence matters: effective, safe AI adoption in universities almost always involves partnerships that provide both technical know‑how and classroom‑ready exercises.

What happened at the workshop — structure, content and activities

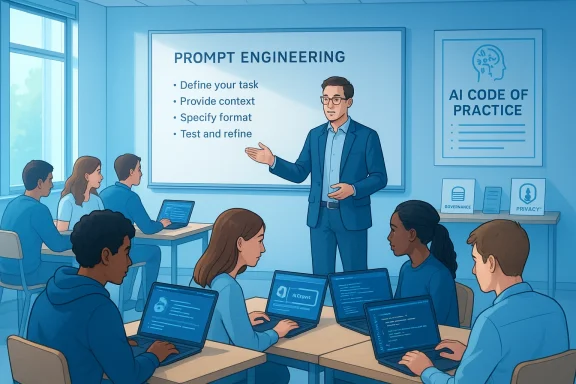

The half‑day session targeted first‑year ICT students and mixed short demos, teacher‑led explanation and competitive, team‑based practice.

- Opening orientation: an industry consultant explained why Copilot matters for academic workflows such as drafting, summarising research, data extraction and code‑level assistance inside Microsoft tools. The workshop emphasized practical uses rather than product evangelism.

- Core concepts: students were introduced to prompt engineering, the discipline of crafting inputs so generative models return useful, verifiable outputs; to responsible AI principles; and to structuring prompts to elicit more accurate, context‑aware responses.

- Hands‑on exploration: the class used Microsoft Copilot inside familiar apps and the Microsoft Learn platform for self‑paced modules and certification preparation. The workshop also highlighted the Microsoft Learn Student Ambassadors programme as a pathway for students to deepen industry connections and leadership experience.

- Promptathon challenge: teams competed to design prompts that solved real‑world problems, judged on usefulness, reproducibility and explanation of risk mitigation. The activity emphasized collaboration, iteration and the need for verification when using AI‑generated content.

Why this matters: practical benefits for students and educators

The workshop targeted four immediate, practical student needs:

- Faster research and note synthesis. Copilot can turn scattered readings into concise summaries and identify follow‑up questions, which is useful for early‑stage literature reviews and problem scoping.

- Higher‑quality drafts and revision help. Students can use Copilot to produce structured essay outlines, get feedback on clarity or tone, and iterate more quickly on drafts.

- Coding support and debugging. Copilot‑style assistants (including GitHub Copilot variants) can suggest code snippets, explain functions and surface common debugging strategies, which speeds learning for novice programmers.

- Productivity inside familiar apps. Integrations into Word, Excel and PowerPoint let students automate formatting, generate tables from data, and convert notes into presentable slides.

Strengths of the TUT approach

- Industry‑informed, hands‑on learning. Partnering with a practitioner organisation allowed students to experience real Copilot workflows, rather than only watching demos. This gives learners transferable skills they can show employers.

- Emphasis on how to ask a model, not just what it outputs. The workshop’s prompt engineering focus — paired with exercises such as a Promptathon — trains students to treat AI like a tool with limits rather than an oracle.

- Linkage to certification paths. Introducing Microsoft Learn and Student Ambassadors creates clearly signposted routes from a single workshop to longer, credentialed learning journeys and leadership opportunities. That scaffolding is a practical boost for employability.

- Teamwork and soft skills. The Promptathon’s team format cultivates communication, problem decomposition and critical evaluation — skills that remain highly prized even as tools change.

- Early exposure to responsible‑use concepts. Teaching responsible AI and verification strategies at the start of a student’s degree helps reduce misuses and promotes academic integrity when AI tools are adopted widely.

Risks, gaps and the governance imperative

Workshops like the one at TUT are valuable, but they also expose universities to real risks if adoption is left ungoverned or ad‑hoc. The TUT session implicitly raised several systemic issues every campus should address:

- Academic integrity and assessment design. Students using Copilot to draft essays or generate answers can produce plausible but incorrect content. Universities must redesign assessments to evaluate skills that AI cannot fully replicate, and require disclosure where AI was used. Evidence from deployments and institutional guidance suggests that tool availability changes behaviour fast; without policy, misuse is likely.

- Hallucinations and false confidence. Generative models can invent citations, fabricate data or hallucinate facts with convincing surface fluency. Teaching students how to verify AI outputs is therefore as important as teaching how to prompt. The workshop’s verification emphasis is a good start, but verification protocols should be formalised in curricula.

- Overreliance and skill erosion. If students rely on AI to produce first drafts or boilerplate code, they may miss formative practice essential to learning. The right balance is to use AI for scaffolding, not for replacing core skill development.

- Equity and access. Not all students have equal access to premium subscriptions or capable devices; universities must prevent a two‑tier learning experience where some students benefit from Copilot’s paid features while others cannot access them.

- Agentic AI and automation risks. As Copilot and agentic features (tools that plan and execute multi‑step workflows autonomously) become more powerful, universities should anticipate new governance questions: who owns an agent’s outputs; how to audit automated submissions; and how to set limits for academic use. Broader debates about agentic AI in education are already underway and highlight the need for careful policy design.

Concrete recommendations for universities and educators

Below is a practical, sequential roadmap that TUT and similar institutions can adopt to scale responsible Copilot training across faculties.

- Establish a cross‑campus AI governance working group (policy, legal, IT, academics, student reps). Charge it with defining disclosure rules and data protection boundaries.

- Audit technical access and licensing. Determine which Copilot features will be available campus‑wide and which require opt‑in approvals; document data boundaries for each service. Use Microsoft’s admin controls and licensing guidance when deploying Copilot features.

- Create modular, credit‑bearing AI literacy units. Integrate short modules on prompt engineering, verification routines, bias and legal considerations into first‑year curricula and assessment rubrics.

- Rethink assessments. Design tasks that assess reasoning, process and reflection (e.g., annotated work that explains how AI was used and how outputs were validated) rather than only final products.

- Train the trainers. Run faculty workshops that mirror the student Promptathon model so instructors can design assignments with tool‑aware learning objectives.

- Use Student Ambassadors as peer trainers. Competitive programmes such as Microsoft Learn Student Ambassadors create leadership pathways and can be formalised to provide peer‑to‑peer tutoring and campus events.

- Publish transparent guidance for students. Create an “AI Code of Practice” that specifies permitted tools, required disclosures and consequences for misuse.

- Monitor and iterate. Collect usage data (privacy‑preserving), student feedback and assessment outcomes to refine policy and teaching. Regularly review the governance framework as agentic features and model behaviour evolve.
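
One way to read "privacy‑preserving" usage data in the last step is to pseudonymise student identifiers before counting and to withhold very small groups. The sketch below is illustrative only: the key, the threshold of five, the module codes and the function names are all assumptions, not anything TUT or Microsoft prescribes, and keyed hashing is pseudonymisation rather than full anonymisation.

```python
import hashlib
import hmac
from collections import defaultdict

# Assumption: a secret key held by central IT, never stored with the usage data.
SECRET_KEY = b"replace-with-a-key-held-by-central-IT"
# Assumption: groups smaller than this are withheld from reports.
MIN_GROUP_SIZE = 5


def pseudonymise(student_id: str) -> str:
    """Replace a student ID with a keyed hash so raw IDs never reach the report."""
    return hmac.new(SECRET_KEY, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def users_per_module(events, min_group_size=MIN_GROUP_SIZE):
    """Count distinct (pseudonymised) users per module, dropping small groups."""
    seen = defaultdict(set)
    for student_id, module in events:
        seen[module].add(pseudonymise(student_id))
    return {
        module: len(users)
        for module, users in seen.items()
        if len(users) >= min_group_size
    }


# Made-up events: (student ID, module code) pairs, one per Copilot session.
events = [
    ("s001", "ICT101"), ("s002", "ICT101"), ("s003", "ICT101"),
    ("s004", "ICT101"), ("s005", "ICT101"), ("s006", "ICT101"),
    ("s001", "ICT101"),  # repeat session by the same student, counted once
    ("s001", "ICT205"), ("s007", "ICT205"),  # only two users: withheld
]

print(users_per_module(events))
```

Reporting only distinct-user counts above a threshold keeps the review data useful for policy decisions while making it harder to single out an individual student.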

How to teach prompt engineering and verification — a practical primer

Teaching prompt engineering is most effective when it’s anchored in verifiable tasks and includes explicit checks. Below is a practical classroom recipe you can reuse.

- Start with a simple task: summarise three short research abstracts and list three citations that support the summary.

- Have students craft an initial prompt and run it through Copilot or a supervised sandbox.

- Ask students to verify each factual claim against primary sources and record mismatches.

- Iterate prompts to reduce hallucinations (e.g., "Provide a summary and include verbatim quotes with exact page numbers from the supplied PDF; if unsure, say 'source not found'").

- Debrief as a class: which prompt changes reduced errors? Why did the model hallucinate certain claims?

- Grade on the quality of verification and the student’s ability to explain why the tool succeeded or failed.
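
The recipe above can be tracked with a small verification log. This is a minimal sketch, not part of the workshop materials: the `ClaimCheck` record, the `mismatch_rate` helper and the sample entries are hypothetical, and the checking itself is still done by students reading the primary sources.

```python
from dataclasses import dataclass


@dataclass
class ClaimCheck:
    """One factual claim from a model output, checked by hand (hypothetical record)."""
    prompt_version: int  # which iteration of the prompt produced the claim
    claim: str           # the claim as stated in the model output
    supported: bool      # True if the student found it in a primary source
    note: str = ""       # where it was found, or what the mismatch was


def mismatch_rate(checks, prompt_version):
    """Share of claims for one prompt version that could not be verified."""
    relevant = [c for c in checks if c.prompt_version == prompt_version]
    if not relevant:
        raise ValueError(f"no checks recorded for prompt version {prompt_version}")
    unsupported = sum(1 for c in relevant if not c.supported)
    return unsupported / len(relevant)


# Made-up sample data for illustration only.
checks = [
    ClaimCheck(1, "Abstract A reports a survey", True, "abstract A, sentence 2"),
    ClaimCheck(1, "Abstract B cites a national dataset", False, "no dataset mentioned"),
    ClaimCheck(1, "Abstract C recommends peer tutoring", False, "citation not found"),
    ClaimCheck(1, "Abstract A studies first-year students", True, "abstract A, sentence 1"),
    ClaimCheck(2, "Abstract A reports a survey", True, "abstract A, sentence 2"),
    ClaimCheck(2, "Abstract B describes interviews", True, "abstract B, sentence 3"),
    ClaimCheck(2, "Abstract C recommends peer tutoring", False, "still not found"),
    ClaimCheck(2, "Abstract A studies first-year students", True, "abstract A, sentence 1"),
]

for version in (1, 2):
    rate = mismatch_rate(checks, version)
    print(f"prompt v{version}: {rate:.0%} of claims unverified")
```

Comparing the rate between prompt versions gives the class debrief something concrete to discuss, and the `note` field is the evidence an instructor would grade.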

Career benefits and certification pathways

Workshops that introduce Copilot and Microsoft Learn pathways are not just pedagogical experiments; they are also deliberate employability investments. Microsoft Learn offers learning paths and role‑based certifications aligned to industry needs; Student Ambassador programmes provide networking, mentoring and practical leadership experience. For students in ICT programmes, these credentials can be differentiators in the job market, especially where employers expect graduates to be comfortable with AI‑augmented workflows. Universities should therefore map microcredentials to competency frameworks and track student completions as part of employability metrics.

Balancing optimism with skepticism: a critical assessment

The TUT workshop models many best practices: industry partnership, practical exercises, and an explicit focus on responsible usage. Those choices reduce the risk that students will be exposed to Copilot as a novelty without learning its limits.

But some important gaps remain:

- One workshop cannot substitute for curriculum change. To be effective, teaching AI literacy must be sustained, scaffolded and formally assessed across a student’s degree.

- Institutional controls and IT governance are often more complex than individual faculty realise. Licensing, tenant‑level controls and data policy must be handled at the central IT level with legal input.

- The temptation to treat Copilot as a productivity hack can overshadow deeper pedagogical reform. Universities must ensure that tool adoption is anchored in learning outcomes, not only time savings.

A short primer for students: how to use Copilot safely and effectively

- Treat Copilot as a first drafter and research assistant — never as the final source.

- Always verify facts, quotations and citations against primary sources.

- Keep track of prompts and model outputs when using AI for assignments; be prepared to explain how you used the tool.

- Avoid uploading sensitive or personally identifiable information into external models without explicit institutional approval.

- Use official campus channels (labs, sanctioned Microsoft tenancy) where possible; personal accounts and free trial tools may have different privacy terms.
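
The advice to keep track of prompts and outputs can be as simple as an append‑only log. The sketch below assumes a JSON Lines file and hypothetical field names (`assignment`, `how_used`); it is one possible format, not an institutional requirement.

```python
import json
import os
import tempfile
from datetime import datetime, timezone


def log_interaction(path, assignment, prompt, output, how_used):
    """Append one prompt/output pair to a JSON Lines file and return the record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "assignment": assignment,
        "prompt": prompt,
        "output": output,
        "how_used": how_used,  # the student's own note on what they did with the output
    }
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_log(path):
    """Read every logged interaction back as a list of dictionaries."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Demonstration in a temporary directory; the file name and entries are made up.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "ai_usage_log.jsonl")
    log_interaction(
        log_path,
        "ICT101 essay 1",
        "Suggest an outline for an essay on responsible AI use.",
        "1. Introduction 2. Risks 3. Safeguards 4. Conclusion",
        "Used the outline as a starting point; wrote every section myself.",
    )
    log_interaction(
        log_path,
        "ICT101 essay 1",
        "Check this paragraph for unclear sentences.",
        "Sentence 3 is ambiguous about who is responsible.",
        "Rewrote sentence 3 in my own words.",
    )
    entries = load_log(log_path)

print(len(entries), "interactions logged")
for entry in entries:
    print(entry["timestamp"], entry["assignment"], "-", entry["how_used"])
```

A log like this gives a student something concrete to attach when an assignment requires disclosure of AI use.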

Conclusion

TUT’s Microsoft Copilot workshop is a model of how universities can introduce AI responsibly: a short, practice‑forward format that teaches students how to prompt, verify and think critically about AI‑generated outputs while pointing them to certification and leadership pathways. The session’s strengths (industry involvement, prompt engineering and team problem solving) position students to use AI as a productivity and research amplifier. But the event also underscores a broader institutional challenge: turning a helpful workshop into sustained, governed, curriculum‑level change that protects academic integrity, preserves learning outcomes and ensures equitable access.

If universities want the benefits of Copilot without the downsides, they must pair training events with campus‑wide governance, redesigned assessments, and ongoing faculty development. The TUT model, which is practical, localised, and connected to Microsoft’s learning ecosystem, is a promising start. The next steps are institutional: policy, licensing controls and curricular depth that make AI literacy a measured, auditable part of a modern ICT education rather than an optional add‑on.

Source: Tshwane University of Technology, “TUT students explore AI tools during Microsoft Copilot workshop”