UC San Diego said on May 13, 2026, that its Computer Science Department is integrating GitHub Copilot into selected introductory and advanced programming courses, using AI assistance for some projects while preserving unaided assessments to test student understanding. The news matters less because one university adopted one Microsoft-owned tool than because it shows how quickly the old bargain of programming education is breaking down. If students will graduate into workplaces where AI coding assistants are normal, then banning them from the classroom is no longer rigor. It is nostalgia with a syllabus.

The most interesting part of UC San Diego’s experiment is not that students used GitHub Copilot. Plenty of students already do, whether instructors approve or not. The more consequential move is that faculty appear to be treating AI-assisted programming as something to be designed around rather than merely policed.

That distinction matters. Universities have spent the past few years reacting to generative AI with a familiar academic reflex: define the boundary between acceptable help and academic misconduct, then try to enforce it. UC San Diego’s approach starts from a more uncomfortable premise. If AI coding assistants are becoming part of professional software development, the classroom has to teach students how to use them without letting the tool become a substitute for thought.

Leo Porter, a UC San Diego computer science professor, framed the issue bluntly in Microsoft’s customer story: many introductory programming courses have not served non-CS majors well. That is not a small admission. CS1, the traditional gateway programming course, has long carried a double burden: it must prepare future computer scientists for a major while also giving biologists, economists, cognitive scientists, engineers, and business students enough computational fluency to do meaningful work in their own fields.

The old model often failed both groups in different ways. Majors sometimes found the early work too constrained and artificial. Non-majors often emerged able to recognize syntax but not necessarily able to produce useful software. UC San Diego’s experiment argues that AI may not be the enemy of foundational learning; used carefully, it may expose how narrow the old foundation had become.

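The CS1 Course Was Already in Trouble Before AI Arrived

The debate over Copilot in education is too often staged as a morality play: students are tempted by automation, instructors defend authentic learning, and the institution scrambles to preserve the value of assessment. That framing misses a harder truth. Introductory programming was already under strain before ChatGPT, Copilot, and other coding assistants arrived.

Porter’s critique points to a persistent weakness in the standard introductory sequence. Students can spend weeks wrestling with syntax, loops, conditionals, and small functions without ever building something that feels connected to the work they actually want to do. That may be tolerable for students who already identify as programmers. It is a much tougher sell for a biology major who wants to analyze lab data or a psychology student who wants to build an experimental interface.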

The traditional defense is that students must first learn the basics. That is true as far as it goes, but it can become an excuse for a curriculum that delays usefulness for too long. If a student finishes an introductory course and still cannot complete a basic programming task, the problem is not merely student persistence. It is instructional design.

Copilot changes that equation because it lowers the friction between intent and implementation. A novice can describe a goal and get a plausible piece of code, an explanation, or a starting point. That is dangerous if the student treats the result as magic. It is powerful if the course requires the student to inspect, modify, explain, and defend what the assistant produced.

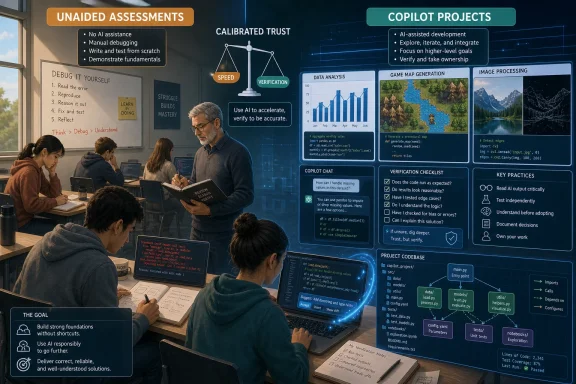

This is where UC San Diego’s model is more interesting than a simple “AI allowed” policy. Porter’s course reportedly separated contexts in which students had to demonstrate unaided understanding from contexts in which they could use Copilot for larger creative projects. That is not permissiveness. It is a recognition that programming now has multiple modes, and that education has to test more than one of them.

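The New Skill Is Knowing When Not to Use the Tool

A good AI policy in a programming course cannot be summarized as yes or no. It has to answer a more practical question: what is the student supposed to be learning in this particular moment?

If the learning objective is to understand loops, variables, data structures, or debugging, then unaided work still has a place. Students need to internalize enough of the machine’s logic to reason about code without outsourcing every step. Otherwise, they become prompt operators with no diagnostic ability when the answer is wrong.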

But if the objective is to design an application, explore a domain problem, or build a larger system, forbidding AI can become artificial. Professional developers already use autocomplete, documentation search, Stack Overflow, package managers, linters, static analyzers, AI assistants, and code review tools. Nobody writes software in a vacuum. The real professional skill is knowing which forms of assistance improve the work and which ones quietly corrupt it.

UC San Diego’s split between unaided assessments and Copilot-enabled projects is a practical compromise. It acknowledges that students must still learn the underlying concepts while also letting them experience the broader arc of software creation. That broader arc is where many introductory courses have historically been weakest.

There is also an equity argument hiding in plain sight. The Microsoft story says Porter’s study included 552 students, with two-thirds from non-computing majors, nearly half first-generation college students, and 47 percent Pell Grant eligible. If those numbers hold up in the underlying research, this was not a boutique experiment for a small class of already-confident coders. It was a test of whether AI-assisted programming can widen access to meaningful computing work.

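Bigger Projects Change What Beginners Think Programming Is

The most compelling result in Microsoft’s account is not the comfort metric, though 79 percent of students reportedly said they felt comfortable using generative AI tools for programming by the end of the course. It is not even the 59 percent who said Copilot actively helped them learn programming concepts. The larger shift is what students were able to build.

Instead of living entirely inside small syntax exercises, students reportedly produced projects in data science, image processing, and game design. That matters because the shape of an assignment teaches students what a field is. If programming is presented as a sequence of tiny puzzles, some students will conclude that computing is a syntax discipline. If programming is presented as a way to make tools, analyze information, and express ideas, a different population of students may see themselves in it.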

This is where AI assistance becomes pedagogically complicated. A tool like Copilot can help a student leap into larger projects earlier than a conventional curriculum would allow. But that same leap can conceal gaps. A student may successfully assemble a working program while misunderstanding why it works, where it fails, or how to repair it.

The answer is not to shrink the work back down. It is to change what instructors ask students to explain. UC San Diego’s advanced-course approach, as described by Microsoft, included video presentations and in-person code explanation sessions. That is the right instinct. If AI makes producing code cheaper, assessment has to move closer to comprehension, critique, and authorship.

In other words, the artifact is no longer enough. A working submission may prove only that the student, the assistant, and the surrounding ecosystem collectively produced something functional. The educational question is whether the student can reason about the result. That was always important. AI simply makes it unavoidable.

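The Large Codebase Course Points Closer to Real Work

The introductory course will attract the headline attention, but the advanced course may be the better preview of where computing education is headed. Anshul Shah, a PhD candidate and instructor in UC San Diego’s Computing Education Research Lab, used GitHub Copilot in a course focused on working with large codebases. That is a crucial frontier because professional software development is rarely about writing a clean program from scratch.

Most industry coding happens inside existing systems. Developers read unfamiliar code, trace behavior across files, infer architecture, write small changes, avoid regressions, and negotiate with tools and teammates. Many university courses still underrepresent that reality. Students graduate having written code, but not necessarily having navigated software as a living, layered artifact.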

According to Microsoft’s account, Shah first established manual competency before bringing in Copilot’s more advanced features. That sequencing is important. AI assistance in a large codebase can be transformative because it can summarize files, identify likely locations for changes, explain unfamiliar APIs, and accelerate navigation. But if students never learn to orient themselves manually, they may not know when the assistant has confidently pointed them in the wrong direction.

The reported classroom effect was dramatic: some tasks that previously took 30 to 40 minutes could often be completed in under five minutes with well-crafted prompts. That kind of speedup is exactly why industry is adopting these tools. It is also why education cannot simply pretend they do not exist.

The course’s final projects, which involved building feature additions to Python’s IDLE environment as if presenting to the Python development team, signal a more authentic model of learning. Students were not just solving toy problems. They were being asked to understand an existing system, propose changes, and communicate those changes in a professional register. Copilot may have accelerated the mechanics, but the educational value came from the surrounding demands.

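“Calibrated Trust” Is the Phrase Every Syllabus Now Needs

The strongest idea in UC San Diego’s approach is Shah’s emphasis on calibrated trust. That phrase should travel far beyond this one department. It captures the central problem of AI-assisted work better than the usual binaries of trust versus distrust, human versus machine, or cheating versus learning.

A student who blindly trusts Copilot is in trouble. The tool can generate plausible code that fails edge cases, introduces security problems, misuses libraries, or solves a slightly different problem than the one assigned. But a student who refuses to use such tools on principle may also be poorly prepared for modern software work, where AI assistance is increasingly woven into editors, repositories, terminals, and review workflows.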

Calibrated trust means students learn to ask when the assistant is likely to be useful, how to verify its output, and when to fall back to independent reasoning. That is not a soft skill bolted onto programming. It is becoming part of programming.
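What verifying an assistant's output looks like can be sketched in a few lines. The Python below is a hypothetical illustration, not an example taken from UC San Diego's courses: `median_suggested` stands in for the kind of plausible draft an assistant might offer, and `find_disagreements` is an assumed helper name that compares a candidate against the standard library's `statistics.median` on a handful of inputs.

```python
import statistics


def median_suggested(values):
    """Hypothetical assistant draft: looks right, but only handles odd lengths."""
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


def median_reviewed(values):
    """Revised version after review: handles empty and even-length input."""
    if not values:
        raise ValueError("median of an empty sequence is undefined")
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    # Even length: average the two middle elements.
    return (ordered[mid - 1] + ordered[mid]) / 2


def find_disagreements(candidate, reference, cases):
    """Return every input on which the candidate and the reference differ."""
    return [case for case in cases if candidate(case) != reference(case)]


if __name__ == "__main__":
    cases = [[3, 1, 2], [4, 1, 3, 2], [5]]
    print(find_disagreements(median_suggested, statistics.median, cases))
    print(find_disagreements(median_reviewed, statistics.median, cases))
```

The draft agrees with the reference on the odd-length inputs and disagrees on the even-length one, which is the sort of gap a student should be expected to find, explain, and repair rather than accept.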

This is especially important for security-minded readers and IT professionals. In enterprise environments, AI-generated code is not just a learning issue. It raises questions about dependency risk, secret leakage, licensing exposure, vulnerability propagation, and maintainability. A developer who can prompt effectively but cannot review effectively is not a force multiplier. They are an incident waiting for a ticket number.

UC San Diego’s reported use of chat transcripts and student behavior data is therefore notable. Instructors were not merely grading final submissions. They were studying how students interacted with the tool, where they got stuck, and what distinguished productive prompting from unproductive loops. That is the sort of evidence education needs if it is going to move beyond vibes.

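Microsoft’s Customer Story Is Not Neutral, but the Signal Still Matters

There is an obvious caveat: this story comes from Microsoft, which owns GitHub and has every incentive to make Copilot look like an educational inevitability. Customer stories are marketing artifacts. They select favorable examples, emphasize success metrics, and frame the vendor’s product as part of a broader transformation narrative.

That does not make the underlying experiment unimportant. It does mean readers should separate the university’s pedagogical choices from Microsoft’s commercial interest in making Copilot the default AI layer for developers. The useful question is not whether this customer story proves that Copilot improves education in every context. It plainly does not. The useful question is what UC San Diego’s design reveals about the kind of curriculum AI forces universities to build.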

The answer is a curriculum with clearer boundaries. Students need to know when AI is prohibited, when it is expected, and what they are accountable for in each case. They need assignments that measure conceptual understanding, not just code output. They need larger projects that make programming feel consequential. They need practice reviewing AI-generated suggestions with the skepticism normally reserved for a mysterious pull request from a stranger.

Microsoft’s broader positioning around Copilot is also relevant. GitHub Copilot has moved from autocomplete novelty to a family of coding assistance features across editors, terminals, pull requests, and, increasingly, agentic workflows. The company wants students to meet the tool early because today’s student is tomorrow’s enterprise developer, startup founder, IT admin, or data analyst.

That creates an institutional tension. Universities should not outsource their curriculum to a vendor roadmap. But neither can they prepare students for a world of professional tooling by pretending the tooling is not there. UC San Diego’s approach is useful precisely because it does not sound like a blanket surrender. It sounds like an attempt to domesticate the tool inside an educational framework.

For students, especially non-CS majors, the bigger issue is whether AI assistance changes who gets to build meaningful software. A student who would have bounced off syntax frustration may persist long enough to make a data tool for a lab project. A first-generation student who does not arrive with years of informal coding exposure may be able to close some of the confidence gap. A humanities or social science student may see programming less as a gatekeeping ritual and more as a medium.

That possibility should not be romanticized. AI tools can reproduce inequities too. Students with better hardware, better prior knowledge, better English-language prompting skills, or more familiarity with developer workflows may still extract more value from Copilot than their peers. If institutions charge students for access, licensing becomes another line of stratification. If instructors assume the tool explains everything clearly, students who need more structured support may fall further behind.

Still, the UC San Diego cohort described in the Microsoft story makes the equity dimension hard to ignore. A course population with many non-computing majors, first-generation students, and Pell Grant-eligible students is exactly where a more applied, AI-assisted model deserves scrutiny. If the old CS1 course was filtering students partly through patience for abstraction and syntax, then AI may allow instructors to test deeper forms of computational thinking earlier.

The danger is that universities mistake access to output for access to understanding. A student who can generate a dashboard does not automatically understand data cleaning, statistical reasoning, interface design, or software maintenance. The equity promise only becomes real if AI-assisted courses pair ambitious projects with demanding explanation, reflection, and revision.

AI weakens any assessment model that treats working code as proof of understanding. If a tool can help students produce passing code, the assessment must ask what else the student knows. That does not mean correctness stops mattering. It means correctness becomes the start of the conversation, not the end.

UC San Diego’s use of video presentations and in-person code explanations points toward a more resilient model. Students should be asked to describe design choices, explain failures, justify tradeoffs, identify limitations, and walk through unfamiliar portions of their own submissions. They should be able to say what Copilot suggested, what they accepted, what they rejected, and why.

That approach is harder to scale than autograding. It requires instructor time, teaching assistant training, and careful rubrics. It may also be less comfortable for students who are used to treating programming assignments as private negotiations between themselves, the compiler, and the deadline. But it better matches professional reality.

In the workplace, code is social. Developers explain changes in pull requests, discuss architecture, respond to review comments, write documentation, and debug with others. If Copilot makes code generation faster, the human burden shifts toward judgment and communication. Education should follow that shift.

Windows developers are already seeing AI assistance appear across Visual Studio, Visual Studio Code, GitHub, Azure tooling, Power Platform, and Microsoft 365 workflows. The boundary between “developer” and “power user” is getting blurrier as natural language becomes a front end for scripts, automations, dashboards, and internal tools. That does not eliminate the need for expertise. It raises the cost of fake expertise.

A sysadmin who uses AI to draft a PowerShell script still needs to understand execution policy, permissions, error handling, logging, and blast radius. A developer who asks Copilot to modify a Windows application still needs to understand threading, deployment, dependencies, and security boundaries. An analyst who generates code for data processing still needs to understand what the data means and how it can mislead.

This is why UC San Diego’s classroom design is a useful preview. The future is not a neat division between people who code and people who prompt. It is a spectrum of AI-mediated technical work in which more people can produce software-like artifacts, but fewer excuses remain for not understanding what those artifacts do.

Enterprise IT should be watching how universities solve this. The graduates entering the workforce over the next few years will not treat AI coding assistants as exotic. They will expect them. The organizations that benefit will be those with review processes, security controls, and engineering cultures that teach calibrated trust rather than either blanket permission or blanket fear.

The tool category is moving too quickly for any single product to define the pedagogy. Features that seem advanced today—chat over a repository, code explanation, automated test generation, agentic issue handling—may become baseline tomorrow. Students trained only on the buttonology of one assistant will date quickly. Students trained to interrogate AI output, decompose problems, and verify behavior will adapt.

That matters for curriculum design. A course that says “here is how to use Copilot” is less durable than a course that says “here is how to collaborate with a fallible code-generating system.” The first teaches a product. The second teaches a stance.

Universities also need to avoid letting AI narrow the imagination of programming. If every project begins with what the assistant can easily generate, students may drift toward conventional solutions and familiar patterns. The best courses will push students to use AI as leverage without letting it determine the shape of the work.

That requires instructors to remain technically current. Faculty cannot meaningfully teach AI-assisted programming if they do not understand the tools themselves. Porter’s comment that AI coding assistants changed how he programs is revealing. The instructor’s own practice matters. Students can tell the difference between a policy written from panic and a course designed from experience.

Traditional programming courses produced their own version of the gap between passing work and real understanding. A student could memorize patterns, pass small tests, and still struggle in real projects. AI amplifies the risk because it can generate complexity faster than the student can understand it. A beginner can now create a codebase that exceeds their ability to reason about it.

This is why “students built bigger projects” is both exciting and worrying. Bigger projects are more motivating and more realistic. They also contain more places for misunderstanding to hide. The educational win depends on whether students are forced to open the black box.

The right response is not to keep beginners confined to tiny exercises forever. It is to make reflection and debugging central. Students should be graded not only on what they build, but on their ability to explain a bug, revise an AI-generated solution, compare alternatives, and identify risks. They should experience Copilot being wrong often enough that skepticism becomes muscle memory.

In that sense, the most important outcome is not comfort with generative AI. Comfort is easy to produce. Competence is harder. The goal should be students who are comfortable enough to use AI and suspicious enough to survive it.

Start with the task, not the tool. Decide when employees should work unaided to build or demonstrate core competency, and when AI assistance should be encouraged because the real goal is speed, exploration, or system-level work. Make people explain what the assistant produced. Collect examples of failures. Teach prompting, but do not confuse prompting with engineering.

This matters for Windows-heavy environments because AI-generated scripts and configuration changes can be deceptively dangerous. A flawed automation can touch thousands of machines. A generated remediation step can weaken security. A copied command can solve the immediate problem while creating a long-term maintenance hazard. The review culture around AI output needs to be stronger, not weaker, than the review culture around human-only work.
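One way to make that review culture concrete is to build limits into the automation itself. The sketch below is a hypothetical Python wrapper, with `apply_change`, `max_hosts`, and the `remediation` logger name all invented for illustration rather than drawn from any real tool. It assumes a simple policy: default to a dry run, refuse target lists larger than a reviewed limit, log every action, and stop at the first failure.

```python
import logging

logger = logging.getLogger("remediation")  # hypothetical logger name


def apply_change(hosts, change, *, dry_run=True, max_hosts=25):
    """Apply `change` to each host without exceeding a reviewed blast radius.

    `change` is any callable taking a host name. Both the default limit and
    the dry-run default are illustrative assumptions, not recommendations.
    """
    hosts = list(hosts)
    if len(hosts) > max_hosts:
        raise ValueError(
            f"{len(hosts)} hosts exceeds the reviewed limit of {max_hosts}"
        )

    results = {}
    for host in hosts:
        if dry_run:
            # Record what would happen without touching the machine.
            logger.info("DRY RUN: would change %s", host)
            results[host] = "skipped (dry run)"
            continue
        try:
            change(host)
            results[host] = "ok"
        except Exception as exc:  # broad on purpose: any failure halts the rollout
            logger.error("change failed on %s: %s", host, exc)
            results[host] = f"failed: {exc}"
            break
    return results
```

Nothing here makes a generated script safe on its own. The point of the design is that the person running it has to opt in to real changes and to a larger target list, which forces the review conversation to happen before the automation reaches thousands of machines.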

Education and enterprise training are converging here. Both need workers who can use AI without being used by it. Both need to preserve fundamentals while acknowledging that the workflow has changed. Both need to reward explanation and judgment.

The cliché says AI will not replace programmers, but programmers using AI will replace programmers who do not. That line is too tidy. A more accurate version is that organizations will favor people who can combine domain knowledge, tool fluency, and verification discipline. UC San Diego’s experiment is an early attempt to teach that combination before students reach the office.

Source: Microsoft Customer Stories, “UC San Diego prepares students for AI-driven industry with GitHub Copilot”

UC San Diego Is Treating Copilot as a Curriculum Problem, Not a Cheating Problem












Microsoft’s Customer Story Is Not Neutral, but the Signal Still Matters

There is an obvious caveat: this story comes from Microsoft, which owns GitHub and has every incentive to make Copilot look like an educational inevitability. Customer stories are marketing artifacts. They select favorable examples, emphasize success metrics, and frame the vendor’s product as part of a broader transformation narrative.

That does not make the underlying experiment unimportant. It does mean readers should separate the university’s pedagogical choices from Microsoft’s commercial interest in making Copilot the default AI layer for developers. The useful question is not whether this customer story proves that Copilot improves education in every context. It plainly does not. The useful question is what UC San Diego’s design reveals about the kind of curriculum AI forces universities to build.

The answer is a curriculum with clearer boundaries. Students need to know when AI is prohibited, when it is expected, and what they are accountable for in each case. They need assignments that measure conceptual understanding, not just code output. They need larger projects that make programming feel consequential. They need practice reviewing AI-generated suggestions with the skepticism normally reserved for a mysterious pull request from a stranger.

Microsoft’s broader positioning around Copilot is also relevant. GitHub Copilot has moved from autocomplete novelty to a family of coding assistance features across editors, terminals, pull requests, and, increasingly, agentic workflows. The company wants students to meet the tool early because today’s student is tomorrow’s enterprise developer, startup founder, IT admin, or data analyst.

That creates an institutional tension. Universities should not outsource their curriculum to a vendor roadmap. But neither can they prepare students for a world of professional tooling by pretending the tooling is not there. UC San Diego’s approach is useful precisely because it does not sound like a blanket surrender. It sounds like an attempt to domesticate the tool inside an educational framework.

The Equity Case Is Stronger Than the Productivity Case

Most discussions of coding assistants fixate on productivity. How much faster can a developer complete a task? How many boilerplate functions can be skipped? How much time can be saved in a sprint? Those questions matter in industry, but they are not the most interesting questions in education.

For students, especially non-CS majors, the bigger issue is whether AI assistance changes who gets to build meaningful software. A student who would have bounced off syntax frustration may persist long enough to make a data tool for a lab project. A first-generation student who does not arrive with years of informal coding exposure may be able to close some of the confidence gap. A humanities or social science student may see programming less as a gatekeeping ritual and more as a medium.

That possibility should not be romanticized. AI tools can reproduce inequities too. Students with better hardware, better prior knowledge, better English-language prompting skills, or more familiarity with developer workflows may still extract more value from Copilot than their peers. If institutions charge students for access, licensing becomes another line of stratification. If instructors assume the tool explains everything clearly, students who need more structured support may fall further behind.

Still, the UC San Diego cohort described in the Microsoft story makes the equity dimension hard to ignore. A course population with many non-computing majors, first-generation students, and Pell Grant-eligible students is exactly where a more applied, AI-assisted model deserves scrutiny. If the old CS1 course was filtering students partly through patience for abstraction and syntax, then AI may allow instructors to test deeper forms of computational thinking earlier.

The danger is that universities mistake access to output for access to understanding. A student who can generate a dashboard does not automatically understand data cleaning, statistical reasoning, interface design, or software maintenance. The equity promise only becomes real if AI-assisted courses pair ambitious projects with demanding explanation, reflection, and revision.

Assessment Has to Move From “Did It Run?” to “Can You Defend It?”

For decades, programming assessment has leaned heavily on executable correctness. Does the program compile? Does it pass the test cases? Does it produce the expected output? Automated grading made that model even more attractive because it scaled.

AI weakens that model. If a tool can help students produce passing code, the assessment must ask what else the student knows. That does not mean correctness stops mattering. It means correctness becomes the start of the conversation, not the end.

UC San Diego’s use of video presentations and in-person code explanations points toward a more resilient model. Students should be asked to describe design choices, explain failures, justify tradeoffs, identify limitations, and walk through unfamiliar portions of their own submissions. They should be able to say what Copilot suggested, what they accepted, what they rejected, and why.

That approach is harder to scale than autograding. It requires instructor time, teaching assistant training, and careful rubrics. It may also be less comfortable for students who are used to treating programming assignments as private negotiations between themselves, the compiler, and the deadline. But it better matches professional reality.

In the workplace, code is social. Developers explain changes in pull requests, discuss architecture, respond to review comments, write documentation, and debug with others. If Copilot makes code generation faster, the human burden shifts toward judgment and communication. Education should follow that shift.

Windows Developers Should See the Shape of Their Own Future

For WindowsForum.com readers, this may sound like a campus story with limited relevance to day-to-day administration or development. It is not. The same pressures showing up in UC San Diego’s classrooms are arriving in IT departments, software teams, help desks, and security operations centers.

Windows developers are already seeing AI assistance appear across Visual Studio, Visual Studio Code, GitHub, Azure tooling, Power Platform, and Microsoft 365 workflows. The boundary between “developer” and “power user” is getting blurrier as natural language becomes a front end for scripts, automations, dashboards, and internal tools. That does not eliminate the need for expertise. It raises the cost of fake expertise.

A sysadmin who uses AI to draft a PowerShell script still needs to understand execution policy, permissions, error handling, logging, and blast radius. A developer who asks Copilot to modify a Windows application still needs to understand threading, deployment, dependencies, and security boundaries. An analyst who generates code for data processing still needs to understand what the data means and how it can mislead.

This is why UC San Diego’s classroom design is a useful preview. The future is not a neat division between people who code and people who prompt. It is a spectrum of AI-mediated technical work in which more people can produce software-like artifacts, but fewer excuses remain for not understanding what those artifacts do.

Enterprise IT should be watching how universities solve this. The graduates entering the workforce over the next few years will not treat AI coding assistants as exotic. They will expect them. The organizations that benefit will be those with review processes, security controls, and engineering cultures that teach calibrated trust rather than either blanket permission or blanket fear.

The Vendor-Neutral Lesson Is More Important Than Copilot Itself

It would be a mistake to read UC San Diego’s story as a verdict on GitHub Copilot alone. Copilot is the named tool, and Microsoft’s platform reach makes it especially significant. But the deeper lesson applies to AI coding assistants generally, including rival tools and open-source models that will continue to evolve.

The tool category is moving too quickly for any single product to define the pedagogy. Features that seem advanced today, such as chat over a repository, code explanation, automated test generation, and agentic issue handling, may become baseline tomorrow. Students trained only on the buttonology of one assistant will date quickly. Students trained to interrogate AI output, decompose problems, and verify behavior will adapt.

That matters for curriculum design. A course that says “here is how to use Copilot” is less durable than a course that says “here is how to collaborate with a fallible code-generating system.” The first teaches a product. The second teaches a stance.

Universities also need to avoid letting AI narrow the imagination of programming. If every project begins with what the assistant can easily generate, students may drift toward conventional solutions and familiar patterns. The best courses will push students to use AI as leverage without letting it determine the shape of the work.

That requires instructors to remain technically current. Faculty cannot meaningfully teach AI-assisted programming if they do not understand the tools themselves. Porter’s comment that AI coding assistants changed how he programs is revealing. The instructor’s own practice matters. Students can tell the difference between a policy written from panic and a course designed from experience.

The Real Risk Is Producing Confident Beginners

There is a failure mode that every AI-assisted programming course must fight: the confident beginner. This is the student who can produce impressive-looking software, speak fluently about prompts, and move quickly through assignments, but lacks the deeper mental model needed to debug, secure, or maintain the result.

Traditional programming courses produced their own version of this problem. A student could memorize patterns, pass small tests, and still struggle in real projects. AI amplifies the risk because it can generate complexity faster than the student can understand it. A beginner can now create a codebase that exceeds their ability to reason about it.

This is why “students built bigger projects” is both exciting and worrying. Bigger projects are more motivating and more realistic. They also contain more places for misunderstanding to hide. The educational win depends on whether students are forced to open the black box.

The right response is not to keep beginners confined to tiny exercises forever. It is to make reflection and debugging central. Students should be graded not only on what they build, but on their ability to explain a bug, revise an AI-generated solution, compare alternatives, and identify risks. They should experience Copilot being wrong often enough that skepticism becomes muscle memory.

In that sense, the most important outcome is not comfort with generative AI. Comfort is easy to produce. Competence is harder. The goal should be students who are comfortable enough to use AI and suspicious enough to survive it.

The UC San Diego Model Gives IT Leaders a Training Template

The lessons for industry are direct. Many organizations are rolling out AI coding assistants faster than they are changing training, review, and governance. That creates a gap between tool adoption and organizational learning. UC San Diego’s model suggests a more disciplined path.

Start with the task, not the tool. Decide when employees should work unaided to build or demonstrate core competency, and when AI assistance should be encouraged because the real goal is speed, exploration, or system-level work. Make people explain what the assistant produced. Collect examples of failures. Teach prompting, but do not confuse prompting with engineering.

This matters for Windows-heavy environments because AI-generated scripts and configuration changes can be deceptively dangerous. A flawed automation can touch thousands of machines. A generated remediation step can weaken security. A copied command can solve the immediate problem while creating a long-term maintenance hazard. The review culture around AI output needs to be stronger, not weaker, than the review culture around human-only work.
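One way to picture a stronger review culture is a gate that sits between a generated change and the machines it would touch. The sketch below is a hypothetical illustration in Python, not a description of any Microsoft or UC San Diego tooling: `RolloutPlan`, `plan_rollout`, the `reviewed` flag, and the cap of 25 targets are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class RolloutPlan:
    approved: bool
    targets: list = field(default_factory=list)
    reason: str = ""


def plan_rollout(targets, reviewed=False, max_targets=25):
    """Decide whether a generated change may run, and on which machines.

    Hypothetical policy: nothing runs without human review, and no single
    run may touch more than max_targets machines.
    """
    unique = sorted(set(targets))  # de-duplicate so the count is honest
    if not reviewed:
        return RolloutPlan(False, [], "change has not been reviewed by a person")
    if len(unique) > max_targets:
        return RolloutPlan(
            False,
            [],
            f"{len(unique)} targets exceeds the cap of {max_targets}; stage the rollout",
        )
    return RolloutPlan(True, unique, "ok")


# An unreviewed change is blocked even for a tiny target list.
print(plan_rollout(["pc-01", "pc-02"]))

# A reviewed change aimed at a whole fleet is blocked until it is staged.
fleet = [f"pc-{n:04d}" for n in range(1000)]
print(plan_rollout(fleet, reviewed=True).reason)

# A reviewed, small rollout goes through.
print(plan_rollout(["pc-02", "pc-01", "pc-01"], reviewed=True).targets)
```

The particular threshold matters less than the shape: review is a precondition rather than an afterthought, and the blast radius is bounded before anything executes.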

Education and enterprise training are converging here. Both need workers who can use AI without being used by it. Both need to preserve fundamentals while acknowledging that the workflow has changed. Both need to reward explanation and judgment.

The cliché says AI will not replace programmers, but programmers using AI will replace programmers who do not. That line is too tidy. A more accurate version is that organizations will favor people who can combine domain knowledge, tool fluency, and verification discipline. UC San Diego’s experiment is an early attempt to teach that combination before students reach the office.

The Lesson From San Diego Is That the Ban Era Is Ending

UC San Diego’s Copilot rollout does not settle the debate over AI in programming education, but it does make the old default harder to defend. A blanket ban is simple to state and increasingly difficult to justify. A blanket embrace is easy to market and pedagogically reckless. The emerging middle is more labor-intensive, but it is also more honest.

- UC San Diego is using GitHub Copilot in selected introductory and advanced courses while preserving unaided work for assessments that test core understanding.

- Porter’s introductory redesign treats AI assistance as part of the course architecture, not as an optional add-on for students who already know how to code.

- The reported 552-student study suggests many non-CS majors became more comfortable with generative AI programming tools while building projects beyond the usual CS1 scope.

- Shah’s large-codebase course shows that AI assistance may be most valuable when students must navigate, understand, and modify existing software rather than start from scratch.

- The central educational challenge is teaching calibrated trust, because students need to know when to rely on AI, when to verify it, and when to work without it.

- For IT departments and Windows developers, the same lesson applies: AI-generated code and scripts require stronger review, not blind acceleration.

Source: Microsoft, “UC San Diego prepares students for AI-driven industry with GitHub Copilot” (Microsoft Customer Stories)