KubeVirt maintainers published a security advisory this autumn describing an authentication-bypass in the aggregation-layer handling inside the virt-api component that can let an attacker impersonate the Kubernetes API server and bypass RBAC when a small set of preconditions exist.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

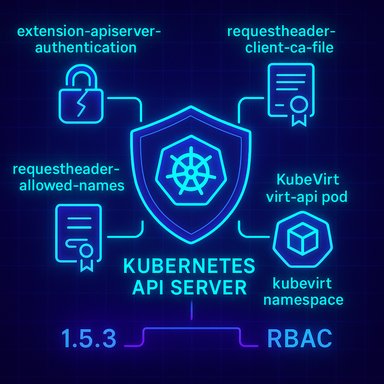

KubeVirt is a widely used Kubernetes extension that runs virtual machines as first‑class Kubernetes resources. Its control‑plane includes cluster‑level components such as virt-api, virt-controller, virt-handler and the operator; by default those components run in the kubevirt namespace and the API surface is exposed via an aggregated APIService so the Kubernetes API server can proxy requests into KubeVirt. On November 7, 2025 the vulnerability tracked as CVE‑2025‑64432 was published. Multiple vulnerability feeds and the KubeVirt GitHub advisory describe the root cause: the virt‑api implementation did not correctly validate client identity when accepting proxied mTLS connections from a front‑end proxy (the Kubernetes API server). Practically this meant a crafted client holding a front‑end proxy certificate, and with network access to virt‑api, could be treated as the Kubernetes API server and thereby bypass some RBAC checks enforced by the aggregator. The issue is fixed in KubeVirt versions 1.5.3 and 1.6.1. The NVD and several third‑party trackers list a CVSSv3.1 base score around 4.7 (Medium) with the impact vector emphasizing Availability and an attack vector of Local / Pod‑level access; that is, the attacker needs some presence inside the cluster network or a compromised pod.

How the aggregation layer normally authenticates

Understanding the risk requires a quick recap of how the Kubernetes aggregation layer is supposed to work.

- The kube‑apiserver authenticates users and then proxies some API calls to extension API servers (like KubeVirt) using a client certificate. The apiserver writes the proxy CA and allowed client CNs into a configmap named `extension-apiserver-authentication` in the `kube-system` namespace. The extension apiserver must validate that:

  - the connecting client certificate is signed by the CA listed in `requestheader-client-ca-file`, and

  - the certificate's Common Name (CN) is one of the allowed names in `requestheader-allowed-names`.

- If these checks pass, the proxied request from the kube‑apiserver is trusted and the extension apiserver reads the user identity headers injected by the apiserver and performs standard SubjectAccessReview calls back to the apiserver for RBAC decisions.
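The configuration side of this handshake can be sketched in a few lines of Python. This is a minimal illustration, not KubeVirt or Kubernetes code: it assumes you have already exported the `data` section of the configmap (for example from `kubectl get configmap extension-apiserver-authentication -n kube-system -o json`), and the function names `parse_allowed_names` and `describe_trust` are hypothetical. It relies on two details of upstream Kubernetes behaviour: the allowed names are stored as a JSON-encoded list, and an empty list means any certificate signed by the front‑proxy CA is accepted.

```python
import json


def parse_allowed_names(configmap_data):
    """Return the allowed front-proxy CNs from the configmap's data section.

    The value is stored as a JSON-encoded list, e.g. '["front-proxy-client"]'.
    A missing key is treated here as an empty list (an assumption of this sketch).
    """
    raw = configmap_data.get("requestheader-allowed-names", "[]")
    names = json.loads(raw)
    if not isinstance(names, list):
        raise ValueError("requestheader-allowed-names must be a JSON list")
    return [str(name) for name in names]


def describe_trust(configmap_data):
    """Summarise which client certificates may assert proxied identities."""
    has_ca = bool(configmap_data.get("requestheader-client-ca-file", "").strip())
    names = parse_allowed_names(configmap_data)
    if not has_ca:
        return "no front-proxy CA configured; proxied identity headers cannot be trusted"
    if not names:
        # Upstream Kubernetes semantics: an empty list allows any CN signed by the CA.
        return "any certificate signed by the front-proxy CA is accepted"
    return "only these CNs are accepted: " + ", ".join(names)


if __name__ == "__main__":
    sample = {
        "requestheader-client-ca-file": "-----BEGIN CERTIFICATE-----...",
        "requestheader-allowed-names": '["front-proxy-client"]',
    }
    print(describe_trust(sample))
```

A summary of "any certificate signed by the front-proxy CA is accepted" is the configuration to look at most closely, because in that case the CN check provides no additional restriction even on a patched virt‑api.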

Technical anatomy of CVE‑2025‑64432

The public advisory and patch commits identify a narrow but crucial validation omission:

- The virt‑api component accepted mTLS client connections but did not correctly validate the certificate Common Name (CN) against the allowed names defined in the cluster’s `extension-apiserver-authentication` configmap.

- Because the kube‑apiserver’s front‑proxy client certificate is the credential that marks proxied requests as coming from the API server, failing to check the expected CN can enable a different client, if it holds a certificate signed by the same CA, to impersonate the apiserver.

- In practice, two preconditions must be satisfied for an attacker to exploit this:

  - The attacker must possess a front‑end proxy client certificate that is trusted by the cluster (`requestheader-client-ca-file`), either by compromising the front proxy or by obtaining or forging the certificate from a mis‑managed PKI.

  - The attacker must have network access to the virt‑api service endpoint (for example via a compromised pod or a container runtime that allows intra‑cluster access).
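The difference between the flawed and the intended check can be modelled with a simplified sketch. This is not KubeVirt's actual Go code; `ClientCert`, `accepts_ca_only`, and `accepts_ca_and_cn` are hypothetical names, and the boolean `signed_by_front_proxy_ca` stands in for real X.509 chain verification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientCert:
    """Simplified stand-in for a verified TLS client certificate."""
    common_name: str
    signed_by_front_proxy_ca: bool


def accepts_ca_only(cert):
    """Models the omission: trust any certificate signed by the front-proxy CA."""
    return cert.signed_by_front_proxy_ca


def accepts_ca_and_cn(cert, allowed_names):
    """Models the intended check: CA signature plus CN in the allowed list."""
    if not cert.signed_by_front_proxy_ca:
        return False
    if not allowed_names:
        # Upstream Kubernetes semantics: an empty list allows any CN.
        return True
    return cert.common_name in allowed_names


if __name__ == "__main__":
    allowed = ["front-proxy-client"]
    apiserver = ClientCert("front-proxy-client", True)
    other_client = ClientCert("some-other-client", True)   # same CA, different CN
    outsider = ClientCert("front-proxy-client", False)     # right CN, wrong CA

    for cert in (apiserver, other_client, outsider):
        print(cert.common_name, cert.signed_by_front_proxy_ca,
              accepts_ca_only(cert), accepts_ca_and_cn(cert, allowed))
```

The interesting row is `some-other-client`: it passes the CA-only check but fails once the CN is compared against the allowed names, which is the gap the advisory describes.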

What this means for cluster security (impact analysis)

At first glance this is not a classic remote, unauthenticated RCE (the attack has friction), but the real‑world consequences can still be significant in the right context.

- Privilege escalation via identity impersonation. If the attacker obtains a trusted front‑proxy certificate and can reach virt‑api, they can submit requests that the virt‑api treats as proxied from kube‑apiserver. That effectively lets the attacker bypass RBAC checks for certain aggregated endpoints and act on VM lifecycles, console access, or other resource actions handled through virt‑api. This is a high‑impact capability in clusters that run sensitive VMs.

- Operability and availability risk. The vulnerability’s published scoring emphasizes Availability — an attacker who can impersonate the aggregator may disrupt VM operations, pause or delete workloads, or trigger state changes that take down services. The attack is not necessarily noiseless and may leave traces in control‑plane logs if defenders are collecting the right telemetry.

- Exploitability is conditional. The requirement to possess a valid front‑proxy client certificate is the primary limiter. In well‑managed clusters this material is tightly controlled; in poorly managed or multi‑tenant environments (or where a front proxy was compromised) the risk becomes real. The GitHub advisory explicitly spells out that these prerequisites significantly reduce exploitation likelihood, but they do not eliminate it.

Verification: affected versions, CVSS and fixes

- Affected KubeVirt releases: < 1.5.3 and 1.6.0. Fixed releases: 1.5.3 and 1.6.1.

- Public CVSS (v3.1) shown by multiple trackers: 4.7 (Medium) with vector roughly AV:L/AC:H/PR:L/UI:N/S:U/C:N/I:N/A:H. This emphasizes local exploit requirements and higher attack complexity.

- The KubeVirt project fixed the validation logic in targeted commits and issued the advisory; administrators should map those fixed versions into their vendor packaging and images. The authoritative record for vendor KB mappings and Microsoft‑style advisories is usually the Security Update Guide for Microsoft products, but for open source projects the GitHub security advisory and NVD remain common references — use the KubeVirt advisory and the NVD entry to map version numbers precisely.
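The 4.7 figure can be reproduced from the quoted vector using the public CVSS v3.1 base-score equations. The sketch below is an independent re-implementation for illustration (the function names `roundup` and `base_score` are this example's own), and it takes the vector exactly as quoted above, which the text itself describes as approximate.

```python
import math

# Metric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value):
    """CVSS v3.1 Roundup: smallest one-decimal number >= value."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector):
    """Compute the base score for a vector such as 'AV:L/AC:H/.../A:H'."""
    m = dict(part.split(":") for part in vector.split("/") if not part.startswith("CVSS"))
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    return roundup(min(1.08 * total, 10)) if changed else roundup(min(total, 10))


if __name__ == "__main__":
    print(base_score("AV:L/AC:H/PR:L/UI:N/S:U/C:N/I:N/A:H"))  # 4.7
```

For this vector the impact sub-score is about 3.60 and the exploitability sub-score about 1.05, which sum to roughly 4.64 and round up to 4.7. The low exploitability term is where the local attack vector and high attack complexity show up in the number.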

Detection and hunting guidance

Cluster operators should treat this as an aggregation‑layer authentication control failure and hunt for evidence of anomalous proxied connections or certificate usage.

High‑value detection checks (ordered by immediacy):

- Inventory KubeVirt components and their versions:

  - `kubectl get pods -n kubevirt`

  - `kubectl get deploy -n kubevirt`

  Confirm virt‑api is running and check the image tag/version to see whether it is in the vulnerable range. KubeVirt typically installs components in the `kubevirt` namespace and exposes the API via service `virt-api` by default.

- Check your `extension-apiserver-authentication` configmap (in `kube-system`) and the `requestheader-client-ca-file` / `requestheader-allowed-names` entries. Confirm the cluster’s front‑proxy CA is what you expect and that allowed names are narrow: `kubectl get configmap extension-apiserver-authentication -n kube-system -o yaml`.

- Audit logs: search kube‑apiserver and virt‑api logs for unexpected client certs, mTLS handshakes from non‑apiserver clients, or requests that carry proxied identity headers originating from unusual pod IP ranges.

- Monitor APIService objects and the apiserver proxy CA bundles: kubectl get apiservices -o wide. Validate that the spec.caBundle for kubevirt’s APIService matches the expected CA, and that apiservices are pointing to the intended service/namespace.

- Look for anomalous `SubjectAccessReview` calls or RBAC denials that correspond to virt‑api activity. Unusual patterns (e.g., a single pod source repeatedly performing lifecycle actions on many VMs) can indicate misuse.

- Retain kube‑apiserver, virt‑api, and audit logs for longer windows during triage (7–30 days depending on your threat model).

- Generate alerts when an API server proxy header originates from a non‑apiserver source IP range or when proxied requests succeed but the client certificate CN does not match the `requestheader-allowed-names` in the configmap.
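The last alerting rule can be prototyped offline before it is wired into a log pipeline. The sketch below is illustrative only: the record fields (`source_ip`, `client_cn`, `has_proxy_headers`) are a hypothetical normalised format, not a schema emitted by virt‑api or kube‑apiserver, so you would need to map your own connection or access logs onto it. The CIDR and CN values in the example are placeholders.

```python
import ipaddress


def flag_suspicious(records, apiserver_cidrs, allowed_names):
    """Return records that carry proxied identity headers but do not look
    like they came from the kube-apiserver.

    records: iterable of dicts with the hypothetical keys
             'source_ip', 'client_cn', 'has_proxy_headers'.
    apiserver_cidrs: CIDR strings covering the expected apiserver addresses.
    allowed_names: CNs listed in requestheader-allowed-names.
    """
    networks = [ipaddress.ip_network(cidr) for cidr in apiserver_cidrs]
    findings = []
    for record in records:
        if not record.get("has_proxy_headers"):
            continue  # only requests asserting a proxied identity are relevant
        source = ipaddress.ip_address(record["source_ip"])
        from_apiserver = any(source in net for net in networks)
        cn_allowed = record.get("client_cn") in allowed_names
        if not from_apiserver or not cn_allowed:
            findings.append(record)
    return findings


if __name__ == "__main__":
    sample = [
        {"source_ip": "10.0.0.5", "client_cn": "front-proxy-client", "has_proxy_headers": True},
        {"source_ip": "10.244.3.17", "client_cn": "front-proxy-client", "has_proxy_headers": True},
        {"source_ip": "10.0.0.5", "client_cn": "some-other-client", "has_proxy_headers": True},
        {"source_ip": "10.244.3.17", "client_cn": None, "has_proxy_headers": False},
    ]
    for hit in flag_suspicious(sample, ["10.0.0.0/24"], ["front-proxy-client"]):
        print(hit)
```

Checking both the source range and the CN is deliberate: a stolen apiserver certificate used from a pod would pass the CN test but fail the source-range test, while a differently named certificate from the same CA fails the CN test regardless of origin.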

Immediate mitigations and patching playbook

If you run KubeVirt or manage clusters that host it, follow this prioritized remediation plan.

- Confirm exposure and inventory:

  - Identify clusters that run KubeVirt and record their versions (check container image tags or KubeVirt CR status).

  - Confirm the network topology so you know which pods or services can reach the `virt-api` service.

- Apply the vendor fixes:

  - Upgrade KubeVirt to 1.5.3 (for the 1.5.x line) or 1.6.1 (for the 1.6.x line) as applicable. Use the project’s upgrade instructions and test in a staging cluster first. The GitHub advisory and upstream commit log list the exact changes.

- If you cannot patch immediately, apply compensating controls:

  - Limit network access to the virt‑api service (namespace NetworkPolicies, service mesh mTLS policies, or cluster network ACLs). Deny intra‑namespace pod access to control plane endpoints unless explicitly required.

  - Rotate and restrict front‑proxy certificates and private keys; ensure they are stored securely and that only the kube‑apiserver holds them.

  - Ensure the `extension-apiserver-authentication` configmap is correctly populated and that `requestheader-allowed-names` is not blank or overly permissive.

- Post‑patch validation:

  - After upgrading, confirm that virt‑api rejects unauthorised mTLS connections and that kube‑apiserver proxy flows continue to work for legitimate traffic.

  - Run functional tests against VM lifecycle operations and console/vnc endpoints to ensure the aggregator behaviour is correct.

- Long‑term hardening:

  - Reduce the blast radius of any single compromised pod by using stronger pod isolation controls, CSPs, and least‑privilege service accounts.

  - Integrate certificate management into centralized PKI processes and use short‑lived credentials where possible.
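The version triage in the inventory and upgrade steps of the playbook can be scripted once image references have been collected. The sketch below encodes the affected range stated in this article (below 1.5.3, plus 1.6.0); the helper names are hypothetical, and treating pre-release tags such as `v1.6.0-rc.1` like their base version is an assumption of this example rather than something the advisory states. Vendor builds with backported fixes may not follow upstream numbering, so treat the result as a first-pass filter.

```python
import re


def parse_version(tag):
    """Extract (major, minor, patch) from a tag such as 'v1.5.2' or '1.6.0-rc.1'."""
    match = re.match(r"^v?(\d+)\.(\d+)\.(\d+)", tag.strip())
    if not match:
        raise ValueError(f"unrecognised version tag: {tag!r}")
    return tuple(int(part) for part in match.groups())


def is_affected(tag):
    """True if the version falls in the affected range given in the article:
    anything below 1.5.3, or exactly 1.6.0."""
    version = parse_version(tag)
    return version < (1, 5, 3) or version == (1, 6, 0)


def image_tag(image):
    """Return the tag portion of an image reference like 'repo/virt-api:v1.5.2'.
    Digest-pinned images (…@sha256:…) carry no version and will fail to parse."""
    return image.rsplit(":", 1)[-1]


if __name__ == "__main__":
    for image in ("quay.io/kubevirt/virt-api:v1.5.2",
                  "quay.io/kubevirt/virt-api:v1.5.3",
                  "quay.io/kubevirt/virt-api:v1.6.0",
                  "quay.io/kubevirt/virt-api:v1.6.1"):
        print(image, "affected" if is_affected(image_tag(image)) else "fixed")
```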

Operational and supply‑chain considerations

- Multi‑tenant or shared clusters increase risk. A malicious tenant or a compromised CI job that can obtain a front‑proxy cert or reach the control plane endpoints dramatically lowers the attack cost and moves the vulnerability from theoretical to practical.

- Managed Kubernetes providers may present variant behaviour for extension aggregation and configmap exposure; verify how your provider implements `requestheader-client-ca` and whether it populates `extension-apiserver-authentication` in a way compatible with your expectations. Managed control planes can make some mitigations easier (provider‑side cert rotation) but can also hide important details; validate with provider documentation.

- The fix is focused and small; however, operators of vendor‑bundled KubeVirt packages, air‑gapped clusters, or appliance images should confirm their vendors backported the fix and that images were rebuilt to include the patched virt‑api image.

Strengths, remaining risks, and verification caveats

Strengths of the vendor response:

- The KubeVirt team published a targeted advisory, fixed the validation failure in small, reviewable commits, and shipped point releases that address the issue. Multiple vulnerability databases (NVD, GitHub Advisory, SUSE / trackers) consistently reflect the fixed versions and the technical summary, which improves confidence in the remediation path.

Remaining risks:

- The attack requires possession of front‑proxy certificate material. That is both the mitigating friction and the Achilles’ heel: if certificates are stolen, leaked, or mis‑issued, the vulnerability becomes far more serious. Teams should therefore evaluate PKI practices and certificate lifecycles as part of remediation.

- Detection is possible but depends on adequate logging and audit pipeline configuration. If audit trails were not collected or rotated away, post‑incident analysis may be incomplete.

Verification caveats:

- Some third‑party trackers list the impact primarily as availability, with a local attack vector and high attack complexity; avoid over‑alarmist interpretations but prioritize clusters with sensitive VM workloads or lax certificate controls.

- At the time of publication there is no authoritative, public proof‑of‑concept showing remote, unauthenticated exploitation in the wild. Public trackers and the GitHub advisory note that the prerequisites reduce the exploitation likelihood; however, organizations with weak certificate hygiene or compromised pods should assume higher risk. Treat speculation about mass exploitation cautiously and validate telemetry in your environment.

Practical checklist (concise)

- Inventory clusters running KubeVirt and record component versions.

- Upgrade to KubeVirt 1.5.3 or 1.6.1 (as appropriate) and validate in staging.

- Confirm `extension-apiserver-authentication` configmap values (client CA and allowed names) and narrow allowed names.

- Limit network access to the `virt-api` service with NetworkPolicies or ACLs until patched.

- Rotate front‑proxy certificates if compromise is suspected; tighten PKI controls.

- Enable and retain kube‑apiserver/virt‑api/audit logs for hunting; search for anomalous proxied requests.

- Validate vendor/backport status for appliance images and cloud marketplaces that bundle KubeVirt.

Conclusion

CVE‑2025‑64432 is a clear example of how a small validation omission in the aggregation‑layer handshake can produce outsized risk when combined with poor certificate hygiene or intra‑cluster compromise. The technical fix is straightforward and available in KubeVirt 1.5.3 and 1.6.1; however, operational risk varies by environment. Clusters that run virtual machine workloads, especially multi‑tenant or production VMs, should treat the issue with a high priority: verify versions, patch quickly, audit certificate usage, and apply network restrictions until you can confirm the fix.

KubeVirt’s advisory and the consolidated vulnerability records are consistent about the root cause and the remediation, but the practical exploitability depends heavily on whether an attacker can obtain or misuse front‑proxy TLS credentials. Operators should therefore combine code upgrades with PKI hardening, tighter network segmentation, and focused audit hunts to both remediate and validate that no unauthorized proxied activity occurred prior to the patch.
