The University of Waterloo’s latest student-facing piece on generative AI does not frame ChatGPT and Microsoft Copilot as villains. Instead, it draws a clear line between using GenAI to deepen understanding and using it to sidestep the work entirely, warning that the difference is less about the tool itself and more about the learner’s intent and discipline. The message is timely, practical, and very much in step with how Waterloo is now positioning GenAI across teaching, learning, and academic integrity. (uwaterloo.ca)

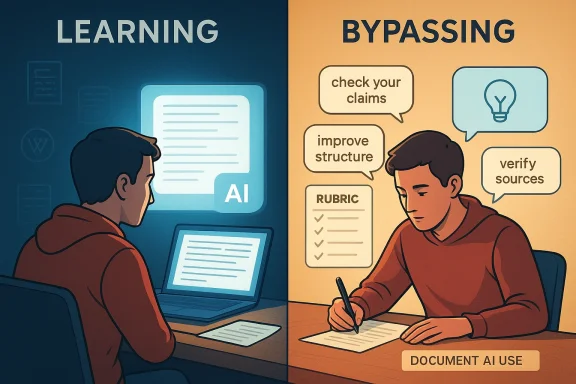

Overview

The core argument is simple: technology does not automatically ruin learning. Waterloo’s student blog reaches back to the arrival of calculators and Wikipedia to show that every new learning aid has sparked anxiety about cheating, laziness, or shortcut culture, yet the real outcome always depends on how people use the tool. That framing matters because it shifts the conversation away from panic and toward pedagogy, which is exactly where GenAI belongs if universities want students to build durable skills. (uwaterloo.ca)

The post divides GenAI use into two broad camps. In one, students use it to replace their own thinking by asking it to generate arguments, structure, or full drafts; in the other, students use it like a smart assistant after they have already done the hard intellectual work. Waterloo is not pretending the boundary is always obvious, but it insists the distinction is real and consequential. (uwaterloo.ca)

That distinction is not merely philosophical. The university’s Writing and Communication Centre says GenAI is productive only when it helps students learn, not when it substitutes for the work of learning, and it explicitly warns that overreliance can undermine learning, depending on a student’s stage and goals. In other words, GenAI is not a magic study hack; it is a tool whose value depends on timing, purpose, and transparency. (uwaterloo.ca)

Waterloo’s broader institutional guidance reinforces the same theme with more formal guardrails. Students are told not to submit AI-generated work as their own, instructors are advised to be explicit about what is allowed, and the university’s academic integrity materials emphasize transparency, disclosure, and privacy awareness. The blog post is therefore not a standalone take; it is the student-friendly expression of a wider policy and teaching strategy. (uwaterloo.ca)

A Familiar Debate, Recast for GenAI

The nostalgia opening is not accidental. By invoking calculators and Wikipedia, Waterloo places GenAI inside a long-running pattern of educational disruption, where each new tool first looks like a threat to effort before becoming part of the default learning environment. That historical arc matters because it reminds readers that educational technology rarely destroys learning outright; it usually changes what “good learning” looks like. (uwaterloo.ca)

The calculator analogy still works

Calculators did not eliminate math education, but they did force educators to decide which skills were foundational and which were procedural. GenAI is producing a similar reckoning in writing, research, coding, and synthesis tasks, especially when students can ask a model to draft, summarize, or rephrase in seconds. The real question is not whether GenAI can complete a task; it is whether students still know how to do the underlying reasoning themselves. (uwaterloo.ca)

Wikipedia created a similar tension around credibility and convenience. It became a fast route to background information while also raising fears that students would stop reading primary sources or checking evidence carefully. GenAI is a more powerful version of that problem because it can generate plausible prose with an even higher risk of fabricated details, invented citations, or unexamined bias. (uwaterloo.ca)

That is why the analogy is useful but incomplete. A calculator does not hallucinate equations, and Wikipedia usually does not invent entire bibliographies. GenAI can do both, which makes the demands on student judgment much higher than in earlier waves of classroom technology. (uwaterloo.ca)

- Calculators changed how we test mathematical fluency.

- Wikipedia changed how we teach source evaluation.

- GenAI is changing how we define drafting, revision, and originality.

- The tool is not the main issue; the learning design is.

- Students still need to show their own thinking.

What Waterloo Means by “Bypassing” Learning

Waterloo’s article is careful not to moralize too quickly, but its warning is plain: if students ask GenAI to generate the arguments, structure, and full draft of an essay, then the deepest cognitive work has been outsourced. The student may still edit the output, but editing is not the same as learning how to analyze, synthesize, and create from scratch. (uwaterloo.ca)

Outsourcing the hard part

This is the central danger in AI-assisted coursework. Students can end up producing submissions that look polished while never actually developing the ability to build a case, weigh evidence, or organize an argument under pressure. That creates a false sense of competence, which is often worse than obvious underperformance because it hides the skill gap until later. (uwaterloo.ca)

Waterloo’s framing around “productive struggle” captures the educational logic well. Learning is supposed to include friction: wrestling with an idea, getting stuck, revising, and then understanding why the solution works. If GenAI removes that friction too early, it may improve convenience while quietly degrading retention, transfer, and career readiness. (uwaterloo.ca)

This is where efficiency can become a trap. A student who uses GenAI to finish an assignment faster may believe they are being strategic, but the university’s message is that speed is not the same as intellectual growth. That warning is especially relevant in a job market where employers increasingly value adaptability and independent judgment, not just polished deliverables. (uwaterloo.ca)

- Replacement use shifts the student from author to editor.

- Editing AI output does not guarantee understanding.

- Fast completion can conceal weak mastery.

- Skills built through struggle are more durable.

- Convenience can be educationally expensive.

Amplifying Learning Without Surrendering It

The more interesting half of Waterloo’s argument is the positive one: GenAI can amplify learning when students do the thinking first and use the tool afterward to challenge, organize, or refine their work. That is a much more mature model of AI use, and it mirrors how most serious productivity tools work in practice. (uwaterloo.ca)

Assistant, not substitute

In this model, the student begins with their own ideas, then uses GenAI to test them, spot gaps, and refine language. That makes the tool a partner in revision rather than a replacement for reasoning, which is a meaningful distinction in a university context. Waterloo’s student blog explicitly describes GenAI as a “smart assistant,” not a substitute, and that language sets the tone for responsible use. (uwaterloo.ca)

This approach also better matches the university’s broader writing guidance. The Writing and Communication Centre says GenAI can support brainstorming, drafting, revising, and editing when used carefully and ethically, but it also stresses that students must understand when their use is permitted and how to document it. That combination of permission and accountability is the difference between educational augmentation and academic risk. (uwaterloo.ca)

There is also a subtle but important benefit here: GenAI can expose students to alternative framings without stealing agency from them. If used well, it can push a learner to see weak spots in an argument, recognize an awkward transition, or notice where evidence is thin. The student still owns the intellectual core, but the tool helps sharpen the edges. (uwaterloo.ca)

- Start with your own argument before consulting the tool.

- Use GenAI to test, not invent, your reasoning.

- Treat feedback as input, not authority.

- Keep ownership of claims and evidence.

- Use the model to improve clarity after the thinking is done.

Productive Struggle and Why It Still Matters

Waterloo’s use of the phrase productive struggle is the most educationally grounded part of the post. It recognizes that learning is not supposed to feel frictionless all the time, and that the temporary discomfort of wrestling with a difficult problem is often where durable understanding is built. (uwaterloo.ca)

Why difficulty is not a flaw

That idea may sound old-fashioned in an era of one-click answers, but it is central to serious learning. Students who avoid hard thinking may still complete their assignments, yet they often miss the deeper reward: the ability to transfer a concept to a new setting, defend it under questioning, or improvise when the prompt changes. GenAI can accidentally train the opposite habit if it is used too early or too often. (uwaterloo.ca)

The blog’s self-check questions are effective because they move the issue from abstract ethics to practical introspection. Am I using GenAI to support thinking, or to avoid it? Am I learning more deeply, or just finishing faster? Am I still doing the hard work? Those questions are simple, but they expose whether a student is trying to extend their cognition or bypass it. (uwaterloo.ca)

This framing is also useful for faculty. Instructors do not need to ban every AI tool to preserve rigor; they need to design assignments that reward reasoning, revision, and explanation. A well-structured course can still use GenAI as a learning accelerator while making it harder to fake conceptual mastery. That is the strategic shift higher education now needs. (uwaterloo.ca)

- Productive struggle builds confidence through effort.

- Easy output can reduce long-term recall.

- Self-assessment helps students spot misuse.

- Strong pedagogy can make AI use safer.

- Learning design matters as much as policy.

Policy, Integrity, and the Need for Transparency

Waterloo’s student blog sits inside a much firmer academic integrity framework than the tone might suggest on first read. The university’s official materials say plainly that submitting AI-generated work as one’s own is a violation of Policy 71, and instructors are advised to define what use, if any, is allowed in assignments, tests, and exams. (uwaterloo.ca)

Disclosure is now part of the workflow

That matters because the old “did you use it or not?” question is no longer enough. The university now expects students and staff to be transparent about when GenAI was used, what it contributed, and how the output was checked or modified. In practice, disclosure is becoming part of the workflow of learning, much like citations became a basic part of scholarly practice. (uwaterloo.ca)

Waterloo’s resources also highlight a second layer of responsibility: privacy. Students are warned not to put personal, institutional, or research data into public AI tools, and Microsoft Copilot is specifically recommended through university accounts for privacy and security reasons. That is not a minor footnote; it is a recognition that convenience can create data governance problems very quickly. (uwaterloo.ca)

The university is also adjusting its stance as the technology evolves. Its academic integrity page notes that AI detection features in Turnitin will no longer be available as of September 2025, and it recommends that instructors focus on evidence of learning rather than trying to police misuse through detection alone. That is a significant shift: verification is moving from surveillance toward assessment design. (uwaterloo.ca)

- Students must know whether GenAI is permitted.

- Disclosure is expected when GenAI contributes to work.

- Privacy protections matter as much as originality.

- Detection tools are not a substitute for good assessment.

- Evidence of learning is becoming the stronger standard.

The Role of AI Literacy in Higher Education

If there is one thing Waterloo gets right, it is that the GenAI conversation cannot stop at policing misuse. The university’s teaching guidance says instructors should discuss GenAI in the context of academic integrity and AI literacy, with the goal of using the tools to support, deepen, and extend learning rather than merely banning them. (uwaterloo.ca)

Literacy beats fear

That is a crucial point because AI literacy is not just about prompt-writing. It includes understanding hallucinations, fabricated citations, bias, privacy issues, copyright concerns, and the limits of model outputs. Waterloo’s writing guidance explicitly warns that GenAI may invent sources, repeat biased material, and present information without attribution, so students must verify claims instead of accepting polished prose at face value. (uwaterloo.ca)

This is where the classroom challenge becomes broader than cheating. Students now need to know when a tool is appropriate, how to interrogate it, and how to avoid becoming dependent on it for core intellectual tasks. In that sense, AI literacy is really a blend of media literacy, source criticism, and workflow judgment. (uwaterloo.ca)

Waterloo’s own institutional investments suggest it understands that shift. The university has launched student resources, teaching initiatives, and GenAI support structures that try to normalize responsible use rather than leaving students and instructors to improvise individually. That makes the blog post feel less like a warning label and more like an entry point into a larger pedagogical strategy. (uwaterloo.ca)

- AI literacy includes source checking and bias awareness.

- Students need to understand model limits, not just prompts.

- Responsible use is a teachable skill.

- Universities must support both students and instructors.

- Literacy reduces both misuse and anxiety.

The Enterprise and Career Readiness Angle

Waterloo’s caution about career readiness is worth taking seriously. Students are being told that if they let GenAI do the thinking for them, they may arrive in the workplace with weaker analytical habits, shallower problem-solving skills, and less resilience when a task cannot be outsourced. That is not just an academic issue; it is a workforce issue. (uwaterloo.ca)

Employers want judgment, not just output

In professional settings, the value of GenAI is increasingly tied to judgment. Employers may welcome faster drafting, summarization, or ideation, but they also expect workers to spot errors, verify claims, protect data, and choose the right tool for the job. A student trained only to prompt a model may struggle when asked to review, defend, or improve that model’s output under real constraints. (uwaterloo.ca)

That is why Waterloo’s emphasis on doing the hard work first is strategically sound. It trains students to use AI as a multiplier rather than a crutch, which is exactly how GenAI tends to be deployed in stronger workplaces. The highest-value employees will not be the ones who can delegate the most to AI; they will be the ones who can direct AI while still understanding the substance better than the machine does. (uwaterloo.ca)

There is also a reputational dimension. Universities that normalize lazy AI use risk producing graduates who can generate polished text but not original thought. Waterloo’s article pushes in the opposite direction: it suggests that the point of higher education is still to build thinkers, not just prompt engineers. That may sound idealistic, but it is increasingly a market differentiator. (uwaterloo.ca)

- Good workplaces reward judgment, not just speed.

- AI can multiply strong thinking or mask weak thinking.

- Verification is a professional skill.

- Tool fluency is not the same as subject mastery.

- Universities still need to graduate independent thinkers.

Strengths and Opportunities

Waterloo’s approach is strong because it avoids the two worst extremes: technophobic panic and uncritical enthusiasm. It acknowledges the reality that students are already using GenAI, while still drawing clear boundaries around academic honesty, privacy, and learning quality. That balance creates room for responsible experimentation without surrendering educational standards. (uwaterloo.ca)

- The blog uses a relatable, non-judgmental tone.

- It distinguishes clearly between augmentation and substitution.

- The “productive struggle” concept is easy to remember.

- It aligns student guidance with formal academic integrity policy.

- It reinforces the importance of transparency and documentation.

- It supports instructors who want structure rather than blanket bans.

- It helps students think about AI as a learning design choice, not a reflex.

Risks and Concerns

The biggest risk is that students will read the blog as permission to use GenAI broadly without fully absorbing the difference between supported learning and outsourced learning. Because GenAI outputs are often polished and persuasive, even thoughtful students can slide into overreliance without realizing how much of their own intellectual work has been displaced. (uwaterloo.ca)

- Students may confuse editing AI output with genuine authorship.

- Overreliance can weaken long-term retention and transfer.

- AI-generated citations and facts may be unreliable.

- Privacy risks remain if students paste sensitive material into public tools.

- Instructors may vary widely in what they permit, creating confusion.

- Detection-based enforcement is becoming less viable.

- Uneven AI literacy could widen performance gaps between students.

Looking Ahead

Waterloo’s post arrives at a moment when universities are being forced to redesign assessment, not just update policy language. As GenAI gets better at drafting, summarizing, and coding, assignments built around generic output will become easier to fake, which means institutions will have to reward process, originality, oral defense, and reflection more consistently. That is a healthy direction, even if it is inconvenient. (uwaterloo.ca)

The next phase will likely be less about whether GenAI is allowed and more about where it fits in a learning sequence. Expect more assignments that ask students to document how they used AI, explain why they accepted or rejected suggestions, and demonstrate the reasoning behind the final submission. The universities that handle this well will not be the ones that eliminate GenAI; they will be the ones that make it impossible to confuse speed with skill. (uwaterloo.ca)

- More courses will likely require AI disclosure.

- Assignments may shift toward process-based evaluation.

- Students will need stronger source-checking habits.

- Faculty will need clearer rubrics and examples.

- AI literacy modules will become more common.

- Privacy guidance will matter as much as citation rules.

Source: University of Waterloo Boosting your learning—or Bypassing it with GenAI? | Current Students | University of Waterloo