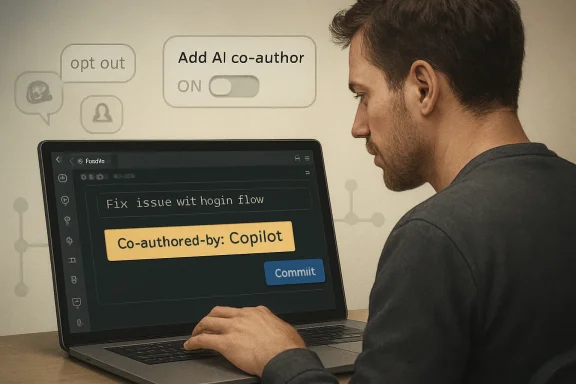

Microsoft briefly made VS Code's Git integration append a Copilot co-author trailer to commits by default after an April 16, 2026, merge changed git.addAICoAuthor to all, prompting GitHub backlash and a May 3, 2026, reversal that restores the default to off. The controversy is not just that an editor touched commit metadata. It is that Microsoft managed to turn a reasonable AI-disclosure idea into another example of opt-out AI creeping into developer workflows. For a company trying to convince programmers that Copilot is a trustworthy collaborator, this was a remarkably efficient way to remind them who controls the tooling.


Microsoft Found the Most Sensitive Place to Put an AI Badge


A Git commit is not a social post, a telemetry event, or a product usage banner. It is part of a project’s permanent record: a chain of authorship, review, responsibility, blame, compliance, and institutional memory. When VS Code adds a Co-authored-by: Copilot <[email protected]> trailer, it is not merely decorating a message; it is changing the historical artifact that other tools and people will read later.

That distinction is why the reaction was so sharp. Developers tolerate a lot of ambient product nudging inside editors: welcome pages, notification badges, extension recommendations, model-picker prompts, and the slow accretion of AI affordances in sidebars. But the commit log is different. It belongs to the repository, not the editor.

The original VS Code pull request was small in code and large in consequence. It changed the Git extension’s git.addAICoAuthor default from off to all, meaning the editor would append an AI co-author trailer when it believed AI-generated code was included. The change was merged on April 16, 2026, with little public explanation in the pull request itself, and the absence of a strong rationale became part of the story.

The backlash was predictable because the feature sat at the intersection of three already volatile developer anxieties: unwanted AI integration, authorship ambiguity, and vendor self-promotion. Even if the intent was disclosure, the effect looked to many users like Copilot was signing itself into their project history.

The Reversal Came Quickly, But Not Before the Trust Damage

By May 3, Microsoft had merged a follow-up pull request changing the default back to off. That same fix also addressed a more damaging complaint: AI contribution tracking should not run when built-in AI features are disabled, and the trailer should not be added when chat.disableAIFeatures is enabled. In other words, the rollback was not merely a public-relations concession; it acknowledged that the previous behavior could activate in scenarios where users reasonably believed AI was out of the loop.

This matters because the dispute was never only about whether Copilot deserves credit. If a user has disabled AI features, an editor should not behave as though AI participation still needs to be tracked. If a developer removes a trailer from a commit message, it should not quietly reappear later in the publishing flow. If a project has policies around AI-assisted code, the tool should support those policies without surprising the people subject to them.

Microsoft’s fix is the right default. AI co-author trailers may be useful in some teams, especially where generated code must be auditable. But an audit marker is only credible when it is accurate, intentional, and explainable. A marker that appears unexpectedly becomes noise at best and false evidence at worst.

There is also a delicious irony in the rollback pull request itself reportedly carrying a Copilot co-author trailer. The joke wrote itself because the tooling had already turned attribution into theater. When the mechanism designed to clarify authorship becomes the thing people laugh at, the product team has lost control of the narrative.
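The behavior the fix describes can be summarized as a small decision function. This is only an illustrative sketch, not VS Code's actual implementation: the two setting names and the off value come from the reporting above, while the function name, the dictionary-based settings lookup, and the ai_changes_detected flag are assumptions made for the example.

```python
def should_add_trailer(settings: dict, ai_changes_detected: bool) -> bool:
    """Decide whether an AI co-author trailer should be appended.

    Hypothetical model of the post-fix behavior described in the article;
    it is not taken from the VS Code source.
    """
    # If built-in AI features are disabled, never track or attribute.
    if settings.get("chat.disableAIFeatures", False):
        return False
    # The restored default is "off", so a missing key means no trailer.
    if settings.get("git.addAICoAuthor", "off") == "off":
        return False
    # Otherwise attribute only when AI-made changes were actually detected.
    return ai_changes_detected
```

The ordering is the point: the kill switch is checked first, the opt-in second, and detection last, so a user who has disabled AI never reaches the attribution path at all.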

AI Attribution Is Useful Only When It Is Boring

The strongest argument in Microsoft’s favor is that AI-assisted development does need better provenance. Teams increasingly want to know when code was written, transformed, summarized, or reviewed with machine assistance. Security reviewers may care because generated code can carry subtle defects. Legal teams may care because policies around AI-generated work are still evolving. Maintainers may care because a patch generated by an agent may need a different kind of scrutiny than a patch typed line by line.

A commit trailer is not a crazy place to put that information. Git trailers are already used for sign-offs, acknowledgements, reviewed-by lines, and co-authorship. GitHub’s UI understands co-author metadata, and teams have long used it to represent pair programming or collaborative changes. On paper, “Co-authored-by: Copilot” is a clean, machine-readable signal.

The problem is that “co-author” is a loaded word. In human collaboration, a co-author usually has agency, accountability, and some claim to contribution. Copilot has none of those in the ordinary sense. It does not own the patch, review the patch, answer for the patch, or show up in an incident review when the patch breaks production. It is a tool controlled by a user, even when that tool is highly generative.

That semantic mismatch is why many developers recoil at the exact phrasing. A more neutral marker — something closer to “AI-assisted” or “Generated-with” — would separate provenance from authorship. Calling Copilot a co-author imports social and legal connotations that the tool cannot bear. It also gives Microsoft’s AI brand a place in repositories where project maintainers may not want it.
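To make "machine-readable" concrete, here is a minimal sketch of how a script might read trailers from a commit message and spot a Copilot co-author line. It is deliberately simpler than Git's own trailer parsing: it assumes the trailer block is the final paragraph and that every line in it has the form Key: value. The function names and the sample addresses are invented for illustration.

```python
import re

# A trailer line looks like "Key: value", with a token-style key.
TRAILER_RE = re.compile(r"^([A-Za-z0-9-]+):\s*(.+)$")


def parse_trailers(message: str) -> list:
    """Return (key, value) pairs from the last paragraph of a commit message.

    Simplification: the last paragraph counts as a trailer block only if
    every one of its lines looks like "Key: value".
    """
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return []  # a subject line alone carries no trailer block
    trailers = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match is None:
            return []  # ordinary prose, not a trailer block
        trailers.append((match.group(1), match.group(2).strip()))
    return trailers


def names_copilot_as_coauthor(trailers: list) -> bool:
    """True if any Co-authored-by trailer value starts with "Copilot"."""
    return any(
        key.lower() == "co-authored-by" and value.lower().startswith("copilot")
        for key, value in trailers
    )
```

The same parser works unchanged for a more neutral key such as an AI-assisted marker, which is part of why the choice of wording is a product decision rather than a technical constraint.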

The Default Was the Product Decision

Microsoft can argue, fairly, that the setting was configurable. Users could set git.addAICoAuthor to off in VS Code settings or in settings.json. Depending on the build, users also saw narrower options such as applying attribution to chat and agent workflows rather than every AI-assisted path. But configurability is not consent.

Defaults are policy. Defaults decide what happens to the hurried developer who commits through the Source Control panel without checking every new setting after an update. Defaults decide what a junior engineer accidentally pushes to a public repository. Defaults decide whether a maintainer has to clean up metadata after the fact.

That is why “you can turn it off” was never a satisfying answer. The more sensitive the surface, the stronger the case for opt-in. Commit metadata is sensitive precisely because it persists. An editor setting that changes a theme can be forgiven. An editor setting that changes repository history demands a higher bar.

The April 16 change also arrived in a climate where developers are already primed to distrust AI defaults. Microsoft has spent the past several years weaving Copilot and related AI features through Windows, GitHub, Office, Edge, and developer tools. Some of those integrations are genuinely useful. But when every product surface becomes a new place for an AI feature to appear by default, even defensible ideas begin to look like conquest.
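For readers who would rather pin the setting than trust the default, the opt-out amounts to one key in settings.json. The sketch below shows that change applied to an already-parsed settings dictionary. The key and the off value are the ones named above; the helper name and the sample editor.fontSize entry are just illustration. One caveat worth stating: real VS Code settings files may contain comments, which Python's json module rejects, so this is not a drop-in file editor.

```python
import json


def opt_out_of_ai_coauthor(settings: dict) -> dict:
    """Return a copy of the settings with AI co-author trailers disabled."""
    updated = dict(settings)  # leave the caller's dictionary untouched
    updated["git.addAICoAuthor"] = "off"
    return updated


if __name__ == "__main__":
    current = {"editor.fontSize": 14}
    # Print the JSON fragment one would expect to find in settings.json.
    print(json.dumps(opt_out_of_ai_coauthor(current), indent=2))
```

Setting the key explicitly, rather than relying on whatever the default happens to be this month, is the whole argument of this section in miniature.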

VS Code’s Strength Has Always Been That It Felt Like Yours

VS Code became the default editor for much of the industry because it struck a balance that Microsoft historically struggled to achieve. It was polished but hackable, opinionated but not overbearing, corporate-backed but open enough to feel community-shaped. It gave Microsoft a foothold on Linux desktops, MacBooks, and web stacks that would once have treated Redmond tooling with suspicion.

That trust is unusually valuable. Developers spend all day inside their editor. They give it access to source trees, terminals, secrets, build scripts, containers, remote machines, and debugging sessions. An editor is not just an application; it is a working environment.

When VS Code changes how commits are authored, it touches one of the few places where developers expect deterministic behavior. Type a message, commit the staged changes, get the message you typed. If AI used the editor to modify files, the editor may have a reasonable basis to suggest an attribution marker. But suggestion and insertion are different product philosophies.

The controversy also highlights the awkward split identity of VS Code. It is an open-source project, a Microsoft product, a GitHub-adjacent AI distribution channel, and the de facto front end for many cloud development workflows. Those identities can coexist, but only if the project is careful about where Microsoft’s commercial priorities show through. A Copilot co-author trailer in every eligible commit made that boundary feel porous.

Enterprise IT Sees a Compliance Problem Wearing a UX Costume

For individual developers, the issue may be annoyance. For enterprises, it is governance. Many organizations are still writing policies for generative AI in software development, and those policies often distinguish between autocomplete, chat suggestions, agentic file edits, generated tests, generated documentation, and code review assistance. A single co-author trailer is too blunt to capture those distinctions.

Worse, a false-positive trailer can create avoidable friction. Imagine a regulated team where AI-generated code requires additional review, and a commit is marked as Copilot-assisted even though the developer says no AI was used. Now the audit trail conflicts with the human account. The team either wastes time investigating a tooling artifact or learns to ignore the marker entirely.

A false negative is also a problem. If a trailer only appears in some workflows, or if developers can disable it locally, the absence of the trailer does not prove that AI was not used. That makes the feature weak as a compliance control unless it is part of a broader, centrally managed policy. Commit trailers are useful breadcrumbs, not a substitute for governance.

That is where Microsoft has an opportunity it nearly squandered. Enterprises do need first-class controls for AI-assisted development: policy-based enablement, workspace-level enforcement, auditable logs, and clear distinctions among AI actions. But those controls must be designed as administrative and team features, not smuggled into the default commit path of a general-purpose editor.

The Community Was Angry Because It Understood the Precedent

Some reactions were exaggerated, because GitHub reactions and forum threads are not known for restraint. But the underlying concern was not irrational. Developers saw a precedent: a vendor-controlled editor deciding that use of a vendor AI tool should be written into project history unless the user opts out.

That precedent matters more than this specific setting. If Copilot can add itself as a co-author by default, what else can an AI development environment decide to annotate? Could it label generated tests, agent-created branches, AI-reviewed pull requests, or security remediations? Could those labels become part of ranking, compliance scoring, marketplace badges, or contribution analytics?

None of that has to be malicious to be troubling. Modern developer tools are increasingly mediated by platforms that see workflow metadata as a valuable asset. AI makes that metadata more valuable still, because vendors want to measure adoption, demonstrate productivity, and create feedback loops for future tooling. Developers, meanwhile, want tools that help them without turning their repositories into product dashboards.

The co-author flap compressed all of those suspicions into one visible line of text. It was easy to screenshot, easy to mock, and easy to understand. That is why a small default change became a larger referendum on Microsoft’s AI posture.

The Sensible Version of This Feature Is Still Worth Building

The answer is not to pretend AI assistance should never be disclosed. That would be naïve. As AI agents move from autocomplete to multi-file edits, dependency upgrades, refactors, test generation, and bug fixes, teams will need ways to reconstruct how work was produced. A clean provenance trail could help reviewers focus attention and help maintainers understand the shape of a contribution.

But the right design starts with intent. When an AI tool materially changes files, VS Code could show a clear commit-time prompt: “This commit includes AI-assisted changes. Add an AI assistance trailer?” It could explain what will be added, allow the developer to edit the wording, and remember the choice per repository or organization policy. That would treat attribution as a meaningful act rather than a hidden side effect.

The wording should also evolve. “Co-authored-by” may be technically convenient because GitHub already understands it, but convenience is not neutrality. An AI assistance trailer could carry richer and less misleading information: tool name, class of assistance, and perhaps whether changes came from inline completion, chat, or agent mode. It should not pretend the model is a colleague.

Finally, the feature should be honest about its limits. Local settings cannot prove whether AI was or was not used. A trailer can indicate that a supported tool detected supported AI involvement in a supported workflow. That is valuable, but it is not a cryptographic attestation, a license analysis, or a moral confession. Tooling that presents provenance modestly will earn more trust than tooling that overclaims.
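The opt-in design sketched in this section could look something like the following. Everything here is hypothetical: the AI-Assisted-By key, the AIAssistance record, and the user_confirmed flag are invented to illustrate the idea of a consent-gated, more descriptive trailer, and none of it reflects an existing VS Code or Git feature.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Rough test for whether a line already looks like a "Key: value" trailer.
TRAILER_LINE = re.compile(r"^[A-Za-z0-9-]+: \S")


@dataclass(frozen=True)
class AIAssistance:
    tool: str  # e.g. "Copilot"
    mode: str  # e.g. "inline-completion", "chat", or "agent"


def with_ai_trailer(message: str,
                    assistance: Optional[AIAssistance],
                    user_confirmed: bool) -> str:
    """Append a provenance trailer only when the developer agreed to it."""
    if assistance is None or not user_confirmed:
        return message  # no AI involvement, or no consent: leave it alone
    trailer = f"AI-Assisted-By: {assistance.tool} (mode={assistance.mode})"
    body = message.rstrip()
    if trailer in body.splitlines():
        return message  # already present; do not add it twice
    paragraphs = body.split("\n\n")
    last_lines = paragraphs[-1].splitlines()
    has_trailer_block = len(paragraphs) > 1 and all(
        TRAILER_LINE.match(line) for line in last_lines
    )
    # Join an existing trailer block, otherwise start one after a blank line.
    separator = "\n" if has_trailer_block else "\n\n"
    return body + separator + trailer
```

The key property is that the message the developer typed comes back untouched unless they said yes, which is the difference between suggestion and insertion.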

The Commit Line That Taught the Lesson

Microsoft’s quick reversal reduces the immediate blast radius, but developers and administrators should still check their environments, especially where VS Code updates moved faster than internal communication. The practical lesson is simple: AI provenance belongs in the workflow, but not as a surprise.

- VS Code users who do not want Copilot attribution should set git.addAICoAuthor to off in user or workspace settings.

- Teams that want AI disclosure should define repository-level policy rather than relying on each developer’s local editor defaults.

- Administrators should verify that disabling built-in AI features also disables related AI tracking behavior in the builds they deploy.

- Maintainers should treat Copilot co-author trailers from the affected period as potentially noisy rather than definitive evidence of AI use.

- Tool vendors should make AI provenance opt-in, explicit, and semantically accurate before writing it into durable project history.
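For maintainers who want to find commits worth a second look, a rough audit might resemble the sketch below. It assumes git is on the PATH and that the caller supplies the date window; the article gives the merge dates, but which VS Code builds actually shipped the changed default is not stated here, so the right window for any given team is a judgment call. The function names are invented for the example.

```python
import subprocess


def flag_copilot_commits(log_output: str) -> list:
    """Return hashes of commits whose message has a Copilot co-author line.

    Expects records shaped as "<hash>\\x1f<message>\\x1e", as produced by
    git_log() below.
    """
    flagged = []
    for record in log_output.split("\x1e"):
        if "\x1f" not in record:
            continue  # trailing newline or empty record
        commit_hash, body = record.strip("\n").split("\x1f", 1)
        for line in body.splitlines():
            if line.lower().startswith("co-authored-by: copilot"):
                flagged.append(commit_hash)
                break
    return flagged


def git_log(repo: str, since: str, until: str) -> str:
    """Run git log over a date window, emitting hash and raw message."""
    result = subprocess.run(
        ["git", "-C", repo, "log", f"--since={since}", f"--until={until}",
         "--format=%H%x1f%B%x1e"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


if __name__ == "__main__":
    # Dates taken from the article; adjust to the builds you deployed.
    for commit in flag_copilot_commits(git_log(".", "2026-04-16", "2026-05-04")):
        print(commit)
```

A hit from this script is a prompt to ask the author, not proof of AI use, and a miss proves nothing either.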

Source: heise online WTF: Microsoft forces "Co-Authored-by Copilot" in commits