Visual Studio Code recently shipped a change that could append “Co-authored-by: Copilot” to Git commits by default, including cases where Copilot had not generated the code, before Microsoft-linked maintainers acknowledged the mistake and restored the feature to off by default. The incident is not just another developer-tooling papercut. It is a warning about what happens when AI features stop behaving like optional assistants and start rewriting the evidentiary record of software work. For Microsoft, the problem is less that Copilot wanted credit than that VS Code presumed the authority to give it.

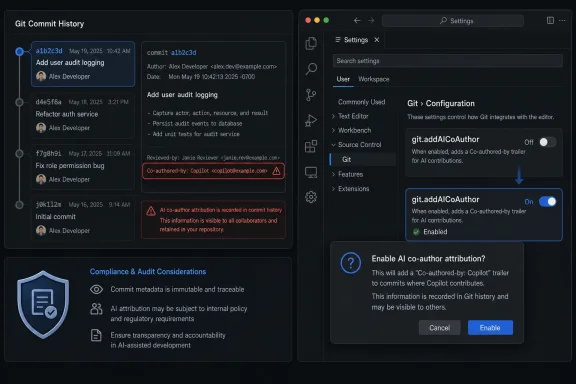

Source: Windows Central https://www.windowscentral.com/soft...ot-as-co-author-without-permission-or-notice/

Microsoft Turned Attribution Into an Ambient Feature

The controversy began with a small-looking change in VS Code’s Git extension: the setting controlling AI co-author trailers, git.addAICoAuthor, was flipped from off to a broader default. In plain English, commits made through VS Code could gain a trailer naming Copilot as a co-author. That line is not cosmetic in the way a status bar icon is cosmetic. In Git culture, commit metadata is the receipt.

The intended use case is easy enough to defend. If an AI coding tool materially generated code, some teams may want the commit history to say so. AI provenance is becoming a real governance issue, particularly in companies with source-code policies, license-review obligations, and customer contracts that increasingly distinguish between human-written and machine-assisted work.

But the implementation crossed the line from provenance into presumption. Users reported Copilot being added even when AI had not generated the code, and even when AI features were disabled through settings such as chat.disableAIFeatures. That distinction matters. A tool that records AI involvement accurately is an audit aid; a tool that records AI involvement inaccurately is an audit risk.

The most damaging part of the story is that the trailer was not always immediately visible to the user at the point where professional trust is formed. Developers often scan a commit message, hit commit, and move on. If the editor mutates that message in the last mile, the developer may discover the change only after pushing to a shared repository, or after someone else notices it.

That is why the furious reaction was not developer melodrama. A “Co-authored-by” line can be read by managers, compliance systems, reviewers, clients, and future investigators. It can imply tool usage, process deviation, IP exposure, or policy noncompliance. Microsoft may have intended a helpful attribution mechanism, but the shipped behavior created the appearance of an admission.

The Commit Log Is Not a Marketing Surface

To understand why this landed so badly, it helps to remember what Git metadata does in a professional software shop. A commit is not merely a note to oneself. It is part changelog, part accountability system, part legal artifact, and part cultural memory.

The author field says who made a change. The committer field says who applied it. Trailers such as “Signed-off-by,” “Reviewed-by,” and “Co-authored-by” carry conventions that teams have spent years operationalizing. They feed release notes, compliance scans, dashboards, contribution graphs, open-source credits, and occasionally courtroom exhibits.

That is why this is different from a product inserting a banner or adding an extra onboarding card. The editor was touching the permanent record of the work. Even if the user can remove the line, the default matters because defaults become the behavior of millions of people who never read the setting diff.

“Co-authored-by” also has a specific social meaning. It does not say “this tool was available,” or “an AI model may have influenced an idea,” or “a developer used autocomplete once.” It says another named party helped author the change. When that party is GitHub Copilot, the implication is unavoidable: AI contributed to the code.

That implication can be harmless in a personal repository and toxic in a regulated workplace. Some companies ban AI coding tools outright. Others allow them only for non-sensitive code, only under enterprise agreements, or only after legal review. A developer whose commit suddenly claims Copilot participation may have to explain why a forbidden assistant appears in the project history.

The complaint quoted across the developer discussion — “this could cost people their jobs” — sounds dramatic only if one assumes commit metadata is just decoration. In many organizations, it is not. If a bank, defense contractor, healthcare vendor, or enterprise software supplier has a policy against non-approved AI assistance, a false Copilot co-author line is not an inconvenience. It is an accusation with a timestamp.

The Apology Helped, but It Also Revealed the Real Failure

Dmitriy Vasyura, who approved the pull request, apologized publicly and said there was no malicious intent. That matters. It is too easy to turn every unpopular Microsoft default into a cartoon of corporate villainy, and software organizations do make honest mistakes, especially when features pass through preview channels, defaults, experiments, and release trains.

The apology’s substance, however, is less important than what the incident revealed about process. According to the public discussion, the behavior was reverted because of a bug, not simply because users hated the default. Vasyura also reportedly acknowledged that the bug had been caught in testing but shipped anyway.

That is the part enterprise IT departments will remember. Not because they believe every bug is scandalous, but because this bug sat at the intersection of identity, policy, and auditability. A scrollbar bug can wait for a point release. A false authorship marker in source control should trigger a much higher bar.

There is a deeper organizational tell here. Microsoft and GitHub are under immense pressure to make Copilot feel native everywhere: the editor, the pull request, the terminal, the issue, the code review, the project plan. When a company is trying to make AI ubiquitous, product teams will naturally start treating AI involvement as the default state of modern work. The rest of the industry is not there yet.

That mismatch is the heart of the blowback. Microsoft may see Copilot attribution as a compliance-friendly feature that brings transparency to AI-assisted development. Developers saw an editor asserting facts about their labor without asking them first. Both views can be sincere, but only one of them respects the user as the source of truth.

AI Provenance Is Valuable Only When It Is Precise

There is a good version of this feature. In fact, the industry probably needs it.

As AI coding agents become more capable, teams will need durable ways to answer basic questions. Did a model generate this function? Did a human merely accept a suggestion? Did an agent open the pull request? Was the code reviewed under the team’s AI policy? Was any proprietary context sent to a third-party service?

Those questions cannot be solved by pretending AI assistance does not exist. Nor can they be solved by relying on developers to write perfect commit messages after the fact. Tooling should help, and commit trailers are one plausible mechanism.

But provenance systems live or die on precision. “Copilot touched this” is not precise enough. “Copilot generated these lines during this session, under this account, using this enterprise policy, and the developer approved them” is closer to what serious organizations will need. Anything less risks producing a fog of pseudo-transparency that is worse than silence.
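To make the contrast concrete, here is a minimal sketch of what a more precise provenance record might hold. Every name in it (the `AIProvenance` class, its fields, and the `AI-Provenance` trailer key) is hypothetical and chosen for illustration; neither Git nor VS Code defines such a format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIProvenance:
    """Hypothetical record of one AI-generated span in a commit."""
    tool: str          # e.g. "Copilot"
    path: str          # file the generated lines landed in
    first_line: int    # inclusive line range of the generated code
    last_line: int
    session_id: str    # editor session that produced the suggestion
    account: str       # account the tool was running under
    policy_id: str     # enterprise policy in force at the time
    approved_by: str   # developer who accepted the lines

    def as_trailer(self) -> str:
        """Render the record as a single 'key: value' trailer line."""
        return (
            f"AI-Provenance: tool={self.tool}; "
            f"lines={self.path}:{self.first_line}-{self.last_line}; "
            f"session={self.session_id}; account={self.account}; "
            f"policy={self.policy_id}; approved-by={self.approved_by}"
        )


# Illustrative values only; none of these identifiers come from a real system.
record = AIProvenance(
    tool="Copilot", path="src/parser.py", first_line=40, last_line=58,
    session_id="s-123", account="dev@example.com",
    policy_id="ai-policy-v2", approved_by="dev@example.com",
)
print(record.as_trailer())
```

Unlike a bare co-author line, a record of this shape can be checked against the diff: if the named line range is not in the commit, the claim is visibly wrong.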

A false positive is especially dangerous because it dilutes the signal. If Copilot co-authorship appears on commits where no AI-generated code exists, teams will learn to ignore it. The metadata becomes spam. Worse, it teaches developers that AI audit trails are arbitrary, which undermines the very compliance story Microsoft wants to sell.

There is also a philosophical trap in calling Copilot a “co-author.” Lawyers, open-source maintainers, and engineers may not agree on whether AI can be an author in any meaningful sense. GitHub’s trailer convention was built for human collaborators. Applying it to a tool is already a metaphor; applying it inaccurately turns the metaphor into a liability.

The better approach would be explicit and layered. Ask the user before enabling the setting. Show the exact trailer before commit creation. Distinguish generated code from chat assistance, commit-message generation, review suggestions, and agentic edits. Give organizations policy controls that are enforceable, visible, and easy to audit.

The Default Was the Product Decision

Microsoft’s defenders can argue that the setting was configurable, and technically that is true. But defaults are not neutral. Defaults are where a platform expresses its values.

When VS Code ships a default, it is making a bet about what most users want and what risks they should silently accept. In this case, the bet appears to have been that AI attribution is desirable enough to enable broadly, and that users who object can turn it off. That is backwards for authorship metadata.

The right default for anything that changes a developer’s commit identity should be opt-in. Not because AI is uniquely dangerous, but because source-control history is shared infrastructure. The user’s name, email, signatures, co-authors, and trailers are not the editor’s playground.

Microsoft knows this in other contexts. Git commit signing is not silently enabled with a Microsoft key. Repository remotes are not rewritten because a cloud workflow would be more convenient. A developer tool may suggest, scaffold, or warn, but it should not silently add claims about who helped produce the work.

The failure is therefore not just a bad line of code or a missing test. It is a product judgment that treated attribution as a convenience setting rather than a trust boundary. The reason developers reacted so sharply is that they recognized the boundary immediately.

There is a long history of tech companies discovering that “you can turn it off” is not a sufficient answer when the default changes the user’s public posture. Telemetry, cloud sync, personalized ads, browser prompts, start-menu recommendations, AI sidebars — all of these have trained users to distrust defaults that serve the platform’s strategic priorities more clearly than the user’s immediate needs.

Copilot attribution now joins that list, even if temporarily. Microsoft can revert the setting in code, but it cannot so easily revert the impression that VS Code is becoming another surface where Copilot’s presence is assumed until the user objects.

GitHub’s Recent “Tips” Made the Suspicion Inevitable

This episode did not happen in a vacuum. Earlier this year, GitHub faced criticism after Copilot-related “coding agent tips” appeared in pull request text in ways users characterized as advertising. Microsoft and GitHub disputed the framing, but the practical concern was familiar: platform-generated content appeared in developer workflow artifacts where users expected their own words and choices.

That history made the VS Code co-author controversy combustible. When developers see Copilot inserted into pull requests one month and commit metadata the next, they do not evaluate each incident as an isolated bug. They perceive a pattern: AI branding and AI workflow hooks are moving closer to the artifacts that define software work.

This is the reputational cost of aggressive integration. Every product team can explain its own feature as helpful, transparent, or easily disabled. But users experience the aggregate as encroachment. The assistant is no longer sitting politely in a side panel; it is appearing in commits, pull requests, reviews, terminals, search boxes, and settings panes.

Microsoft has a particular challenge because VS Code is not merely another Microsoft app. It is the default editor for huge numbers of developers who do not otherwise live in the Microsoft ecosystem. Its success came from being fast, extensible, cross-platform, and relatively unburdened by the old Redmond instinct to turn every surface into a funnel.

Copilot changes that perception. The more VS Code behaves like a delivery mechanism for Microsoft’s AI strategy, the more developers will ask whether the editor still belongs primarily to them. That question is dangerous because developer tools are sticky until they are not. Once trust erodes, alternatives do not need to be perfect; they only need to feel less presumptuous.

The irony is that Copilot itself does not need this kind of forceful adjacency to succeed. Many developers genuinely find AI coding assistance useful. The market for AI-assisted development is real. But useful tools can still lose goodwill if they insist on narrating themselves into every workflow artifact.

Enterprise IT Will See a Controls Problem, Not a Culture War

Among hobbyists, this controversy will be debated as another skirmish in the AI backlash. Among enterprise IT teams, the more practical question is simpler: who controls the development environment’s statements about work?

That question is becoming urgent. Organizations are trying to define when AI coding tools are allowed, what data they can see, how generated code is reviewed, whether open-source license risk is increased, and how to document AI assistance for customers. These policies are already difficult to write because the tooling landscape changes faster than procurement and legal review.

A default that inaccurately stamps Copilot onto commits makes that job harder. It creates noise in audit trails. It can trigger false policy violations. It forces teams to distinguish between actual AI use and editor-inserted metadata. It may require repository cleanup if public history has already been pushed.

Even when the immediate bug is fixed, the incident will likely nudge administrators toward stricter controls. Some teams will lock VS Code settings through managed configuration. Others will add commit hooks that strip unauthorized trailers or reject AI co-author lines unless a specific policy condition is met. Larger organizations may demand clearer enterprise documentation from Microsoft about exactly when Copilot metadata is generated.
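As a rough illustration of the commit-hook approach, the following is a minimal sketch of a Git `commit-msg` hook written in Python. It assumes the unwanted trailer is a `Co-authored-by` line whose value mentions "Copilot"; the exact text VS Code writes may differ, so the pattern should be adjusted to what actually appears in your history. The `AI_TRAILER_POLICY` environment variable is a hypothetical switch invented for this example.

```python
#!/usr/bin/env python3
"""Sketch of a commit-msg hook that strips or rejects AI co-author trailers."""
import os
import re
import sys

# Assumption: the trailer value mentions "Copilot" somewhere on the line.
AI_TRAILER = re.compile(r"^co-authored-by:.*\bcopilot\b", re.IGNORECASE)


def split_ai_trailers(message: str) -> tuple[str, list[str]]:
    """Return (message without AI co-author lines, the removed lines)."""
    kept, removed = [], []
    for line in message.splitlines():
        (removed if AI_TRAILER.match(line.strip()) else kept).append(line)
    cleaned = "\n".join(kept).rstrip() + "\n"
    return cleaned, removed


def main(argv: list[str]) -> int:
    path = argv[1]  # Git passes the commit message file as the first argument
    with open(path, encoding="utf-8") as fh:
        original = fh.read()
    cleaned, removed = split_ai_trailers(original)
    if not removed:
        return 0  # nothing to do, leave the message untouched
    # Hypothetical switch: AI_TRAILER_POLICY=reject aborts instead of rewriting.
    if os.environ.get("AI_TRAILER_POLICY", "strip") == "reject":
        print("commit rejected: unapproved AI co-author trailer", file=sys.stderr)
        return 1  # a non-zero exit status makes Git abort the commit
    with open(path, "w", encoding="utf-8") as fh:
        fh.write(cleaned)
    return 0


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv))
```

Saved as `.git/hooks/commit-msg` and made executable, a script like this runs on every local commit. Client-side hooks are not distributed with a clone, so a team that needs enforcement would likely pair it with a server-side check.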

This is where Microsoft’s commercial ambitions collide with its platform responsibilities. Copilot is not just a consumer feature; it is an enterprise product sold on productivity, governance, and security. If the surrounding tools make governance less reliable, Microsoft undercuts its own sales pitch.

The company should be especially careful with developers because developers are unusually sensitive to hidden state. They understand that tiny defaults can have large downstream effects. They know the difference between a UI preference and a mutation of a repository artifact. They are also the people most likely to build institutional narratives around whether a tool can be trusted.

In that sense, the VS Code incident is not a public-relations flare-up. It is a procurement signal. If Microsoft wants Copilot to be accepted inside serious software organizations, it has to prove that AI features obey policy boundaries even when those boundaries are inconvenient to growth metrics.

The Open-Source Optics Are Awkward for Microsoft

VS Code occupies an unusual place in the open-source world. Its core is developed in the open, but the Microsoft-distributed product includes Microsoft branding, services, and integration choices. Developers understand this bargain. They have accepted it because the tool is excellent.

But open development also means controversial product changes are visible. Pull requests, settings changes, review comments, and reverts become part of the public argument. In this case, that visibility helped users identify the default change and debate it in real time.

That transparency is healthy, but it also means Microsoft cannot hide behind abstraction. A one-line settings flip may look minor internally. Externally, it becomes evidence in a broader case about Copilot creep. The pull request is not just a development artifact; it is a document in the court of developer opinion.

The apology from an individual reviewer deserves some grace. Large software projects have many contributors, release pressures, and imperfect tests. But Microsoft as an institution does not get to treat this as only an individual lapse. The company has spent years telling developers that AI will reshape software creation. It cannot then be surprised when developers scrutinize any feature that changes authorship records.

The open-source optics are especially fraught because the “Co-authored-by” convention is deeply tied to community recognition. Open-source contributors care about credit. They care about authorship. They care about who appears in a repository's contributor graph. Automatically inserting a corporate AI identity into that space feels invasive in a way that a proprietary IDE feature might not.

Microsoft’s challenge is to separate two things it has allowed to blur: Copilot as a developer-chosen assistant, and Copilot as an ambient layer of Microsoft’s developer cloud. The former can earn loyalty. The latter will generate resistance, particularly when it touches communal records like Git history.

The Fix Needs to Be Cultural, Not Just Technical

Turning the setting back off by default is necessary, but it is not sufficient. Microsoft needs a more conservative doctrine for AI features that alter durable artifacts.

The principle should be simple: AI may suggest changes to a user-controlled artifact, but it should not silently change the artifact’s claims about authorship, intent, compliance, or provenance. That applies to commit trailers, pull request descriptions, issue comments, release notes, changelogs, and code-review summaries. These are not ordinary UI surfaces. They are records.

A better design would make AI attribution explicit at the moment of commit. If Copilot generated code, VS Code could show a clearly worded prompt: “Copilot contributed to this change. Add an AI co-author trailer?” The dialog should display the exact text to be inserted and remember the user’s choice at the workspace or organization level.

For enterprise environments, Microsoft should expose policy controls that can enforce three states: never add AI attribution, always require user confirmation, or require attribution when Copilot-generated code is detected. Those controls should be visible to developers, not hidden in admin portals they cannot inspect. A developer should know whether a setting is their choice or an organizational rule.

The detection model also needs humility. If VS Code cannot reliably distinguish between AI-generated code, AI chat advice, AI commit-message generation, and unrelated edits, it should not write a co-author trailer automatically. Uncertainty should produce a prompt, not a claim.
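The three policy states and the "uncertainty produces a prompt" rule can be expressed as a small decision table. The sketch below is purely illustrative: the `Policy`, `Detection`, and `Action` names are hypothetical and do not correspond to any VS Code or Copilot API.

```python
from enum import Enum


class Policy(Enum):
    """Hypothetical organization-level policy states."""
    NEVER = "never"                  # never add AI attribution
    CONFIRM = "confirm"              # always require user confirmation
    REQUIRE_IF_DETECTED = "require"  # attribution required when detected


class Detection(Enum):
    """Hypothetical outcome of the editor's AI-contribution detection."""
    NONE = "none"            # no AI-generated code in this change
    UNCERTAIN = "uncertain"  # the tool cannot tell
    GENERATED = "generated"  # AI-generated code confidently identified


class Action(Enum):
    SKIP = "skip"      # write nothing
    PROMPT = "prompt"  # show the exact trailer text and ask the user
    ADD = "add"        # add the trailer because policy requires it


def decide(policy: Policy, detection: Detection) -> Action:
    """Map a policy and a detection result to what the editor should do."""
    # Nothing is ever written when policy forbids it or no AI code is present.
    if policy is Policy.NEVER or detection is Detection.NONE:
        return Action.SKIP
    # Uncertainty produces a prompt, never a claim.
    if detection is Detection.UNCERTAIN:
        return Action.PROMPT
    # From here on, detection is confident.
    if policy is Policy.REQUIRE_IF_DETECTED:
        return Action.ADD
    return Action.PROMPT
```

The property worth noticing is that `Action.ADD` is reachable from exactly one combination: an explicit organizational requirement plus a confident detection. Every other path either does nothing or asks.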

Finally, Microsoft should treat AI metadata as part of the security and compliance surface. That means regression tests, release-note clarity, enterprise advisories when behavior changes, and a higher approval bar for defaults. A company that can document every nuance of cloud identity management can document when its editor will write “Copilot” into a commit.

The Small Trailer That Exposed the Whole Copilot Bargain

The details of this incident are narrow, but the lesson is broad: developers will tolerate AI assistance more readily than AI assumption. Near-term fixes should be practical, not ideological.

- Teams using VS Code should verify the current value of `git.addAICoAuthor` across their developer environments and decide whether it belongs in user settings, workspace settings, or managed policy.

- Organizations with AI restrictions should add server-side repository checks or commit hooks if a false Copilot co-author line would create compliance or employment risk.

- Developers who used VS Code during the affected period should inspect recent commits for unintended “Co-authored-by: Copilot” trailers before relying on that history as an accurate record.

- Microsoft should reserve opt-out defaults for convenience features, not for metadata that makes public claims about authorship or tool usage.

- AI provenance should become more granular than a generic co-author line if vendors expect enterprises to trust it.

- The developer community’s backlash is best read as a demand for consent, not as a blanket rejection of AI coding tools.
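For the commit-inspection item above, a short audit script is one way to find affected commits. This is a sketch under stated assumptions: the trailer pattern is taken from the string quoted in this article, the default `since` window is an arbitrary placeholder rather than the actual affected period, and the function names are hypothetical.

```python
import re
import subprocess

# Assumption: the trailer starts with "Co-authored-by: Copilot", as quoted
# in the article. MULTILINE lets ^ match at the start of any body line.
AI_TRAILER = re.compile(
    r"^co-authored-by:\s*copilot\b", re.IGNORECASE | re.MULTILINE
)

# %H is the commit hash and %B the raw message body. %x1f and %x1e emit
# separator bytes that will not occur in ordinary commit messages.
LOG_FORMAT = "%H%x1f%B%x1e"


def flagged_commits(log_output: str) -> list[str]:
    """Return hashes of commits whose message has a Copilot co-author trailer."""
    flagged = []
    for record in log_output.split("\x1e"):
        record = record.strip("\n")
        if not record:
            continue
        commit_hash, _, body = record.partition("\x1f")
        if AI_TRAILER.search(body):
            flagged.append(commit_hash)
    return flagged


def read_log(since: str = "2 weeks ago") -> str:
    """Run git log in the current repository; `since` is a placeholder window."""
    result = subprocess.run(
        ["git", "log", f"--since={since}", f"--format={LOG_FORMAT}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

Calling `flagged_commits(read_log())` inside a repository returns the hashes to review by hand. Whether to amend or rewrite already-pushed history is a separate decision, since a flagged commit may reflect genuine Copilot use.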

Source: Windows Central https://www.windowscentral.com/soft...ot-as-co-author-without-permission-or-notice/