Microsoft's latest demos show artificial intelligence moving out of a sidebar and straight into the places Windows users open every day: the taskbar and File Explorer — a practical, system-level push that folds Microsoft 365 Copilot and a new class of long-running agents into the Windows 11 shell for quick answers, document summaries, image edits, and background work you can monitor from the taskbar.


Background / Overview

Microsoft has been steadily folding Copilot features into Windows 11 for more than a year, but recent previews and demonstrations mark a clear evolution: Copilot is no longer an isolated assistant living in a panel. It is becoming an operating-system-level service that can be invoked from the taskbar, surface contextual actions in File Explorer, and run agentic tasks in sandboxed workspaces. This shift blends local, on-device AI capabilities with cloud models and connective "agent" patterns that can access apps, files, and services when authorized.

Key pieces of Microsoft’s approach:

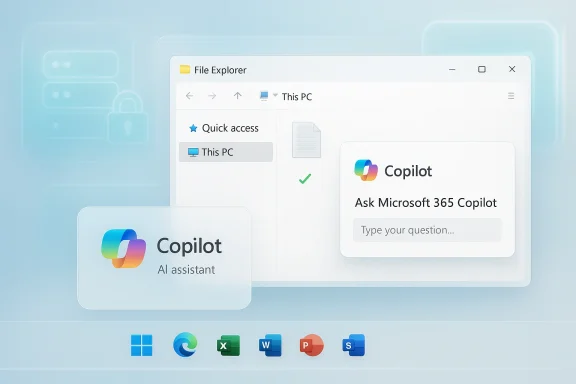

- Ask Copilot on the taskbar — an optional, opt-in replacement for the classic search box that lets users type or speak to Copilot and launch or monitor AI agents.

- AI Actions in File Explorer — context-aware right‑click options that surface summarization, visual search, and micro‑edits without opening a full editor.

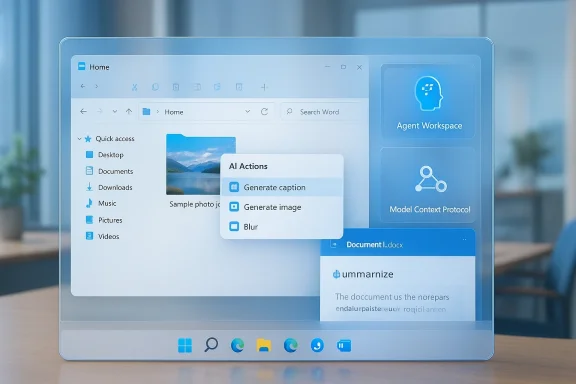

- Agent Workspace + Model Context Protocol (MCP) — a sandboxed runtime and a protocol to let agents connect to apps, run multi-step workflows, and use connectors registered to the on‑device registry (ODR). Microsoft positions these as managed, auditable ways for agents to act on files and settings with admin controls.

What Microsoft demonstrated — concrete details from the demo

Ask Copilot on the taskbar: instant access, persistent agents

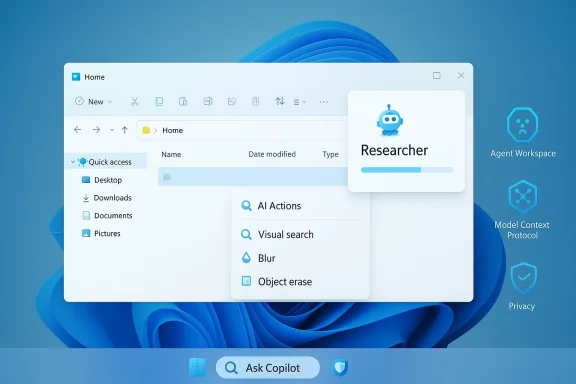

The taskbar demo optionally replaces the search box with an Ask Copilot composer. Typing queries or using voice/vision produces conversational results, but the more notable capability is agents: user-invoked assistants that can run longer tasks in the background and be observed from the taskbar via progress indicators and status badges. Agents can be summoned with an “@” followed by an agent name (for example, the Researcher agent), and they can keep running for many minutes to fetch, summarize, or analyze content across your files, mail, and meetings. The demo highlights progress icons on taskbar entries and a small summary once a background agent completes its work.

Practical implications shown:

- Agents surface intermediate results and status without taking over the screen.

- Agents can be started from a quick prompt and left to run while you continue working.

- Agents integrate with Microsoft 365 content sources (Outlook, Teams, OneDrive) for richer context than local search alone.

Copilot inside File Explorer: inline intelligence for files

The File Explorer demo showed a new “Ask Microsoft 365 Copilot” affordance, plus an AI Actions context menu for files. For synced Microsoft 365 documents, Copilot can provide a short summary, suggest a next step, or extract key details. For images, quick actions such as background blur/removal, object erasure, and visual search (Bing Visual Search integration) are surfaced directly from Explorer’s context menu, reducing friction for small edits that previously required opening Photos or Paint.

Notable UI and behavior details:

- Right‑click an image or document → see AI Actions → select available operations (summarize, edit, visual search).

- File Explorer’s AI actions are context-sensitive: options appear only if relevant to the file type and user’s configuration.

- Some insiders and regions may see staged rollouts and differences in the initial feature set.

How this is implemented (technical verification)

Microsoft’s public documentation and company demos repeatedly make three implementation claims: agentic features run in an isolated Agent Workspace, agent-to-app integration uses a Model Context Protocol (MCP) with registered connectors, and administrators retain policy controls for deployment. Those claims are corroborated by Microsoft support notes and independent reporting. The Agent Workspace is described as a sandboxed execution context where agents can be observed, limited, and audited, and MCP as the protocol through which agents reach apps. Developers can build agent connectors (MCP servers) that expose app-specific actions to agents; connectors are discoverable through the Windows On‑Device Registry (ODR).

Additional technical points verified across sources:

- The taskbar Ask Copilot interface uses the same indexing that powers Windows Search but layers Copilot’s access to cloud resources and connectors on top of it. Microsoft says Ask Copilot uses fewer resources and is designed for responsiveness.

- Some advanced features (for example, Windows Recall and other high‑bandwidth vision capabilities) require Copilot+ PCs with NPUs or hardware-enabled model acceleration. Microsoft’s hardware gating for certain on‑device features is documented in their Copilot+ materials.

- Insider build numbers and changelog notes show AI Actions in Explorer and taskbar experiments appearing in Dev/Beta channel releases (for example, builds in the 26120–26220 range), which aligns with community testing and the company’s staged rollout plan.
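To make the connector model in this section more concrete: MCP is built on JSON-RPC 2.0, and an agent invokes a capability exposed by an MCP server with a `tools/call` request. The following is a minimal sketch of what such a message might look like, not Microsoft's implementation. The tool name `summarize_document` and its `path` argument are hypothetical, and nothing here talks to the ODR or to a real connector.

```python
import json
from itertools import count

_request_ids = count(1)  # each JSON-RPC request carries its own id


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and argument names, for illustration only.
message = build_tool_call("summarize_document", {"path": "C:/Reports/q3.docx"})
print(message)
```

Because every action an agent takes through a connector is a discrete, named request of this kind, it is a natural point at which to apply policy and logging.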

Why Microsoft is doing this: the productivity argument

Microsoft’s pitch is straightforward: put AI in places users already work so they can get faster answers, stay in flow, and reduce context switching. The demos emphasize:

- Faster retrieval of meeting or document facts without opening multiple apps.

- Micro-editing tasks (image edits, quick rewrites) available with fewer clicks.

- Background, long-running research tasks that finish while you continue to work.

Strengths — what this delivers well

- Seamless workflows: Having Copilot-derived summaries and edits available directly in Explorer reduces app switches and small repetitive tasks. This can measurably shorten document triage and simple image edits.

- Agent productivity: Taskbar-visible agents let users launch longer tasks (research, aggregated summaries) and track progress without losing focus — a genuine UX improvement for heavy knowledge workers.

- Hybrid cloud + local model design: Microsoft’s dual approach — cloud models for heavy reasoning, local on‑device models for low-latency or sensitive tasks on Copilot+ hardware — gives admins and users choices over performance and privacy.

- Developer extensibility (MCP): Opening a formal protocol for agents to use connectors creates an ecosystem opportunity. Organizations can build controlled connectors to their internal apps, enabling safe, auditable automation.

Risks and trade-offs — what to watch closely

No major platform shift is without risk. Here are the primary areas of concern, with practical context and mitigation direction.

1) Data exposure and connectors

Agents that access mail, calendars, shared drives, and third‑party services expand the attack surface. Even with an ODR‑managed connector system, a misconfigured connector or an overly permissive agent could surface sensitive information into model prompts or cloud services.

Mitigation: Enterprises should adopt a phased rollout, restrict connector registration via device management, and audit connector permissions before enabling agent use at scale. Microsoft’s documentation and preview guidance highlight ODR and admin controls; organizations must use them.
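A connector audit of the kind recommended here can be sketched as a comparison between an inventory and an approved allowlist. This is an illustrative sketch only: the `Connector` record, the scope strings, and the `APPROVED` policy table are all assumptions, not a real ODR or device-management schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Connector:
    """Hypothetical inventory record for one registered agent connector."""
    name: str
    scopes: frozenset[str]


# Assumed policy: connector name -> scopes the organization has approved.
APPROVED = {
    "onedrive": frozenset({"files.read"}),
    "exchange": frozenset({"mail.read", "calendar.read"}),
}


def audit(connectors: list[Connector]) -> list[str]:
    """Return one finding per connector that is unapproved or over-scoped."""
    findings = []
    for c in connectors:
        allowed = APPROVED.get(c.name)
        if allowed is None:
            findings.append(f"{c.name}: not on the allowlist")
        elif extra := c.scopes - allowed:
            findings.append(f"{c.name}: unapproved scopes {sorted(extra)}")
    return findings


inventory = [
    Connector("onedrive", frozenset({"files.read"})),
    Connector("exchange", frozenset({"mail.read", "mail.send"})),
    Connector("crm-sync", frozenset({"contacts.read"})),
]
for finding in audit(inventory):
    print(finding)
```

With this sample inventory the audit flags the `exchange` connector for the extra `mail.send` scope and `crm-sync` for being absent from the allowlist, while `onedrive` passes.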

2) Hallucination and automation risk

Copilot-style models can produce plausible but incorrect outputs. When agents are allowed to perform multi-step actions (modify files, change settings, send messages), an incorrect instruction could have outsized consequences.

Mitigation: Keep a human in the loop for any agent action that writes data or sends external communications. Use conservative defaults (summarize, suggest, and require explicit approval for changes). Microsoft’s design for Copilot Actions emphasizes explicit consent and sandboxing; organizations should enforce confirmation steps.
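The "conservative defaults" idea can be expressed as a small gate in front of every agent action: read-only work proceeds, while anything that writes or sends waits for a person. The sketch below assumes a hypothetical set of action kinds and caller-supplied callbacks; it is not how Copilot Actions is implemented.

```python
from typing import Callable

# Assumed classification: action kinds that change state or leave the device.
REQUIRES_APPROVAL = {"write_file", "send_mail", "change_setting"}


def run_action(kind: str, description: str,
               execute: Callable[[], str],
               approve: Callable[[str], bool]) -> str:
    """Run `execute` only if the action is read-only or a human approves it."""
    if kind in REQUIRES_APPROVAL and not approve(description):
        return f"blocked: {description}"
    return execute()


# Stand-in callbacks; a real deployment would prompt the user instead.
def deny_all(description: str) -> bool:
    return False


print(run_action("summarize", "summarize report.docx", lambda: "summary ready", deny_all))
print(run_action("send_mail", "email summary to client", lambda: "mail sent", deny_all))
```

Here the summary runs without a prompt, and the outbound mail is blocked because the approver declined.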

3) Persistent background agents and resource/privacy tradeoffs

Long‑running agents improve productivity, but they also introduce persistent background processes that may keep search indexes, files, or cached credentials in memory longer than before.

Mitigation: Provide visibility and controls for active agents (start/stop, scope, logs) and limit agent lifetime by default. The taskbar progress indicators and Agent Workspace are steps in this direction, but admins will want logging and telemetry to be configurable.
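One simple way to picture a default lifetime limit is a time budget checked between an agent's steps. The sketch below is an assumption about how such a limit could work, not a description of the Agent Workspace. It is cooperative: it stops before starting the next step and cannot interrupt a step that is already running.

```python
import time
from typing import Callable, Iterable


def run_with_deadline(steps: Iterable[Callable[[], None]],
                      max_seconds: float,
                      clock: Callable[[], float] = time.monotonic) -> str:
    """Run agent steps in order, refusing to start a step once the budget is spent."""
    deadline = clock() + max_seconds
    done = 0
    for step in steps:
        if clock() >= deadline:
            return f"stopped after {done} steps: lifetime exceeded"
        step()
        done += 1
    return f"completed {done} steps"


# Three trivial steps finish well inside a 60-second budget.
print(run_with_deadline([lambda: None] * 3, max_seconds=60.0))
```

The `clock` parameter exists only so the limit can be exercised deterministically in tests.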

4) Regulatory and regional fragmentation

Feature availability may be constrained in some regions, for example the EEA or other jurisdictions with strict AI and data rules. Prior previews have shown feature gating by region, and Microsoft has already introduced admin policies (for example, a RemoveMicrosoftCopilotApp policy surfaced in preview notes) to allow enterprises to control Copilot’s footprint.

How enterprises and IT teams should prepare (practical checklist)

- Inventory and policy
  - Audit where Copilot/agents will be allowed to connect (OneDrive, Exchange, third‑party connectors).
  - Define a policy for connector registration and scope.

- Pilot and measure
  - Start with a small Insider/early adopter group.
  - Measure agent usage patterns, resource impacts, and error rates for any automated actions.

- Safety gates
  - Enforce approval for agent actions that write files, send mail, or change system settings.
  - Require explicit human confirmation for any outbound messages generated by agents.

- Visibility and logging
  - Ensure Agent Workspace activity is logged to enterprise SIEM.
  - Configure telemetry levels consistent with privacy rules.

- Training and documentation
  - Educate end users on when Copilot may access corporate data and how to revoke agent permissions.
  - Provide a simple “how to disable Ask Copilot” and “how to opt out” guide for non‑participants.
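For the visibility and logging item in the checklist, it can help to decide early what a single agent audit record should contain. The sketch below builds one JSON-lines record of the sort a SIEM could ingest. The field names are illustrative assumptions; Microsoft has not published this schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_event(agent: str, action: str, target: str,
                approved_by: Optional[str]) -> str:
    """Build one JSON-lines audit record; field names are illustrative."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "target": target,
        "approved_by": approved_by,  # None means no human approval was recorded
    }
    return json.dumps(record, sort_keys=True)


print(audit_event("Researcher", "read", "OneDrive:/Reports/q3.docx", None))
```

Recording `approved_by` alongside each action makes it possible to check afterwards that the safety gates in the checklist were actually enforced.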

Real-world examples — how this changes common tasks

Example: Summarize a shared report without opening Word

- Select the synced Word file in File Explorer.

- Right‑click → AI Actions → choose Summarize.

- Copilot returns a short brief with action suggestions (talking points, next steps).

- If you accept, the summary can be pasted into an email draft or opened in Copilot for refinement.

Example: Start a research agent from the taskbar

- Click Ask Copilot on the taskbar (or press the configured shortcut).

- Type “@Researcher: Compile recent regulation changes for X.”

- Agent appears in taskbar with a progress indicator and runs for several minutes, aggregating documents and emails.

- Agent completes, surfaces a slide‑ready summary and a link to deeper notes in the Copilot app.
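The “@” addressing in the steps above amounts to splitting a prompt into an agent name and a task. The sketch below shows one way that split could be done. The exact syntax Windows accepts is not documented in the demo, so the pattern (a leading `@Name`, an optional colon, then the task) is an assumption.

```python
import re
from typing import NamedTuple, Optional


class AgentPrompt(NamedTuple):
    agent: Optional[str]  # None when the prompt does not address an agent
    task: str


# Assumed syntax: "@Name" at the start, an optional colon, then the task text.
_MENTION = re.compile(r"^@(\w+):?\s*(.*)$", re.DOTALL)


def parse_prompt(text: str) -> AgentPrompt:
    """Split a composer prompt into an optional agent name and the task."""
    stripped = text.strip()
    match = _MENTION.match(stripped)
    if match is None:
        return AgentPrompt(None, stripped)
    return AgentPrompt(match.group(1), match.group(2).strip())


print(parse_prompt("@Researcher: Compile recent regulation changes for X."))
```

A prompt without a leading “@” comes back with `agent` set to `None`, which a caller could treat as an ordinary Copilot query.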

Developer and ecosystem opportunity

The Model Context Protocol and agent connectors create a platform play: independent developers and enterprise vendors can expose controlled capabilities to agents. The ODR provides a discoverable, registry-based approach to manage connectors, which helps with governance but also presents an integration surface for productivity vendors, LOB apps, and SI partners. Careful API design and permission scoping will determine whether MCP fosters secure innovation or chaotic permission sprawl.

What we still don’t fully know: caution on unverifiable claims

- Timing for general availability: Microsoft’s demos and insider rollouts indicate a staged release, but exact GA dates and which Windows update will include the features for all users remain vendor-controlled and subject to change. Public previews show builds in the 26120–26220 range, but Microsoft has historically adjusted timelines based on feedback.

- The scope of third‑party connector vetting: MCP/ODR are documented, but the exact certification process and enforcement level for third‑party connectors will be critical and are not fully specified in the public preview docs. Treat any connector integration as a governance risk until the vetting workflow is transparent.

- Offline / local model limitations: Microsoft emphasizes hybrid cloud+local models, but the performance and accuracy tradeoffs for on‑device models on Copilot+ PCs versus cloud models vary by hardware and are influenced by model updates Microsoft may push. Organizations reliant on strictly offline inference should validate behavior on their Copilot+ hardware.

Final assessment — balancing optimism with caution

Microsoft’s integration of Copilot into the taskbar and File Explorer is an important and logical step toward AI that feels native to the OS. The productivity potential is real: fewer context switches, faster triage of files, and background agents that can do the grunt work while humans focus on decisions. The technical architecture (Agent Workspace, MCP, and ODR) is a sensible attempt to balance capability and control, and Microsoft’s preview notes already show admin-oriented controls and opt‑in UX design.

However, the same integration also raises real enterprise and consumer concerns. Data governance, connector security, hallucination risk when automations act, and regional regulatory differences are not hypothetical; they are immediate operational questions that IT teams and privacy officers must address before enabling broad adoption. The prudent rollout path is a staged, measured approach that prioritizes pilot programs, strict connector governance, human approval for automated actions, and comprehensive logging.

If you manage Windows deployments, treat this as a policy-first feature set: decide where agents may run, which connectors are allowed, and how end users will be trained. If you’re a power user, try the Insider builds in a test environment to understand how the new taskbar and File Explorer interactions change your daily flow. Either way, this shift signals that Windows 11 is moving from a passive platform into a workspace where AI agents are first-class citizens — a powerful change, but one that demands governance, testing, and a clear view of the security boundaries.

Conclusion: Microsoft’s demo of AI running in the Windows 11 taskbar and File Explorer shows a maturing Copilot vision — one that promises meaningful productivity gains while also introducing governance and security responsibilities. For individuals and organizations, the immediate next steps are simple and practical: pilot carefully, lock down connectors and permissions, ensure human oversight for automated actions, and watch Microsoft’s public preview notes and administrative controls as these features make their way from Insider builds into broadly available releases.

Source: TechPowerUp Microsoft Shows AI Integration in Windows 11 Running in Task Bar and File Explorer