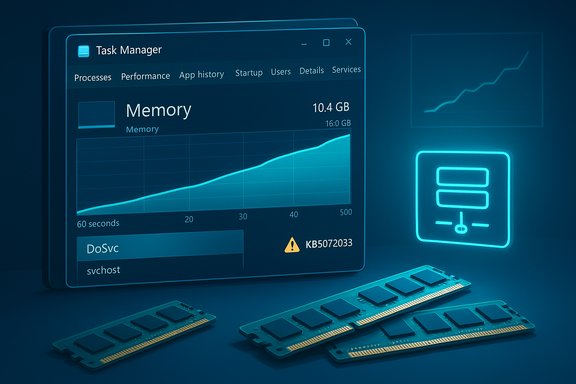

Windows 11’s built‑in update‑sharing engine, Delivery Optimization (service name DoSvc), is being blamed for steady RAM growth on many machines running 24H2 and 25H2 — a symptom that looks and behaves like a memory leak and that has left some 8 GB and 16 GB systems sluggish or unusable unless the service is limited or disabled.

Source: Mix Vale, “Delivery Optimization in Windows 11 24H2 presents memory leak and high RAM consumption”

Background

Delivery Optimization is Microsoft’s peer‑assisted distribution layer for Windows Update and Microsoft Store content. It breaks update payloads into chunks and can fetch pieces from other devices on the same LAN (or, optionally, from Internet peers) to reduce repeated downloads from Microsoft’s servers and to speed distribution in dense deployments. End users can view activity, set bandwidth limits, or toggle peer sharing in Settings → Windows Update → Advanced options → Delivery Optimization.

In early December 2025 Microsoft shipped the cumulative update identified as KB5072033 (build 26100.7462 for 24H2 and 26200.7462 for 25H2). The KB explicitly documents a configuration change: the AppX Deployment Service (AppXSVC) was moved from a trigger/manual start type to Automatic to “improve reliability in some isolated scenarios.” That startup change increases the runtime exposure of services that historically ran only when needed, and community reporting suggests that this can amplify any background resource usage by those services.

What users are seeing (symptoms and scope)

- The observable pattern across multiple community reports and forum traces is a monotonic rise in memory use for an svchost.exe instance that hosts Delivery Optimization (DoSvc). Memory usage can begin at modest levels (hundreds of megabytes) and climb across hours to multiple gigabytes in some anecdotal cases, producing swapping, UI lag, and even RDP session freezes on memory‑constrained hosts.

- Machines with 8 GB or 12 GB of RAM tend to show the worst user impact; systems with 16 GB or more often tolerate the effect without noticeable disruption, though the process still appears near the top of Task Manager’s memory ranking.

- The growth pattern is consistent with a memory leak hypothesis: private bytes and working set rise over time without obvious release points, even when the PC is idle and no downloads are in progress. Community diagnostics (Process Explorer, RAMMap, ETW traces) have been used to reproduce and quantify the behavior on affected machines.

- At the time community reporting spiked there was no Microsoft public advisory explicitly labelling DoSvc as a confirmed leak; instead, Microsoft’s KB confirmed the AppXSVC startup change and users linked the timing to growing DoSvc footprints. Treat the leak characterization as a credible community diagnosis that awaits engineering confirmation and root‑cause detail from Microsoft.
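The "steady rise without release points" pattern described above can be checked numerically once you have a series of memory readings. The following is a minimal sketch, assuming you have already sampled the DoSvc host's private bytes (in MB) at a fixed interval; the function names and both thresholds are illustrative assumptions, not values from Microsoft or from the community reports.

```python
from statistics import mean


def growth_summary(samples_mb, interval_s):
    """Summarise private-bytes samples (MB) taken at a fixed interval (seconds)."""
    if len(samples_mb) < 3:
        raise ValueError("need at least three samples")
    # Fraction of consecutive steps where memory did not shrink.
    steps = [b - a for a, b in zip(samples_mb, samples_mb[1:])]
    rising_fraction = sum(1 for s in steps if s >= 0) / len(steps)
    # Least-squares slope of memory against time, converted to MB per hour.
    times = [i * interval_s for i in range(len(samples_mb))]
    t_bar, m_bar = mean(times), mean(samples_mb)
    numerator = sum((t - t_bar) * (m - m_bar) for t, m in zip(times, samples_mb))
    denominator = sum((t - t_bar) ** 2 for t in times)
    return {
        "rising_fraction": rising_fraction,
        "mb_per_hour": numerator / denominator * 3600,
    }


def looks_like_leak(samples_mb, interval_s, min_rising=0.9, min_mb_per_hour=50.0):
    """Heuristic only: both thresholds are arbitrary assumptions to tune locally."""
    summary = growth_summary(samples_mb, interval_s)
    return (
        summary["rising_fraction"] >= min_rising
        and summary["mb_per_hour"] >= min_mb_per_hour
    )
```

A result of `True` only says the readings rise consistently; it cannot distinguish a native leak from an untrimmed cache, which is why trace-level diagnostics are still needed.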

Why this surfaced now: trigger start vs automatic start

Windows services use different startup semantics for performance and resource stewardship:

- Trigger/manual start: the service remains dormant until a specific trigger (e.g., Store activity, scheduled update) launches it. This minimizes steady‑state memory and thread footprints.

- Automatic start: the binary is loaded at boot and remains resident (or remains subject to automatic restart behavior). Even idle services consume mapped pages, timers, thread pools, and cached state — all of which increase working set and private bytes.
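To see which start type a service currently has, the output of `sc qc <service>` includes a `START_TYPE` line with a numeric code. Below is a small sketch that parses that line; the sample text is an abbreviated illustration of the format rather than a capture from an affected machine, and the helper name is hypothetical. Note that `sc qc` does not list triggers, so a trigger-started service typically appears here as a demand (manual) start.

```python
import re

# Service start codes as stored in the service's Start value.
START_TYPES = {
    0: "Boot",
    1: "System",
    2: "Automatic",
    3: "Manual (demand)",
    4: "Disabled",
}

# Abbreviated, illustrative example of `sc qc AppXSVC` output.
SAMPLE_SC_QC = """\
[SC] QueryServiceConfig SUCCESS

SERVICE_NAME: AppXSVC
        TYPE               : 20  WIN32_SHARE_PROCESS
        START_TYPE         : 3   DEMAND_START
        ERROR_CONTROL      : 1   NORMAL
"""


def parse_start_type(sc_qc_output):
    """Return (code, friendly_name) from the START_TYPE line of `sc qc` output."""
    match = re.search(r"START_TYPE\s*:\s*(\d+)", sc_qc_output)
    if match is None:
        raise ValueError("no START_TYPE line found")
    code = int(match.group(1))
    return code, START_TYPES.get(code, "Unknown")
```

On a Windows host, the text could be obtained with `subprocess.run(["sc", "qc", "AppXSVC"], capture_output=True, text=True).stdout` and passed to `parse_start_type`.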

What’s verified and what still needs confirmation

Verified facts

- KB5072033’s release notes document AppXSVC’s change to Automatic.

- Delivery Optimization is configurable via Settings and has bandwidth and peer controls that users can change.

- Numerous independent community reports, forum threads and user traces document rising DoSvc memory usage and successful mitigation via disabling or limiting Delivery Optimization on symptomatic machines.

Unverified or needing confirmation

- Extreme user anecdotes quoting DoSvc growing to 20 GB are real reports from individuals but remain anecdotal outliers until validated by Microsoft engineering traces. Community numbers are important signals but should be treated with caution when used as a universal expectation.

- The definitive root cause — whether a native memory leak inside DoSvc, an untrimmed cache made visible by a startup semantics change, or a cross‑service interaction — requires ETW/RAMMap/PoolMon traces and Microsoft’s engineering analysis before it can be confirmed. Community artifacts accelerate that work, but they are not a substitute for vendor validation.

How to detect and triage the problem (step‑by‑step)

- Quick check

  - Open Task Manager (Ctrl+Shift+Esc) → Details tab → sort by Memory. Look for an svchost.exe process running as NetworkService, then right‑click it and choose Go to service(s), or match its PID against the PID column on the Services tab, to confirm it hosts DoSvc.

- Deeper inspection (power users / admins)

  - Use Process Explorer to view Private Bytes, Working Set, thread counts and loaded DLLs for the DoSvc host.

  - Run RAMMap to inspect kernel pools and the standby list to separate user‑space allocations from kernel allocations.

  - Capture ETW traces using Windows Performance Recorder (WPR) and collect ProcMon if you can reproduce the growth window. Structured artifacts greatly increase the chance of meaningful Microsoft engineering engagement.

- Correlate with Delivery Optimization UI

  - Settings → Windows Update → Advanced options → Delivery Optimization → Activity monitor: check upload/download totals and cache size to see if DoSvc is actually doing network work or if memory growth occurs while the service is idle.
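The PID-to-service mapping from the quick check can also be scripted. The sketch below parses the CSV produced by `tasklist /svc /fo csv` and returns the PIDs of processes hosting a given service. The sample rows and PIDs are invented for illustration, the helper name is hypothetical, and columns are read by position because the header text may be localized.

```python
import csv
import io

# Illustrative sample of `tasklist /svc /fo csv` output (PIDs are made up).
SAMPLE_TASKLIST = '''"Image Name","PID","Services"
"svchost.exe","980","BrokerInfrastructure,DcomLaunch,PlugPlay"
"svchost.exe","4312","DoSvc"
"explorer.exe","6120","N/A"
'''


def find_service_host_pids(tasklist_csv, service="DoSvc"):
    """Return PIDs whose Services column lists the given service name."""
    reader = csv.reader(io.StringIO(tasklist_csv))
    next(reader, None)  # skip the header row
    wanted = service.lower()
    pids = []
    for row in reader:
        if len(row) < 3:
            continue  # ignore malformed or blank lines
        names = {name.strip().lower() for name in row[2].split(",")}
        if wanted in names:
            pids.append(int(row[1]))
    return pids
```

With the PID in hand, the same process can be located in Process Explorer or targeted when recording traces.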

Immediate mitigations (safe, reversible) — prioritized

- Easiest and safest (recommended for most home users)

  - Settings → Windows Update → Advanced options → Delivery Optimization → toggle Allow downloads from other devices to Off. Reboot and monitor Task Manager. Many users report the memory growth stops after this change.

- Middle ground (preserve LAN peering)

  - Set Delivery Optimization to Devices on my local network only and apply bandwidth caps under Advanced options. This keeps LAN caching while preventing Internet peers from being used.

- Power‑user / temporary troubleshooting

  - Stop the service: open Services (services.msc) → Delivery Optimization → Stop; set Startup type to Manual for testing.

  - Registry change for persistent disable (advanced): set HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\DoSvc\Start to 4 (Disabled) and reboot. Use this only if you are comfortable with registry edits; managed systems may prevent or revert this.

- Clearing cache

  - Settings → System → Storage → Temporary files → select Delivery Optimization Files and remove, or run Disk Cleanup as admin and clear Delivery Optimization files. Reboot after clearing. This reclaims space and can reduce immediate background activity.

- Server / VDI mitigation (test in a pilot cohort)

  - Revert AppXSVC to a demand (trigger) start in a small pilot: open elevated command prompt and run sc config AppXSVC start= demand and sc stop AppXSVC. This can reduce start/stop flapping and resident memory on image‑managed hosts, but must be tested because it impacts Store app readiness and registration. Use managed policies (Intune / Group Policy) to apply consistent behavior at scale.
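For a pilot ring, the two `sc` commands from the server/VDI mitigation can be wrapped in a script that defaults to a dry run. This is a sketch only: the function names are hypothetical, it must run from an elevated session on Windows to have any effect, and it makes no attempt to handle access-denied errors or policy that reverts the setting.

```python
import subprocess


def revert_to_demand_commands(service="AppXSVC"):
    """Build the argv lists for `sc config <service> start= demand` and `sc stop`."""
    return [
        # sc.exe expects "start=" and its value as separate tokens.
        ["sc", "config", service, "start=", "demand"],
        ["sc", "stop", service],
    ]


def apply_commands(commands, dry_run=True):
    """Print each command, and execute it only when dry_run is False."""
    executed = []
    for argv in commands:
        print(("would run: " if dry_run else "running: ") + " ".join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)  # raises if sc.exe reports failure
            executed.append(argv)
    return executed
```

Calling `apply_commands(revert_to_demand_commands())` prints the planned commands without changing anything; pass `dry_run=False` only on hosts in the pilot cohort.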

Enterprise considerations and remediation workflow

- Pilot first: roll KB and any start‑type reversion to a small, representative test group before deploying at scale.

- Prefer policy controls: use Group Policy / Intune to set Delivery Optimization to LAN‑only or to apply throttling, rather than ad‑hoc registry edits that can drift.

- Collect structured diagnostics when escalating: periodic Process Explorer dumps, RAMMap snapshots, ETW traces, and a concise reproduction plan (steps, time to growth) dramatically improve the chance Microsoft will engage and provide a Known Issue Rollback (KIR) or hotfix if required. Community experience shows Microsoft engineers react faster to well‑formed artifacts.

- Monitoring systems: for server images, watch for start/stop flapping alerts tied to AppXSVC after KB5072033 and correlate with update windows — revert AppXSVC to demand start in the pilot ring if flapping floods the monitoring queue.

Analysis: strengths, risks and longer‑term implications

Strengths of Delivery Optimization

- Efficient at scale: reduces repeated downloads across many devices, saving upstream bandwidth and improving update delivery speed in dense networks.

- Tunable: Microsoft provides UI controls and MDM/Group Policy settings to adapt behavior to different environments.

Risks

- Visibility amplification: changes that make previously dormant services run more often will magnify any latent resource issues. The AppXSVC change to Automatic is an example of a small servicing change with outsized operational effect.

- Unbounded caches or retained references: if DoSvc holds caches or references without proper trimming over long lifetimes (or if a leak exists in native allocations), that will produce steady, system‑affecting growth on low‑RAM hosts.

- Operational cost at scale: For enterprises, disabling P2P adds bandwidth cost and slows deliveries. For servers, unexpected automatic residency can produce monitoring noise and density regressions in VDI and multi‑tenant hosts.

Longer‑term implications

- Microsoft needs to validate the community traces, reproduce the growth on instrumented test rigs, and publish an engineering advisory that distinguishes between (a) a true native memory leak, (b) untrimmed caches made visible by startup changes, or (c) service interaction effects; then ship a fix or KIR as appropriate. Community artifacts speed that work.

- Administrators should collect and escalate structured diagnostics for devices where mitigations do not help, while balancing bandwidth and update distribution trade‑offs across their fleets.

Context: increasing hardware expectations for AI features

Microsoft’s recent guidance around Copilot+ PCs and other on‑device AI experiences has raised the bar for recommended memory configurations: Copilot+ PCs are documented to require 16 GB of RAM as a baseline for those experiences, together with NPU and storage requirements. That makes low‑RAM systems (8 GB or less) increasingly sensitive to resident service footprints introduced by updates and feature rollouts. In other words, the platform’s evolution toward on‑device AI and richer background services magnifies the operational importance of background service resource stewardship.

Practical checklist (one‑page takeaways)

- Confirm your Windows build: Win + R → winver; KB5072033 corresponds to builds 26100.7462 / 26200.7462.

- Quick remedy (home users): Settings → Windows Update → Advanced options → Delivery Optimization → turn Allow downloads from other devices to Off, reboot, monitor Task Manager.

- If you need LAN‑only caching: choose Devices on my local network only and set bandwidth caps.

- Power‑user check: use Process Explorer and RAMMap to verify whether DoSvc’s private bytes are climbing. Collect ETW traces if the problem persists.

- For servers/VDI: pilot sc config AppXSVC start= demand and measure monitoring noise before wider rollout. Use Intune/Group Policy to apply consistent changes.

- When escalating to Microsoft: attach structured artifacts (Process Explorer dumps, RAMMap snapshots, ETW traces, Activity monitor screenshots) and a clear reproduction plan.
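The build check in the first checklist item can be automated across a fleet inventory. The sketch below assumes build strings in the `major.revision` form shown by winver (for example `26100.7462`) and treats any equal or higher revision on the same branch as including KB5072033, on the basis that cumulative updates are additive; the function names are hypothetical.

```python
# Builds that KB5072033 corresponds to, per the article: branch -> revision.
KB5072033_BUILDS = {26100: 7462, 26200: 7462}


def parse_build(build_text):
    """Split a 'major.revision' build string into two integers."""
    major, _, revision = build_text.strip().partition(".")
    return int(major), int(revision or 0)


def has_kb5072033_or_later(build_text):
    """True if the build is on a 24H2/25H2 branch at or past the KB's revision."""
    major, revision = parse_build(build_text)
    baseline = KB5072033_BUILDS.get(major)
    return baseline is not None and revision >= baseline
```

Machines that return `False` are either on an older revision or on a different Windows branch, so the AppXSVC start-type change described here would not apply to them through this KB.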

Conclusion

Delivery Optimization performs a useful role in Windows, but recent servicing changes and a wave of community diagnostics have exposed a problematic interaction: DoSvc’s memory footprint can grow steadily on some 24H2/25H2 installations, producing visible performance degradation on systems with constrained RAM. The safest short‑term action for affected users is to limit or disable peer downloads via Settings (a reversible change), while IT teams should pilot AppXSVC startup reversion and collect structured diagnostics for escalation. Microsoft’s documented KB change that moved AppXSVC to Automatic is the concrete, verifiable event that explains why this symptom surfaced now; however, the precise engineering root cause of DoSvc’s growth still requires Microsoft confirmation and a targeted patch. Until then, balanced mitigations that preserve bandwidth where necessary while protecting device responsiveness are the practical path forward.