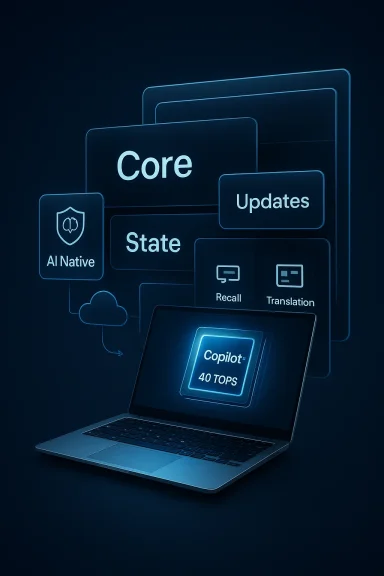

Microsoft’s purported plan to make dedicated AI silicon a hard requirement for its next major Windows release has jolted the PC industry and provoked a fierce debate about upgrades, privacy, and the future shape of the Windows platform. Over the past 72 hours a set of consistent—but not officially confirmed—leaks and reporting threads have described a project internally tagged “Hudson Valley Next” (commonly framed in the press as “Windows 12”) that would ship a modular CorePC operating system and gate its richest, system-level AI features behind a Neural Processing Unit (NPU) threshold of roughly 40 TOPS (trillions of operations per second). If true, that would mark Microsoft’s most aggressive hardware-driven feature policy to date, and it would reshape upgrade cycles for consumers and enterprises alike.

Source: Technobezz Microsoft Reportedly Plans Windows 12 AI Chip Requirement for 2026

Background / Overview

Since the launch of Copilot and Microsoft’s public pivot to “AI-first” product messaging, the company and its partners have been steadily building a hardware-and-software ecosystem that privileges on-device AI acceleration. Microsoft’s Copilot+ program already brands a class of machines offering enhanced local AI experiences, and OEMs and silicon vendors have been racing to produce processors with integrated NPUs and advertised TOPS figures.

The newer wave of reporting ties those separate developments into a single strategic move: a new Windows release that is not merely AI-enhanced but AI-native. The leaked picture includes three headline claims:

- A modular operating system architecture (referred to in leaks as CorePC) that separates core system state and allows more targeted, smaller updates and device-class tailoring.

- Deeper system-level integration of Copilot so the assistant becomes the operating system’s intelligence layer rather than a sideloaded app.

- A hardware gate: devices must include an NPU capable of roughly 40 TOPS to unlock the full set of local, low-latency AI features; systems that do not meet the threshold would see reduced functionality, reliance on cloud processing, or possibly exclusion from certain upgrades.

What the leaks actually claim

CorePC and modular Windows

The CorePC concept described in the leaks is a departure from the monolithic Windows images of old. CorePC is presented as:

- A modular layout that isolates OS binaries, user state, and applications into clearer boundaries or partitions.

- Faster, less intrusive updates by allowing Microsoft to swap or refresh modules independently.

- Device-tailored “editions” built from a common core: pared-down builds for cheap education tablets, full builds for workstations and gaming rigs.
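The "swap or refresh modules independently" idea can be illustrated with a toy sketch. It assumes, hypothetically, that each CorePC module carries its own version number; the module names and the version scheme below are invented for illustration and do not come from any Microsoft documentation. Under that assumption, an updater only has to refresh modules whose available version is newer than the installed one, and it can ignore modules a given device edition never installed.

```python
from typing import Dict, List, Tuple

# Hypothetical version scheme: (major, minor, patch).
Version = Tuple[int, int, int]


def modules_to_update(installed: Dict[str, Version],
                      available: Dict[str, Version]) -> List[str]:
    """Return the sorted names of installed modules that have a newer version available.

    Modules that are available but not installed are skipped, mirroring the idea
    that a device-tailored edition only carries the modules it needs.
    """
    stale = []
    for name, installed_version in installed.items():
        new_version = available.get(name)
        # Tuples compare element by element, so (0, 9, 1) < (1, 0, 0).
        if new_version is not None and new_version > installed_version:
            stale.append(name)
    return sorted(stale)


# Hypothetical manifests for a pared-down edition (no "gaming" module installed).
installed = {"os-core": (1, 0, 0), "shell": (1, 2, 0), "ai-runtime": (0, 9, 1)}
available = {"os-core": (1, 0, 0), "shell": (1, 3, 0),
             "ai-runtime": (1, 0, 0), "gaming": (2, 0, 0)}

print(modules_to_update(installed, available))  # ['ai-runtime', 'shell']
```

Only the two changed modules would be refreshed here; the unchanged core and the uninstalled gaming module are left alone, which is the "smaller, more targeted updates" benefit the leaks attribute to CorePC.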

System-level Copilot and AI experiences

Under the leaked model, Copilot becomes the system’s contextual intelligence layer. Expect descriptions like:

- System-wide, context-aware recommendations and real-time summaries.

- Instant, Recall-style features that index and summarize local documents, emails, and screen content.

- Low-latency, on-device translation, transcriptions, and even local image generation for UI elements and content creation.

The 40 TOPS NPU gate

The single most headline-grabbing claim is the NPU threshold: ~40 TOPS. According to the leaks, that number is being used internally to define which devices will be eligible for the full Copilot+ AI experience. Devices with NPUs below that threshold would either:

- Receive a limited “basic” or “Core Home” experience without advanced local AI, or

- Be able to access some capabilities via cloud processing (Windows 365 or other paid services), but with higher latency and potential additional cost.
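The gating rule the leaks describe reduces to a simple classification. The sketch below assumes the reported ~40 TOPS threshold and treats "cloud offload available" as a per-device flag; the tier names and the flag are hypothetical labels for the three outcomes described above, not Microsoft terminology, and whether cloud offload would be paid is itself unconfirmed.

```python
from enum import Enum
from typing import Optional

# Threshold reported in the leaks; an assumption, not a published requirement for a new Windows.
FULL_AI_TOPS_THRESHOLD = 40.0


class AiTier(Enum):
    FULL_LOCAL = "full local AI"       # NPU meets the reported threshold
    CLOUD_FALLBACK = "cloud fallback"  # below threshold, features offloaded to the cloud
    BASIC = "basic"                    # below threshold, no cloud offload available


def classify_device(npu_tops: Optional[float], cloud_offload: bool) -> AiTier:
    """Map a device's NPU rating (None if it has no NPU) to a hypothetical feature tier."""
    if npu_tops is not None and npu_tops >= FULL_AI_TOPS_THRESHOLD:
        return AiTier.FULL_LOCAL
    # Below the bar (or no NPU at all): one of the two fallbacks the leaks describe.
    return AiTier.CLOUD_FALLBACK if cloud_offload else AiTier.BASIC


for tops, cloud in [(45.0, False), (11.0, True), (None, False)]:
    print(tops, cloud, "->", classify_device(tops, cloud).value)
```

With these made-up inputs, the 45 TOPS device gets the full local experience, the 11 TOPS device falls back to the cloud, and the device without an NPU lands in the basic tier.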

What can be verified today (and what can’t)

- Verified technical reality: Copilot+ PC is an existing Microsoft hardware designation, and its published requirements already call for an NPU rated at 40+ TOPS. Microsoft and its partners are actively marketing NPUs and TOPS figures, and multiple recent consumer and vendor product announcements show NPUs with TOPS claims at or near that mark.

- Vendor roadmaps: leading silicon vendors — Qualcomm, Intel, and AMD — have announced or shipped processors with integrated NPUs. Several current-generation and upcoming chips advertise NPU performance at or above 40 TOPS, especially in the high-end mobile/workstation space.

- Unverified or contradicted: there is no official Microsoft announcement naming “Windows 12”, confirming a 2026 ship date, or declaring a mandatory NPU requirement for the core OS. Some Microsoft-focused reporters have pushed back on the immediacy of a Windows 12 release, characterizing parts of this narrative as conflations of older initiatives and marketing previews.

Hardware reality: who already meets 40 TOPS?

The industry is not starting from zero. Over the last two years, silicon vendors have responded to the AI PC narrative by integrating dedicated NPUs into mainstream client processors. Practical observations:

- Qualcomm’s Snapdragon X Elite and X Plus SoCs were among the first laptop-class chips broadly marketed with NPUs at or above the 40 TOPS mark.

- Intel’s Core Ultra processors (including the Lunar Lake generation, with Nova Lake on the roadmap) and later desktop refreshes have been explicitly engineered around integrated neural engines, with NPU TOPS figures that approach the 40+ range in full-power configurations.

- AMD’s recent Ryzen AI processors (including newer PRO enterprise variants) advertise NPUs in the 40–60 TOPS range for certain SKUs, widening the field of compatible devices.
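Advertised TOPS figures are generally theoretical peaks derived from hardware parameters rather than measured results. A common back-of-the-envelope convention counts each multiply-accumulate (MAC) as two operations, so peak TOPS is roughly 2 × MAC units × clock frequency ÷ 10^12. The sketch below applies that convention to an invented NPU configuration; the MAC count and clock are hypothetical and do not describe any vendor's chip.

```python
def peak_tops(mac_units: int, clock_ghz: float, ops_per_mac: int = 2) -> float:
    """Theoretical peak throughput in TOPS (10**12 operations per second)."""
    ops_per_second = mac_units * ops_per_mac * clock_ghz * 1e9
    return ops_per_second / 1e12


def macs_needed(target_tops: float, clock_ghz: float, ops_per_mac: int = 2) -> float:
    """MAC units required to reach target_tops at the given clock (same convention)."""
    return target_tops * 1e12 / (ops_per_mac * clock_ghz * 1e9)


# Hypothetical NPU: 12,288 MAC units clocked at 1.8 GHz.
print(round(peak_tops(12_288, 1.8), 2))  # 44.24
# How many MAC units a 1.8 GHz design would need to clear a 40 TOPS bar.
print(round(macs_needed(40.0, 1.8)))     # 11111
```

Because the figure is a product of unit count and clock, a vendor can reach the same headline number with a wider, slower engine or a narrower, faster one, and neither says how much of that peak survives under real workloads.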

Market consequences and OEM behavior

OEMs are expected to respond in predictable ways:

- “Windows 12 Ready” labeling: vendors will likely pre-certify machines and co-market them with the new badge well ahead of any OS launch to capture upgrade demand.

- SKU segmentation: expect device lines explicitly marketed as AI PCs, with higher price points for the NPU-equipped SKUs and value models without NPUs.

- Firmware and driver ecosystems: OEM firmware and NPU driver stacks will become critical to delivering the promised on-device experiences. Inconsistent OEM support or shipping devices with underperforming drivers will create fragmentation and consumer frustration.

Strategic motives: Why Microsoft would push this

There are several plausible commercial and engineering motives behind a hardware gate:

- Performance and UX: local NPUs dramatically reduce latency and dependency on cloud connectivity for inference-heavy features. For developers and product managers, requiring local acceleration simplifies performance guarantees.

- Privacy positioning: Microsoft can legitimately market local model inference as a privacy advantage (data stays on the device), which helps in regulated or privacy-sensitive segments.

- Hardware upgrade cycle: forcing new hardware boosts OEM sales and creates an upsell path for Microsoft’s cloud and subscription services (Copilot tiers, Windows 365, etc.).

- Monetization: a two-tier Windows experience (traditional one-time license for base functionality plus subscription or cloud fallbacks for premium AI features) aligns with a platform business model that mixes licenses and recurring revenue.

Benefits for users and enterprises

If implemented carefully and inclusively, the proposed approach could deliver real advantages:

- Immediate responsiveness: local NPUs enable near-instant summarization, search, and context-aware assistance that cloud-first systems cannot match for latency-sensitive tasks.

- Improved privacy control: sensitive inference can be kept on-device, reducing the need to ship private data to remote servers.

- New productivity experiences: system-level semantic search, Recall, and real-time contextual automation could materially speed mundane work across document-heavy roles.

- Reduced cloud load: offloading inference to on-device hardware can reduce cloud compute costs for both Microsoft and customers.

Major risks and downsides

However, the downsides are concrete and significant:

- Forced upgrades and e-waste: gating AI features by hardware specs creates upgrade pressure that will accelerate device churn and raise environmental concerns.

- Cost and inequality: households, education systems, and small businesses on tight budgets may be locked out of premium features or face subscription costs to access cloud fallbacks.

- Fragmentation: a bifurcated Windows ecosystem (AI-enabled vs. legacy) complicates software development and support, and muddies the Windows brand image.

- Enterprise migration risk: centralized IT teams will have to contend with mixed fleets, extended compatibility testing, and potentially large capital expenditures if Microsoft ties security or management primitives to the new architecture.

- Performance inconsistency: advertised TOPS figures mean little if NPUs are thermally throttled or drivers are immature, leading to poor experiences even on “qualified” machines.

- Lack of transparency: if Microsoft defines gates through non-public benchmarks or OEM-implemented results, users and admins will lack clarity about eligibility and future-proofing.
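The gap between advertised and delivered throughput is why independent, sustained benchmarks matter. One simple way to express it: run a fixed workload repeatedly, compute achieved TOPS per run from the operation count and elapsed time, and compare the settled value against the rated figure. The sketch below uses made-up timings that mimic thermal throttling on a hypothetical NPU rated at 45 TOPS; the workload size, the timings, and the "average the last few runs" rule are illustrative assumptions, not a standard benchmark methodology.

```python
from statistics import mean
from typing import List


def achieved_tops(total_ops: float, seconds: float) -> float:
    """Throughput actually delivered by one run, in TOPS."""
    return total_ops / seconds / 1e12


def sustained_ratio(total_ops: float, run_seconds: List[float],
                    rated_tops: float, settle_runs: int = 3) -> float:
    """Mean TOPS over the last `settle_runs` runs, as a fraction of the rated figure.

    Early runs on a cool chip can approach peak; later runs show what the
    device sustains once thermals settle.
    """
    if not run_seconds:
        raise ValueError("need at least one timed run")
    tail = run_seconds[-settle_runs:]
    return mean([achieved_tops(total_ops, s) for s in tail]) / rated_tops


# Illustrative only: a 2e14-operation workload run six times.
ops = 2e14
timings = [5.0, 5.1, 6.0, 7.5, 8.0, 8.0]  # seconds; later runs slow down as the device throttles
ratio = sustained_ratio(ops, timings, rated_tops=45.0)
print(f"first run: {achieved_tops(ops, timings[0]):.1f} TOPS")  # first run: 40.0 TOPS
print(f"sustained at {ratio:.0%} of rated throughput")           # sustained at 57% of rated throughput
```

In this invented scenario the device looks close to its rating on a short test but settles near half of it, which is exactly the kind of result a gate based only on spec-sheet TOPS would miss.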

The subscription question: licensing and monetization

Leaks suggest a plausible hybrid approach to licensing:

- A Core Home SKU remains a conventional one-time purchase, preserving the basic Windows experience for mass-market users.

- Advanced, AI-powered capabilities could be multilayered: some delivered locally on certified hardware at no extra charge, others tied to subscription tiers (for example, Copilot+ tiers or Windows 365-style cloud offload) where Microsoft bills for cloud compute or advanced capabilities.

What IT managers and consumers should do now

If you run a fleet or are planning a purchase in the next 12–24 months, a few practical steps help reduce downstream risk:

- Audit current hardware. Record processor family, available NPUs (if any), memory, and storage. Determine which endpoints already meet Copilot+ specs today.

- Define priority workloads. Not every user needs local AI acceleration. Target high-value personas (knowledge workers, call centers, content creators) for earlier refresh.

- Consider extended support options. Microsoft’s Extended Security Updates (ESU) program and vendor extended-maintenance offerings can keep older OSes supported for longer; weigh their cost against a hardware refresh.

- Test before committing. If possible, trial early Copilot+ devices and measure real-world NPU performance under your typical workloads.

- Engage procurement early. If a hardware gate is imminent, negotiate longer life-cycle guarantees and driver/firmware commitments from OEMs.

- Watch for Microsoft clarifications. The company historically announces major changes with lead time; plan to revisit choices when Microsoft publishes official system requirements.
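The audit and prioritization steps above can be combined into a small inventory script. This is a sketch assuming you have already exported per-device data from your management tooling; the field names, the persona labels, and the sample hosts are hypothetical. The 40 TOPS, 16 GB RAM, and 256 GB storage thresholds mirror Microsoft’s current Copilot+ PC requirements, but any future Windows bar may differ.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Thresholds mirror today's Copilot+ PC requirements; treat them as placeholders.
MIN_NPU_TOPS = 40.0
MIN_RAM_GB = 16
MIN_STORAGE_GB = 256
# Hypothetical labels for the high-value personas worth refreshing first.
PRIORITY_PERSONAS = {"knowledge_worker", "call_center", "content_creator"}


@dataclass
class Endpoint:
    hostname: str
    processor: str             # recorded for reporting; not used in the check
    npu_tops: Optional[float]  # None when the device has no NPU
    ram_gb: int
    storage_gb: int
    persona: str


def meets_ai_bar(e: Endpoint) -> bool:
    """True if the endpoint clears every hardware threshold."""
    return (e.npu_tops is not None and e.npu_tops >= MIN_NPU_TOPS
            and e.ram_gb >= MIN_RAM_GB and e.storage_gb >= MIN_STORAGE_GB)


def plan_refresh(fleet: List[Endpoint]) -> Dict[str, List[str]]:
    """Bucket endpoints into ready, refresh-first (priority persona), and refresh-later."""
    plan: Dict[str, List[str]] = {"ready": [], "refresh_first": [], "refresh_later": []}
    for e in fleet:
        if meets_ai_bar(e):
            plan["ready"].append(e.hostname)
        elif e.persona in PRIORITY_PERSONAS:
            plan["refresh_first"].append(e.hostname)
        else:
            plan["refresh_later"].append(e.hostname)
    return plan


fleet = [
    Endpoint("lt-001", "example-soc-a", 45.0, 16, 512, "knowledge_worker"),
    Endpoint("lt-002", "example-cpu-b", 11.0, 16, 512, "content_creator"),
    Endpoint("dt-003", "example-cpu-c", None, 8, 256, "kiosk"),
]
print(plan_refresh(fleet))
# {'ready': ['lt-001'], 'refresh_first': ['lt-002'], 'refresh_later': ['dt-003']}
```

Keeping the thresholds as named constants makes it easy to re-run the same audit once Microsoft publishes official system requirements.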

Timeline: when might this happen?

Public reporting points to 2026 as the earliest possible ship window for a next-generation Windows, but industry insiders and fact-checks have pushed back on that immediacy. Microsoft’s own product cycles and the lifecycle event for Windows 10 (end of support on October 14, 2025) influence timing and create windows of increased upgrade activity, but they do not by themselves force a new Windows release onto a calendar.

What to watch for in the coming months:

- Official Microsoft communications about Copilot+, Windows system requirements, and any major rebranding of the OS.

- OEM certification programs and “Windows X Ready” badges that pre-announce device compatibility.

- Windows Insider branches and preview builds that might surface CorePC components or new Copilot behaviors.

- Vendor product announcements at major trade events and chipmakers’ TOPS claims (and independent benchmarks that validate sustained throughput).

Judging the story: cautious skepticism is warranted

The leaked narrative is internally consistent: Microsoft wants to build an AI-native platform; vendors are shipping NPUs; a performance bar would simplify UX guarantees. But a few red flags counsel caution:

- A modular CorePC shipped as a full platform pivot would be a massive engineering effort and would require a sustained, transparent roadmap, not just a single marketing push.

- Microsoft historically tests new architecture ideas over multiple years and iterates with partners; the CorePC concept shows up in older filings and insider discussions and may have evolved or been shelved.

- Several well-connected reporters have publicly questioned the immediacy or the scale of the change described in the most viral summaries; those pushbacks suggest the story as circulated mixes verifiable facts with aggregation and speculation.

Final assessment: an inflection point — if executed responsibly

The convergence of OS-level AI, vendor NPUs, and new modular OS concepts represents a genuine inflection point for the PC ecosystem. Done well, an AI-native Windows with capable on-device inference could produce meaningful improvements in speed, privacy, and productivity. Done poorly, it risks accelerating e-waste, creating new digital divides, and eroding goodwill among long-term Windows customers.

Key principles Microsoft and partners would need to follow to make this transition defensible:

- Transparency: publish clear, standardized eligibility criteria and public benchmark methodologies for any hardware gates.

- Graceful fallbacks: ensure accessible, affordable alternatives for users who cannot or will not upgrade hardware, including no-cost local features where possible and generous cloud-offload terms where necessary.

- Longevity commitments: OEMs and Microsoft should commit to longer driver and firmware support windows for AI-capable hardware to counteract churn.

- Environmental considerations: incentivize trade-in and recycling programs to reduce e-waste and provide pathways for refurbishers and lower-income buyers.

In short: the AI chip story is real as a trend; the precise policy decisions and timelines remain in flux. That uncertainty is both a risk and an opportunity — for users who can wait and test, and for vendors and administrators who plan carefully, this next chapter could deliver meaningful gains. But if Microsoft insists on hardware gates without clear transparency and reasonable fallbacks, the backlash may be as significant as the technical benefits it promises.