Zenity’s presence around Microsoft 365 Copilot, AI agents, and automation is a timely reminder that enterprise AI adoption has moved well beyond experimentation. The core issue is no longer whether organizations will deploy these tools, but whether they can govern them before sensitive data, permissions, and workflows become too dispersed to control. That tension is exactly what makes Zenity’s positioning relevant for both security buyers and investors watching the AI security category.

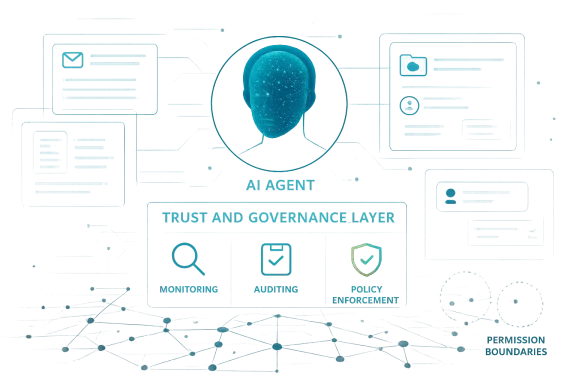

Background

The enterprise AI conversation has shifted rapidly over the past two years. What began as a productivity story around chat-style assistants has evolved into a broader operational model in which Copilot, Copilot Studio, and custom AI agents are embedded into everyday business processes. Microsoft has steadily expanded the security and governance layer around that stack, including the Copilot Control System, data loss prevention, Purview-based controls, and newer managed security enhancements for agents.

That evolution matters because Copilot is not just a consumer-facing feature. In Microsoft’s own framing, it is increasingly tied to enterprise data security, compliance, and administration, with controls spanning visibility, risk management, and policy enforcement. Microsoft’s documentation also makes clear that AI agent governance is a distinct challenge, especially where user consent, tool invocation, and external services are involved.

Zenity has been leaning into this exact market gap. The company has repeatedly described itself as a security layer for enterprise copilots, low-code development, and agentic AI, and it has positioned Microsoft integrations as central to that strategy. In 2024, Zenity announced an AI Trust Layer for Microsoft 365 Copilot, and in 2025 it expanded integration with Copilot Studio to secure AI agents at scale.

The broader industry backdrop is important too. Microsoft has publicly acknowledged that prompt injection, data leakage, and agent misuse are real security concerns, while its own security organization has continued to add protections for AI systems and copilots. That means vendors like Zenity are not inventing the problem; they are building around a problem the platform owner itself is now formalizing.

Against that backdrop, Zenity’s reported outreach at M365 Orlando and its booth presence is best understood not as a routine conference appearance, but as a signal about market timing. The company is effectively telling enterprise buyers that AI adoption is already happening, and that the security layer is late if it arrives only after deployment. That is a compelling commercial message, especially in high-compliance and high-exposure environments.

Why This Message Lands Now

The most important reason Zenity’s message resonates is simple: enterprise AI is now operational, not hypothetical. Microsoft has continued to extend Copilot into the daily flow of work, while its documentation emphasizes the need for governance and data protection across Copilot and agent deployments. In practical terms, that means AI is increasingly acting on real documents, messages, connectors, and business applications rather than on sanitized test data.

That transition creates an unavoidable security mismatch. Adoption can happen in weeks through pilot programs, shadow use, or bottom-up business requests, while governance programs often move at the pace of policy review, identity hardening, and compliance sign-off. Zenity’s central claim is that the blast radius of unsecured AI is larger than many enterprises realize, because agents can touch data that older application models never reached so directly.

The enterprise timing problem

The timing problem is not unique to Microsoft, but Microsoft environments make it especially visible because Copilot sits atop an already dense ecosystem of identities, files, chats, mailboxes, connectors, and Power Platform assets. Microsoft’s own guidance on Copilot deployment emphasizes remediation of oversharing, guardrails, and regulatory readiness, which suggests the vendor sees governance as a deployment prerequisite rather than an afterthought.

That creates a natural opening for specialized security vendors. If enterprise teams are already using Copilot, then they need answers for what happens when a prompt reaches the wrong file, a connector points at the wrong system, or an agent is allowed to take action beyond its intended scope. Zenity’s pitch is that the control plane must sit closer to the AI runtime itself, not only around the perimeter.

Key implications include:

- AI adoption is outpacing security policy in many organizations.

- Governance must address runtime behavior, not just configuration.

- Copilot and agent deployments can expose hidden permission sprawl.

- Buyers increasingly want visibility before they want scale.

- Security teams are being pulled into AI projects earlier than before.
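The difference between a perimeter control and a control that sits at the AI runtime can be made concrete with a small sketch. The Python below is purely illustrative: `AgentPolicy`, `check_tool_call`, the tool names, and the `sharepoint://` style resource strings are hypothetical and do not represent Zenity’s product or any Microsoft API. It only shows the general idea of evaluating each tool invocation against an agent’s intended scope before the action runs.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical allow-list describing what one agent is meant to do."""
    agent_id: str
    allowed_tools: frozenset
    allowed_resource_prefixes: tuple


@dataclass
class Decision:
    allowed: bool
    reasons: list = field(default_factory=list)


def check_tool_call(policy: AgentPolicy, tool: str, resource: str) -> Decision:
    """Evaluate one tool invocation at call time, before it executes."""
    reasons = []
    if tool not in policy.allowed_tools:
        reasons.append(f"tool '{tool}' is outside the agent's intended scope")
    # str.startswith accepts a tuple and matches if any prefix matches.
    if not resource.startswith(policy.allowed_resource_prefixes):
        reasons.append(f"resource '{resource}' is outside the allowed data boundary")
    return Decision(allowed=not reasons, reasons=reasons)


# Illustrative policy and calls; every identifier here is made up.
policy = AgentPolicy(
    agent_id="hr-helper",
    allowed_tools=frozenset({"read_file", "send_summary"}),
    allowed_resource_prefixes=("sharepoint://hr/policies/",),
)

ok = check_tool_call(policy, "read_file", "sharepoint://hr/policies/leave.docx")
blocked = check_tool_call(policy, "delete_file", "sharepoint://finance/payroll.xlsx")

print(ok.allowed)       # True
print(blocked.allowed)  # False
for reason in blocked.reasons:
    print("-", reason)
```

A real deployment would need far more context than a static allow-list (identity, consent, data labels, conversation history), but the sketch shows why a runtime check can catch an out-of-scope action that a correctly configured perimeter would never see.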

Zenity’s Product Positioning

Zenity’s messaging has been remarkably consistent: it wants to secure enterprise copilots and agents from build time through runtime. The company says its platform helps organizations monitor how business users interact with Microsoft 365 Copilot, detect suspicious activity, and apply remediation when risk appears. That framing is attractive because it combines visibility, prevention, and response in a single story.

The company also benefits from a vocabulary shift in the market. Security buyers are no longer talking only about “bots” or “automation scripts.” They are talking about agentic AI, prompt injection, tool invocation, and data access pathways that cross traditional application boundaries. Zenity has adapted its language to match this shift, which helps it stay in the conversation as the category matures.

From low-code to agentic AI

This matters because Zenity’s credibility did not begin with Copilot alone. Its earlier work around low-code and business-led development gave it a foothold in environments where non-developers build powerful workflows with enterprise reach. That background is important because the same governance issues that affected low-code platforms are now appearing in AI agents, only with more autonomy and more opaque behavior.

Zenity has also pushed hard on the idea of agentless security. That is a useful message in Microsoft-centric enterprises because it suggests lower friction for deployment and less dependency on invasive integration work. In enterprise security, lower friction often matters as much as technical elegance, because the best tool is frequently the one that can actually be rolled out.

At a practical level, Zenity’s positioning implies several things:

- It wants to be seen as a control layer, not a point product.

- It is betting that AI security budgets will expand.

- It is targeting companies already investing in Microsoft 365 and Copilot.

- It is trying to own the conversation around secure adoption rather than restriction.

- It is aligning itself with the operational realities of enterprise rollout.

Microsoft’s Own Direction Supports the Thesis

Zenity’s pitch would be much weaker if Microsoft were not simultaneously elevating security in its AI stack. But Microsoft has been doing exactly that. The company’s Copilot Control System is designed to provide enterprise-grade controls and analytics across Microsoft 365 Copilot, Copilot Studio, and AI agents, and its Copilot Studio updates have added governance, DLP, auditing, and secure-by-default features.

That is good news for the overall market, but it also validates the existence of a gap. When the platform vendor adds security features, it does not necessarily eliminate demand for specialized security providers. In many enterprise categories, vendor-native controls create a baseline, while third-party vendors compete on depth, visibility, workflow integration, and speed of response.

Platform controls do not end the market

Microsoft’s guidance shows why this remains an active category. Its documentation on governance and administration models explains that Copilot agents may rely on user consent at the point of invocation, while administrators may have limited ability to isolate content at fine granularity. That is a classic example of a platform-level control that is necessary but not sufficient.

Microsoft has also published guidance on securing and governing Microsoft 365 Copilot deployments through Purview and SharePoint management, again reinforcing that governance is a lifecycle issue. This is where a vendor like Zenity can argue that it complements Microsoft rather than competes head-on with it. The pitch becomes: Microsoft provides the platform, and Zenity provides the specialized lens for AI behavior.

That dynamic creates a layered market structure:

- Microsoft establishes baseline governance.

- Security teams use built-in tools for compliance and policy.

- Specialized vendors cover runtime risk and AI-specific threat models.

- Customers blend both approaches depending on maturity.

- Over time, buyers may standardize on a multi-layer defense model.

Why Investors Care

From an investor’s perspective, the significance of Zenity’s message is that it sits in a category with strong secular tailwinds. AI is expanding attack surfaces, and enterprises are learning that security spend tends to follow new platform adoption. If Copilot and AI agents become embedded in core workflows, then security around those deployments becomes less optional and more like an operating requirement.

There is also a valuation angle. Security vendors that can tie themselves to durable enterprise trends often earn more attention than those chasing one-off product cycles. By aligning with Microsoft 365, Copilot Studio, and agentic AI, Zenity is positioning itself in a category where growth can be measured in seat expansion, module adoption, and enterprise breadth. That can be much more appealing than a narrow feature set.

The revenue logic

The revenue logic is straightforward. If a customer begins with a Copilot pilot, then the discovery of governance issues can create demand for assessment, monitoring, and remediation. If the AI deployment expands into agents and connectors, then the security surface expands too. That gives Zenity a path to land-and-expand selling, which is usually where high-quality enterprise software margins start to emerge.

That said, investors should avoid assuming that every conference conversation converts cleanly into revenue. Enterprise security cycles are often long, and many organizations will first rely on what Microsoft already includes in the stack. So the bullish thesis is real, but not automatic; the company still has to prove it can turn interest into repeatable pipeline conversion.

What makes the opportunity notable is the convergence of factors:

- Copilot adoption is accelerating.

- Agent security is a budgetable problem.

- Microsoft’s own roadmap legitimizes the category.

- Compliance teams need governance narratives.

- Buyers increasingly prefer tools that fit the Microsoft ecosystem.

The Competitive Landscape Is Getting Crowded

The AI security market is attracting more attention because the pain point is obvious and the buyer is identifiable. That is good for Zenity, but it also means more competition. Microsoft’s own tools, partner ecosystem, and security products all overlap with parts of Zenity’s story, especially where governance and compliance are involved.

Competitors are likely to frame the market in different ways. Some will emphasize data security and compliance, some will emphasize cloud posture management, and others will focus on runtime protections against prompt injection or malicious tool use. Zenity’s challenge is to show that it owns the specific layer where AI behavior turns into enterprise action.

Differentiation will matter

The most defensible position may be specialization. If a security buyer wants a broad Microsoft compliance stack, Microsoft is already a strong default. If the buyer wants a purpose-built lens for agent behavior, external connections, and misuse inside AI workflows, Zenity can argue that it is solving a different problem. That distinction is important because the most successful security vendors often survive by being the deepest answer to one hard problem.

Zenity also has a messaging advantage in a market where many vendors still speak in generic AI terms. The company’s repeated references to prompt injection, exfiltration, tool abuse, and agent control make its value proposition concrete. Concrete beats vague, especially in security procurement where buyers want to know exactly what can go wrong and how the product stops it.

In competitive terms, the market seems to be splitting into clear categories:

- Platform-native AI governance.

- Security platform extensions.

- Specialist AI runtime protection.

- Compliance and data-loss prevention layers.

- Consulting-led assessment and hardening services.

Enterprise Impact: Security Teams Versus Business Users

For enterprise buyers, the split between security teams and business users is one of the biggest issues in AI adoption. Business users usually want speed, flexibility, and immediate value from Copilot and automation tools. Security teams want visibility, policy enforcement, and assurance that new capabilities will not create hidden privilege or data exposure problems.

That tension is not temporary. It is likely to become a defining feature of the AI workplace. The more useful Copilot becomes, the more pressure there will be to let it act on behalf of users, which increases the need for controls on identity, approvals, connectors, and data boundaries. Zenity is clearly aiming at that friction point.

Where the operational pain shows up

The operational pain tends to appear in predictable places. Teams discover that Copilot can access too much, agents can inherit too many permissions, or a workflow can reach outside the intended tenant boundary. The problem is rarely a single catastrophic flaw; it is usually a collection of small governance gaps that add up to a major exposure.

This is why the market conversation has shifted from “Can we use AI?” to “Can we trust the way it is wired into our systems?” That shift favors vendors that can map the chain from prompt to action, because modern AI risk is increasingly about sequence, context, and delegation rather than only code vulnerability.

Enterprise stakeholders are likely to divide along these lines:

- Security teams will ask what the agent can touch.

- IT administrators will ask who approved it.

- Compliance officers will ask how it is audited.

- Business users will ask why a guardrail slowed them down.

- Executives will ask whether the risk is worth the productivity gain.
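The first three questions (what an agent can touch, who approved it, and whether its reach stays inside the tenant) lend themselves to a simple inventory check. The sketch below is a hedged illustration, not a description of any vendor’s tooling: `AgentRecord`, `audit_agent`, the permission strings, and the tenant names are all hypothetical. It shows how several small governance gaps can be collected into a single list of findings per agent.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry for one deployed agent."""
    name: str
    granted_permissions: frozenset
    needed_permissions: frozenset
    connector_tenants: frozenset
    approved_by: Optional[str] = None


def audit_agent(agent: AgentRecord, home_tenant: str) -> List[str]:
    """Collect small governance gaps for one agent into a list of findings."""
    findings = []

    # Permissions granted beyond what the agent's task requires.
    excess = agent.granted_permissions - agent.needed_permissions
    if excess:
        findings.append("excess permissions: " + ", ".join(sorted(excess)))

    # Connectors that point outside the intended tenant boundary.
    external = agent.connector_tenants - {home_tenant}
    if external:
        findings.append("connectors outside tenant: " + ", ".join(sorted(external)))

    # No recorded owner for the approval decision.
    if agent.approved_by is None:
        findings.append("no recorded approver")

    return findings


# Illustrative data only; names and permission strings are invented.
agent = AgentRecord(
    name="invoice-bot",
    granted_permissions=frozenset({"mail.read", "files.write_all"}),
    needed_permissions=frozenset({"mail.read"}),
    connector_tenants=frozenset({"contoso", "partner-co"}),
)

findings = audit_agent(agent, home_tenant="contoso")
for finding in findings:
    print(finding)
```

None of the three findings is catastrophic alone, which matches the point made above: the exposure comes from the accumulation of gaps across many agents.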

Consumer vs. Enterprise AI Risk

Consumer AI risk is real, but enterprise AI risk is more consequential because the data is richer, the permissions are broader, and the output can trigger real business actions. A personal chatbot can produce a bad answer. An enterprise agent can expose sensitive mail, move data, or execute a workflow with downstream impact. That distinction is why AI security is becoming a board-level issue instead of a niche technical discussion.

In consumer settings, users often self-limit or abandon tools when they behave badly. In enterprise settings, the same failure may be absorbed into workflows, approvals, and integrations before it is noticed. That makes governance and runtime monitoring much more important, especially where AI touches finance, legal, HR, or regulated operational data.

Why Microsoft environments are especially sensitive

Microsoft environments are especially sensitive because Copilot sits in the center of collaboration and productivity. When the same system can summarize email, inspect files, interact with chats, and connect to business apps, the security story becomes one of scope control more than just account protection. Microsoft’s own governance documentation underscores this by focusing on data policies, consent, auditing, and the limits of fine-grained isolation.

That means enterprises will likely need more than a single policy toggle. They will need a layered framework that includes data classification, connector governance, identity hardening, monitoring, and incident response for AI-specific events. Zenity’s value proposition is that it helps translate that framework into operational control.

For consumers, the issue is convenience. For enterprises, the issue is exposure. That is why the same AI feature can be a casual productivity boost on one side and a governance headache on the other.

The Case for Agentic Security as a Category

Zenity’s strongest strategic bet may be that agentic security becomes a durable category rather than a short-lived theme. That would mirror earlier market formations in cloud security, SaaS security, and identity protection, where a new way of working created a new attack surface and a new class of controls. If AI agents become the standard interface for enterprise automation, then security for agents could become a permanent budget line.

The evidence so far points in that direction. Microsoft is building more governance into Copilot Studio and Security Copilot, while its documentation and blog posts continue to emphasize protections around prompt abuse, agent behavior, and enterprise data security. That suggests the platform is maturing in a way that supports a broader ecosystem of controls.

What category formation usually looks like

Category formation usually follows a familiar pattern. First, the platform owner legitimizes the need. Next, enterprises encounter practical pain. Then specialist vendors package the pain into measurable controls. Finally, buyers start expecting those controls as part of a standard architecture. Zenity appears to be trying to accelerate that final stage.

A successful category story would likely include:

- Clear naming around agent risk.

- Repeatable enterprise use cases.

- Measurable incidents or near-misses.

- Native platform overlap that still leaves gaps.

- A vendor ecosystem with specialized functions.

Strengths and Opportunities

Zenity’s strongest opportunity is that it is aligned with a real problem at the exact moment enterprises are scaling into it. It is also benefiting from Microsoft’s own validation of the governance challenge, which reduces skepticism that AI security is a niche concern. If Zenity can keep the story specific, operational, and tied to Microsoft workflows, it has a meaningful chance to remain relevant as the market expands.

- Microsoft ecosystem fit gives Zenity a direct line into a large enterprise base.

- Agentic AI security is emerging as a distinct budget category.

- Runtime visibility is a strong differentiator versus generic governance tooling.

- Copilot adoption increases the total addressable market for AI security.

- Low-friction deployment can improve buyer willingness to trial the product.

- Compliance pressure creates urgency in regulated industries.

- Land-and-expand potential is strong if initial assessments lead to broader controls.

Risks and Concerns

The main risk is that the market may normalize Microsoft’s built-in controls faster than third-party vendors can differentiate. Another concern is that enterprise buyers may admire the narrative but still delay purchases until AI risk becomes more concrete in their own environment. Zenity will need to prove that its controls are not just conceptually valuable but operationally indispensable.

- Platform overlap could compress differentiation over time.

- Long enterprise sales cycles may slow revenue conversion.

- Budget competition from broader security platforms is intense.

- Category immaturity can make procurement harder.

- Proof of ROI may be difficult before incidents occur.

- Vendor consolidation risk could pressure smaller specialists.

- Overreliance on Microsoft-centric demand may limit diversification.

What to Watch Next

The next phase to watch is whether Zenity can convert conference visibility and Microsoft-aligned messaging into real customer momentum. The best indicators will not be press releases alone, but evidence of larger deployments, deeper integrations, and repeatable enterprise wins. If the company can show that organizations are moving from pilots to governed production use, the market will treat that as a stronger signal than any single event appearance.

A second area to watch is how Microsoft continues to evolve its own controls. Every new governance feature in Copilot Studio or Microsoft 365 Copilot could be read in two ways: as validation of the category, or as a narrowing of the independent vendor opportunity. Zenity will need to stay ahead by focusing on the hardest operational problems, especially where agents take action across systems.

Key signals to monitor

- New enterprise customer wins tied to Copilot or Copilot Studio.

- Product updates focused on runtime protection and agent behavior.

- Partnerships that deepen distribution inside Microsoft-centric accounts.

- Evidence of broader demand outside the initial security enthusiast base.

- Signs that Microsoft-native controls are leaving gaps for specialists.

- Adoption in regulated industries where governance urgency is highest.

In the near term, Zenity looks well positioned to benefit from a market that is finally acknowledging the security cost of AI acceleration. The long-term outcome will depend on whether it can turn that acknowledgement into a durable platform, not just a timely talking point.

Source: TipRanks Zenity Highlights Growing Enterprise Demand for Security Around AI and Copilot Deployments - TipRanks.com