IPT’s practical push to turn Microsoft Copilot into a team of specialised, role-based AI agents reframes AI from an experimental chatbot into a production-ready set of digital employees — and it arrives with clear promises, manageable engineering patterns, and governance headaches that every CIO should budget for today.

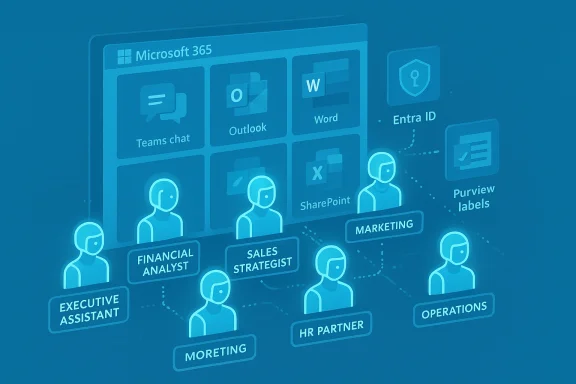

South African systems integrator IPT is marketing a structured approach to Microsoft Copilot that treats AI as agentic — a collection of role-focused agents that operate inside the Microsoft 365 tenant, obey tenant permissions, and are published into the collaboration surfaces where people already work (Teams, Outlook, Word, Excel and SharePoint). The company’s pitch: design each agent with a job description, scope its data access tightly, and measure outcomes so AI shifts from novelty to dependable productivity.

This is not an abstract architectural exercise. IPT’s framework uses Microsoft Copilot Studio to author agents, Graph/SharePoint connectors for data grounding, and Microsoft’s security stack (Entra ID, Purview, sensitivity labels) to enforce least privilege and audit trails. The approach is explicitly integrator-led: IPT configures and operationalises agents while the customer retains policy and compliance control.

However, the upside is conditional. The real determinant is governance: narrow scopes, least privilege, human-in-the-loop for consequential decisions, adversarial testing, and mature cost controls must be non-negotiable. Failure to enforce these controls risks turning a productivity tool into an operational liability.

Organisations that pilot carefully, instrument outcomes, and codify governance will likely capture the productivity gains; those that treat agentic AI as a plug-and-play productivity magic trick will face surprises in cost, compliance, and output quality.

Source: Insurance Edge Need Some Agentic AI and Copilot Insights?

Background

Background

South African systems integrator IPT is marketing a structured approach to Microsoft Copilot that treats AI as agentic — a collection of role-focused agents that operate inside the Microsoft 365 tenant, obey tenant permissions, and are published into the collaboration surfaces where people already work (Teams, Outlook, Word, Excel and SharePoint). The company’s pitch: design each agent with a job description, scope its data access tightly, and measure outcomes so AI shifts from novelty to dependable productivity.This is not an abstract architectural exercise. IPT’s framework uses Microsoft Copilot Studio to author agents, Graph/SharePoint connectors for data grounding, and Microsoft’s security stack (Entra ID, Purview, sensitivity labels) to enforce least privilege and audit trails. The approach is explicitly integrator-led: IPT configures and operationalises agents while the customer retains policy and compliance control.

What “agentic AI” means in practice

Defining agentic AI for the enterprise

- Agentic AI: systems that accept a goal or task, access authorised sources, execute multi-step workflows within policy constraints, and learn or improve through feedback loops. These systems go beyond single-turn conversation to act inside productivity apps or trigger downstream processes.

Typical role-based agents IPT describes

- Executive Assistant agent — drafts replies, prepares agendas, summarizes Teams meetings, and proposes follow-ups from calendar context.

- Financial Analyst agent — monitors cash flow from approved spreadsheets, flags anomalies, and prepares board-ready summary drafts with source provenance.

- Sales Strategist agent — reviews CRM notes and suggests next-best actions or outreach scripts.

- Marketing agent — evaluates campaign metrics and drafts copy for approval.

- HR partner agent — surfaces retention risks from HR systems and prepares talking points for managers.

- Operations agent — maps workflows, identifies bottlenecks and triggers tickets in Power Platform or ITSM systems.

How IPT builds Copilot agents (technical anatomy)

Copilot Studio as the authoring surface

Copilot Studio provides a low-code environment to compose agents: bind prompts to connectors, add slot-filling, attach deterministic actions (Power Automate, Dataverse calls), and publish to endpoints such as Teams or a tenant Copilot instance. This tooling makes it possible for integrators to prototype quickly while preserving enterprise governance.Data grounding and connectors

Agents use native Microsoft connectors (Microsoft Graph, SharePoint, Outlook, Teams) to fetch context while respecting the calling user’s permissions via Microsoft Entra ID. Approved external connectors or web sources can be added only when explicitly permitted by policy. This is retrieval‑augmented generation (RAG) applied inside the tenant rather than relying on public web retrieval alone.Security and governance primitives

- Identity and access: Entra ID enforces per-user privileges so an agent cannot surface content the caller is not authorised to view.

- Data protection: Purview sensitivity labels, Information Protection, and Double Key Encryption can be used to prevent agents from accessing or transmitting highly sensitive content.

- Auditing: Copilot analytics and tenant logging capture agent invocations, data sources used, and actions taken — enabling post‑hoc reviews and regulatory proofs.

Cost model and licensing

Enterprise deployments require two parallel considerations: per‑user Copilot licensing for access to Copilot features and Copilot Studio / runtime consumption (Copilot Credits or pay‑as‑you‑go) for agent execution. Practical rollouts must budget both user entitlements and runtime costs to avoid unexpected spend.Why CEOs and business leaders should care

- Scale expertise: Agents can deliver analyst-level summaries or triage at a fraction of manual cost. This enables smaller teams to produce board-ready outputs without hiring matched headcount.

- Reduce cognitive load: Routine inbox triage, meeting summaries, and first-draft reports are delegated to agents, allowing leaders to focus on decision-making.

- Speed and auditability: Agents operating on live tenant data can surface near-real-time insights while producing logs and provenance needed for compliance.

- Drive innovation: By automating repetitive tasks, teams are freed to work on higher-value strategy and product work.

A practical rollout: IPT’s phased approach

IPT recommends a conservative, six-step playbook that mirrors enterprise change-management best practices:- Identify high‑impact roles and processes with repeatable structure and measurable outcomes.

- Define the agent’s job description: permitted inputs, outputs, escalation rules and KPIs.

- Configure the agent in Copilot Studio with minimal connectors and test data in a sandbox.

- Train end users on how to prompt the agent and how to verify and correct outputs (human-in-the-loop).

- Monitor usage and logs; iterate prompts, connectors and guardrails.

- Scale deliberately after meeting adoption, quality and compliance gates.

Strengths: what makes IPT’s proposition credible

- Platform maturity: Microsoft’s Copilot Studio, Graph connectors, Purview and Entra ID provide the building blocks necessary to create tenant-bound, auditable agents — meaning the technology to implement IPT’s design already exists.

- Workflow-first design: By publishing agents into Teams, Outlook and Office, agents surface where employees already work — reducing context switching and adoption friction.

- Managed integration: IPT positions itself as the integrator — designing, configuring and supporting agents — which lowers the barrier for organisations without in-house AI engineering teams.

- Sector fit: Insurance, finance and HR — sectors with heavy administrative burden and regulatory scrutiny — stand to gain immediately from conservative agentic automation patterns.

Risks and limitations (what to watch closely)

1. Data protection and POPIA (South Africa) considerations

South African organisations must handle personal information under POPIA rules. Agents accessing customer or employee PII must be explicitly scoped, logged, and controlled; cross‑border connectors introduce complex transfer risks that require legal validation. IPT’s approach flags these obligations and recommends legal and privacy review during design.2. Model hallucination and business risk

Generative models can produce plausible but incorrect outputs. When agents draft legal correspondence, financial summaries, or regulatory reporting, these outputs require human validation. Best practice is to limit agents to draft-and-review workflows for high‑impact scenarios.3. Prompt injection and external content risks

Agents that consult web sources or third-party connectors can be vulnerable to prompt injection or malicious inputs. Constraining external sources and adversarial testing are practical mitigations.4. Over‑privileging agents

The temptation to “grant everything” is dangerous. Enforce least privilege on connector scopes and review agent access regularly. This reduces the attack surface and limits potential data exfiltration.5. Cost control and licensing surprises

Copilot user licenses, Copilot Studio runtime credits, and pay-as-you-go metering can create unexpected costs when agents scale. Establish metering, budget alerts, and chargeback models before wide rollout.6. Vendor lock‑in and model provenance

Grounding agents on a particular model or hosting configuration affects portability. Organisations should clarify where models are hosted, whether data leaves the tenant, and whether features like Double Key Encryption are available.Governance checklist: practical controls to demand

- Define agent job descriptions with explicit scope and KPIs.

- Apply least privilege to connectors and data access.

- Use Purview sensitivity labels and Information Protection for datasets agents can access.

- Enable comprehensive logging and retention for agent interactions and data sources.

- Implement human‑in‑the‑loop checkpoints for decisions with legal, financial or compliance impact.

- Set consumption budgets, metering alerts and an owner for every agent to avoid runaway costs.

- Run red-team / adversarial tests against agents to surface prompt-injection or chaining vulnerabilities.

Measurable KPIs and what success looks like

- Hours saved per role per week on routine tasks.

- Reduction in turnaround time for approvals and reports.

- Percentage of agent outputs accepted without edit (quality measure).

- Number of compliance exceptions or incidents attributable to agents.

- Cost per automated task vs manual cost (ROI).

Sector-specific notes — insurance and finance

Insurance and finance are natural early adopters because routine document handling, claims triage and reporting are high-volume, structured tasks. However, these sectors also face the strictest regulatory scrutiny: preserve policyholder data segmentation, require sign-offs on decisions that affect coverage or settlement, and document model explainability as part of audit packs. IPT’s positioning as a managed service with SOC-aware monitoring makes the firm a logical partner for insurers that lack internal AI ops capabilities.Implementation pitfalls to avoid

- Skipping legal and privacy reviews during pilot scoping (especially POPIA and cross‑border transfer assessments).

- Granting agents broad write or execute privileges without human confirmations and rollback options.

- Running pilots without instrumentation or baseline metrics — pilots need a holdout group and measurable targets.

- Under-provisioning cost governance — lack of Copilot credits monitoring is a common surprise.

Where claims should be treated cautiously

Some vendor statements about long-term pricing, bundled feature concessions, or future Microsoft roadmaps are marketing-forward and should be validated directly with Microsoft or through contract terms. Similarly, any specific claims about an IPT deployment’s long-term architecture (such as whether a client uses a particular external model or different cloud provider for runtime) are client-specific and should be explicitly documented in the SOW. Treat these items as negotiable, contract-governed variables rather than hard guarantees.A short operational playbook for IT teams

- Run a readiness audit: data hygiene, identity posture, and system compatibility.

- Choose a tightly scoped pilot (Executive Assistant, month‑end finance summary, or marketing copy drafts).

- Author the agent in Copilot Studio with whitelisted connectors only; enforce read-only where possible.

- Instrument everything into Dataverse/Power BI for monitoring and reporting.

- Require human approval gates for any action that changes system state.

- Set cost alerts, ownership, and a schedule to review agent scopes quarterly.

Final analysis — cautious optimism, governed aggressively

Agentic Copilot deployments, when done with discipline, are a practical next step for organisations that want to move beyond AI proofs-of-concept to measurable operational value. The technical stack exists: Copilot Studio, Microsoft Graph, Purview and Entra ID collectively permit tenant-bound, auditable agents that operate where knowledge workers already spend their day. IPT’s integrator-led model maps cleanly to the market need for configuration, governance and change management, particularly in compliance-heavy sectors.However, the upside is conditional. The real determinant is governance: narrow scopes, least privilege, human-in-the-loop for consequential decisions, adversarial testing, and mature cost controls must be non-negotiable. Failure to enforce these controls risks turning a productivity tool into an operational liability.

Organisations that pilot carefully, instrument outcomes, and codify governance will likely capture the productivity gains; those that treat agentic AI as a plug-and-play productivity magic trick will face surprises in cost, compliance, and output quality.

Conclusion

The shift from single-chatbot Copilot to a fleet of role-based agents is less about a single dramatic technology leap and more about pragmatic systems integration: authoring agents in Copilot Studio, grounding them in approved tenant data, enforcing identity and sensitivity controls, and running disciplined pilots that prove measurable value. IPT’s offering maps to that reality — and its emphasis on job‑described agents, narrow scopes, and managed rollouts is the correct posture for South African businesses (and enterprises worldwide) that need productivity gains without surrendering compliance control. The path to agentic productivity is open; governance and measurement will decide who walks it successfully.Source: Insurance Edge Need Some Agentic AI and Copilot Insights?