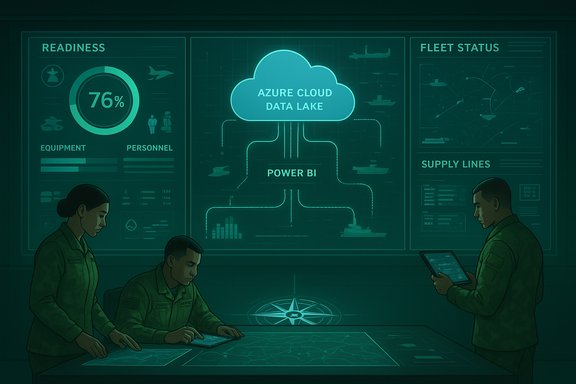

U.S. Army sustainment analytics has moved from incremental improvement to a strategic inflection point: AI-enhanced dashboards and cloud-backed predictive analytics are beginning to deliver near-real-time, mission-relevant insights that materially change how tactical commanders manage readiness. In fiscal year 2025 the U.S. Army Combined Arms Support Command (CASCOM) transitioned its fielded sustainment visualization suite to a Power BI-based Sustainment Enterprise Analytics (SEA) capability. In late July 2025 it brought online an Azure-hosted data staging environment that consolidates more than a decade of transactional logistics data to enable AI-driven forecasting. These changes are more than technical upgrades. They reshape decision tempo and operational planning, and they change the internal workforce the Army must field to operate and govern data-intensive systems at scale.

Background / Overview

CASCOM’s SEA effort replaces an earlier Commander’s Actionable Readiness Dashboard (C@RD) with dashboards and reports built on Microsoft Power BI. These products ingest authoritative, near-real-time data from the Global Combat Support System–Army (GCSS‑Army). Phase I of the modernization focused on migrating C@RD functionality into Power BI and delivered the first four enterprise dashboards in early 2025: Equipment Readiness, Fleet Management, Class IX Repair Parts, and My Materiel Tracker. Those initial releases showed dramatic gains in responsiveness. A brigade-sized equipment status report that previously took roughly 14 seconds to render now renders in under one second. Deeper drilldowns that once took tens of seconds, or even minutes, now return almost instantly.

Beyond the UX and speed wins, CASCOM’s decisive new capability is the Azure-hosted staging environment, which consolidates roughly 12 years of GCSS‑Army transaction history. With that historical foundation, CASCOM is actively developing AI models to predict fleet readiness and forecast demand for long‑lead items. It is also building a near‑real‑time combat-power analytic that combines GCSS‑Army data with personnel/pay data and terminal/airfield information. The command has aligned model priorities with major commands and staff elements and intends to develop at least 10 distinct AI models to address sustainment decision needs at division and corps echelons.

This modernization is paired with a human-capital shift. CASCOM is recruiting and building an in‑house analytics cadre through direct commissioning, branch transfers, targeted incentives, and skill identifiers. It is also using an academic partnership that draws on a Master of Decision Analytics program. The emphasis on organic expertise signals a deliberate move away from sole reliance on contractors for mission-critical analytic systems.

What changed: from dashboards to predictive combat-power analytics

SEA as an enterprise visualization baseline

SEA’s move to Power BI addresses three persistent sustainment problems:

- slow rendering and drilldown on legacy dashboards;
- limited data volume and cross‑domain joins; and
- the lack of integrated sustainment, personnel, and terminal information in a single analytic picture.

By adopting an off‑the‑shelf enterprise BI platform that is broadly available across the Army’s Microsoft licensing footprint, CASCOM enabled rapid uptake across commands and reduced the learning curve for end users.

Key practical improvements include:

- Faster decision cycles: Routine reports and brigade-level equipment status are now queried and presented in under a second, enabling tactical timelines to compress.

- Wider scope: Power BI’s ingestion pipeline and model capabilities let SEA expand beyond maintenance into Class IX repair parts, personnel readiness overlays, and operational logistics indicators.

- User-level familiarity: Power BI’s prevalence in DoD and federal environments lowers training friction and supports local customization by staff sections.

Cloud data staging and 12 years of GCSS‑Army history

The Azure staging environment is the architectural foundation for predictive analytics. By consolidating roughly 12 years of GCSS‑Army transactional records in a managed cloud lake/warehouse, CASCOM can now:

- Build training datasets that span the long operational cycles and seasonal patterns reliable forecasting requires.

- Run large‑scale batch and near‑real‑time scoring across units to forecast readiness and supply risk.

- Integrate external data (personnel, terminal/airfield status, environmental cues) to train models that reflect mission and operating‑condition dependencies.

AI use cases already prioritized

Initial, practical AI model use cases under development include:

- Predicting fleet readiness from projected supply conditions, maintenance resource availability, and historical failure patterns.

- Forecasting demand for long‑lead parts based on planned missions, environmental stressors, and observed repair cycles.

- Creating a combat power analytic that fuses materiel, personnel (Integrated Personnel and Pay System–Army), and terminal/airfield information into a near‑real‑time common operating picture for tactical decision making.
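The demand-forecasting use case can be illustrated with a simple baseline. The sketch below computes average demand for each calendar month across the years of history. It then sums that baseline over a part's procurement lead time and scales it by an optional mission-tempo multiplier. This is a minimal sketch under stated assumptions, not CASCOM's model. The record layout, the `tempo` multipliers, the sample values, and the function names are all hypothetical. A fielded model would also condition on environmental stressors and observed repair cycles.

```python
from collections import defaultdict
from statistics import mean
from typing import Optional

# Hypothetical record layout: (year, month, quantity demanded) for a single part number.
Record = tuple[int, int, float]


def seasonal_baseline(history: list[Record]) -> dict[int, float]:
    """Average demand for each calendar month across every year in the history."""
    by_month: dict[int, list[float]] = defaultdict(list)
    for _year, month, qty in history:
        by_month[month].append(qty)
    return {month: mean(qtys) for month, qtys in by_month.items()}


def lead_time_demand(
    history: list[Record],
    start_month: int,
    lead_time_months: int,
    tempo: Optional[dict[int, float]] = None,
) -> float:
    """Forecast total demand over a procurement lead time, wrapping past December.

    `tempo` (an assumed planning input) maps month -> multiplier for planned mission
    intensity. Months without an entry default to 1.0, a normal operating tempo.
    Months with no history contribute zero demand.
    """
    tempo = tempo or {}
    baseline = seasonal_baseline(history)
    total = 0.0
    for offset in range(lead_time_months):
        month = (start_month - 1 + offset) % 12 + 1
        total += baseline.get(month, 0.0) * tempo.get(month, 1.0)
    return total


# Illustrative values only: two Decembers, Januaries, and Februaries for one long-lead part.
history = [(2023, 12, 4.0), (2024, 12, 6.0), (2024, 1, 10.0),
           (2025, 1, 14.0), (2024, 2, 5.0), (2025, 2, 7.0)]
print(lead_time_demand(history, start_month=12, lead_time_months=3, tempo={1: 1.5}))  # → 29.0
```

The seasonal average is deliberately naive. Its value is as a benchmark: any learned model should beat it on held-out years before it is trusted for long-lead ordering.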

Why this matters for readiness and LSCO

Decision tempo is a force multiplier. In strategic planning, aggregate trends and months‑long indicators suffice. In large-scale combat operations (LSCO), division commanders must make immediate, consequential choices about allocating scarce repair assets, routing high‑priority shipments, and task‑organizing sustainment forces. AI‑enabled dashboards change the calculus in three ways:

- Speed: Faster analytics compress the observe-orient-decide-act (OODA) loop, so commanders and sustainment planners can reach and execute decisions more quickly.

- Anticipation: Predictive maintenance and demand forecasting shift sustainment from reactive resupply to proactive prevention, lowering failure rates and increasing platform availability.

- Contextual fusion: Integrating personnel, materiel, and terminal status creates multidimensional readiness indicators. For example, a vehicle group may be fully supplied but short of crews. Similarly, a depot may show parts availability but be constrained by terminal throughput.
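One simple way to express contextual fusion is a weakest-link rule. Under this rule, a unit's fused readiness is capped by its scarcest input, and the analytic reports which input binds. The sketch below assumes that rule and uses hypothetical 0-to-1 indicators for materiel, personnel, and terminal throughput. SEA's actual combat-power analytic may weight or combine these inputs very differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitPicture:
    """Hypothetical per-unit indicators, each expressed as a fraction from 0 to 1."""
    name: str
    materiel: float   # share of equipment reported fully mission capable
    personnel: float  # share of required crews/operators on hand
    terminal: float   # share of required terminal/airfield throughput available


def fused_readiness(unit: UnitPicture) -> tuple[float, str]:
    """Return (fused score, limiting dimension) under an assumed weakest-link rule."""
    factors = {
        "materiel": unit.materiel,
        "personnel": unit.personnel,
        "terminal": unit.terminal,
    }
    limiting = min(factors, key=factors.__getitem__)
    return factors[limiting], limiting


# A vehicle group that is well supplied but short of crews is limited by personnel.
print(fused_readiness(UnitPicture("Hypothetical Task Force A", 0.92, 0.70, 0.95)))
# → (0.7, 'personnel')
```

The main benefit of reporting the limiting dimension is that it tells a planner where to act. It points to crews, parts, or throughput rather than just a single number.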

Strengths: what CASCOM did right

- Authoritative data sources: Centering analytics on GCSS‑Army ensures outputs derive from the Army’s lifecycle logistics system rather than fragile, ad‑hoc spreadsheets.

- Use of enterprise software: Choosing a widely deployed product that integrates with the broader Microsoft stack reduces training friction and accelerates adoption across the force.

- Historical depth: Consolidating ~12 years of data supplies sufficient training history for models to learn seasonality, logistics cycles, and operational covariates.

- Operational partnerships: Prioritizing model development with major commands and staff elements aligns analytic outputs with real operational needs.

- Workforce development: Investing in organic analytic talent and academic partnerships builds long‑term ownership of these systems and reduces contractor dependency.

Risks, weaknesses, and unresolved questions

1. Data quality and lineage are mission‑critical but difficult

Historical transactional data are valuable only if they are consistent, correctly coded, and auditable. GCSS‑Army went through substantial fielding phases over the past decade, so schema changes, process changes, and shifts in user behavior are embedded in the dataset. Without rigorous data cleansing, lineage mapping, and versioned schemas, models can learn artifacts that lead to incorrect forecasts under different conditions.

Mitigation: Implement rigorous data validation pipelines, maintain a documented medallion (bronze/silver/gold) data architecture, and deploy data‑quality dashboards that show where training datasets are weak.
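A validation pipeline can be made concrete with a small promotion step from the raw (bronze) tier to the cleansed (silver) tier. Each record is checked against explicit rules. Clean records are promoted, and failures are quarantined with their reasons so data-quality dashboards can report them. This is a sketch only. The field names (`document_number`, `niin`, `quantity`, `request_date`, `receipt_date`) and the rules themselves are illustrative assumptions, not the GCSS‑Army schema.

```python
from datetime import date

# Hypothetical required fields for a supply-request record.
REQUIRED_FIELDS = ("document_number", "niin", "quantity", "request_date")


def validate(record: dict) -> list[str]:
    """Return every rule violation found in one raw (bronze) record."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS
                if record.get(name) in (None, "")]
    qty = record.get("quantity")
    if isinstance(qty, (int, float)) and qty <= 0:
        problems.append("non-positive quantity")
    requested, received = record.get("request_date"), record.get("receipt_date")
    if isinstance(requested, date) and isinstance(received, date) and received < requested:
        problems.append("receipt precedes request")
    return problems


def promote_to_silver(bronze: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into promoted (silver) and quarantined, keeping failure reasons."""
    silver, quarantine = [], []
    for record in bronze:
        problems = validate(record)
        if problems:
            quarantine.append({**record, "_problems": problems})
        else:
            silver.append(record)
    return silver, quarantine


def pass_rate(bronze: list[dict]) -> float:
    """Share of records that pass validation, one simple data-quality dashboard metric."""
    silver, _ = promote_to_silver(bronze)
    return len(silver) / len(bronze) if bronze else 1.0
```

Quarantining records instead of silently dropping them preserves lineage. Analysts can trace why a training set shrank and whether the failures cluster around a particular fielding period or schema change.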

2. Model governance, validation, and explainability

AI models in sustainment affect life‑and‑death operational decisions. Models must be validated across operational regimes and regularly re‑tested for concept drift. They must also be packaged with clear explanations so staff officers can interrogate why a model recommended a particular allocation.

Mitigation: Establish a Model Risk Management framework with acceptance criteria, red‑teaming exercises, and human‑in‑the‑loop policies. Use explainable AI toolchains and maintain versioned model artifacts and test suites.
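Drift re-testing needs a concrete trigger. One widely used statistic is the Population Stability Index (PSI). It compares how a feature or model score is distributed in recent data versus the training baseline. The sketch below is a plain-Python PSI. The bin edges, the `eps` floor for empty bins, and the 0.1/0.25 thresholds are common industry rules of thumb assumed here, not Army acceptance criteria.

```python
import math
from bisect import bisect_right


def bin_shares(values: list[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Fraction of values in each bin. Out-of-range values are counted in the end bins."""
    if not values:
        raise ValueError("cannot bin an empty sample")
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect_right(edges, v) - 1
        counts[min(max(i, 0), len(counts) - 1)] += 1
    # Floor empty bins at eps so the log term in psi() stays finite.
    return [max(c / len(values), eps) for c in counts]


def psi(baseline: list[float], recent: list[float], edges: list[float]) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    e, a = bin_shares(baseline, edges), bin_shares(recent, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(value: float) -> str:
    """Map a PSI value to an action using assumed rule-of-thumb thresholds."""
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "monitor"
    return "trigger re-validation"
```

In practice the statistic would be computed per feature on a schedule. A "trigger re-validation" result would feed the model-governance process rather than kick off automatic retraining.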

3. Operational resilience and connectivity

LSCO can degrade communications and satellite services. Relying on cloud‑hosted analytics creates an operational dependency on networks that may be contested. If commanders rely on cloud results that are unavailable during a critical window, operational decisions may be impaired.

Mitigation: Build a two‑layer architecture: cloud for heavy training and large‑scale scoring; edge or compact models for disconnected operations with periodic synchronization. Precompute contingency options and distribute lightweight, mission‑tailored decision aids to echelons expected to operate disconnected.
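The two-layer idea can be sketched as a resolver with three behaviors:

- It prefers a fresh cloud score.
- It refreshes an edge-side cache whenever the link is up.
- It falls back to the last good answer when the link drops, flagging that answer as cached (and as stale past a maximum age).

`score_in_cloud`, the cache layout, and the six-hour staleness limit are assumptions for illustration. Real synchronization would also need integrity checks and conflict handling.

```python
from typing import Any, Callable, Optional

# Edge cache layout (assumed): key -> (payload, epoch seconds when it was computed).
Cache = dict[str, tuple[Any, float]]


def resolve_decision_product(
    key: str,
    score_in_cloud: Callable[[str], Any],
    cache: Cache,
    now: float,
    max_age_s: float = 6 * 3600,
) -> tuple[Optional[Any], str, bool]:
    """Return (payload, source, stale), preferring the cloud and degrading to the edge cache."""
    try:
        payload = score_in_cloud(key)
    except (ConnectionError, TimeoutError):
        if key not in cache:
            # Nothing was precomputed for this key, so no decision aid is available.
            return None, "unavailable", True
        cached_payload, generated_at = cache[key]
        return cached_payload, "edge-cache", (now - generated_at) > max_age_s
    # Link is up: refresh the edge copy so it holds the latest good answer.
    cache[key] = (payload, now)
    return payload, "cloud", False
```

Returning the source and staleness flag alongside the payload lets the dashboard show users how old an answer is. Otherwise, a cached answer could look as current as a live one.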

4. Security and data sovereignty

Staging years of transactional logistics and personnel data in a commercial cloud raises policy questions about Controlled Unclassified Information (CUI), Personally Identifiable Information (PII), and national security data. The implementation must align with DoD cloud impact-level and compliance requirements and protect against data sprawl.

Mitigation: Deploy isolated government cloud instances where required, enforce role‑based access and least privilege, and apply continuous monitoring and logging. Encrypt data at rest and in transit, and ensure data‑sharing policies limit exposure.
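Role-based access with least privilege usually comes down to a default-deny check against an explicit grant list, with an extra gate for the most sensitive categories. The sketch below assumes several hypothetical elements: a catalog that tags datasets as CUI or PII, the role names, and a separate PII clearance list. In a real deployment these checks would be enforced by the platform's identity and access controls, not by application code alone.

```python
# Hypothetical data catalog: dataset -> sensitivity tags.
CATALOG: dict[str, set[str]] = {
    "equipment_status": {"CUI"},
    "work_orders": {"CUI"},
    "personnel_readiness": {"CUI", "PII"},
}

# Hypothetical grants: role -> datasets that role may read. Anything unlisted is denied.
GRANTS: dict[str, set[str]] = {
    "maintenance_analyst": {"equipment_status", "work_orders"},
    "logistics_planner": {"equipment_status", "personnel_readiness"},
    "readiness_officer": {"equipment_status", "personnel_readiness"},
}

# Roles additionally cleared to see PII-tagged data.
PII_CLEARED: set[str] = {"readiness_officer"}


def can_read(role: str, dataset: str) -> bool:
    """Default deny: require a cataloged dataset, an explicit grant, and PII clearance if tagged."""
    if dataset not in CATALOG:
        return False  # uncataloged copies are a sprawl risk, so never serve them
    if dataset not in GRANTS.get(role, set()):
        return False
    if "PII" in CATALOG[dataset] and role not in PII_CLEARED:
        return False
    return True
```

Note that `logistics_planner` holds a grant to `personnel_readiness` but is still denied. A grant alone does not unlock PII-tagged data.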

5. Adversarial risk to models and supply‑chain attacks

AI models can be manipulated through poisoned inputs. Adversaries may also try to exfiltrate training data or the patterns a model has learned from it. Supply‑chain compromise of code, third‑party libraries, or cloud components can also undermine system integrity.

Mitigation: Harden model training pipelines, use provenance verification for packages, apply adversarial testing, and segregate high‑impact model training to vetted environments.
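Provenance verification for packages and model artifacts often starts with hash pinning. The SHA-256 digest of each approved artifact is recorded in a manifest, and the pipeline refuses to train or deploy if any artifact is missing or its digest differs. The sketch below shows only that check. The manifest format and the `load` callback are assumptions, and pinning complements rather than replaces signature verification and SBOM review.

```python
import hashlib
from pathlib import Path
from typing import Callable, Optional

# Loader (assumed interface): artifact name -> bytes, or None if the artifact is absent.
Loader = Callable[[str], Optional[bytes]]


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifacts(manifest: dict[str, str], load: Loader) -> list[str]:
    """Return sorted names of artifacts that are missing or do not match their pinned digest."""
    failures = []
    for name, pinned in sorted(manifest.items()):
        data = load(name)
        if data is None or sha256_hex(data) != pinned.lower():
            failures.append(name)
    return failures


def directory_loader(root: Path) -> Loader:
    """Build a loader that reads artifacts from a local directory, such as a vetted mirror."""
    def load(name: str) -> Optional[bytes]:
        path = root / name
        return path.read_bytes() if path.is_file() else None
    return load
```

A training job would call `verify_artifacts(manifest, directory_loader(Path("artifacts")))`, where the directory name is hypothetical, and halt on any non-empty result.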

6. Overtrust and human oversight gaps

Decision support that is faster and more persuasive can entice staff to accept recommendations uncritically. This is especially dangerous in the fog of war when models may extrapolate beyond their validated domain.

Mitigation: Enforce decision rules that require human authorization for critical resource reallocation. Provide confidence bands and scenario sensitivity analyses with every recommendation.
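These decision rules can be expressed as a routing gate. The gate sends a recommendation to a human approver whenever it:

- reallocates critical resources;
- falls outside the model's validated domain; or
- carries a prediction interval that is too wide relative to its estimate.

The `Recommendation` fields and the 50 percent width threshold below are assumptions for illustration. Real criteria would come from the command's own decision rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """Hypothetical model output shown to staff, with its prediction interval."""
    action: str
    estimate: float  # point forecast, e.g. days until a fleet falls below its readiness goal
    lower: float     # lower bound of the prediction interval
    upper: float     # upper bound of the prediction interval
    critical: bool   # True if acting on it reallocates scarce, critical resources
    in_validated_domain: bool = True  # False if inputs fall outside tested conditions


def needs_human_authorization(rec: Recommendation, max_relative_width: float = 0.5) -> bool:
    """Route to a human approver when stakes are high or the model is too uncertain."""
    if rec.critical or not rec.in_validated_domain:
        return True
    if rec.estimate == 0:
        return True  # relative width is undefined, so do not auto-approve
    relative_width = (rec.upper - rec.lower) / abs(rec.estimate)
    return relative_width > max_relative_width
```

The gate only decides who must approve. The interval itself should still be displayed with every recommendation so the approver sees the same uncertainty the rule used.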

7. Ambiguity around some integrated sources

The SEA initiative is integrating personnel and terminal data; however, terms such as “Automated Terminal Information Service” are commonly associated with aviation systems. There is a potential mismatch between what the system designers mean by terminal information and commonly understood aviation acronyms. Where definitions are unclear or the provenance of a dataset is ambiguous, analytic outputs can be misinterpreted.

Mitigation: Maintain clear dataset inventories and glossaries, and annotate models with dataset provenance and data owners.

Verification and technical fact‑checks

Key technical claims were validated against multiple authoritative public documents and vendor technical materials:

- The adoption of Power BI as SEA’s visualization platform and the phased migration from C@RD to Power BI, with initial dashboard rollouts in early 2025, are confirmed as the modernization path the command publicly announced.

- Measured performance improvements — an example equipment status report moving from ~14 seconds to under 1 second — were part of the operational comparisons CASCOM reported during the Phase I rollout.

- The Azure staging environment bringing together roughly 12 years of GCSS‑Army transactional data and being operational in late July 2025 is a central program fact as reported by the command.

- Microsoft’s Power Platform and Power BI have been actively integrated with Copilot and Microsoft Fabric capabilities, enabling AI‑augmented analytics and natural‑language interaction inside Power BI; using Copilot for analytics requires appropriate licensing, workspace capacity, and administrator enablement.

- The VCU Master of Decision Analytics program is an accredited, operational graduate degree with multiple delivery formats and was cited by CASCOM as a partner for developing analytic talent.

Recommendations for operationalization and scaling

Scaling CASCOM’s SEA and predictive analytics from pilots to an Army‑wide, LSCO‑ready capability requires a deliberate program of technical, organizational, and policy work. The priority steps, in recommended order, are:

1. Formalize a Data Governance Board
   - Charter cross‑functional ownership (G‑4, G‑1, G‑6 equivalents) for dataset certification, access control, and lifecycle management.

2. Establish Model Governance and Certification
   - Define performance acceptance criteria, retraining cadence, and adversarial‑resilience tests. Require documented model cards and signed approvals for production promotion.

3. Harden architectures for degraded operations
   - Design an edge‑capable tier of compact models and precomputed decision products for disconnected use, with scheduled synchronization to cloud scoring.

4. Create a Model Test and Red‑Team Cell
   - Continuously stress test models against realistic tactical scenarios, including data drift, sensor faults, and adversarial manipulation.

5. Scale workforce competency and career paths
   - Expand direct commissioning pipelines and civilian upskilling, formalize skill identifiers, and sustain partnerships with accredited analytics programs to create a steady talent inflow.

6. Continuously measure operational impact
   - Instrument analytic outputs with KPIs tied to readiness, mean time to repair, mission success, and logistics throughput. Publish quarterly operational impact reports to measure ROI and refine model priorities.

7. Implement strict supply‑chain security controls
   - Vet third‑party software, require SBOMs for deployed code, and maintain air‑gapped testing for high‑impact training datasets.
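Instrumenting analytic outputs with KPIs such as mean time to repair requires unambiguous metric definitions. The sketch below computes mean time to repair from work-order timestamps and compares it with a baseline. It assumes each work order is an (opened, closed) pair, with `closed` set to `None` while the order is still open. Open orders are excluded, which is itself a definitional choice a governance board would need to document. The timestamps and the 24-hour baseline are illustrative values only.

```python
from datetime import datetime, timedelta
from typing import Optional

# Assumed work-order shape: (fault reported, returned to service or None if still open).
WorkOrder = tuple[datetime, Optional[datetime]]


def mean_time_to_repair(orders: list[WorkOrder]) -> timedelta:
    """Average repair duration over closed work orders only."""
    durations = [closed - opened for opened, closed in orders if closed is not None]
    if not durations:
        raise ValueError("no closed work orders to measure")
    return sum(durations, timedelta()) / len(durations)


def percent_change(before: timedelta, after: timedelta) -> float:
    """Signed percent change from a baseline KPI value; negative means faster repairs."""
    return (after - before) / before * 100


orders = [
    (datetime(2025, 1, 1, 8), datetime(2025, 1, 1, 20)),  # 12 hours
    (datetime(2025, 1, 2, 8), datetime(2025, 1, 3, 8)),   # 24 hours
    (datetime(2025, 1, 4, 8), None),                      # still open, excluded
]
mttr = mean_time_to_repair(orders)
print(mttr, percent_change(timedelta(hours=24), mttr))  # → 18:00:00 -25.0
```

A quarterly impact report would compute the same metric the same way each period. Only then do changes reflect operations rather than shifting definitions.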

Where the program could lead: strategic implications

If CASCOM’s approach proves robust in realistic operational testing, the Army gains a template for responsibly integrating AI into operational decision loops. Strategic implications include:

- Faster sustainment decisions will compress operational timelines, making multi‑domain maneuver and sustainment more tightly coupled.

- Predictive logistics could reduce inventory requirements, lower costs, and increase the availability of high‑value platforms in the field by shifting investments into preventive maintenance and optimized spares distribution.

- Institutionalizing a data culture across sustainment branches, by building analytic skill sets and career paths, could lead to broader organizational agility in adopting data‑driven processes across doctrine, training, and structure.

Practical cautions for the user: what is not yet solved

- Performance metrics from early rollouts demonstrate promise, but full model validation in contested and degraded environments has not been demonstrated at scale.

- Data harmonization across echelons, especially with varied field data entry practices, remains a persistent operational challenge that can undermine model accuracy.

- Cloud dependency increases capability but can create single‑point operational risks if not paired with robust disconnected solutions and contingency doctrine.

- AI models trained on decade‑long historical data may underperform in qualitatively different future conflicts or force structures; avoiding overfitting to peacetime patterns is essential.

Conclusion

CASCOM’s Sustainment Enterprise Analytics program exemplifies the pragmatic, mission‑driven application of modern data engineering and AI to military sustainment. The command has paired a broadly accessible BI layer with a cloud‑backed staging environment containing more than a decade of transactional logistics records. In doing so, it has built the necessary scaffolding for predictive maintenance, demand forecasting, and integrated combat‑power visualization.

The initiative’s success will depend less on algorithmic novelty than on disciplined governance of data, models, and operational processes. It will also depend on the Army’s ability to nurture an internal analytic workforce capable of stewarding high‑consequence models under pressure. Done well, AI‑enhanced dashboards will make sustainment systems faster, more anticipatory, and more tightly integrated with maneuver. Done poorly, without governance, validation, and robust contingency planning, they risk producing brittle insights at inopportune moments.

The difference between those futures will be the program’s attention to the fundamentals: data quality, model governance, human oversight, and operational resilience.

Source: army.mil, “The Future of Readiness: AI-Enhanced Dashboards”