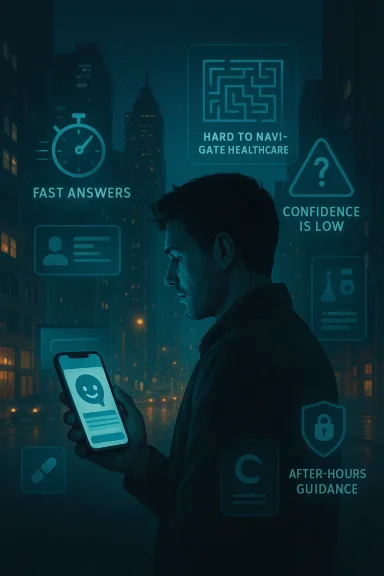

Americans are increasingly turning to AI not because they have stopped trusting doctors, but because the health system often feels too slow, too expensive, and too hard to navigate. Recent polling suggests that AI chatbots have become a kind of on-demand triage layer: a place to get quick explanations, compare symptoms, decode lab results, or decide whether a visit is worth the time and cost. Yet the same polls also show a striking contradiction — many users do not fully trust AI, even as they rely on it.


The latest numbers help explain why the behavior is spreading. The West Health–Gallup Center on Healthcare in America found that 25% of U.S. adults have used an AI tool or chatbot for health information or advice, with most recent users describing it as a supplement to medical care rather than a replacement. KFF found an even larger share, 32%, had used AI chatbots for health information in the past year, while Pew reported that 22% of Americans get health information from AI chatbots at least sometimes. The details point to a major shift in how Americans manage uncertainty about their health: being always available, fast, and good enough to start with is becoming a powerful selling point.

Background

For years, the default first stop for a health question was a search engine, a family member, or a waiting room pamphlet. AI tools are now moving into that role by packaging search results into conversational answers that feel more direct and more personal. That matters because health questions are rarely just factual; they are often emotional, urgent, and tangled with fear.

The AP article, based on interviews with patients, describes that shift vividly. One user, Tiffany Davis, said she consults ChatGPT when she wants to know whether a symptom from weight-loss injections is serious, and another respondent said she uses AI to interpret lab results after a specialist visit. Those anecdotes align with the broader polling trend: AI is increasingly being used as a bridge between doctor visits, not merely as a novelty.

That bridge is being built in a health-care environment that many Americans already find frustrating. Costs remain high, appointments can be hard to get, and the system still often works around business hours rather than patient schedules. AI is attractive precisely because it is available on demand, which makes it useful for people juggling work, caregiving, or symptoms that do not seem urgent enough for an immediate call.

The polls also show that AI health use is not replacing traditional care in a simple way. The Gallup survey found that 59% of recent AI health users researched before seeing a doctor and 56% researched afterward, while KFF found that 58% of people who used AI for physical health advice later followed up with a doctor or other provider. In other words, for many Americans, AI is becoming part of the care workflow, not a substitute for it.

Still, the broader context is important: health information has fragmented across platforms. Americans now move between doctors, websites, social media, and AI chatbots, often comparing sources without always knowing which one deserves more weight. Pew’s finding that health information users rate AI as convenient but not especially accurate is a useful reminder that convenience and confidence are not the same thing.

Why AI Feels Like the Fastest Path to an Answer

Speed is the biggest reason AI health tools are catching on. In the KFF poll, many respondents said they turned to AI because they wanted quick, immediate advice, and Gallup found similar motivations among recent users. When people are dealing with a symptom or a confusing test result, the appeal of an instant explanation is obvious.

Quick Answers Beat Waiting

The modern health system often forces patients into delays: waiting for a callback, waiting for an appointment, or waiting for a portal message that may not be answered until the next day. AI short-circuits that wait by offering a response in seconds, which makes it feel efficient even when it is incomplete. That illusion of momentum is a powerful consumer experience in health care.

Gallup found that 59% of recent AI health users researched before seeing a doctor and 56% researched afterward, showing that people use AI to compress the time between uncertainty and action. KFF similarly found that AI is being used for both physical and mental health questions, with 29% of adults seeking physical health information and 16% seeking mental health information. Those are not niche use cases; they are broad categories of everyday concern.

- Fast access matters more when symptoms appear outside office hours.

- AI lowers the friction of asking “Is this normal?”

- Users can ask follow-up questions without feeling rushed.

- The conversation format makes the answer feel tailored, even when it is generic.

The “Executive Summary” Effect

Dr. Karandeep Singh of UC San Diego Health told AP that AI is basically a better entry portal into web search. That is a telling description because it captures how many users perceive these tools: not as miracle diagnosticians, but as a way to turn a messy pile of links into a readable first draft. The tool’s value is less in authority than in compression.

Pew’s research reinforces that point by showing people rate AI health information as convenient and, for chatbots, easy to understand, even when they are skeptical about accuracy. This is why AI can succeed in places where traditional health websites sometimes fail: it gives patients a quick narrative, not just a list of facts.

Why This Matters

The danger is that speed can be mistaken for reliability. A response that arrives instantly feels useful, but in health care the wrong answer can be more costly than no answer at all. That tension sits at the heart of the current AI health boom.

The Health-Care Access Problem Behind the Trend

The polls suggest that AI use is not happening in a vacuum. A meaningful share of respondents said they use it because regular care is expensive, inconvenient, or simply hard to access. That makes AI a symptom of the system’s pain points as much as it is a story about technology.

Cost, Time, and Friction

KFF found that younger adults and lower-income people were more likely to say they used AI because they could not afford a provider visit or were struggling to access care. Gallup similarly found that some users turned to AI because they could not pay for a doctor, could not access a provider, or felt dismissed in the past. Those are not abstract frustrations; they are practical barriers that shape everyday decision-making.

In Gallup’s survey, 14% of recent users said AI information led them to skip a provider visit, which the researchers estimate as about 14 million adults. That is a major number, even though not every skipped visit would have been medically necessary. It does mean AI is beginning to influence downstream care decisions in a measurable way.

- Some people use AI because they cannot afford care.

- Others use it because they cannot get care fast enough.

- Some use it after feeling ignored by prior clinicians.

- Many simply want a second opinion before spending money.

After-Hours Care in a 24/7 World

Another reason AI fits the moment is that health anxiety does not respect business hours. KFF and Gallup both found that immediate access is a central draw, and Gallup reported that many users go to AI after hours or before deciding whether a visit is necessary. That makes AI look less like a replacement for medicine and more like a night-shift front desk for the digitally fluent.

This matters especially for working adults, caregivers, and parents. For someone holding down multiple jobs or working an impossible schedule, a chatbot that can answer at 11 p.m. may feel more realistic than waiting until Monday. The problem, of course, is that convenience can encourage self-triage beyond a user’s medical expertise.

Why Lower-Income Users Stand Out

The equity angle is important. If AI health use grows because care is inaccessible, then the technology may be filling gaps that policy has left open. That makes the trend useful, but also structural rather than accidental. In that sense, AI is less a luxury product than a coping mechanism for a strained system.

What Americans Are Asking AI About

The questions people ask AI are often basic, practical, and anxiety-driven. Gallup found that the most common topics were nutrition, exercise, and physical symptoms, followed by medication side effects, medical information, and diagnosis research. These are the kinds of questions where users want clarity quickly and often do not know how to frame the question in medical language.

Everyday Symptom Checking

Symptom checking is one of the most intuitive uses for a chatbot. People want to know whether a headache, rash, stomach issue, or side effect is worth escalating, and AI can make that initial decision feel less intimidating. The appeal is not diagnosis in the strict sense; it is reassurance and prioritization.

Gallup found that 58% of recent AI health users asked about physical symptoms, and 46% asked about medication side effects. That tells us users are not just browsing trivia; they are trying to make sense of real bodily experiences.

Understanding Medical Jargon

AI is also being used as a translator. People who receive lab results, discharge instructions, or specialist notes often want plain-English explanations, and AI is well suited to repackaging technical language into something more accessible. That may be one reason users say it helps them feel more confident when talking to providers.

Mental Health and Sensitive Topics

KFF found that 16% of adults had used AI for mental health information in the past year. That is smaller than physical health use, but it may be more significant because mental health questions can feel private, awkward, or difficult to bring up with another person. AI can lower that barrier, although it may also reduce the chance that someone gets appropriate professional support.

- Symptom interpretation is a major use case.

- Medication side effects are a common concern.

- Users want help translating test results.

- Mental health questions may feel easier to ask a bot than a person.

Trust Is the Central Fault Line

The strongest theme running through the polls is not adoption but ambivalence. People are using AI for health advice, but they are not fully convinced it is accurate. That combination is important, because it suggests behavior is being driven by utility even when confidence remains shaky.

Confidence Is Low, Even Among Users

Gallup found that among recent AI health users, 33% trust the information, 33% neither trust nor distrust it, and 34% distrust it. Only 4% said they strongly trust the accuracy of AI-generated health information. That is a remarkable gap between usage and confidence, and it should make anyone in health policy pause.

KFF’s earlier work also found persistent skepticism, with many adults saying they are not confident AI chatbots provide accurate health information. The broader pattern is clear: Americans may appreciate the convenience, but they have not granted AI the moral or professional authority of a clinician.

Accuracy Versus Convenience

Pew’s report is useful because it separates convenience from accuracy. About 22% of Americans say they get health information from AI chatbots at least sometimes, but many of those same users do not rate the information as highly accurate. In practice, that means people may be using AI as a starting point while mentally discounting the answer, a partial trust model that can work, but only up to a point.

Why Trust Still Matters

A health answer does not need to be perfect to influence behavior. If a chatbot says “monitor this” or “it sounds minor,” a user may delay care even while doubting the model. That is why low trust is not necessarily a safeguard; sometimes it just means people are using a tool while hoping it will be right.

Doctors Want AI to Be a Tool, Not a Replacement

Medical professionals are not uniformly hostile to the trend. In fact, many doctors seem relieved when patients arrive with better questions and more context. But they are also clear that AI cannot replace clinical judgment, physical examination, or responsibility.

The Physician’s Perspective

Dr. Bobby Mukkamala, president of the American Medical Association, said he likes when patients come in with more evolved questions after using AI for research. That is the best-case scenario: AI prepares the patient, and the clinician provides the interpretation. The risk is when the patient treats the bot as the final authority.

This is why many experts frame AI as an assistant. It can organize information, surface possibilities, and help users think through what to ask next. But an assistant is not an expert, and it is certainly not a substitute for hands-on care.

What Better Patient Conversations Could Look Like

If AI is used well, appointments could become more focused. Patients may arrive with clearer symptom histories, more precise questions, and a better grasp of their own records. That could save time on both sides and make visits feel less rushed.

Where the Line Should Stay

The line is crossed when a chatbot becomes the decision-maker. AI can help a person prepare for a visit, but it should not be the only triage system for chest pain, suspicious lesions, neurological symptoms, or medication changes. In health care, helpful is not the same as safest.

- AI can improve question quality.

- AI can help explain terminology.

- AI can support follow-up after a visit.

- AI should not replace clinical evaluation.

Privacy and Data Security Are Emerging as Major Concerns

The privacy issue may become one of the most consequential parts of this story. Health data is uniquely sensitive, and users often share far more than they realize when they type symptoms, medications, or lab values into a chatbot. That creates both personal and systemic risks.

People Are Sharing Medical Details

KFF found that 41% of AI health users said they had uploaded personal medical information such as test results or doctors’ notes to get more personalized advice. Applied to the 32% of adults who used AI for health information, that works out to about 13% of all adults. In other words, a nontrivial slice of the public is feeding private health data into systems they may not fully understand.

Concern Is Widespread

About three-quarters of adults told KFF they are concerned about the privacy of medical information given to AI tools. That concern is not irrational. Even if a platform claims it does not train on user data by default, the settings and disclosures can be confusing, and the burden often falls on the user to manage privacy controls.

The Risk of Unintended Exposure

Gallup’s findings and AP’s reporting point to a broader lesson: users may not realize how easily conversations can be exposed, retained, or misused. The issue is not just what the model answers; it is also where the data goes afterward. That is a quiet but serious risk, especially when people are using AI for stigmatized or deeply personal concerns.

What This Means for Consumers and Employers

The consumer impact is immediate, but the workplace impact may be just as important. If AI is becoming a routine part of health decision-making, it can affect attendance, productivity, benefit usage, and how quickly employees seek care. That makes this a workplace issue as much as a tech story.

For Consumers

AI is giving consumers a new sense of control over health uncertainty. It may help them prepare for appointments, understand side effects, or decide whether an issue is urgent. That can be empowering, but it can also create false reassurance if the answer is wrong or incomplete.

For Employers and Health Plans

Employers and health plans should care because AI may change how workers use care. If people skip visits, delay treatment, or rely on AI to decide whether a symptom matters, costs could shift downstream. That might mean lower immediate utilization, but potentially more serious claims later.

The Broader Market Signal

The market signal is also unmistakable. AI companies are increasingly positioning their products as health helpers, while search engines are adding AI summaries and health-related prompts. The competitive question is no longer whether AI belongs in health information; it is who gets to define the experience, the guardrails, and the trust model.

Strengths and Opportunities

The rise of AI health advice is not automatically bad news. Used carefully, it can help people think more clearly, ask better questions, and engage with care more proactively. The opportunity is to make the technology complement, not corrode, clinical care.

- Faster access to basic explanations when anxiety is high.

- Better preparation before appointments and follow-up visits.

- Improved health literacy through simpler language.

- More informed questions from patients during clinical encounters.

- Potential access support for people facing cost or time barriers.

- After-hours guidance for users who cannot easily reach a clinician.

- Workflow efficiency if patients arrive with clearer histories and priorities.

Risks and Concerns

The risks are equally real, and they grow if consumers begin treating AI as a primary source rather than a helper. The system’s convenience can mask the fact that health advice is one of the highest-stakes domains for error.

- False reassurance can delay care for serious symptoms.

- Hallucinations or inaccuracies can create dangerous misunderstandings.

- Privacy leakage is a major concern when users upload test results or notes.

- Unequal access may widen if AI becomes a substitute for affordable care.

- Skipped follow-up can leave conditions untreated or under-evaluated.

- Overconfidence in summaries may crowd out uncertainty and nuance.

- Poor triage judgments can lead users to avoid urgent care.

Looking Ahead

The next phase of this trend will likely be defined by integration. AI health use is already spreading from standalone chatbots into search engines, consumer devices, and platform-native assistants. That means the line between “asking AI” and “searching the web” will keep blurring, and with it the question of who is responsible for the answer.

Health systems, insurers, and regulators will face pressure to respond. Clinicians may need to assume more patients will arrive with AI-generated context, while platforms may need clearer rules on data handling and medical advice boundaries. The central challenge will be building systems that preserve convenience without normalizing unsafe self-diagnosis.

- More AI embedded in search will make usage harder to measure.

- More patient uploads will increase privacy stakes.

- More clinical adoption could normalize AI as a prep tool.

- More public skepticism may slow full trust, even as usage rises.

- More policy attention is likely if AI begins redirecting care decisions.

Source: JC Post, “Why many Americans are turning to AI for health advice, according to recent polls”