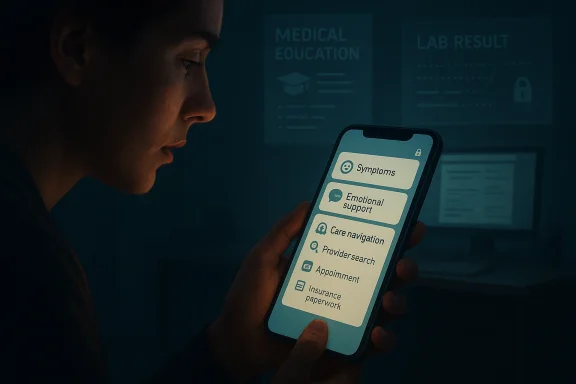

Americans are turning to AI chatbots for health help in ways that are broader, messier, and more human than many people expected. A new Microsoft AI study in Nature Health, based on more than 617,000 de-identified Copilot conversations, shows that people are not just asking for medical facts; they are using chatbots for symptoms, emotional support, care navigation, academic work, and paperwork. The result is a picture of consumer AI acting less like a novelty and more like a first-stop interface for health uncertainty.

Background

For years, the default path for health questions was a mixture of search engines, doctor visits, family advice, and whatever the patient could decode from portal messages or discharge paperwork. That workflow was already fragmented, but it was at least familiar. What has changed is that conversational AI now sits in the middle of that process and offers something that feels faster, more private, and easier to use than a traditional web search result page.

The Microsoft study matters because it does not just measure adoption in a survey. It looks at actual conversations and shows what people are doing when they turn to Copilot for health help. The researchers sampled conversations from January 2026, classified those labeled “health and fitness,” and then applied LLM-based methods to map intent, context, and user journey. That design gives the results a different kind of texture from polling alone, because it captures real behavior instead of stated preference.

The headline finding is that health chat is not dominated by abstract curiosity. The single largest category was health information and education, but the study also found substantial use for symptom questions, emotional well-being, condition information, and care navigation. In other words, people are bringing chatbots into the emotional and logistical parts of healthcare, not just the informational side.

This fits a broader pattern that has already been visible in polling from Gallup, KFF, and Pew. Those studies found that many adults use AI for health information, but trust remains mixed. Users like speed and convenience, yet they do not treat chatbot answers as fully authoritative. That tension is important, because it suggests the technology is being used despite imperfect confidence, not because people have suddenly decided AI is medically equivalent to a clinician.

The bigger story is that AI is becoming part of the care workflow. People are researching before and after doctor visits, interpreting lab results, asking about side effects, and trying to understand whether a symptom deserves urgency. That does not mean AI has replaced physicians. It means the first draft of health understanding is increasingly being written by software.

What the Microsoft Study Actually Measured

The study analyzed 617,827 conversations classified as health and fitness, which is a huge sample for a consumer AI dataset. That scale matters because it lets the researchers move beyond anecdotes and identify patterns in how people actually use the chatbot. The dataset also included a smaller sub-sample for deeper labeling, which helped them separate personal, academic, and administrative uses.

From broad topics to user intent

The research team did more than count questions. They assigned conversations to 12 general health intent categories and then used an LLM-based clustering approach on 10,000 conversations to group them by user journey. That allowed the study to show whether a user was seeking education, looking for help with a symptom, navigating care, or asking on behalf of someone else.

That distinction matters because “health query” is too blunt a label for modern chatbot use. A person asking about nutrition is not the same as a parent asking whether a child’s fever is urgent, and neither is the same as a student asking for help with medical research. By separating intent, the study makes clear that health AI use is not one behavior but a bundle of different motivations.

The sample also had a privacy-preserving design, which is important in a domain where users may enter sensitive or emotionally fraught information. The researchers did not need to know who the users were to see that health conversations often revolve around genuine decision-making pressure rather than idle curiosity.

Why the methodology matters

Because the study is based on Copilot logs, it reflects a specific platform and user base. That makes the findings strong for understanding Copilot behavior, but not automatically universal for every AI chatbot. Still, the internal consistency of the patterns suggests a broader consumer trend rather than a Copilot-only quirk.

The study’s time window also matters. It only covers one month, so seasonal effects could shape some results. Even so, the distribution is revealing because the same kinds of questions keep surfacing across separate surveys from other institutions, which strengthens the case that these are durable usage patterns rather than a one-off spike.

- The study examined 617,827 health-related conversations.

- It classified health behavior into 12 intent categories.

- A deeper sub-sample of 10,000 conversations was clustered by user journey.

- A separate sub-sample of 2,165 conversations was used to identify who the questions were about.

- The methodology was designed to be privacy-preserving rather than identity-driven.
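To put the sub-sample sizes in the list above in proportion, here is a minimal Python sketch that uses only the counts reported in the article. It assumes both sub-samples were drawn from the 617,827 health conversations, which the article implies but does not state outright; the constant and function names are illustrative and do not come from the study.

```python
# Counts as reported in the article; the names are illustrative only.
TOTAL_HEALTH_CONVERSATIONS = 617_827
JOURNEY_SUBSAMPLE = 10_000   # clustered by user journey
SUBJECT_SUBSAMPLE = 2_165    # labeled for who the question was about


def sampling_fraction(subsample: int, total: int) -> float:
    """Return the share of the full dataset covered by a sub-sample."""
    if total <= 0:
        raise ValueError("total must be positive")
    return subsample / total


# Assumption: both sub-samples come from the full health-conversation set.
journey_share = sampling_fraction(JOURNEY_SUBSAMPLE, TOTAL_HEALTH_CONVERSATIONS)
subject_share = sampling_fraction(SUBJECT_SUBSAMPLE, TOTAL_HEALTH_CONVERSATIONS)

print(f"Journey sub-sample: {journey_share:.2%} of health conversations")
print(f"Subject sub-sample: {subject_share:.2%} of health conversations")
```

Under that assumption, the journey clustering covers under 2% of the health conversations and the "who is this about" labeling well under 1%, which is worth keeping in mind when reading the finer-grained findings.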

The Biggest Reason People Ask AI About Health

The most common reason people used Copilot for health was health information and education, which accounted for about 41% of health conversations. That category included general nutrition questions, explanations of medical conditions, and how medicines work. It is broad enough to include both casual and personal use, which is why the authors treat the share of explicitly personal queries as a likely lower bound on truly personal concern.

Education often masks private concern

This is one of the most interesting parts of the study. A question that looks general on its face can still be rooted in a specific worry. Someone asking how a medication works may be preparing to start a prescription. Someone asking about a condition’s causes may be trying to decode a symptom they are reluctant to name directly. So the apparently “non-personal” category likely hides some private decision-making.

That means the line between health education and self-triage is blurrier than it seems. The chatbot may be used as a safe place to ask questions a person would not yet pose to a doctor. In that sense, the tool is functioning as a staging ground for care, not just a source of general knowledge.

The study also found that many conversations focused on specific conditions and treatments rather than generic wellness. That is a signal that users are not merely browsing health trivia. They are trying to make decisions, interpret uncertainty, or prepare themselves for action.

The practical interpretation

This behavior lines up with what consumers say in surveys: AI is convenient, understandable, and available when people need it. But that convenience comes with a subtle risk. A chatbot answer can feel like an explanation even when it is only a well-worded summary of common knowledge. That is precisely why health use is so sensitive.

- Health education was the largest intent category.

- General questions often overlap with personal anxiety.

- Specific condition and treatment questions were common.

- Users are clearly using AI for decision support, not just curiosity.

- The study likely understates the personal nature of some “general” questions.

Symptom Questions, Anxiety, and Personal Health Worries

Once you move beyond generic education, the most emotionally charged category is symptom questioning. The study found that symptom questions and health concerns were much more common on mobile than desktop, which suggests these queries are often spontaneous, immediate, and tied to a specific worry. That pattern is exactly what you would expect from a person sitting with a concern late at night or away from a computer.

Why mobile matters

Mobile usage was more associated with personal health intent, while desktop skewed toward academic support, medical paperwork, and research. That split is telling because it maps to context. A phone is typically in your hand when you are already in the moment, while a desktop is more likely to be used for planned, task-oriented work.

Symptom checking is one of the most intuitive chatbot use cases because it combines uncertainty, urgency, and embarrassment. A user may want to know whether a headache, rash, stomach issue, or medication side effect deserves attention, but they may not want to open a browser and sift through a wall of medical content. A chatbot offers a calmer, more conversational entry point.

That ease can be useful, but it also creates a dangerous illusion of certainty. If a model sounds confident, users may treat that confidence as a diagnosis. In health care, that is risky because the wrong reassurance can be as harmful as the wrong alarm.

The emotional layer

The study’s finding that personal health queries increase in the evening and at night is especially striking. That timing aligns with previous research on negative affect, where distress often grows later in the day. The researchers could not prove that the same people were changing mood across time, but the pattern strongly suggests that health anxiety has its own circadian rhythm.

This is where AI’s convenience becomes psychologically powerful. When someone is worried at 10 p.m., they may not want a formal medical system. They want an immediate answer, a little reassurance, or a quick sense of whether the situation is urgent. Chatbots are well positioned to meet that need, even if they cannot fully resolve it.

- Symptom questions were more common on mobile.

- Personal health concerns rose in the evening and nighttime.

- Users often seek reassurance, not just diagnosis.

- The timing suggests health anxiety is tightly tied to daily life.

- Chatbots are acting as a late-night triage layer.

Care Navigation Is Becoming a Major Use Case

One of the study’s most revealing findings is that people are not just asking “What is this?” They are also asking “How do I deal with the system?” The researchers found many conversations about finding providers, understanding coverage, and managing appointments or paperwork. That puts AI squarely into the space of care navigation, which is often where patients feel most frustrated.

The hidden bureaucracy of medicine

Health care is not only a clinical experience. It is also an administrative maze of billing, referrals, insurance language, scheduling, and forms. For many patients, that bureaucracy is as stressful as the health problem itself. A chatbot that can explain coverage terms or help organize questions for an appointment is solving a real pain point, even if it is not delivering care directly.

That is especially true for desktop users, where the study found more academic support, paperwork, and research-oriented behavior. Desktop health conversations often looked like work-adjacent tasks, suggesting that people are using AI as a productivity tool for managing medicine as much as for understanding it.

This has an important implication for enterprises and insurers. If AI is becoming the place people go to decode bills, prepare forms, or understand provider options, then consumer chat tools are quietly entering the administrative front end of health care. That could reduce friction, but it also creates new questions about correctness, liability, and whether users can distinguish general guidance from official policy.

A new kind of digital front desk

The study suggests that conversational AI is becoming a kind of pre-visit concierge. It can help users decide what to ask, what documents to gather, and how to think about next steps. That does not replace a human coordinator, but it can reduce the friction that keeps people from acting at all.

- Users ask AI for help finding providers.

- People use it to understand insurance coverage.

- Appointment scheduling and paperwork are common topics.

- Desktop usage is more aligned with administrative work.

- AI is becoming a digital front desk for frustrated patients.

How Device Type Changes the Story

The clearest split in the study is not only between general and personal use, but between mobile and desktop behavior. Mobile usage was more personal and more emotionally loaded, while desktop use was more likely to support work, school, or paperwork. That means the same chatbot can serve different roles depending on where and when the user reaches for it.

Desktop as a research machine

Desktop health use often happened alongside other productive tasks such as thesis writing, research, or official documents. That makes sense because desktop sessions usually happen in a more deliberate setting. Users may be compiling notes, preparing for an appointment, or trying to understand a health issue in a structured way.

This also explains why academic support and research were so much more prominent on desktop than mobile. On a bigger screen, users are more likely to treat the chatbot as an assistant for synthesis and drafting. On a phone, they are more likely to treat it as a quick responder to a live concern.

That divide matters for product design. A system that is used as a homework helper on a laptop and a symptom checker on a phone has to perform very different jobs under the same brand. Serving many jobs through one interface is a strength for platform reach, but it is also a governance headache for product teams.

Mobile as the emotional channel

Mobile sessions were more likely to happen at night and to involve symptoms, concerns, and well-being. That suggests the phone is where immediate worry gets translated into a question. The form factor itself may be shaping the emotional tone of the interaction, which is a useful reminder that technology usage is never just about model capability.

- Desktop use skews toward research and paperwork.

- Mobile use skews toward symptoms and emotional concerns.

- Time of day and device type appear closely linked.

- The same chatbot behaves like two different products.

- Context shapes the kind of trust the user is seeking.
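As a rough illustration of how a device split like this can be tabulated, the Python sketch below computes the share of each intent within each device type. The records, field names, and intent labels are invented for the example; they are not data, categories, or code from the study, and the study's own pipeline may look quite different.

```python
from collections import Counter

# Toy records invented for illustration only; these are NOT data from the study.
# The field names and intent labels are hypothetical.
conversations = [
    {"device": "mobile", "intent": "symptom_question"},
    {"device": "mobile", "intent": "symptom_question"},
    {"device": "mobile", "intent": "emotional_wellbeing"},
    {"device": "mobile", "intent": "academic_support"},
    {"device": "desktop", "intent": "academic_support"},
    {"device": "desktop", "intent": "academic_support"},
    {"device": "desktop", "intent": "medical_paperwork"},
    {"device": "desktop", "intent": "symptom_question"},
]


def intent_share_by_device(records):
    """Return {device: {intent: share}}, with shares summing to 1 per device."""
    pair_counts = Counter((r["device"], r["intent"]) for r in records)
    device_totals = Counter(r["device"] for r in records)
    shares = {}
    for (device, intent), count in pair_counts.items():
        # Normalize within the device so mobile and desktop are comparable
        # even when one device has far more conversations than the other.
        shares.setdefault(device, {})[intent] = count / device_totals[device]
    return shares


shares = intent_share_by_device(conversations)
for device, by_intent in sorted(shares.items()):
    for intent, share in sorted(by_intent.items()):
        print(f"{device:8s} {intent:20s} {share:.0%}")
```

Normalizing within each device, rather than across the whole dataset, is the design choice that makes a statement like "symptom questions were more common on mobile" meaningful regardless of how traffic is divided between phones and desktops.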

People Ask on Behalf of Others, Too

A useful nuance in the study is that not every health query was about the person typing the prompt. In the sub-sample of 2,165 conversations, the researchers found that in the personal intent categories, most questions were about the user, but a meaningful share involved a partner, child, parent, or other dependent. In symptom and condition questions, about one in seven queries were on behalf of someone else.

Why family caregiving matters

This is a bigger deal than it may first appear. Caregivers often act under pressure, with incomplete information and limited time. A parent trying to understand a child’s symptoms or an adult helping an aging parent manage a condition may not have access to the full clinical picture. AI can feel like a quick interpretive aid in those moments.

That also means chatbot health usage is not just an individual behavior. It is embedded in family decision-making. The person asking may not be the patient, but they are often trying to protect someone else. That raises the stakes because the model may be helping users reason through third-party symptoms without the necessary context.

There is also a social-emotional component here. Some users may find it easier to ask a chatbot about a spouse’s or child’s symptoms before escalating to a clinician. In that sense, AI becomes a form of private rehearsal for difficult conversations, which may be useful, but should not be mistaken for medical clearance.

Dependent care as a design challenge

The study suggests that future health AI products may need better support for caregiver scenarios. Systems that assume a single patient-user relationship may miss how often health decisions are made by families and proxies. That is especially true for pediatric, elder-care, and chronic-condition workflows.

- Some health queries are asked for a partner, child, or parent.

- Caregivers often need quick orientation, not certainty.

- On-behalf-of usage makes context harder for AI to infer.

- Family health decisions are a major hidden use case.

- Better caregiver-aware design may be needed.

Trust Is Still the Central Fault Line

If the study shows what people ask AI about health, the polling around it shows what they think about the answers. In short, they do not fully trust them. In Gallup’s work, only 33% of recent AI health users said they trust the information, while 34% said they distrust it and 33% were neutral. Only 4% strongly trusted it.

Usage is outpacing confidence

That gap is one of the most important findings in the broader AI health conversation. People are using the tools even though they do not fully believe them. That can be rational if the chatbot is treated as a first draft, but it becomes risky if the user treats the answer as a substitute for medical judgment.

Pew’s findings reinforce the same point from another angle. Users often see AI health information as convenient and easy to understand, but not necessarily highly accurate. In practice, that means the tool is winning on usability even where it has not won on credibility.

This is where the industry is heading into a serious governance problem. If a system is useful enough to shape decisions but not trusted enough to be authoritative, then users may end up in an unstable middle ground. They may follow advice when it is convenient and ignore it when it is inconvenient, which is not a good recipe for consistent care.

Why low trust does not eliminate risk

Low trust is not a cure-all. A user can distrust the model and still act on its advice if the answer feels plausible or if the alternative is too expensive or slow. That is why even skeptical users can be influenced by a confident chatbot response.

In health, a bad suggestion does not just create disappointment. It can encourage delay, fuel unnecessary worry, or redirect care in the wrong direction. That is why the gap between trust and usage is more alarming than reassuring.

- Trust in AI health information is mixed, not strong.

- Users value convenience more than authority.

- A confident answer can still influence behavior.

- Skepticism does not fully prevent risk.

- Governance matters because the tool is already in use.

Strengths and Opportunities

The study highlights why AI health chat is spreading so quickly, but it also shows where the real product opportunity lies. The strongest use cases are not flashy diagnosis claims; they are low-friction help, translation, and navigation. That is a very different market from the one many people imagine when they hear “AI in healthcare.”

- Always-on access makes AI useful when clinics are closed.

- Plain-language translation helps users decode medical jargon.

- Care navigation support reduces friction around appointments, coverage, and paperwork.

- Desktop productivity use opens a path for research and administrative workflows.

- Mobile symptom support fits the moments when anxiety is highest.

- Caregiver scenarios create a broader family-oriented use case.

- Follow-up after doctor visits shows AI can support, not just replace, clinical encounters.

Risks and Concerns

The upside is real, but so are the hazards. Health is one of the few consumer AI categories where a persuasive answer can change behavior in ways that matter immediately. The study and surrounding polling make clear that the main concerns are not theoretical; they are about delay, misinformation, privacy, and overconfidence.

- Wrong reassurance could delay needed care.

- False alarms may increase anxiety and unnecessary utilization.

- Privacy exposure is a concern when users upload test results or notes.

- Hallucinations and omission errors are especially dangerous in health contexts.

- Low trust plus high use creates unstable decision-making.

- Caregiver queries may lack enough context for safe answers.

- Single-month data limits certainty about long-term seasonal patterns.

What to Watch Next

The study is best understood as a snapshot of a much larger transition. The next phase will not just be about whether more people use AI for health, but about whether the technology becomes better integrated with records, care pathways, and human oversight. That is where the real test begins.

The questions that now matter

- Whether health AI can reliably distinguish education from advice

- Whether products built around records and wearables reduce error or merely increase confidence

- Whether users continue to rely on AI at night for symptom triage

- Whether insurers, providers, and regulators set clearer boundaries for administrative help

- Whether future studies show actual health outcomes, not just query patterns

- Whether trust improves when AI is tied to constrained, clinical workflows

What makes this shift so consequential is that it is happening at the intersection of convenience and vulnerability. Health questions arrive when people are tired, worried, busy, or scared, and AI is designed to respond instantly. That combination is powerful, but it is also fragile. If the industry gets this right, chatbot health tools could become a genuinely useful layer of orientation and support. If it gets it wrong, the same convenience that makes the tools attractive could make their mistakes spread faster than ever.

Source: News-Medical, “Study reveals what people ask AI chatbots about health most often”