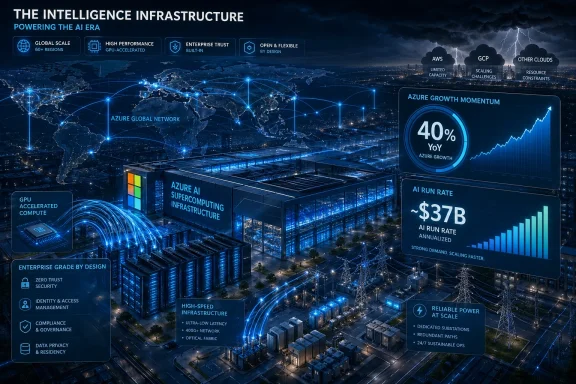

Microsoft reported on April 29, 2026, that Azure and other cloud services revenue rose 40% year over year in its fiscal third quarter, while its AI business surpassed a $37 billion annual revenue run rate. That is the kind of number that changes the argument around AI spending. The story is no longer whether Microsoft can buy its way into the AI boom. It is whether anyone without Microsoft’s balance sheet, data-center footprint, and enterprise distribution can keep up.

The market has spent much of the past two years asking whether Big Tech’s AI capital expenditure is becoming a bonfire. Microsoft’s latest results suggest a more uncomfortable answer for competitors: the bonfire is also a moat. The company is spending heavily because the product is being bought heavily, and Azure’s growth shows that infrastructure, not model exclusivity, is becoming the scarce asset in enterprise AI.

Azure Is Turning AI Hype Into a Capacity Business

Microsoft’s quarterly headline numbers were impressive even before the AI gloss was applied. Revenue reached $82.9 billion, operating income rose 20%, and Microsoft Cloud revenue hit $54.5 billion, up 29% from a year earlier. But the figure that matters most for the next phase of the cloud wars is Azure’s 40% growth, or 39% in constant currency.

That growth lands at a moment when investors have been trying to decide whether AI is a software story or an infrastructure story. The answer, increasingly, is that it is both, but the profits accrue first to companies that can provision the machines. A chatbot subscription may be the visible product, but the durable leverage sits in chips, networking, power contracts, cooling systems, identity layers, compliance tooling, and global sales relationships.

Microsoft’s AI revenue run rate, now above $37 billion by its own disclosure, is not a side note. It is evidence that AI has moved beyond demo culture and into procurement culture. Enterprises are not merely experimenting with models; they are buying capacity, embedding Copilot-like features into workflows, and asking cloud providers to carry the operational risk.
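For readers who want to sanity-check the headline figures, here is a minimal Python sketch of the arithmetic behind them. The function names are illustrative, and the run-rate helper assumes the common convention of annualizing the latest quarter (quarter times four). The article does not say how Microsoft computes its figure, so treat that part as a rough guide only.

```python
def implied_prior_period(current: float, growth: float) -> float:
    """Back out the year-ago value from a current value and a YoY growth rate."""
    return current / (1.0 + growth)


def quarterly_from_run_rate(annual_run_rate: float, periods_per_year: int = 4) -> float:
    """Assumption: run rate = latest period x periods per year."""
    return annual_run_rate / periods_per_year


# Figures quoted in the article (billions of US dollars).
cloud_now = 54.5      # Microsoft Cloud revenue this quarter
cloud_growth = 0.29   # up 29% year over year
cloud_year_ago = implied_prior_period(cloud_now, cloud_growth)

# Azure growth: reported vs. constant currency.
azure_reported = 0.40
azure_constant_currency = 0.39
fx_points = (azure_reported - azure_constant_currency) * 100

# "Above $37 billion" is a floor, so the quarterly figure is a floor too.
ai_quarterly_floor = quarterly_from_run_rate(37.0)

print(f"Implied year-ago Microsoft Cloud revenue: ${cloud_year_ago:.1f}B")
print(f"Currency contribution to Azure growth: about {fx_points:.0f} point")
print(f"AI revenue per quarter implied by the run rate: at least ${ai_quarterly_floor:.2f}B")
```

On these inputs, the year-ago Microsoft Cloud base works out to roughly $42.2 billion, and currency accounts for about one point of Azure’s reported growth.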

That is why Azure’s growth matters more than the latest benchmark leaderboard. If the same foundation models increasingly appear across multiple clouds, customers will not choose only on novelty. They will choose on availability, governance, latency, data residency, security posture, and how easily an AI system plugs into the identity and application estate they already run.

The OpenAI Deal Is Less Exclusive, Not Less Important

The most revealing part of Microsoft’s week was not just the earnings report. It was the amended OpenAI agreement announced two days earlier, which loosened one of the defining partnerships of the generative AI era. Microsoft remains OpenAI’s primary cloud partner, and OpenAI products are still expected to ship first on Azure unless Microsoft cannot or chooses not to support the necessary capabilities. But OpenAI can now serve products across any cloud provider.

That is a major strategic shift. Microsoft’s license to OpenAI intellectual property for models and products continues through 2032, but it is now non-exclusive. Microsoft will no longer pay a revenue share to OpenAI, while OpenAI will continue paying Microsoft a revenue share through 2030, at the same percentage but subject to a cap. Microsoft also remains a major shareholder.

The old arrangement made Azure feel like the privileged gateway to the industry’s most famous AI lab. The new arrangement makes Azure something subtler and arguably more defensible: the first-choice infrastructure partner in a world where OpenAI needs more compute than any single cloud can comfortably promise forever. Exclusivity mattered when access was the bottleneck. Capacity matters when demand is.

This is not a retreat so much as a normalization. OpenAI wants to distribute broadly, court customers wherever they already spend, and avoid being constrained by one vendor’s data-center buildout. Microsoft wants OpenAI economics without being solely defined by OpenAI dependency. Both sides are acting like companies that believe AI demand will be too large, too capital-intensive, and too politically scrutinized for a single exclusive relationship to carry the whole market.

The Moat Moves From Model Access to Metal

For the first wave of generative AI, Microsoft’s advantage was easy to describe: it had OpenAI. That was true enough to move markets, sell Copilot, and make Azure OpenAI Service a boardroom phrase. But it was always too simple.

The next advantage is messier and more physical. It is the ability to build data centers fast enough, secure enough power, absorb GPU supply shocks, design inference infrastructure, run regulated workloads, and offer customers contractual confidence that the system will still be there when their pilot becomes a production dependency. This is where Microsoft’s AI spending starts looking less like speculative excess and more like the cloud equivalent of railroad expansion.

The company has been explicit that AI demand is driving capital and talent investment. It has also warned that cloud and AI services bring execution and competitive risks. That duality is the whole story: Microsoft is spending because it sees demand, but the spending itself creates a new class of risk that only the largest platforms can tolerate.

For smaller cloud providers, this is brutal. They can rent GPUs, partner, specialize, or differentiate around geography and service quality, but they cannot easily replicate the combination of Azure’s global reach, Microsoft 365 distribution, enterprise account control, and balance sheet. AI has not made cloud infrastructure more democratic. It has made the cloud’s scale economics more visible.

AWS and Google Get an Opening, But Not a Free Pass

The amended OpenAI deal gives Amazon Web Services, Google Cloud, Oracle, and others a clearer path to carry OpenAI workloads or offer OpenAI products to customers already committed to their platforms. That matters. Many enterprises are multi-cloud in theory and deeply attached to one primary cloud in practice. If OpenAI models appear more directly across those environments, procurement friction falls.

Amazon’s opportunity is obvious. AWS remains the largest cloud platform by many measures, with deep enterprise relationships and its own AI stack through Bedrock, Trainium, Inferentia, and partnerships with model providers. If OpenAI becomes easier to buy on AWS, Microsoft loses some of the automatic pull-through that came from being the exclusive or default OpenAI cloud path.

Google has a different opening. It has frontier-model credibility through Gemini, custom silicon through TPUs, and a long history of AI research. A less exclusive OpenAI ecosystem could strengthen Google Cloud’s argument that customers should not bet on a single model family. In that world, the platform that orchestrates multiple models safely may be more valuable than the platform with one marquee relationship.

But neither AWS nor Google gets to skip the hard part. Serving frontier AI at enterprise scale is not just a marketplace listing. It requires enormous infrastructure commitments, high utilization discipline, and the ability to keep inference economics from eating margins. The same opening that weakens Microsoft’s exclusivity also raises the bar for everyone else.

Microsoft Is Quietly De-Risking Its OpenAI Dependence

The conventional reading of the amended deal is that Microsoft gave something up. It did. OpenAI can now reach customers across other clouds, and Microsoft’s license is no longer exclusive. That reduces the sense that Azure is the one unavoidable commercial route into OpenAI’s technology.

But Microsoft also gained clarity. It keeps model and product IP rights through 2032, stops paying revenue share to OpenAI, continues receiving OpenAI revenue share through 2030, and remains financially tied to OpenAI’s upside. In other words, Microsoft is exchanging some control for a cleaner economic structure and more strategic flexibility.

That matters because Microsoft has already been signaling that Copilot cannot be a single-model religion. Its enterprise AI messaging increasingly emphasizes model diversity, orchestration, governance, and trust. The company has been adding Anthropic, open-weight models, and other model options across parts of its stack, positioning Copilot and Microsoft Foundry less as wrappers around one lab and more as enterprise control planes for AI work.

This is a mature-cloud move. Microsoft does not want to be merely the reseller of someone else’s intelligence. It wants to be the operating environment where intelligence is procured, secured, observed, governed, and billed. OpenAI remains critical, but Microsoft’s long-term margin story depends on owning the enterprise layer above and the infrastructure layer below.

Capital Expenditure Is the New Product Roadmap

For WindowsForum readers, the most interesting part of this shift may be how un-software-like the software industry has become. The defining Microsoft product roadmap now includes data centers, power agreements, liquid cooling, accelerators, and network fabrics. The company’s strategic vocabulary has moved from features and licenses to gigawatts and inference-heavy workloads.

That does not mean the application layer is irrelevant. Microsoft 365 Copilot, GitHub Copilot, Security Copilot, Dynamics, Power Platform, and Windows-adjacent AI features still matter enormously. But their economics increasingly depend on whether Microsoft can deliver the back-end capacity cheaply and reliably enough to make AI features feel normal rather than exotic.

This is where the market’s worry about cash flow collides with the market’s appetite for growth. AI infrastructure is expensive before it is efficient. Servers must be bought, data centers built, power secured, and depreciation absorbed. If demand disappoints, the spending looks reckless. If demand keeps growing, the same spending becomes the reason competitors cannot catch up.

Microsoft’s quarter strengthens the second case. Azure’s growth implies that customers are not waiting for perfect clarity before buying AI-related cloud services. They are moving now, often because their competitors are moving now. The risk for Microsoft is not that AI has no demand. The risk is that demand is so uneven, power-hungry, and costly to serve that margins become harder to defend.

Enterprise Buyers Will Care Less About the Logo on the Model

The Finimize framing gets the central point right: AI partnerships are becoming less exclusive and more interchangeable. That does not mean models are commodities in a technical sense. Frontier models still differ in reasoning, coding, multimodal behavior, cost, latency, context handling, safety policies, and tool use. But for enterprise buyers, the model is only one component in a much larger risk equation.

A CIO does not buy a model the way a hobbyist chooses a chatbot. The enterprise buyer has to think about identity, permissions, audit logs, data leakage, retention, regulatory exposure, procurement terms, support, uptime, and integration with existing systems. If several clouds can offer access to strong models, the differentiator becomes the system around the model.

That is Microsoft’s home field. Its pitch is not merely that Azure can run OpenAI models. It is that Azure can run AI inside an enterprise stack already tied to Entra ID, Microsoft 365, Defender, Purview, Fabric, GitHub, and Windows endpoints. The more AI becomes a production workflow rather than a novelty, the more those boring integration points matter.

This also explains why Microsoft can tolerate a less exclusive OpenAI relationship. If OpenAI’s models are everywhere, Microsoft’s job is to make them more useful, governable, and monetizable inside Microsoft-shaped enterprises. The fight shifts from “who has the model?” to “who controls the workflow?”

The Price War Is Coming, But It Will Not Be Simple

When the same AI tools appear across multiple clouds, price pressure usually follows. Customers will compare inference costs, committed-use discounts, latency tiers, and bundled Copilot-style pricing. Procurement teams will ask why a model call should cost materially more on one cloud than another if the underlying capability looks similar.

Yet AI pricing is unlikely to collapse in a clean, linear way. The costs are too variable. Reasoning models can consume far more compute than simpler completions. Agents that call tools, search documents, generate code, and run multi-step workflows have unpredictable usage patterns. Enterprise-grade guarantees are expensive. Data residency and compliance add complexity. The cheapest token is not always the cheapest business outcome.
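One way to make the "cheapest token" point concrete is to compare options on cost per successful task rather than on list price per token. The Python sketch below does that under loud assumptions. Every price, token count, and success rate is a made-up placeholder, not vendor data. The `ModelOption` and `cost_per_successful_task` names are hypothetical, and retries are treated as independent attempts.

```python
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str
    price_per_million_tokens: float  # USD, hypothetical placeholder
    tokens_per_attempt: int          # prompt + output + any reasoning tokens
    success_rate: float              # share of attempts that need no rework


def cost_per_successful_task(option: ModelOption) -> float:
    """Expected spend to get one usable result, assuming independent retries."""
    cost_per_attempt = (
        option.price_per_million_tokens * option.tokens_per_attempt / 1_000_000
    )
    # On average, 1 / success_rate attempts are needed per usable result.
    return cost_per_attempt / option.success_rate


# Placeholder inputs chosen only to illustrate the comparison.
budget = ModelOption("budget", price_per_million_tokens=0.5,
                     tokens_per_attempt=2_000, success_rate=0.2)
premium = ModelOption("premium", price_per_million_tokens=2.0,
                      tokens_per_attempt=2_000, success_rate=0.9)

for option in (budget, premium):
    print(f"{option.name}: ${cost_per_successful_task(option):.4f} per successful task")
```

With these placeholder inputs, the option with the pricier token is cheaper per usable result. If the premium model's token count is tripled to mimic a reasoning-heavy workload, the ranking flips, which is why direct price comparisons are hard to make.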

Still, competition will bite. AWS and Google will not treat OpenAI distribution as charity. They will use it to defend workloads, win migrations, and pressure Azure’s AI premium. Microsoft, in turn, will bundle, discount, and integrate AI into broader enterprise agreements in ways that make direct price comparisons difficult.

That is bad news for stand-alone AI vendors that lack infrastructure leverage. If model access gets broader and cloud platforms compete aggressively, thin wrappers around third-party models will be squeezed. The durable businesses will either own differentiated models, own critical workflow data, or own the infrastructure and governance layer that enterprises cannot easily replace.

Windows Is Not the Center of This Story, But It Is Not Outside It

For a Windows audience, Azure’s AI growth can feel distant from the desktop. But Microsoft’s cloud economics increasingly shape what happens on Windows PCs. Copilot in Windows, Recall-like local indexing concepts, on-device NPUs, developer tools, and enterprise endpoint management all sit downstream of Microsoft’s broader AI platform strategy.

The PC is becoming one surface among many for AI work. Some tasks will run locally on neural processing units for privacy, latency, or cost reasons. Others will burst to cloud models because they require frontier-scale reasoning or access to enterprise data. The winning experience will hide that boundary from users while giving admins enough policy control to sleep at night.
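The local-versus-cloud split described above can be pictured as a small routing decision governed by admin policy. The Python sketch below is purely illustrative. `Task`, `AdminPolicy`, `Target`, and `route` are hypothetical names and do not correspond to any Microsoft or Windows API. The rules simply encode the trade-offs named in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL_NPU = "local_npu"
    CLOUD = "cloud"
    BLOCKED = "blocked"


@dataclass(frozen=True)
class Task:
    contains_sensitive_data: bool
    needs_frontier_reasoning: bool
    needs_enterprise_data: bool


@dataclass(frozen=True)
class AdminPolicy:
    allow_cloud: bool = True
    allow_sensitive_data_in_cloud: bool = False


def route(task: Task, policy: AdminPolicy) -> Target:
    """Pick where a task runs; hypothetical rules, not a real product's logic."""
    needs_cloud = task.needs_frontier_reasoning or task.needs_enterprise_data
    if not needs_cloud:
        # Default to on-device for privacy, latency, and cost.
        return Target.LOCAL_NPU
    if not policy.allow_cloud:
        return Target.BLOCKED
    if task.contains_sensitive_data and not policy.allow_sensitive_data_in_cloud:
        return Target.BLOCKED
    return Target.CLOUD


# A summarization task on local files stays on the device.
print(route(Task(False, False, False), AdminPolicy()))
# A task needing enterprise data bursts to the cloud when policy allows it.
print(route(Task(False, False, True), AdminPolicy()))
```

The user only sees a result. The administrator sees the policy object, which is the kind of boundary-hiding with central control the paragraph describes.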

That is why Azure’s capacity buildout matters even to people who mostly care about Windows. Microsoft’s ability to make AI feel native in Windows depends partly on whether the cloud side can handle the heavy lifting. Local AI may reduce some inference costs, but it will not eliminate the need for cloud-scale models, enterprise connectors, and centralized governance.

The same is true for developers. GitHub Copilot, Visual Studio integrations, Azure AI Foundry, and Windows development workflows all become more compelling if Microsoft can offer a coherent path from local coding to cloud deployment to governed production agents. The desktop and the data center are no longer separate Microsoft stories. They are two ends of the same AI pipeline.

Regulators Will Notice the Infrastructure Moat

A less exclusive OpenAI deal may reduce one antitrust pressure point, but it does not make the broader competition questions disappear. If anything, the focus may shift from contractual exclusivity to structural capacity. When only a handful of firms can finance and operate the infrastructure required for frontier AI, regulators will ask whether the market is open in name only.

Microsoft can argue that OpenAI’s ability to serve products across any cloud provider makes the ecosystem more competitive. That is true at the distribution layer. Customers should have more choice if OpenAI tools are not bound as tightly to Azure.

But infrastructure concentration remains a harder problem. AI workloads need scarce chips, massive power commitments, specialized data centers, and global network reliability. The companies best positioned to supply those inputs are the same hyperscalers already dominating cloud computing. Even without exclusive contracts, the market may naturally consolidate around the firms with the deepest pockets.

That is not necessarily illegal, and it is not necessarily bad for customers in the short term. Scale can lower costs and improve reliability. But it does mean the AI economy may inherit the cloud economy’s concentration and intensify it. The rhetoric of open AI will keep colliding with the physics of closed capital.

The Azure Number Rewrites the AI Spending Debate

The strongest argument against Microsoft’s AI spending has been that the company is overbuilding ahead of uncertain demand. That argument is not dead. Capital cycles can overshoot, customers can optimize usage, and a recession could make expensive AI projects easier to postpone. Even Microsoft’s own risk language acknowledges that significant investments may not achieve expected returns.

But a 40% Azure growth rate makes the bearish case harder to state cleanly. This is not a company building empty temples to AI. It is a company adding capacity into visible demand, with commercial remaining performance obligation swelling and cloud revenue continuing to compound at enormous scale. The spending is pressuring cash flow, but it is also feeding the segment investors most want to own.

The more nuanced concern is not whether AI spending pays off at all. It is who captures the payoff. Microsoft may grow revenue while still facing margin pressure from expensive inference, GPU depreciation, energy costs, and competitive pricing. Customers may adopt AI widely while pushing vendors into brutal cost competition. OpenAI may expand distribution while weakening Azure’s unique pull. All of these can be true at once.

That is why this quarter matters. It does not prove that every AI dollar will earn a handsome return. It proves that Microsoft’s AI infrastructure bet has crossed from narrative into measurable sales momentum. In markets, that distinction is everything.

The Cloud War Now Runs Through the Power Substation

The concrete lesson from Microsoft’s week is that AI advantage is moving down the stack. The glamorous layer still gets the demos, but the durable fight is over who can operate the machinery behind those demos at global scale. For IT buyers, investors, and Microsoft’s competitors, the implications are already visible.

- Microsoft’s latest quarter showed that Azure’s AI-driven momentum is translating into real revenue growth, not just executive talking points.

- The amended OpenAI agreement weakens Microsoft’s exclusivity but preserves major strategic benefits, including continued OpenAI revenue share through 2030 and IP rights through 2032.

- OpenAI’s broader cloud distribution gives AWS, Google Cloud, and others a stronger competitive opening, especially with customers that do not want to standardize on Azure.

- Enterprise AI buying will increasingly turn on uptime, security, compliance, integration, and capacity rather than exclusive access to a single frontier model.

- Smaller AI and cloud players face a harsher market as hyperscalers turn infrastructure scale into the defining advantage of the AI era.

Source: Finimize https://finimize.com/content/microsofts-azure-growth-shows-its-ai-spend-is-paying-off/