CData’s recent stretch into the Model Context Protocol (MCP) ecosystem marks a clear pivot: the company that spent years building universal connectors is now selling not just access to data, but the context that makes that data usable by agents and AI assistants in production. The launch of Connect AI and the rapid string of partnerships and hires that followed position CData as a player aiming to solve the single biggest bottleneck in enterprise AI adoption—governed, live access to the systems that actually run the business.

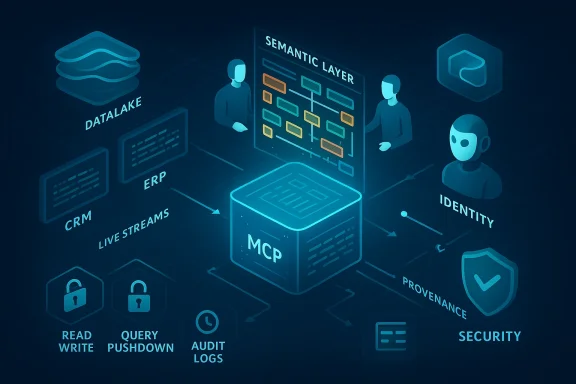

Background: from connectors to context

For more than a decade CData built its reputation on a simple idea: make it easy for applications and analysts to reach data where it lives. That legacy (broad, maintained connectors to CRMs, ERPs, databases, and cloud platforms) remains the foundation of the company’s value. What changed in 2025 is not the existence of those connectors, but how CData packages and exposes them to AI systems.

Connect AI reframes connectivity as an MCP-native service: a managed Model Context Protocol platform that presents live, semantic-aware endpoints to agent frameworks. Instead of copying data into data lakes or building brittle point-to-point integrations for each assistant, Connect AI lets agents query business systems in-place while inheriting the source system’s permissions, metadata, and schema relationships.

That shift matters because enterprise AI failures rarely come from poor models. They come from missing, stale, or poorly governed data. Without reliable, auditable access to the right records—at the right permission level—agents can’t safely reason about customers, contracts, invoices, or inventory. CData’s bet is that solving the connectivity and context problem removes one of the largest practical barriers between pilots and production.

What CData announced and why the timing matters

In late September 2025 CData unveiled Connect AI, a managed MCP platform that the company describes as enabling AI assistants, agent orchestration platforms, and embedded AI apps to access more than 300 (now promoted as 350+) enterprise systems with semantic intent and governed, in-place access. Within weeks the company extended availability to two of the platforms enterprises are actively using to build agents: Databricks’ Marketplace for Agent Bricks, and Microsoft’s Copilot Studio / Agent 365 ecosystem. A strategic product hire, Ken Yagen, formerly of MuleSoft and other enterprise product roles, arrived to lead product as the company pushes into the AI-connectivity category.

Why does this matter now? The enterprise market spent heavily on pilots in 2024–2025, but a growing body of industry research shows most pilots don’t translate into measurable production value. One recurring theme across analyst commentary and independent research is that governed access to operational systems, not model quality, is the primary blocker. Connect AI targets that choke point by offering:

- Live, in-place access (no wholesale extraction)

- Preservation of semantics and relationships (schema-aware context)

- Identity-first security (inherit source system RBAC)

- A managed MCP server that exposes a uniform semantic API to many agent frameworks

Inside the architecture: Model Context Protocol and the semantic layer

Two technical ideas sit at the center of this approach: the Model Context Protocol (MCP) and a pre-mapped semantic layer.

- MCP is an open protocol that standardizes how LLMs and agents connect to external tools, files, databases, and service endpoints. Adopted by major AI platforms and increasingly treated as the “adapter” layer for agentic workflows, MCP provides a transport and message model that lets an AI system request context, run queries, read files, and call actions through a consistent interface.

- The semantic layer is what makes CData’s connectors different in practice. Rather than surface raw endpoints and require an LLM to discover tables, fields, and relationships at runtime, CData pre-maps system schemas into a semantic model that surfaces table names, field types, relationships, and business logic to the agent up-front. That reduces exploratory token consumption, speeds first-try correctness, and provides predictable mappings between user intent and the underlying data shape.

- Read and write: agents can query and—when permitted—perform writes and actions in source systems.

- Identity passthrough: queries are executed under the authenticated user or agent identity, inheriting row- and field-level permissions where supported.

- Query pushdown and federation: filtering, joins and aggregation are pushed to source systems where feasible, limiting data shipped to the model and reducing token and compute cost.

- Audit and governance: every request and result is logged with attribution for audit trails and SIEM export.
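To make the protocol side concrete, here is a minimal sketch of the request an agent-side client might send to an MCP server. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method with a tool `name` and `arguments`. The tool name `query_data`, its `sql` argument, and the table and column names are assumptions made for illustration; they are not taken from Connect AI's actual tool surface.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC expects each request to carry an id.
_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request that invokes an MCP tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and schema names, used only to show the message shape.
request = mcp_tool_call(
    "query_data",
    {"sql": "SELECT Name, Amount FROM Opportunity WHERE StageName = 'Negotiation'"},
)

# Serialized form that would travel over the MCP transport.
wire = json.dumps(request)
print(wire)
```

The point of a pre-mapped semantic layer is that the agent would already know names like `Opportunity` and `StageName` before composing such a call, rather than spending extra round trips discovering them.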

The ecosystem plays: Databricks, Microsoft, and the agent wave

CData did not launch Connect AI in isolation. Two platform integrations are especially consequential.

- Databricks: Publishing Connect AI on the Databricks Marketplace and positioning it as a launch partner for Agent Bricks ties CData directly into the workflows organizations already use for data engineering, analytics, and agent orchestration. The technical value is obvious: Databricks hosts historical, large-scale datasets; Connect AI brings operational system context to agents built in that environment so an Agent Bricks instance can reason across both sources in real time.

- Microsoft: Integration into Copilot Studio and Agent 365 embeds managed MCP connectivity into Microsoft’s agent and copilot tooling. For enterprises using Microsoft 365, Azure, and the Copilot ecosystem, this reduces friction for IT teams that want controlled agent connectivity without building bespoke adapters.

Practical use cases: what this enables today

Connect AI’s capabilities translate into immediate, concrete workflows where agents can add measurable business value.

- Sales enablement: Agents can answer “Which deals closing this quarter have overdue invoices and what’s the exposure by rep?” by joining CRM opportunity records with ERP outstanding invoice data in real time, without moving batches into a separate analytics store.

- Finance and FP&A: Copilot-style assistants can generate up-to-the-minute cash forecasts by combining bank balances, unapplied payments, and pending orders across systems, while enforcing financial roles and auditability.

- Support automation: Ticket triage agents can pull customer account context, recent order history, and known product incidents to recommend responses or open corrective work items in a single, auditable flow.

- Order-to-cash orchestration: Agents can run cross-system reconciliations and, where policy permits, perform actions like creating credit memos or triggering collections workflows based on rules and human approvals.
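The sales-enablement question above reduces to a join with a filter and an aggregate. The sketch below uses an in-memory SQLite database as a stand-in for the CRM and ERP; every table, column, and row is invented for illustration, and a federated layer would instead push the equivalent filters down to the live systems.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE crm_opportunity (id INTEGER, account TEXT, rep TEXT, close_date TEXT);
CREATE TABLE erp_invoice (account TEXT, amount_due REAL, due_date TEXT);
INSERT INTO crm_opportunity VALUES
  (1, 'Acme',    'Dana', '2025-11-15'),
  (2, 'Globex',  'Dana', '2025-12-01'),
  (3, 'Initech', 'Lee',  '2025-10-20');
INSERT INTO erp_invoice VALUES
  ('Acme',    1200.0, '2025-09-30'),
  ('Acme',     300.0, '2025-12-31'),
  ('Initech',  500.0, '2025-08-15');
""")


def overdue_exposure_by_rep(conn, quarter_start, quarter_end, as_of):
    """Sum overdue invoice amounts for accounts with deals closing in the quarter.

    Dates are ISO-8601 strings, so string comparison orders them correctly.
    Assumes one open opportunity per account; otherwise invoices would be
    counted once per matching opportunity.
    """
    sql = """
    SELECT o.rep, SUM(i.amount_due) AS exposure
    FROM crm_opportunity AS o
    JOIN erp_invoice AS i ON i.account = o.account
    WHERE o.close_date BETWEEN ? AND ?
      AND i.due_date < ?
    GROUP BY o.rep
    ORDER BY o.rep
    """
    return conn.execute(sql, (quarter_start, quarter_end, as_of)).fetchall()


rows = overdue_exposure_by_rep(conn, "2025-10-01", "2025-12-31", "2025-10-15")
print(rows)  # [('Dana', 1200.0), ('Lee', 500.0)]
```

Only the two aggregated rows would need to reach the model, which is the practical payoff of pushing filtering and aggregation to the source rather than shipping raw records into the prompt.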

Why this is a credible response to the “pilot purgatory” problem

Enterprises have a long history of treating data access as an engineering problem solved by ETL and integration teams. That architecture produced massive data warehouses, great for retrospective analytics but poor for real-time operations and agent autonomy. The agent era needs three things that traditional integration stacks don’t prioritize together: semantic understanding, live access, and governance.

CData’s proposition addresses all three in one managed surface:

- Semantic understanding reduces the brittle “lookup, map, guess” loop that often causes models to hallucinate field names or relationships.

- Live access avoids dangerous stale-state decisions.

- Governance and identity-first access make it possible to trust an agent with queries against production systems while providing audit trails.

Critical analysis: strengths and practical limitations

CData’s Connect AI is an elegant answer to a real operational bottleneck, but the proposition is not a silver bullet. Below I break down both the notable strengths and the important risks enterprises must weigh.

Strengths

- Operational fit: connecting agents directly to source systems solves the “freshness” problem that often kills business workflows that require current state.

- Semantic mapping: pre-mapped, source-level semantics reduces trial-and-error on queries and lowers both token consumption and time-to-first-correct-answer.

- Rapid platform adoption: integrations with Databricks and Microsoft place CData where teams are already designing agent workflows, reducing integration friction and enabling faster pilots.

- Managed MCP: handing the operations of MCP servers to a vendor can reduce internal operational burden, especially for companies without a mature platform engineering function.

Risks and limitations

- MCP server security: an MCP server becomes a high-value target. It typically stores OAuth tokens and service credentials that allow access across many systems. A compromised MCP server could enable broad lateral movement and data exfiltration unless it is properly isolated, its credentials are rotated, and its activity is audited.

- Inherited complexity: “Identity passthrough” depends on source-system support and correct configuration. Row-level security in one system might not map perfectly to another; subtle mismatches in permission models can expose unintended data.

- Write-safety and action governance: allowing agents to act (write/delete/execute) creates real operational risks. A malformed prompt or unexpected model output could trigger a cascading set of actions unless safeguards, approvals, and strict downscoping are in place.

- Semantic correctness and corner cases: pre-mapping schemas is powerful—but enterprise systems are messy. Custom fields, cross-system transforms, and business rules can diverge. Incorrect semantic mapping will produce incorrect answers, and models can be confidently wrong.

- Vendor lock-in and portability: by standardizing on a managed MCP provider, enterprises gain speed at the cost of dependency on that provider. Exiting that relationship or migrating connectors could be non-trivial for heavily embedded agent deployments.

- Cost dynamics: pushing query processing back to systems reduces tokens but introduces operational compute and API-call costs. Read/write call volumes and audit logging will create both cloud egress and API rate pressures that must be budgeted.

- Compliance and data residency: managed platforms that cross national borders must prove they respect regional data residency and regulatory constraints. Enterprises with strict compliance regimes will need to validate deployment models (cloud vs private-hosted, isolation, certifications).

Safety controls and a recommended adoption playbook

If you’re evaluating Connect AI or any MCP-based connectivity layer, treat this as infrastructure, precisely the kind that needs discipline. Below is a practical playbook to move from pilot to production safely.

- Start with read-only pilots
  - Validate queries, latency, and semantic mappings without exposing write paths.
  - Confirm that the agent’s responses are accurate under audit, and measure token and API cost.

- Define explicit action policies
  - For any write or action, define a safe action catalogue with allowable operations, parameter constraints, and required approvals.
  - Use an explicit action approval workflow that inserts human-in-the-loop gating for anything with business or financial impact.

- Implement least-privilege RBAC
  - Map agent identities to service accounts that are narrowly scoped. Do not use broad service-level credentials.
  - Enforce session-level scoping so that each agent instance only gets access to systems it legitimately needs.

- Create an observability and audit stack
  - Log every request, response, and action with full attribution and cryptographic time-stamps.
  - Export to SIEM, maintain immutable logs for forensic analysis, and integrate replay capabilities for troubleshooting agent behavior.

- Canary and staged rollouts
  - Roll out agent capabilities to a small, monitored cohort first. Validate error modes and the operator response playbook.
  - Implement rate limits and circuit breakers that can be toggled from a central control plane.

- Test adversarial and failure modes
  - Simulate incomplete credentials, expired tokens, network partitions, and model hallucination scenarios.
  - Put rollback and remediation plans in place for each class of failure.

- Model evaluation and calibration
  - Continuously evaluate model outputs for correctness, bias, and safety using domain-specific test suites.
  - Route uncertain queries to curated RAG (retrieval-augmented generation) fallbacks or human review rather than letting agents act autonomously.

- Contract and SLA scrutiny
  - For managed MCP services, insist on granular SLAs for availability, incident response, and data handling.
  - Confirm certification evidence—SOC2, ISO, GDPR compliance statements—and request penetration test reports where available.

- Cost governance
  - Track both token/AI compute costs and the API/ingress costs of source systems. Set budgets and throttles to avoid runaway invoices.

- Exit and portability planning
  - Ensure you can export connector definitions, semantic mappings, and audit logs in a usable format if you need to move to another provider.
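The "safe action catalogue" step in the playbook can be sketched as a deny-by-default lookup with parameter limits and a human-approval gate. This is an illustrative pattern only: the action names, limits, and the `authorize` helper are hypothetical and do not describe how Connect AI or any particular MCP server enforces policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ActionPolicy:
    max_amount: float        # parameter constraint on the action
    requires_approval: bool  # human-in-the-loop gate


# Hypothetical catalogue: any action not listed here is denied by default.
CATALOGUE = {
    "create_credit_memo": ActionPolicy(max_amount=500.0, requires_approval=True),
    "add_ticket_note": ActionPolicy(max_amount=0.0, requires_approval=False),
}


def authorize(action: str, amount: float = 0.0,
              approved_by: Optional[str] = None) -> str:
    """Return a decision string for an action requested by an agent."""
    policy = CATALOGUE.get(action)
    if policy is None:
        return "deny: action not in catalogue"
    if amount > policy.max_amount:
        return "deny: amount exceeds limit"
    if policy.requires_approval and approved_by is None:
        return "pending: human approval required"
    return "allow"


print(authorize("delete_customer"))
print(authorize("create_credit_memo", 200.0))
print(authorize("create_credit_memo", 200.0, approved_by="controller"))
```

Keeping the gate outside the model means a malformed prompt can, at worst, produce a denied or pending request rather than an executed write.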

Market implications: competition, differentiation, and where this could lead

CData’s announcement places it at the intersection of three market forces: an open standard (MCP), platform incumbents (Microsoft, Databricks), and the rising demand for agentic automation. If CData can deliver a managed, secure, and performant MCP layer, it becomes a plausible candidate for the “data control plane” that enterprises will rely on for agentic automation.

That said, competition is intense. Big cloud vendors and platform incumbents have two natural responses:

- Build native connectors into their own agent frameworks (deep platform integration), or

- Partner with neutral connectivity providers but offer incentives to keep customers inside their control planes.

Finally, the trend of “semantic connectivity” can change how enterprise applications are built. If agents can reliably access system-level semantics, product developers may stop shipping heavy data export features and instead rely on controlled MCP interfaces. That changes integration economics and reduces the friction for new agent-enabled features across verticals.

Uncertainties to watch and claims to scrutinize

CData’s marketing asserts first-of-kind leadership and dramatic efficiency gains (reduced token usage, faster time-to-value). Those are credible in principle but should be validated empirically in your environment. A few specific items to confirm before committing:

- “First managed MCP platform”: marketing language like “first” is often competitive positioning. Evaluate functional fit and operational maturity rather than rely on uniqueness claims.

- Connector coverage and depth: a vendor can support a connector superficially (basic read) or deeply (write paths, custom objects, incremental syncs, advanced filters). Audit coverage for your critical systems and object types.

- Identity passthrough fidelity: confirm whether each source system supports row-level and field-level RBAC and how those permissions map onto the agent’s identity model.

- Latency and reliability under production load: pushing queries to many systems concurrently can create tail-latency issues that degrade an agent’s responsiveness.

- Security controls around credentials and secrets: confirm where tokens live, rotation policies, and encryption-at-rest / in-transit details. Require third‑party pen tests where possible.
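The tail-latency concern in the list above follows from simple arithmetic: when one agent request fans out to several systems, the chance that at least one call lands in its slow tail grows with the number of systems. The sketch below assumes the calls are independent and each has a 1% chance of being slow; both are simplifying assumptions for illustration, not measurements of any product.

```python
def p_any_slow(n_sources: int, p_slow: float = 0.01) -> float:
    """Probability that at least one of n independent calls is slow.

    Each call is assumed to exceed its latency threshold with probability
    p_slow, for example 0.01 when the threshold is the per-source p99.
    """
    return 1.0 - (1.0 - p_slow) ** n_sources


for n in (1, 5, 10, 25):
    print(n, round(p_any_slow(n), 3))
```

Under these assumptions, a request touching ten systems hits at least one p99-slow call about 9.6% of the time, which is why load tests should measure end-to-end agent latency rather than per-connector averages.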

Conclusion: a pragmatic bet on a tougher problem

CData’s move to “teach agents where the data lives” is not flashy hype; it’s an engineering-centric response to a blunt market problem. Enterprises can no longer treat AI agents as toys that sit on top of a CSV dump. The next wave of value comes from agents that understand the structure, relationships, and governance of the systems that run the business.

Connect AI bundles three things teams usually must implement themselves: a protocol-native server (MCP), a semantic mapping layer, and a managed operations model. That combination can materially shorten the path from pilot to production, provided organizations take the obvious but non-trivial steps to harden security, design safe action policies, and build observability into agent lifecycles.

The devil is in the operational details. MCP servers centralize power and therefore risk; semantic mapping centralizes interpretation and therefore requires continuous validation; and agentic actions centralize workflow and therefore require robust approval and rollback mechanisms. For enterprises that treat these as a product-program problem—defined SLAs, staged rollouts, thorough testing—the CData approach could be the connective tissue that finally lets agents move from prototypes into the fabric of business processes.

For those still in pilot purgatory, the lesson is practical: solving model choice is necessary but not sufficient. The production problem is a data and governance problem, and infrastructure that treats connectivity as a managed, semantic, auditable layer is worth evaluating. CData’s bet is sensible; the proof will be whether it reduces implementation time, operational surprises, and security incidents once agents become part of day-to-day business operations.

Source: The Software Report, “CData Is Teaching AI Agents Where the Data Lives”