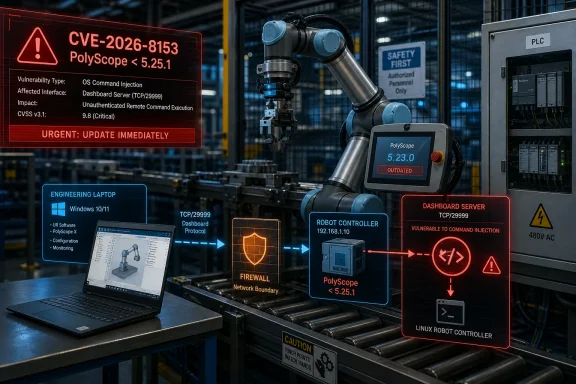

CISA published an industrial control systems advisory on May 14, 2026, warning that Universal Robots PolyScope 5 versions before 5.25.1 contain a critical command-injection flaw that can let an unauthenticated network attacker execute code on a robot controller. The vulnerability, tracked as CVE-2026-8153 by the vendor, lands with the kind of 9.8 CVSS score that should make factory IT teams stop treating cobots as peripheral equipment. The bug is not merely another entry in the industrial vulnerability ledger; it is a reminder that the modern robot is a networked Linux computer with motors attached. When that computer sits on the wrong side of a firewall, “collaborative” can start to mean collaborative with the attacker.

The Robot Controller Has Become the Soft Underbelly of the Factory

Universal Robots’ PolyScope 5 is the software environment that operators use to program and manage the company’s UR-series and e-Series collaborative robots. In practical terms, it is the interface between human intent and industrial motion: move here, grip there, wait for this signal, talk to that PLC, resume the cell. That makes it familiar to automation engineers, but it also makes it dangerous to underestimate.

The CISA advisory says the affected versions are PolyScope 5 releases earlier than 5.25.1, and Universal Robots’ own notice identifies the vulnerable component as the Dashboard Server interface. That matters because the Dashboard Server is not an ornamental feature. It is designed to let external systems communicate with the robot controller over the network, enabling automation cells, supervisory systems, and custom integrations to issue commands.

That kind of interface is exactly where industrial convenience and cyber risk collide. A robot that can be started, stopped, queried, or managed from another machine is easier to integrate into a production line. It is also a more interesting target when the exposed service mishandles input badly enough to reach the operating system.

The vulnerability class is CWE-78: improper neutralization of special elements used in an OS command, better known as OS command injection. In plain English, the software accepts something from the network that it should treat as data, but instead allows it to become part of a command executed by the underlying system. In an office application, that is bad. In a robot controller, it is bad with torque.
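To make the CWE-78 pattern concrete, here is a minimal Python sketch. It is not Universal Robots’ code and says nothing about how the Dashboard Server is actually implemented; the function names and the `echo loading` command are hypothetical stand-ins, and the example assumes a POSIX shell. It only shows how network-supplied text behaves when pasted into a shell command line versus when it is passed as a single argument.

```python
import subprocess

def run_unsafe(program_name: str) -> str:
    # Vulnerable pattern: the input is interpolated into a shell command line,
    # so shell metacharacters such as ';' start a second command.
    result = subprocess.run(
        f"echo loading {program_name}",
        shell=True, capture_output=True, text=True,
    )
    return result.stdout

def run_safe(program_name: str) -> str:
    # Safer pattern: no shell is involved and the input is one argv element,
    # so it stays data no matter what characters it contains.
    result = subprocess.run(
        ["echo", "loading", program_name],
        capture_output=True, text=True,
    )
    return result.stdout

if __name__ == "__main__":
    payload = "demo.urp; echo INJECTED"  # hypothetical attacker-supplied value
    print(run_unsafe(payload))  # the shell runs two commands
    print(run_safe(payload))    # one command, payload printed literally
```

With the unsafe version, the text after the semicolon runs as its own command. With the safe version, the same text is just an odd-looking program name.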

A 9.8 Score Is Not Theater When Authentication Is Missing

CVSS scores can be abused as marketing confetti, but this one earns attention. The advisory describes exploitation as potentially allowing authentication bypass and code execution. Universal Robots’ security notice describes the issue as allowing an unauthenticated attacker with network access to the Dashboard Server port to craft commands that execute on the robot’s operating system.

That combination is the red flag: network reachability, no credentials, low complexity, and code execution. A vulnerability does not need a Hollywood exploit chain when the attacker’s starting position is “can connect to the service” and the ending position is “can run commands on the controller.” The leap from vulnerability to incident is then governed less by cleverness than by network architecture.
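For readers who want to see where a 9.8 comes from, the sketch below works through the CVSS v3.1 base-score arithmetic. The vector is an assumption: the article does not quote one, so this uses AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H, which matches the properties described (network reachable, low complexity, no privileges, no user interaction, high impact). The metric weights and rounding rule are those published in the CVSS v3.1 specification.

```python
import math

# Metric weights for the ASSUMED vector
# AV:N / AC:L / PR:N / UI:N / S:U / C:H / I:H / A:H
AV, AC, PR, UI = 0.85, 0.77, 0.85, 0.85
C = I = A = 0.56

def roundup(value: float) -> float:
    """Round up to one decimal place, per the CVSS v3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0

def base_score() -> float:
    iss = 1 - (1 - C) * (1 - I) * (1 - A)      # impact sub-score
    impact = 6.42 * iss                         # formula for scope unchanged
    exploitability = 8.22 * AV * AC * PR * UI
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))

if __name__ == "__main__":
    print(base_score())  # 9.8
```

The raw sum is roughly 5.87 for impact plus 3.89 for exploitability, and the specification's round-up rule takes 9.76 to 9.8.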

This is why CISA’s recommendations begin with exposure reduction rather than clever detection rules. Minimize network exposure. Put control-system devices behind firewalls. Isolate control networks from business networks. Use VPNs for remote access, but do not pretend that a VPN magically secures the devices behind it.

The most uncomfortable part of the advisory is that none of this advice is new. Industrial security teams have been told for years that flat networks are dangerous, remote access paths need discipline, and controllers should not be reachable from arbitrary hosts. Yet advisories like this keep landing because the operational pressure to connect things often outruns the security work needed to connect them safely.

The Dashboard Server Is the Convenience Layer Attackers Love

The Dashboard Server exists for understandable reasons. Production systems need ways to control robots programmatically, and external orchestration is a normal part of industrial automation. A robot that cannot communicate with the rest of the line is less useful than one that can be monitored, paused, resumed, and coordinated.

But management and automation interfaces are also the first places defenders should inventory when a critical advisory appears. They tend to be trusted because they live inside the plant network. They tend to be under-monitored because they are not conventional Windows endpoints. They tend to be reachable by engineering workstations, HMIs, line controllers, and sometimes vendor-maintenance paths.

That is a toxic mix when the interface has a command-injection flaw. The attacker does not need to defeat a glossy user interface or trick an operator into clicking through a warning. The useful attack surface is the service designed to accept commands.

Universal Robots’ mitigation advice is correspondingly direct: upgrade to PolyScope 5.25.1 or newer, disable the Dashboard Server if it is not required, and restrict access to trusted hosts or subnets where possible. Those are not exotic mitigations. They are the industrial equivalent of closing the door that should never have been left open to the whole building.
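Restricting access to trusted hosts or subnets is enforced in firewalls and switch ACLs, not in a script, but the intended policy can still be written down and checked. The following is a minimal sketch of an audit helper that flags connection sources falling outside an allowlist. Every address and name in it is hypothetical and chosen only for illustration.

```python
import ipaddress

# HYPOTHETICAL allowlist: one supervisory host and one small automation subnet.
TRUSTED_SOURCES = [
    ipaddress.ip_network("10.20.30.5/32"),
    ipaddress.ip_network("10.20.40.0/28"),
]

def is_trusted(source_ip: str) -> bool:
    """Return True if source_ip falls inside any allowlisted network."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in TRUSTED_SOURCES)

def untrusted_sources(observed_ips: list[str]) -> list[str]:
    """Return the sorted, de-duplicated sources that are not on the allowlist."""
    return sorted({ip for ip in observed_ips if not is_trusted(ip)})

if __name__ == "__main__":
    # Example input: source addresses pulled from firewall or flow logs.
    seen = ["10.20.30.5", "10.20.40.14", "10.20.40.16", "192.168.1.10"]
    print(untrusted_sources(seen))
```

Feeding it the source addresses seen talking to a controller gives a quick list of hosts that should not have had a path in the first place.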

CISA’s Advisory Is Really About Segmentation

The line that deserves the most attention is not the CVSS score. It is CISA’s recommendation to isolate control-system networks and remote devices from business networks. That sentence is the boring center of almost every serious OT security conversation, and it is still where many incidents either become contained events or cross-site disasters.

In a well-segmented environment, this vulnerability is still serious, but exploitation requires access to a narrower zone. An attacker must first reach the robot controller’s relevant network service from an authorized system or subnet. That does not eliminate risk, but it gives defenders places to enforce policy, log connections, and detect abnormal behavior.

In a poorly segmented environment, the robot controller becomes just another host on a broad internal network. A compromised laptop, vendor jump box, engineering workstation, or misconfigured remote access path can become the bridge to production equipment. That is how a vulnerability in a component that was never meant to be internet-facing becomes part of a much larger enterprise risk.

The word collaborative can also lull organizations into underestimating the safety implications. Cobots are designed to work around people under defined safety conditions, but software compromise is not a normal operating condition. A security incident does not need to produce dramatic robot motion to hurt production. It can alter programs, stop cells, corrupt configurations, interfere with availability, or force a plant into a cautious shutdown while engineers validate integrity.

The Patch Is Simple; the Maintenance Window Is Not

Universal Robots has released PolyScope 5.25.1 to address the issue. That is the cleanest fix, and it should be the default plan for affected deployments. But in industrial environments, “just patch it” is often the beginning of the work rather than the end.

Robot software updates can require testing against URCaps, custom scripts, PLC logic, safety configurations, fieldbus behavior, and production recipes. A robot may be part of a larger cell whose validation depends on specific software behavior. Downtime may be expensive, and the people qualified to test the cell may not be the same people reading the security advisory.

That complexity is real, but it is not an excuse for indefinite delay. A critical unauthenticated remote code execution flaw deserves a change window with urgency, not a note in a quarterly maintenance backlog. Where immediate updating is not possible, compensating controls must be concrete: disable unused services, restrict access, firewall the controller, and verify that remote support paths do not accidentally bypass segmentation.

The danger is the half-measure: an organization acknowledges the advisory, records an exception, and assumes that “inside the network” means “safe enough.” That assumption has aged badly in IT. It is aging even worse in OT, where the assets are harder to patch and the consequences of disruption are more physical.

No Known Exploitation Is Good News, Not a Strategy

CISA says it has not received reports of known public exploitation specifically targeting these vulnerabilities. That is welcome. It is also not a reason to relax.

Industrial vulnerabilities often have a strange public life. Some are patched quietly and never become fashionable targets. Others sit in search engines, asset inventories, and exploit notebooks until a ransomware crew, botnet operator, or opportunistic intruder finds a path into an exposed environment. The absence of known exploitation on publication day says something about current reporting. It does not say much about future intent.

The vulnerability was reported by Vera Mens of Claroty Team82, a research group that has spent years focusing on cyber-physical systems and industrial control environments. That kind of coordinated disclosure is the best version of the process: researcher finds issue, vendor produces fix, CISA amplifies the risk, operators patch or mitigate. The system worked, at least on paper.

The operational question is whether asset owners can complete their half of the loop. Advisories do not secure production lines. Inventory does. Change control does. Network enforcement does. Someone has to know where PolyScope 5 is running, which version it is running, whether Dashboard Server is enabled, and which machines can reach it.
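That inventory question can be sketched as a small triage routine. This is an illustration under stated assumptions, not a vendor tool: the `Controller` record, its field names, and the sample data are hypothetical, and the version strings are assumed to follow a dotted `major.minor.patch` form. The only facts taken from the advisory are the product line (PolyScope 5) and the fixed version (5.25.1).

```python
from dataclasses import dataclass

FIXED_VERSION = (5, 25, 1)  # first fixed PolyScope 5 release per the advisory

@dataclass
class Controller:            # hypothetical inventory record
    name: str
    polyscope_version: str   # assumed form: "major.minor.patch"
    dashboard_enabled: bool

def parse_version(text: str) -> tuple[int, ...]:
    # Compare numerically; as plain strings, "5.9.4" would sort after "5.25.1".
    return tuple(int(part) for part in text.split(".")[:3])

def needs_update(c: Controller) -> bool:
    version = parse_version(c.polyscope_version)
    return version[0] == 5 and version < FIXED_VERSION

def triage(controllers: list[Controller]) -> list[Controller]:
    """Vulnerable controllers, those with Dashboard Server enabled first."""
    vulnerable = [c for c in controllers if needs_update(c)]
    return sorted(vulnerable, key=lambda c: (not c.dashboard_enabled, c.name))

if __name__ == "__main__":
    cells = [
        Controller("cell-a", "5.25.1", True),
        Controller("cell-b", "5.24.0", False),
        Controller("cell-c", "5.9.4", True),
    ]
    for c in triage(cells):
        print(c.name, c.polyscope_version, c.dashboard_enabled)
```

The numeric comparison matters: a naive string comparison would rank 5.9.4 as newer than 5.25.1 and quietly drop an affected controller from the list.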

Windows Shops Still Own Part of This Problem

This is not a Windows vulnerability, but WindowsForum readers should not file it under “not our stack.” In many factories, Windows remains the connective tissue around the robot: engineering laptops, HMIs, jump hosts, file shares, remote access clients, endpoint agents, documentation repositories, and sometimes the systems that push scripts or collect telemetry. A compromised robot controller and a compromised Windows engineering workstation are different assets, but they often live in the same operational story.

That is why defenders should treat this advisory as a prompt to check the surrounding ecosystem, not just the robot pendant. Which Windows machines can reach the robot controller? Which accounts can access the engineering workstation? Are vendor tools installed on general-purpose laptops? Are firewall rules based on actual workflow, or did someone open an entire subnet during commissioning and never close it?

The most realistic attack path may not begin with a criminal scanning the internet for a Universal Robots controller. It may begin with phishing, stolen VPN credentials, a compromised remote support machine, or malware on a contractor laptop. Once inside, the attacker looks for reachable industrial services. A critical unauthenticated command-injection bug then becomes the pivot point that carries an intruder from IT compromise to OT impact.

This is where Microsoft-centric defenders can help. Windows event logging, endpoint detection, privileged access management, network access control, and jump-server discipline all shape whether an attacker can ever reach the vulnerable service. The robot vendor ships the patch, but enterprise IT often controls the roads leading to the robot.

The Advisory Exposes a Cultural Gap in OT Security

The deeper issue is not that one vendor shipped one vulnerable interface. Software has bugs, and industrial software is not exempt from the ordinary humiliations of input handling. The deeper issue is that many industrial environments still treat embedded and cyber-physical systems as if their network exposure is incidental rather than architectural.

Factories were once full of devices that assumed proximity as a security model. If you could talk to the controller, you were probably standing near the controller. That assumption collapsed as plants adopted remote maintenance, data collection, centralized monitoring, cloud dashboards, and vendor support channels. The network became part of the machine.

But the security model has not always caught up. Asset inventories remain incomplete. Remote access paths multiply. Temporary commissioning rules become permanent. Engineering workstations are powerful, trusted, and difficult to lock down. Production pressure discourages change, while attackers are perfectly happy to exploit old assumptions.

PolyScope 5’s issue is therefore less an anomaly than a case study. The vulnerable component is a service intended to make automation easier. The affected product is deployed globally in critical manufacturing. The fix exists, but deploying it requires operational coordination. The fallback controls are basic, but only work if the network was designed to enforce them.

The Right Response Starts With Reachability

Organizations running Universal Robots equipment should begin with version identification. Any PolyScope 5 deployment earlier than 5.25.1 belongs in the remediation queue. But the next question should be reachability, because exploitability in the real world depends on who can connect to the Dashboard Server interface.

If the service is not needed, disabling it is the most elegant mitigation. Unused network services are liabilities pretending to be options. If the service is needed, it should be reachable only from the specific hosts and subnets that require it, not from the broad plant network and certainly not from corporate desktops.

Then comes validation. After patching or reconfiguring, teams should confirm that the robot still behaves as expected within the automation cell. They should also confirm that firewall rules actually block unauthorized access. Too many security controls exist only in diagrams until someone tests them with a packet.
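Testing a rule “with a packet” can be as simple as a TCP connection attempt from a host that should be blocked. The sketch below assumes the Dashboard Server listens on TCP port 29999, which is the commonly documented default but is not stated in the advisory text quoted here, so confirm it for your deployment. The function name and the example address are hypothetical. It opens and closes a connection and sends nothing.

```python
import socket

DASHBOARD_PORT = 29999  # ASSUMED default Dashboard Server port; verify locally

def can_connect(host: str, port: int = DASHBOARD_PORT, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False

if __name__ == "__main__":
    robot = "10.20.40.20"  # hypothetical controller address
    state = "REACHABLE" if can_connect(robot) else "blocked or closed"
    print(f"{robot}:{DASHBOARD_PORT} is {state} from this host")
```

Run from a corporate desktop or any other host outside the allowlist, a result of reachable means the firewall rule exists only in the diagram. Coordinate any such check with the people responsible for the cell.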

This is also a good moment to review incident procedures. If suspicious activity is observed, CISA recommends following established internal procedures and reporting findings for correlation. That sounds bureaucratic until the same vulnerability appears in multiple plants, and early reporting becomes the difference between isolated troubleshooting and a broader campaign warning.

The Factory Floor Gets a Very Specific To-Do List

The practical story here is not mysterious. Universal Robots has a critical vulnerability in PolyScope 5 before version 5.25.1, the vendor has released a fix, and CISA is telling operators to reduce exposure while they patch. The hard part is making sure those sentences turn into work orders, firewall changes, and verified software updates rather than a PDF in a risk register.

- Organizations running Universal Robots PolyScope 5 should identify every controller running a version earlier than 5.25.1 and prioritize those systems for update.

- Teams that do not use the Dashboard Server interface should disable it rather than leave a powerful management service available by default or habit.

- Networks should restrict robot-controller access to the smallest set of trusted engineering, automation, and supervisory hosts that actually require it.

- Remote access paths into production environments should be reviewed because VPN access is only as safe as the devices and accounts allowed through it.

- Windows administrators should treat engineering workstations and jump hosts as part of the robot security boundary, not as ordinary office endpoints.

- Plants that cannot patch immediately should document compensating controls, test them, and set a near-term date to remove the exception.

Source: CISA Universal Robots Polyscope 5 | CISA