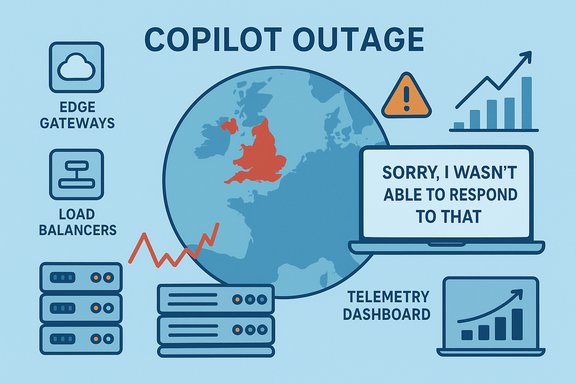

Microsoft’s Copilot experienced a regionally concentrated outage on 9 December 2025 that left users across the United Kingdom — and parts of Europe — unable to access the assistant or receiving degraded responses, an event Microsoft logged as incident CP1193544 and attributed to an unexpected surge in traffic that overwhelmed regional autoscaling and exposed load‑balancing fragilities.

Source: NationalWorld Users report 'outage' with Microsoft AI tool

Background / Overview

Copilot is no longer an experimental novelty inside Microsoft 365: it sits at the heart of drafting, summarisation, meeting recaps, spreadsheet analysis, and a growing set of automation workflows. That deep embedding makes availability a business‑critical requirement for many organisations. The 9 December incident surfaced that dependency sharply: users reported the same fallback message across Copilot surfaces (“Sorry, I wasn’t able to respond to that. Is there something else I can help with?”), and outage monitors logged a rapid spike of reports originating in the UK. Microsoft acknowledged the incident publicly through its Microsoft 365 service channels, opening an admin‑facing incident under code CP1193544 and stating that telemetry suggested an “unexpected increase in traffic” which impacted service autoscaling. The company said engineers were manually scaling capacity and adjusting load‑balancer rules while monitoring for stabilisation. Independent reporting and outage trackers corroborated the incident’s timing and symptomatic footprint.

What happened: timeline and visible symptoms

Early signal and public acknowledgement

- Morning (UK local time), 9 December 2025: outage monitors and social feeds recorded a sharp rise in reports of Copilot failures concentrated in the United Kingdom.

- Microsoft posted an incident advisory (CP1193544) to the Microsoft 365 status feed and advised administrators to consult the Microsoft 365 admin centre for rolling updates.

User-facing behaviour

Affected users reported consistent failure modes across multiple surfaces:

- Copilot panes failing to open inside Word, Excel, Outlook and Teams.

- Generic fallback replies (“Sorry, I wasn’t able to respond to that”) or truncated, slow, or timed‑out chat completions.

- “Coming soon” or indefinite loading states in some clients.

- Failure of Copilot file actions — summaries, edits, conversions — despite OneDrive or SharePoint files remaining accessible via native Office clients.

Immediate remediation

Microsoft’s first‑line mitigations were operational and pragmatic: manual capacity scaling to add inference and orchestration resources faster than the autoscalers could, and changes to load‑balancer rules to rebalance traffic away from stressed regional pools. Microsoft’s public posts explicitly called out autoscaling pressure and subsequent load‑balancing changes as the twin tracks of remediation.

Technical anatomy: why a regional traffic surge can break Copilot

Copilot’s delivery architecture is multi‑layered and latency‑sensitive. To understand why this outage looked so broad, it helps to visualise the principal components involved:

- Edge/API gateways and global load‑balancers that terminate TLS and route client requests to regional processing planes.

- Identity and token issuance (Microsoft Entra/Azure AD) that validates sessions and entitlements.

- Orchestration and service‑mesh microservices that assemble context, check eligibility, and enqueue inference work.

- GPU/accelerator‑backed inference endpoints (Azure model services / Azure OpenAI) that run large models and must be warmed to meet interactive latency SLAs.

- Telemetry and autoscaler control planes that detect load and trigger provisioning of additional capacity.
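The interaction between the last two components can be illustrated with a toy model. The sketch below is not based on any published detail of Copilot's capacity or autoscaler: the function name `simulate_backlog`, the tick-based timing, and every number are assumptions, chosen only to show how provisioning and warm-up delay can turn a sudden surge into a growing request backlog.

```python
from collections import deque


def simulate_backlog(arrivals, per_node_rate, start_nodes, provision_delay, headroom_nodes=2):
    """Toy discrete-time model of a reactive autoscaler (illustrative only).

    arrivals: requests arriving in each tick.
    per_node_rate: requests one warm node can serve per tick.
    provision_delay: ticks between requesting a node and it being warm.
    Returns the queued backlog after each tick. Scale-down is not modelled.
    """
    ready = start_nodes
    warming = deque()  # tick at which each requested node becomes usable
    backlog = 0
    history = []
    for tick, incoming in enumerate(arrivals):
        # Nodes requested earlier join the pool once their warm-up has elapsed.
        while warming and warming[0] <= tick:
            warming.popleft()
            ready += 1
        # Serve what current capacity allows; the rest waits in the queue.
        backlog = max(0, backlog + incoming - ready * per_node_rate)
        history.append(backlog)
        # Reactive scaling: size the fleet for the demand just observed, plus headroom.
        desired = -(-incoming // per_node_rate) + headroom_nodes  # ceiling division
        shortfall = desired - (ready + len(warming))
        warming.extend([tick + provision_delay] * max(0, shortfall))
    return history


# Hypothetical surge: demand quadruples at tick 5.
surge = [100] * 5 + [400] * 15
fast = simulate_backlog(surge, per_node_rate=50, start_nodes=4, provision_delay=1)
slow = simulate_backlog(surge, per_node_rate=50, start_nodes=4, provision_delay=8)
print(max(fast), max(slow))
```

Under these made-up numbers the peak backlog is 200 requests with a one-tick warm-up and 1,600 with an eight-tick warm-up. The same surge hurts far more when capacity takes longer to come online, which is consistent with engineers adding capacity by hand rather than waiting for automatic scaling.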

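Two failure vectors follow from this architecture. The first is autoscaling lag: when demand rises faster than the autoscaler control plane can provision and warm accelerator‑backed inference capacity, requests queue and time out, which matches the autoscaling pressure Microsoft described. A second vector is load balancing. If routing rules or policies funnel traffic into a constrained subset of regional nodes, or if a policy change unintentionally alters distribution, healthy capacity elsewhere can remain unused while local pools become saturated. Microsoft publicly acknowledged a separate load‑balancing issue that compounded the autoscaling pressure, and later reversed a policy change affecting traffic distribution in affected EU environments to accelerate recovery.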
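The load‑balancing point can be made concrete with a small calculation. This is a sketch under stated assumptions: the pool names, capacities, and routing weights are hypothetical, and `route` is an illustrative helper rather than anything Microsoft has described.

```python
def route(total_requests, weights, capacities):
    """Split traffic across pools by routing weight and report per-pool load.

    weights / capacities: dicts keyed by pool name (hypothetical names below).
    Returns, per pool, the offered load, utilisation, and requests shed.
    """
    total_weight = sum(weights.values())
    report = {}
    for pool, weight in weights.items():
        offered = total_requests * weight / total_weight
        served = min(offered, capacities[pool])
        report[pool] = {
            "offered": offered,
            "utilisation": served / capacities[pool],
            "shed": offered - served,
        }
    return report


capacities = {"pool-a": 400, "pool-b": 400, "pool-c": 400}  # 1,200 in total
balanced = route(900, {"pool-a": 1, "pool-b": 1, "pool-c": 1}, capacities)
skewed = route(900, {"pool-a": 8, "pool-b": 1, "pool-c": 1}, capacities)

for name, result in (("balanced", balanced), ("skewed", skewed)):
    total_shed = sum(pool["shed"] for pool in result.values())
    print(name, "requests shed:", total_shed)
```

With even weights, 900 requests fit comfortably inside 1,200 units of capacity and nothing is shed. With the skewed weights, one pool is offered 720 requests and sheds 320 of them while the other two sit at roughly 22% utilisation. Aggregate capacity was never the constraint; the distribution was.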

Cross‑verification of key claims

- Incident code and Microsoft acknowledgement: the service health posts referenced incident CP1193544 and the “unexpected increase in traffic” diagnosis. This is confirmed by specialist reporting and mirrored admin‑centre notices.

- Signs and geography: outage trackers and mainstream outlets recorded complaint spikes concentrated in the UK with secondary reports from neighbouring European countries. Downdetector showed hundreds to thousands of user reports during the incident window.

- Operational mitigations: Microsoft’s status updates described manual capacity scaling and load‑balancer rule adjustments as immediate mitigations; independent reconstructions concur that those mitigations are predictable and effective for this failure class.

Why this outage matters: operational and governance impact

1. Copilot is mission‑critical for many workflows

For organisations that have woven Copilot into day‑to‑day work (drafting contracts, summarising meetings, triaging support tickets), any interruption converts into immediate friction: manual rework, missed SLAs, and elevated helpdesk loads. The event on 9 December made this visible, with helpdesks and outage trackers reporting spike behaviour consistent with business impact.

2. AI availability becomes a contractual and architectural concern

Traditional SaaS availability SLAs were designed for storage, identity, or email; large‑model inference adds a new class of availability risk tied to compute provisioning and localised routing. Customers must now treat Copilot availability as a procurement and architecture variable: define resilience, fallbacks, and contractual remedies that reflect the new dependencies.

3. Operational transparency and post‑incident learning

When outages affect productive capacity, enterprises will demand richer post‑incident analysis: not only “the feature failed” statements but measurable metrics such as peak queue lengths, warm‑pool sizes, failover timelines, and the precise configuration change that led to any misrouting. Early public accounts repeatedly ask for deeper telemetry disclosures from providers.

4. Data residency and regional capacity fragility

Regional outages underscore the tension between data‑residency/locality requirements and the need for global failover capacity. Systems constrained by residency or regional processing rules cannot trivially fail over to compute elsewhere; that increases the operational coupling between local demand and local capacity planning. The December outage’s regional flavour highlights this trade‑off.

Strengths demonstrated by Microsoft’s response

- Rapid acknowledgement: Microsoft quickly opened the incident, posted CP1193544, and used its M365 status channels to inform administrators — a necessary practice for enterprise transparency.

- Appropriate mitigations: manual capacity scaling and load‑balancer rule adjustments are the right operational levers for autoscaling pressure and misrouting. Those actions tend to restore service faster than waiting for slow autoscalers in unprecedented traffic events.

- Active monitoring and rolling updates: Microsoft maintained telemetry‑driven monitoring while engineers applied mitigations, which is essential to avoid over‑provisioning and to steer recovery safely.

Risks and unresolved questions

- Autoscaler design and warm‑pool sizing: was the system’s predictive scaling configured for realistic peak patterns, or did a recent product push (or coordinated launches) create a demand pattern outside the design envelope? The public record cites “unexpected traffic,” but that phrase covers a range of possibilities from organic demand spikes to launch‑related bursts.

- Load‑balancer policy regression: multiple independent reconstructions flagged a load‑balancing policy change as a contributing factor. The root cause, whether a human configuration change, a rollout bug, or a control‑plane regression, remains to be confirmed in a post‑incident review (PIR).

- Regional capacity constraints vs. global failover: how easily can EU/UK traffic be redirected to non‑EU compute in the event of local shortages, given compliance and residency rules? The incident suggests operations may be constrained here.

- SLA coverage and compensable damages: customers who relied on Copilot to meet business commitments may find current SLAs inadequate for AI inference outages, especially when those outages cascade into business‑critical failures. Contractual clarity is needed.
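To put the SLA question in numbers, it helps to translate an availability percentage into a downtime budget. The targets below are generic illustrations, not figures from any Microsoft contract, and the 180‑minute incident is a hypothetical value, not the measured duration of CP1193544. Whether degraded Copilot responses count as "downtime" at all depends on how a given contract defines it.

```python
def downtime_budget_minutes(availability_pct, period_days=30):
    """Minutes of downtime permitted per period at a given availability target."""
    period_minutes = period_days * 24 * 60
    return period_minutes * (100 - availability_pct) / 100


for target in (99.0, 99.9, 99.99):
    budget = downtime_budget_minutes(target)
    print(f"{target}% over 30 days allows {budget:.1f} minutes of downtime")

# Hypothetical incident length, used only to show the comparison.
incident_minutes = 180
exceeds_budget = incident_minutes > downtime_budget_minutes(99.9)
print("exceeds a 99.9% monthly budget:", exceeds_budget)
```

A 99.9% target over a 30‑day month allows about 43 minutes of downtime, so a single multi‑hour regional event would exhaust it several times over. That is one reason to ask how inference degradation is measured before relying on a headline percentage.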

Practical guidance and hardening steps for IT teams

Organisations that rely on Copilot should treat the outage as a live case study and update their resilience playbooks accordingly. The following checklist converts lessons from CP1193544 into concrete actions.

Immediate checklist (operational)

- Monitor Microsoft 365 Service Health and watch incident tickets for codes such as CP1193544.

- Communicate clearly to end users when Copilot is unavailable and explain temporary workarounds (manual drafting, meeting notes templates).

- Record and tag all Copilot‑related helpdesk tickets to capture incident impact metrics (lost hours, missed deadlines).

- Evaluate the viability of local caching or templated automation that can be executed offline.

Medium‑term resilience (administrative and contractual)

- Update runbooks to include Copilot failure modes: control‑plane vs inference timeouts vs auth failures.

- Negotiate contract language that recognises inference availability as a KPI in addition to traditional service metrics.

- Request post‑incident reports from vendors and demand measurable mitigation commitments (pre‑warmed capacity, traffic‑distribution guarantees).

Architectural mitigations (technical)

- Design graceful degradation: ensure core workflows can fall back to non‑AI versions without losing data integrity.

- Decouple critical path operations from single AI endpoints by building modular pipelines that can switch providers or degrade to local logic.

- Implement client‑side timeouts and retry strategies that respect user experience while avoiding thundering herd behaviors.
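For the last item, one common pattern is capped exponential backoff with full jitter. The sketch below is a minimal illustration for in‑house integrations, not a description of how any Copilot client behaves: `call_with_backoff`, its defaults, and the use of `TimeoutError` as the failure signal are all assumptions.

```python
import random
import time


def call_with_backoff(request, *, attempts=4, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep, rng=random.random):
    """Call request() with capped exponential backoff and full jitter.

    request: zero-argument callable that raises TimeoutError on failure
             (a stand-in for whatever client call an organisation wraps).
    sleep / rng: injectable so the policy can be tested without waiting.
    """
    for attempt in range(attempts):
        try:
            return request()
        except TimeoutError:
            if attempt == attempts - 1:
                raise  # retry budget exhausted: surface the failure to the caller
            # Exponential ceiling, capped so waits stay tolerable for users.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: a random wait in [0, ceiling) spreads clients out so
            # they do not all retry at the same instant.
            sleep(rng() * ceiling)


# Demo with a simulated request that times out twice before succeeding.
outcomes = iter([TimeoutError(), TimeoutError(), "summary text"])


def fake_request():
    result = next(outcomes)
    if isinstance(result, Exception):
        raise result
    return result


print(call_with_backoff(fake_request, base_delay=0.01))
```

The bounded attempt count matters as much as the jitter. Retrying indefinitely against a saturated regional pool adds load exactly when the service is trying to recover, so after the final attempt the caller should move to a fallback path instead.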

Example 6‑step runbook to prepare for future Copilot outages

1. Subscribe to Microsoft 365 Status and the tenant admin centre for real‑time incident codes and advisories.

2. Implement an incident triage channel and designate a Copilot incident lead.

3. Triage: classify impact (write/read latency vs. full unavailability vs. feature degradation).

4. Communicate to users with templated messages and guidance on manual workarounds.

5. Activate fallback automations (scripted templates, local macros, internal wikis).

6. Post‑incident: gather forensic logs, tally business impact, and lobby vendor for PIR and SLA enhancements.
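The triage step in this runbook can be partly automated if a team already runs synthetic checks against Copilot surfaces. The following is a rough sketch only: `ProbeResult`, the `Impact` categories, and the 15‑second slowness threshold are hypothetical, and how the probe results are gathered is left out entirely.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    HEALTHY = "healthy"
    LATENCY = "latency"
    DEGRADED = "feature degradation"
    UNAVAILABLE = "full unavailability"


@dataclass
class ProbeResult:
    surface: str          # e.g. "Word" or "Teams"
    responded: bool       # did the request complete at all?
    latency_s: float      # time to a complete response
    fallback_reply: bool  # generic "Sorry, I wasn't able to respond" message


def classify(probes, slow_after_s=15.0):
    """Map a batch of probe results to a single runbook impact category."""
    if not probes:
        raise ValueError("at least one probe result is required")
    failed = [p for p in probes if not p.responded or p.fallback_reply]
    if len(failed) == len(probes):
        return Impact.UNAVAILABLE  # every surface is failing
    if failed:
        return Impact.DEGRADED     # some surfaces fail, others still work
    if any(p.latency_s > slow_after_s for p in probes):
        return Impact.LATENCY      # everything answers, but too slowly
    return Impact.HEALTHY


probes = [
    ProbeResult("Word", responded=True, latency_s=3.2, fallback_reply=True),
    ProbeResult("Teams", responded=True, latency_s=2.8, fallback_reply=False),
]
print(classify(probes).value)
```

Treating the generic fallback reply as a failure is a deliberate choice in this sketch. During the December incident that message arrived as a normal response, so a probe that only checks whether a request completed would have reported the service as healthy.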

Strategic takeaways for CIOs and procurement teams

- Treat generative‑AI features as infrastructure: inclusion in productivity suites implies a higher operational bar than optional add‑ins. Contracts, runbooks, and architecture should reflect that shift.

- Demand operational transparency: for services that directly affect productivity, enterprises should insist on measurable post‑incident data: queue depths, autoscale trigger points, warm‑pool sizes, and precise change logs for any traffic‑affecting policy changes.

- Insist on technical options for resilience: reserved capacity, cross‑region failover provisions (where compliant), and predictable throttling strategies help avoid single‑region collapses.

- Consider a multi‑vendor posture for non‑specialist workloads: where possible, architect critical workflows to tolerate alternative LLM providers or local deterministic logic.
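The final point, tolerating alternative providers or local deterministic logic, amounts to an ordered fallback chain. The sketch below assumes a summarisation workflow. `summarise_with_fallback` and the provider callables are placeholders, not real vendor SDK calls, and the truncation fallback is deliberately crude.

```python
def summarise_with_fallback(text, providers, max_chars=280):
    """Try each provider in order; fall back to deterministic truncation.

    providers: list of (name, callable) pairs; each callable takes the text and
               returns a summary or raises. Names and callables are hypothetical.
    Returns (summary, source) so callers can label non-AI output for users.
    """
    for name, summarise in providers:
        try:
            return summarise(text), name
        except Exception:
            # Broad catch is deliberate in this sketch: any provider failure
            # (timeout, auth error, throttling) moves on to the next option.
            continue
    # Local deterministic logic: keep the opening text, cut at a word boundary.
    if len(text) <= max_chars:
        return text, "local-excerpt"
    return text[:max_chars].rsplit(" ", 1)[0] + "...", "local-excerpt"


def primary(text):
    raise TimeoutError("simulated regional outage")


def secondary(text):
    return "Summary from an alternative provider."


notes = "Quarterly review notes ..."
print(summarise_with_fallback(notes, [("primary", primary), ("secondary", secondary)]))
print(summarise_with_fallback(notes, [("primary", primary)]))
```

Returning the source alongside the result lets the calling workflow tell users when they are looking at a plain excerpt and not an AI summary, which keeps degraded output honest. Any real second provider would also need to satisfy the same data‑residency constraints discussed earlier.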

Wider industry implications

This outage is not an isolated curiosity; it is a bellwether for the industry as generative AI moves from optional convenience to everyday operational dependency. Autoscaling constraints, warm‑pool economics, and routing policy complexity will become routine governance topics for CIOs, cloud architects, and compliance officers. The event underlines that building predictable, transparent, and auditable operational models for inference workloads is as important as the models themselves.

At a market level, outages like CP1193544 will shape procurement conversations, push vendors to publish deeper reliability metrics, and encourage standards‑level discussion about how to measure availability for interactive AI services.

Conclusion

The 9 December Copilot outage (CP1193544) was an operational stress test for the evolving class of large‑model, interactive cloud services. Microsoft’s rapid acknowledgement and hands‑on mitigations (manual capacity scaling and load‑balancer adjustments) restored service for many customers, but the episode highlighted enduring technical and contractual gaps that organisations must address as AI becomes central to daily work. Enterprises should treat Copilot like core infrastructure: update runbooks, demand operational transparency, negotiate resilience guarantees, and build practical fallbacks into workflows. The event offers a clear lesson: the promise of generative AI is only as valuable as the robustness of the systems that deliver it.