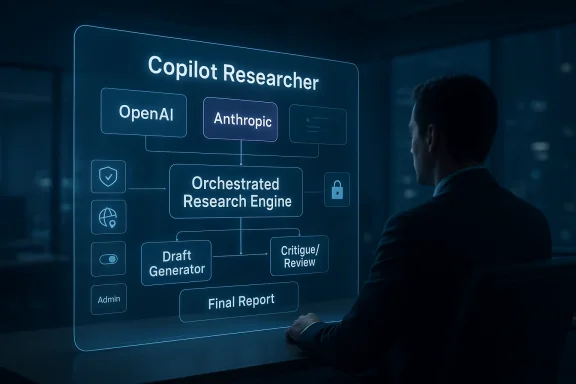

Microsoft is moving Copilot’s Researcher tool into a more ambitious phase, and the implications go well beyond a simple feature update. According to Microsoft’s own March 2026 announcements, Researcher now sits inside a broader multi-model strategy that lets Copilot draw from both OpenAI and Anthropic systems, with Microsoft saying the new approach improves reasoning, synthesis, and enterprise trust. For users, that means Researcher is no longer just another chatbot-style helper; it is becoming an orchestrated research engine built to compare, critique, and refine answers before they reach the user. The shift also signals a deeper competitive reality: Microsoft wants Copilot to be the place where the best models from across the industry are combined, not merely selected from a single vendor stack. (microsoft.com)

The more interesting question is whether Microsoft extends this orchestration approach deeper into other parts of Microsoft 365 and its broader agent ecosystem. The company has already indicated that model choice is moving across Copilot Studio, Power Platform, and Office agents. If that expansion continues, Researcher may be remembered as the first widely noticed proof point for a much larger platform transformation. (learn.microsoft.com)

Source: Tech Times, “Microsoft Researcher AI Tool Upgrade Allows It to Use Multiple AI Models at the Same Time”

Overview

The immediate story is about Researcher, but the larger story is about Microsoft’s strategy for AI at work. Microsoft’s March 9, 2026 blog post framed Wave 3 of Microsoft 365 Copilot as a step beyond prompts and responses toward agentic execution, with Anthropic technology now used in some workflows alongside OpenAI models. In that framing, the company argues that users should not have to think about which model is best for a task, because Copilot should route work to the right engine behind the scenes. (microsoft.com)

That is a meaningful change from the early Copilot era, when the product was effectively synonymous with OpenAI. Microsoft still has deep ties to OpenAI, but it is now openly positioning itself as a multi-model platform. That matters because enterprise buyers increasingly care about model choice, latency, governance, cost, and quality across different task types. A research workflow that benefits from one model’s planning ability may perform better when another model critiques or revises the draft. (microsoft.com)

Microsoft’s own documentation also confirms that Anthropic models are being introduced across multiple Microsoft offerings, including Microsoft 365 Copilot, Researcher, Copilot Studio, Power Platform, Agent Mode in Excel, and Word, Excel, and PowerPoint agents. That breadth suggests this is not a one-off experiment. It is a platform-level model diversification effort, with Researcher serving as one of the more visible demonstration points. (learn.microsoft.com)

There is also a governance story here. Microsoft says Anthropic is operating as a subprocessor under Microsoft oversight, with enterprise data protections, contractual safeguards, and region-specific exclusions. That gives the company a way to expand model choice while still telling CIOs and compliance teams that the plumbing remains under Microsoft’s control. The result is a system that is more pluralistic in models but still centralized in administration. (learn.microsoft.com)

Why this matters now

The timing is not accidental. The AI market in 2026 is no longer just a race to release the most capable foundation model; it is a race to prove which platform can combine models, tools, context, and governance into something businesses can actually deploy. Microsoft’s move with Researcher reflects the reality that one model rarely wins every task. Some models are better at long-form reasoning, some at structured critique, and some at tool use or summarization. (microsoft.com)

That is why the “multi-model” angle is more important than the headline benchmark claim. Microsoft says Researcher’s new approach raises its score on the Deep Research Accuracy, Completeness, and Objectivity (DRACO) benchmark by 13.8%, but the deeper implication is architectural: the product is being designed so that generation and evaluation can be separated. In practical terms, that reduces the odds that one model’s blind spot becomes the final answer.

What Microsoft Actually Announced

The clearest official statement is that Microsoft is broadening model access inside Microsoft 365 Copilot and related services, including Researcher. Microsoft says Anthropic models are now available by default for most commercial-cloud customers outside certain regions, while users in Researcher and agent mode for Excel can select Claude where enabled. That is a concrete shift from model exclusivity to model plurality. (learn.microsoft.com)

In the March 9 Microsoft 365 blog, Microsoft described Copilot Cowork and Wave 3 as examples of the new direction. The company said it worked closely with Anthropic to bring the technology behind Claude Cowork into Microsoft 365 Copilot, calling this the “multimodel advantage.” Microsoft’s messaging is unusually explicit: it is no longer selling Copilot as a single-model assistant, but as a broker of the best available models for the job. (microsoft.com)

The research workflow change

Researcher’s upgraded behavior appears to follow a critique-and-refine pattern. Microsoft says one model plans the task and drafts an initial response, while another model acts as an expert reviewer before the final report is produced. That division of labor is important because it mirrors how serious human research teams work: one person drafts, another audits, and a final editor tightens the result.

This matters because many AI failures happen not at the drafting stage but at the validation stage. A single model can be persuasive even when it is wrong, especially in long-form synthesis tasks. By splitting generation from evaluation, Microsoft is trying to reduce overconfidence and improve objectivity, which is precisely the kind of engineering answer enterprise customers want to hear. (microsoft.com)
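
Microsoft has not published how Researcher wires its models together, so the snippet below is only a minimal sketch of the generic draft-review-revise pattern described above. It assumes each model is a plain Python function from prompt text to reply text; `critique_and_refine`, `parse_review`, the APPROVE/REVISE reply convention, and the two stub models are hypothetical stand-ins, not Microsoft or vendor APIs.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model interface: a function from prompt text to reply text.
Model = Callable[[str], str]


@dataclass
class Review:
    approved: bool
    notes: str


def parse_review(reply: str) -> Review:
    """Parse an assumed reply convention: 'APPROVE' or 'REVISE: <notes>'."""
    if reply.strip().upper().startswith("APPROVE"):
        return Review(approved=True, notes="")
    return Review(approved=False, notes=reply.partition(":")[2].strip())


def critique_and_refine(question: str, drafter: Model, reviewer: Model, max_rounds: int = 2) -> str:
    """One model plans and drafts, a second model reviews, and the drafter revises.

    Stops early once the reviewer approves; otherwise returns the latest draft
    when the round limit is reached.
    """
    draft = drafter(f"Plan and draft a cited report answering: {question}")
    for _ in range(max_rounds):
        review = parse_review(
            reviewer(f"Review this draft for gaps, unsupported claims, and weak reasoning:\n{draft}")
        )
        if review.approved:
            break
        draft = drafter(f"Revise the draft to address these notes:\n{review.notes}\n\nDraft:\n{draft}")
    return draft


# Stub models standing in for real providers.
def stub_drafter(prompt: str) -> str:
    if prompt.startswith("Revise"):
        return "Report v2: claim A [1], claim B [2]"
    return "Report v1: claim A [1], claim B"


def stub_reviewer(prompt: str) -> str:
    # Rejects any draft in which claim B has no citation.
    return "APPROVE" if "claim B [2]" in prompt else "REVISE: claim B needs a source"


print(critique_and_refine("What changed in Q3?", stub_drafter, stub_reviewer))
```

With these stubs, the reviewer rejects the first draft because claim B has no citation, and the loop returns the revised draft after the second review. Capping the number of rounds keeps a persistently dissatisfied reviewer from looping forever.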

Key points from the announcement

- Anthropic models are now part of Microsoft 365 Copilot’s model mix. (learn.microsoft.com)

- Researcher is one of the first visible places where the multi-model approach is being used. (learn.microsoft.com)

- Microsoft says the rollout is phased, not universal. (learn.microsoft.com)

- The company is emphasizing enterprise governance alongside capability gains. (learn.microsoft.com)

- The new approach is tied to Microsoft’s Frontier early-access program. (microsoft.com)

How Researcher Fits Into Microsoft’s AI Strategy

Researcher is not just a product feature; it is a proving ground for Microsoft’s broader AI thesis. In enterprise software, Microsoft is trying to show that the winning model is not necessarily the single most powerful model, but the best-integrated system around the model. That system includes identity, permissions, compliance, file context, app integration, and administration. (microsoft.com)

Microsoft’s pitch is that Copilot already has the work context. It sees files, meetings, chats, and relationships through the Microsoft 365 stack, and that context can make research outputs more relevant than what a standalone chatbot can produce. That becomes even more important in Researcher, where the task is not simply to answer a question, but to synthesize information across sources and present a substantiated position. (microsoft.com)

The platform logic

The platform logic is straightforward. If Microsoft can route some tasks to OpenAI models and others to Anthropic models, it can optimize for quality without forcing the customer to manage model selection at every step. That lowers friction for users while also letting Microsoft hedge against dependency on any one provider. (microsoft.com)

It also creates room for product differentiation. Copilot is not being sold as “ChatGPT inside Microsoft 365” anymore. Instead, Microsoft wants Copilot to be the workplace layer where multiple frontier models are combined under one policy umbrella. That is a stronger strategic position than mere embedding. It turns Microsoft into a model orchestrator rather than a model reseller. (microsoft.com)
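
As a rough illustration of routing with a fallback, the sketch below maps task types to an ordered list of providers and picks the first one the tenant has enabled. The `ROUTES` table, its task names, and which provider is preferred for which task are invented for this example; Microsoft has not said how Copilot actually assigns work.

```python
# Hypothetical routing table: task type -> providers in order of preference.
# The assignments are invented for illustration only.
ROUTES: dict[str, list[str]] = {
    "draft": ["openai", "anthropic"],
    "critique": ["anthropic", "openai"],
    "summarize": ["openai", "anthropic"],
}
DEFAULT_ROUTE = ["openai", "anthropic"]


def route(task_type: str, enabled: set[str]) -> str:
    """Return the first preferred provider that the tenant has enabled."""
    for provider in ROUTES.get(task_type, DEFAULT_ROUTE):
        if provider in enabled:
            return provider
    raise LookupError(f"no enabled provider for task type {task_type!r}")


print(route("critique", {"openai", "anthropic"}))  # anthropic
print(route("critique", {"openai"}))               # openai: Anthropic disabled by the admin
```

If an administrator turns Anthropic off, critique work in this toy table falls back to the OpenAI entry instead of failing, which is the kind of behavior that keeps model selection invisible to the end user.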

Why enterprise buyers should care

For enterprises, the ability to switch or blend models is not just a technical nicety. It affects governance, procurement, security review, and performance tuning. Large organizations do not want to rip and replace AI tooling every time one vendor launches a new flagship model, and Microsoft is clearly selling model diversity as a defense against that churn. (microsoft.com)

At the same time, Microsoft is careful to keep model selection bounded by its admin controls. Anthropic can be enabled or disabled by tenant administrators, and availability varies by region. That means Microsoft is not decentralizing AI governance; it is centralizing governance while diversifying the underlying intelligence. That distinction will matter to compliance teams. (learn.microsoft.com)

The Multi-Model Architecture

The architecture behind the upgrade is arguably more interesting than the headline feature itself. Microsoft describes a Critique approach in which generation and evaluation are split between models. This is a familiar pattern in advanced AI systems, but it becomes more powerful when applied inside a mainstream enterprise product with native permissions and citations.

The practical effect is that the first model can focus on ideation, structure, and breadth, while the second model can focus on checking gaps, inconsistencies, or weak reasoning. That means the final answer can be stronger than either model alone, especially on complex research tasks that involve synthesis rather than one-step retrieval. (microsoft.com)

Generation versus evaluation

This separation is an old idea in AI research, but Microsoft is productizing it for business users. A model that drafts quickly is not always the best model to judge its own work. A second model acting as reviewer creates a kind of built-in peer review, which is especially useful when outputs are long, source-heavy, or nuanced. (microsoft.com)

The benefit is not merely better prose. It is fewer unsupported conclusions, better structure, and more reliable synthesis across multiple source documents. In enterprise settings, that can be the difference between a useful briefing and a polished hallucination. Microsoft is betting that customers will value the reduction in error more than they value a single-model purity narrative. (microsoft.com)

Why this could outperform single-model systems

Single-model systems often suffer from self-reinforcement. If the model starts with a flawed premise, it can elaborate that mistake with confidence. Multi-model systems are not perfect, but they create an internal check that can catch weaknesses before they ship to the user. (microsoft.com)

That is especially relevant for Researcher because research tasks are inherently adversarial to model confidence. They require judgment about what matters, what conflicts, and what evidence is sufficiently strong. A critic model does not guarantee correctness, but it does raise the quality floor by forcing another pass over the reasoning. That is a meaningful difference, not a cosmetic one.

Architecture highlights

- One model can plan while another critiques. (microsoft.com)

- Microsoft is pursuing separation of generation and evaluation.

- The workflow is designed to improve completeness and objectivity, not only speed.

- The system is meant to work inside enterprise context, not as a standalone assistant. (microsoft.com)

Benchmark Claims and What They Mean

Microsoft says the new Researcher feature delivers a 13.8% higher score on the Deep Research Accuracy, Completeness, and Objectivity, or DRACO, benchmark. That is the kind of claim that gets attention because it implies a measurable quality lift rather than vague product marketing. Still, benchmark gains should be read carefully, especially when the benchmark is not yet universally familiar.

A benchmark improvement can reflect real capability gains, but it can also reflect better task tuning, better orchestration, or a benchmark that closely matches the design strengths of the new system. In other words, the score matters, but it does not automatically prove universal superiority across every research scenario. That nuance is essential.
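
Because no absolute DRACO scores are quoted here, a small worked calculation shows why the baseline matters. It assumes “13.8% higher” means a relative increase over the previous score rather than 13.8 percentage points (the announcement as summarized does not say), and the baseline scores in the loop are hypothetical.

```python
def score_after_relative_gain(baseline: float, gain_pct: float) -> float:
    """Score after a relative percentage increase (13.8 -> baseline * 1.138)."""
    return baseline * (1 + gain_pct / 100)


def relative_gain_pct(baseline: float, new: float) -> float:
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100


# Hypothetical baselines; the article quotes no absolute DRACO scores.
for baseline in (50.0, 80.0):
    new = score_after_relative_gain(baseline, 13.8)
    print(
        f"baseline {baseline:.1f} -> {new:.2f} "
        f"(+{new - baseline:.2f} points, {relative_gain_pct(baseline, new):.1f}% relative)"
    )
```

Under that reading, the same 13.8% is 6.9 points on a baseline of 50 but about 11 points on a baseline of 80, which is one more reason for buyers to ask for the underlying numbers.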

The limits of benchmark storytelling

Benchmarks are useful because they provide a common yardstick, yet they rarely capture the whole user experience. A system can score well on deep research tasks while still underperforming on edge cases such as ambiguous instructions, highly specialized domains, or content with conflicting source reliability. The real test is what happens after deployment.

Microsoft is smart to pair benchmark language with operational language. It does not just say the score is higher; it also says the product can synthesize across sources and deliver citations with well-reasoned responses. That combination is more convincing than a naked number, because it connects the measurement to the output users actually see.

What enterprises will ask next

Enterprise buyers will want to know whether the benchmark improvement translates into fewer human review cycles. They will ask whether the model combination reduces factual errors, improves citation quality, and shortens the time needed to produce a usable brief. Those are business outcomes, not lab outcomes. (microsoft.com)

They will also ask whether the gains hold across industries. A legal team, a consulting team, and a finance team do not use research tools in the same way. If Researcher performs well across those contexts, the 13.8% claim becomes more persuasive; if not, it becomes a useful but narrow signal. (microsoft.com)

Takeaways on the benchmark

- 13.8% is meaningful, but it is not the same as universal superiority.

- Benchmark wins can reflect task fit as much as raw intelligence.

- Real-world value depends on citation quality and source synthesis.

- Enterprises will judge the feature by workflow impact, not scorecards alone. (microsoft.com)

Enterprise Impact

The enterprise impact is where this upgrade becomes strategically significant. Microsoft has spent years turning Copilot into an enterprise product rather than a consumer novelty, and Researcher’s multi-model capabilities fit that playbook perfectly. Businesses want AI that can be audited, controlled, and embedded into existing software habits, not another disconnected tool that requires new governance structures. (microsoft.com)

Microsoft’s documentation makes a point of saying Anthropic models are governed through Microsoft oversight, are covered by enterprise data protections, and are subject to region-specific availability and admin controls. That matters because enterprises frequently block or slow down AI adoption when vendor data handling is unclear. Microsoft is trying to lower that adoption friction. (learn.microsoft.com)

Governance and compliance

There is a strong compliance angle here. Microsoft says Anthropic models are excluded from the EU Data Boundary and are not available in government clouds or sovereign clouds, at least for now. That means the rollout is substantial, but not universally applicable, and organizations with strict residency requirements will need to examine the details closely. (learn.microsoft.com)

For many companies, though, the existence of tenant controls is enough to make experimentation possible. The ability to enable or disable Anthropic at the admin level gives IT teams a practical lever. That is often the difference between a pilot and a stalled proposal. (learn.microsoft.com)
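
The availability rules above can be expressed as a simple policy check that an IT team might run when planning a rollout. The sketch assumes three inputs per tenant: its cloud type, whether the organization requires processing inside the EU Data Boundary, and the admin toggle. The `Tenant` fields and labels are hypothetical illustrations, not a Microsoft configuration schema, and real eligibility may involve more conditions than shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tenant:
    # Hypothetical fields for illustration, not a Microsoft configuration schema.
    cloud: str               # "commercial", "government", or "sovereign"
    requires_eudb: bool      # organization requires EU Data Boundary processing
    anthropic_enabled: bool  # tenant admin toggle


def anthropic_allowed(t: Tenant) -> tuple[bool, str]:
    """Apply the availability rules described in the article, in order."""
    if t.cloud in ("government", "sovereign"):
        return False, "not offered in government or sovereign clouds"
    if t.requires_eudb:
        return False, "Anthropic models are currently excluded from the EU Data Boundary"
    if not t.anthropic_enabled:
        return False, "disabled by tenant administrator"
    return True, "allowed"


print(anthropic_allowed(Tenant("commercial", requires_eudb=False, anthropic_enabled=True)))
print(anthropic_allowed(Tenant("commercial", requires_eudb=True, anthropic_enabled=True)))
```

Returning a reason alongside the yes/no answer makes it easier to explain to a business unit why it does not see the new Researcher behavior.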

Productivity and procurement

The procurement story is equally important. Model diversification may reduce dependence on any one vendor and give enterprise buyers more negotiating power over time. In a market where AI pricing and model capabilities change rapidly, flexibility is a real asset. (microsoft.com)

There is also a hidden productivity benefit: less context switching. If the best model for the job is accessible inside the same Copilot interface that already knows the company’s files and policies, employees do not need to manage separate tools and prompts. That convenience is not trivial; it is the difference between experimentation and daily use. (microsoft.com)

Enterprise implications

- Stronger governance through Microsoft admin controls. (learn.microsoft.com)

- Better procurement flexibility by avoiding single-vendor lock-in. (microsoft.com)

- More usable research workflows inside the apps employees already use. (microsoft.com)

- Greater chance of measurable adoption because context stays inside Microsoft 365. (microsoft.com)

- Clearer separation between model capability and enterprise policy. (learn.microsoft.com)

Consumer and Knowledge Worker Impact

For individual users, the upgrade may feel less like a grand platform shift and more like a noticeable quality improvement in the answers they get. Researcher’s role is to handle messy, multi-source questions, so the most visible benefit will be in reports that feel better organized, more carefully checked, and less prone to surface-level confidence. That can matter a great deal for analysts, managers, consultants, and power users. (microsoft.com)

The consumer-style appeal is that users do not need to understand the model stack. Microsoft is explicitly arguing that the platform should make those choices on their behalf. In theory, that keeps the interface simple while the back end gets smarter. (microsoft.com)

What users may notice first

Users may notice that responses are more structured and better supported by citations. They may also see fewer weakly connected claims, because a second model has already reviewed the draft. Even if the improvement is subtle, the cumulative effect across daily use could be significant.

The main value proposition is time savings. If Researcher can produce a more credible first draft, users spend less time fact-checking and reorganizing. That is especially valuable for people who already use Microsoft 365 as the center of their working day. (microsoft.com)

The human factor

There is, however, a psychological shift as well. Users are being invited to trust an AI system that not only reasons, but critiques itself through another model. That can increase confidence, but it can also create overconfidence if people assume a multi-model answer is automatically correct. The system may be better, but it is still not a substitute for human judgment. (microsoft.com)

This is why Microsoft’s emphasis on citations and transparency matters. The more Researcher can show its work, the easier it is for users to verify conclusions and spot weak assumptions. In a research environment, explainability is not a luxury; it is part of the product’s utility.

User-facing benefits

- More coherent research drafts.

- Better source synthesis across documents and web material.

- Less need to manually prompt multiple tools. (microsoft.com)

- Stronger citation confidence when done well.

- Faster movement from question to usable briefing. (microsoft.com)

Competitive Implications

Microsoft’s move pressures almost every major AI platform vendor in different ways. For OpenAI, it reinforces that Microsoft is not treating it as the sole strategic model provider anymore. For Anthropic, it is a strong distribution win: Claude is now embedded inside one of the world’s biggest enterprise productivity suites, not just available through direct access or developer APIs. (microsoft.com)

For Google, this is a reminder that model quality alone is not the whole game. The winner in enterprise AI may be the company that can combine frontier intelligence with deployment trust, document context, and governance. Microsoft is aggressively trying to own that middle layer. (microsoft.com)

Microsoft’s positioning advantage

Microsoft has one notable advantage: it can present model choice as a feature of a larger productivity environment rather than as a standalone AI app. That gives it room to absorb changes in the model market without constantly renaming the product or retraining users. The result is a calmer customer experience even when the underlying model market is volatile. (microsoft.com)

It also gives Microsoft leverage in negotiations with model providers. If the company can route tasks to multiple frontier labs, it is less dependent on any single partnership. That kind of flexibility is a classic platform move, and it usually strengthens the platform owner over time. (microsoft.com)

Competitive pressure points

- OpenAI faces a world where Microsoft is multi-sourcing frontier models. (microsoft.com)

- Anthropic gains valuable enterprise distribution through Microsoft 365. (learn.microsoft.com)

- Google faces pressure to match not just model quality but workflow integration. (microsoft.com)

- Smaller AI vendors may struggle to compete unless they offer specialized strengths. (microsoft.com)

A broader market shift

This also reflects a broader industry pattern: customers are increasingly less interested in which lab made the model and more interested in whether the platform can deliver trustworthy outcomes. That is why Microsoft’s language leans so heavily on choice, governance, and work context. The value proposition is not simply “better AI,” but better enterprise AI plumbing. (microsoft.com)

Risks and Concerns

As impressive as the upgrade sounds, there are real risks in celebrating multi-model AI too quickly. The first concern is operational complexity. Combining models can improve results, but it can also make debugging, auditing, and explaining failures harder, especially when users do not know which model handled which stage of a response. (microsoft.com)

There is also a compliance issue. Microsoft says Anthropic models are excluded from certain geographic and government-cloud environments, which means organizations will face uneven availability across regions and tenant types. That unevenness can complicate rollout plans, especially for multinational companies. (learn.microsoft.com)
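
One mitigation for that opacity is to record which model produced each stage of an answer. The following is a minimal sketch of such a provenance trace under stated assumptions: the stage names, the model labels `model-a` and `model-b`, and the `Trace` class are hypothetical, and nothing here reflects how Microsoft actually logs Researcher runs.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StageRecord:
    stage: str         # e.g. "draft" or "review"
    model: str         # label of the model that handled the stage
    output_chars: int  # size of what that stage produced


@dataclass
class Trace:
    records: list[StageRecord] = field(default_factory=list)

    def run(self, stage: str, model: str, fn: Callable[[str], str], prompt: str) -> str:
        """Call one model for one stage and log which model produced the output."""
        output = fn(prompt)
        self.records.append(StageRecord(stage, model, len(output)))
        return output

    def summary(self) -> str:
        return " -> ".join(f"{r.stage}:{r.model}" for r in self.records)


trace = Trace()
draft = trace.run("draft", "model-a", lambda p: "draft text", "What changed in Q3?")
final = trace.run("review", "model-b", lambda p: p + " (reviewed)", draft)
print(trace.summary())  # draft:model-a -> review:model-b
```

A record like this does not explain why a model erred, but it at least tells an admin or auditor which stage and which model to look at first.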

Data boundary concerns

The EU Data Boundary exclusion is particularly important. Microsoft explicitly notes that Anthropic models deployed in its offerings are currently excluded from the EU Data Boundary and related in-country commitments, with phased rollout continuing through March 2026. That is a major caveat for regulated customers. (learn.microsoft.com)

This means some organizations will see the upside of model diversity while others will not be able to use the feature in the same way. That fragmentation could create internal inconsistency, where one business unit gets access to the new Researcher behavior and another does not. That kind of split is often overlooked in product announcements. (learn.microsoft.com)

Model reliability and trust

Another concern is that multi-model orchestration does not eliminate hallucinations. A critic model can reduce errors, but it can also approve a flawed draft if it shares the same blind spots or if the underlying retrieval is weak. Users may assume the presence of multiple models guarantees accuracy, when in reality it only improves the odds. (microsoft.com)

There is also the danger of hidden complexity. If a final answer is the result of several model passes, users may not understand how to interpret confidence, variance, or failure modes. The more sophisticated the pipeline, the more important it becomes to preserve transparency. (microsoft.com)

Risk summary

- Regional inconsistency may limit rollout value. (learn.microsoft.com)

- Government and sovereign clouds remain excluded. (learn.microsoft.com)

- Multi-model systems can still hallucinate or build on weak premises. (microsoft.com)

- More orchestration can mean more hidden complexity for admins. (learn.microsoft.com)

- Users may over-trust outputs because they are critique-validated. (microsoft.com)

Strengths and Opportunities

Microsoft’s Researcher upgrade has a lot going for it, especially if the company can keep the experience simple while the back end gets more intelligent. The most compelling opportunity is that Copilot can become a model-agnostic work layer where users get better answers without having to understand the model market. That is a powerful positioning move in a fast-changing industry. (microsoft.com)

- Better research quality through generation-plus-critique workflows.

- Stronger enterprise trust via Microsoft governance and admin controls. (learn.microsoft.com)

- Reduced vendor lock-in for Microsoft and, indirectly, for customers. (microsoft.com)

- Improved workflow continuity inside Microsoft 365 apps. (microsoft.com)

- Broader model choice without forcing users to leave the Copilot interface. (microsoft.com)

- Potentially faster adoption because the feature sits inside familiar tools. (microsoft.com)

- Competitive leverage against rivals that still sell a more single-stack AI story. (microsoft.com)

Risks and Concerns

The risks are manageable, but they are real, and Microsoft will need to communicate them carefully. The biggest concern is that the marketing story may outpace the user reality if regional exclusions, phased rollout, or admin toggles slow access. For enterprise customers, that gap can be frustrating if expectations are set too high. (learn.microsoft.com)

- Data residency limits may block use in sensitive environments. (learn.microsoft.com)

- Phased rollout means not every tenant gets the same experience immediately. (learn.microsoft.com)

- Benchmark gains may not generalize to every task or industry.

- Multi-model opacity could make failures harder to diagnose. (microsoft.com)

- User overreliance may increase if outputs feel more authoritative than they are. (microsoft.com)

- Competitive backlash could intensify as other vendors mirror the strategy. (microsoft.com)

Looking Ahead

The next phase will be about proving that Microsoft’s multi-model strategy is more than a temporary integration story. If Researcher continues to improve and the Frontier rollout expands cleanly, Microsoft could normalize the idea that users should not care which frontier lab powers a given task. That would be a major shift in enterprise AI behavior. (microsoft.com)

The more interesting question is whether Microsoft extends this orchestration approach deeper into other parts of Microsoft 365 and its broader agent ecosystem. The company has already indicated that model choice is moving across Copilot Studio, Power Platform, and Office agents. If that expansion continues, Researcher may be remembered as the first widely noticed proof point for a much larger platform transformation. (learn.microsoft.com)

What to watch

- Whether Microsoft expands Claude support beyond Frontier and preview-style access. (microsoft.com)

- Whether the DRACO benchmark improvement holds in broader real-world use.

- Whether Microsoft adds more explicit model-switching controls or keeps routing automated. (microsoft.com)

- Whether regional restrictions ease as compliance and residency issues are resolved. (learn.microsoft.com)

- Whether rivals respond with their own multi-model enterprise research features. (microsoft.com)

Source: Tech Times, “Microsoft Researcher AI Tool Upgrade Allows It to Use Multiple AI Models at the Same Time”