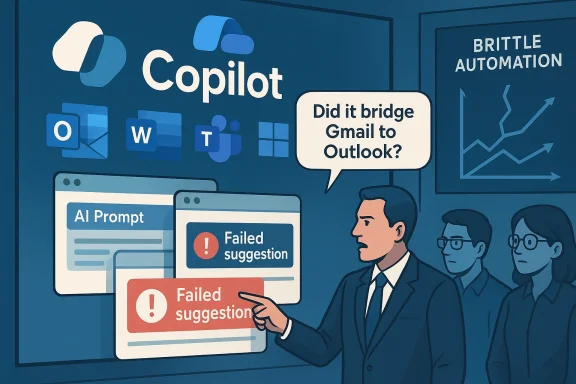

Peter Girnus’s one‑liner about a Copilot rollout that “couldn’t even bridge Gmail to Outlook” landed as a sharp, if comic, rebuke to the kind of executive optimism that treats AI as a turnkey productivity miracle, and the reaction it provoked is more revealing than the joke itself. The tweet’s viral spread crystallizes a pattern: large, public AI commitments; earnest executive narratives about agentic operating systems; and day‑to‑day product realities that frequently fall short of the marketing. This disconnect has real consequences for enterprise trust, developer morale, and how organizations choose to adopt generative AI tooling.

Why a joke matters now

Microsoft’s Copilot branding has become the company’s flagship AI story—tied into Office, Windows, Edge and developer tools—so any satire aimed at that stack lands broader than a single social post. The public conversation over late 2024 and 2025 shifted from feature announcements to a thorny debate over reliability, privacy, and how these assistants are being shipped to users. Viral mockery and community outrage reflect a deeper credibility gap between the promises of an “agentic OS” and the measured reality of multi‑step automation delivered to billions of endpoints.

Microsoft’s own framing of agents that can see, speak, and act across apps raises governance and UX expectations that the shipped experiences must meet. When demos or clips show Copilot recommending the wrong settings or proposing changes already in effect, the result is not merely an awkward ad; it becomes a reproducible case study that undermines trust.

What happened: satire and context

Peter Girnus’s viral post impersonated an executive who proudly announced a large Copilot roll‑out only for it to fail simple tasks. The scenario is intentionally absurd, and it resonates because many professionals recognize the underlying truth: AI features are often brittle in the wild. The post’s timing amplified its reach. Social platforms and community forums were already jittery over high‑profile Copilot demos and the “Microslop” backlash that framed many users’ experiences with a single, memorable word.

Key context around the reaction:

- Developers and admins complained about inconsistent behavior among Copilot instances (Outlook vs. Teams vs. Windows) and the appearance of AI UI elements that felt default or hard to opt out of.

- High‑visibility demos, such as a promoted clip that misdirected a user, were cited as repeatable examples of state‑blindness in agentic interactions.

- The satire spread at a time when X experienced outages and partial blackouts, which pushed conversations to other forums where criticism and memes quickly multiplied.

Overview: the structural faults the satire exposes

The Girnus tweet is funny because it maps onto several recurring, verifiable patterns in enterprise AI rollouts. Breaking those down:

1. Scope creep: one brand, many assistants

“Copilot” is intentionally an umbrella brand. That umbrella covers dozens of assistant experiences: GitHub Copilot for code, Copilot in Outlook for mail composition, Copilot Vision for screen analysis, and system‑level Copilot Actions that attempt multi‑step tasks. That breadth creates a brand identity problem: users expect consistent behavior under a single label, and inconsistent outcomes quickly generate distrust.

2. Defaults and perceived coercion

Users report AI features appearing prominently or returning after removal, and community scripts have sprung up to disable these features comprehensively, an indicator that opt‑out workflows feel insufficient. The existence of removal tools and scripts is itself a user‑level protest against vendor‑first defaults.

3. Marketing vs. operational reality

Executive narratives (celebratory claims about on‑device AI and agentic operating systems) collide with reproducible usability failures. When marketing showcases experiences that produce wrong results, the damage is amplified because the failure mode is visible and easy to reproduce.

4. Governance, provenance, and auditability

Enterprise IT teams consistently ask for auditable AI behavior: provenance, versioning, review workflows, and controls that prevent unauthorized agent actions. Without these tools, top‑down AI pushes look like liabilities rather than productivity wins. Analysts and forums repeatedly call for transparency and stronger governance.

Verifiable claims and what they mean

Any responsible critique needs to separate provable facts from rhetoric or transient social metrics. The load‑bearing, verifiable claims underlying the public outcry include:

- Microsoft documented a formal hardware bar for “Copilot+ PCs” tied to NPUs measured in TOPS; many Copilot+ features are specifically associated with devices featuring 40+ TOPS NPUs. This is an explicit product requirement and has driven OEM design and marketing decisions.

- Microsoft publicly acknowledged sizable financial commitments to AI partnerships and infrastructure (the company has disclosed in filings and coverage that it has made commitments on the order of $13 billion toward OpenAI and related AI initiatives). That magnitude explains why the company is vocal about AI’s strategic centrality. Treat headline figures as strategic indicators rather than audited line‑items unless you consult official filings.

- Company leaders have made statements about AI’s prevalence in software development: in public remarks at an industry event, Satya Nadella estimated that “maybe 20%, 30%” of code in some Microsoft repositories is written with software assistance. Multiple reputable outlets reported and analyzed that quote, and the meaning is nuanced—companies count autocomplete, scaffolding, compiled artifacts and AI suggestions differently. Use the figure as directional context, not a precise static metric.

- Windows 10 support officially ended on October 14, 2025, a milestone that materially affects upgrade cadence and Microsoft’s leverage to normalize Windows 11 and its AI surfaces. That date is a verified planning pivot for many enterprises.

- Social platform outages (X in mid‑January 2026) created amplification effects for viral posts and drove conversation to other venues. These outages are documented across outage trackers and tech press.

Despite the satire and backlash, Microsoft’s Copilot strategy contains elements with genuine technical and product merit. These strengths explain why enterprises keep experimenting with Copilot and related services.

- Deep platform integration: embedding AI across productivity apps and the OS enables scenarios that are difficult to replicate with third‑party overlays. When the pieces line up, Copilot can reduce friction for repeated, low‑risk tasks (templates, summaries, boilerplate, accessibility aids).

- Investment in on‑device acceleration: the Copilot+ hardware push drove a rapid NPU arms race in the PC industry, pushing OEMs to offer NPUs and enabling lower‑latency, privacy‑sensitive inference for suitable workloads. The 40+ TOPS specification created a clear engineering target for hardware partners.

- Enterprise governance primitives in development: Microsoft has productized controls for admins—enterprise Copilot licensing, admin toggles for GitHub Copilot, and staged release channels. These are imperfect, but they form the basis for stronger governance if Microsoft follows through.

- Productivity uplift in narrow domains: independent reports and hands‑on tests repeatedly show real savings for specific workflows, such as test scaffolding and document summarization, when AI is treated as a first draft, not a final artifact.

Risks and downsides worth attention

The satire highlights genuine, systemic risks that deserve concrete mitigation plans from vendors and enterprise adopters alike.

- Hallucinations and safety gaps: AI assistants can supply plausible-but-wrong answers or suggest unsafe code patterns. In regulated environments, such errors are not mere annoyances; they can cause operational faults. Risk increases when agents operate with elevated privileges or across sensitive data sets.

- Perceived or real coercion: default placements of AI features and insufficiently visible opt‑out mechanisms fuel distrust. Users and admins who feel forced into AI experiences will build shadow IT workarounds or remove official functionality en masse. Community tools that strip AI components demonstrate this effect.

- Governance immaturity: provenance, version control for models, and auditable decision trails are still evolving. Enterprises require defensible audit logs, model version pinning, and CI gates before they can rely on automated agents for production tasks.

- Talent and labor risk: executive claims that 20–50% of development could be performed by AI prompt unsettling workforce dynamics. Misframed automation strategies can erode morale or lead to premature headcount reductions without parallel investment in re‑skilling. Nadella’s remark (20–30%) is a directional indicator of adoption, not a policy prescription.

- Privacy and data exposure: features that record, summarize or index user activity (e.g., system‑level recall functions) must be designed with encryption, minimal retention, and clear access controls. Early research and proofs of concept showed how Recall‑style artifacts could be extracted, prompting scrutiny and mitigation work.
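The governance and privilege risks listed above can be made concrete with a small policy gate. What follows is a minimal Python sketch, not a Microsoft or Copilot API: `AgentAction`, `Policy`, and `evaluate` are hypothetical names, and the rule set (pinned model versions, an action allowlist, human sign-off for elevated privileges) is an assumption chosen to mirror the bullets.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple


@dataclass(frozen=True)
class AgentAction:
    """A proposed agent action (hypothetical schema, for illustration only)."""
    name: str                     # e.g. "summarize_document"
    model_version: str            # version string reported by the assistant
    elevated: bool = False        # True if the action needs admin-level privileges
    human_approved: bool = False  # True once a reviewer has signed off


@dataclass
class Policy:
    """Assumed enterprise policy: pinned model versions plus an action allowlist."""
    pinned_models: Set[str] = field(default_factory=set)
    allowed_actions: Set[str] = field(default_factory=set)


def evaluate(action: AgentAction, policy: Policy) -> Tuple[bool, str]:
    """Return (allowed, reason). Checks run in order; the first failure wins."""
    if action.model_version not in policy.pinned_models:
        return False, "model version is not pinned"
    if action.name not in policy.allowed_actions:
        return False, "action is not on the allowlist"
    if action.elevated and not action.human_approved:
        return False, "elevated action requires human sign-off"
    return True, "ok"


# Example policy with made-up model and action names.
policy = Policy(
    pinned_models={"model-2025-01"},
    allowed_actions={"summarize_document", "change_setting"},
)
print(evaluate(AgentAction("summarize_document", "model-2025-01"), policy))
print(evaluate(AgentAction("change_setting", "model-2025-01", elevated=True), policy))
```

The design choice here is deny-with-reason: every refusal carries an explanation that can be logged, which is the kind of defensible trail the governance bullet asks for.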

What organizations should do (practical guidance)

Enterprises can adopt a pragmatic, risk‑aware strategy that preserves upside while limiting downside. The following steps are sequential and actionable.

1. Inventory and classify

- Map every Copilot/Copilot+ feature that impacts users or data (Windows, Office, Edge, GitHub).

- Classify by impact (safety, privacy, regulatory risk) and value (time saved, error reduction).

2. Gate deployment behind governance

- Require model versioning and provenance capture for any AI that affects production artifacts.

- Add CI gates/scripts that automatically run static analysis, dependency scans, and security checks on AI‑suggested code or agentic edits.

3. Empower opt‑out and defaults

- Enforce conservative defaults: features that act or capture data should be opt‑in for end users by policy.

- Provide clear admin master switches and documented processes for removal (and test restores).

4. Measure empirically

- Track time‑to‑green build, bug density in AI‑influenced commits, false‑positive rates, and user satisfaction.

- Use these metrics for go/no‑go decisions.

5. Re‑skill and communicate

- Offer targeted re‑skilling programs (prompt engineering, model auditing, verification tools).

- Communicate transparently about what AI is replacing.

6. Use staging and pilot groups

- Run agentic automations in bounded pilot contexts with rollback plans and human signoff requirements.

- Expand only when measurable benefits are demonstrated.

This mix of technical controls, people investments, and conservative defaults will make AI adoption defensible rather than headline‑driven.
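As one way to make the measurement and pilot steps above concrete, the sketch below turns pilot metrics into a go/no‑go decision. The metric names follow the guidance in this section, but the `PilotMetrics` type, the `go_no_go` function, and every threshold are illustrative assumptions that an organization would replace with its own baselines.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class PilotMetrics:
    """Metrics from one pilot group (field names are assumptions)."""
    baseline_minutes_to_green: float  # time-to-green build without AI assistance
    pilot_minutes_to_green: float     # time-to-green build with AI assistance
    baseline_bugs_per_kloc: float     # bug density in ordinary commits
    pilot_bugs_per_kloc: float        # bug density in AI-influenced commits
    user_satisfaction: float          # survey score on a 0.0-1.0 scale


def go_no_go(m: PilotMetrics,
             min_speedup: float = 1.1,
             max_bug_ratio: float = 1.0,
             min_satisfaction: float = 0.7) -> Tuple[bool, List[str]]:
    """Return (go, blockers). All thresholds are placeholder assumptions."""
    if m.pilot_minutes_to_green <= 0 or m.baseline_bugs_per_kloc <= 0:
        raise ValueError("build time and baseline bug density must be positive")
    blockers: List[str] = []
    speedup = m.baseline_minutes_to_green / m.pilot_minutes_to_green
    bug_ratio = m.pilot_bugs_per_kloc / m.baseline_bugs_per_kloc
    if speedup < min_speedup:
        blockers.append(f"speedup {speedup:.2f}x is below {min_speedup:.2f}x")
    if bug_ratio > max_bug_ratio:
        blockers.append(f"bug density ratio {bug_ratio:.2f} exceeds {max_bug_ratio:.2f}")
    if m.user_satisfaction < min_satisfaction:
        blockers.append(f"satisfaction {m.user_satisfaction:.2f} is below {min_satisfaction:.2f}")
    return (not blockers, blockers)


# Made-up pilot numbers: builds got faster, but bug density rose.
go, blockers = go_no_go(PilotMetrics(30.0, 24.0, 2.0, 2.6, 0.8))
print(go, blockers)
```

With these made-up numbers the pilot is blocked on bug density alone, which shows why a single headline metric (faster builds) is not enough to justify expansion.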

How vendors (especially Microsoft) could repair trust

Constructive change is possible. The market signals generated by satire and social backlash are instructive, not merely performative.

- Lower the marketing temperature: swap sweeping slogans for clear, narrowly scoped demos that include caveats and required human review steps. Honest demos build credibility, not just excitement.

- Publish measurable evidence: anonymized case studies and verifiable telemetry showing where Copilot reduces time and where it fails would build trust with enterprise buyers and developers.

- Improve provenance and audit tooling: expose model provenance, prompt history, and decision trails for agent actions; integrate these into existing SIEM and audit platforms.

- Strengthen opt‑outs and admin controls: offer durable, well‑documented master switches and enterprise configuration packages that persist across updates and servicing. Community scripts like RemoveWindowsAI exist because official controls were perceived as insufficient—address that gap proactively.

- Tighten security around “memory” features: features that record desktop activity need clear encryption, per‑session entitlements, and verifiable deletion semantics to avoid becoming vectors for exfiltration or surveillance.
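To illustrate what a tamper‑evident decision trail for agent actions might look like, here is a minimal Python sketch of a hash‑chained log built on the standard library only. The record fields (`model_version`, `prompt`, `action`) and the `AuditTrail` class are hypothetical; this is not how Copilot or any Microsoft product records provenance, and a real deployment would forward such records to an existing SIEM rather than hold them in memory.

```python
import hashlib
import json
from typing import Dict, List


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    @staticmethod
    def _digest(record: Dict[str, str], prev_hash: str) -> str:
        # Canonical JSON (sorted keys) so the same record always hashes identically.
        payload = json.dumps(record, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, model_version: str, prompt: str, action: str) -> Dict[str, str]:
        record = {"model_version": model_version, "prompt": prompt, "action": action}
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = dict(record, prev_hash=prev_hash, hash=self._digest(record, prev_hash))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            record = {k: entry[k] for k in ("model_version", "prompt", "action")}
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(record, prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("model-2025-01", "Summarize the Q3 report", "summarize_document")
trail.append("model-2025-01", "Enable dark mode", "change_setting")
print(trail.verify())                        # True: chain is intact
trail.entries[0]["action"] = "delete_file"   # simulate after-the-fact tampering
print(trail.verify())                        # False: the edit is detected
```

A hash chain only shows that a log was altered, not who altered it; access controls and external anchoring of the latest hash are left out of this sketch.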

The upside if vendors get it right

If these approaches are taken seriously, the potential gains remain compelling and concrete:

- Lowered cognitive load for routine tasks (summaries, format conversions, first‑draft generation).

- Faster iteration cycles for prototyping and testing in controlled contexts.

- On‑device capabilities (low latency, offline inference, improved privacy assurances) that unlock new UX patterns for collaboration on Copilot+ hardware.

A final assessment: why satire matters as a corrective

Peter Girnus’s viral jab at Copilot is hilarious because comedy compresses a long list of user frustrations into a single, memorable image: an AI rollout that still struggles with everyday plumbing. That image matters because it highlights where attention should fall: not on hype, but on durable engineering, user control, and workplace safety.

The broader lesson for product teams, executives and IT leaders is straightforward: when you attach billions in investment and a brand umbrella to AI, you also attach high expectations for reliability, explainability and governance. If those expectations aren’t met, satire becomes a credible signal of erosion in user trust and a practical call to refocus on the fundamentals that make AI truly useful in real enterprise contexts.

Closing: practical takeaways in one paragraph

Treat AI as a system problem, not a marketing slogan: insist on provenance, CI gates, conservative defaults, and measurable pilots before wide deployment; push vendors for durable admin controls and transparent evidence of benefit; and remember that a well‑timed joke, like Girnus’s, often points to a fixable gap between rhetoric and the daily reality of work.

Source: WebProNews Peter Girnus’s Viral Tweet Satirizes Microsoft Copilot AI Hype Fails