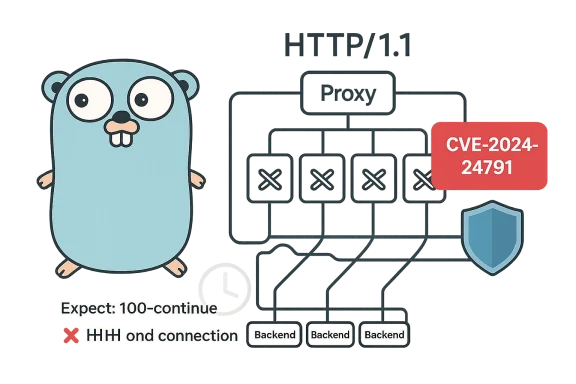

Go’s net/http standard library contains a subtle protocol-handling bug, tracked as CVE-2024-24791, that can be weaponized to cause sustained denial-of-service conditions against Go-based HTTP proxies and other components that reuse HTTP connections. Operators should treat it as a high-priority patching and mitigation exercise for any public- or internal-facing service that depends on Go’s HTTP client behavior. (go.dev)

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

The HTTP/1.1 mechanism called Expect: 100-continue exists to optimize large request bodies: a client can send only headers with Expect: 100-continue set, wait for the server to reply with an informational 100 Continue, and only then send the (potentially large) body. This avoids unnecessary uploads when the server will reject the request on headers alone (for example, due to authentication or an invalid route). The RFC leaves room for servers to either respond with a 1xx informational code to prompt the body or return a final non-1xx response immediately if the body is not needed. The interplay between the client’s decision to delay sending the body and a server returning a final status must be handled carefully to keep the connection protocol-consistent.

In Go’s net/http implementation, the client-side handling of that exact interaction was inconsistent. In some cases, when a server responded with a non‑informational status (200 or higher) to a request that included Expect: 100-continue, the Go HTTP client could leave the underlying connection in an invalid state where the connection’s next request would fail or the client would incorrectly reuse a connection that was still waiting for a body to be sent. That misbehavior is the essence of CVE-2024-24791. (go.dev)

This is not a theoretical edge-case limited to toy code: the problem is practical when it affects connection reuse in proxies, reverse proxies, and other components that rely on persistent connections (HTTP keep-alive). In a common, high-impact scenario an attacker can repeatedly send specially crafted requests that provoke non‑informational responses from upstream backends, causing a backend connection pool to accumulate “poisoned” entries; each poisoned connection will make one subsequent request fail, and repeated abuse can exhaust healthy backend connections and deny service to legitimate traffic. Multiple vendor advisories, vulnerability trackers, and downstream vendors confirmed the impact and issued patches or advisories.

Technical root cause — what actually goes wrong

How Expect: 100-continue is supposed to work

- Client sends headers with Expect: 100-continue and sets a timer (or optionally sends the body immediately).

- Server either responds with:

  - 100 Continue (informational): the client sends the body and the flow proceeds, or

  - a final status (>= 200): the server indicates it is not reading the body; with HTTP/1.1 the client must still complete or abort sending the body before reusing the connection, otherwise the request/response boundaries will be misaligned.

- Proper implementations therefore ensure that if a client decides not to send the body after a final response, the connection is either closed or otherwise advanced to a safe state before reusing it.
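The two server outcomes above can be observed with a small local experiment. The following is a minimal sketch, assuming a throwaway httptest server; the helper names (sendWithExpect, newClient, newServer) and the /upload and /reject routes are hypothetical and exist only for illustration. It relies on Go's own server sending 100 Continue when a handler first reads the request body, and on a handler that never reads the body producing a final status instead.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"strings"
	"time"
)

// sendWithExpect posts body to url with Expect: 100-continue set and
// returns the final status code the server answered with.
func sendWithExpect(client *http.Client, url, body string) (int, error) {
	req, err := http.NewRequest(http.MethodPost, url, strings.NewReader(body))
	if err != nil {
		return 0, err
	}
	req.Header.Set("Expect", "100-continue")
	resp, err := client.Do(req)
	if err != nil {
		return 0, err
	}
	defer resp.Body.Close()
	io.Copy(io.Discard, resp.Body)
	return resp.StatusCode, nil
}

// newClient returns a client whose transport waits up to one second for
// 100 Continue before sending the body anyway (the "timer" in the list above).
func newClient() *http.Client {
	return &http.Client{
		Timeout:   5 * time.Second,
		Transport: &http.Transport{ExpectContinueTimeout: time.Second},
	}
}

// newServer exposes two hypothetical routes, one per server outcome.
func newServer() *httptest.Server {
	mux := http.NewServeMux()
	// Reading the body prompts the server to send 100 Continue first.
	mux.HandleFunc("/upload", func(w http.ResponseWriter, r *http.Request) {
		n, _ := io.Copy(io.Discard, r.Body)
		fmt.Fprintf(w, "read %d bytes", n)
	})
	// Rejecting on headers alone yields a final status and no 100 Continue.
	mux.HandleFunc("/reject", func(w http.ResponseWriter, r *http.Request) {
		w.WriteHeader(http.StatusUnauthorized)
	})
	return httptest.NewServer(mux)
}

func main() {
	srv := newServer()
	defer srv.Close()
	client := newClient()
	for _, path := range []string{"/upload", "/reject"} {
		code, err := sendWithExpect(client, srv.URL+path, "payload")
		fmt.Println(path, code, err)
	}
}
```

Go's own server marks the /reject response for connection close because the body was never read, which is one of the safe outcomes described above. Backends written with other stacks may keep the connection open instead.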

Where net/http diverged from a robust strategy

Go’s net/http client sometimes chose not to send the request body when it observed a non-1xx response early, and then allowed connection reuse while the server might still be expecting or reading the body. That race left the connection in a protocol-ambiguous state: the server and client no longer agreed on where the next request begins, so the next request on that connection could be misinterpreted, fail, or otherwise behave incorrectly.

Crucially, in typical reverse-proxy setups built atop net/http/httputil.ReverseProxy, the proxy forwards the attacker’s Expect: 100-continue headers to the backend. If the backend responds with a final status (say, because it’s an endpoint that returns 404 or 401 without needing the body), the proxy’s connection to that backend can become invalid but remain counted as an available persistent connection. A small number of crafted requests can therefore “poison” many connections and reduce the backend pool availability quickly, producing a denial of service for normal users. The public Go issue that tracks the bug explains the corner cases and the inconsistent behavior between HTTP/1 and HTTP/2 code paths. (go.dev)

Real-world attack scenario — step-by-step

1. Attacker crafts a simple HTTP/1.1 request with the header Expect: 100-continue.

2. That request targets a service fronted by a Go-based reverse proxy (common in cloud-native stacks).

3. The reverse proxy forwards the request to one of its backend servers.

4. The backend responds immediately with a non‑1xx final status (for example, a 404 or 401), rather than sending 100 Continue.

5. The Go client/proxy sees the final response and, due to the bug, may not send the body and may also leave the connection in a state that makes it unsafe to reuse.

6. The proxy’s connection to that backend remains in its pool and is reused for a later request; the next request on that connection fails or causes the proxy to generate network-level failures (timeouts, connection resets, malformed requests).

7. Repeat steps 1–6 at scale or in rapid sequence: each malicious attempt can poison another pooled connection until the proxy has too few healthy connections to serve legitimate traffic, causing an effective denial of service.

Who’s affected — scope and vendor impact

- Affected component: the Go standard library’s net/http HTTP/1.1 client and anything that uses it (notably net/http/httputil.ReverseProxy). The Go project flagged the issue and assigned GO-2024-2963 / CVE-2024-24791.

- Affected versions (upstream semantics): Go releases before 1.21.12, and Go 1.22 releases before 1.22.5, were identified as vulnerable (vendor tracking writes the second range as 1.22.0-0 up to but not including 1.22.5). Downstream vendors produced patches and advisories at different times, so check your environment’s Go runtime version and the versions of vendor-supplied Go toolchains and images.

- Vendors and downstream products: many vendor images, distributions, and products that embed or build with affected Go versions needed updates; Red Hat, Amazon Linux, Debian, Ubuntu (USN), Oracle Linux, and other distributions issued advisories or packages to remediate the problem. Enterprise products that embed Go-based agents, collectors, or proxies (for example, container runtimes, monitoring agents, API gateways written in Go) were also listed by vigilance services and vendor bulletins.
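The version ranges above translate into a simple comparison. The sketch below is illustrative only: parseGoVersion and affected are hypothetical helper names, the parsing handles plain release strings such as go1.22.4, and anything it cannot parse (release candidates, development builds) is reported as unknown rather than guessed.

```go
package main

import (
	"fmt"
	"runtime"
	"strconv"
	"strings"
)

// parseGoVersion extracts major, minor, and patch from strings such as
// "go1.22.4" or "go1.21". It returns ok=false for anything else.
func parseGoVersion(v string) (major, minor, patch int, ok bool) {
	v = strings.TrimPrefix(v, "go")
	// Drop trailing build tags, e.g. "1.22.4 X:boringcrypto".
	if i := strings.IndexAny(v, " -+"); i >= 0 {
		v = v[:i]
	}
	parts := strings.Split(v, ".")
	if len(parts) < 2 || len(parts) > 3 {
		return 0, 0, 0, false
	}
	nums := make([]int, 3) // a missing patch component defaults to 0
	for i, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil {
			return 0, 0, 0, false
		}
		nums[i] = n
	}
	return nums[0], nums[1], nums[2], true
}

// affected applies the ranges quoted above: releases before 1.21.12,
// and 1.22 releases before 1.22.5.
func affected(version string) (vulnerable, known bool) {
	major, minor, patch, ok := parseGoVersion(version)
	if !ok || major != 1 {
		return false, false
	}
	switch {
	case minor < 21:
		return true, true
	case minor == 21:
		return patch < 12, true
	case minor == 22:
		return patch < 5, true
	default:
		return false, true
	}
}

func main() {
	// runtime.Version reports the toolchain that built this binary.
	v := runtime.Version()
	vulnerable, known := affected(v)
	fmt.Printf("%s vulnerable=%t known=%t\n", v, vulnerable, known)
}
```

Distribution packages may backport the fix without changing the upstream version number, so a check like this is a first-pass filter and not a substitute for the vendor advisory.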

Detection — how to spot an active abuse or a vulnerable deployment

Look for these operational signals in logs and telemetry:

- Unusual clustering of inbound requests that contain the header Expect: 100-continue, especially originating from a small set of IPs or from scripted clients.

- Sudden increases in backend errors or proxy-side 502/503 responses while overall incoming request volume remains steady.

- A rising count of connection failures or “broken pipe” / protocol error messages between proxy and backend.

- Increased rates of connection churn or unexpectedly high numbers of requests that require backend connection re-establishment (which can indicate the pool contains poisoned connections).

- Application-layer logs that show request bodies not being received despite early responses, or handlers attempting to read bodies after a response was already written (a sign of protocol mismatch).

Remediation and mitigations — short-term and permanent

First, patch: the authoritative fix is to upgrade Go or the affected binary packages

- The Go project fixed the issue in Go 1.21.12 and Go 1.22.5, and downstream packagers bundled those fixes into point releases. Upgrade to at least those releases or apply the package updates distributed by your OS or vendor. The Go vulnerability database entry (GO-2024-2963) and the official issue and change list are the definitive fix references. (go.dev)

- Distribution packages carry the backported fix under their own package versions, which may not match upstream version numbers. Confirm your vendor’s package advisory for the exact package names and versions for your OS.

If you cannot patch immediately — pragmatic interim mitigations

- Strip or normalize the Expect header at the edge

  - Remove Expect: 100-continue from incoming requests at your external ingress (load balancer, API gateway, or fronting proxy). This prevents the backend from entering the Expect flow entirely and avoids the vulnerable client-path behavior. Several write-ups suggested header stripping as a short-term workaround; while it may reduce performance benefits for some legitimate large uploads, it prevents the specific connection-poisoning vector. Label this as a temporary workaround, not a permanent substitute for patching.

- Avoid connection reuse if practical

  - Configure your reverse proxy or HTTP client to reduce or disable connection reuse to backends (for example, reduce keep‑alive or limit pool sizes). This has a performance cost (higher TCP connection churn and CPU) but will blunt the impact of poisoned pooled connections. Use this only as an emergency measure while patching. Document and monitor the resource impact carefully.

- Rate-limit or block abusive clients

  - Deploy rate-limits keyed by source IP, API key, or other identifier to slow any actor attempting to poison many backend connections quickly.

- Harden backend responses

  - Where possible, modify backend servers to behave more consistently with Expect semantics: explicitly send 100 Continue when the backend will read the body or close the connection when returning a final response that will not read the body. In practice this requires careful code changes and is harder than patching the client library; still, in controlled environments you can consider server-side hardening.

- Investigate product-specific guidance

  - If you run a vendor-managed appliance or distribution (for example, container runtime images, base images, or security appliances), follow that vendor’s remediation path. Many vendors released updated builds and images. HAProxy Technologies published fixes for its products and noted vendor-specific release guidance. Some vendors stated that no safe workaround was available other than patching, reinforcing that upgrades are the recommended route.
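For teams whose affected component is itself a Go reverse proxy, the first two mitigations (stripping Expect and avoiding connection reuse) can be sketched directly on httputil.ReverseProxy. This is an illustrative sketch, not a vetted configuration: newHardenedProxy and roundTrip are hypothetical names, and the demo backend is a local httptest server. With Expect removed from the outbound request, the proxy's transport sends the body to the backend immediately and never enters the wait-for-100 path.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"net/http/httputil"
	"net/url"
	"strings"
	"time"
)

// newHardenedProxy builds a single-host reverse proxy with two emergency
// mitigations applied: Expect is stripped before forwarding, and backend
// connections are never reused.
func newHardenedProxy(backend *url.URL) *httputil.ReverseProxy {
	proxy := httputil.NewSingleHostReverseProxy(backend)
	director := proxy.Director
	proxy.Director = func(r *http.Request) {
		director(r)
		r.Header.Del("Expect") // backend never sees Expect: 100-continue
	}
	proxy.Transport = &http.Transport{
		DisableKeepAlives: true, // one connection per request; costs throughput
	}
	return proxy
}

// roundTrip sends one Expect: 100-continue request through the proxy and
// reports the Expect value the backend saw and the status the client got.
func roundTrip() (seen string, status int, err error) {
	seenCh := make(chan string, 1)
	backend := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		seenCh <- r.Header.Get("Expect")
		io.Copy(io.Discard, r.Body)
		w.WriteHeader(http.StatusOK)
	}))
	defer backend.Close()

	target, err := url.Parse(backend.URL)
	if err != nil {
		return "", 0, err
	}
	front := httptest.NewServer(newHardenedProxy(target))
	defer front.Close()

	req, err := http.NewRequest(http.MethodPost, front.URL+"/upload", strings.NewReader("payload"))
	if err != nil {
		return "", 0, err
	}
	req.Header.Set("Expect", "100-continue")
	client := &http.Client{
		Timeout:   5 * time.Second,
		Transport: &http.Transport{ExpectContinueTimeout: time.Second},
	}
	resp, err := client.Do(req)
	if err != nil {
		return "", 0, err
	}
	defer resp.Body.Close()
	io.Copy(io.Discard, resp.Body)
	return <-seenCh, resp.StatusCode, nil
}

func main() {
	seen, status, err := roundTrip()
	fmt.Printf("backend saw Expect=%q, client got %d, err=%v\n", seen, status, err)
}
```

Either mitigation may be enough on its own; they are combined here only to show both knobs. Both should be removed once the proxy is rebuilt with a fixed toolchain.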

Long-term hardening

- Adopt schema or behavior-driven ingress normalization: explicitly validate and normalize headers at the edge so that backend ecosystems don’t experience protocol semantics surprises.

- Add synthetic load tests for connection-pool resilience as part of CI/CD smoke tests. A simple test that issues Expect: 100-continue requests and validates that connection reuse remains safe will catch regressions early.

- Keep language toolchains and runtimes on a predictable update cadence, and subscribe to your distribution’s security advisories — many vendors bundled Go fixes into platform point releases.
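The smoke test suggested in the list above could look roughly like the following. It is a sketch under several assumptions: serveConn is a hypothetical, deliberately minimal test double (not a real server) that answers Expect: 100-continue with a final 401, keeps the connection open, and then drains any body the client sends; probe and post are likewise illustrative names. The idea is that a follow-up request issued by the same client should still succeed. On an affected toolchain the follow-up may fail or time out, although the exact failure mode is not guaranteed by this sketch.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"net"
	"net/http"
	"strings"
	"time"
)

// serveConn answers every request on one connection. Requests carrying
// Expect: 100-continue get a final 401 with no 100 Continue; the body is
// drained afterwards so server-side request boundaries stay aligned.
func serveConn(conn net.Conn) {
	defer conn.Close()
	br := bufio.NewReader(conn)
	for {
		req, err := http.ReadRequest(br)
		if err != nil {
			return // client closed the connection or sent unparseable bytes
		}
		status := "200 OK"
		if strings.EqualFold(req.Header.Get("Expect"), "100-continue") {
			status = "401 Unauthorized"
		}
		io.WriteString(conn, "HTTP/1.1 "+status+"\r\nContent-Length: 0\r\n\r\n")
		io.Copy(io.Discard, req.Body)
	}
}

// post sends a small POST, optionally with Expect: 100-continue.
func post(client *http.Client, url string, expect bool) (int, error) {
	req, err := http.NewRequest(http.MethodPost, url, strings.NewReader("payload"))
	if err != nil {
		return 0, err
	}
	if expect {
		req.Header.Set("Expect", "100-continue")
	}
	resp, err := client.Do(req)
	if err != nil {
		return 0, err
	}
	defer resp.Body.Close()
	io.Copy(io.Discard, resp.Body)
	return resp.StatusCode, nil
}

// probe issues an Expect request followed by a plain request on the same
// client and returns both status codes.
func probe() (first, second int, err error) {
	ln, err := net.Listen("tcp", "127.0.0.1:0")
	if err != nil {
		return 0, 0, err
	}
	defer ln.Close()
	go func() {
		for {
			conn, err := ln.Accept()
			if err != nil {
				return // listener closed
			}
			go serveConn(conn)
		}
	}()
	client := &http.Client{
		Timeout:   5 * time.Second,
		Transport: &http.Transport{ExpectContinueTimeout: time.Second},
	}
	url := "http://" + ln.Addr().String() + "/"
	if first, err = post(client, url, true); err != nil {
		return first, 0, err
	}
	second, err = post(client, url, false)
	return first, second, err
}

func main() {
	first, second, err := probe()
	fmt.Println("expect request:", first, "follow-up:", second, "err:", err)
}
```

The follow-up uses POST on purpose: the Go transport can transparently retry idempotent requests such as GET on a fresh connection, which could hide a broken pooled connection from the probe.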

Operational guidance — checklist for immediate action

- Inventory: find all components that use Go’s net/http client (reverse proxies, sidecars, agents, CLI tools used in pipelines). Package manifests, Dockerfile / image layer inspection, and “go version” checks in runtime containers will help.

- Patch: apply Go runtime updates or vendor-supplied packages that include the fix. Confirm the package version maps to the CVE fix referenced in your vendor advisory.

- Mitigate: if patching isn’t immediately possible, strip Expect headers at the ingress or apply temporary connection-pool conservative settings as an emergency measure. Monitor the cost of these mitigations on throughput and latency.

- Monitor: watch for Expect: 100-continue spikes, backend error patterns, and sudden connection pool depletion. Create alerts tied to these signatures.

- Post-mortem: if you observed an outage tied to this behavior, collect connection pool metrics, gateway logs, and packet captures (if feasible) to reconstruct how many pooled connections were poisoned and whether any client IPs or artifacts point to scanning or exploitation. Incorporate the findings into incident response runbooks.

Why this matters — trade-offs, risk, and lessons for secure protocol handling

This vulnerability is instructive for several reasons:

- Protocol edge-cases are not academic: small, normative behaviors (like Expect: 100-continue) can have outsized operational effects when paired with connection reuse and load-balancing logic.

- Library correctness matters across the supply chain: the Go runtime is widespread, and vulnerabilities in a standard library can cascade into many downstream products. Multiple distribution trackers and vendors mapped the issue into their advisories and pushed fixes — a reminder that patching language runtimes is a core part of infrastructure security.

- There is rarely a zero-cost workaround: disabling connection reuse or stripping headers reduces certain risks but costs performance or functionality. Teams must balance short-term availability with long-term correctness and deploy proper fixes.

Verification and references (how we validated the facts)

To ensure the technical assertions in this article are accurate we cross-referenced:

- The Go project’s vulnerability and issue tracking pages and change lists that document the design discussion and the patch. The public Go issue describing Expect/100-continue handling and the associated change list are the primary canonical sources for the technical root cause and the intended behavioral change. (go.dev)

- The Go vulnerability database / pkg.go.dev vulnerability entry (GO-2024-2963) that maps the CVE to affected symbols and the version ranges. That is the authoritative mapping for affected versions of the standard library.

- Multiple vendor and distribution advisories (Amazon ALAS, Red Hat errata, Ubuntu USNs, Debian tracker) and vendor blogs (HAProxy) that document vendor-specific impacts and the availability of patches, ensuring practical guidance for operators running packaged distributions.

- Public vulnerability aggregators and trackers (NVD, cvedetails, cvefeed) to confirm dates, CVE metadata, and differing CVSS notations for operational context. Note that NVD sometimes shows “awaiting analysis” — always check vendor advisories for the most actionable package-level guidance.

Final recommendations — concrete steps for every team

- Immediately inventory all services that run Go binaries or embed Go libraries, and map them to the runtime versions they were built with.

- Prioritize patching internet-facing reverse proxies, API gateways, and any service that exposes an HTTP endpoint and reuses backend connections.

- If you cannot patch at once, implement ingress header normalization to strip Expect: 100-continue and apply conservative connection pooling settings for affected proxy components.

- Add detection rules: search logs for Expect: 100-continue, set alerts for rapid increases, and monitor backend error rates closely during and after mitigation.

- Schedule a follow-up after patching: roll back whatever temporary mitigations you applied (if they affected performance), validate that the upgrade removed the abnormal connection-failure signals, and document the timeline thoroughly.
