CVE-2026-2708 is a reminder that some of the most consequential web vulnerabilities still begin with a deceptively small parsing decision: what should a server do when an HTTP request contains more than one Content-Length header? The flaw, assigned to libsoup, concerns HTTP/1 request smuggling caused by ambiguous body framing, with Red Hat rating the issue low at CVSS 3.7 while other ecosystem trackers treat the broader class of behavior with more urgency. For WindowsForum readers, the practical lesson is not that Windows itself has suddenly gained a new native attack surface, but that modern Windows, Linux, cloud, container, and cross-platform application stacks routinely inherit risk from shared open-source components.

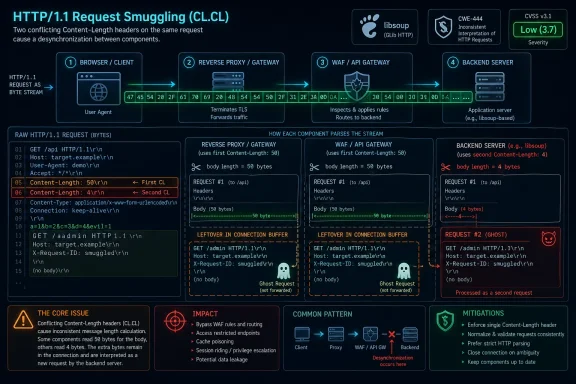

For WindowsForum readers, the most important development will be whether any Microsoft product advisory links CVE-2026-2708 to a concrete product update rather than merely listing the CVE for ecosystem awareness. If that happens, normal Microsoft servicing channels may become relevant. Until then, the practical work is inventory, Linux package maintenance, container rebuilds, and vendor follow-up.

CVE-2026-2708 is not the loudest vulnerability of the year, and for many environments it will not be the most urgent. Its importance lies in what it exposes about modern software: a small open-source parser decision can ripple through proxies, containers, Microsoft vulnerability tracking, Linux distributions, and enterprise risk models. The right response is calm but disciplined—inventory the component, understand the topology, patch through supported channels, and treat ambiguous HTTP framing as a security boundary rather than a compatibility nuisance.

Source: NVD Security Update Guide - Microsoft Security Response Center

Overview

libsoup is the GNOME project’s HTTP client and server library, widely used in Linux desktop software, embedded applications, and cross-platform projects that rely on the GLib ecosystem. It is not a household name like OpenSSL, curl, or nginx, but it occupies the same quiet layer of infrastructure where parsing bugs can have disproportionate effects. When a library handles HTTP framing, it becomes part of the trust boundary between the network and application logic.

The vulnerability tracked as CVE-2026-2708 sits in libsoup’s HTTP/1 header parsing behavior. The reported issue centers on the way header values were appended without rejecting duplicate or conflicting Content-Length fields. In practical terms, an attacker may be able to craft a request where one component in a chain believes the body is one length while another component believes it is another.

That mismatch is the essence of HTTP request smuggling, also known as HTTP desynchronization. The attack does not require breaking encryption, guessing credentials, or exploiting memory corruption. Instead, it exploits disagreement between parsers, proxies, load balancers, application servers, and libraries about where one request ends and the next begins.

Historically, request smuggling has been associated with reverse proxies, CDNs, web application firewalls, and backend servers that disagree over Content-Length and Transfer-Encoding rules. What makes the libsoup case notable is that it reaches into a library used by many applications rather than a single public web server product. That makes exposure highly dependent on context, packaging, and deployment topology.

Why this CVE deserves attention

The official scoring from Red Hat gives CVE-2026-2708 a CVSS 3.1 base score of 3.7, reflecting high attack complexity and limited direct impact. That score may be reasonable for the generic library flaw, but it can understate risk in a real environment where libsoup is placed behind a proxy, service mesh, API gateway, or load balancer.

The key dates are also worth disentangling. Public distribution trackers began showing information about the issue in February 2026, while the NVD record cited by Microsoft’s vulnerability page was published on April 23, 2026 and last modified shortly afterward. That gap illustrates a familiar reality in vulnerability management: security teams often see the same issue arrive in different databases at different times.

How HTTP Request Smuggling Actually Works

HTTP/1.1 depends on message framing rules to decide where a request body ends. A server can use a Content-Length header to read a fixed number of bytes, or it can use Transfer-Encoding: chunked to read chunks until a terminating marker appears. Trouble begins when a request contains conflicting signals and different systems make different choices.

Request smuggling is therefore not just a bug in one parser. It is a protocol interpretation gap between multiple parsers. A front-end proxy may accept, normalize, or forward a malformed request, while the backend may parse it in a way that leaves attacker-controlled bytes queued as the beginning of a second request.

In the libsoup case, the reported problem involves accepting duplicate or conflicting Content-Length values, commonly described as CL.CL behavior. Related descriptions from ecosystem advisories also reference TE+CL, where Transfer-Encoding and Content-Length appear together. Both patterns are dangerous because they create ambiguity about body boundaries.

The parser disagreement problem

The danger comes from chained infrastructure. A browser, proxy, WAF, CDN, load balancer, application server, and library may all touch the same request before business logic sees it. If even two of those components disagree, attackers may be able to influence the next request processed on a reused connection.

A simplified vulnerable flow looks like this:

- The attacker sends a request with conflicting framing headers.

- The front-end component interprets the request one way and forwards it.

- The backend component interprets the same bytes differently.

- Extra bytes remain in the backend connection buffer.

- The backend treats those bytes as part of a following request.
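The flow above can be reproduced with a toy model. The sketch below is not libsoup code: `split_request` is a hypothetical helper, and the "first wins" and "last wins" policies are assumed behaviors chosen only to show how two hops can disagree about the same bytes.

```python
"""Toy illustration of CL.CL desynchronization (not libsoup code)."""

CRLF = b"\r\n"


def split_request(stream: bytes, pick: str) -> tuple[bytes, bytes]:
    """Return (body, leftover) for the first request in `stream`.

    `pick` selects which Content-Length wins when several are present:
    "first" or "last". Real parsers can differ in this kind of choice.
    """
    head, _, rest = stream.partition(CRLF + CRLF)
    lengths = []
    for line in head.split(CRLF)[1:]:  # skip the request line
        name, _, value = line.partition(b":")
        if name.strip().lower() == b"content-length":
            lengths.append(int(value.strip()))
    if not lengths:
        length = 0
    else:
        length = lengths[0] if pick == "first" else lengths[-1]
    return rest[:length], rest[length:]


# Bytes the attacker hopes the backend will treat as a separate request.
smuggled = b"GET /internal HTTP/1.1\r\nHost: backend\r\n\r\n"
raw = (
    b"POST /public HTTP/1.1\r\n"
    b"Host: example.test\r\n"
    b"Content-Length: " + str(len(smuggled)).encode() + b"\r\n"
    b"Content-Length: 0\r\n"
    b"\r\n" + smuggled
)

# Hypothetical front end trusts the first header; hypothetical backend the last.
front_body, front_left = split_request(raw, "first")
back_body, back_left = split_request(raw, "last")
print("front-end leftover:", front_left)
print("backend leftover:  ", back_left)
```

In this model the front end sees one complete POST with nothing left over, while the backend sees an empty POST followed by queued bytes that parse as a second request.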

The Specific libsoup Weakness

CVE-2026-2708 is described as a flaw in libsoup’s HTTP/1 header parsing logic, specifically involving the path that appends header values without validating duplicate or conflicting Content-Length fields. The named function, soup_message_headers_append_common(), is significant because header appending sounds harmless until the field in question controls message boundaries. For ordinary metadata headers, duplicates may be acceptable; for framing headers, duplicates can be dangerous.

The relevant weakness category is CWE-444, “Inconsistent Interpretation of HTTP Requests,” which is the formal bucket for request smuggling and related desynchronization flaws. The classification matters because it tells defenders not to look for a crash or a memory leak. The bug is semantic: the program accepts input that should have been rejected or normalized before trust was extended.

libsoup is also used in both client and server contexts. The risk profile changes sharply depending on whether the affected code path is parsing inbound requests to an embedded server, outbound responses, or traffic through an intermediary-like component. This is why advisories can appear conservative while security engineers still take the issue seriously.

Duplicate Content-Length is not a cosmetic error

A duplicate Content-Length header with identical values can sometimes be normalized safely under strict rules. A duplicate Content-Length header with different values is a fundamentally different situation. It forces a recipient to choose which length is authoritative, and attackers rely on different components making different choices.

The fix strategy for this class of vulnerability is conceptually simple but operationally important:

- Reject conflicting Content-Length values rather than picking one.

- Reject or safely handle Transfer-Encoding plus Content-Length combinations.

- Close the connection after malformed framing to avoid queued-byte attacks.

- Normalize only when the relevant specification permits it.

- Add regression tests for CL.CL and TE+CL cases.
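A minimal sketch of the first, second, and fourth points might look like the following. It is an illustration of strict framing validation in general, not the upstream libsoup patch; `FramingError` and `resolve_body_length` are hypothetical names, and treating any Transfer-Encoding header as "chunked" is a simplifying assumption.

```python
from typing import Optional


class FramingError(ValueError):
    """Raised when a request's body framing is ambiguous or invalid."""


def resolve_body_length(headers: list[tuple[str, str]]) -> Optional[int]:
    """Return the body length, or None when Transfer-Encoding governs the body.

    `headers` is the ordered list of (name, value) pairs as received.
    On FramingError the caller is expected to answer 400 and close the
    connection so that no queued bytes are parsed as a new request.
    """
    lengths = set()
    has_transfer_encoding = False
    for name, value in headers:
        lname = name.strip().lower()
        if lname == "content-length":
            # A comma-separated list counts as several values.
            for part in value.split(","):
                part = part.strip()
                if not (part.isascii() and part.isdigit()):
                    raise FramingError(f"invalid Content-Length: {value!r}")
                lengths.add(int(part))
        elif lname == "transfer-encoding":
            has_transfer_encoding = True
    if has_transfer_encoding and lengths:
        raise FramingError("Transfer-Encoding and Content-Length both present")
    if len(lengths) > 1:
        raise FramingError("conflicting Content-Length values")
    if has_transfer_encoding:
        return None
    # Identical duplicates collapse to one value; no header means no body.
    return lengths.pop() if lengths else 0
```

The design choice here is that ambiguity raises rather than resolves: the function never picks a winner between two different lengths, and identical duplicates are the only case it normalizes.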

Why a “Low” CVSS Score Can Still Matter

Red Hat’s CVSS vector for CVE-2026-2708 is AV:N/AC:H/PR:N/UI:N/S:U/C:N/I:L/A:N, producing a low base score. The score says exploitation is network-accessible, requires no privileges, and needs no user interaction, but also has high attack complexity and only limited integrity impact in the generic assessment. That is a defensible reading for a library vulnerability whose exploitability depends heavily on deployment architecture.

However, CVSS is not a topology model. It does not fully capture whether a vulnerable component sits behind a permissive reverse proxy, inside a privileged internal management plane, or in front of an endpoint that trusts source IP headers and gateway routing. In request smuggling, the same parser behavior can be almost irrelevant in one deployment and highly exploitable in another.

Security teams should therefore treat the low score as a prioritization signal, not a dismissal. If libsoup is only used by a desktop RSS reader talking to trusted servers, urgency may be modest. If it backs an embedded HTTP service reachable through a proxy, the same CVE deserves closer scrutiny.
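For readers who want to see where 3.7 comes from, the base score can be reproduced from the vector with the CVSS v3.1 base equations. The sketch below carries only the metric weights this particular vector needs and handles only the unchanged-scope case, so it is a worked calculation rather than a general calculator; `base_score` and `roundup` are names chosen here.

```python
import math

# CVSS v3.1 weights, limited to the metric values used by this vector.
WEIGHTS = {
    "AV": {"N": 0.85},  # Attack Vector: Network
    "AC": {"H": 0.44},  # Attack Complexity: High
    "PR": {"N": 0.85},  # Privileges Required: None
    "UI": {"N": 0.85},  # User Interaction: None
    "C": {"N": 0.0},    # Confidentiality: None
    "I": {"L": 0.22},   # Integrity: Low
    "A": {"N": 0.0},    # Availability: None
}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    metrics = dict(item.split(":") for item in vector.split("/"))
    if metrics["S"] != "U":
        raise ValueError("this sketch only covers Scope: Unchanged")
    w = {name: WEIGHTS[name][metrics[name]] for name in WEIGHTS}
    iss = 1 - (1 - w["C"]) * (1 - w["I"]) * (1 - w["A"])
    impact = 6.42 * iss
    exploitability = 8.22 * w["AV"] * w["AC"] * w["PR"] * w["UI"]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


print(base_score("AV:N/AC:H/PR:N/UI:N/S:U/C:N/I:L/A:N"))  # 3.7
```

The impact term is about 1.41 and the exploitability term about 2.22, so the high attack complexity and the single Low integrity impact are what hold the score down. Nothing in the formula accounts for what sits in front of or behind the parser.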

Environmental risk beats generic severity

Request smuggling has a long history of exceeding its nominal severity because the exploit impact is compositional. The attacker’s payoff depends on what the smuggled request can reach, not merely on what the parser bug does in isolation. That could include bypassing front-end authentication checks, confusing cache behavior, or reaching routes intended to be internal-only.

The practical questions are straightforward:

- Is libsoup used in a server role?

- Is the service behind a proxy, gateway, CDN, or load balancer?

- Are persistent backend connections enabled?

- Does any front-end component enforce authentication, routing, or ACLs before forwarding?

- Are malformed requests logged and rejected consistently across the chain?

- Is HTTP/1 used between internal hops even when HTTP/2 or HTTP/3 is used externally?

The Windows and Microsoft Angle

The user-visible Microsoft connection comes through the Microsoft Security Response Center update guide entry for CVE-2026-2708. This does not automatically mean Windows is directly vulnerable in the same way a native Windows component would be. Microsoft’s guide can track third-party and open-source vulnerabilities when they affect Microsoft products, shipped components, developer dependencies, or customer risk visibility.

For Windows administrators, the important distinction is between operating system exposure and application supply-chain exposure. A Windows Server installation is not vulnerable merely because libsoup exists somewhere in the open-source ecosystem. But a Windows environment running Linux containers, WSL-based services, cross-platform GNOME-derived tools, embedded appliances, or vendor software that bundles libsoup may still have exposure.

This is the new normal for mixed estates. A vulnerability can appear in a Linux-native library, surface in Microsoft’s vulnerability systems, be scanned by enterprise tools on Windows endpoints, and ultimately matter because a third-party product includes the affected code. That is not a contradiction; it is the reality of shared components.

What Windows admins should verify

The sensible Windows-side response is inventory-driven. Do not assume exposure, but do not assume irrelevance either. In 2026, many Windows shops run Linux workloads through containers, Kubernetes, WSL, appliances, developer tools, and cross-platform agents.

Administrators should check:

- Container images based on Debian, Ubuntu, Fedora, RHEL, SUSE, or derivatives.

- WSL distributions used for development or automation.

- Third-party desktop applications that bundle GNOME or GLib components.

- Security appliances and scanners that embed Linux userland packages.

- CI/CD runners that build or test software with libsoup dependencies.

- Vendor software bills of materials where libsoup2.4 or libsoup3 appears.
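The last item lends itself to automation when a vendor supplies a machine-readable SBOM. The sketch below assumes a CycloneDX JSON document, where components carry `name` and `version` fields and may nest further `components`. The function name, the prefix match on "libsoup", and every value in the sample are illustrative assumptions, not data from a real product.

```python
def find_libsoup(sbom: dict) -> list[tuple[str, str]]:
    """Return (name, version) for every component whose name starts with 'libsoup'.

    Walks nested components too, since bundled libraries are often listed
    underneath the application that ships them.
    """
    found = []
    stack = list(sbom.get("components", []))
    while stack:
        component = stack.pop()
        name = component.get("name", "")
        if name.lower().startswith("libsoup"):
            found.append((name, component.get("version", "unknown")))
        stack.extend(component.get("components", []))
    return sorted(found)


# Made-up SBOM fragment; names and versions are placeholders.
EXAMPLE_SBOM = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {
            "type": "application",
            "name": "vendor-agent",
            "version": "0.0.0-example",
            "components": [
                {"type": "library", "name": "libsoup3", "version": "0.0.0-example"},
            ],
        },
        {"type": "library", "name": "libsoup2.4", "version": "0.0.0-example"},
        {"type": "library", "name": "glib2", "version": "0.0.0-example"},
    ],
}

for name, version in find_libsoup(EXAMPLE_SBOM):
    print(name, version)
```

A hit only says the library is present. Whether the shipped version carries the fix still has to be checked against the vendor's or distribution's own advisory.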

Distribution Status and Patch Reality

The ecosystem picture around CVE-2026-2708 is uneven, which is normal for a library shipped across many distributions and support channels. Debian’s tracker has listed vulnerable source packages across libsoup2.4 and libsoup3 lines, with notes classifying the issue as minor in some stable releases. Ubuntu has marked affected releases as needing evaluation. SUSE has shipped a libsoup 3.6.6 update that includes a fix for this CVE among several other libsoup security fixes.

Amazon Linux’s tracker lists the libsoup package as not affected on both Amazon Linux 2 and Amazon Linux 2023, and libsoup3 on Amazon Linux 2023 as pending a fix. Red Hat’s ecosystem view has shown several Enterprise Linux streams under investigation or deferred depending on version and package. These differences reflect packaging, code version, backports, and support policy rather than simple disagreement over whether the bug exists.

For defenders, the takeaway is to follow the distribution channel that supplied the library. Upstream commits matter, but production systems usually receive fixes through vendor packages, backported patches, or application rebuilds. Cherry-picking a commit from upstream may be tempting, but it can create support drift if not handled carefully.

Why package names complicate response

libsoup exists in multiple major package lines, commonly including libsoup2.4 and libsoup3. Applications may depend on one or the other, and distributions may patch them differently. A system can even carry both versions because different applications have different ABI expectations.

Security teams should therefore avoid a single-package assumption. A scan that only checks one library name may miss another installed variant. Likewise, a fixed upstream version does not necessarily mean the distribution package will show the same version number, because enterprise Linux vendors often backport fixes while preserving stable version identifiers.

A practical patch workflow should include:

- Identify whether libsoup2.4, libsoup3, or both are installed.

- Map each package to the distribution’s own advisory status.

- Check whether containers contain older copies independent of the host.

- Rebuild images after base image updates become available.

- Restart services that have already loaded vulnerable shared libraries.

- Validate that vendor appliances have published firmware or package updates.
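The restart step is easy to overlook, because a process keeps using the library file it mapped at startup even after the package on disk is replaced. On Linux, one common heuristic is to look in `/proc/<pid>/maps` for libsoup mappings marked `(deleted)`. The sketch below assumes that convention and a path containing "libsoup"; the function names are chosen here, and the approach will miss statically linked or renamed copies.

```python
import os
import re

# Matches a mapped libsoup shared object, optionally marked "(deleted)".
MAPS_LINE = re.compile(r"\s(/\S*libsoup\S*\.so\S*)( \(deleted\))?$")


def stale_libsoup_mappings(maps_text: str) -> list[str]:
    """Return libsoup paths that are mapped from files no longer on disk."""
    stale = set()
    for line in maps_text.splitlines():
        match = MAPS_LINE.search(line)
        if match and match.group(2):
            stale.add(match.group(1))
    return sorted(stale)


def scan_proc(proc_root: str = "/proc") -> dict[int, list[str]]:
    """Map PID -> stale libsoup paths for every readable process."""
    results = {}
    for entry in os.listdir(proc_root):
        if not entry.isdigit():
            continue
        try:
            with open(os.path.join(proc_root, entry, "maps")) as handle:
                stale = stale_libsoup_mappings(handle.read())
        except OSError:
            continue  # process exited or access was denied
        if stale:
            results[int(entry)] = stale
    return results
```

Calling `scan_proc()` as root on a patched host would list processes that likely still need a restart. Inside containers the same check has to run in each container's own process namespace or from the host.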

Enterprise Exposure: Proxies, Gateways, and Internal Trust

The highest-risk scenarios for CVE-2026-2708 involve multi-hop HTTP architectures. Modern enterprise traffic rarely travels directly from a client to a single application process. It commonly passes through edge gateways, TLS terminators, identity-aware proxies, WAFs, API gateways, ingress controllers, service meshes, and backend services.

This layered design is powerful, but it creates parser diversity. One hop may be strict, another permissive, and another optimized for compatibility with legacy clients. Request smuggling feeds on those differences, especially when the backend reuses persistent connections and trusts that the front-end has already made all security decisions.

The enterprise concern is not that every ambiguous request immediately becomes a breach. The concern is that a vulnerable parser can become a bridge across trust boundaries. In a segmented network, an attacker’s smuggled bytes may be interpreted as a request from a trusted proxy rather than from the original untrusted client.

Where the blast radius grows

The blast radius grows when security controls live only at the front edge. If the front-end enforces authentication but the backend assumes every request from the proxy is authenticated, a desync flaw can become an access-control problem. If the front-end applies routing rules but the backend exposes internal routes, a smuggled request may reach paths the attacker could not request directly.

Enterprise teams should pay special attention to services that combine these traits:

- HTTP/1.1 backend connections with keep-alive enabled.

- Reverse proxies that forward ambiguous requests instead of rejecting them.

- Backend services using embedded HTTP servers rather than hardened front-end servers.

- Administrative endpoints reachable only from internal networks.

- Cache layers that store responses based on front-end interpretation.

- Authentication controls enforced before traffic reaches the vulnerable parser.

Developer Guidance: Fixing the Class, Not Just the CVE

For developers, CVE-2026-2708 reinforces a central rule of HTTP parsing: framing headers are security-critical input. They are not ordinary metadata. They define how the byte stream is segmented, and segmentation determines what the application will process as a request.

The safest approach is strict rejection of ambiguous framing. A recipient should not attempt to be clever when it sees conflicting Content-Length values or a request that mixes Transfer-Encoding and Content-Length in a way that could desynchronize downstream components. Compatibility is valuable, but not at the cost of treating attacker-selected ambiguity as a recoverable condition.

Libraries also need tests that model hostile intermediaries. Unit tests for “valid POST with Content-Length” are not enough. Parser test suites should include duplicate headers, comma-separated values, whitespace variants, chunked encoding edge cases, invalid lengths, and connection reuse after malformed requests.
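One way to start such a suite is a small corpus of raw requests that a test harness can feed to whatever parser is under test. The sketch below only builds the bytes. The case names, paths, and host are hypothetical, and the verdict for each case (reject, or normalize) is deliberately left to the project's own policy, since identical duplicates and padded values may be acceptable under strict rules.

```python
def build_request(framing_lines: list[bytes], body: bytes = b"x=1") -> bytes:
    """Assemble a raw HTTP/1.1 POST with the given framing header lines."""
    head = [b"POST /submit HTTP/1.1", b"Host: test.invalid", *framing_lines]
    return b"\r\n".join(head) + b"\r\n\r\n" + body


# Framing edge cases; the 3-byte default body matches "Content-Length: 3".
FRAMING_CASES = {
    "duplicate_same": [b"Content-Length: 3", b"Content-Length: 3"],
    "duplicate_conflict": [b"Content-Length: 3", b"Content-Length: 0"],
    "comma_list": [b"Content-Length: 3, 0"],
    "space_before_colon": [b"Content-Length : 3"],
    "tab_padded_value": [b"Content-Length:\t3\t"],
    "signed_length": [b"Content-Length: +3"],
    "negative_length": [b"Content-Length: -1"],
    "non_numeric": [b"Content-Length: three"],
    "te_plus_cl": [b"Transfer-Encoding: chunked", b"Content-Length: 3"],
    "te_obfuscated": [b"Transfer-Encoding: xchunked", b"Content-Length: 3"],
}


def corpus() -> dict[str, bytes]:
    """Return one raw request per named case."""
    return {name: build_request(lines) for name, lines in FRAMING_CASES.items()}


def with_follow_up(request: bytes) -> bytes:
    """Append a well-formed request to exercise connection reuse after an error."""
    return request + b"GET /health HTTP/1.1\r\nHost: test.invalid\r\n\r\n"
```

Feeding `with_follow_up(...)` variants through a proxy-plus-backend pair is what turns these from header-parsing tests into desynchronization tests: the interesting assertion is that the trailing GET is never answered on a connection that saw a framing error.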

Secure parsing principles

A robust HTTP parser should make the unsafe path boring: reject, log, close, and move on. That may break some non-compliant clients, but it protects the larger system from ambiguity. In security-sensitive infrastructure, being liberal in what you accept has aged poorly.

Developers maintaining HTTP-facing code should apply these principles:

- Treat duplicate framing headers as suspect unless the specification explicitly allows safe normalization.

- Reject conflicting Content-Length values before request routing or body handling.

- Avoid forwarding malformed requests to downstream services.

- Close connections after framing errors to clear any queued attacker-controlled bytes.

- Test proxy-to-backend combinations, not just a single parser in isolation.

- Document parser behavior so operators know what the library accepts and rejects.

Detection, Logging, and Validation

Detecting request smuggling attempts is harder than detecting a conventional exploit string. Attack payloads may look like malformed HTTP rather than malware. They often involve subtle combinations of Content-Length, Transfer-Encoding, whitespace, connection reuse, and partial requests.

Still, defenders can build useful visibility. Logs should capture rejected malformed requests, duplicate framing headers, and mismatches between front-end and backend errors. If the proxy logs a 400 but the backend logs a successful request on the same connection, that discrepancy deserves investigation.
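That proxy-versus-backend discrepancy can be checked mechanically if both tiers log a shared connection identifier. The sketch below assumes they do, and that each log record has already been parsed into a dict with hypothetical `conn_id` and `status` keys. Real log schemas will differ, and many deployments do not propagate such an identifier at all.

```python
from collections import defaultdict


def find_discrepancies(frontend_events: list[dict], backend_events: list[dict]) -> dict:
    """Return backend successes on connections where the front end logged a 400.

    Each event is a dict with at least `conn_id` and `status` (assumed schema).
    """
    rejected = {e["conn_id"] for e in frontend_events if e["status"] == 400}
    suspicious = defaultdict(list)
    for event in backend_events:
        if event["conn_id"] in rejected and 200 <= event["status"] < 300:
            suspicious[event["conn_id"]].append(event)
    return dict(suspicious)


# Illustrative records only.
frontend = [
    {"conn_id": "c1", "status": 400, "path": "/public"},
    {"conn_id": "c2", "status": 200, "path": "/public"},
]
backend = [
    {"conn_id": "c1", "status": 200, "path": "/internal"},
    {"conn_id": "c2", "status": 200, "path": "/public"},
]
print(find_discrepancies(frontend, backend))
```

A match is a lead rather than proof, since retries and pooled connections can produce the same pattern innocently.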

Testing should be controlled and authorized. Request smuggling probes can disrupt shared infrastructure because they intentionally desynchronize connections. Security teams should test in staging first, coordinate with operations, and avoid running aggressive scanners against production without guardrails.

Practical validation steps

A mature validation program should combine package inventory, configuration review, and safe protocol testing. The goal is not merely to prove whether a scanner can trigger a warning. The goal is to determine whether ambiguous requests can cross a trust boundary.

A useful sequence is:

- Inventory systems and images for libsoup2.4 and libsoup3.

- Determine whether the library is used in a server-side request parsing role.

- Identify any front-end proxies or gateways in front of the service.

- Confirm how each hop handles duplicate Content-Length and TE+CL requests.

- Apply distribution or vendor patches when available.

- Retest malformed framing behavior after patching and service restart.
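For the confirmation and retest steps, a very small probe is often enough: send one request with conflicting Content-Length values and record the status code. The sketch below takes an already-connected socket so the caller controls the target. The function names, the default host, and the rule that anything other than 400 merits a closer look are assumptions of this example, and it should only be pointed at staging systems with authorization.

```python
import socket
from typing import Optional


def probe_conflicting_lengths(sock: socket.socket, host: str = "staging.invalid") -> Optional[int]:
    """Send one request with two different Content-Length values.

    Returns the HTTP status code, or None if nothing parseable came back.
    'Connection: close' limits how long a desynchronized connection lingers.
    """
    request = (
        b"POST / HTTP/1.1\r\n"
        + f"Host: {host}\r\n".encode()
        + b"Content-Length: 1\r\n"
        b"Content-Length: 0\r\n"
        b"Connection: close\r\n"
        b"\r\n"
        b"X"
    )
    sock.sendall(request)
    sock.settimeout(5)
    data = b""
    try:
        while b"\r\n" not in data:
            chunk = sock.recv(4096)
            if not chunk:
                break
            data += chunk
    except socket.timeout:
        pass
    parts = data.split(b"\r\n", 1)[0].decode("latin-1").split()
    if len(parts) >= 2 and parts[1].isdigit():
        return int(parts[1])
    return None


def verdict(code: Optional[int]) -> str:
    """Translate a status code into a coarse finding."""
    if code == 400:
        return "rejected"
    if code is None:
        return "no response (closed or timed out)"
    return "accepted: investigate how this hop chose a length"
```

A typical call would be `verdict(probe_conflicting_lengths(socket.create_connection((host, port)), host))`, run once per hop before patching and again after the service restart.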

Consumer Impact: Mostly Indirect, But Not Irrelevant

For ordinary Windows consumers, CVE-2026-2708 is unlikely to be a panic item. The average home PC is not running an internet-facing libsoup-based embedded HTTP server behind a reverse proxy. Most consumer exposure, if any, would come through applications that bundle vulnerable libraries and later ship updates through their normal channels.

That said, consumers increasingly run developer tools, Linux containers, self-hosted dashboards, media servers, and home automation software. A technically inclined Windows user might run Ubuntu under WSL, Docker Desktop with Linux containers, or cross-platform apps that include GNOME-derived dependencies. Those setups blur the old boundary between “desktop user” and “server operator.”

The best consumer guidance is simple: keep applications, containers, Linux distributions, and developer environments updated. If a self-hosted service is exposed to the internet through a tunnel, reverse proxy, or home router, treat it more like enterprise infrastructure than a casual desktop app.

The home lab wrinkle

Home labs deserve special mention because they often combine enterprise-style architecture with consumer-grade maintenance. A hobbyist may run a reverse proxy, several containers, a dashboard, an identity proxy, and experimental services on repurposed hardware. That is exactly the kind of multi-hop HTTP environment where parser inconsistencies matter.

Home users should consider:

- Updating base images rather than only updating application code.

- Avoiding direct internet exposure for experimental services.

- Using strict reverse proxy configurations that reject malformed requests.

- Disabling unused embedded web interfaces on appliances.

- Monitoring logs for repeated malformed HTTP requests.

- Separating home lab networks from primary personal devices.

Competitive and Market Implications

CVE-2026-2708 lands in a broader market shift toward software supply-chain accountability. Enterprises now expect vendors to identify bundled open-source components, publish timely advisories, and provide machine-readable vulnerability data. A small HTTP parser flaw can therefore become a test of SBOM quality, vendor transparency, and patch distribution speed.

For Linux vendors, the competitive pressure is not only who patches first. It is who communicates clearly about affected versions, backports, exploitability, and operational mitigation. SUSE’s bundling of the fix into a broader libsoup 3.6.6 security update is one model. Debian and Ubuntu’s tracker-based status reporting is another. Red Hat’s CNA role adds further weight because its scoring and wording often propagate into enterprise scanners.

For Microsoft, the issue demonstrates why the Security Update Guide increasingly intersects with third-party open-source vulnerability management. Windows customers do not live in a Windows-only world. They run Linux workloads in Azure, containers on Windows hosts, cross-platform applications, and vendor software assembled from open-source libraries.

What rivals can learn

The competitive lesson for platform vendors is that vulnerability response is now part of product trust. Customers compare not only kernel hardening or endpoint features, but also advisory clarity, package metadata, scanner integration, and remediation workflows. In that environment, a low-severity CVE can still influence confidence if the response is opaque.

Vendors can differentiate themselves by offering:

- Accurate SBOMs that identify libsoup variants and build provenance.

- Clear exploitability statements tied to actual product configurations.

- Fast container base image rebuilds after upstream fixes land.

- Consistent scanner metadata to reduce false positives and duplicates.

- Documented mitigations for customers waiting on package updates.

- Lifecycle transparency for products where fixes are deferred.

Strengths and Opportunities

CVE-2026-2708 also shows the security ecosystem working as intended in several respects. The issue has a CVE identifier, a CWE classification, distribution tracking, upstream references, and vendor-specific status pages. That coordination gives defenders enough structure to inventory exposure and plan remediation without waiting for a dramatic exploit headline.

Positive takeaways

- The vulnerability class is well understood, which helps defenders reason about likely exploit paths.

- The affected behavior is narrow, centering on HTTP/1 message framing rather than arbitrary code execution.

- Patch paths are emerging through normal distribution channels, reducing the need for risky manual source changes.

- The low generic CVSS score supports measured prioritization, especially for systems where libsoup is not server-facing.

- The issue encourages better HTTP parser test coverage, including duplicate and conflicting header cases.

- SBOM and package inventory programs gain a concrete validation case, especially for containerized environments.

- Cross-vendor visibility improves response, because Microsoft, Linux vendors, and security scanners can all surface the same identifier.

Risks and Concerns

The main concern is not mass exploitation of every libsoup installation. The more realistic concern is selective exploitation in environments where vulnerable parsing combines with permissive proxies, trusted backend connections, and internal-only functionality. That kind of risk is easy to underestimate because each individual component may appear low severity when assessed alone.

Watch the hidden assumptions

- Low CVSS may lead to under-prioritization in environments where request smuggling has higher practical impact.

- Scanner results may be noisy, especially where distributions backport fixes without changing upstream-looking version numbers.

- Containers may remain vulnerable after hosts are patched if images are not rebuilt.

- Third-party applications may bundle private copies of libsoup outside normal package management.

- Proxy and backend behavior may differ, creating exposure even when each component appears standards-aware in isolation.

- Legacy HTTP/1 backend links may persist behind modern HTTP/2 or HTTP/3 public endpoints.

- Deferred fixes in older product lines may create long-tail exposure for appliances and embedded systems.

What to Watch Next

The next phase will be distribution and vendor convergence. Security teams should watch whether their Linux vendors mark the issue fixed, deferred, not affected, or still under investigation. They should also track whether application vendors that bundle libsoup release their own updates, because bundled libraries often escape operating system patch cycles.

For WindowsForum readers, the most important development will be whether any Microsoft product advisory links CVE-2026-2708 to a concrete product update rather than merely listing the CVE for ecosystem awareness. If that happens, normal Microsoft servicing channels may become relevant. Until then, the practical work is inventory, Linux package maintenance, container rebuilds, and vendor follow-up.

Near-term checklist

- Confirm exposure across Windows-hosted containers, WSL distributions, Linux servers, and third-party apps.

- Track vendor advisories for libsoup2.4 and libsoup3 separately.

- Prioritize internet-facing multi-hop HTTP services over isolated desktop use.

- Validate proxy behavior for duplicate Content-Length and TE+CL requests.

- Rebuild and redeploy containers after base images receive patched packages.
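The inventory items in this checklist can start from nothing more than a saved package listing per host, WSL distribution, or container image. The sketch below is a minimal Python illustration, assuming the listing has one package per line with the name and version separated by whitespace (the default shape of `dpkg-query -W` output). The function name `find_libsoup_packages` is hypothetical, and the package names and versions in the sample are illustrative rather than a statement of which builds are affected.

```python
from typing import Dict


def find_libsoup_packages(listing: str) -> Dict[str, str]:
    """Return {package_name: version} for names containing 'libsoup'.

    Assumes "name<whitespace>version" per line; other lines are skipped.
    """
    found: Dict[str, str] = {}
    for line in listing.splitlines():
        parts = line.split()
        if len(parts) < 2:
            continue  # blank or malformed line
        name, version = parts[0], parts[1]
        if "libsoup" in name.lower():
            found[name] = version
    return found


if __name__ == "__main__":
    # Illustrative listing only; real names and versions vary by distribution.
    sample = (
        "libsoup-3.0-0:amd64\t3.4.4-1\n"
        "libsoup2.4-1:amd64\t2.74.3-1\n"
        "zlib1g:amd64\t1.3\n"
    )
    for name, version in find_libsoup_packages(sample).items():
        print(name, version)
```

A match only shows that the library is present. Because distributions backport fixes without changing upstream-looking version numbers, the reported version still has to be checked against the vendor's advisory, and private copies bundled inside third-party applications will not appear in a package listing at all.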

CVE-2026-2708 is not the loudest vulnerability of the year, and for many environments it will not be the most urgent. Its importance lies in what it exposes about modern software: a small open-source parser decision can ripple through proxies, containers, Microsoft vulnerability tracking, Linux distributions, and enterprise risk models. The right response is calm but disciplined—inventory the component, understand the topology, patch through supported channels, and treat ambiguous HTTP framing as a security boundary rather than a compatibility nuisance.

Source: NVD Security Update Guide - Microsoft Security Response Center