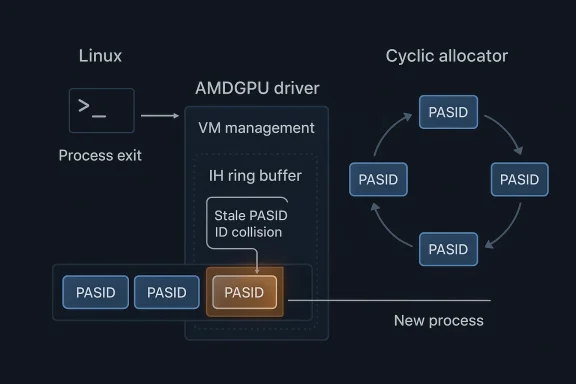

CVE-2026-31462 is a small-looking Linux kernel flaw with a very specific failure mode, but it sits in exactly the kind of plumbing that can cause outsized disruption when it misbehaves. The vulnerability in drm/amdgpu concerns immediate PASID reuse after a process exits: the GPU can briefly keep acting on stale state while page-fault interrupts are still sitting in the interrupt handler (IH) ring buffer. The fix is narrow and surgical. PASID allocation switches to an idr cyclic allocator so the same ID is not handed back immediately, mirroring the kernel’s own PID reuse discipline. That is the sort of change that sounds mundane until you remember how often GPUs, drivers, and interrupt paths interact under load.

PASID, or Process Address Space ID, is one of those GPU-facing concepts that rarely enters public conversation unless something breaks. In AMD’s GPU stack, PASID helps the driver map process-specific memory context to the hardware so compute and virtual memory operations can be tracked correctly. The kernel’s amdgpu documentation shows that PASID is deeply tied to VM management, fault handling, and task information plumbing, which means it is not a superficial label but part of the device’s state machine.

That matters because lifecycle bugs in memory-management code are often less about a single bad pointer and more about timing. A process can exit, free its PASID, and leave behind hardware-visible work that has not fully drained yet. If the allocator immediately reissues the same PASID to a new process, the GPU may confuse old fault traffic with new execution state. In practice, that can produce interrupt handling problems, misattributed faults, and the kind of confusing device behavior that is hard to diagnose from user space.

The CVE description is important because it tells a complete story. Page faults can still be pending in the interrupt handler ring buffer when the process exits, and immediate PASID reuse can make the new process collide with the old process’s unfinished GPU state. That is a textbook teardown race, except the race is not between two user-space threads but between device cleanup and hardware interrupt latency. The patch’s answer is equally telling: use a cyclic allocator so the same PASID does not come back right away.

This kind of bug is particularly relevant in the modern Linux graphics stack because the GPU is no longer just a display engine. It is a compute device, a memory-management participant, and sometimes a multi-tenant resource used in desktop, workstation, and server environments. That makes lifetime bugs around PASID reuse more interesting than they might look at first glance. Even if the practical consequence is “only” a driver hang or a faulty interrupt sequence, the operational impact can still be serious in environments that depend on reliable GPU scheduling.

The fact that the fix was cherry-picked from an upstream commit also matters. Stable-kernel backports signal that maintainers consider the bug concrete and the fix suitable for broad deployment. In kernel security terms, that is often a more meaningful signal than a dramatic CVSS number, especially when NVD has not yet published a severity assessment.

What PASID reuse actually means

At a high level, a PASID lets the GPU associate work with the correct process address space. That allows the hardware and driver to maintain a per-process view of memory and faults. In AMDGPU’s VM-oriented interfaces, PASID management is explicitly part of the driver’s job, not an optional optimization.

The danger in immediate reuse is that hardware does not always stop thinking the instant a process exits. Interrupts can be deferred. Faults can remain queued, and a new process with the same identifier may arrive before the system has fully forgotten the old one. That is where the bug lives: not in the existence of PASID allocation itself, but in the assumption that an identifier is free to reuse the moment software says so.

Why the hardware timing matters

GPU fault handling is not synchronous in the way users often imagine. The driver receives and drains events through its interrupt infrastructure, so there is always a small gap between “the process is gone” and “the hardware is completely done talking about it.” If a PASID is recycled during that gap, the remaining interrupts can be attributed to the wrong process, even if the memory backing the PASID has already been freed. That is exactly why the CVE highlights the IH ring buffer rather than just generic cleanup.

A useful way to think about it is this:

- A process exits and frees its PASID.

- Pending page-fault interrupts are still waiting in the GPU’s interrupt handling path.

- The allocator immediately hands the same PASID to a new process.

- The hardware and driver now have a stale identifier collision.

- The new process inherits the operational confusion of the old one.
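To make the sequence above concrete, here is a toy Python model of the race. It is not amdgpu code. The `LowestFreeAllocator` class, the `owner` table, and the `deque` standing in for the IH ring buffer are all hypothetical simplifications. The allocator deliberately returns the lowest free ID so that a just-freed PASID comes straight back. Under those assumptions, a fault queued by the exiting process ends up attributed to its successor.

```python
from collections import deque


class LowestFreeAllocator:
    """Hypothetical allocator that always returns the lowest free ID,
    so an ID freed a moment ago is the first one handed out again."""

    def __init__(self, start=1, end=16):
        self.start, self.end = start, end  # IDs live in [start, end)
        self.used = set()

    def alloc(self):
        for pasid in range(self.start, self.end):
            if pasid not in self.used:
                self.used.add(pasid)
                return pasid
        raise RuntimeError("PASID space exhausted")

    def free(self, pasid):
        self.used.discard(pasid)


def simulate(allocator):
    owner = {}         # PASID -> process currently holding it
    ih_ring = deque()  # stand-in for faults still queued in the IH ring

    old = allocator.alloc()
    owner[old] = "process A"
    ih_ring.append(old)  # A faults, but the interrupt is not processed yet

    allocator.free(old)  # A exits; software now treats the PASID as free
    del owner[old]

    new = allocator.alloc()
    owner[new] = "process B"

    # The interrupt handler finally drains A's fault and looks up its PASID.
    stale = ih_ring.popleft()
    return owner.get(stale, "no live owner")


print(simulate(LowestFreeAllocator()))  # -> process B
```

Real hardware is far messier, but the shape of the failure is the same. The lookup does not fail. It succeeds against the wrong process.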

Why the fix uses cyclic allocation

The most interesting part of the fix is the allocator choice. The CVE description says the PASID allocator now uses the same cyclic strategy that kernel PIDs use. That is a very Linux way to handle the problem. It does not ban reuse forever; it makes immediate reuse unlikely enough that stale state has time to drain.

This is elegant because it preserves the resource model. PASIDs are finite. You cannot simply keep issuing new ones indefinitely without eventually wrapping or exhausting the namespace. A cyclic allocator naturally balances reuse and freshness. It does not need a permanent tombstone; it simply avoids the dangerous “same ID right away” path that causes the collision.
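The cyclic policy can be sketched the same way. The class below is a hypothetical Python model loosely following the documented behavior of the kernel’s `idr_alloc_cyclic()`. That function searches upward from just past the last allocated ID and wraps to the start of the range only when nothing is free before the end. This is not the kernel implementation. The tiny range (IDs 1 to 3) is chosen only so the wraparound is visible.

```python
class CyclicAllocator:
    """Hypothetical model of cyclic ID allocation, in the spirit of idr_alloc_cyclic()."""

    def __init__(self, start=1, end=16):
        self.start, self.end = start, end  # IDs live in [start, end)
        self.next = start                  # where the next search begins
        self.used = set()

    def alloc(self):
        size = self.end - self.start
        for offset in range(size):
            # Search upward from self.next, wrapping back to self.start.
            pasid = self.start + (self.next - self.start + offset) % size
            if pasid not in self.used:
                self.used.add(pasid)
                # Resume after this ID next time; wrap if we hit the top.
                self.next = pasid + 1 if pasid + 1 < self.end else self.start
                return pasid
        raise RuntimeError("PASID space exhausted")

    def free(self, pasid):
        self.used.discard(pasid)  # note: the search cursor is NOT rewound


alloc = CyclicAllocator(start=1, end=4)  # tiny range: IDs 1, 2, 3
a = alloc.alloc()   # process A gets 1
alloc.free(a)       # process A exits; 1 is free again
b = alloc.alloc()   # next process gets 2, not 1
c = alloc.alloc()   # then 3
d = alloc.alloc()   # the cursor wraps, and only now is 1 reissued
print(a, b, c, d)   # -> 1 2 3 1
```

With a range this small, the freed ID returns after only two more allocations. In a larger ID space, the same mechanism buys more time for stale faults to drain. It is still a delay, not a guarantee.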

Why this is preferable to a heavier lock

A more aggressive fix might have involved stronger synchronization, explicit wait-for-drain semantics, or more invasive teardown ordering. Those approaches can work, but they often create more overhead and more coupling between the teardown and interrupt-handling paths. The cyclic allocator is attractive because it changes the allocation policy rather than trying to make every downstream path perfectly race-free. That usually produces a smaller, more backportable patch.

It also fits the kernel’s general philosophy of avoiding immediate identifier reuse when stale references can still exist. PIDs are the classic example. The kernel allocates them cyclically, which reduces the chance that delayed work will point at the wrong live object. In that sense, the fix is less about AMDGPU specifically and more about reapplying a proven kernel design principle to a GPU identifier.
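For contrast, the “explicit wait-for-drain” alternative might look something like the following hypothetical Python sketch. It is not what the upstream patch does. The point is the coupling it introduces. A PASID can be recycled only once the interrupt path reports that the last fault queued for it has been handled. That means every fault path has to call back into allocator bookkeeping.

```python
from collections import Counter


class DrainBeforeFree:
    """Hypothetical wait-for-drain policy: a PASID is recycled only after
    every fault queued for it has been handled."""

    def __init__(self):
        self.pending = Counter()  # PASID -> faults still queued for it
        self.zombies = set()      # released by software, not yet safe to reuse
        self.free_ids = set()     # safe to hand out again

    def fault_queued(self, pasid):
        self.pending[pasid] += 1

    def fault_handled(self, pasid):
        # The interrupt path must call back into the allocator here.
        self.pending[pasid] -= 1
        if self.pending[pasid] == 0:
            del self.pending[pasid]
            if pasid in self.zombies:
                self.zombies.remove(pasid)
                self.free_ids.add(pasid)

    def release(self, pasid):
        # Called at process exit. Counter returns 0 for unknown keys.
        if self.pending[pasid]:
            self.zombies.add(pasid)
        else:
            self.free_ids.add(pasid)


d = DrainBeforeFree()
d.fault_queued(7)        # the process using PASID 7 faults
d.release(7)             # it exits before the fault is handled
print(7 in d.free_ids)   # -> False: parked until the fault drains
d.fault_handled(7)       # IH processing finally drains the fault
print(7 in d.free_ids)   # -> True: now it may be reused
```

Even this toy version shows why such fixes are heavier. Release, fault queuing, and fault handling all share state, and a real driver would also need locking around it.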

The broader lesson is that reuse policy is security policy. When the allocator hands back a value too early, the system is effectively telling the hardware to reinterpret old state as new state. That is never a good idea in an asynchronous device model. The cyclic strategy gives the driver a small buffer of time, and in kernel security, small buffers of time often matter a great deal.

How the bug can manifest in real systems

The CVE text points to interrupt issues, which suggests this is not just a bookkeeping bug but a hardware-visible mismatch. In practical terms, the likely symptoms include incorrect page-fault accounting, spurious fault handling, and possibly hard-to-reproduce GPU instability under pressure. The common thread is that the GPU might be forced to reconcile stale interrupt state with a freshly assigned PASID.

That kind of behavior can be maddening in the field because it depends on timing. A workstation could run normally for days and only show the issue when a process exits at just the wrong moment, or when compute workloads are rapid-fire enough to expose the reuse window. Those are the bugs that pass ordinary smoke tests but surface in real production use.

Likely symptom patterns

- Intermittent GPU page-fault handling anomalies.

- Interrupt behavior that appears to belong to the wrong process.

- Flaky recovery after process exit and relaunch.

- Rare hangs or misbehavior under heavy GPU task churn.

- Troublesome debugging sessions where the hardware seems to “remember” prior work.

The issue also illustrates why lifecycle bugs often become support problems before they become glamorous security headlines. Affected users may never describe the behavior as a CVE; they will describe it as “the GPU got weird after a process restart.” That is exactly the sort of phrase that kernel maintainers learn to treat as a clue rather than a dismissal.

Why this became a CVE

Not every kernel bug gets CVE status, but this one clearly fits the model. The kernel description identifies a concrete failure mode, a plausible trigger, and a specific fix. NVD had not yet assigned a score at the time of publication. The lack of a finalized CVSS value does not make the issue less real; it simply means the enrichment pipeline had not finished.

This qualifies as security-relevant because it can affect isolation between processes. PASID is supposed to help keep process contexts distinct on the GPU. If the driver reuses the same identifier too quickly, the isolation boundary becomes fuzzier than intended. That may not instantly equal arbitrary code execution, but it does mean the driver can end up mixing the wrong hardware history with the wrong process.

Security significance without sensationalism

It is tempting to ask whether this is “just” a reliability bug. That question misses the point. Kernel reliability and kernel security overlap whenever the bug touches process isolation, interrupt handling, or device state that outlives the process itself. The fact that the fix is preventative rather than reactive is exactly what makes the CVE worthy of tracking.

The stable backport trail also strengthens the case. The commit was cherry-picked from upstream into a downstream patch set, which means maintainers considered the fix safe enough for established release branches. In the Linux world, that is usually a strong indicator that the bug is not hypothetical. It is an operational issue that maintainers expect users to run into if they stay on vulnerable builds long enough.

In other words, the CVE is not trying to tell us that AMDGPU is catastrophically broken. It is telling us that a narrow but important identity-reuse assumption was too optimistic for an asynchronous device model. That is the kind of bug that disciplined kernel teams fix early precisely because it does not need to become a headline to be worth fixing.

Enterprise impact

For enterprises, the key question is whether the affected machines use AMD GPUs in workflows where process churn and GPU fault handling are common. If the answer is yes, this is a meaningful maintenance item. The most likely candidates for exposure are workstation fleets, development rigs, virtualization hosts with GPU acceleration, and compute nodes that spawn and tear down GPU jobs repeatedly.

The enterprise concern is not simply that one job may fail. A rare interrupt mismatch can cause persistent instability that is expensive to root-cause. GPU issues in managed fleets are notorious for generating long incident threads because symptoms often span driver versions, kernel releases, user-space libraries, and scheduling behavior. A fix like this is attractive because it removes a source of nondeterminism rather than trying to paper over downstream effects.

Operational reasons to patch quickly

- GPU compute fleets often run many short-lived jobs.

- Developer workstations may restart workloads frequently.

- Faults can survive briefly beyond the process that triggered them.

- Misattributed interrupts complicate incident response.

- Small timing bugs can become expensive support escalations.

- Backport-ready fixes are easier to adopt during normal patch windows.

The other enterprise wrinkle is vendor packaging. Most organizations are not running a raw upstream kernel; they are running a distribution kernel with its own backports. So the real question is not whether the upstream fix exists, but whether the shipped kernel has already incorporated it. For an issue like this, version verification matters more than the headline CVE number.

Consumer impact

Consumer impact is likely narrower, but it is not zero. A desktop user who rarely stress-tests the GPU or runs many short-lived compute jobs may never notice the issue. Still, modern consumer systems increasingly use discrete GPUs for everything from creative applications to local AI workloads. Those workloads can produce the same rapid process exit and relaunch patterns that expose PASID reuse races.

The consumer story is mostly about stability and trust. If a graphics driver occasionally mixes old fault state with a newly launched process, the user experiences it as unpredictable behavior: a crash, a hang, or an application failure that appears unrelated to the actual cause. That kind of bug is annoying on a personal machine and disruptive on a shared workstation.

Where consumer users are most likely to notice

- Gaming or creative workloads that launch and quit GPU-heavy processes often.

- Local compute or AI tools that create short-lived GPU sessions.

- Systems with aggressive suspend/resume or application churn.

- Machines already running near the edge of stability.

- Environments where multiple user sessions share the same GPU.

The practical consumer takeaway is simple: if your system uses AMDGPU and you rely on it for anything beyond casual desktop rendering, this is worth patching promptly. The bug is not presented as a dramatic remote exploit. It is, however, a real state-lifecycle issue in a driver that users depend on to keep the machine responsive and predictable.

Why immediate reuse is a recurring kernel problem

Immediate reuse bugs keep showing up in kernel code because the kernel is full of objects that outlive the request that created them. IDs, descriptors, handles, and contexts all have some lag between “software says done” and “hardware or deferred work truly done.” That lag is where confusion starts, and in the kernel, confusion is often the precursor to a security or stability defect.

The AMDGPU PASID issue fits a familiar pattern: a resource looks free from the perspective of software but is not yet free from the perspective of everything that still references it. The same dynamic appears across subsystems in different forms, which is why the kernel community often prefers reuse policies that are intentionally conservative. Holding off on reuse is cheap compared to chasing down a timing bug in production.

The design lesson

The best kernel fixes often do not try to make every path perfectly synchronous. Instead, they make the dangerous path less likely. That is what cyclic allocation does here. It leaves the architecture asynchronous, but it reduces the chance that stale state and fresh identity collide in exactly the wrong order.

The episode is also a reminder that GPU drivers increasingly look like miniature operating systems. They manage identity, memory, faults, and interrupts, all under pressure from user-space workloads that may come and go quickly. When a driver reuses an identifier too aggressively, the problem is not just cosmetic. It can distort the way the hardware interprets the lifetime of a process context.

A broader implication for security teams is that some “low-severity” kernel bugs are really lifecycle design defects. Those are worth tracking carefully because they often serve as enabling conditions for more visible failures later. Fixing the allocator policy now is better than trying to debug random GPU weirdness after the fact.

Strengths and Opportunities

The strongest aspect of this fix is that it is narrow. It does not redesign AMDGPU’s PASID machinery or introduce broad behavioral changes; it simply makes reuse less aggressive so stale interrupts are less likely to collide with a new process. That is a good kernel patch shape because it minimizes collateral risk while addressing the actual flaw.

The second strength is that the fix aligns with a long-standing kernel design pattern. Cyclic allocation for identifiers with delayed teardown is a proven way to reduce reuse hazards. The team’s adoption of that pattern here suggests the problem was understood in lifecycle terms rather than as a one-off edge case.

What operators can gain from this CVE

- A clear signal that the bug is real and already fixed upstream.

- A patch model that is small enough to backport cleanly.

- Better protection against stale interrupt confusion.

- Improved stability under process churn.

- Reduced chance of future identifier-collision bugs.

- Easier vendor-to-upstream correlation during patch management.

- A reminder to prioritize lifecycle bugs, not just flashy exploit chains.

Finally, the CVE strengthens the case for stress testing around device teardown and rapid relaunch. Tools that exercise process churn, interrupt latency, and fault cleanup are often more valuable than conventional happy-path validation. Bugs like this are exactly why. They do not announce themselves through normal use; they show up when the lifecycle gets tight.
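A churn test does not need to be elaborate. The helper below is a minimal, hypothetical Python harness that launches a short-lived command back to back and records any non-zero exits. The command is a placeholder. On a real test machine it would be a small program that opens the GPU, does a little work, and exits, and the kernel log would be reviewed alongside the harness results. Nothing here detects the PASID race directly. It only generates the kind of process churn that makes timing windows more likely to appear.

```python
import subprocess
import sys
import time


def churn(cmd, iterations=100, gap_s=0.0):
    """Run `cmd` repeatedly; return (iteration, exit code, stderr) for each failure.

    `cmd` is a placeholder for any short-lived GPU workload; the harness
    itself knows nothing about PASIDs or amdgpu.
    """
    failures = []
    for i in range(iterations):
        result = subprocess.run(cmd, capture_output=True, text=True)
        if result.returncode != 0:
            failures.append((i, result.returncode, result.stderr.strip()))
        if gap_s:
            time.sleep(gap_s)  # optional pause between exit and relaunch
    return failures


if __name__ == "__main__":
    # Smoke test with a trivial command. On a test box, swap in a real job,
    # e.g. churn(["./gpu_job"], iterations=10_000)  (hypothetical binary name).
    print(churn([sys.executable, "-c", "pass"], iterations=5))  # -> []
```

Keeping `gap_s` at zero maximizes the exit-to-relaunch pressure. A small nonzero gap can help vary the timing across runs.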

Risks and Concerns

The main concern is underestimation. A PASID reuse issue sounds small until you remember that GPU memory management, process isolation, and interrupt handling all depend on it. Teams that dismiss this as a mere “driver quirk” may leave vulnerable kernels in service longer than they should.

Another concern is that the symptom can be intermittent. Timing bugs are notoriously hard to reproduce, so organizations may fail to connect the issue to a specific kernel build or driver revision. That makes inventory and verification especially important. Waiting for the bug to become obvious is a poor strategy in a production fleet.

Key risks to watch

- Delayed patch adoption in vendor kernels.

- Hidden exposure in long-lived workstation and compute images.

- Confusing symptoms that resemble ordinary driver instability.

- Process-churn workloads that trigger rare race windows.

- Misclassification as a low-priority reliability bug.

- Lag between upstream fixes and downstream packaging.

- Hard-to-debug interruptions in shared GPU environments.

The final concern is that state-lifetime bugs tend to cluster. Once maintainers identify one timing-sensitive identifier reuse issue, it is worth looking for similar patterns in other teardown and cleanup paths. That does not mean there is a broader emergency. It means this kind of bug rewards careful regression testing, because the underlying pattern is common in asynchronous driver code.

Looking Ahead

The immediate next step is downstream adoption. Kernel distributors, OEMs, and enterprise Linux vendors will need to confirm that the cyclic PASID allocator change is present in their shipped builds, not just in upstream source trees. In practice, the most important question is not “Has the CVE been published?” but “Has my kernel actually absorbed the fix?”

The second thing to watch is whether follow-on fixes appear in nearby AMDGPU code paths. When a bug exposes a lifecycle assumption, maintainers often audit adjacent state transitions to ensure they are not making the same mistake elsewhere. That is especially true in GPU drivers, where fault handling, VM teardown, and interrupt processing are tightly interwoven.

What to monitor next

- Distribution advisories mapping the CVE to package versions.

- OEM kernel backports for AMDGPU-enabled systems.

- Any follow-up fixes in PASID, VM teardown, or IH handling code.

- Field reports from compute-heavy workloads and workstation fleets.

- Evidence of related identity-reuse problems in other persistent driver state.

The broader story here is not that AMDGPU has a dramatic new exploit problem. It is that the GPU stack continues to mature into a deeply stateful subsystem where even identifier reuse policy can have security implications. That is a sign of complexity, but it is also a sign of healthy maintenance. The kernel community found a narrow race, reasoned about it correctly, and chose the kind of fix that closes the window without creating a new one.

CVE-2026-31462 is therefore best understood as a precision correction in a very sensitive part of the graphics stack. It is not flashy, but it is the sort of fix that quietly prevents headaches, preserves trust in device isolation, and keeps asynchronous hardware from acting on the ghost of a process that has already left the building.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center