CVE-2026-31486 is a useful reminder that some of the most serious Linux kernel bugs are not glamorous memory-corruption exploits but plain old synchronization failures that can still destabilize a system. In this case, the flaw sits in the hwmon pmbus/core path, where regulator voltage operations accessed shared state without the update_lock mutex. The fix is more interesting than the bug: instead of merely slapping a lock around the affected functions, kernel maintainers had to redesign notification handling to avoid a self-deadlock scenario. That makes this CVE a small but revealing case study in how Linux kernel maintainers balance correctness, concurrency, and subsystem design.

The Linux PMBus subsystem is used to manage power supplies, regulators, and related hardware through standardized register access. It sits in a part of the kernel that is easy to overlook until something goes wrong, because it often supports enterprise servers, embedded platforms, and hardware monitoring stacks rather than consumer-facing features. The PMBus core also interacts with the regulator framework, which means the same code can be reached through multiple control paths.

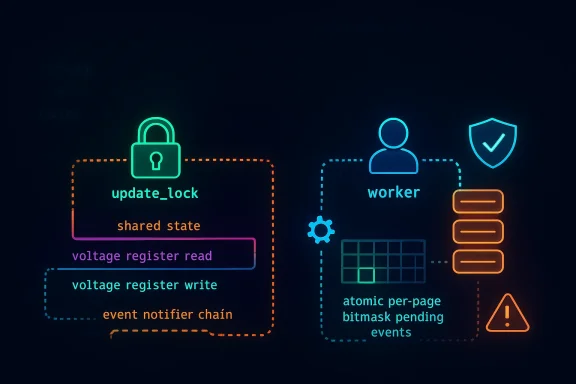

The root of CVE-2026-31486 is simple to describe and subtle to fix. The functions pmbus_regulator_get_voltage, pmbus_regulator_set_voltage, and pmbus_regulator_list_voltage were touching PMBus registers and shared data without taking the mutex that protects the device’s update path. In a kernel driver, that kind of omission is not just a style issue; it can create timing-dependent races that are hard to reproduce and even harder to diagnose once a system is under load.

The first instinct for many maintainers would be to lock those functions directly, and that would have been correct in isolation. But the PMBus code already had a separate notifier path, pmbus_regulator_notify, that calls regulator_notifier_call_chain while the same mutex is often already held, such as during fault handling. If a notifier callback were to call back into one of the now-locked voltage helpers, the kernel would try to re-acquire the same mutex and deadlock. That is the kind of circular dependency that makes kernel concurrency bugs so unforgiving.

The published fix therefore does not merely add protection; it moves notification work out of the critical section. The notification path now uses a worker function, with events stored atomically in a per-page bitmask and drained later outside the mutex. The design preserves correctness while avoiding recursive lock acquisition, which is precisely the sort of compromise that kernel maintainers reach for when a subsystem needs both safety and responsiveness.

For users of Linux on servers or appliances, the practical concern is reliability. For vendors and distro maintainers, the concern is that race conditions in shared kernel paths often sit silently until a specific workload, driver mix, or hardware state triggers them. That makes early patch adoption important even when the CVSS score has not yet been finalized, as is the case here.

The most notable technical adjustment is the introduction of asynchronous notification handling. Rather than sending regulator notifications immediately while the lock is held, the code now records pending events in atomics and lets a worker process them later. That keeps the locking hierarchy sane and avoids the trap of “fixing” a race by creating a deadlock. Kernel engineers will recognize this as a classic trade-off: serialize the data path, defer the callback path.

The patch also tightens lifecycle management. The worker and its associated state are initialized during regulator registration, and they are explicitly canceled when the device is removed through devm_add_action_or_reset. That matters because asynchronous work in the kernel always introduces teardown complexity; if a worker outlives the device it was servicing, the cure can be worse than the disease.

If one of those callbacks performs voltage operations, the thread tries to take the same mutex again. Because mutexes are not re-entrant in this context, the second acquisition blocks forever, leaving the system hung in a loop of its own making. That is the kind of deadlock that is hard to detect in testing because it often requires a precise chain of events, not a simple one-off action.

A useful mental model is this: the mutex protects the state mutation, while the worker handles the observer notification. Mixing those responsibilities in a single call path is what made the original implementation fragile. Once those roles are separated, the design becomes easier to reason about and much less likely to wedge under pressure.

This approach also reflects a larger kernel pattern. When a subsystem has to both guard mutable state and notify external observers, the safest design is often to queue state changes and notify later. It is a little less immediate, but it is far more robust when callbacks are allowed to re-enter other framework code. In a power-management subsystem, robustness beats eagerness every time.

There is also a lifecycle benefit. By initializing the worker at regulator registration time, the code ensures that the asynchronous path is ready before notifications can fire. By canceling it on device removal, the code avoids use-after-free style cleanup bugs that often appear when workqueues and device-managed resources are mixed carelessly. Kernel fixes that address one bug while preventing another are the best kind of fix.

The removal of the unnecessary linux/of.h include is a small housekeeping change, but it is still worth noting. It suggests the patch was reviewed with an eye toward making the code leaner and better scoped, not merely functionally safe. Those little cleanups are often a sign that maintainers were already taking the surrounding code seriously.

The vulnerable range in the public advisory information is shown relative to upstream commit history, with the issue affecting code before the fixes identified by the stable references. That is typical for Linux CVEs: the important question is not only which kernel version you run, but whether your vendor has backported the repair into its build. In practice, enterprises need to track both upstream lineage and distro-specific packaging.

It is also worth remembering that hardware monitoring bugs can be deceptively widespread in a vendor ecosystem. The same underlying kernel code may be present in cloud images, appliance firmware, and OEM distributions even when the user never notices the PMBus subsystem by name. That makes advisories like this one important to track by component, not just by package name.

The fix also illustrates the value of stable backporting. The advisory points to multiple kernel.org stable references, which suggests the change was treated as something that should land beyond just the immediate development branch. That is essential in kernel security work, where a fix that remains only in mainline leaves too many deployed systems exposed.

It is also a reminder that apparently “innocent” helper functions can become choke points. A helper like pmbus_regulator_get_voltage may look read-only from a distance, but if it touches shared state or hardware registers, it belongs inside the subsystem’s concurrency plan. Kernel code rarely fails because of one large mistake; it usually fails because one small assumption was made in the wrong layer.

The best part of this fix is that it keeps the design understandable. Anyone reading the code later can see that locking protects state, while a worker handles notifications. That clarity reduces the chance of future regressions, which is often the real measure of a good kernel patch.

It will also be worth seeing whether the PMBus cleanup inspires similar refactors elsewhere in the hardware-monitoring stack. Kernel maintainers often use one concurrency bug as a template for improving neighboring code paths, and this patch’s split between state locking and deferred notification is a pattern that could be reused. When that happens, the value of a CVE extends beyond its immediate fix.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

Background

The Linux PMBus subsystem is used to manage power supplies, regulators, and related hardware through standardized register access. It sits in a part of the kernel that is easy to overlook until something goes wrong, because it often supports enterprise servers, embedded platforms, and hardware monitoring stacks rather than consumer-facing features. The PMBus core also interacts with the regulator framework, which means the same code can be reached through multiple control paths.

The root of CVE-2026-31486 is simple to describe and subtle to fix. The functions pmbus_regulator_get_voltage, pmbus_regulator_set_voltage, and pmbus_regulator_list_voltage were touching PMBus registers and shared data without taking the mutex that protects the device’s update path. In a kernel driver, that kind of omission is not just a style issue; it can create timing-dependent races that are hard to reproduce and even harder to diagnose once a system is under load.
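To make the race concrete, here is a user-space model in C, not the PMBus core itself. It assumes, as is common for multi-page PMBus devices, that reading a voltage takes two bus steps: a write to the PAGE register followed by a READ_VOUT read. The fake device, its values, and all function names are hypothetical. The model replays every ordering of two such callers and counts the orderings in which a caller reads another page's register.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical two-page device: one shared PAGE selection plus a
 * READ_VOUT value per page (arbitrary millivolt test values). */
struct fake_dev {
    int currpage;
    int vout[2];
};

/* Caller state: step 0 writes PAGE, step 1 reads READ_VOUT. */
struct caller {
    int page;
    int step;
    int result;
};

/* Replays one ordering such as "ABAB", where each letter lets caller A
 * or B perform its next step (each letter must appear exactly twice).
 * Returns true if any caller read a page other than the one it selected. */
bool run_schedule(const char *sched)
{
    struct fake_dev dev = { .currpage = -1, .vout = { 1200, 3300 } };
    struct caller c[2] = { { .page = 0 }, { .page = 1 } };

    for (const char *p = sched; *p != '\0'; p++) {
        struct caller *me = &c[*p - 'A'];
        if (me->step == 0)
            dev.currpage = me->page;              /* write PAGE */
        else
            me->result = dev.vout[dev.currpage];  /* read whatever page is selected now */
        me->step++;
    }
    return c[0].result != dev.vout[0] || c[1].result != dev.vout[1];
}

/* Counts how many of the given orderings produce a wrong read. */
int count_bad(const char *const *scheds, size_t n)
{
    int bad = 0;
    for (size_t i = 0; i < n; i++)
        bad += run_schedule(scheds[i]);
    return bad;
}

/* All six orderings of two 2-step callers, and the two orderings that
 * remain possible when a lock is held across both steps of a caller. */
const char *const all_scheds[] = { "AABB", "ABAB", "ABBA", "BAAB", "BABA", "BBAA" };
const char *const locked_scheds[] = { "AABB", "BBAA" };
```

In this model four of the six orderings go wrong, while the two serialized orderings that a lock permits both read correctly. On real hardware each bad ordering also needs the competing PAGE write to land inside a very short window, which is one reason such races surface rarely and mostly under load.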

The first instinct for many maintainers would be to lock those functions directly, and that would have been correct in isolation. But the PMBus code already had a separate notifier path, pmbus_regulator_notify, that calls regulator_notifier_call_chain while the same mutex is often already held, such as during fault handling. If a notifier callback were to call back into one of the now-locked voltage helpers, the kernel would try to re-acquire the same mutex and deadlock. That is the kind of circular dependency that makes kernel concurrency bugs so unforgiving.

The published fix therefore does not merely add protection; it moves notification work out of the critical section. The notification path now uses a worker function, with events stored atomically in a per-page bitmask and drained later outside the mutex. The design preserves correctness while avoiding recursive lock acquisition, which is precisely the sort of compromise that kernel maintainers reach for when a subsystem needs both safety and responsiveness.
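The per-page bitmask can be sketched with C11 atomics. This is a minimal user-space model, not the kernel patch: the page count, the event bit names, and the record_event/take_events helpers are hypothetical, and kernel code uses its own atomic_t helpers rather than <stdatomic.h>. The producer ORs an event in, possibly while holding the lock; the consumer swaps the word with zero, which reads and clears it in one atomic step.

```c
#include <assert.h>
#include <stdatomic.h>

#define NPAGES 4  /* hypothetical page count for this model */

/* Hypothetical event bits; kernel code reports REGULATOR_EVENT_* values. */
#define EV_UNDER_VOLTAGE (1u << 0)
#define EV_OVER_CURRENT  (1u << 1)

/* One pending-event word per page; zero means nothing to report. */
static atomic_uint pending[NPAGES];

/* Producer side (e.g. a fault handler): OR the event into the page's
 * word. No extra lock is needed for this update. */
void record_event(int page, unsigned int event)
{
    atomic_fetch_or(&pending[page], event);
}

/* Consumer side (the worker): take and clear all pending events for a
 * page at once, so an event recorded concurrently is either returned
 * now or left for the next drain, never lost. */
unsigned int take_events(int page)
{
    return atomic_exchange(&pending[page], 0u);
}
```

Repeated events for the same page collapse into a single bit, so in this model an observer learns that a condition occurred since the last drain rather than how many times it occurred.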

Why this matters in kernel terms

This is not a bug that necessarily screams “remote exploit” to the average reader, and that is part of the point. A race in a kernel power-management path can manifest as a crash, inconsistent regulator state, or hard-to-debug platform instability rather than an obvious security compromise. In infrastructure settings, that can still be a serious operational event, especially when power delivery and sensor state feed into system-wide policy decisions.

For users of Linux on servers or appliances, the practical concern is reliability. For vendors and distro maintainers, the concern is that race conditions in shared kernel paths often sit silently until a specific workload, driver mix, or hardware state triggers them. That makes early patch adoption important even when the CVSS score has not yet been finalized, as is the case here.

What the Vulnerability Changes

At its core, the fix for CVE-2026-31486 changes the concurrency assumptions inside one small part of the kernel. Before the fix, the PMBus regulator helpers assumed they could safely reach shared state without explicit serialization. After the fix, those operations are treated as part of a broader concurrency domain that must be coordinated carefully with notifications and callbacks.

The most notable technical adjustment is the introduction of asynchronous notification handling. Rather than sending regulator notifications immediately while the lock is held, the code now records pending events in per-page atomic bitmasks and lets a worker process them later. That keeps the locking hierarchy sane and avoids the trap of “fixing” a race by creating a deadlock. Kernel engineers will recognize this as a classic trade-off: serialize the data path, defer the callback path.

Key technical points

- The vulnerable path involved PMBus register access and shared regulator data.

- The missing guard was the update_lock mutex.

- Direct locking would have created a self-deadlock in notifier-driven callback chains.

- The fix moves notifications to a worker context.

- Pending events are tracked with atomic per-page bitmasks.

The patch also tightens lifecycle management. The worker and its associated state are initialized during regulator registration, and a cleanup action registered with devm_add_action_or_reset ensures the worker is canceled when the device is removed. That matters because asynchronous work in the kernel always introduces teardown complexity; if a worker outlives the device it was servicing, the cure can be worse than the disease.
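The lifecycle rule can be modeled in a few lines. The sketch below assumes a simplified managed-resource list that mimics two documented properties of devm_add_action_or_reset(): registered actions run in reverse order on removal, and if registration itself fails, the action runs immediately. Every type and function in it is hypothetical. A kernel driver would typically cancel the work with cancel_work_sync(), which also waits for an in-flight run; this single-threaded model only drops a pending run.

```c
#include <assert.h>
#include <errno.h>
#include <stdbool.h>

#define MAX_ACTIONS 4

typedef void (*action_fn)(void *data);

/* Hypothetical stand-in for a device's managed-resource list. */
struct model_device {
    action_fn fn[MAX_ACTIONS];
    void *data[MAX_ACTIONS];
    int count;
};

/* Mimics devm_add_action_or_reset(): on failure (modeled here as a
 * full list instead of an allocation failure), run the action now. */
int model_add_action_or_reset(struct model_device *dev, action_fn fn, void *data)
{
    if (dev->count == MAX_ACTIONS) {
        fn(data);
        return -ENOMEM;
    }
    dev->fn[dev->count] = fn;
    dev->data[dev->count] = data;
    dev->count++;
    return 0;
}

/* Device removal: run registered actions in reverse order. */
void model_remove(struct model_device *dev)
{
    while (dev->count > 0) {
        dev->count--;
        dev->fn[dev->count](dev->data[dev->count]);
    }
}

/* Hypothetical notification work item. */
struct notify_work {
    bool pending;
    bool cancelled;
    int runs;
};

/* Cleanup action: drop any queued run and refuse new ones. */
void cancel_notify_work(void *data)
{
    struct notify_work *w = data;
    w->pending = false;
    w->cancelled = true;
}

/* Registration: set up the work item before any fault can queue it,
 * then tie its cancellation to device removal. */
int register_regulator(struct model_device *dev, struct notify_work *w)
{
    *w = (struct notify_work){ .pending = false };
    return model_add_action_or_reset(dev, cancel_notify_work, w);
}

void queue_notify_work(struct notify_work *w)
{
    if (!w->cancelled)
        w->pending = true;
}

/* Simulated workqueue tick: run only if queued and still live. */
void run_work_if_pending(struct notify_work *w)
{
    if (w->pending && !w->cancelled) {
        w->pending = false;
        w->runs++;
    }
}
```

The scenario that matters is a fault that queues work just before removal: once the cancel action has run, the queued notification is discarded instead of touching a device that no longer exists.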

How the Deadlock Risk Emerged

This CVE is a textbook example of how notifier chains and locks can collide. The problem is not that locks are inherently dangerous, but that they are dangerous when kernel paths are layered without a complete view of who may call whom. In PMBus, a fault handler may already hold update_lock and then trigger regulator notifications, which in turn invoke whatever callbacks are registered on the regulator’s notifier chain.

If one of those callbacks performs voltage operations, the thread tries to take the same mutex again. Linux kernel mutexes are not recursive, so the second acquisition blocks forever: the task waits for a lock that only it can release, and every other path that needs update_lock stalls behind it. That is the kind of deadlock that is hard to detect in testing because it often requires a precise chain of events, not a simple one-off action.
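A single-threaded model makes the self-deadlock observable without hanging the program. It is an illustrative sketch, not PMBus code: the lock type, the function names, and the choice to return -EDEADLK are assumptions, because a real kernel mutex performs no such check and would simply block.

```c
#include <assert.h>
#include <errno.h>
#include <stdbool.h>

/* Model of a non-recursive mutex that reports re-acquisition by its
 * owner as -EDEADLK instead of blocking forever. */
struct model_mutex {
    bool held;
    int owner;
};

int model_lock(struct model_mutex *m, int task)
{
    if (m->held)
        return m->owner == task ? -EDEADLK : -EBUSY;
    m->held = true;
    m->owner = task;
    return 0;
}

void model_unlock(struct model_mutex *m)
{
    m->held = false;
}

struct model_dev {
    struct model_mutex update_lock;
    int vout_mv;
};

/* Hypothetical voltage helper after adding the lock. */
int get_voltage(struct model_dev *d, int task, int *out_mv)
{
    int ret = model_lock(&d->update_lock, task);
    if (ret)
        return ret;
    *out_mv = d->vout_mv;
    model_unlock(&d->update_lock);
    return 0;
}

/* A registered observer that reacts to a fault by reading the voltage. */
int observer_callback(struct model_dev *d, int task)
{
    int mv;
    return get_voltage(d, task, &mv);
}

/* Original ordering: notify synchronously while update_lock is held. */
int fault_handler_sync_notify(struct model_dev *d, int task)
{
    int ret = model_lock(&d->update_lock, task);
    if (ret)
        return ret;
    ret = observer_callback(d, task);  /* re-enters get_voltage() */
    model_unlock(&d->update_lock);
    return ret;
}
```

fault_handler_sync_notify() reproduces the problematic ordering: lock, notify synchronously, and let the callback re-enter the locked helper. On a kernel built with lockdep, this pattern would typically be reported as possible recursive locking rather than left to hang silently.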

The lock-ordering lesson

Kernel developers constantly have to choose between immediate execution and deferred execution. The PMBus fix demonstrates that notification logic belongs outside the mutex when callbacks might come back through the same interface. The code now treats event delivery as a deferred task, which is often the safest way to preserve lock ordering in complex subsystems.

A useful mental model is this: the mutex protects the state mutation, while the worker handles the observer notification. Mixing those responsibilities in a single call path is what made the original implementation fragile. Once those roles are separated, the design becomes easier to reason about and much less likely to wedge under pressure.

Why race conditions are hard to reproduce

- They often depend on timing, load, and interrupt ordering.

- They may require a specific hardware fault or notification cascade.

- They can vanish under debugging tools because the added overhead changes timing.

- They frequently appear as intermittent hangs rather than clean crashes.

- They are especially likely in code that spans interrupts, workqueues, and callbacks.

The Fix, and Why It Is Better Than a Simple Lock

The repair for CVE-2026-31486 is not just a patch; it is a design correction. The voltage accessors now take update_lock, and to make that safe the kernel defers regulator notifications to a worker, which means those callbacks run outside the update_lock critical section. That eliminates the deadlock risk while preserving protection for the shared data that actually needs serialization.

This approach also reflects a larger kernel pattern. When a subsystem has to both guard mutable state and notify external observers, the safest design is often to record events under the lock and deliver notifications later. It is a little less immediate, but it is far more robust when callbacks are allowed to re-enter other framework code. In a power-management subsystem, robustness beats eagerness every time.

Worker-based notification flow

The new flow can be understood in three steps. First, an event is recorded atomically in a per-page bitmask. Second, the worker is scheduled to run outside the protected region. Third, the worker delivers notifications without holding the mutex that protects the voltage operations. That separation is the key to avoiding recursive lock acquisition.

There is also a lifecycle benefit. By initializing the worker at regulator registration time, the code ensures that the asynchronous path is ready before notifications can fire. By canceling it on device removal, the code avoids use-after-free style cleanup bugs that often appear when workqueues and device-managed resources are mixed carelessly. Kernel fixes that address one bug while preventing another are the best kind of fix.
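The three steps above, combined with the new locking on the voltage helpers, can be sketched end to end. This is a hedged user-space model, not the patch: the bool standing in for update_lock, the work_queued flag standing in for a scheduled work item, and all function names are assumptions. The property it checks is that the observer, modeled as a call back into get_voltage(), runs only after the fault handler has dropped the lock.

```c
#include <assert.h>
#include <errno.h>
#include <stdatomic.h>
#include <stdbool.h>

#define NPAGES 2
#define EV_FAULT (1u << 0)   /* hypothetical event bit */

struct model_dev {
    bool locked;                  /* stand-in for update_lock */
    int vout_mv[NPAGES];          /* per-page voltage (test data) */
    atomic_uint pending[NPAGES];  /* per-page pending-event bitmask */
    atomic_bool work_queued;      /* stand-in for a scheduled work item */
    int delivered;                /* notifications delivered */
    int last_seen_mv;             /* value the observer read back */
};

static int dev_lock(struct model_dev *d)
{
    if (d->locked)
        return -EDEADLK;          /* a real mutex would block forever */
    d->locked = true;
    return 0;
}

static void dev_unlock(struct model_dev *d)
{
    d->locked = false;
}

/* Voltage helper, serialized like the patched accessors. */
int get_voltage(struct model_dev *d, int page, int *mv)
{
    int ret = dev_lock(d);
    if (ret)
        return ret;
    *mv = d->vout_mv[page];
    dev_unlock(d);
    return 0;
}

/* Steps 1 and 2, called with the lock held: record the event, then
 * request the worker. Requesting it again before it runs changes nothing. */
static void notify_deferred(struct model_dev *d, int page, unsigned int ev)
{
    atomic_fetch_or(&d->pending[page], ev);
    atomic_store(&d->work_queued, true);
}

int fault_handler(struct model_dev *d, int page)
{
    int ret = dev_lock(d);
    if (ret)
        return ret;
    /* ...fault status would be read and cleared here... */
    notify_deferred(d, page, EV_FAULT);
    dev_unlock(d);
    return 0;
}

/* Step 3, run later with the lock NOT held. The get_voltage() call
 * stands in for a notifier callback; event bits are not inspected here. */
int notify_worker(struct model_dev *d)
{
    if (!atomic_exchange(&d->work_queued, false))
        return 0;
    for (int page = 0; page < NPAGES; page++) {
        if (atomic_exchange(&d->pending[page], 0u) == 0u)
            continue;
        int ret = get_voltage(d, page, &d->last_seen_mv);
        if (ret)
            return ret;
        d->delivered++;
    }
    return 0;
}
```

Two faults on the same page before the worker runs produce a single delivery, and the observer's call into the locked helper succeeds because the worker never holds the lock while it notifies.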

The removal of the unnecessary linux/of.h include is a small housekeeping change, but it is still worth noting. It suggests the patch was reviewed with an eye toward making the code leaner and better scoped, not merely functionally safe. Those little cleanups are often a sign that maintainers were already taking the surrounding code seriously.

Affected Systems and Likely Exposure

The CVE description ties the problem to the Linux kernel’s PMBus and regulator code paths, which means exposure depends heavily on the hardware in use. Systems with PMBus-compatible power-management components, or those using kernel builds that include the affected code, are the most plausible candidates. That naturally points more toward servers, embedded platforms, and vendor-customized systems than toward generic desktop installations.

The vulnerable range in the public advisory information is shown relative to upstream commit history, with the issue affecting code before the fixes identified by the stable references. That is typical for Linux CVEs: the important question is not only which kernel version you run, but whether your vendor has backported the repair into its build. In practice, enterprises need to track both upstream lineage and distro-specific packaging.

Enterprise versus consumer impact

For enterprises, this is the kind of issue that belongs in the “patch promptly” category. Even if exploitation is not the main concern, a kernel deadlock in a power-management path can cause service interruptions, node instability, or spurious hardware faults in fleets that depend on predictable telemetry. For consumers, the impact is likely narrower, but laptops, mini-PCs, and prosumer workstations with vendor PMBus integrations are not automatically immune.

It is also worth remembering that hardware monitoring bugs can be deceptively widespread in a vendor ecosystem. The same underlying kernel code may be present in cloud images, appliance firmware, and OEM distributions even when the user never notices the PMBus subsystem by name. That makes advisories like this one important to track by component, not just by package name.

What administrators should verify

- Whether the deployed kernel includes the PMBus fix backport.

- Whether the platform actually uses PMBus-based regulator support.

- Whether vendor errata mention the relevant stable commits.

- Whether any rebootless patching mechanism is available for the environment.

- Whether the system has logs showing related hangs or fault-handler anomalies.
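For the platform check, one quick heuristic on a running system is to look for the PMBus core module in /proc/modules. The sketch below assumes the core is built as a loadable module named pmbus_core; verify that name against your vendor's kernel. A kernel with the core built in will not list it, and a positive result says nothing about whether the fix has been backported, so treat this as triage rather than a vulnerability check.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/* True if `text` (all or part of /proc/modules) has a line whose first
 * field is exactly `name`. Each line starts with the module name
 * followed by a space. */
bool module_listed(const char *text, const char *name)
{
    size_t len = strlen(name);
    const char *line = text;

    while (line != NULL && *line != '\0') {
        if (strncmp(line, name, len) == 0 && line[len] == ' ')
            return true;
        line = strchr(line, '\n');
        if (line != NULL)
            line++;  /* move to the start of the next line */
    }
    return false;
}

/* Returns 1 if pmbus_core is listed, 0 if not, -1 if /proc/modules
 * cannot be opened. A built-in (=y) PMBus core never appears here. */
int pmbus_core_loaded(void)
{
    char line[512];
    int found = 0;
    FILE *f = fopen("/proc/modules", "r");

    if (f == NULL)
        return -1;
    while (!found && fgets(line, sizeof line, f) != NULL)
        found = module_listed(line, "pmbus_core");
    fclose(f);
    return found;
}
```

Matching the whole first field matters: a line for the generic pmbus driver must not be mistaken for pmbus_core, and vice versa.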

Why the Linux Kernel Community Handles Bugs Like This Well

One reason Linux remains resilient is that its maintainers are often willing to refactor code when the bug demands it. This CVE is a good example: the correct fix was not just a local lock insertion, but a modest redesign of notification sequencing. That kind of response shows the advantage of a mature upstream process that can tolerate surgical but meaningful changes.

The fix also illustrates the value of stable backporting. The advisory points to multiple kernel.org stable references, which suggests the change was treated as something that should land beyond just the immediate development branch. That is essential in kernel security work, where a fix that remains only in mainline leaves too many deployed systems exposed.

Concurrency hygiene as a maintenance discipline

Kernel developers spend a lot of time preventing one fix from becoming another bug. That is why changes involving mutexes, notifier chains, and workqueues are reviewed so carefully. In this case, the redesign preserves the semantics of voltage access while moving the riskier observer work out of the critical path.

It is also a reminder that apparently “innocent” helper functions can become choke points. A helper like pmbus_regulator_get_voltage may look read-only from a distance, but if it touches shared state or hardware registers, it belongs inside the subsystem’s concurrency plan. Kernel code rarely fails because of one large mistake; it usually fails because one small assumption was made in the wrong layer.

The best part of this fix is that it keeps the design understandable. Anyone reading the code later can see that locking protects state, while a worker handles notifications. That clarity reduces the chance of future regressions, which is often the real measure of a good kernel patch.

Strengths and Opportunities

The fix for CVE-2026-31486 is stronger than a narrow one-line patch because it addresses both the race and the deadlock hazard together. It also improves the maintainability of the PMBus regulator path by separating state protection from callback delivery. That kind of structural cleanup can pay dividends well beyond this single CVE.

- Correctness-first redesign rather than superficial locking.

- Deadlock avoidance through deferred notifications.

- Atomic event tracking that reduces shared-state ambiguity.

- Better lifecycle management via device-managed cleanup.

- Cleaner subsystem boundaries between mutators and observers.

- Improved robustness under fault-handler and notifier pressure.

- Potentially lower regression risk in future PMBus changes.

Risks and Concerns

Even though the issue is fixed upstream, the main concern is patch latency across the broader ecosystem. Kernel CVEs often linger in vendor trees, especially when a fix touches shared subsystems that require careful backport validation. That means the real-world exposure window can remain open long after the public disclosure date.

- Backport gaps in distro kernels and OEM builds.

- Hidden hardware exposure on PMBus-capable systems.

- Regression risk from asynchronous worker changes.

- Operational instability if the bug manifests as intermittent hangs.

- Vendor packaging delays for long-lived enterprise releases.

- Testing blind spots because the issue depends on timing and callbacks.

- Incomplete inventory of systems that actually use the affected path.

Looking Ahead

The next thing to watch is whether downstream distributors and cloud vendors fold the fix into their kernels quickly and cleanly. Since the advisory lists stable kernel references, the expectation is that backports will appear, but the timing will vary widely by vendor and by product lifecycle. For infrastructure operators, that means the important date is not the publication date alone, but the date the fix actually lands in the deployed build.

It will also be worth seeing whether the PMBus cleanup inspires similar refactors elsewhere in the hardware-monitoring stack. Kernel maintainers often use one concurrency bug as a template for improving neighboring code paths, and this patch’s split between state locking and deferred notification is a pattern that could be reused. When that happens, the value of a CVE extends beyond its immediate fix.

What to watch next

- Stable backports in major Linux distribution kernels.

- Vendor advisories that map the fix to product-specific builds.

- Any follow-up PMBus or regulator patches that extend the same pattern.

- Reports of hangs or fault-handler issues on PMBus-heavy systems.

- Whether NVD later assigns a finalized CVSS score.
