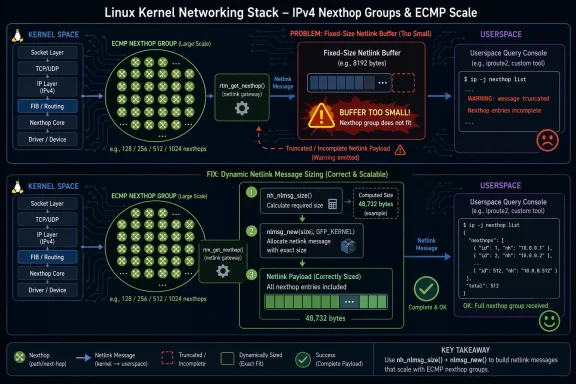

The Linux kernel has disclosed CVE-2026-31531, a networking bug in the IPv4 nexthop path that can trigger a kernel warning when users query very large nexthop groups through RTM_GETNEXTHOP. The issue is not a dramatic memory-corruption headline, but it is still a meaningful correctness and stability problem: the kernel was using a fixed-size skb allocation with NLMSG_GOODSIZE, which could be too small for large Equal-Cost Multi-Path nexthop groups. The fix switches to dynamic allocation with nh_nlmsg_size and nlmsg_new, and it also corrects size accounting for group metadata, including NHA_FDB. The record is still marked for NVD enrichment, so the public severity picture remains incomplete, but the technical root cause is already clear.

At first glance, CVE-2026-31531 looks like a narrow routing-introspection bug. In practice, it is another reminder that Linux networking correctness lives and dies on careful accounting, especially when the kernel has to serialize complex objects into netlink messages. The affected path, rtm_get_nexthop, is supposed to return the state of a nexthop object to userspace, and that includes single nexthops as well as large multipath groupings. When the object graph grows beyond a small size, a static allocation that once seemed “good enough” turns into a brittle assumption.

The description says the warning fires when the kernel tries to fit a large group into a buffer sized for the ordinary case. That is the kind of bug that tends to sit quietly until someone exercises an edge case at scale. In routing systems, those edge cases are not hypothetical; large ECMP sets are common in spine-leaf fabrics, router stacks, and traffic engineering deployments where dozens or hundreds of nexthops are legitimate design choices.

The fix is elegant because it does not rework the subsystem. Instead, it aligns the query path with the notification path, which already sizes messages dynamically. That symmetry matters. When a kernel API has separate code paths for “tell userspace something changed” and “show userspace the current object,” both paths should agree on how large the resulting netlink payload can be. If they do not, the code becomes a maintenance trap, and those traps are where a lot of Linux kernel CVEs are born.

The record also mentions that the issue could not be reproduced with **iproute2** because the tool itself caps group size and hits a client-side bound first. That detail is important because it suggests the vulnerability is not a normal administrator footgun. It is a kernel-side correctness bug hiding behind a tooling limit, which means the real exposure may be more visible in automation, custom tooling, or future userland that does not share iproute2’s current constraint.

Background

Linux nexthops are part of the routing machinery that tells the kernel how to reach destinations efficiently. Instead of treating every route as a single next hop, the modern API can represent independent nexthops, resilient groups, and ECMP-style collections that share traffic across multiple paths. That flexibility helps operators build highly available networks, but it also increases the amount of metadata the kernel has to pack and unpack whenever userspace asks for state.

The modern nexthop API was designed to be more expressive than older route formats, and that expressiveness comes with a price: dumps and notifications can become much larger than the simple cases developers first optimize for. The kernel documentation for nexthop groups emphasizes that RTM_GETNEXTHOP is used for requests just like other nexthop operations, and that resilient groups may contain enough internal state to make sizing and serialization nontrivial. In other words, message size is not a side concern; it is part of the API contract.

The Linux kernel’s CVE process also helps explain why an issue like this receives a CVE at all. The kernel project is deliberately cautious and assigns CVEs to fixes that may have security relevance even when exploitation is not obvious at the time of the patch. The documentation is explicit that CVEs are generally assigned after a fix lands in a stable tree, and that the team considers almost any kernel bug potentially security-relevant depending on context. That policy makes this kind of “only a warning” issue part of the same security conversation as more obviously dangerous flaws.

Microsoft’s Security Update Guide is one of the places enterprise teams encounter these kernel issues in mixed estates, even when the underlying bug lives in Linux. Microsoft has described the Security Update Guide as a centralized source for public vulnerability information, and it supports CVEs assigned by industry partners as well as Microsoft’s own disclosures. For organizations that track Linux alongside Windows and Azure assets, that matters: a kernel routing issue can appear in the same operational workflow as a Windows patch item.

The practical takeaway is that CVE-2026-31531 is not about a flashy exploit primitive. It is about how the kernel’s routing stack handles scale. Modern networking code depends on both fast-path efficiency and correct serialization, and even a small mismatch between object size and buffer size can become a warning, a dropped response, or a broader reliability headache if left uncorrected.

What Went Wrong

The bug sits in a classic kernel pattern: build a netlink reply into an skb, then send it back to userspace. That seems simple until the object being described has variable-length components. A single nexthop is easy to size. A nexthop group with many members, flags, and associated attributes is not. The CVE description says the kernel used NLMSG_GOODSIZE for the allocation, which is a reasonable shortcut for typical payloads but not a safe upper bound for large groups.

The warning splat in the advisory shows that the failure is visible in rtm_get_nexthop, not buried in unrelated plumbing. That means the issue is directly tied to reply construction rather than later transmission. In security terms, that makes the bug cleaner to reason about and easier to backport. It is a correctness failure in one well-defined function, not a long chain of side effects spread across the stack.

Why fixed-size assumptions fail

The kernel can often get away with a fixed-size envelope for “common” cases, but routing objects are increasingly heterogeneous. A nexthop group can contain enough members and attributes to exceed the old buffer limit, especially once the system starts adding flags that expand the encoded message. The serialized payload then no longer fits the assumed space, and the warning is the kernel’s way of telling you that the invariant has been broken.

This is exactly the sort of bug that looks harmless in a code review and then becomes a production problem later. The code is not necessarily unsafe in the memory-corruption sense. Instead, it violates a hidden assumption about maximum message size. Hidden assumptions are a recurring theme in kernel security because they often survive for years until someone builds a configuration large enough to expose them.

The description also notes that the issue was not reproducible through iproute2 because the utility itself rejects messages that exceed its own bound of 1048 bytes. That is a fascinating operational detail. It means the kernel bug lived behind the standard toolchain, which can lull teams into thinking the path is impossible to hit when in reality the guardrail simply exists in one client and not in the kernel contract itself.
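To make the size mismatch concrete, here is a rough back-of-the-envelope sketch in Python. The header and structure sizes are the standard netlink and nexthop UAPI layouts; the 4096-byte page is an assumption (NLMSG_GOODSIZE is at most one page minus skb overhead on such systems, so a full page is a generous ceiling), and `min_group_reply_size` is a hypothetical helper that counts only the headers, NHA_ID, and NHA_GROUP, so the real reply is larger still.

```python
# Netlink framing constants from the Linux UAPI headers.
NLMSG_HDRLEN = 16      # sizeof(struct nlmsghdr)
NLA_HDRLEN = 4         # sizeof(struct nlattr)
NLA_ALIGNTO = 4        # attributes are padded to 4-byte boundaries
NHMSG_LEN = 8          # sizeof(struct nhmsg)
NEXTHOP_GRP_LEN = 8    # sizeof(struct nexthop_grp)

# Assumption: 4096-byte pages. NLMSG_GOODSIZE is at most one page minus
# skb bookkeeping overhead, so a full page is a generous upper bound.
ASSUMED_PAGE_SIZE = 4096


def nla_total_size(payload_len: int) -> int:
    """Attribute header plus payload, rounded up to the alignment."""
    unaligned = NLA_HDRLEN + payload_len
    return (unaligned + NLA_ALIGNTO - 1) & ~(NLA_ALIGNTO - 1)


def min_group_reply_size(members: int) -> int:
    """Lower bound on a group reply: headers, NHA_ID, and NHA_GROUP only."""
    return (NLMSG_HDRLEN + NHMSG_LEN
            + nla_total_size(4)                            # NHA_ID (u32)
            + nla_total_size(members * NEXTHOP_GRP_LEN))   # NHA_GROUP array


for n in (8, 64, 512):
    size = min_group_reply_size(n)
    verdict = "fits" if size <= ASSUMED_PAGE_SIZE else "exceeds one page"
    print(n, size, verdict)
```

Under these assumptions the member array alone for 512 nexthops is 4096 bytes, so even this lower bound no longer fits in a single page, let alone in a page minus allocator overhead.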

The Fix Strategy

The upstream fix does two important things. First, it replaces the static skb allocation with nlmsg_new, using a dynamically computed size from nh_nlmsg_size. Second, it adjusts the size helpers so they calculate the needed bytes based on the flags actually present. That is a textbook kernel fix: remove the guesswork, size the message from the object’s real shape, and let the allocator do the right thing.

The change also makes the query path consistent with nexthop_notify, which already uses dynamic sizing behavior. That consistency matters more than it may appear. When a kernel subsystem has two code paths that serialize the same object type, they should agree on bounds and structure as closely as possible. Divergence between “dump” and “notify” paths is often how subtle bugs linger.
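The difference between the two allocation strategies can be illustrated with a small userspace model. This is only a sketch of the pattern, not kernel code: `ReplyBuffer`, `encode_group`, `reply_fixed`, and `reply_sized` are hypothetical names, the 8-bytes-per-member encoding is a simplification, and the 2048-byte guess is an arbitrary stand-in for a fixed constant.

```python
class ReplyBuffer:
    """Toy stand-in for a reply buffer with a hard capacity (not a kernel API)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.data = bytearray()

    def put(self, chunk: bytes) -> bool:
        """Append chunk; refuse if it would overflow the capacity."""
        if len(self.data) + len(chunk) > self.capacity:
            return False
        self.data += chunk
        return True


def encode_group(member_ids) -> bytes:
    """Hypothetical encoding: 8 bytes per member (u32 id plus 4 zero bytes)."""
    return b"".join(i.to_bytes(4, "little") + bytes(4) for i in member_ids)


def reply_fixed(member_ids, fixed_capacity: int):
    """Old pattern: pick a capacity up front, discover the overflow later."""
    buf = ReplyBuffer(fixed_capacity)
    return buf if buf.put(encode_group(member_ids)) else None


def reply_sized(member_ids) -> ReplyBuffer:
    """New pattern: compute the exact size first, then allocate to match."""
    needed = 8 * len(member_ids)
    buf = ReplyBuffer(needed)
    if not buf.put(encode_group(member_ids)):
        raise RuntimeError("size helper disagrees with the encoder")
    return buf


FIXED_GUESS = 2048  # arbitrary illustrative constant, not NLMSG_GOODSIZE

small = list(range(1, 65))    # 64 members
large = list(range(1, 513))   # 512 members

print(reply_fixed(small, FIXED_GUESS) is not None)
print(reply_fixed(large, FIXED_GUESS) is not None)
print(len(reply_sized(large).data))
```

The sized variant only works if the size computation and the encoder agree, which is why the same patch also had to touch the size helpers.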

Size accounting is the real security boundary

A lot of kernel bugs are really accounting bugs wearing a security costume. Here, the dangerous assumption is not about a malicious pointer or an attacker-controlled length field in a parser. It is about the kernel underestimating the size of a legitimate object that it itself created. That kind of bug can still destabilize a system, and in privileged code, instability is security-relevant even when exploitability is not immediately obvious.

The mention of NHA_FDB is also notable. The advisory says the group size calculation was missing the size contribution of that attribute, so the fix adds it. That is a small detail with outsized significance: the original sizing code was not merely optimistic; it was incomplete. Small omissions like that are why dynamic calculation is usually preferable in kernel message assembly.

There is a wider lesson here for maintainers: a static constant like NLMSG_GOODSIZE is often only a proxy for reality. It can be useful as a common-case heuristic, but once the payload structure grows, the allocator must be driven by precise object accounting. Otherwise, the code drifts into “works until it doesn’t” territory, which is a familiar precondition for kernel CVEs.
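The invariant at stake is that a size helper must return exactly what the serializer emits. The sketch below models that in Python with the standard netlink attribute layout (a 4-byte header, padding to 4 bytes) and the NHA_GROUP and NHA_FDB type values from the nexthop UAPI header. The function names are hypothetical, and `size_incomplete` only imitates the class of omission described above; it is not the kernel's former code.

```python
import struct

NLA_HDRLEN = 4
NHA_GROUP = 2   # attribute type values from the nexthop UAPI header
NHA_FDB = 11


def nla_align(n: int) -> int:
    """Round up to the 4-byte netlink attribute alignment."""
    return (n + 3) & ~3


def put_attr(out: bytearray, attr_type: int, payload: bytes = b"") -> None:
    """Append one attribute: u16 length, u16 type, payload, then padding."""
    length = NLA_HDRLEN + len(payload)
    out += struct.pack("=HH", length, attr_type) + payload
    out += bytes(nla_align(length) - length)


def fill_group(member_ids, is_fdb: bool) -> bytes:
    """Serialize the group attributes the way a reply would carry them."""
    out = bytearray()
    # struct nexthop_grp: u32 id, u8 weight, u8 and u16 of extra fields
    entries = b"".join(struct.pack("=IBBH", i, 0, 0, 0) for i in member_ids)
    put_attr(out, NHA_GROUP, entries)
    if is_fdb:
        put_attr(out, NHA_FDB)  # flag attribute: header only, no payload
    return bytes(out)


def size_incomplete(member_ids, is_fdb: bool) -> int:
    """Sizing helper that forgets the NHA_FDB flag (illustrative omission)."""
    return nla_align(NLA_HDRLEN + 8 * len(member_ids))


def size_complete(member_ids, is_fdb: bool) -> int:
    """Sizing helper that accounts for every attribute the filler emits."""
    size = nla_align(NLA_HDRLEN + 8 * len(member_ids))
    if is_fdb:
        size += nla_align(NLA_HDRLEN)  # zero-length flag attribute
    return size


ids = [1, 2, 3]
emitted = len(fill_group(ids, is_fdb=True))
print(emitted, size_incomplete(ids, True), size_complete(ids, True))
```

A flag attribute costs only four bytes, which is exactly why it is easy to forget and why a test that compares the helper against the serializer is worth having.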

Why Large Nexthop Groups Matter

Large nexthop groups are not an academic curiosity. They are part of how modern networks spread traffic across multiple paths, improve failover behavior, and maintain performance under load. In a large fabric, a group of 512 nexthops is not an anomaly; it is a natural result of scale, policy design, or hardware topology. That is why the advisory’s mention of 512 nexthops is so important. It anchors the bug in realistic high-scale networking, not just synthetic test cases.

The Linux nexthop framework is used to represent objects more flexibly than older route structures, and that flexibility means more metadata and larger serialized messages. Resilient nexthop groups in particular are meant to preserve forwarding stability while traffic or topology changes. That is a serious enterprise feature set, and it relies on the kernel being able to describe large groups back to userspace without tripping over its own buffer assumptions.

Real-world implications for operators

If your environment uses advanced routing automation, this bug matters even if iproute2 does not trigger it. Custom orchestration, vendor control planes, and future utilities may query the same kernel path differently. A kernel warning in a routing control plane is not just a cosmetic issue; it can affect diagnostics, observability, and operator confidence.

It is also worth separating consumer and enterprise impact. Typical desktops are unlikely to maintain giant nexthop groups, and many consumer systems will never touch this code path. But routers, appliances, virtual network edges, SD-WAN nodes, and cloud-hosted gateways absolutely can. For those systems, the bug sits squarely in the operational path that matters most.

The practical concern is not just a warning message. Any failure in a control-plane query can complicate automation, inventory, and health checks. In large environments, those are the exact systems that need to be reliable under scale, because they help administrators decide whether the data plane is healthy and whether failover logic is behaving as expected.
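For teams auditing their own tooling, it helps to see how little it takes to query this path without iproute2. The following is a minimal sketch of building an RTM_GETNEXTHOP request by hand in Python; the numeric constants come from the Linux UAPI headers, while `build_get_nexthop` and `query_nexthop` are hypothetical helper names. The query function is Linux-only, returns the raw reply without parsing it, and is not meant as a reproduction recipe.

```python
import struct

RTM_GETNEXTHOP = 106   # from linux/rtnetlink.h
NLM_F_REQUEST = 0x01   # from linux/netlink.h
NHA_ID = 1             # from linux/nexthop.h


def build_get_nexthop(nh_id: int, seq: int = 1) -> bytes:
    """Build an RTM_GETNEXTHOP request for a single nexthop id."""
    # struct nhmsg: family, scope, protocol, reserved, flags (all zero here)
    nhmsg = struct.pack("=BBBBI", 0, 0, 0, 0, 0)
    # NHA_ID attribute: 4-byte header plus a u32 payload, already aligned
    attr = struct.pack("=HHI", 8, NHA_ID, nh_id)
    payload = nhmsg + attr
    # struct nlmsghdr: total length, type, flags, sequence, port id
    header = struct.pack("=IHHII", 16 + len(payload), RTM_GETNEXTHOP,
                         NLM_F_REQUEST, seq, 0)
    return header + payload


def query_nexthop(nh_id: int, recv_size: int = 65536) -> bytes:
    """Send the request on a NETLINK_ROUTE socket and return the raw reply.

    Linux only; the reply may be a nexthop message or a netlink error.
    """
    import socket
    with socket.socket(socket.AF_NETLINK, socket.SOCK_RAW,
                       socket.NETLINK_ROUTE) as sock:
        sock.bind((0, 0))
        sock.send(build_get_nexthop(nh_id))
        return sock.recv(recv_size)


request = build_get_nexthop(42)
print(len(request))
```

The request itself is tiny. It is the kernel's reply that grows with the group, which is why a client-side bound on outgoing messages says nothing about how large a reply the kernel must be able to build.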

Why This Is Not Reproduced by iproute2

The advisory explicitly states that **iproute2** limits group size, so the command fails before the kernel does. That matters because it explains why the issue may have stayed hidden longer than expected. If the standard userland tool refuses to send an oversized request, the kernel bug becomes invisible to the usual testing path.

This is a classic example of tooling masking a defect. A tool can be more conservative than the kernel API it talks to, and that conservatism is usually good. But it can also reduce the chance that a kernel-side defect is discovered in normal QA. In this case, the bug was still real; it just lived behind a userland limit.

What this tells us about test coverage

The strongest lesson is that control-plane code needs tests that push beyond convenience bounds. If a path can theoretically accept large nexthop groups, then the kernel should be validated against large nexthop groups, even when a particular toolchain does not currently emit them. Otherwise, the code may look healthy for years while silently relying on a userland cap.

A second lesson is that kernel warning paths matter. Some teams dismiss WARN splats as non-events because the system keeps running. But warnings in serialization code often signal latent contract breaks. In this case, the warning is telling maintainers that the reply path cannot safely assume a fixed buffer. That is precisely the kind of signal that should trigger a fix before a larger regression appears.

Enterprise and Cloud Impact

For enterprise operators, the relevant question is whether the routing stack ever needs to inspect or export large nexthop groups. If it does, then the bug can affect visibility and reliability even if it never becomes an outage. Routing APIs are the nervous system of network automation, and a reply-path warning in that nervous system can cause noisy logs, failed queries, or broken observability pipelines.

Cloud and virtualization environments deserve special attention. Large-scale forwarding setups frequently use ECMP or similar group-based designs to distribute traffic across many paths. That means an apparently niche bug in nexthop dumping can matter more on infrastructure hosts than on the kinds of endpoints most people think about first. The bigger and more automated the environment, the more valuable accurate kernel state reporting becomes.

Operationally, this is what teams should care about

- Whether the kernel build contains the dynamic sizing fix.

- Whether any custom tooling queries large nexthop groups directly.

- Whether routing observability depends on nexthop dumps.

- Whether vendor kernels have backported the same message-sizing logic.

- Whether large ECMP configurations are present in production, staging, or lab networks.

There is also a broader enterprise management angle. Microsoft’s Security Update Guide makes Linux-related vulnerabilities visible in a workflow many defenders already use, which reduces the chance that a kernel issue gets ignored simply because it is not a Windows patch. That matters for mixed estates, where one operations team may own everything from endpoint fleets to Linux gateways. ([msrc.microsoft.com](https://msrc.microsoft.com/blog/2024/02/new-security-advisory-tab-added-to-the-microsoft-security-update-guide/))

Strengths and Opportunities

The strongest part of the fix is that it is surgical. It changes the allocation strategy without altering the nexthop feature set, which lowers regression risk and makes backporting easier for stable kernels. That is exactly the kind of patch downstream vendors like to carry.

The second strength is that the bug is easy to explain to operators. That sounds minor, but it is a real advantage in incident response. Teams can quickly understand that the issue is about message size and large nexthop groups, not a vague “kernel networking problem.” Clarity accelerates patch decisions.

The third strength is that the fix aligns query behavior with notification behavior. Consistency across code paths reduces the chance of future drift, and drift is often the hidden root of later defects. Kernel maintainers routinely prefer fixes that make separate paths obey the same sizing logic.

- Easier stable-tree backporting.

- Lower risk of side effects than a larger refactor.

- Better consistency between dump and notify paths.

- More accurate sizing for future attribute growth.

- Improved supportability in large ECMP environments.

- Reduced chance of repeat bugs from incomplete accounting.

- Better fit for vendors maintaining long-term kernels.

Risks and Concerns

The main risk is underestimating the operational significance because the issue is not a remote code execution bug. That would be a mistake. In a routing control plane, a warning caused by incorrect sizing can still disrupt automation, reduce trust in telemetry, and trigger failover or troubleshooting behavior that wastes time at the worst possible moment.

A second concern is patch lag. Even when upstream has the fix, real-world exposure depends on when distribution and vendor kernels absorb it. Linux users rarely run pristine upstream trees; they run backported, certified, or appliance-specific builds, and those can lag for weeks or months. ([kernel.org](https://kernel.org/doc/html/next/process/cve.html))

A third concern is blind spots created by tooling limits. If the common admin tool refuses to generate large requests, teams may conclude the bug is impossible to reach. That kind of assumption is dangerous because it confuses one implementation’s restriction with the kernel’s actual contract.

- Vendor backports may trail the upstream fix.

- Control-plane automation may use different query paths than iproute2.

- Large nexthop groups are more common in real infrastructure than in lab demos.

- Kernel warnings can hide deeper operational fragility.

- NVD enrichment lag leaves severity uncertain for now.

- Future tools may expose the bug more readily than current ones.

- Misreading “not reproducible in iproute2” could delay remediation.

Looking Ahead

The immediate next thing to watch is backport status across supported Linux branches. Because the CVE record points to stable references, the fix should propagate through the normal kernel security pipeline, but the important question for real deployments is when vendor kernels pick it up and how they document the change.

The second thing to watch is whether other nexthop dump paths rely on similar fixed-size assumptions. Once one serialization path is shown to be too small for large groups, maintainers often audit neighboring code for the same pattern. That kind of follow-up is healthy, because it catches related problems before they become separate CVEs.

The third item is userland evolution. Today, iproute2’s bound masks the bug in routine use, but tooling changes over time. If a future version relaxes limits or adds support for larger group encodings, the kernel path will need to be ready. That is another reason dynamic sizing is the safer long-term answer.

- Watch for downstream kernel advisories and backport notes.

- Check whether your routing automation ever dumps large nexthop groups.

- Review whether custom tooling bypasses current iproute2 limits.

- Monitor adjacent netlink serialization helpers for similar sizing bugs.

- Confirm whether your production kernels include the nh_nlmsg_size and nlmsg_new changes.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center