The Azure Monitor Agent (AMA) has landed on Microsoft’s security radar again, this time through CVE-2026-32168, an Elevation of Privilege issue that MSRC says should be evaluated using the “degree of confidence” metric attached to the vulnerability entry. That framing matters because it tells customers not just how severe the impact could be, but how certain Microsoft is that the weakness is real and technically understood. In practical terms, this is the kind of issue that can carry outsized operational risk in cloud and hybrid estates, especially because AMA is embedded in modern monitoring workflows across Azure, Arc, and Windows environments. Microsoft’s own documentation confirms that AMA is the supported agent for collecting guest OS data and that it is deployed broadly through VM extensions, Windows client installers, and Azure Arc scenarios.

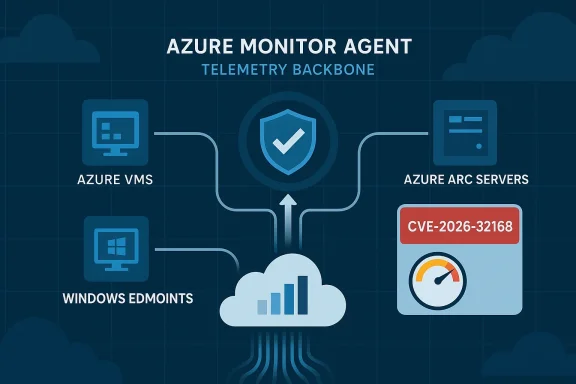

Overview

Azure Monitor Agent is not a niche utility. It is the telemetry backbone for a wide range of Azure monitoring and security use cases, from VM insights to Microsoft Sentinel integrations, and Microsoft documents it as the supported replacement for the legacy Log Analytics agent. That wide deployment surface is exactly why a privilege escalation vulnerability in the agent matters more than an isolated flaw in a rarely used component. If an attacker can move from a lower-privileged context to a higher one on a monitored machine, the monitoring layer itself becomes part of the attack path rather than just the defense stack.

The vulnerability entry’s confidence-based metric is especially important because it is not just a severity label. It is an assessment of how well the technical issue is understood and how credible the evidence is. In other words, a published CVE can exist in a spectrum ranging from “the problem is suspected” to “the problem is fully confirmed and exploitable under defined conditions.” Microsoft’s security ecosystem has used similar public disclosures in the past when the company had enough confidence to assign a CVE but still wanted to temper assumptions about exploitability.

For enterprise administrators, the immediate significance is less about the abstract CVSS-style score and more about where AMA runs and what it touches. AMA commonly runs with elevated system-level privileges because it must collect logs, performance data, and security signals from the host. That makes the agent a high-value target: if a local attacker can exploit a flaw in the service, they may be able to cross a privilege boundary and gain stronger control over the machine or the surrounding monitoring pipeline.

For consumers and small businesses using Windows client monitoring, the story is similar but narrower. The Windows client installer is used on supported Windows 10 and Windows 11 systems, and Microsoft notes that AMA on client devices still launches and manages processes through a dedicated Windows service. That means the issue is not limited to Azure-hosted servers; it can also matter on enrolled endpoints that feed data into a cloud monitoring workflow.

Background

Azure Monitor Agent became the strategic replacement for older Microsoft monitoring technologies because organizations wanted a single, modern telemetry path that works across Azure VMs, on-premises servers, and Azure Arc-connected systems. Microsoft’s documentation presents AMA as the agent for guest OS monitoring and notes that it integrates with Azure Monitor, Microsoft Sentinel, and Microsoft Defender for Cloud. That unification simplifies deployment, but it also concentrates risk in one agent family.

Historically, monitoring agents have often been privileged by design. They need access to event logs, system metrics, and local services, and they frequently include background daemons or Windows services that run as SYSTEM or an equivalent high-trust identity. That is a useful operational pattern, but it also means a bug in the agent can have consequences beyond ordinary application-level compromise. Microsoft’s own Azure Monitor guidance shows AMA being deployed through VM extensions and Arc extension channels, reinforcing that it sits close to the management plane.

The cloud security industry has seen this pattern before. Microsoft has previously disclosed privilege escalation issues in Azure services where a local or scoped flaw could become a broader foothold, such as issues in Azure Synapse Spark and Azure Database for PostgreSQL Flexible Server. Those cases illustrate a recurring principle: when a service is designed to automate trust, any mistake in privilege handling can become a serious escalation path.

The confidence metric attached to CVE-2026-32168 suggests that Microsoft is not merely reacting to a theoretical issue. Even if the public advisory is sparse, the existence of a CVE usually indicates that Microsoft has enough evidence to formalize the problem and track remediation. That does not automatically mean there is active exploitation in the wild, but it does mean administrators should treat the issue as real rather than speculative.

Why confidence matters

A vulnerability with a high-confidence identification is different from a vague rumor or an unverified research lead. The more certain the technical root cause, the easier it is for defenders to estimate exploitability, scope the affected versions, and prioritize patching. Conversely, a low-confidence disclosure can still matter, but it may require more caution before drawing operational conclusions.

Why AMA is a sensitive target

AMA is a security-sensitive component because it touches telemetry, access controls, and service orchestration. It is often trusted to read from local resources and ship data to central workspaces, and in many environments that data includes event logs that security teams rely on for detection and incident response. If an attacker compromises the agent, they may gain a vantage point inside the monitoring chain itself.

What Microsoft’s documentation tells us

Microsoft’s public documentation makes clear that the Azure Monitor Agent is widely supported across Windows Server, Windows client, Azure Local, and Azure Arc scenarios. The supported operating systems page lists Windows Server 2025, 2022, 2019, 2016, and Windows 10/11 client support, which highlights how broad the footprint can be in real environments. That breadth is an asset for standardization, but it also means one flawed component can affect many estates at once.

The Windows client installation guide is also revealing because it describes the agent service as the component that launches and manages AMA processes. In security terms, that tells us the agent is not just a passive collector; it is an active service boundary with process management responsibilities. Any flaw in how it launches, coordinates, or secures subprocesses can become an elevation vector.

Microsoft’s broader Azure Monitor pages reinforce that AMA is central to telemetry pipelines for Azure Monitor and Microsoft Sentinel. The enterprise monitoring architecture documentation explicitly says Sentinel uses the same Log Analytics workspace and AMA architecture as Azure Monitor, which means a flaw in the agent can influence both observability and security analytics workflows. That is strategically important because monitoring infrastructure often becomes a trust anchor during incident response.

Deployment surfaces that increase exposure

AMA can be installed through multiple mechanisms, including VM extensions, Azure Policy-driven deployment, and Azure Arc extension workflows. That flexibility helps operations teams scale faster, but it also increases the number of places where version drift can happen. In a large enterprise, patching the agent everywhere it exists may be harder than patching a single standalone app.

Why this is not just a Windows problem

Although the issue name references Azure Monitor Agent in general, the operational reality is cross-platform and hybrid. Microsoft documents support for both Windows and Linux environments, and it explicitly positions AMA as the agent used across Azure, other clouds, and on-premises through Azure Arc. That means administrators should not assume the exposure is confined to one asset class.

Interpreting the CVE confidence metric

The wording in the CVE description is important because it frames the vulnerability as a measurement of confidence in the existence and technical credibility of the flaw. That is not the same thing as a final exploitability verdict. A high-confidence issue may still be difficult to weaponize, but defenders can be more certain that the underlying defect exists and needs attention.

In the real world, confidence levels help bridge the gap between vulnerability disclosure and operational response. Security teams do not only ask, “Is this severe?” They also ask, “How sure are we that this is real, where does it live, and which systems should we prioritize first?” A credible, confirmed EoP on a monitoring agent will usually outrank a vague theoretical flaw in a low-value component.

The Microsoft ecosystem has a pattern of publishing advisories and blog posts that explain both the technical flaw and the mitigation path when an issue is significant enough. In previous cloud security incidents, Microsoft has described the attack path, the impacted service, and the steps taken to mitigate the problem across the fleet. That history suggests that when the company chooses to publish a CVE for an Azure service, it is generally signaling that remediation is underway or already available.

What confidence implies for defenders

Defenders should treat confidence as a prioritization signal, not a comfort blanket. High confidence can indicate that attacker knowledge is also likely to be mature, which can compress the window between disclosure and exploitation. That is the part security teams should care about most, because patch lag becomes the real risk multiplier.

What confidence does not tell you

The metric does not, by itself, explain the root cause, the exploit chain, or the affected build numbers. It also does not reveal whether Microsoft has seen public proof-of-concept code or real-world abuse. Without those details, administrators should resist over-reading the label and instead focus on patch readiness and exposure mapping.

Enterprise impact

The enterprise consequences of an AMA elevation flaw are straightforward but serious. Monitoring agents are often deployed broadly, granted significant local privileges, and trusted by both system operators and security teams. If the agent can be coerced into running code at a higher privilege level, an attacker who already has limited access on a machine may be able to pivot into deeper control of that endpoint.

That matters especially in environments where AMA is used to feed Microsoft Sentinel or centralized Log Analytics workflows. Security monitoring depends on the integrity of the telemetry source, and anything that undermines that source can reduce confidence in alerts, logs, and forensic traces. In that sense, a vulnerability in AMA is not only an endpoint issue; it is also a visibility issue.

Enterprises also need to consider operational heterogeneity. Many organizations run a mix of Azure VMs, Azure Arc servers, Windows client devices, and perhaps older server fleets still supported under extended servicing. Microsoft’s support matrix shows that AMA spans a wide range of versions and deployment models, which means the remediation campaign may need to cross team boundaries and asset inventories.

Security teams should ask three questions

- Which endpoints are running AMA today?

- Which of those endpoints run the agent with the highest privileges?

- Which monitoring and response workflows depend on the integrity of that agent?

Why hybrid estates are harder

Hybrid estates typically have less consistent change control than pure cloud or pure on-premises environments. Some machines may receive extension updates automatically, while others remain pinned due to local policy or connectivity constraints. That creates a patch gap that attackers often exploit first, because the oldest and least-maintained instances are usually the easiest entry point.

Consumer and SMB impact

The consumer angle is narrower but still relevant because Microsoft documents a Windows client installer for AMA on supported Windows 10 and Windows 11 systems. That means the agent is not purely a datacenter technology; it can appear on business laptops, developer workstations, and managed endpoints that participate in enterprise telemetry. If those devices are administratively important, then the vulnerability matters even outside a traditional server farm.

For small and midsize businesses, the main risk is that they often rely on default or semi-managed deployment patterns. They may not have a dedicated vulnerability management program for monitoring agents, and they may not even realize AMA is installed because it can arrive through a broader management toolchain. That makes a disclosure like CVE-2026-32168 potentially more disruptive than it first appears.

There is also a trust issue. When organizations deploy an agent specifically to improve visibility and compliance, they expect that agent to be a source of security assurance rather than a security liability. If a monitoring service can be turned into an escalation step, it creates an awkward inversion: the tool installed to detect threats becomes one that may help deliver them.

Endpoint hygiene becomes part of the response

In smaller environments, the best response is usually disciplined inventory and patch confirmation. Administrators should identify where AMA is installed, whether those systems are on the latest agent build, and whether the endpoints are allowed to update automatically. That is not glamorous work, but it is often the difference between theoretical exposure and a real-world compromise.

The hidden risk of “just telemetry”

It is tempting to treat telemetry software as low-risk infrastructure, but agents like AMA are often among the most privileged components on a machine. They need to see enough of the system to be useful, and that same visibility can become an abuse path if privilege boundaries are imperfect. Security teams should never assume that “monitoring” means “low impact.”

Competitive and market implications

Microsoft’s monitoring stack competes indirectly with third-party observability platforms and security vendors that also depend on agents or collectors. A privilege escalation flaw in a Microsoft agent does not automatically shift market share, but it can affect procurement conversations, especially in security-conscious sectors. Buyers are likely to ask whether they want to centralize telemetry around one vendor’s agent or diversify with multiple collection paths.

The broader industry lesson is that agent trust is becoming a differentiator. Vendors that can prove strong isolation, least-privilege design, and rapid patch delivery gain credibility when public vulnerabilities appear elsewhere. Conversely, a high-profile EoP in a core agent can remind customers that any software sitting close to the host OS deserves the same scrutiny as endpoint protection software.

Microsoft also has an incentive to respond visibly when issues affect core Azure monitoring infrastructure. Azure Monitor and Microsoft Sentinel are key parts of Microsoft’s cloud security story, and their value depends on customer confidence. If customers begin to believe the agent layer is fragile, they may delay migrations from older tooling or insist on extra controls before rolling out new monitoring workloads.

Trust is now a product feature

In cloud infrastructure, trust is productized. Buyers do not simply buy features; they buy assurances about patch cadence, isolation, and blast-radius containment. A vulnerability like CVE-2026-32168 becomes part of that evaluation because it tests whether the vendor can protect the most privileged components in the stack.

What rivals may emphasize

Competing vendors are likely to highlight agent hardening, customer-controlled update channels, and reduced privilege footprints if they can credibly do so. They may also stress modular deployment, which can make it easier to patch or replace one component without reworking the entire telemetry architecture. That is a subtle but important competitive advantage in enterprise monitoring.

Technical context: why elevation bugs matter in agents

Elevation of privilege vulnerabilities are dangerous because they collapse the separation between what an attacker can already do and what they should never be able to do. In the case of a monitoring agent, that boundary is especially sensitive because the agent often touches sensitive logs, credentials-adjacent workflows, and management channels. If a lower-privileged local actor can influence the agent’s behavior, the machine may become more exposed than the organization realizes.

Microsoft’s own historical disclosures show how privilege escalation in cloud and platform services can stem from race conditions, overly permissive permissions, weak isolation, or insecure handling of helper processes. Those patterns do not prove the root cause in CVE-2026-32168, but they do show the kinds of mistakes that often underlie EoP flaws in managed systems. The lesson is to assume complexity, not simplicity.

A monitoring agent’s attack surface often includes installer code, service startup logic, update mechanisms, log handling, and communications with cloud backends. Any one of those can become a vulnerability if privileges are mismatched or trust assumptions are too loose. That is why patching only the visible service binary is sometimes not enough; defenders should understand whether the fix changes the agent’s logic, its service model, or its update path.

Common escalation patterns to watch

- Unsafe file or directory permissions

- Token or handle misuse between service and child processes

- Race conditions during install or update

- Over-privileged helper binaries

- Insecure parsing of local inputs

- Trust boundary confusion between user context and service context
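
The first pattern in the list, unsafe file or directory permissions, is the easiest to check mechanically. The sketch below is a minimal Python illustration, assuming a POSIX host where mode bits describe access; Windows ACLs need different tooling. The function names and the directory being scanned are hypothetical and are not taken from any AMA documentation.

```python
import os
import stat


def writable_by_others(path):
    """Return True if POSIX mode bits let group or other users write to path."""
    mode = os.stat(path).st_mode
    return bool(mode & (stat.S_IWGRP | stat.S_IWOTH))


def find_loose_paths(root):
    """Walk root and list directories and files that non-owners can write to.

    Only mode bits are inspected. Ownership, ACLs, and mount options are
    out of scope for this sketch.
    """
    loose = []
    for dirpath, _dirnames, filenames in os.walk(root):
        candidates = [dirpath] + [os.path.join(dirpath, name) for name in filenames]
        for candidate in candidates:
            try:
                if writable_by_others(candidate):
                    loose.append(candidate)
            except OSError:
                # Entry vanished or is a dangling symlink; skip it.
                continue
    return sorted(loose)
```

Running `find_loose_paths` against whichever install or state directory applies in a given environment returns the entries worth a closer look. An empty result does not prove the directory is safe, since ownership and ACLs are not examined.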

Why attackers care

An attacker does not need to start with full control of a system to benefit from EoP. A foothold in a standard user account, script execution context, or low-privileged service can be enough if the target process is trusted enough to cross the boundary for them. That is why privilege escalation bugs remain a favorite link in post-compromise chains.

Response priorities for admins

The first response step is straightforward: inventory where Azure Monitor Agent is installed and determine whether the environment uses automatic updates or manual rollout. Microsoft documents multiple deployment paths, so organizations should not rely on a single control plane assumption. If the environment includes Azure Arc or VM extensions, those paths should be checked separately.

Second, validate whether the affected endpoints are mission-critical. Not every workstation or server has the same value, and patch sequencing should reflect business priority. Machines that host security tooling, identity services, or remote management jump points should be treated as higher-risk because they can become stepping stones after compromise.

Third, watch for signs of abnormal service behavior around the agent itself. In an EoP scenario, unusual child processes, unexpected file writes, or service restart anomalies can hint that someone is probing the boundary. This is not proof of exploitation, but it is the sort of telemetry that can help teams identify suspicious activity early.
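
One way to look for unusual child processes is to compare process-creation events against a short allowlist of children the agent service is expected to spawn. The Python sketch below assumes those events have already been exported from whatever process telemetry is available. The `ProcessEvent` shape and every process name in it are hypothetical placeholders, not AMA's real binaries.

```python
from collections import namedtuple

# Hypothetical event shape: parent process name, child process name, user.
ProcessEvent = namedtuple("ProcessEvent", ["parent", "child", "user"])

# Placeholder names for illustration only; substitute values observed in
# your own environment.
AGENT_PARENT = "agent-service"
EXPECTED_CHILDREN = frozenset({"agent-collector", "agent-uploader"})


def unexpected_children(events, parent=AGENT_PARENT, expected=EXPECTED_CHILDREN):
    """Return events where the agent service spawned a child outside the allowlist."""
    return [
        event
        for event in events
        if event.parent == parent and event.child not in expected
    ]
```

A hit from this filter is a prompt for investigation rather than evidence of exploitation, and the allowlist will need tuning after agent updates change which helpers are launched.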

Practical triage order

- Identify every system running AMA.

- Confirm the installed version and deployment method.

- Prioritize internet-facing, remote-managed, or identity-adjacent systems.

- Verify whether update automation is enabled.

- Monitor for unexpected service and process behavior.
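
The triage order above can be sketched as a simple scoring pass over an inventory export. This is a minimal Python illustration under stated assumptions: the `Host` fields, the deployment-method labels, and the weights are all hypothetical and should be replaced with whatever an organization's asset inventory actually records.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    agent_version: str
    deploy_method: str  # hypothetical labels, e.g. "vm-extension", "arc-extension"
    internet_facing: bool = False
    remote_managed: bool = False
    identity_adjacent: bool = False
    auto_update: bool = True


def triage_score(host):
    """Higher score means patch sooner. Weights are illustrative, not prescriptive."""
    score = 0
    if host.internet_facing:
        score += 3
    if host.identity_adjacent:
        score += 3
    if host.remote_managed:
        score += 2
    if not host.auto_update:
        score += 1  # manual rollout means the fix will not arrive on its own
    return score


def triage_order(hosts):
    """Sort hosts by descending score, breaking ties by name for stable output."""
    return sorted(hosts, key=lambda host: (-triage_score(host), host.name))
```

Keeping the score as a plain function makes the weighting easy to review with infrastructure and security teams before a rollout.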

Why communication matters

Security and infrastructure teams should coordinate before pushing agent updates at scale. Monitoring agents can affect telemetry continuity, and poorly planned updates can create blind spots just when defenders need more visibility. A short maintenance window is better than a broken detection pipeline.

Strengths and Opportunities

Microsoft’s handling of AMA’s public documentation gives defenders several advantages. The product has clear deployment models, broad OS support, and a fairly well-defined role in the Azure monitoring ecosystem, which makes it easier to map exposure and confirm where the agent exists. That clarity is a real strength when a vulnerability hits a foundational service.

- Broad documentation makes asset inventory easier.

- Multiple deployment paths give admins flexibility.

- Integrated Azure Monitor and Sentinel workflows simplify central response.

- Support for client and server systems helps standardize operations.

- The agent’s strategic importance ensures management attention.

- Automated deployment channels can speed remediation.

- Microsoft’s history of cloud-service mitigations suggests the issue will likely receive active handling.

Risks and Concerns

The biggest concern is that monitoring software often has enough privilege to become a high-value escalation target. If attackers can turn a local flaw in the agent into a higher-trust execution path, they may gain access to logs, management interfaces, or even adjacent administrative tooling. That would create not only endpoint compromise but also monitoring integrity risk.

- Privilege escalation can lead to deeper host control.

- Monitoring trust can be undermined if the agent is abused.

- Hybrid estates can leave patch gaps.

- Client systems may be overlooked because they are not “servers.”

- Update delays can extend exposure windows.

- Security teams may underestimate the importance of telemetry software.

- A compromised agent can distort detection and response workflows.

What to watch next

The most important next step is Microsoft’s remediation guidance. Customers should watch for whether the company publishes a detailed advisory, updated package versions, or deployment guidance specific to Azure Arc, Windows clients, or VM extensions. If the fix involves more than a binary update, the rollout plan may matter as much as the patch itself.

Organizations should also watch for evidence of exploitability. A confirmed EoP does not always mean easy real-world exploitation, but it does mean attackers will study the issue carefully. If proof-of-concept details or detection guidance emerge, patch urgency should move even higher.

Finally, defenders should monitor their own environments for version drift and response readiness. The real difference between a manageable vulnerability and a crisis is often how quickly teams can identify affected systems and execute a controlled update across all deployment paths.

- Microsoft remediation notes and version guidance

- Any updated Azure Monitor Agent installer or extension package

- Detections for abnormal agent service behavior

- Evidence of exploit attempts or proof-of-concept research

- Inventory gaps across Azure, Arc, and Windows client systems
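
Checking for version drift comes down to comparing each host's installed agent version with the first fixed build, once Microsoft publishes one. The Python sketch below assumes dotted numeric version strings and an inventory already gathered into a dictionary. The helper names are hypothetical, and any version numbers used with it are placeholders rather than real AMA builds.

```python
def parse_version(text, width=4):
    """Turn a dotted version such as '1.2.3' into a zero-padded tuple of ints."""
    parts = [int(part) for part in text.strip().split(".")]
    return tuple(parts + [0] * (width - len(parts)))


def hosts_behind(inventory, minimum_fixed):
    """Return host names whose installed version is older than minimum_fixed.

    inventory maps host name -> installed version string.
    """
    floor = parse_version(minimum_fixed)
    return sorted(
        name
        for name, version in inventory.items()
        if parse_version(version) < floor
    )
```

Comparing integer tuples instead of raw strings avoids the common mistake of ranking "1.9" above "1.10".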

Source: MSRC Security Update Guide - Microsoft Security Response Center