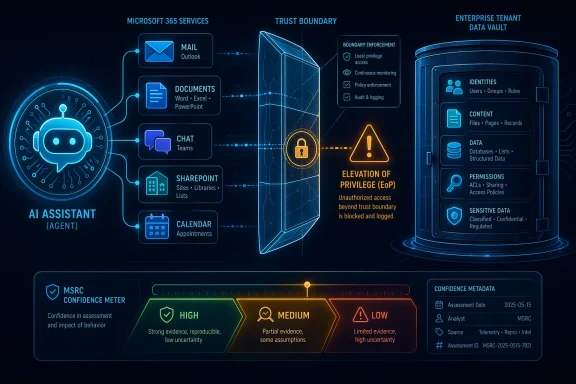

Microsoft’s CVE-2026-33102 advisory for Microsoft 365 Copilot is notable less for a dramatic technical disclosure than for the signal it sends about confidence, severity, and the growing scrutiny around AI-enabled productivity tools. Microsoft classifies the issue as an Elevation of Privilege vulnerability. The wording around the advisory’s confidence metric matters because it tells defenders how certain the vendor is that the flaw exists and how credible the known technical details are. In practical terms, this is the kind of entry that security teams cannot dismiss as speculative, even when the public explanation remains thin. The larger story is that Copilot has moved from “assistant” to infrastructure, and that shift inevitably turns trust boundaries into attack surfaces.

Background

Microsoft has spent the last several years turning Copilot into a core part of Microsoft 365 rather than a novelty layered on top of it. That expansion has made the product far more valuable to users, but it has also made it a more attractive target for attackers. When an AI assistant is allowed to read messages, summarize files, act across services, and interpret context from an enterprise tenant, the difference between a convenience feature and a privilege boundary becomes operationally important.

The company has also been building a more formal framework for AI vulnerability handling. Microsoft’s security response organization has described how it classifies vulnerabilities in AI systems, including categories related to confidence, data extraction, and unintended behavior, and it has extended bounty and disclosure work around Copilot over time. That history matters because Copilot bugs are not being judged with the same assumptions used for a classic memory corruption flaw in a Windows driver. They live in a world where prompt handling, service integration, tenant boundaries, and authorization logic all interact.

Microsoft’s public security guidance also emphasizes that its vulnerability descriptions are designed to communicate both impact and confidence. That is not just a bureaucratic nuance. In the Security Update Guide, confidence metadata becomes a proxy for how much defenders can trust the advisory when exploit details are incomplete. For a rapidly evolving product like Copilot, where features are still changing and the attack surface is expanding, that metadata is part of the story itself.

There is also a broader context: Microsoft has already had to respond to a series of AI-related disclosure and privilege issues across its ecosystem. Even when a specific Copilot CVE is not disclosed in full, the pattern is clear. New AI features create new workflows, and those workflows create new failure modes. The enterprise risk is not only that Copilot may leak information; it is that it may be able to cross a trust boundary in a way that grants more access than intended.

Why confidence matters in MSRC language

Microsoft’s confidence metric is not merely a label. In the Security Update Guide it corresponds to the CVSS Report Confidence temporal metric, which measures the degree of confidence that the vulnerability exists and the credibility of the known technical details. That makes it especially useful when public exploit proof is unavailable or when the advisory is intentionally restrained.

For defenders, that means the entry should be treated as real even if the root cause is not fully visible. It also means the advisory can be a useful prioritization signal when incident response teams have to decide what deserves immediate attention.

- A Confirmed rating means the vendor has acknowledged the flaw or that it can be reproduced.

- A Reasonable or Unknown rating indicates that the flaw has been reported but its root cause is not fully corroborated.

- Public detail scarcity does not mean low risk.

- Vendor acknowledgment is often enough to justify urgent remediation planning.
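The Security Update Guide presents the confidence rating as the CVSS Report Confidence metric. In a CVSS v3.1 vector string, that metric is encoded as `RC`, so it can be read programmatically. The sketch below is a minimal parser that assumes a CVSS v3.x vector string. The RC values and multipliers come from the FIRST CVSS v3.1 specification. The example vector is hypothetical and is not the published vector for CVE-2026-33102.

```python
from typing import Dict, Tuple

# CVSS v3.1 Report Confidence (RC) values and temporal multipliers
# (FIRST CVSS v3.1 specification). A missing RC is treated as Not Defined.
REPORT_CONFIDENCE: Dict[str, Tuple[str, float]] = {
    "X": ("Not Defined", 1.0),
    "C": ("Confirmed", 1.0),
    "R": ("Reasonable", 0.96),
    "U": ("Unknown", 0.92),
}


def parse_vector(vector: str) -> Dict[str, str]:
    """Split a CVSS v3.x vector string into a {metric: value} mapping."""
    prefix, _, body = vector.partition("/")
    if not prefix.startswith("CVSS:3."):
        raise ValueError(f"not a CVSS v3.x vector: {vector!r}")
    metrics: Dict[str, str] = {}
    for part in body.split("/"):
        key, sep, value = part.partition(":")
        if not sep or not key or not value:
            raise ValueError(f"malformed metric {part!r} in {vector!r}")
        metrics[key] = value
    return metrics


def report_confidence(vector: str) -> Tuple[str, float]:
    """Return the Report Confidence label and its temporal multiplier."""
    code = parse_vector(vector).get("RC", "X")
    if code not in REPORT_CONFIDENCE:
        raise ValueError(f"unknown RC value {code!r}")
    return REPORT_CONFIDENCE[code]


# Hypothetical vector for illustration only.
example = "CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:N/E:U/RL:O/RC:C"
print(report_confidence(example))  # ('Confirmed', 1.0)
```

RC only adjusts the temporal score. Whether working exploit code exists is a separate metric (`E`, Exploit Code Maturity), which is why confidence and exploitability should be read independently.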

Overview

CVE-2026-33102 sits in a fast-growing category of cloud and AI service vulnerabilities: flaws that may not involve code execution in the traditional sense, but can still change what a user, service, or attacker is allowed to do. For Microsoft 365 Copilot, that distinction is critical. An elevation of privilege issue in this context may allow an actor to influence what Copilot can access, how it responds, or which resources it can reach on behalf of a user or tenant.

That is why Copilot CVEs deserve special handling. A classical privilege escalation on a desktop often leads to local admin rights. A privilege escalation in a cloud productivity AI can lead to broader access to content, embedded permissions, connected services, or tenant data that the user did not intend to expose. The blast radius is often less visible but potentially much wider.

Microsoft has been gradually tightening its language around AI security because the product category itself has matured. Early Copilot coverage focused on productivity and user experience. More recent security work reflects the reality that AI systems participate in authorization, search, content generation, and cross-service orchestration. Once that happens, weaknesses can emerge in the seams between systems rather than inside a single component.

In that respect, the technical uncertainty around CVE-2026-33102 should not be confused with uncertainty about impact. Even if Microsoft has not published all the mechanics, an elevation-of-privilege advisory in Microsoft 365 Copilot tells defenders to think about trust chaining, implicit permissions, and the possibility that an attacker could cause the assistant to act with more authority than it should.

What the advisory class suggests

An EoP label in Copilot is not interchangeable with a Windows kernel privilege bug. It suggests a trust or authorization problem inside an AI-enabled service path.

That can involve:

- Improper permission handling

- Trust boundary confusion

- Authorization bypass

- Tenant-scoped access issues

- Cross-service privilege leakage
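The failure modes above share a common shape: a service retrieves or acts on data with more authority than the requesting user holds. The sketch below illustrates the defensive pattern usually called security trimming. Every retrieval is filtered by the tenant boundary and by the requesting user’s own permissions, not by whatever the service identity could reach. The `User` and `Document` types and the sample data are hypothetical. This is a general illustration, not a description of how Copilot is implemented.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class User:
    user_id: str
    tenant_id: str
    groups: FrozenSet[str] = frozenset()

    def principals(self) -> FrozenSet[str]:
        # A user is authorized through their own ID and their group memberships.
        return self.groups | {self.user_id}


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant_id: str
    allowed_principals: FrozenSet[str]  # users or groups granted read access


def can_read(user: User, doc: Document) -> bool:
    # Enforce the tenant boundary first, then the document's own access list.
    if user.tenant_id != doc.tenant_id:
        return False
    return bool(user.principals() & doc.allowed_principals)


def retrieve_for_user(user: User, candidates: List[Document]) -> List[Document]:
    """Return only the candidates the requesting user could open directly.

    A flawed variant that skipped can_read() and returned everything the
    service identity can reach would be one form of trust-boundary confusion.
    """
    return [doc for doc in candidates if can_read(user, doc)]


alice = User("alice", "contoso", frozenset({"finance"}))
docs = [
    Document("budget", "contoso", frozenset({"finance"})),
    Document("hr-review", "contoso", frozenset({"hr"})),
    Document("partner-plan", "fabrikam", frozenset({"finance"})),
]
print([d.doc_id for d in retrieve_for_user(alice, docs)])  # ['budget']
```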

The Copilot attack surface

Microsoft 365 Copilot is not one product in the old sense. It is a stitched-together experience that spans mail, documents, chats, calendar context, SharePoint content, and enterprise search. That breadth is part of the value proposition, but it also means the attack surface is distributed across many moving parts. A bug in one layer can have consequences in another.

This is one reason Copilot security has become such a high-profile topic. The assistant is designed to interpret context and return helpful results. But every function that broadens usefulness also increases the risk that an attacker can exploit the system’s trust in content, prompts, links, connectors, or background services. The more helpful the assistant becomes, the more disciplined its boundaries must be.

Microsoft’s own AI security guidance has acknowledged that indirect prompt injection and unintended actions matter when they create security impact. That framing is important because Copilot issues often do not look like classic software bugs at first glance. Instead, they may appear as an input handling error, a policy bypass, or a trust failure that only becomes obvious when chained with other capabilities.

The practical lesson is simple: defenders should stop thinking of Copilot as a thin chat layer on top of Office and start thinking of it as an authorization-aware orchestration platform. That shift changes how one evaluates a vulnerability, how one tests controls, and how one prepares incident response.

Why this matters to enterprises

Enterprise customers are the ones most exposed to privilege amplification because they connect Copilot to internal data, sensitive workspaces, and identity infrastructure. If a vulnerability changes what Copilot can see or do, the real exposure may be organizational rather than personal.

A consumer account can be impacted by Copilot defects, but an enterprise tenant may carry much higher-value information and more complex permission structures.

- Document libraries often contain sensitive business material.

- Email context can reveal high-value internal discussions.

- Teams and shared workspaces can widen the trust perimeter.

- Search and connectors can blend data sources in risky ways.

Why Microsoft’s confidence metric is a big deal

The confidence metric on a Microsoft Security Update Guide entry is easy to overlook, but it is one of the most useful parts of the advisory. It helps separate “here is a theoretical concern” from “here is a tracked issue Microsoft believes exists.” That distinction matters when public details are incomplete, because it allows defenders to make a risk decision based on vendor certainty rather than on speculation.

For CVE-2026-33102, the confidence framing suggests Microsoft believes the issue is credible enough to publish and track, even if the underlying exploit mechanics are not widely described. In other words, the advisory is not just informational; it is operational. That is exactly the kind of signal security teams should treat as actionable.

This also reflects a broader Microsoft pattern. The company has increasingly used the Security Update Guide to make vulnerability metadata machine-readable and more transparent, while still withholding sensitive exploit specifics that could aid abuse. That balancing act is understandable. But it means defenders must learn to read between the lines.
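MSRC exposes Security Update Guide data through its CVRF API, which is one example of this machine-readable metadata. The sketch below extracts the CVSS vectors for a single CVE from a CVRF JSON document. It assumes a layout in which each entry under `Vulnerability` has a `CVE` field and a `CVSSScoreSets` list whose items carry a `Vector` string. Verify those field names against a real document before relying on them. The inline sample and its CVE IDs are placeholders, not data for CVE-2026-33102.

```python
import json
from typing import List

# Placeholder CVRF-style document: the structure is assumed, the values are fake.
SAMPLE_CVRF = json.dumps({
    "Vulnerability": [
        {
            "CVE": "CVE-0000-0001",
            "CVSSScoreSets": [
                {"Vector": "CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N/RC:C"},
                {"Vector": "CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N/RC:C"},
            ],
        },
        {"CVE": "CVE-0000-0002", "CVSSScoreSets": []},
    ]
})


def cvss_vectors(cvrf_json: str, cve_id: str) -> List[str]:
    """Return the distinct CVSS vectors recorded for cve_id, in document order."""
    doc = json.loads(cvrf_json)
    vectors: List[str] = []
    for vuln in doc.get("Vulnerability", []):
        if vuln.get("CVE") != cve_id:
            continue
        # Score sets may repeat (for example, one per affected product), so dedupe.
        for score_set in vuln.get("CVSSScoreSets", []):
            vector = score_set.get("Vector")
            if vector and vector not in vectors:
                vectors.append(vector)
    return vectors


print(cvss_vectors(SAMPLE_CVRF, "CVE-0000-0001"))
```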

A well-run security team will use confidence signals alongside product scope, deployment exposure, privilege level, and control dependence. If Copilot sits near sensitive business processes, the advisory becomes more urgent than if the feature is disabled, lightly used, or restricted by policy.

Practical reading of the metric

The metric is best treated as a triage tool, not a complete risk rating.

- Confidence is about existence, not ease of exploitation, which CVSS captures separately as Exploit Code Maturity.

- High confidence does not automatically mean public exploitation.

- Low technical detail does not mean low operational urgency.

- Vendor certainty should influence patch priority.
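The combination of vendor confidence and tenant exposure described in this section can be written down as a simple, repeatable triage rule. The sketch below is hypothetical. The `Exposure` fields, the weights, and the thresholds are illustrative assumptions to tune against an organization’s own risk model. This is not a Microsoft scoring method.

```python
from dataclasses import dataclass
from enum import Enum


class Deployment(Enum):
    DISABLED = 0  # Copilot not licensed or turned off
    PILOT = 1     # lightly used by a limited group
    BROAD = 2     # enabled across the tenant


@dataclass(frozen=True)
class Exposure:
    deployment: Deployment
    reaches_sensitive_data: bool  # e.g. contracts, customer or regulated records
    restricted_by_policy: bool    # e.g. scoped sites, DLP, connector limits


# Illustrative weights only. Confidence raises priority because it reflects
# vendor certainty that the flaw exists, not how easy it is to exploit.
CONFIDENCE_WEIGHT = {"Confirmed": 3, "Reasonable": 2, "Not Defined": 2, "Unknown": 1}


def triage(confidence: str, exposure: Exposure) -> str:
    """Map report confidence plus tenant exposure to a response tier."""
    if exposure.deployment is Deployment.DISABLED:
        return "monitor"
    score = CONFIDENCE_WEIGHT.get(confidence, 2)
    score += exposure.deployment.value
    if exposure.reaches_sensitive_data:
        score += 2
    if exposure.restricted_by_policy:
        score -= 1
    if score >= 6:
        return "immediate"
    if score >= 4:
        return "scheduled"
    return "monitor"


broad = Exposure(Deployment.BROAD, reaches_sensitive_data=True, restricted_by_policy=False)
pilot = Exposure(Deployment.PILOT, reaches_sensitive_data=False, restricted_by_policy=True)
print(triage("Confirmed", broad))  # immediate
print(triage("Confirmed", pilot))  # monitor
```

Exploitation status, when Microsoft reports it, would be a separate input. The sketch leaves it out on purpose so that confidence and exploitability stay distinct.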

How an EoP issue in Copilot could manifest

Without public exploit details, it is important not to overstate any specific mechanism. Still, an elevation-of-privilege flaw in Microsoft 365 Copilot would plausibly involve a weakness in how the assistant validates context, scopes permissions, or handles trust across services. That could allow a less-privileged actor to influence what the assistant can retrieve or process on their behalf.

In AI products, privilege does not always look like a traditional admin token. It may appear as access to documents the user should not have seen, the ability to trigger actions in another service, or the ability to persuade an assistant to operate with broader context than intended. That is why AI privilege bugs often feel more like policy failures than code execution failures.

The security challenge is compounded by the fact that Copilot is designed to reduce friction. Frictionless access is good for productivity, but it also compresses the distance between user intent and system action. If a flaw can blur that distance, even slightly, the result can be a meaningful security gap.

There is a reason researchers and vendors keep circling around prompt injection, data exfiltration, and authorization confusion in AI systems. Those are not fringe theoretical issues; they are the natural hazards of giving a language model access to enterprise data and workflow tools. CVE-2026-33102 appears to belong to that broader class, even if its exact shape remains limited in public view.
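Prompt injection becomes a privilege problem when text inside retrieved content can change what the assistant is allowed to do. A common defensive pattern lets content propose actions but never grant them. The sketch below shows this under stated assumptions. Every action is authorized against the signed-in user’s own permissions. Side-effecting actions that originated from untrusted content also require explicit user confirmation. The `ProposedAction` type, the action names, and the `user_can` callback are hypothetical and do not describe Copilot’s internals.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet

# Hypothetical action names that only read data on the user's behalf.
READ_ONLY_ACTIONS: FrozenSet[str] = frozenset({"search", "read_file"})


@dataclass(frozen=True)
class ProposedAction:
    name: str                     # e.g. "read_file", "send_mail"
    target: str                   # resource the action would touch
    from_untrusted_content: bool  # proposed while processing an email, file, or web page


def authorize(
    action: ProposedAction,
    user_can: Callable[[str, str], bool],
    user_confirmed: bool = False,
) -> bool:
    """Decide whether the assistant may execute a proposed action."""
    # Authority always comes from the user's permissions, never from the text itself.
    if not user_can(action.name, action.target):
        return False
    # Side effects suggested by untrusted content need an explicit user decision.
    if action.from_untrusted_content and action.name not in READ_ONLY_ACTIONS:
        return user_confirmed
    return True


# Toy permission model: the user may send mail and read only their own files.
def user_can(name: str, target: str) -> bool:
    if name == "send_mail":
        return True
    return name in READ_ONLY_ACTIONS and target.startswith("/users/alice/")


injected = ProposedAction("send_mail", "attacker@example.com", from_untrusted_content=True)
print(authorize(injected, user_can))                       # False
print(authorize(injected, user_can, user_confirmed=True))  # True
```

Read-only actions still pass through `user_can`, so injected content cannot widen what is retrieved. Whether reading alone is safe depends on where the results can flow afterward, which this sketch does not model.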

Likely security questions defenders should ask

- Does Copilot have access to content beyond the user’s immediate needs?

- Are connectors and plugins constrained by least privilege?

- Can prompts or content influence authorization decisions?

- Are tenant-level boundaries consistently enforced?

- Are logs sufficient to detect suspicious assistant behavior?
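The connector question lends itself to a quick inventory check. The sketch below flags connectors or apps that hold tenant-wide Microsoft Graph permissions nobody has explicitly approved. The permission names are real Graph permissions that grant broad reach when held as application permissions; `Sites.Selected` is the narrower, per-site alternative. The inventory format, connector names, and approval list are hypothetical. In practice the inventory might come from an export of app permissions in Microsoft Entra ID or a similar source.

```python
from typing import Dict, List, Set

# Graph permissions that reach every site, file, or mailbox in the tenant
# when granted as application permissions.
BROAD_SCOPES: Set[str] = {
    "Sites.Read.All", "Sites.ReadWrite.All",
    "Files.Read.All", "Files.ReadWrite.All",
    "Mail.Read", "Mail.ReadWrite",
}


def over_scoped(
    inventory: Dict[str, List[str]],
    approved: Dict[str, Set[str]],
) -> Dict[str, List[str]]:
    """Return, per connector, the broad scopes it holds without explicit approval."""
    findings: Dict[str, List[str]] = {}
    for connector, scopes in inventory.items():
        allowed = approved.get(connector, set())
        flagged = sorted(s for s in scopes if s in BROAD_SCOPES and s not in allowed)
        if flagged:
            findings[connector] = flagged
    return findings


# Hypothetical inventory and approvals.
inventory = {
    "crm-sync": ["Sites.Read.All", "User.Read.All"],
    "wiki-bot": ["Sites.Selected"],
    "mail-archiver": ["Mail.Read"],
}
approved = {"mail-archiver": {"Mail.Read"}}
print(over_scoped(inventory, approved))  # {'crm-sync': ['Sites.Read.All']}
```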

Enterprise versus consumer risk

The enterprise impact of Copilot vulnerabilities is almost always more serious than the consumer impact, even when the CVE is technically the same. Business tenants typically include sensitive mailboxes, shared drives, collaboration tools, and data sources that are linked through policy. That makes privilege-related flaws more consequential because the system has more value to expose.

Consumer users still care about privacy and account safety, of course. But the damage profile is different. A consumer-facing Copilot issue may cause unwanted access to personal content or misleading actions, while an enterprise issue can expose contracts, internal strategy, customer records, or regulated information. The stakes rise sharply once business data enters the picture.

There is also an important governance difference. In enterprises, Copilot may be subject to DLP, retention, eDiscovery, and conditional access policies. A privilege flaw can undermine those assumptions if the assistant is able to surface or move data in ways that bypass normal user expectations. That is why a Copilot EoP issue should be reviewed not only by endpoint teams, but also by identity, compliance, and information governance teams.

For smaller businesses, the practical issue may be simpler but still serious: limited security staffing means delayed response. If Copilot is enabled broadly and tenants are loosely managed, a vulnerability can linger longer than it should. That is one reason Microsoft’s advisory quality matters so much; it helps administrators decide whether to take immediate action or stage a more measured response.

Enterprise control points that matter most

Organizations should focus on the layers that determine what Copilot can touch.

- Identity and access management

- Data loss prevention policies

- Tenant configuration and permissions

- Connector and plugin governance

- Audit logging and alerting

The broader Microsoft 365 security pattern

CVE-2026-33102 does not exist in isolation. It fits into a larger arc in which Microsoft 365 services are increasingly treated as security-critical infrastructure rather than ordinary SaaS applications. As the platform has absorbed more AI functionality, the security conversation has shifted from simple patching to control-plane integrity.

This is one reason Microsoft has been expanding its AI-specific vulnerability taxonomy and bounty incentives. The company knows that AI systems require different forms of scrutiny, especially when they are embedded in the productivity stack used by millions of organizations. Security bugs in that environment can be harder to explain, but they are no less real.

The broader implication for defenders is that traditional patch management is necessary but not sufficient. AI services need behavioral monitoring, policy review, prompt hygiene, and clear segregation of sensitive data. If Copilot is allowed to operate inside broad workspaces without sufficient boundaries, then even a patched system may still have a weak posture.

That is the uncomfortable truth of modern cloud security: the patch may close the hole, but the architecture still determines how badly the hole could have hurt in the first place.

How this compares to classic Office bugs

Classic Office CVEs often centered on file parsing, macro abuse, or memory corruption. Copilot vulnerabilities are different because they can revolve around context, trust, and authorization.

That makes them harder to detect, but potentially easier to chain into business-process abuse.

- Old model: open a malicious file, trigger code execution.

- New model: manipulate context, influence assistant behavior, cross a trust boundary.

- Old model: endpoint compromise.

- New model: workflow compromise.

What this means for patching and incident response

Because the public technical details are limited, the best response is disciplined triage rather than speculation. Security teams should verify whether Microsoft has published remediation guidance, review whether their tenant uses Copilot broadly, and confirm what data sources Copilot can access. If the service is tightly constrained, the immediate exposure may be lower; if not, the issue deserves urgent attention.

Incident response planning should also account for the possibility that Copilot behavior, rather than endpoint telemetry, becomes the clue that something is wrong. Unusual document retrieval, unexpected summaries, abnormal connector activity, or privilege boundary anomalies may matter more than traditional malware indicators. That is a subtle but important shift in the detection model.
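Behavior-based detection can start by summarizing each user’s Copilot activity from the Microsoft Purview audit log and comparing it with a baseline. The sketch below assumes the audit records have already been exported and parsed into dictionaries. It expects an `Operation` field, a `UserId` field, and a `CopilotEventData.AccessedResources` list whose items carry an `Id`. Check those field names against your own export. The threshold factor and floor are illustrative assumptions. A flag is a lead for investigation, not proof of exploitation.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Mapping, Set


def distinct_resources_per_user(records: Iterable[Mapping]) -> Dict[str, int]:
    """Count the distinct resources each user's Copilot interactions touched."""
    seen: Dict[str, Set[str]] = defaultdict(set)
    for record in records:
        if record.get("Operation") != "CopilotInteraction":
            continue
        event = record.get("CopilotEventData") or {}
        for resource in event.get("AccessedResources") or []:
            resource_id = resource.get("Id")
            if resource_id:
                seen[record.get("UserId", "<unknown>")].add(resource_id)
    return {user: len(ids) for user, ids in seen.items()}


def flag_unusual(
    counts: Mapping[str, int],
    baseline: Mapping[str, float],
    factor: float = 3.0,
    floor: int = 20,
) -> List[str]:
    """Flag users whose count exceeds both factor times baseline and the floor.

    Users without a baseline are compared against the floor alone.
    """
    flagged = []
    for user, count in counts.items():
        threshold = max(baseline.get(user, 0.0) * factor, floor)
        if count > threshold:
            flagged.append(user)
    return sorted(flagged)


# Synthetic records for illustration.
records = [
    {"Operation": "CopilotInteraction", "UserId": "alice@contoso.com",
     "CopilotEventData": {"AccessedResources": [{"Id": f"doc-{i}"} for i in range(25)]}},
    {"Operation": "CopilotInteraction", "UserId": "bob@contoso.com",
     "CopilotEventData": {"AccessedResources": [{"Id": f"doc-{i}"} for i in range(5)]}},
    {"Operation": "FileAccessed", "UserId": "carol@contoso.com"},
]
counts = distinct_resources_per_user(records)
print(flag_unusual(counts, {"alice@contoso.com": 4.0, "bob@contoso.com": 2.0}))
# ['alice@contoso.com']
```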

Organizations should also treat the advisory as a chance to revisit the principle of least privilege. If Copilot can reach too much data by default, then a privilege bug becomes more dangerous. If access is narrower, scoped, and logged, then the same bug has less room to operate. Architecture is still the first defense.
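Least-privilege reviews often start with content that is both sensitive and broadly shared, because that is the data an over-reaching assistant could surface most widely. The sketch below flags such items from a permissions inventory. The `Item` fields and the label names are hypothetical stand-ins for whatever a real export provides, and so is the `OrganizationLink` marker for organization-wide sharing links. `Everyone` and `Everyone except external users` are the broad SharePoint principals such reviews usually look for.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List

# Broad principals; "OrganizationLink" is a hypothetical marker for an
# organization-wide sharing link in the exported inventory.
BROAD_PRINCIPALS: FrozenSet[str] = frozenset(
    {"Everyone", "Everyone except external users", "OrganizationLink"}
)
SENSITIVE_LABELS: FrozenSet[str] = frozenset({"Confidential", "Highly Confidential"})


@dataclass(frozen=True)
class Item:
    path: str
    sensitivity: str        # sensitivity label name, e.g. "General"
    grants: FrozenSet[str]  # principals or link types with access


def overshared(items: Iterable[Item]) -> List[str]:
    """Return the paths of sensitive items that are also shared broadly."""
    return [
        item.path
        for item in items
        if item.sensitivity in SENSITIVE_LABELS and bool(item.grants & BROAD_PRINCIPALS)
    ]


items = [
    Item("/sites/legal/contract.docx", "Confidential",
         frozenset({"Everyone except external users"})),
    Item("/sites/legal/nda.docx", "Confidential", frozenset({"legal-team"})),
    Item("/sites/intranet/menu.docx", "General", frozenset({"Everyone"})),
]
print(overshared(items))  # ['/sites/legal/contract.docx']
```

Items this check flags are candidates for narrower permissions before, not after, the next privilege bug is disclosed.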

For security operations teams, the right approach is to map exposure, patch when available, and then validate control effectiveness afterward. That sequence sounds basic, but in the Copilot era it is the difference between assuming safety and proving it.

Sequential response checklist

- Confirm the advisory status in your Microsoft security tracking workflow.

- Identify which tenants and business units use Microsoft 365 Copilot.

- Review what data sources and connectors Copilot can access.

- Apply any Microsoft remediation or guidance as soon as it is available.

- Audit logs for suspicious Copilot interactions or privilege anomalies.

- Tighten least-privilege settings where possible.

Competitive and market implications

Microsoft’s handling of Copilot vulnerabilities has implications well beyond one CVE. It affects trust in AI productivity platforms generally, and it raises the bar for competitors building similar assistants into enterprise workflows. If a major vendor publishes AI-related privilege advisories with increasing regularity, the market will begin to assume that such systems need the same rigor once reserved for operating systems and identity services.

That could benefit competitors that emphasize governance and isolation. It could also accelerate demand for independent controls around AI usage, auditability, and connector management. In other words, the market may move from “Which copilot is smartest?” to “Which copilot is safest to embed in the enterprise?”

There is also a reputational angle. Microsoft has made Copilot central to its platform story, so every vulnerability becomes part of the broader narrative around trust and deployment readiness. The company can absorb that pressure, but it cannot ignore it. The more Copilot becomes embedded in daily work, the more security issues will shape procurement decisions and deployment scope.

That does not mean Copilot is uniquely unsafe. It means it is being treated as critical infrastructure, which is exactly what success at this scale tends to produce.

Market takeaways

- Security maturity is becoming a buying criterion for AI assistants.

- Governance tooling will matter more than flashy model features.

- Enterprise customers will demand tighter boundary enforcement.

- Vendors will be judged on response speed as much as innovation.

Strengths and Opportunities

Microsoft’s publication of a Copilot EoP entry shows that the company is doing the right thing by acknowledging AI-specific risk and exposing useful confidence metadata to defenders. The upside is that organizations now have a clearer way to prioritize and harden their environments before an issue becomes a breach.

- Vendor acknowledgment improves trust in the advisory process.

- Confidence metadata helps teams prioritize with more nuance.

- AI-specific classification reflects a more mature security model.

- Copilot hardening can reduce exposure to future flaws.

- Least-privilege redesign may improve overall tenant hygiene.

- Audit and logging improvements can pay dividends beyond this CVE.

- Security awareness around AI workflows is finally catching up.

Risks and Concerns

The main concern is that AI privilege issues are still poorly understood by many organizations, which increases the chance of slow response or misconfiguration. Copilot’s value depends on wide access to data and context, but that same breadth makes privilege mistakes more damaging than they first appear.

- Incomplete public details can lead to false confidence.

- Broad tenant permissions may magnify impact.

- Weak connector governance can enlarge the trust boundary.

- Logging gaps may obscure abuse or anomalous behavior.

- Overreliance on AI summaries can hide dangerous access patterns.

- Patch-only thinking may miss architectural weaknesses.

- Slow governance changes could leave exposure in place after remediation.

Looking Ahead

The next important question is whether Microsoft expands the advisory with more technical detail, remediation guidance, or mitigations that help defenders understand the exact scope of CVE-2026-33102. Even if the company keeps the root cause private for security reasons, the direction of travel is already clear: Copilot is now part of the enterprise security conversation, and it will stay there.

The other issue to watch is whether this CVE becomes part of a larger pattern of Copilot trust-boundary bugs. If more AI-related privilege or disclosure issues emerge, enterprises may begin to treat Copilot deployment the same way they treat highly privileged administrative systems: useful, but only after careful segmentation and governance.

- Microsoft guidance updates

- Tenant exposure reviews

- New Copilot-related CVEs

- Changes to AI security classification

- Enterprise policy tightening

Source: MSRC Security Update Guide - Microsoft Security Response Center