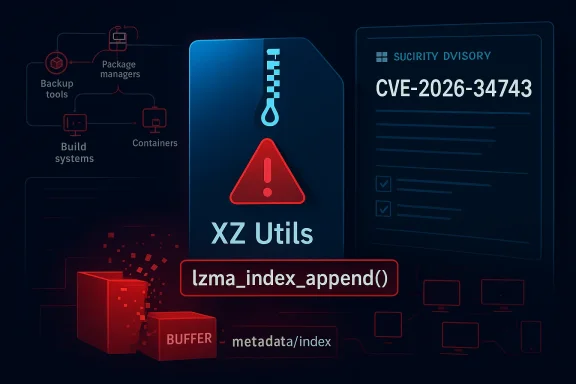

CVE-2026-34743 is a buffer overflow in XZ Utils’ lzma_index_append(), a detail that matters because XZ sits deep in the software supply chain and is embedded, directly or indirectly, in far more systems than many administrators realize. Microsoft has now surfaced the issue in its vulnerability guidance, putting the flaw into the same operational bucket that security teams use for patch planning, exposure mapping, and asset triage. Even when a bug appears in a compression library, the blast radius can extend into backup tools, package managers, build systems, firmware workflows, and server-side utilities that rely on liblzma. That makes this more than a single-library bug; it is a reminder that low-level infrastructure code can become a high-value attack surface.

Background


XZ Utils is one of those quiet dependencies that rarely gets top billing until something goes wrong. It provides the xz command-line tool and the liblzma library for the widely used .xz compression format. Many applications link against liblzma for decompression, indexing, and archive handling. Because it lives low in the stack, XZ often gets treated as a utility rather than as a security-critical component, but that distinction is increasingly outdated.

That old assumption was already weakened in 2024. The discovery of a deliberately planted backdoor in XZ Utils 5.6.0 and 5.6.1 (CVE-2024-3094) drew broad attention to XZ and pushed the project into the center of supply-chain security discussions. Since then, security teams have become more alert to the fact that compression code is not “just plumbing”. It can sit on the path of package verification, update delivery, log handling, file indexing, and even remote content processing.

Microsoft’s Security Update Guide has also become more explicit over the last few years about publishing vulnerability details for open-source components that affect Microsoft products or ecosystems. Microsoft says it now uses industry standards like CWE and CSAF to improve transparency and machine-readability of CVE data, which reflects a broader push toward faster coordination and cleaner downstream automation. That matters because a CVE like this one is not just read by humans; it is ingested by scanners, ticketing systems, and risk platforms that decide where teams spend their time.

The key phrase here is buffer overflow. That alone does not prove remote code execution, but it does signal memory corruption risk, and memory corruption in a parsing or indexing function is something security teams treat seriously. Functions such as lzma_index_append() are especially interesting because they deal with structured data that may come from untrusted or semi-trusted files, which is exactly where attackers like to hide malicious payloads.

In practical terms, a flaw in XZ Utils can affect both consumer and enterprise environments. Consumers may encounter it indirectly through desktop tools or software installers. Enterprises may care more about whether their Linux distributions, appliances, build agents, container images, or Microsoft-integrated products carry a vulnerable version of the library. The same flaw can therefore be a quiet nuisance in one setting and a serious incident trigger in another.

What Microsoft’s Disclosure Means

Microsoft’s inclusion of CVE-2026-34743 in its update guidance tells defenders that the issue has crossed from code-level concern into actionable security inventory. In Microsoft’s model, that typically means the CVE is relevant enough to affect customers, downstream products, or associated services, even when the vulnerable code originated outside Microsoft. The important part is not just the CVE number; it is the operational signal that this is now something defenders should track.

Why Security Update Guide entries matter

A Security Update Guide entry is more than a notice. It is often the point at which a vulnerability becomes visible to enterprise tooling, patch management, and compliance workflows. Once that happens, administrators can begin mapping exposure against their fleets and prioritize remediation based on business impact rather than rumor.

For XZ-related issues, that matters because the same library can appear in multiple packaging ecosystems with different versioning and backport behavior. An enterprise may not even realize a vulnerable library is present because it is embedded in an application bundle or a vendor-maintained image. The MSRC disclosure therefore has value even before a vendor-specific patch arrives.

A few practical implications stand out:

- Inventory tooling should look beyond OS packages and scan application bundles.

- SBOMs can help identify where liblzma is present.

- Vendor advisories may lag behind the MSRC entry, so teams should monitor both.

- Container images may need rebuilding rather than simple patching.

- Appliance firmware could require vendor-supplied updates, not local fixes.
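One way to act on the SBOM point is a small script that searches a CycloneDX SBOM in JSON form for components that look like XZ Utils or liblzma. The sketch below also walks nested components inside application bundles. The name list and the purl heuristics are assumptions. They will produce false positives, such as unrelated pure-language xz implementations, and false negatives, such as renamed copies. Treat every match as a lead for review, not a confirmed exposure.

```python
import json
from typing import Iterator, List, Tuple

# Names that often identify XZ Utils / liblzma in SBOMs. This list is an
# assumption: naming differs between distributions, ecosystems and SBOM tools.
XZ_NAMES = {"xz", "xz-utils", "xz-libs", "liblzma", "liblzma5"}


def load_sbom(path: str) -> dict:
    """Load a CycloneDX SBOM serialized as JSON."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def iter_components(components: list) -> Iterator[dict]:
    """Yield every component, including nested sub-components."""
    for comp in components:
        yield comp
        # CycloneDX lets a component contain its own "components" list.
        yield from iter_components(comp.get("components", []))


def find_xz_components(sbom: dict) -> List[Tuple[str, str, str]]:
    """Return (name, version, purl) for components that look like XZ/liblzma.

    Matches are candidates for human review, not confirmed exposure.
    """
    hits = []
    for comp in iter_components(sbom.get("components", [])):
        name = comp.get("name", "")
        purl = comp.get("purl", "")
        if name.lower() in XZ_NAMES or "lzma" in purl.lower() or "/xz" in purl.lower():
            hits.append((name, comp.get("version", ""), purl))
    return hits
```

For a hypothetical image SBOM file, `find_xz_components(load_sbom("image.cdx.json"))` would list the candidate components with their versions and package URLs.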

Background on the Vulnerability Class

Buffer overflows remain one of the oldest bug classes in software security, but they are still dangerous because they often provide the ingredients for exploitation: memory corruption, unpredictable behavior, and sometimes control-flow hijacking. In parsing code, the risk increases because attackers can shape the input. In indexing code, the risk can be subtler, but it is still serious if input size or structure assumptions break down.

Why lzma_index_append() is a red flag

In liblzma’s public API, lzma_index_append() adds a record describing one Block (its Unpadded Size and Uncompressed Size) to an lzma_index. The lzma_index is liblzma’s in-memory representation of the Index that an .xz file uses to record the sizes of its Blocks. Functions that grow structures, reallocate memory, or copy chunks of input are classic candidates for boundary mistakes. If size calculations go wrong, a write can exceed the target buffer and corrupt adjacent memory.

That does not automatically mean exploitation is easy. It does mean the bug sits in a part of the codebase where memory safety and trust boundaries matter. If the overflow can be triggered by crafted input, the security relevance climbs quickly. If it is only reachable in narrow local workflows, the risk profile is lower but still worth tracking.
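The kind of boundary check at stake fits in a few lines. The class below is a hypothetical toy, not liblzma’s lzma_index. It stores fixed-size records in a byte buffer and shows the two checks an append routine has to get right in C: validate the incoming values, and grow the buffer before writing past its end. Python would not corrupt memory on its own, so the explicit checks here stand in for what C code must do by hand.

```python
class RecordBuffer:
    """Toy growable array of (unpadded_size, uncompressed_size) records.

    Hypothetical illustration only: this is NOT liblzma's lzma_index. It models
    the checks an append routine written in C must get right.
    """

    RECORD_BYTES = 16      # two 8-byte little-endian fields per record
    MAX_FIELD = 2**63 - 1  # .xz variable-length integers are limited to 63 bits

    def __init__(self, capacity_records: int = 4) -> None:
        self.capacity = max(1, capacity_records)
        self.count = 0
        self.storage = bytearray(self.capacity * self.RECORD_BYTES)

    def _grow(self) -> None:
        # Double the capacity. In C, this multiplication and the matching
        # realloc() size are where an unchecked overflow yields a short buffer.
        new_capacity = self.capacity * 2
        self.storage.extend(bytes((new_capacity - self.capacity) * self.RECORD_BYTES))
        self.capacity = new_capacity

    def append(self, unpadded_size: int, uncompressed_size: int) -> None:
        # Validate values first, so bad input never reaches the write below.
        for value in (unpadded_size, uncompressed_size):
            if not 0 <= value <= self.MAX_FIELD:
                raise ValueError("record field out of range")
        # The bounds check: grow before writing past the last slot.
        if self.count >= self.capacity:
            self._grow()
        offset = self.count * self.RECORD_BYTES
        if offset + self.RECORD_BYTES > len(self.storage):
            raise RuntimeError("internal error: write would overflow storage")
        self.storage[offset:offset + 8] = unpadded_size.to_bytes(8, "little")
        self.storage[offset + 8:offset + 16] = uncompressed_size.to_bytes(8, "little")
        self.count += 1
```

The order of operations is the point of the design: every input check runs before the write, so a rejected record never touches the buffer.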

The broader lesson is that auxiliary library code often deserves the same attention as headline-grabbing network daemons. Attackers do not care whether a bug lives in “core” code or “supporting” code; they care whether it can be reached reliably. Compression and archive handling are prime examples of software that gets exercised by automated systems, sometimes at scale and often without direct user awareness.

Supply-Chain Implications

XZ Utils is a supply-chain story because its vulnerability surface is amplified by reuse. One library can feed dozens of products, and one flawed function can therefore become a shared liability across very different environments. That is the reason security teams treat open-source component bugs as enterprise events, not just upstream project issues.

Enterprise packaging and downstream risk

Enterprises rarely consume XZ directly in the form of source code. They consume it through distributions, container bases, language runtimes, appliance firmware, and vendor applications. That means remediation may depend on multiple layers of ownership, and the path to a fix can be slower than administrators want.

For this reason, the best response is usually layered:

- Identify whether liblzma/XZ Utils is present.

- Determine whether the vulnerable code path is actually reachable.

- Check whether your vendor has shipped a patched build.

- Rebuild or redeploy affected images where necessary.

- Verify with vulnerability management tools after the fix.
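Reachability is hard to prove from the outside. On Linux, a cheap first filter is to find which running processes currently have liblzma mapped into memory. The sketch below reads /proc/<pid>/maps, a standard Linux procfs file, and assumes a Linux host. A process with the library loaded is only a candidate. It still has to be shown that untrusted data reaches lzma_index_append() in that process.

```python
import os
from pathlib import Path
from typing import Dict


def maps_mention(maps_text: str, lib_fragment: str = "liblzma") -> bool:
    """True if any mapped file in a /proc/<pid>/maps dump has lib_fragment in its name."""
    for line in maps_text.splitlines():
        # Fields: address perms offset dev inode [pathname]; pathname is optional.
        parts = line.split(maxsplit=5)
        if len(parts) == 6 and lib_fragment in os.path.basename(parts[5]):
            return True
    return False


def processes_with_library(lib_fragment: str = "liblzma", proc_root: str = "/proc") -> Dict[int, str]:
    """Map PID -> command name for processes that currently have the library mapped."""
    found: Dict[int, str] = {}
    root = Path(proc_root)
    if not root.is_dir():
        return found  # not Linux, or procfs is not mounted here
    for entry in root.iterdir():
        if not entry.name.isdigit():
            continue
        try:
            maps_text = (entry / "maps").read_text(errors="replace")
            comm = (entry / "comm").read_text(errors="replace").strip()
        except OSError:
            continue  # the process exited, or we may not read its maps
        if maps_mention(maps_text, lib_fragment):
            found[int(entry.name)] = comm
    return found
```

Processes owned by other users are silently skipped unless the script runs with sufficient privileges, so an unprivileged run understates exposure.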

Consumer exposure is different

Consumers are less likely to manage XZ directly, but they can still be exposed through bundled software and updates. A desktop utility, file manager, or installer might ship with a vulnerable dependency. Most consumer risk is indirect, which makes it harder to spot and easier to ignore until a vendor publishes a fix.

The practical difference is that consumers usually wait for the app or OS vendor to act, while enterprises can often audit, isolate, or mitigate faster. That said, consumers should still pay attention to update prompts, especially when they reference compression, archive handling, or file parsing components.

Technical Risk Profile

A buffer overflow in a library function is not the same as a confirmed exploit chain, but it is enough to demand scrutiny. The technical questions are straightforward: what kind of overwrite occurs, what triggers it, and whether the bug is reachable from untrusted input. Those questions determine whether the issue is a local crash, a denial-of-service condition, or a possible code execution vector.

What can go wrong

When a buffer overflow occurs, the immediate effect can be a crash. In more severe cases, an attacker may be able to corrupt control data, alter program flow, or influence adjacent structures in memory. The exact impact depends on compiler defenses, memory allocator behavior, and whether the vulnerable process has high privileges.

In modern environments, mitigations like ASLR, stack canaries, Control Flow Guard, and hardened allocators can reduce exploitability. But mitigation is not immunity. Security teams know that many exploitation chains start with a seemingly modest memory corruption bug and then rely on a second weakness to finish the job.

A few risk indicators matter most:

- Input-driven code paths are more dangerous than internal-only ones.

- Privileged processes make impact worse.

- Server-side reachability increases exploit value.

- Repeated parsing can raise reliability for attackers.

- Memory allocator hardening may slow, but not eliminate, abuse.
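The indicators above can be combined into a rough ordering for triage. The sketch below is purely illustrative. The Exposure fields and the weights are hypothetical choices, not a published scoring model, and the score is only meaningful for ranking assets against each other.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    """Hypothetical per-asset facts gathered during triage."""
    input_driven: bool        # parses untrusted or attacker-influenced .xz data
    privileged: bool          # runs as root/SYSTEM or with broad access
    server_side: bool         # reachable over the network without a user
    repeated_parsing: bool    # an attacker can retry, e.g. automated pipelines
    hardened_allocator: bool  # hardened allocator / exploit mitigations enabled


# Illustrative weights only; tune them to your own risk model.
WEIGHTS = {"input_driven": 4, "privileged": 3, "server_side": 3, "repeated_parsing": 2}


def triage_score(e: Exposure) -> int:
    """Higher means look at this asset sooner."""
    score = sum(weight for field, weight in WEIGHTS.items() if getattr(e, field))
    # Hardening slows abuse but does not eliminate it, so it only discounts slightly.
    if e.hardened_allocator and score > 0:
        score -= 1
    return score
```

Given a list of Exposure records, `sorted(assets, key=triage_score, reverse=True)` yields a review order. Hardening is deliberately a small discount rather than a veto, matching the point that mitigation slows abuse without removing it.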

Why indexing code is tricky

Indexing code often assumes the data it processes is well-formed. That assumption is dangerous when the input comes from archives, update bundles, or files received over the network. A malformed index can force the parser into edge cases that ordinary testing misses.

This is why memory safety bugs in codecs and archive libraries remain perennial targets. They live in a high-reuse environment, they process attacker-influenced data, and they often run in automated workflows. Even a bug that seems localized can become security-significant once deployed across millions of machines.
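Index sizes in an .xz file are a concrete example of fields that must not be trusted. The .xz file format specification stores them as variable-length integers: seven value bits per byte, the high bit marking that another byte follows, and at most nine bytes (63 bits). The sketch below decodes one such integer defensively, following the rules of the reference decoder in the specification. It is an illustration in Python, not liblzma’s code, and the function and exception names are hypothetical.

```python
from typing import Tuple

MAX_VLI_BYTES = 9  # the .xz spec caps variable-length integers at 9 bytes (63 bits)


class MalformedIndex(ValueError):
    """Raised when index bytes do not form a valid variable-length integer."""


def decode_vli(buf: bytes, pos: int = 0) -> Tuple[int, int]:
    """Decode one .xz variable-length integer starting at buf[pos].

    Returns (value, new_pos). Raises MalformedIndex instead of reading past
    the end of the buffer or accepting an over-long encoding.
    """
    value = 0
    for i in range(MAX_VLI_BYTES):
        if pos + i >= len(buf):
            raise MalformedIndex("truncated integer")
        byte = buf[pos + i]
        # A zero byte after a continuation bit would be a non-minimal encoding.
        if i > 0 and byte == 0x00:
            raise MalformedIndex("non-minimal encoding")
        value |= (byte & 0x7F) << (7 * i)
        if not byte & 0x80:  # high bit clear: this was the last byte
            return value, pos + i + 1
    raise MalformedIndex("integer longer than 9 bytes")
```

A caller parsing an Index record would decode the two sizes in sequence, with `unpadded, pos = decode_vli(data, pos)` followed by `uncompressed, pos = decode_vli(data, pos)`, and range-check both before storing them.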

Microsoft’s CVE Process in Context

Microsoft has spent the last several years making its vulnerability reporting more structured and more interoperable. Its updates about CWE adoption and machine-readable CSAF files are not just bureaucracy; they are part of a larger effort to help customers and partners process vulnerability information faster. That means CVE entries are increasingly part of a machine-to-machine workflow rather than a static advisory page.

Why that matters for defenders

For defenders, standardization makes it easier to automate detection, ticket creation, and remediation tracking. It also means more tools can ingest the same canonical data and produce consistent results. In a world where security teams are overwhelmed by alerts, that consistency matters a great deal.

The downside is that automation can create a false sense of completeness. A scanner may flag or miss a component based on package metadata, while the real risk sits in an embedded dependency or a backported vendor build. Human validation still matters, especially for open-source libraries that appear in multiple package managers and image layers.
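Because Microsoft publishes vulnerability data as CSAF documents, part of this ingestion can be scripted. The sketch below assumes a CSAF 2.0 document already parsed from JSON and collects the product_status lists for one CVE. The status keys are the categories defined by CSAF 2.0. Any sample data is invented, and mapping product IDs back to real product names through the document’s product_tree is left out.

```python
from typing import Dict, List

# Product-status categories defined by CSAF 2.0 (vulnerabilities[].product_status).
STATUS_KEYS = (
    "known_affected", "first_affected", "last_affected",
    "fixed", "first_fixed", "recommended",
    "known_not_affected", "under_investigation",
)


def product_status_for_cve(csaf: dict, cve_id: str) -> Dict[str, List[str]]:
    """Return {status: [product_id, ...]} for one CVE in a parsed CSAF document."""
    result: Dict[str, List[str]] = {}
    for vuln in csaf.get("vulnerabilities", []):
        if vuln.get("cve", "").upper() != cve_id.upper():
            continue
        statuses = vuln.get("product_status", {})
        for key in STATUS_KEYS:
            ids = statuses.get(key, [])
            if ids:
                result.setdefault(key, []).extend(ids)
    return result
```

An empty result means only that this document says nothing about the CVE. It is not evidence that an asset is unaffected, which is exactly the false sense of completeness to avoid.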

Microsoft’s approach also reflects a broader industry shift. Vendors increasingly recognize that they need to disclose not just the existence of vulnerabilities, but also their root cause and the affected ecosystems. This helps customers understand whether a bug is a one-off patch or part of a recurring weakness class that should influence development policy.

The role of metadata

Metadata may look boring, but in operational security it is often the difference between fast triage and endless manual review. A clear CVE title, root-cause classification, and affected-component mapping can save hours. In this case, the label “buffer overflow in lzma_index_append()” gives defenders a concrete starting point for searching package manifests, build logs, and vendor advisories.

That said, metadata can only go so far. If your environment uses vendor-patched or renamed packages, the CVE entry may understate or overstate actual exposure. That is why asset-level verification remains essential.

Open Source and the Compression Stack

The compression stack sits at an awkward intersection of performance engineering and security engineering. Compression code needs to be fast, memory-efficient, and standards-compliant, but it also has to handle malformed inputs gracefully. That combination makes it a natural breeding ground for overflows, out-of-bounds reads, and parser bugs.

Why compression libraries attract bugs

Compression formats are complex because they carry both data and metadata. That means they expose lots of size calculations, offsets, indexes, and state transitions. Any mismatch between expected and actual structure can send the program down a bad path.

XZ Utils is particularly important because it is widely embedded and often invisible to end users. Many people know they use a browser or an editor, but few know they are also using compression code buried in package managers, updater tools, or telemetry components. That invisibility makes risk communication harder.

Historically, security incidents in compression and archive code have had two recurring traits: they are easy to underestimate, and they are expensive to clean up. Once a library becomes a shared dependency, the fix has to travel through every product that bundles it. That can take weeks or months, especially in enterprise and appliance environments.

Common failure modes in this area

The most common issues tend to involve:

- Integer overflows in size calculations.

- Out-of-bounds writes during copy or append operations.

- Out-of-bounds reads that leak information.

- Use-after-free conditions in parser state machines.

- Denial-of-service crashes from malformed content.
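The first failure mode on that list is easiest to see in miniature. In C, unsigned arithmetic wraps silently, so `count * elem_size` can come out far smaller than intended, and a later copy that trusts `count` then writes past the allocation. The sketch below simulates a 32-bit size_t in Python with a bit mask. The names are hypothetical, and the numbers are chosen only to show the wrap.

```python
SIZE_MAX_32 = 2**32 - 1  # models a 32-bit size_t, as on 32-bit platforms


def alloc_size_unchecked(count: int, elem_size: int) -> int:
    """What `count * elem_size` yields in 32-bit unsigned C arithmetic (it wraps)."""
    return (count * elem_size) & SIZE_MAX_32


def alloc_size_checked(count: int, elem_size: int) -> int:
    """Refuse the multiplication if the result would not fit, instead of wrapping."""
    # Division-based pre-check: the same pattern C code uses before malloc().
    if elem_size != 0 and count > SIZE_MAX_32 // elem_size:
        raise OverflowError("allocation size overflows size_t")
    return count * elem_size
```

With 2**28 records of 16 bytes each, the unchecked version returns an allocation size of 0, while the checked version raises OverflowError. Small, ordinary inputs such as 1000 records of 16 bytes give 16000 either way, which is why this class of bug survives everyday testing.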

Operational Response for Enterprises

Enterprise response should be disciplined, not frantic. The goal is to discover whether the vulnerable component exists in your environment, whether the vulnerable path is reachable, and whether vendor guidance already offers a remediation. Security teams that move methodically will usually get better results than teams that rush into broad, disruptive changes.

A practical response plan

- Search inventory and SBOM data for XZ Utils and liblzma.

- Map exposure to internet-facing, privileged, or automation-heavy systems.

- Check vendor advisories for patched package versions or backports.

- Rebuild affected images rather than only patching live hosts.

- Retest and rescan after updates to confirm the issue is gone.
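For the inventory step, a filename sweep catches copies that the OS package database does not know about. It can run over unpacked container layers, application bundles, or install trees. This is a minimal sketch, and the filename patterns are assumptions. It will miss statically linked or renamed copies, which is where SBOM data and vendor statements still matter.

```python
import fnmatch
import os
from typing import List

# Filename patterns for liblzma libraries and the xz tool on Linux, macOS and
# Windows. The list is an assumption; vendors sometimes rename bundled copies.
PATTERNS = ("liblzma.so*", "liblzma*.dylib", "liblzma*.dll", "liblzma.a", "xz", "xz.exe")


def find_bundled_xz(root: str) -> List[str]:
    """Walk an unpacked image, app bundle or install tree for XZ artifacts."""
    hits = []
    # os.walk does not follow directory symlinks by default, which avoids loops.
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if any(fnmatch.fnmatch(name, pat) for pat in PATTERNS):
                hits.append(os.path.join(dirpath, name))
    return sorted(hits)
```

Running the same sweep before and after an image rebuild gives a quick check that the rebuilt image no longer carries an old bundled copy.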

Enterprises should also consider whether the library is used in build pipelines. A vulnerable component in CI/CD infrastructure can be especially risky because it may process large volumes of untrusted artifacts. If a build agent or artifact scanner uses XZ, the operational priority rises quickly.

Patch management realities

Patch cadence is not always under direct control. Some systems require vendor release cycles, signing, or certification before updates can be deployed. Others live inside appliances that only update through a support contract. For those environments, compensating controls may be necessary while waiting for a fix.

Those compensating controls could include restricted file handling, tighter sandboxing, temporary removal of risky workflows, or isolation of systems that parse untrusted archives. None of these is as good as a patch, but risk reduction is better than waiting passively.
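One way to isolate archive parsing while a patch is pending is to move decompression into a short-lived child process with resource limits. A crash or runaway parse then takes down only the child. The sketch below runs Python’s lzma module, which is a binding to liblzma, in a subprocess. CPU and address-space limits come from the POSIX resource module. This is process isolation only, not a full sandbox such as seccomp or containers, and the limit values are arbitrary assumptions.

```python
import subprocess
import sys
from typing import Optional

try:
    import resource  # POSIX only
except ImportError:  # e.g. on Windows: run the child without rlimits
    resource = None

# Child program: decode .xz bytes from stdin and write the result to stdout.
_CHILD = (
    "import lzma, sys; "
    "sys.stdout.buffer.write(lzma.decompress(sys.stdin.buffer.read(), format=lzma.FORMAT_XZ))"
)


def _cap(kind: int, value: int) -> None:
    """Set a resource limit to `value`, never above the existing hard limit."""
    _soft, hard = resource.getrlimit(kind)
    if hard != resource.RLIM_INFINITY:
        value = min(value, hard)
    resource.setrlimit(kind, (value, value))


def _limit_child() -> None:
    """Runs in the child just before exec."""
    _cap(resource.RLIMIT_CPU, 10)      # CPU seconds
    _cap(resource.RLIMIT_AS, 1 << 30)  # 1 GiB of address space


def decompress_isolated(data: bytes, timeout: float = 30.0) -> Optional[bytes]:
    """Return decompressed bytes, or None if the child failed, crashed or timed out."""
    try:
        proc = subprocess.run(
            [sys.executable, "-I", "-c", _CHILD],
            input=data,
            capture_output=True,
            timeout=timeout,
            # preexec_fn is not safe in multi-threaded parents; see the subprocess docs.
            preexec_fn=_limit_child if resource is not None else None,
        )
    except subprocess.TimeoutExpired:
        return None
    # Nonzero covers Python errors; a negative code means a signal such as SIGSEGV.
    return proc.stdout if proc.returncode == 0 else None
```

Returning None for every failure mode keeps the caller simple: it treats the file as rejected without needing to know whether the child raised an error, crashed, or hit a limit.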

Consumer and Developer Impacts

Consumers usually experience vulnerabilities like this as hidden friction. They may never see the library name, but they can still be affected when an application crashes, refuses to open a file, or receives an emergency update. Developers, by contrast, have the opportunity to respond earlier because they can inspect dependencies and adjust their build chains.

What developers should do

Developers using XZ Utils, directly or indirectly, should verify the version they ship and the version they build against. If the library is bundled, they should rebuild with a fixed release as soon as one is available. If the dependency is transitive, they should trace it through package manifests and lockfiles.

Developers should also pay attention to input validation patterns around archive parsing. The best bug fix is usually upstream, but the second-best defense is to reduce the amount of malformed data a parser is asked to process. Defense in depth still matters, especially for code that handles external files.
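To see which liblzma a program actually loads at runtime, a developer can ask the library itself, since liblzma exports lzma_version_string(). The sketch below finds the library through Python’s ctypes loader search. Treat the result as a starting point, not a verdict. A distribution build may carry backported fixes under an older upstream version string, and bundled or statically linked copies will not be found this way.

```python
import ctypes
import ctypes.util
from typing import Optional


def runtime_liblzma_version() -> Optional[str]:
    """Version string of the liblzma the dynamic loader finds, or None."""
    name = ctypes.util.find_library("lzma")
    if name is None:
        return None  # not on the loader path; it may still be bundled or static
    try:
        lib = ctypes.CDLL(name)
    except OSError:
        return None
    # lzma_version_string() is declared in liblzma's public header lzma/version.h.
    lib.lzma_version_string.restype = ctypes.c_char_p
    lib.lzma_version_string.argtypes = []
    raw = lib.lzma_version_string()
    return raw.decode("ascii") if raw else None
```

The build-time side of the check is different: the version a project compiles against is whatever lzma.h the build picked up, so it belongs in build logs or the SBOM rather than in a runtime query.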

A short developer checklist:

- Confirm whether XZ/liblzma is direct or transitive.

- Identify every place the library is packaged.

- Rebuild and redeploy artifacts with the patched component.

- Run regression tests against malformed archive samples.

- Review any custom wrappers around index or metadata functions.
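A regression check against malformed samples can start simply. Every truncated prefix of a valid .xz stream must be rejected with an error rather than accepted. The sketch below exercises whichever liblzma Python’s lzma module is linked against, and the function names are hypothetical. It cannot catch a hard crash from inside the same process. In practice, run such a harness in a separate process or against a sanitizer-instrumented build.

```python
import lzma
from typing import Iterator, List


def rejects_cleanly(data: bytes) -> bool:
    """True if the decoder refuses the input with LZMAError instead of accepting it."""
    try:
        lzma.decompress(data, format=lzma.FORMAT_XZ)
    except lzma.LZMAError:
        return True
    return False


def truncation_cases(valid: bytes) -> Iterator[bytes]:
    """Yield every strict prefix of a valid .xz stream as a malformed sample."""
    for cut in range(len(valid)):
        yield valid[:cut]


def check_malformed_samples(valid: bytes) -> List[int]:
    """Return the lengths of truncated samples the decoder did NOT reject."""
    return [len(s) for s in truncation_cases(valid) if not rejects_cleanly(s)]
```

Truncation is only the cheapest case. The same loop structure extends to bit flips or to a corpus of known-bad archives kept alongside the test suite.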

Strengths and Opportunities

This disclosure also highlights some positive trends in the way the ecosystem handles open-source risk. The more transparent and machine-readable the CVE process becomes, the easier it is for defenders to react quickly and consistently. It also creates an opportunity for organizations to improve how they inventory and govern third-party dependencies.

- Better transparency from Microsoft helps downstream teams align on one source of truth.

- Standardized metadata improves automation and reporting.

- Supply-chain awareness is finally becoming part of routine patch management.

- SBOM adoption can materially reduce time to exposure assessment.

- Vendor coordination can speed up remediated builds for affected products.

- Security teams can refine policies around archive and compression libraries.

- Developers can use this as a case study for hardening low-level parsing code.

Risks and Concerns

The obvious risk is exploitation, but the operational risks are broader than that. A flaw in a ubiquitous compression library can create patching churn, uncertainty about where the vulnerable code lives, and confusion over which vendor is responsible for remediation. In large organizations, that uncertainty can last longer than the vulnerability itself.

- Reachability may be hard to assess, especially in bundled software.

- Backported vendor fixes can make version checks misleading.

- Appliance and embedded systems may lag far behind mainstream distributions.

- False negatives in scanners can create dangerous complacency.

- Disruption from patching can slow response in fragile production systems.

- Exploitability uncertainty can delay prioritization when it should not.

- Dependency sprawl increases the number of systems that must be verified.

Looking Ahead

What happens next will depend on how quickly upstream maintainers, distributions, and downstream vendors publish their own remediation guidance. The Microsoft disclosure gives security teams a name and a target, but the real work begins when each environment is mapped against that target. In practice, the response window will differ by platform, packaging model, and support contract.

The most important thing to watch is whether this issue remains a contained memory corruption bug or becomes part of a broader pattern in the XZ ecosystem. If additional tooling or libraries expose similar assumptions, defenders may need to widen their audit beyond one function and one version. The security value of this CVE may therefore lie not only in the fix itself, but in the dependency reviews it triggers.

A few things to monitor closely:

- Upstream XZ advisories and any fixed release announcements.

- Linux distribution backports and package changelogs.

- Microsoft guidance updates if affected products are identified.

- Enterprise scanner signatures as vendors tune detection.

- Container base image refreshes across development and production.

- SBOM reconciliation to ensure the vulnerable component is not hidden in embedded software.

Source: MSRC Security Update Guide - Microsoft Security Response Center