CVE-2026-43118 is a Linux kernel Btrfs vulnerability published on May 6, 2026, in which log replay after a crash can restore a truncated file with its old non-zero size under a specific fsync, hardlink, or rename sequence. That sounds like a narrow filesystem corner case because it is one. But it also lands in the most sensitive part of any storage stack: the promise that, after a power loss, the filesystem will tell the truth about what survived. For WindowsForum readers running Linux guests, WSL-adjacent workflows, NAS boxes, containers, Fedora desktops, or Btrfs-backed backup targets, the real lesson is not panic; it is that modern filesystems are still full of tiny state machines where one sentinel value can turn durability into plausible fiction.

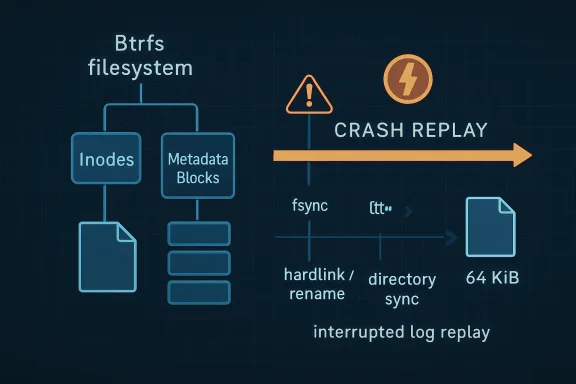

Because historical exposure is hard to detect after the fact, remediation is mostly forward-looking. Patch the kernel. Prefer clean shutdowns where possible. Review workloads that rely on truncate-and-link patterns. Keep backups that let you compare application-level state, not merely block-level survival. And if a Btrfs system suffered an unclean shutdown before patching, be alert for unexplained state inconsistencies in applications that were actively writing.

The eventual fstests regression case should help distributions and kernel maintainers prevent this exact bug from returning. It will not prove that every adjacent logging combination is correct. Filesystem testing is an arms race against state-space explosion.

CVE-2026-43118 sits in the messy middle. It is not a sensational attacker-to-root bug. It is also not meaningless. File integrity after crash recovery is a security property when systems depend on files for authorization, audit trails, package state, container layers, database durability, or application control flow.

The challenge for IT teams is to stop treating CVE assignment as the only prioritization signal. Kernel CVEs need local context. A Wi-Fi driver bug may be irrelevant to a headless VM. A Btrfs bug may be irrelevant to an ext4 server. But if your root filesystem, container host, or backup pool is Btrfs, a log replay integrity bug is directly in scope.

This is where asset inventory becomes more than compliance theater. You cannot sensibly triage CVE-2026-43118 if you do not know which systems run Btrfs, which kernel lines they track, and whether they receive stable updates promptly. “Linux server” is not enough information. Filesystem choice is part of the security profile.
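As a starting point for that inventory, the following is a minimal sketch of a per-host check that lists Btrfs mounts and the running kernel release. It assumes a Linux host that exposes `/proc/mounts`; the function names `btrfs_mounts` and `inventory` are hypothetical, and the output says nothing about whether a given kernel already carries the fix.

```python
import platform


def btrfs_mounts(mounts_text):
    """Return (device, mountpoint) pairs for btrfs entries in /proc/mounts-style text."""
    found = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Each line reads: device mountpoint fstype options dump pass
        if len(fields) >= 3 and fields[2] == "btrfs":
            found.append((fields[0], fields[1]))
    return found


def inventory(mounts_path="/proc/mounts"):
    """Collect the running kernel release and any Btrfs mounts on this host."""
    with open(mounts_path, encoding="utf-8") as fh:
        mounts = btrfs_mounts(fh.read())
    return {"kernel": platform.release(), "btrfs_mounts": mounts}
```

Calling `inventory()` on each Linux host and collecting the results gives a rough map of where Btrfs is in use. Whether a reported kernel release is fixed still has to be confirmed against the vendor advisory or package changelog.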

The same principle applies to appliances. Many organizations run storage, backup, virtualization, or security products that embed Linux. The administrator may not manage the kernel directly, but the vendor does. If the product uses Btrfs, the vendor’s update cadence matters.

That sounds mundane, but it is the center of the story. Linux security in production is often less about knowing every upstream commit and more about trusting the chain from upstream maintainer to stable tree to distribution kernel to your patch deployment system. CVE-2026-43118 is exactly the kind of issue that tests whether that chain works.

Rolling distributions may receive fixes quickly but also expose users to newer kernel behavior faster. Enterprise distributions may backport fixes without changing the visible kernel version in a way that satisfies casual observers. Appliance vendors may lag behind both. The only reliable answer is to check the vendor advisory or package changelog for the fix, not merely stare at

For Windows admins managing Linux through endpoint tools, the patching mechanics may be awkward. Linux kernel updates often require a reboot to take effect. A package manager can install the fixed kernel while the machine continues running the vulnerable one. That is a recurring blind spot in mixed environments where Windows patch compliance habits do not map cleanly to Linux runtime state.
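One rough way to spot that gap is to compare the running kernel release with the kernel directories present on disk. The sketch below assumes the common layout where each installed kernel has a directory under `/lib/modules`; that is a heuristic rather than a guarantee, and the helper names are hypothetical. It does not decide which kernel is newer or whether any of them contains the fix.

```python
import os
import platform


def other_installed_kernels(running, installed):
    """Return installed kernel releases that are not the one currently running."""
    return sorted(set(installed) - {running})


def check_host(modules_dir="/lib/modules"):
    """Report the running release and any other kernels found on disk."""
    running = platform.release()
    try:
        installed = [
            entry for entry in os.listdir(modules_dir)
            if os.path.isdir(os.path.join(modules_dir, entry))
        ]
    except FileNotFoundError:
        # Layout assumption does not hold on this system.
        installed = []
    return running, other_installed_kernels(running, installed)
```

A non-empty second element from `check_host()` is only a hint that a reboot may be pending; older kernels kept for rollback produce the same result.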

There is also a backup angle. If a storage bug affects log replay, backups taken after an unclean recovery may faithfully preserve the wrong state. That is not an argument against backups; it is an argument for versioned backups, application-aware validation, and tests that restore data into something that actually reads it.

Do not run destructive repair tools because you saw the word “Btrfs” next to a CVE. This bug is about log replay choosing the wrong inode size under a particular sequence. Tools like

Do not assume scrub will catch this either. Btrfs scrub is valuable for detecting checksum mismatches and repairing mirrored data when possible. A stale but internally consistent metadata value is a different category of problem. The filesystem can be wrong in a way that does not look like a checksum error.

The better operational control is reducing crash exposure. That means UPS health, clean shutdown policies, hypervisor stability, kernel update discipline, and not treating forced resets as harmless. Filesystems are designed to recover from crashes, but “designed to recover” has never meant “every possible pre-crash state sequence is immune to bugs.”

If you run Btrfs because of snapshots, this is also a reminder to understand what snapshots do and do not guarantee. Snapshots preserve point-in-time filesystem state. They do not magically correct a bad recovery decision that has already been committed into the visible filesystem state. Versioning helps when you know what good state looked like; it is not a substitute for a correct replay engine.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

A Small Inode Bug Cuts Straight Into Filesystem Trust

The uncomfortable thing about CVE-2026-43118 is not that it enables a flashy remote compromise. It does not read like the sort of vulnerability that will dominate an emergency Patch Tuesday thread or get turned into a logo by a security vendor. It is a local data-integrity bug in the Linux kernel’s Btrfs filesystem, tied to a precise sequence of file operations and a crash or power failure at the wrong moment.

That should make it less alarming, but not less important. Filesystems are judged less by how elegant their tree structures look in design documents than by whether they behave correctly when the machine loses power, the UPS lies, the hypervisor dies, or a laptop battery reaches zero at exactly the wrong time. A bug that makes a zero-length file come back as 64 KiB after log replay is not just a bookkeeping error. It is the filesystem presenting a stale view of reality.

The CVE description gives a compact reproduction: create a file, write 64 KiB, sync it, truncate it to zero, fsync the file, create a hardlink, fsync the directory, then lose power. After log replay, the file that should be size zero remains at its old size. That is the kind of bug administrators hate because it is simultaneously explainable in five commands and buried deep enough in filesystem internals that normal operational monitoring may not catch it until an application trips over the result.
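The pre-crash half of that sequence can be sketched with ordinary system calls. This is an illustration under stated assumptions, not the reproducer from the CVE record: the file names `foo` and `bar` and the function name are hypothetical, and running it does not demonstrate the bug, because the stale size only appears after a power loss and log replay on an affected Btrfs kernel.

```python
import os


def run_precrash_sequence(directory, global_sync=True):
    """Perform the file operations from the CVE description, minus the power loss."""
    path = os.path.join(directory, "foo")  # hypothetical file name
    link = os.path.join(directory, "bar")  # hypothetical hardlink name

    # Create the file and write 64 KiB.
    fd = os.open(path, os.O_CREAT | os.O_WRONLY | os.O_TRUNC, 0o644)
    try:
        os.write(fd, b"\xab" * 65536)
        # System-wide sync, so the 64 KiB size is committed before the truncate.
        if global_sync:
            os.sync()
        # Truncate to zero and fsync the file, which logs the new size.
        os.ftruncate(fd, 0)
        os.fsync(fd)
    finally:
        os.close(fd)

    # Add a second name for the same inode.
    os.link(path, link)

    # fsync the parent directory so the new name is logged as well.
    dirfd = os.open(directory, os.O_RDONLY | os.O_DIRECTORY)
    try:
        os.fsync(dirfd)
    finally:
        os.close(dirfd)

    st = os.stat(path)
    return st.st_size, st.st_nlink
```

Without a crash the function simply reports a zero-byte file with two links, which is the state a correct log replay should also produce.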

Btrfs has long sold itself on modern storage features: checksums, snapshots, subvolumes, compression, send/receive, online growth, and a copy-on-write architecture. But the features that make it attractive also mean it has many more edges where metadata, intent logging, and crash recovery have to agree. CVE-2026-43118 is one of those edges.

The Vulnerability Lives Where Fsync Meets Reality

To understand why this bug matters, it helps to set aside the CVE machinery for a moment. The National Vulnerability Database record is still awaiting enrichment, with no NVD CVSS score at publication time. That absence of a score should not be mistaken for absence of risk. It mostly means the formal vulnerability bureaucracy has not yet finished translating a kernel maintainer’s bug fix into the neat boxes of security taxonomy.

The substance is in the Btrfs log replay path. When a filesystem is trying to survive a crash, it needs to decide which operations made it to stable storage and which ones did not. The log is there to accelerate and constrain that recovery process. Instead of replaying the entire universe, the filesystem replays enough recorded intent to bring metadata back into a consistent post-crash state.

Btrfs has a concept in this path where an inode can be logged in an “exists” mode. That mode is used when the filesystem needs to record that an inode exists as part of logging a new name or new directory entries, without logging the file’s extents. In ordinary English: Btrfs is saying, “this file object is real and this name points to it,” not “here is the complete content and size story for this file.”

The existing logic used a generation value of zero as a signal during replay. That zero told the replay code not to update i_size, because if the log only said the inode exists, the size in the main subvolume tree should be preserved. That makes sense as a general rule. If you did not log the extents, you should be careful about overwriting the size.

The bug appears when that rule collides with an inode already logged with a real size change in the same transaction. A file is first written and synced at 64 KiB. Later it is truncated to zero and fsynced, so Btrfs logs the new size. Then a hardlink or rename causes the inode to be logged again in the lightweight “exists” mode. That second logging path sets the generation to zero, and replay later treats the zero as a reason not to apply the zero-byte size that had already been logged.

The result is a stale i_size. The file was truncated before the crash. The filesystem had enough information to know that. But the later existence-only logging confused the replay path, so after recovery the file still appears to be 64 KiB.

This Is Data Integrity, Not Drive-By Exploitation

Security coverage has a bad habit of flattening everything called a CVE into the same emotional category. CVE-2026-43118 is not a remote code execution bug. It is not a privilege escalation path in the usual sense. It does not, from the public description, give an attacker arbitrary kernel memory access or a clean route to root.

The more realistic risk is corruption of application-visible state after a crash. A local user or process with write access to a Btrfs filesystem could create the affected sequence of operations. But the sequence only becomes visible after log replay, which means an unclean shutdown, power failure, crash, forced reset, or comparable interruption is part of the story.

That narrows the threat model, but it does not make the bug academic. Many serious storage failures start as “local only” problems because storage is local by definition. A database engine, package manager, build system, mail spool, VM image workflow, backup rotation job, or container runtime does not need a remote exploit to suffer from incorrect metadata after recovery. It merely needs the filesystem to report a file size that no longer matches the logical operation history.

There is also an important distinction between data corruption and data exposure. The CVE text describes a file retaining a previous non-zero size after a truncate-to-zero operation. Whether stale data is readable, sparse, zero-filled, or application-dependent is not something administrators should infer too aggressively from the summary alone. The plainly supported concern is that the file size is wrong after replay. That is already bad enough.

For sysadmins, the right framing is operational integrity. If your estate includes Btrfs systems where workloads frequently truncate files, create hardlinks or rename paths, fsync directories, and then might crash, this bug deserves attention. That does not describe every desktop. It describes enough real systems to matter.

The Absence of an NVD Score Is Not a Risk Assessment

The NVD record for CVE-2026-43118 is marked as awaiting enrichment, and the CVSS fields are not yet populated by NIST. This is a familiar lag in vulnerability handling, particularly for kernel CVEs that begin life as upstream fixes. The kernel community resolves a bug, the CVE record appears, references are attached, and only later does the scoring ecosystem catch up.

That lag creates a strange silence for enterprise scanners. A vulnerability without a score often looks less urgent in dashboards than one with a bright red 9.8, even when the lower-scored or unscored issue is more relevant to a particular environment. Storage bugs are especially vulnerable to this distortion because they do not always fit neatly into confidentiality, integrity, and availability metrics.

If a file that should be empty comes back with the wrong size after a crash, that is an integrity failure. If the wrong file size causes an application to misread state, skip initialization, retain stale records, or treat a marker file incorrectly, the blast radius depends on the application layered above the filesystem. CVSS can gesture at that, but it cannot know your workload.

This is why administrators should resist waiting for a final score before doing the basic triage. The question is not “is this a 5.5 or a 7.1?” The question is “do I run affected kernels with Btrfs in places where crash consistency matters?” If the answer is yes, the bug belongs in the patch queue.

The CVE’s publication date also matters. It arrived on May 6, 2026, with kernel.org as the source. That tells us this is not a theoretical third-party advisory detached from an upstream fix. It is a vulnerability record wrapped around a kernel resolution, with stable kernel references already attached.

The Patch Changes a Signal That Was Too Clever

The fix described in the CVE is conceptually modest: when filling the inode item for the log, Btrfs should preserve the real generation if the inode was already logged in the current transaction with the size previously logged. Additionally, if an inode created in an earlier transaction is logged only in exists mode, the code should log the i_size from the inode item in the commit root, so repeated existence-only logging has the correct size context.

That is filesystem language, but the design lesson is broader. The old logic overloaded a value, generation zero, as a sentinel meaning “do not update size.” Sentinel values are tempting because they are cheap and simple. They are also treacherous when the same object can move through multiple modes in one transaction.

In CVE-2026-43118, the sentinel was not wrong in the abstract. It was wrong in sequence. The inode had already been logged with a meaningful size change, then later logged in a lighter mode for a name operation. The replay code saw the later sentinel and behaved as if the size should be preserved from the subvolume tree, even though the intended durable state was zero.

That is the kind of bug that survives ordinary reasoning. Each local rule can look sensible. Full logging records size. Exists-only logging avoids overwriting size. Replay respects the generation marker. The failure emerges when an inode moves from one path to another and the later, less informative record masks the earlier, more informative one.
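The masking effect can be illustrated with a toy model. The sketch below is not kernel code and does not mirror the Btrfs data structures; every name in it, along with the inode number 257 and generation 8, is hypothetical. It keeps only the rule described above: a logged generation of zero tells replay to leave the committed size alone.

```python
from dataclasses import dataclass


@dataclass
class LoggedInode:
    generation: int  # 0 is the "do not touch the size" sentinel in this model
    size: int


def log_full(log, ino, generation, size):
    """Full logging, e.g. fsync after a truncate: record the real size."""
    log[ino] = LoggedInode(generation, size)


def log_exists_buggy(log, ino, current_size):
    """Exists-only logging, old behavior in this model: always write the sentinel."""
    log[ino] = LoggedInode(0, current_size)


def log_exists_fixed(log, ino, committed_size):
    """Exists-only logging, fixed behavior in this model."""
    prev = log.get(ino)
    if prev is not None and prev.generation != 0:
        # Size was already logged in this transaction: keep that record.
        return
    # Otherwise log the committed size together with the sentinel.
    log[ino] = LoggedInode(0, committed_size)


def replay(committed_sizes, log):
    """Apply logged sizes unless the record carries the zero-generation sentinel."""
    result = dict(committed_sizes)
    for ino, rec in log.items():
        if rec.generation != 0:
            result[ino] = rec.size
    return result


def simulate(fixed):
    committed = {257: 65536}                  # 64 KiB file, already synced
    log = {}
    log_full(log, 257, generation=8, size=0)  # truncate to zero + fsync
    if fixed:
        log_exists_fixed(log, 257, committed[257])   # hardlink + dir fsync
    else:
        log_exists_buggy(log, 257, current_size=0)   # hardlink + dir fsync
    return replay(committed, log)[257]
```

In this model `simulate(False)` leaves the file at 65536 bytes because the sentinel record replaces the earlier size record, while `simulate(True)` yields 0 because the earlier record survives.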

This is also why filesystem regression tests are so specific. The CVE text mentions that an fstests case will follow. That is exactly the right direction because this class of bug is not caught by generic “write file, sync file, crash” testing. It requires the weird choreography: write, sync, truncate, fsync, hardlink or rename, fsync directory, crash, replay, inspect size.

Btrfs Carries the Burden of Being Interesting

Btrfs is not a fringe experiment anymore. It is used by major Linux distributions, enthusiasts, NAS builders, and people who want snapshots without building an entire storage stack out of separate layers. Fedora’s use of Btrfs as a default filesystem for desktop variants helped normalize it for a generation of Linux users who might never have manually opted into it. SUSE has also long treated Btrfs as a serious production filesystem in its own ecosystem.

That popularity changes the tone of every Btrfs bug. A decade ago, a log replay corner case might have been received as part of the cost of using a daring filesystem. In 2026, many users encounter Btrfs not as an exotic choice but as the default under their home directory, root filesystem, or appliance. The tolerance for “interesting” behavior is lower when the filesystem arrived preselected.

At the same time, Btrfs is doing harder things than older filesystems in many deployments. Copy-on-write metadata, snapshots, reflinks, checksums, subvolumes, and send/receive all increase the number of states the filesystem must track. The engineering challenge is not merely to make each feature work in isolation. It is to make them compose correctly during failure.

CVE-2026-43118 is a composition bug. It lives at the boundary between inode size tracking, name logging, hardlinks or renames, and crash recovery. That boundary is exactly where advanced filesystems earn or lose trust.

The fair reading is not “Btrfs is unsafe.” All serious filesystems have had crash-consistency bugs. Ext4, XFS, APFS, NTFS, ZFS, and Btrfs all represent long histories of subtle fixes. The fair reading is that Btrfs’s value proposition demands a disciplined update culture. If you use a filesystem because it evolves quickly and offers modern features, you also need to take its stable updates seriously.

Windows Shops Are Not Spectators

At first glance, a Linux kernel Btrfs CVE may look outside the WindowsForum lane. It is not. Windows environments increasingly contain Linux in places that do not look like traditional Linux servers: developer workstations, WSL workflows, Hyper-V guests, CI runners, Kubernetes nodes, NAS appliances, backup systems, security tooling, and lab machines.

WSL itself does not normally expose a Btrfs root filesystem to Windows users in the same way a bare-metal Fedora installation does, and Windows does not natively depend on Btrfs. But Windows-centric organizations often run Linux guests on Windows hosts, store Linux VM disks on appliances using Btrfs, or use Btrfs inside virtual machines that serve Windows clients. A data-integrity bug in one layer can surface as a mysterious application problem in another.

Consider a developer workstation running a Linux VM for builds. If the VM uses Btrfs and the host crashes, the guest may later present a file with an old size after a truncate sequence. The Windows user sees a failed build cache, a corrupt artifact, or an application state problem, not “CVE-2026-43118.” The bug’s name belongs to Linux; the operational pain can cross platform boundaries.

The same is true for home labs and small-business NAS setups. Btrfs is common in systems that present storage over SMB, NFS, or container volumes. Windows clients may never know the underlying filesystem. They only know whether the file server kept its promises after a reboot.

This is one reason mixed-platform administrators should track Linux kernel storage CVEs even if their identity stack, endpoint fleet, and patching muscle memory are Microsoft-centric. Infrastructure is no longer neatly divided by operating-system fandom. The failure domains overlap.

The Exploit Prerequisite Is the Crash Window

The public scenario depends on an unclean shutdown or power failure after a particular sequence of operations. That detail is important because it shapes both risk and mitigation. If there is no crash, there is no log replay moment for this bug to express itself. If there is no affected operation sequence before the crash, the specific stale-size outcome does not occur.

But “requires a crash” is not the comfort it first appears to be. Crashes are not rare in the places where storage integrity matters most. Kernel panics, hypervisor resets, power failures, watchdog reboots, failed batteries, bad RAM, accidental unplugging, cloud host failures, and impatient humans all happen. Filesystems are explicitly designed around the premise that they will happen.

The security angle becomes more interesting when a local actor can deliberately create the file-operation sequence and then induce or wait for a crash. That is not the same as a push-button exploit, and the public record does not indicate widespread exploitation. Still, local users, containers, or untrusted workloads that can write to shared Btrfs storage may be able to increase the chance of post-crash inconsistency in files they control.

Most environments should treat this as a patch-and-monitor item rather than an incident-response emergency. The exception is any system where file truncation carries security or correctness meaning. Some applications use truncate-to-zero patterns to clear state, rotate logs, reset markers, or prepare files for new content. If stale size after recovery can mislead the application, the risk rises.

This is where the CVE label helps. Without it, the bug might be dismissed as a filesystem oddity. With it, asset owners can at least ask whether the operation pattern intersects with their workloads.

The Test Case Tells Admins What to Look For

The reproduction sequence in the CVE text is unusually useful because it gives defenders a mental model. A file is created and written to 64 KiB. The system syncs. The file is truncated to zero and fsynced. A hardlink is created. The directory is fsynced. Then power fails. After replay, the file incorrectly reports as 64 KiB.

The exact commands use `xfs_io`, a tool often used for filesystem testing despite the name. That detail may amuse storage people, but it also underscores that this is a filesystem semantics test, not an XFS issue. The operations are ordinary POSIX-style operations: write, truncate, fsync, link, directory fsync.

Administrators should not assume they can easily detect historical exposure after the fact. If a system crashed while running affected kernels, and if applications executed the vulnerable pattern, the resulting files may simply look like files with non-zero sizes. The filesystem is not necessarily going to raise a dramatic alarm saying “this inode size came from stale replay logic.”
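For readers who think in system calls rather than `xfs_io` invocations, the operation sequence can be sketched in Python. This is an illustrative translation, not the commands from the CVE text: the file names `foo` and `bar` and the function name are hypothetical, and the sketch does not simulate the power loss or the log replay. On its own it only shows the correct pre-crash state (size zero, two links); the stale size would appear only on an affected Btrfs kernel after an unclean shutdown and remount.

```python
import os


def run_truncate_link_sequence(directory):
    """Run write, sync, truncate, fsync, link, directory fsync in `directory`.

    Returns (size, link_count) as observed before any crash.
    File names "foo" and "bar" are hypothetical placeholders.
    """
    path = os.path.join(directory, "foo")
    link_path = os.path.join(directory, "bar")

    fd = os.open(path, os.O_CREAT | os.O_WRONLY | os.O_TRUNC, 0o644)
    try:
        os.write(fd, b"\xab" * 65536)  # write 64 KiB
        os.sync()                      # flush everything to disk
        os.ftruncate(fd, 0)            # truncate the file to zero
        os.fsync(fd)                   # fsync the file itself
    finally:
        os.close(fd)

    os.link(path, link_path)           # create a hardlink to the file

    dir_fd = os.open(directory, os.O_RDONLY)
    try:
        os.fsync(dir_fd)               # fsync the parent directory
    finally:
        os.close(dir_fd)

    st = os.stat(path)
    return st.st_size, st.st_nlink
```

Run it only against a scratch directory on a test system. Reproducing the actual bug additionally requires cutting power (or an equivalent simulated crash) immediately after the directory fsync, which is what filesystem test harnesses are built for.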

That is why remediation is mostly forward-looking. Patch the kernel. Prefer clean shutdowns where possible. Review workloads that rely on truncate-and-link patterns. Keep backups that let you compare application-level state, not merely block-level survival. And if a Btrfs system suffered an unclean shutdown before patching, be alert for unexplained state inconsistencies in applications that were actively writing.

The eventual fstests regression case should help distributions and kernel maintainers prevent this exact bug from returning. It will not prove that every adjacent logging combination is correct. Filesystem testing is an arms race against state-space explosion.

Kernel CVEs Are Becoming More Literal

The Linux kernel project’s handling of CVEs has changed the rhythm of vulnerability news. More kernel fixes now receive CVE identifiers, including bugs that look to outsiders like correctness issues rather than classic security flaws. That has led to understandable fatigue among administrators who see a stream of kernel CVEs and wonder which ones actually matter.

CVE-2026-43118 sits in the messy middle. It is not a sensational attacker-to-root bug. It is also not meaningless. File integrity after crash recovery is a security property when systems depend on files for authorization, audit trails, package state, container layers, database durability, or application control flow.

The challenge for IT teams is to stop treating CVE assignment as the only prioritization signal. Kernel CVEs need local context. A Wi-Fi driver bug may be irrelevant to a headless VM. A Btrfs bug may be irrelevant to an ext4 server. But if your root filesystem, container host, or backup pool is Btrfs, a log replay integrity bug is directly in scope.

This is where asset inventory becomes more than compliance theater. You cannot sensibly triage CVE-2026-43118 if you do not know which systems run Btrfs, which kernel lines they track, and whether they receive stable updates promptly. “Linux server” is not enough information. Filesystem choice is part of the security profile.
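As a starting point for that inventory, a minimal sketch can list Btrfs mounts on a single Linux host by reading `/proc/mounts`. The function names here are hypothetical, and the sketch assumes the usual whitespace-separated layout (device, mount point, filesystem type, options). It says nothing about appliances or guests you cannot log in to.

```python
def btrfs_mounts(mounts_text):
    """Return (device, mount_point) pairs for Btrfs entries in /proc/mounts-style text."""
    found = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Expected fields: device, mount point, fs type, options, dump, pass
        if len(fields) >= 3 and fields[2] == "btrfs":
            found.append((fields[0], fields[1]))
    return found


def local_btrfs_mounts(path="/proc/mounts"):
    """Read the local mount table and return its Btrfs entries."""
    with open(path, encoding="utf-8") as handle:
        return btrfs_mounts(handle.read())
```

An empty result from `local_btrfs_mounts()` means no Btrfs filesystem is currently mounted on that host, which is different from saying none exists on its disks.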

The same principle applies to appliances. Many organizations run storage, backup, virtualization, or security products that embed Linux. The administrator may not manage the kernel directly, but the vendor does. If the product uses Btrfs, the vendor’s update cadence matters.

Stable Kernels Are the Real Security Boundary

The references attached to the CVE point to stable kernel commits, which is the practical place most users will receive the fix. Very few organizations should be cherry-picking filesystem patches into custom kernels unless they already have the engineering discipline to own that decision. For everyone else, the answer is to follow the distribution or vendor kernel update.

That sounds mundane, but it is the center of the story. Linux security in production is often less about knowing every upstream commit and more about trusting the chain from upstream maintainer to stable tree to distribution kernel to your patch deployment system. CVE-2026-43118 is exactly the kind of issue that tests whether that chain works.

Rolling distributions may receive fixes quickly but also expose users to newer kernel behavior faster. Enterprise distributions may backport fixes without changing the visible kernel version in a way that satisfies casual observers. Appliance vendors may lag behind both. The only reliable answer is to check the vendor advisory or package changelog for the fix, not merely stare at `uname -r` and guess.

For Windows admins managing Linux through endpoint tools, the patching mechanics may be awkward. Linux kernel updates often require a reboot to take effect. A package manager can install the fixed kernel while the machine continues running the vulnerable one. That is a recurring blind spot in mixed environments where Windows patch compliance habits do not map cleanly to Linux runtime state.
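The installed-versus-running gap can be made visible with a rough sketch like the one below. It assumes, as is common but not universal, that each installed kernel has a directory under `/lib/modules`; the function names are hypothetical. It only flags that other kernels are installed besides the running one. It does not decide which is newer, and it cannot tell whether any of them carries the backported fix, which remains a job for the vendor advisory or package changelog.

```python
import os
import platform


def kernels_not_running(running, installed):
    """Return installed kernel releases that differ from the running one."""
    return sorted(release for release in installed if release != running)


def pending_kernel_hint(modules_dir="/lib/modules"):
    """Compare the running release (what `uname -r` reports) with installed ones.

    Assumes one subdirectory per installed kernel under `modules_dir`.
    """
    running = platform.release()
    installed = os.listdir(modules_dir) if os.path.isdir(modules_dir) else []
    return running, kernels_not_running(running, installed)
```

A non-empty second element is a prompt to check whether a reboot into an updated kernel is still outstanding, not proof that one is.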

There is also a backup angle. If a storage bug affects log replay, backups taken after an unclean recovery may faithfully preserve the wrong state. That is not an argument against backups; it is an argument for versioned backups, application-aware validation, and tests that restore data into something that actually reads it.

The Practical Response Is Boring, Which Is Good

For most readers, the right response to CVE-2026-43118 is not dramatic. Identify Btrfs systems. Determine whether the running kernel includes the fix from your vendor or stable kernel line. Update. Reboot into the fixed kernel. Treat recent unclean shutdowns on affected systems as a reason to pay closer attention to application state.

Do not run destructive repair tools because you saw the word “Btrfs” next to a CVE. This bug is about log replay choosing the wrong inode size under a particular sequence. Tools like `btrfs check --repair` are not casual hygiene utilities. They are last-resort instruments for specific corruption scenarios, and using them without a diagnosis can turn a manageable problem into a self-inflicted outage.

Do not assume scrub will catch this either. Btrfs scrub is valuable for detecting checksum mismatches and repairing mirrored data when possible. A stale but internally consistent metadata value is a different category of problem. The filesystem can be wrong in a way that does not look like a checksum error.

The better operational control is reducing crash exposure. That means UPS health, clean shutdown policies, hypervisor stability, kernel update discipline, and not treating forced resets as harmless. Filesystems are designed to recover from crashes, but “designed to recover” has never meant “every possible pre-crash state sequence is immune to bugs.”

If you run Btrfs because of snapshots, this is also a reminder to understand what snapshots do and do not guarantee. Snapshots preserve point-in-time filesystem state. They do not magically correct a bad recovery decision that has already been committed into the visible filesystem state. Versioning helps when you know what good state looked like; it is not a substitute for a correct replay engine.

The File Size That Should Not Have Survived

The concrete lessons from CVE-2026-43118 are narrower than the noise around kernel CVEs, but they are useful precisely because they are concrete. This is not a reason to abandon Btrfs, and it is not a reason to shrug at unscored vulnerabilities. It is a reminder that the smallest metadata shortcut can become visible at the worst possible moment.

- CVE-2026-43118 affects the Linux kernel’s Btrfs log replay path and can leave a truncated file showing an old non-zero size after an unclean shutdown.

- The public reproduction involves writing a file, syncing it, truncating it to zero, fsyncing it, creating a hardlink or new name, fsyncing the directory, and then losing power before replay.

- The bug is best understood as an integrity issue rather than a remote compromise, with risk concentrated on systems that use Btrfs and experience crashes or forced resets.

- The NVD record was still awaiting enrichment at publication time, so administrators should not wait for a CVSS score before checking whether their Btrfs systems have a fixed kernel.

- The safest remediation path is to take vendor kernel updates, reboot into the fixed kernel, and validate application state on systems that recently recovered from unclean shutdowns.

- Scrub, snapshots, and backups remain useful, but none of them replaces prompt kernel maintenance for a log replay bug.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center