DataBahn’s newly announced deep integration with Microsoft Sentinel promises to collapse SIEM onboarding timeframes and materially lower analytics‑tier ingestion costs — claims that, if realized broadly, would change how security teams plan SIEM migrations and manage long‑term telemetry economics.

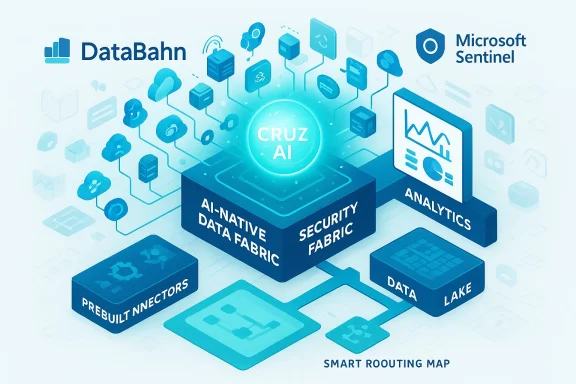

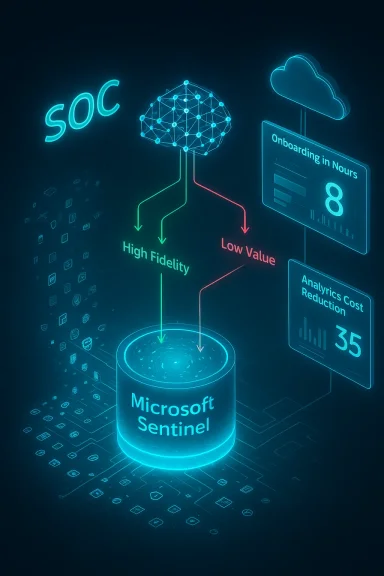

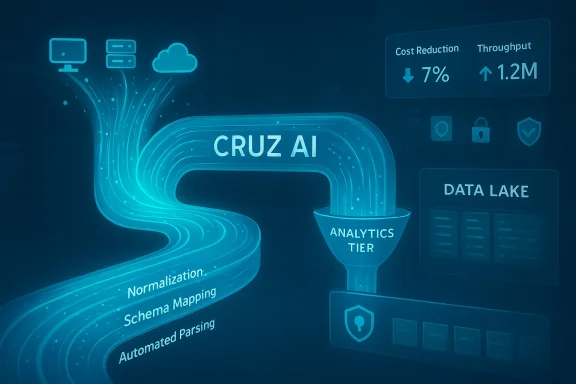

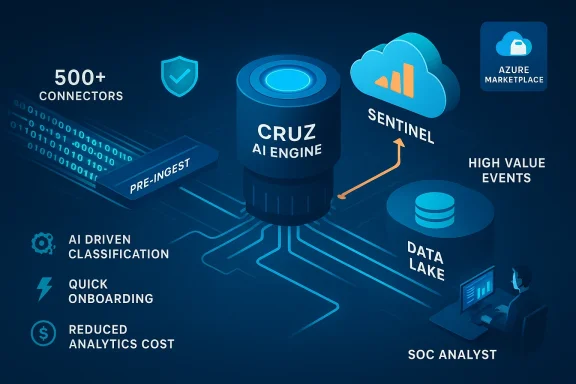

Background / Overview

Microsoft Sentinel has been evolving from a traditional cloud SIEM into a broader, AI‑ready security platform with a dedicated data lake, richer connectors, and native Copilot‑driven telemetry workflows. That platform evolution makes third‑party pipeline and data‑management tools more than a convenience; they can multiply cost and time savings when designed to work with Sentinel’s tiered ingestion model and retention strategies. This broader Sentinel trajectory is captured in recent community and forum analyses that emphasize the Sentinel data lake and emerging Copilot connectors as structural changes to SIEM economics and workflows.

DataBahn, which positions itself as an “AI‑native Security Data Fabric,” says its Cruz AI engine and prebuilt connectors can normalize, enrich, classify, and route telemetry from 500+ sources directly into Sentinel, sending only high‑value detection data to Sentinel’s analytics tier while routing high‑volume, low‑value telemetry to lower‑cost storage such as the Sentinel data lake or other archival tiers. The company and its announcement claim joint customers can see onboarding measured in hours rather than weeks, and ingestion cost reductions of up to 60% based on customer metrics.

This article unpacks the announcement, verifies the technical claims against publicly available product documentation and case studies, and provides an independent, practical assessment for security teams considering the DataBahn + Microsoft Sentinel path.

What DataBahn says it delivers

The core promises

- Faster onboarding: DataBahn advertises automated, AI‑driven connectors that reduce the need for custom parsing and hand‑crafted ingestion pipelines, turning “weeks or months” into “hours” for connecting complex or custom log sources.

- Lower Sentinel ingestion costs: By classifying telemetry and routing only high‑fidelity detection data into Sentinel’s analytics tier while offloading verbose or archival logs to the Sentinel data lake (or other lower‑cost storage), DataBahn reports up to 60% reduction in analytics‑tier ingestion costs for customers. This number appears in the vendor announcement and in multiple vendor case studies that demonstrate substantial volume reduction.

- Operational simplicity: The integration is available through Microsoft Marketplace and the Sentinel Content Hub, and DataBahn highlights the ability to apply Microsoft Azure Consumption Commitments (MACC) to simplify procurement and reduce net‑new budget impact.

Core components (as described by DataBahn)

- AI‑driven connectors & parsers: Automated normalization for both standard and custom sources.

- Telemetry classification engine (Cruz AI): Labels and prioritizes records by detection value, routing them to appropriate storage and processing tiers.

- Volume control & reduction rules: A library of reduction rules (suppression, aggregation, sampling, deduplication) to cut noise such as heartbeats, verbose health checks, and repeated status codes. DataBahn’s case studies show 40–80% reductions in ingestion volume depending on the customer and use case.
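To make the reduction-rule idea concrete, here is a minimal Python sketch of suppression and aggregation applied to a small batch of events. It is not DataBahn's implementation (the source does not describe the rule format); the field names (`type`, `src`, `status`) and the `SUPPRESSED_TYPES` set are hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical noise categories; real rule sets are environment-specific.
SUPPRESSED_TYPES = {"heartbeat", "health_check"}

def reduce_events(events):
    """Drop suppressed event types and collapse repeated status events into counts."""
    kept = []
    status_counts = Counter()
    for event in events:
        if event["type"] in SUPPRESSED_TYPES:
            continue  # suppression: the record is dropped from this path
        if event["type"] == "status":
            # aggregation: count identical (source, status) pairs
            status_counts[(event["src"], event["status"])] += 1
            continue
        kept.append(event)
    for (src, status), count in sorted(status_counts.items()):
        kept.append({"type": "status_summary", "src": src,
                     "status": status, "count": count})
    return kept

events = [
    {"type": "heartbeat", "src": "fw1"},
    {"type": "status", "src": "fw1", "status": 200},
    {"type": "status", "src": "fw1", "status": 200},
    {"type": "status", "src": "fw1", "status": 200},
    {"type": "auth_failure", "src": "vpn1", "user": "alice"},
    {"type": "health_check", "src": "lb1"},
]
reduced = reduce_events(events)
reduction_pct = 100 * (1 - len(reduced) / len(events))
print(f"{len(events)} records in, {len(reduced)} out ({reduction_pct:.0f}% fewer)")
```

Note that the suppressed and aggregated records are gone from this path entirely, which is why a reduction step is usually paired with a separate raw copy when forensic replay matters.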

Verification and independent evidence

The claim set in the announcement is a mixture of verifiable product facts and vendor‑sourced customer metrics. To be clear:

- DataBahn’s product pages and case studies document real customer deployments showing substantial ingestion reductions and cost savings; those case studies list specific percentages and dollar values for license and storage savings.

- The press release describing the expanded partnership and the 60% ingestion reduction figure comes directly from DataBahn. It is credible, but it remains a vendor announcement rather than independent verification.

- Tech media and industry writeups about DataBahn’s agent and pipeline approach provide independent context on the vendor’s technology direction and market positioning, even if they do not replicate every numeric claim. For example, trade coverage highlights the DataBahn agent for unified telemetry and notes the company’s approach to reducing tool sprawl and multi‑agent overhead.

How the integration technically reduces SIEM costs

Sentinel’s cost model and where savings come from

Microsoft Sentinel’s pricing is driven primarily by analytics‑tier ingestion (Log Analytics workspace ingestion) and retention. The larger the continuous ingress into the analytics tier, the higher the cost. Sentinel’s data lake and other tiering options were introduced to decouple long‑term storage and raw telemetry from high‑cost analytics operations, paving the way for a pipeline that stores raw, low‑value telemetry cheaply while surfacing only necessary signals to the analytics layer.

DataBahn’s integration takes advantage of this model by:

- Classifying each telemetry record: deciding whether it is high‑fidelity (alerts, suspicious events, detections) or auxiliary (heartbeats, verbose telemetry).

- Applying volume control: suppression, aggregation, and sampling reduce duplicates and non‑actionable noise before it hits the analytics tier.

- Routing intelligently: sending high‑value data to Sentinel analytics, and routing the rest to the Sentinel data lake or cheaper long‑term stores so forensic and compliance needs are still met without the analytics ingestion charge.
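The classify-then-route flow described above can be sketched in a few lines of Python. This is an illustrative stand-in, not the Cruz AI engine: a static set of event types replaces the AI classifier, and the destination labels, `Record` fields, and `HIGH_VALUE_TYPES` are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical destination labels, not real Sentinel API identifiers.
ANALYTICS = "sentinel_analytics"
DATA_LAKE = "sentinel_data_lake"

# Hypothetical static rule standing in for an AI classifier.
HIGH_VALUE_TYPES = {"alert", "auth_failure", "malware_detected"}

@dataclass
class Record:
    source: str
    event_type: str
    payload: dict = field(default_factory=dict)

def classify(record: Record) -> str:
    """Return 'high' for detection-relevant records, 'auxiliary' otherwise."""
    return "high" if record.event_type in HIGH_VALUE_TYPES else "auxiliary"

def route(records):
    """Group records by destination tier according to their classification."""
    destinations = {ANALYTICS: [], DATA_LAKE: []}
    for record in records:
        tier = ANALYTICS if classify(record) == "high" else DATA_LAKE
        destinations[tier].append(record)
    return destinations

batch = [
    Record("fw1", "heartbeat"),
    Record("vpn1", "auth_failure", {"user": "alice"}),
    Record("proxy1", "http_access", {"status": 200}),
]
routed = route(batch)
for tier, items in routed.items():
    print(tier, [r.event_type for r in items])
```

In this sketch nothing is discarded: auxiliary records still land in the lower-cost tier, so they remain available for later investigation while staying out of analytics-tier ingestion.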

Strengths and practical benefits

1. Realistic path to lower SIEM TCO

- Immediate cost leverage: Organizations with dense telemetry and a high proportion of auxiliary logs often face ballooning analytics bills. Routing and reduction strategies can produce large, measurable savings quickly. DataBahn’s case studies show day‑one reductions in many deployments.

2. Faster integration for complex sources

- Less custom engineering: Many enterprises find complex, bespoke log sources take weeks of parser work. Automated connectors and AI‑assisted parsing can significantly shorten that timeline and reduce professional services needs.

3. Better SOC signal‑to‑noise ratio

- Reduced alert fatigue: By prioritizing and surfacing only higher‑value telemetry to detection rules and analysts, organizations can improve mean time to detect and reduce false positives. Case studies and vendor materials highlight reduced noise as a secondary benefit.

4. Procurement and deployment convenience

- Marketplace availability: Being available via Microsoft Marketplace and Sentinel Content Hub eases procurement and may allow customers to use existing Azure commitments. That’s a practical win for procurement cycles.

Risks, caveats, and red flags

No matter how attractive the numbers, security and compliance teams must evaluate deeper implications. Below are the major considerations.

1. Fidelity vs. reduction: the forensic tradeoff

Every suppression, sampling, or aggregation step alters the raw evidence trail. If done without clear rules and auditability, the pipeline can remove or obfuscate records needed during incident investigations or regulatory audits.

- Risk: Overzealous reduction could destroy the context that turns a suspicious event into confirmed compromise.

- Mitigation: Maintain a forensics‑preserving path (full raw copy with immutable retention), or ensure sampled data preserves representative evidence for investigations.

2. Governance, transparency, and explainability of AI decisions

If an AI classifier decides what is “high fidelity,” SOCs must be able to explain and audit those decisions.

- Risk: Black‑box classification undermines compliance and reduces analyst trust.

- Mitigation: Require transparent rule logs, explainability tooling, and human‑in‑the‑loop override capabilities.

3. Hidden costs and operational complexity

Adding a vendor pipeline introduces another system to maintain, secure, and scale. There are licensing, integration, network egress, and potential single‑vendor lock‑in considerations.

- Risk: The pipeline itself becomes a new point of failure or cost center.

- Mitigation: Evaluate TCO net of the pipeline license, assess high‑availability patterns, and insist on clear SLAs and exit‑strategies (data export formats, immutable logs).

4. Compliance, data residency, and privacy

Routing telemetry across tiers and potentially different storage locations can trigger legal and regulatory issues, especially for PII or regulated industry logs.

- Risk: Misrouted telemetry could violate retention or residency rules.

- Mitigation: Map data classifications to regulatory needs up front; require location constraints, tagging, and retention enforcement inside the pipeline.

5. Security of the pipeline

A pipeline that normalizes and enriches security logs becomes a high‑value target. It must be treated like any critical security control.

- Risk: Pipeline compromise could corrupt logs, hide intrusions, or exfiltrate sensitive telemetry.

- Mitigation: Ensure strong authentication, encryption in transit and at rest, isolated service principals, and robust monitoring of the pipeline itself.

How to evaluate DataBahn + Sentinel in your environment: an operational checklist

- Run a short pilot (2–4 weeks) with real traffic and measurable KPIs. Capture:

  - Baseline ingestion volume and cost per day.

  - Post‑pipeline analytics‑tier volume and cost.

  - Detection coverage and rule firing comparison.

- Define forensic requirements and test incident replay: ensure raw data needed for root‑cause analysis can be retrieved within required SLAs.

- Audit AI classification outcomes:

  - Sample randomly for accuracy.

  - Validate rules against known threat scenarios and edge cases.

- Test failover and independence:

  - Ensure your SOC can bypass the pipeline if it becomes unavailable.

  - Verify data export formats (Parquet, newline‑delimited JSON, CEF, etc.) are portable.

- Assess compliance mapping for all telemetry types (PCI, HIPAA, GDPR, sectoral requirements) and ensure the pipeline honors retention and residency tagging.

- Measure analyst impact:

  - Time‑to‑investigate changes.

  - Alert triage volumes.

  - False positive rate shifts.

- Contractual safeguards:

  - Clear SLAs for ingestion, throughput, and retention.

  - Data ownership clauses and exit/export provisions.

- Cost model validation:

  - Confirm the vendor’s modeled 60% (or other) savings against your environment. Use the Azure pricing calculator and real ingestion patterns, not just vendor estimates.
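For the cost model validation step, a back-of-the-envelope calculation like the following Python sketch can help. Every number in it is a placeholder, not an Azure list price or a DataBahn license fee, and the function name and parameters are hypothetical. It also assumes all diverted volume is stored in a lower-cost tier rather than dropped.

```python
def net_monthly_savings(baseline_gb_per_day, reduction_fraction,
                        analytics_price_per_gb, lake_price_per_gb,
                        pipeline_license_per_month, days=30):
    """Estimate monthly savings after diverting part of the volume
    away from the analytics tier, net of lake storage and pipeline license."""
    baseline_cost = baseline_gb_per_day * days * analytics_price_per_gb
    analytics_gb = baseline_gb_per_day * (1 - reduction_fraction) * days
    diverted_gb = baseline_gb_per_day * reduction_fraction * days
    new_cost = (analytics_gb * analytics_price_per_gb
                + diverted_gb * lake_price_per_gb
                + pipeline_license_per_month)
    return baseline_cost - new_cost

# Placeholder inputs: substitute measured volumes and quoted prices.
baseline_cost = 1000 * 30 * 4.0
savings = net_monthly_savings(
    baseline_gb_per_day=1000,
    reduction_fraction=0.6,
    analytics_price_per_gb=4.0,
    lake_price_per_gb=0.1,
    pipeline_license_per_month=20000,
)
print(f"Net savings: {savings:,.0f} ({100 * savings / baseline_cost:.1f}% of baseline)")
```

With these placeholder inputs, a 60% volume reduction yields a net saving of roughly 42% once lake storage and the pipeline license are included. At low daily volumes the same formula can go negative, which is the scenario a pilot should rule out.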

Practical scenarios where this approach yields the most value

- Organizations migrating from legacy SIEMs to Microsoft Sentinel where vast quantities of historical telemetry must be moved but not all is needed in analytics.

- Enterprises with heavy device telemetry (network devices, firewalls, proxies) that generate many high‑volume auxiliary logs. Sampling and aggregation materially lower analytics ingestion while keeping long‑term raw archives.

- MSSPs and large distributed SOCs that manage multi‑tenant cost attribution and need consistent onboarding templates to speed client deployments. Marketplace distribution and prebuilt connectors accelerate rollouts.

When this approach can be problematic

- Highly regulated environments where every log must be retained unaltered for long durations and quick forensic replay is mandatory.

- Small organizations with low ingestion volumes; baseline Sentinel charges may already be modest and adding a pipeline license may not make economic sense.

- Environments that rely on microsecond‑level telemetry fidelity for specialized analytics (e.g., certain industrial control system forensics).

Recommended contract and technical clauses to insist on

- Full exportability of all data in an open, widely supported format (Parquet, JSONL, CEF), and an SLA for export timeframes.

- Immutable raw archive option (hash‑chained) to ensure evidence integrity even if reduction rules are applied downstream.

- Explainability and audit logs exposing why records were classified a certain way and when reduction rules applied.

- Security & compliance controls: role‑based access, encryption keys (BYOK if possible), and SOC‑level logging of pipeline administrative actions.

- Performance & throughput SLAs aligned to peak ingestion bursts, with financial remedies for missed SLAs.
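To illustrate what a hash‑chained raw archive means in practice, here is a minimal Python sketch using only the standard library. It is a generic illustration of the technique, not a description of how DataBahn or Sentinel store data; the entry layout and the `GENESIS` starting value are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # arbitrary starting value for the chain (assumption)

def chain_records(records):
    """Attach to each record a SHA-256 hash covering its content and the previous hash."""
    chained, prev_hash = [], GENESIS
    for record in records:
        body = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        chained.append({"record": record, "prev_hash": prev_hash, "hash": digest})
        prev_hash = digest
    return chained

def verify_chain(chained):
    """Recompute every hash; return False if any record or link was altered."""
    prev_hash = GENESIS
    for entry in chained:
        body = json.dumps(entry["record"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = expected
    return True

archive = chain_records([
    {"ts": 1, "msg": "login ok"},
    {"ts": 2, "msg": "login failed"},
    {"ts": 3, "msg": "privilege change"},
])
intact = verify_chain(archive)

# Simulate tampering with an archived record.
archive[1]["record"]["msg"] = "login ok"
tampered_detected = not verify_chain(archive)
print(intact, tampered_detected)
```

A chain like this detects edits and reordering, but not truncation of the most recent entries unless the latest hash is also recorded somewhere the pipeline cannot modify, which is worth raising when negotiating the clause.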

Verdict: a compelling but careful “yes” for many organizations

DataBahn’s expanded partnership with Microsoft and the Sentinel‑focused integration is a timely and well‑aligned effort that matches where SIEM economics have been heading: toward separating raw telemetry storage from analytics‑grade ingestion and making intelligent, policy‑driven decisions about what to analyze now vs. store for later. The vendor’s customer metrics and case studies demonstrate real potential for significant cost reductions and much faster onboarding cycles.

That said, the headline numbers (onboarding in “hours” and “60% cost reduction”) are environment‑dependent. They should be validated through a short, metric‑driven pilot that measures both cost and operational impacts (investigation speed, rule coverage, forensic readiness). Treat vendor figures as achievable targets, not guarantees, and insist on operational controls that preserve investigation fidelity, auditability, and compliance.

Quick decision framework for CISOs and security architects

- Do you ingest high volumes of auxiliary telemetry (firewalls, proxies, network telemetry)? If yes, this approach likely nets outsized savings.

- Do you require full‑fidelity logs for regulatory or forensic reasons? If yes, require immutable raw retention outside the reduction path.

- Can you commit resources to a short pilot and validation sprint? If no, delay adoption until you can measure outcomes empirically.

- Are you purchasing through Azure Marketplace or using MACC? If yes, verify contract language on consumption commitments and invoice treatment.

Final recommendations

- Start with a 2–4 week pilot focused on a single high‑volume domain (e.g., perimeter firewall logs) and measure ingestion, analyst workload, and detection parity.

- Demand transparent explainability from any AI classifier and require human‑in‑the‑loop override for sensitive data flows.

- Retain a complete raw archive (immutably stored) for at least the minimum forensics window your compliance posture demands.

- Model TCO including pipeline licensing, Azure egress, retention costs, and operational overhead — not just headline percent savings.

- Build an incident playbook that includes pipeline verification steps (how to prove data was not lost or transformed in a way that hides attack artifacts).
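One simple pipeline verification step for such a playbook is a count reconciliation: every record received from a source should be accounted for as delivered to the analytics tier, delivered to the lower‑cost tier, or removed by a logged reduction rule. The Python sketch below assumes the pipeline exposes such counters and that each record ends up in exactly one of those buckets; both are assumptions, and the function and field names are hypothetical.

```python
def reconcile(source_count, analytics_count, lake_count, reduced_count):
    """Check that every source record is accounted for across
    destinations and logged reduction rules."""
    accounted = analytics_count + lake_count + reduced_count
    return {
        "accounted": accounted,
        "missing": source_count - accounted,
        "balanced": accounted == source_count,
    }

# Placeholder counters for one source over one time window.
ok = reconcile(source_count=10_000, analytics_count=1_200,
               lake_count=6_300, reduced_count=2_500)
gap = reconcile(source_count=10_000, analytics_count=1_200,
                lake_count=6_300, reduced_count=2_400)
print(ok)
print(gap)
```

A non-zero `missing` value does not say what was lost, only that the window needs a closer look against the raw archive.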

DataBahn’s Sentinel integration is a credible step toward making modern SIEM deployments faster and more economical — and it represents the logical next stage of the vendor‑partner ecosystem forming around Microsoft Sentinel’s data lake and AI capabilities. Early evidence and vendor case studies show substantial potential savings and faster time to value, but each organization must validate the classification rules, forensic guarantees, and governance model in its own environment before declaring victory.

In short: the integration is worth piloting for any team wrestling with runaway ingestion costs or slow onboarding — but only with strict controls, auditable AI decisions, and a well‑defined fallback path that preserves forensic integrity.

Source: Techzine Global DataBahn and Microsoft accelerate SIEM deployment through integration

Source: IT Brief New Zealand https://itbrief.co.nz/story/databahn-deepens-microsoft-sentinel-tie-up-to-cut-siem-costs/