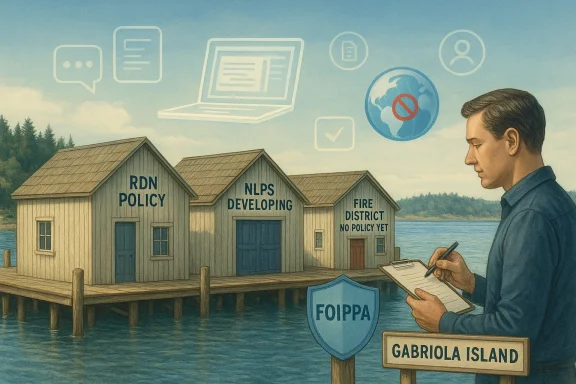

AI is no longer a hypothetical issue for Gabriola’s local governments; it is already shaping how staff research, draft, communicate, and handle sensitive information. The clearest contrast in the current picture is between organizations that have moved to formal governance and those that are still improvising. The Regional District of Nanaimo has adopted an internal AI governance policy, Nanaimo-Ladysmith Public Schools says a specific policy is still being developed, and the Gabriola Fire Protection Improvement District says it currently has no AI policy at all. Those differences matter because the risks are not abstract: they involve privacy, bias, embedded AI features in everyday software, and the possibility that data could leave Canada without residents fully understanding where it went.

The Gabriola case shows that local governments need a sharper distinction between intentional deployment and ambient AI exposure. If a district uses Microsoft 365, it is already in the AI conversation whether it likes it or not. The question is whether it has a policy framework robust enough to notice.

This matters because generative systems can be persuasive even when they are wrong. The risk is not only factual error; it is also tone, framing, and hidden bias. A tool can generate content that sounds neutral while reproducing assumptions from its training data or from the way the prompt was constructed. If a public body is not actively checking for those issues, it can inherit them silently. Bias does not always announce itself.

The RDN’s policy language is particularly strong here because it requires review for accuracy, bias, and tone. That is the kind of checklist that can actually be used by staff. It gives people a concrete framework instead of a vague warning. It also aligns with the reality that AI output is often better treated as a first draft than as finished work.

A good accountability model also helps protect staff. If expectations are clear, people are less likely to make accidental mistakes or to feel pressured into using tools unsafely. When rules are vague, employees either avoid useful technology altogether or use it quietly in ways managers cannot see. Neither outcome is ideal. Good policy should support responsible work, not punish common sense.

That means the most useful near-term development would be a shared local understanding of the basic guardrails. Public bodies do not need identical policies, but they do need a common baseline: no casual disclosure of sensitive data, no blind trust in outputs, clear human responsibility, and explicit review of embedded AI features inside ordinary software. If those principles become standard, the area can adopt useful tools without giving up privacy or legitimacy.

The bigger lesson is that AI policy in local government is becoming a test of institutional maturity. The bodies that move first are not necessarily the most tech-forward; they are the ones most willing to say that convenience must be balanced with control. In that sense, the Gabriola story is not just about AI. It is about whether small governments can stay careful while modernizing, and whether they can make room for useful automation without surrendering the judgment that public service requires.

Source: CHEK News A look at Gabriola local governments AI technology policies

Background

Artificial intelligence has moved from the edge of public administration into the center of it, often without a clean boundary between “AI tools” and ordinary workplace software. That is part of the challenge for local government, where staff may encounter AI in search, email, meeting notes, document drafting, transcription, and analytics platforms long before anyone formally approves its use. In practical terms, a council can ban consumer chatbots and still be using AI indirectly through a licensed productivity suite. That is why the conversation on Gabriola is less about novelty than about governance.

The Sounder’s reporting, referenced by CHEK News, framed the issue through a privacy lens: AI can process personal and private information, and that information may be entered into data servers outside Canada. That concern is especially acute in public-sector environments, where resident data may be linked to health, housing, employment, education, or other sensitive matters. Once a prompt or document enters a vendor system, the authority may lose some visibility into retention, reuse, and training exposure. That is the heart of the policy problem.

The Islands Trust angle also helps explain why this debate has gained traction. If local consultants or staff are using AI in ways an authority cannot fully track, then the gap is not just technical; it is institutional. Governments are no longer only deciding whether to “adopt AI.” They are deciding whether they can even reliably see where it is already embedded. That distinction matters because policy that arrives after use becomes widespread is usually harder to enforce.

Another reason the issue is accelerating is that vendors are building AI into products by default. Office suites, customer service systems, meeting tools, and email platforms now frequently include generative features that are switched on by licensing rather than by a separate AI project. That means a local government can be exposed to AI risk without ever holding a formal vote on “AI adoption.” In other words, silence is not neutrality.

The Gabriola examples show three different stages of public-sector maturity. The RDN has a written policy, staff guidance, and a limited pilot. NLPS is in policy-development mode and is relying on broader rules for now. The Fire District, meanwhile, is only beginning to discuss whether it even needs a policy. Those stages are useful because they show how local government usually evolves: first comes awareness, then informal use, then governance, and finally oversight.

Why AI policy is now a governance issue

AI governance is not really about technology alone. It is about accountability, records, privacy, procurement, and public trust. If a public body uses AI to draft correspondence, summarize meetings, or assist with data analysis, the institution must still know who is responsible for the final output and where the underlying data went. That is why local governments are increasingly treating AI policy as a corporate issue rather than an IT side project.

- AI features are now embedded in common workplace tools.

- Public bodies must protect personal information under law.

- Human review is needed because AI can be wrong, biased, or incomplete.

- Procurement decisions can create long-term vendor lock-in.

- Residents may not know when AI is being used on their data.

Regional District of Nanaimo: policy before improvisation

The Regional District of Nanaimo’s approach is the most developed of the three Gabriola-area bodies described in the CHEK/Sounder reporting. Chief Technology Officer Jason Birch said the district is not bound by B.C.’s provincial AI policy, but it does share an obligation to protect personal information under the B.C. Freedom of Information and Protection of Privacy Act. That legal framework is important because it makes privacy compliance a baseline duty rather than a discretionary preference. The district’s internal AI governance policy is designed to let staff explore AI while managing the risks.

Birch’s description makes the RDN sound less like a tech enthusiast and more like a cautious operator. Staff remain accountable for whatever they produce with AI assistance, and they are expected to review output for accuracy, bias, and tone. The policy also requires people to make sure sensitive information is not unintentionally disclosed. That matters because one of the easiest mistakes with generative AI is assuming the tool is private, when in fact the user may be exposing data to a third-party system or an embedded cloud service. Convenience is not confidentiality.

The district has also built process into the policy. Before approved AI tools are added to workflows involving sensitive personal information, there must be an additional review. New AI tools go through a cross-departmental governance committee. That structure is significant because it recognizes that AI risk is not confined to one department. A tool that looks harmless in communications may be problematic in social services, planning, or records management. Cross-functional review is therefore a strength, not a bureaucratic delay.

Microsoft Copilot and the practical reality of embedded AI

Birch said all RDN staff with computer access are provided a version of Microsoft Copilot Chat through existing licensing, and that it has been approved for uses consistent with policy. The district is also running a limited pilot of more advanced Copilot features with representative staff. That pilot is intended to identify benefits and risks before broader adoption, which is a measured way to avoid hype-driven procurement.

The reporting suggests the pilot has so far been positive, especially for structured research, meeting summaries, and data analysis. Those are exactly the kinds of tasks where AI can provide value without being the sole decision-maker. Still, Birch emphasized that gains are sometimes offset by the need to carefully review and correct errors. That is a crucial point: AI may accelerate drafting, but it does not remove the burden of verification. In some workflows, it simply moves the labor from first drafting to fact-checking and correction.

The RDN’s approach also surfaces a deeper public-sector lesson: AI tools can be useful and still not be “free” in operational terms. Even if a district already pays for Microsoft 365, there are still hidden costs in training, oversight, policy maintenance, and rework. A tool that appears to save time in a single task can create a larger review burden downstream. Speed without scrutiny is not efficiency.

What the RDN policy gets right

The RDN seems to have accepted a reality many public bodies still struggle with: AI is already present, so the job is to govern it, not pretend it can be wished away. That is a more durable approach than a blanket ban, which can push usage underground. It also gives the district a way to define acceptable use in advance rather than after a mistake.

- Staff remain responsible for reviewing AI output.

- Sensitive information is not casually fed into AI systems.

- New tools require governance review before workflow adoption.

- Limited pilots allow the district to learn before scaling.

- Microsoft Copilot is being used under policy, not informally.

- The district is treating bias and hallucination as real risks, not theoretical ones.

Privacy, data residency, and legal exposure

The privacy issue is the central fault line in every local-government AI discussion, and Gabriola is no exception. The Sounder’s concern about private information entering servers outside Canada reflects a broader public anxiety: residents want to know where their data goes, who can see it, and whether it might be reused in ways they never intended. In a government context, those questions are not just about corporate trust; they are about legal compliance and democratic legitimacy.

B.C.’s Freedom of Information and Protection of Privacy Act is the relevant legal backbone in the reporting. The RDN explicitly acknowledges that obligation, and NLPS says its existing policies are built around it. That is important because the legal standard is not “AI is useful,” but “information is handled as the law requires.” Once AI enters the picture, the risk shifts from local file storage to vendor infrastructure, cloud retention practices, and possible cross-border processing. The law does not get simpler when the software gets smarter.

Data residency is only one part of the challenge. There is also the problem of what staff actually paste into prompts. A well-meaning employee might use AI to summarize a complaint letter, rewrite a draft notice, or analyze a spreadsheet and inadvertently expose personal details. The user may think they are simply using a productivity tool, while in reality they are moving sensitive information into an environment that was never intended for casual disclosure. That is why policy language about “sensitive personal information” is so important.

Why hallucinations matter in government

Public-sector AI risk is not limited to privacy. Generative tools can produce confident but wrong answers, and in government that kind of error can be costly. A hallucinated legal reference, a distorted meeting summary, or a misleading analysis can ripple into public communication, administrative decisions, or policy advice. The RDN’s emphasis on accuracy, completeness, bias, and tone reflects a recognition that AI output cannot be treated as authoritative.

Hallucination is particularly dangerous because it often looks polished. A machine-generated answer may sound more coherent than a hurried human draft, which can create a false sense of reliability. That makes training essential. Staff need to know that a clean paragraph is not the same thing as a verified paragraph. Polished output is not proof.

There is also a reputational dimension. If a local government publishes AI-assisted text that turns out to be wrong, residents may not care that it came from a tool. They will care that the authority put it out under its name. In that sense, AI policy is really about protecting the credibility of public communication.

Key privacy and risk themes

- Personal information must be handled under existing privacy law.

- AI tools can introduce cross-border data exposure risks.

- Users may disclose sensitive data without realizing it.

- Hallucinations can create administrative and public trust problems.

- Accuracy review is not optional; it is part of governance.

- Data residency questions are now part of procurement, not just IT.

Nanaimo-Ladysmith Public Schools: policy in development

NLPS told the Sounder that a specific AI policy is still being developed. That places the district in a transitional stage: it is not ignoring AI, but it is not yet ready to regulate it through a dedicated framework. In the meantime, the district says Policy 305.3 on Personal Information and Policy 401.13 on Appropriate Use of School District Information Technology cover AI use. That is a pragmatic stopgap, but it also reveals the difficulty of fitting a rapidly evolving technology into older policy structures.

The personal information policy links back to the B.C. Freedom of Information and Protection of Privacy Act, which means AI does not get a special exception simply because it is new. The district’s approach appears to be that if a technology touches personal information, it must fit within the existing privacy framework. That is sensible, especially in a school environment where the stakes include student records, staff information, and family data. Schools are not casual data environments.

The IT use policy is equally important because it recognizes that technology changes faster than policy language. The policy’s own wording appears to acknowledge that the “entire range of possible uses” cannot be anticipated when a rule is written. That is a useful admission. Good policy is not about predicting every future use case; it is about setting clear principles that remain valid when the details change.

Why schools are especially sensitive

School districts are among the most sensitive public bodies when it comes to AI because they hold information about minors, families, learning needs, behavioral support, and special services. Even a seemingly harmless AI tool can become problematic if it is used to summarize a student file, rewrite a parent email, or process internal notes that should remain tightly controlled. The privacy stakes are simply higher than in many other parts of government.

There is also a trust issue. Parents and guardians expect schools to be careful and transparent. If a district is vague about how AI is used, it risks creating the impression that student information could be fed into a tool without full oversight. That is not a good place to be. The policy-development phase is therefore an opportunity to set expectations clearly before habits become fixed.

AI in schools also raises a fairness question. If teachers, administrators, or support staff use AI in uneven ways, the quality of communications and internal work products may vary from one school or department to another. That can produce inconsistent experiences for families. A district-wide policy helps reduce that fragmentation.

Policy development as a signal

NLPS’s answer does not mean the district is behind; it means the district understands that rushing a policy can be worse than taking time to draft one properly. Still, the interim reliance on broader IT and privacy policies suggests that staff need clarity now, not someday. If AI use is happening already, the absence of a dedicated framework can create uncertainty in day-to-day work.

- A specific AI policy is still under development.

- Existing privacy and IT policies are being used as a bridge.

- Student and family information make school AI use especially sensitive.

- Policy language needs to reflect rapid technological change.

- Clear guidance now can prevent inconsistent practices later.

- The district is implicitly treating AI as a privacy issue first.

Gabriola Fire Protection Improvement District: no formal AI policy yet

The Gabriola Fire Protection Improvement District appears to be at the earliest stage of the policy cycle. According to the reporting, it currently has no policy to guide trustees or staff on generative AI use. That absence was raised at the April 8, 2026 general meeting, which means the issue is now on the board’s agenda rather than remaining an unspoken assumption. In local government, that first acknowledgement often matters as much as the policy itself.

Chair Erik Johnson’s comments were notably skeptical. He said he has “a real problem with AI,” describing it as a tool that can be useful for research but one that must be checked at all times. He also warned that AI can make decisions that it should not make, including moral decisions. That view is not unusual among public officials who are wary of automation, especially in bodies that are small, volunteer-heavy, or operationally focused. Skepticism is often the first stage of governance.

Trustee David Chorneyko confirmed that there is no AI policy and said it is something the district needs to work on, but that other priorities are currently pressing. That is a common institutional pattern: policy development competes with operational needs, and the most urgent files tend to win the calendar. Trustee Diana Moher suggested the issue could be added to the work of the policy committee, especially as governments begin to release recommendations. That is a reasonable route because it moves the matter from informal opinion to a structured review process.

The reality of built-in AI in everyday software

Trustee Wayne Mercier’s remarks reveal a practical complication that many smaller public bodies now face: even if you do not intentionally use generative AI, the software you already pay for may contain it. He said the Improvement District uses Microsoft 365, which includes tools such as Copilot that can insert themselves into email composition and suggest wording. That means a blanket claim that the district “never uses AI” would be inaccurate, because embedded features are already part of the environment.

This is a good example of why AI policy has to distinguish between intentional use and incidental exposure. A grammar suggestion or auto-complete feature may not be the same as asking a chatbot to write a bylaw, but both sit on the same spectrum of machine assistance. The district may not be consciously using AI for external communications, yet it still cannot fully dissociate itself from the technology because it is built into the platform. The tool stack is part of the policy stack.

Trustee Moher’s comment that the practice is not to prompt AI to “write me an email” also shows an emerging internal norm: use AI lightly, if at all, and do not outsource official voice to it. That is a sensible instinct, but instincts are not the same as rules. The district will eventually need to decide whether to formalize that norm or continue relying on case-by-case judgment.

Why a small district still needs a policy

Small organizations sometimes assume AI policy is for larger governments with bigger IT teams, but that is exactly where assumptions become dangerous. Smaller bodies may have fewer staff, less formal training, and fewer layers of review, which can make them more vulnerable to casual AI use. If anything, that makes written guidance more important, not less.

- The district currently has no generative AI policy.

- Board members are openly divided or cautious about AI.

- Microsoft 365 may already expose the district to embedded AI features.

- Informal norms are not a substitute for written rules.

- The policy committee may be the right place to start.

- Small bodies often need clearer guardrails because they have fewer oversight layers.

The embedded AI problem

One of the most important lessons from the Gabriola reporting is that AI governance is no longer just about chatbot apps. It is about the hidden layer of machine assistance built into the software people use every day. Microsoft 365, for example, can include Copilot features that help draft text, suggest wording, summarize content, or assist with analysis. That means a government office may be using AI simply by logging into its normal productivity environment.

This creates a tricky compliance problem because staff can cross an AI boundary without consciously deciding to do so. That is especially true for smaller districts or schools that rely on commercially bundled software. If the feature is embedded in email, documents, or chat, then a policy that only bans standalone AI tools is incomplete. Invisible AI is still AI.

It also changes the training burden. Staff cannot be trained only on “approved” and “unapproved” apps; they need to understand where AI may appear inside approved software. That includes content suggestions, summarization tools, transcription features, and search assistants. Without that awareness, employees may assume they are working in a normal office suite when the software is quietly adding generative functions behind the scenes.

Procurement is now governance

A lot of public discussion treats AI policy as a matter of user behavior, but procurement may be even more important. Once an organization buys software with AI features, the governance problem is partially outsourced to the vendor. The public body then has to ask not only whether the feature is useful, but where the data goes, whether it is retained, whether it can be used to train models, and whether staff can opt out.

That is why policies like the RDN’s are more than internal etiquette. They are procurement filters. They tell staff and managers what kinds of tools are acceptable, what review is required, and when new uses must be escalated. Without those filters, organizations can drift into vendor dependence without ever really deciding to do so.

The Gabriola case shows that local governments need a sharper distinction between intentional deployment and ambient AI exposure. If a district uses Microsoft 365, it is already in the AI conversation whether it likes it or not. The question is whether it has a policy framework robust enough to notice.

Practical implications

- Embedded AI features can exist inside standard office software.

- Staff may use AI without realizing they have crossed a policy line.

- Training must cover the whole software environment, not just chatbots.

- Procurement terms matter as much as user instructions.

- Data handling and retention must be understood before rollout.

- “We did not buy an AI tool” is no longer a reliable defense.

Human oversight, bias, and accountability

The biggest theme running through the Gabriola reporting is not enthusiasm for AI, but the insistence that humans remain responsible for what AI produces. That point appears explicitly in the RDN’s policy and implicitly in the cautionary comments from the Fire District. In public service, that is the right instinct. AI can assist with drafting, summarizing, and analysis, but it should not be the final authority on records, advice, or decisions.

This matters because generative systems can be persuasive even when they are wrong. The risk is not only factual error; it is also tone, framing, and hidden bias. A tool can generate content that sounds neutral while reproducing assumptions from its training data or from the way the prompt was constructed. If a public body is not actively checking for those issues, it can inherit them silently. Bias does not always announce itself.

The RDN’s policy language is particularly strong here because it requires review for accuracy, bias, and tone. That is the kind of checklist that can actually be used by staff. It gives people a concrete framework instead of a vague warning. It also aligns with the reality that AI output is often better treated as a first draft than as finished work.

What accountability should look like

Accountability in a government setting means a named person, a named process, and a clear record of review. If a staff member uses AI, that use should not disappear into the machine. The human user remains accountable for the final output, and supervisors should understand when AI-assisted work is entering sensitive workflows. That is especially important when AI is used for correspondence, internal summaries, or public-facing drafting.

A good accountability model also helps protect staff. If expectations are clear, people are less likely to make accidental mistakes or to feel pressured into using tools unsafely. When rules are vague, employees either avoid useful technology altogether or use it quietly in ways managers cannot see. Neither outcome is ideal. Good policy should support responsible work, not punish common sense.

Core accountability principles

- Humans, not tools, own the final decision.

- AI output must be reviewed for accuracy and bias.

- Sensitive information should not be casually exposed to vendors.

- Supervisors need visibility into new AI use cases.

- Auditability is part of public-sector legitimacy.

- Staff should not be blamed for using tools the organization failed to govern.

Strengths and Opportunities

The current Gabriola-area picture is not a story of uniform adoption or uniform resistance. It is a story of institutions moving at different speeds, with the RDN already formalizing controls, NLPS building a policy, and the Fire District still debating the issue. That diversity is useful because it shows what early-stage AI governance looks like in real life: uneven, cautious, and shaped by the type of service each body provides.

- The RDN has already moved from concern to governance.

- Staff can use AI in controlled ways without hiding it.

- Limited pilots create real-world learning before broad rollout.

- NLPS has a chance to build a school-appropriate policy before bad habits harden.

- The Fire District can still create a simple, practical framework before usage becomes routine.

- All three bodies can learn from the privacy and accountability concerns already identified.

- Embedded AI awareness could improve procurement discipline across the board.

Risks and Concerns

The biggest danger is not flashy misuse; it is casual, everyday slippage. A staff member pastes sensitive details into a prompt. A built-in AI assistant drafts a message that is never properly checked. A small board assumes its software is “just office software” when it is quietly generating content behind the scenes. These are exactly the kinds of low-visibility risks that become serious only after a mistake is made.

- Sensitive information may be entered into systems outside Canada.

- Users may trust hallucinated or incomplete output.

- Bias can enter quietly through model behavior or prompting.

- Small organizations may lack the training to spot misuse.

- Embedded AI features can evade simple policy bans.

- Vendor terms may shift faster than local policy updates.

- Informal norms may fail once staff workloads increase.

Looking Ahead

The next step for Gabriola’s local governments is not to decide whether AI exists. It clearly does. The real question is which bodies will govern it proactively and which will continue to rely on ad hoc judgment. The RDN has already established a template: policy, review, pilot, and accountability. NLPS is in the middle of translating broad privacy rules into a dedicated AI framework. The Fire District is just starting to confront the issue as a policy matter rather than a personal opinion.

That means the most useful near-term development would be a shared local understanding of the basic guardrails. Public bodies do not need identical policies, but they do need a common baseline: no casual disclosure of sensitive data, no blind trust in outputs, clear human responsibility, and explicit review of embedded AI features inside ordinary software. If those principles become standard, the area can adopt useful tools without giving up privacy or legitimacy.

The bigger lesson is that AI policy in local government is becoming a test of institutional maturity. The bodies that move first are not necessarily the most tech-forward; they are the ones most willing to say that convenience must be balanced with control. In that sense, the Gabriola story is not just about AI. It is about whether small governments can stay careful while modernizing, and whether they can make room for useful automation without surrendering the judgment that public service requires.

- Watch for NLPS to release a dedicated AI policy.

- Watch for the Fire District to bring AI onto its policy committee agenda.

- Watch for the RDN’s pilot results to shape future adoption.

- Watch for guidance on embedded AI in Microsoft 365 and similar platforms.

- Watch for privacy language to become more explicit in procurement and workflow rules.

Source: CHEK News, “A look at Gabriola local governments AI technology policies”
