Microsoft’s explanation for the GitHub Copilot pull request ad controversy lands somewhere between a technical correction and a reputational cleanup. What looked to many developers like a new monetization layer inside pull requests is now being framed by the company as a programming logic issue that caused product tips to surface in the wrong place, at the wrong frequency, and with the wrong optics. The company says the feature has been removed from pull requests entirely, which makes this less a temporary bug fix than a hard retreat from a design choice that clearly crossed a trust boundary.

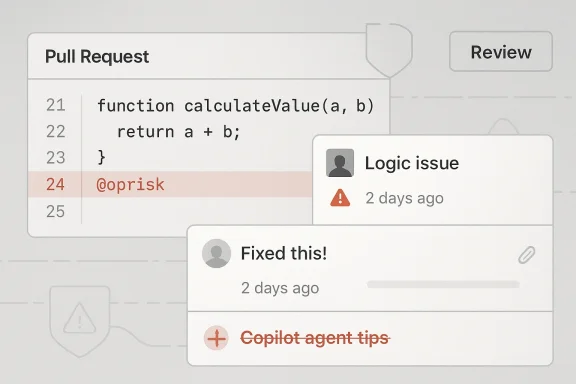

Overview

The incident matters because pull requests are not just another surface in GitHub; they are one of the most sensitive parts of the software development workflow. A PR is where engineers review code, debate architecture, validate behavior, and decide whether changes should ship. When promotional content appears there, even if it is framed as a tip or suggestion, it can feel like a breach of the workflow’s neutrality.

That perception is especially damaging for GitHub Copilot, which has spent years trying to position itself as an assistant rather than an attention channel. Copilot’s value proposition depends on staying out of the way and helping developers move faster. Any hint that it is also being used to distribute marketing messages risks undermining the very trust that makes people willing to let it touch code, reviews, and repository context.

Microsoft’s messaging suggests the company believes the problem was not an ad sales arrangement but a product surface that expanded too aggressively after a March 24 change. According to the clarification, a third-party link was displayed in a way that could be interpreted as promotion, and the “Copilot agent tips” surfaced more frequently than intended alongside other suggestions. That distinction matters, but only up to a point; from a user’s perspective, the outcome still looked like advertising inside developer tooling.

The controversy also arrives at a moment when GitHub is pushing Copilot deeper into the platform. Recent GitHub updates have expanded Copilot’s role in pull requests, coding agent workflows, titles, code review, and issue-driven automation, making the PR experience a high-traffic place for AI-generated guidance. In that context, even a small logic bug can feel larger than it is, because it touches a workflow many teams now rely on daily.

Background

GitHub Copilot began as an inline code completion tool, but it has steadily grown into a broader agentic assistant. GitHub’s recent product cadence shows that the company wants Copilot to handle more of the work around software delivery, not just typing assistance. That includes pull request titles, pull request comments, coding agent handoffs, and broader review workflows.

This expansion is strategically logical. If Copilot can help create a PR, name it, summarize it, and respond to review feedback, then the tool becomes embedded in the developer lifecycle rather than hovering at the edge of it. GitHub has been marketing that deeper integration as a productivity story, with the added benefit of keeping users inside its ecosystem for longer stretches of time.

But deeper integration also raises the stakes of any interface decision. Developers are highly sensitive to anything that interrupts code review or makes workflow outputs feel commercialized. That sensitivity is not irrational; teams often use PRs to coordinate production changes, security fixes, and release approvals, so they expect those surfaces to be governed by utility, not promotion.

The new controversy appears to have been triggered by a March 24 change that expanded Copilot capabilities. Microsoft’s explanation suggests that a logic path responsible for surfacing product tips also introduced a link to a third-party product partner in a way that was too visible and too repetitive. In effect, what was meant to be a contextual hint became a distribution mechanism that looked like advertising.

That distinction between intent and presentation is central to the fallout. Even if no formal ad buy existed, the audience saw something that resembled paid placement. In consumer software, that might be dismissed as sloppy UI copy. In developer infrastructure, it reads more like a trust violation because the line between tooling and messaging is supposed to be firm.

The timing also made the situation worse. GitHub has been under heightened scrutiny due to recent product changes around Copilot usage policies and platform behavior. The company is in the middle of a broader push to monetize AI features more deeply while also promising greater utility and control, which means any misstep gets interpreted through a wider lens of commercialization.

What Microsoft Says Happened

Microsoft’s public framing is straightforward: this was not an ad initiative but a programming logic issue. In that version of events, the code responsible for showing “product tips” misfired and caused a third-party suggestion to appear in pull requests where it did not belong, and with a frequency that made it look intentional. The company says it has removed the Copilot agent tips from all pull requests moving forward.

The key distinction: bug versus product strategy

That explanation matters because it determines how users judge the company’s intent. A bug is embarrassing; a strategy to place ads in PRs would be a much bigger breach. Microsoft is clearly trying to land on the safer side of that divide by calling the issue accidental and by emphasizing that the tips are gone, not merely paused.

Still, accidental does not mean harmless. Product bugs that affect trust surfaces are often remembered more for their impact than their root cause. If developers think a company is willing to test new “tips” inside their review workflow, they may begin to assume future changes are also experiments waiting to happen.

The company also says the highlighted partner was Raycast, and that there were no formal ad arrangements. That is an important clarification, but it does not eliminate the optics problem. A third-party link inside a PR still looks promotional whether it was paid for or not, especially when users encounter it repeatedly.

Why “logic issue” is not a full defense

A logic error explains the mechanics, not the reaction. If a UI path causes marketing-adjacent content to appear in a sensitive location, the product design itself is part of the problem. The underlying lesson is that engineers and platform operators do not separate code paths from communication strategy the way legal teams might.

That is why the apology will likely only partially reset the narrative. Microsoft may have eliminated the immediate issue, but the broader question remains: why did Copilot have a mechanism to surface such tips in PRs at all? Once users ask that question, the answer has to be about product governance, not just a bad line of code.

The decision to remove the tips “moving forward” is also notable because it implies a permanent fix, not a temporary rollback. That suggests the company has judged the feature to be too costly in trust terms to keep, even if it could be reworked technically. In other words, Microsoft appears to be accepting that the optics were irreparable in this form.

- The issue was framed as an error in logic, not a paid placement program.

- Microsoft says no ad deals were made with partners like Raycast.

- The company claims the tips surfaced more often than intended.

- Copilot agent tips have been removed from PRs entirely.

- The cleanup is as much about trust repair as about debugging.
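
The three failure modes in Microsoft’s account (wrong place, wrong frequency, and a third-party link) correspond to three separate checks that any tip-surfacing path would need. The sketch below is purely illustrative: `TipGate`, `ALLOWED_SURFACES`, `MAX_TIPS_PER_SESSION`, and the surface names are hypothetical and say nothing about how GitHub’s code is actually written.

```python
from dataclasses import dataclass, field

# Hypothetical values for illustration only; not GitHub's real configuration.
ALLOWED_SURFACES = {"ide_chat", "docs_sidebar"}  # assumed allowlist; pull requests are absent
MAX_TIPS_PER_SESSION = 1                          # assumed frequency cap


@dataclass
class TipGate:
    """Decides whether a product tip may be shown on a given surface."""

    shown: dict = field(default_factory=dict)  # session id -> tips shown so far

    def should_show(self, surface: str, session_id: str, third_party: bool) -> bool:
        # Placement: a surface must be explicitly opted in (default deny).
        if surface not in ALLOWED_SURFACES:
            return False
        # Optics: tips that link to a third-party product are never shown.
        if third_party:
            return False
        # Frequency: cap how many tips a single session can see.
        if self.shown.get(session_id, 0) >= MAX_TIPS_PER_SESSION:
            return False
        self.shown[session_id] = self.shown.get(session_id, 0) + 1
        return True


gate = TipGate()
print(gate.should_show("pull_request", "s1", third_party=False))  # surface not allowlisted
print(gate.should_show("ide_chat", "s1", third_party=False))      # first tip in the session
print(gate.should_show("ide_chat", "s1", third_party=False))      # cap already reached
```

The default-deny allowlist is the design choice that matters here: a new surface gets no tips until someone deliberately opts it in, so a logic slip elsewhere cannot silently extend tips into a sensitive workflow.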

Why Pull Requests Are a Sensitive Surface

Pull requests are not casual UI real estate. They are the operational nerve center where teams review code, discuss changes, and decide whether software is ready to merge. Any content injected into that flow has to justify itself in terms of utility, relevance, and reliability.

A PR is also where cognitive load matters most. Reviewers are already parsing diff noise, test results, comments, and status checks, so even a small promotional element can feel intrusive. That is especially true when the content is not directly tied to the code under review.

The psychology of developer trust

Developer trust is fragile because it is earned by predictability. Tools that behave consistently and stay focused on the task become invisible in the best possible way. Tools that interrupt workflow, especially with messages that seem commercial, are remembered quickly and negatively.

This is why the backlash was more severe than it might have been in another product. In a social app, people may tolerate sponsored content because they expect it. In an engineering workflow, the expectation is nearly the opposite: the interface should be clean, deterministic, and free of promotional noise.

There is also a governance issue here. Teams often make policy decisions about which integrations are allowed in source control, code review, and CI/CD surfaces. If a vendor starts surfacing product suggestions inside those workflows without strong signaling, it can complicate internal compliance and procurement reviews.

The broader pattern of AI tool expansion

The incident is part of a larger pattern in AI product design: helpful features often arrive adjacent to monetization opportunities. A recommendation can become a promotion, a tip can become an upsell, and a contextual suggestion can become a new channel for product discovery. That is not automatically bad, but it must be handled with extreme care in professional tools.

GitHub’s recent push to expand Copilot into more surfaces shows how quickly this boundary can blur. When a product becomes embedded in coding, review, issue triage, and automation, every new suggestion has to be tested not only for relevance but also for perceived motive. That is a harder bar than “does this work?”

- Pull requests are high-trust, low-tolerance interfaces.

- Developers expect utility over persuasion.

- Even unpaid product suggestions can feel like ads.

- AI agents increase the risk of context drift.

- Trust damage can outlast the bug itself.

Copilot’s Expanding Role in GitHub

The timing of the controversy is particularly awkward because GitHub has been shipping more Copilot features around pull requests, not fewer. Recent changelog entries show that Copilot can now generate PR titles, respond to @copilot comments, and operate as a coding agent that can create or modify pull requests.

From assistant to workflow actor

That shift is important because it changes user expectations. When Copilot becomes an active participant in the PR process, it is no longer just a suggestion engine. It becomes part of the review loop, which means its outputs need to be held to the same standards as human contributions.

This transition also amplifies mistakes. A single misrouted tip is no longer an isolated UI blemish; it is a signal about the integrity of a system that is increasingly trusted to perform semi-autonomous work. In practical terms, the more Copilot does, the more every edge case matters.

GitHub has been presenting this evolution as a productivity gain. Its own messaging emphasizes faster startup times for coding agent work, better PR title generation, and tighter integration with comment-driven workflows. That is a coherent strategy, but it also raises the risk of overexposure if the product experiments too aggressively in visible surfaces.

Why the optics matter more now

A year ago, a product tip inside a developer tool might have been dismissed as an oddity. Today, with AI assistants increasingly acting on behalf of users, such content can be interpreted as part of an agent’s “agenda.” That makes the line between helpful guidance and hidden promotion even more consequential.

It also affects enterprise adoption. Enterprises tend to treat Copilot through a lens of governance, predictability, and data handling. GitHub’s recent policy work shows that the company is still refining how Copilot fits into broader business rules, which means any trust issue in PRs can echo beyond individual developers.

In consumer usage, the damage may be annoyance. In enterprise usage, the damage can become policy skepticism. Once security, legal, or procurement teams think a workflow surface can be used for promotion, they may become more conservative about enabling new AI features. That could slow adoption even when the product itself remains technically strong.

- Copilot is moving from assistant to workflow actor.

- Every new Copilot surface increases the impact of mistakes.

- PRs are now a core part of the agentic AI story.

- Enterprises care as much about governance as convenience.

- A small UI issue can influence adoption policy.

Competitive Implications

The immediate competitive impact is reputational, but the longer-term stakes are strategic. GitHub Copilot competes not only with other coding assistants but also with the broader class of AI developer tools that promise cleaner workflows and less vendor noise. If developers begin to associate Copilot with unwanted promotion, rivals get an opening.

Rivals will use trust as a feature

Competitive AI tooling is increasingly differentiated by tone, control, and transparency. The next wave of buyers is not just asking whether an assistant can write code; they are asking how much it is allowed to touch, where it draws data from, and whether it stays out of sensitive surfaces. That makes “no ads in PRs” a meaningful product promise, even if it sounds obvious.

Microsoft’s challenge is that GitHub is both a platform and a product brand. When one side of the house makes a mistake, the other side absorbs the perception hit. Competitors can frame that as evidence that their own assistants are more developer-centric or less commercially intrusive.

This kind of argument can be subtle but effective. A rival does not need to say Copilot is unsafe; it only needs to suggest that its own tool is more respectful of the development environment. In a category where developers compare tools on trust as much as capability, that message can carry weight.

The ecosystem question

There is also an ecosystem implication. GitHub’s partner integrations, extension model, and AI ecosystem all depend on the platform feeling open without feeling opportunistic. If product tips are mistaken for ads, that can complicate how users interpret other partner-driven features, even legitimate ones.

That is especially true for features that connect Copilot to third-party tools, automation platforms, or productivity apps. A mention of a partner may now trigger more suspicion than it would have before. In that sense, the incident may create a chilling effect around future ecosystem placements unless GitHub becomes far more explicit about labeling and intent.

- Competitors can pitch cleaner trust boundaries.

- GitHub’s platform brand absorbs the reputational spillover.

- Partner features may face extra skepticism.

- The incident may make “no ads” a differentiator.

- Transparency becomes a sales advantage.

Enterprise Versus Consumer Impact

For individual developers, the main consequence is annoyance and diminished goodwill. For enterprises, the issue is more serious because PR surfaces are tied to collaboration policies, internal approvals, and software delivery governance. A promotional-looking element in such a surface can prompt compliance questions even when it is technically harmless.

Consumer users want restraint

Consumer-facing developers often tolerate occasional weirdness if the tool is useful. But they also form opinions quickly on social media, where the framing of “Microsoft injected ads into PRs” spreads faster than any nuanced clarification. That means the story can harden before the company has a chance to explain its intent.

The consumer side is also where brand sentiment moves fastest. If Copilot feels less like a helper and more like a marketing surface, users may disable prompts, reduce reliance, or simply become more skeptical of future features. That is not catastrophic, but it is costly over time.

Enterprise buyers want controls

Enterprises, by contrast, will care about policy containment. They want assurance that one feature area cannot unexpectedly become a channel for product promotion, data usage changes, or workflow surprises. GitHub’s recent policy updates show that the company is already making changes that affect free, Pro, and Pro+ users differently than business accounts, which only increases the need for crisp boundaries.

That means procurement, IT, and security teams may ask harder questions about where Copilot can surface tips, how they are labeled, and whether they can be disabled by policy. If GitHub wants enterprise confidence, it will need to prove that developer productivity and platform monetization are cleanly separated. Otherwise, the trust tax will show up in slower rollouts and tighter restrictions.

- Consumers react to annoyance and optics.

- Enterprises react to policy, control, and auditability.

- A bad UI decision can trigger security-style scrutiny.

- Clear labeling is now part of enterprise readiness.

- The issue may influence deployment policies.

Strengths and Opportunities

Even with this stumble, GitHub still has meaningful strengths. Copilot remains deeply integrated across one of the world’s most important developer platforms, and that distribution advantage is hard to replicate. The company also has a chance to turn this episode into a lesson in restraint, clarity, and better UX governance.

The best outcome would be a Copilot experience that feels more precise, not more promotional. If Microsoft uses this moment to sharpen feature boundaries, improve labeling, and reduce noisy suggestions, it can strengthen the product long term. The controversy may even force a healthier design philosophy.

- Massive installed base inside GitHub workflows.

- Strong brand recognition among developers.

- Opportunity to improve UI transparency.

- Chance to reaffirm that Copilot is a utility, not a sales channel.

- Ability to learn from feedback quickly because the product is always online.

- Deeper AI integration can still drive genuine productivity gains.

- A clean rollback shows the company can respond fast when trust is at risk.

Risks and Concerns

The biggest risk is that this incident becomes shorthand for a larger pattern: Copilot as a product that sometimes overreaches. Even if that interpretation is unfair, perceptions matter, and perceptions harden quickly in developer communities. Once trust erodes, every future feature gets examined with suspicion.

There is also the risk of feature hesitation inside the company. If the reaction to this mistake is overcorrection, GitHub may become too cautious about useful contextual guidance. That would be a shame, because not every product tip is bad; the real challenge is distinguishing helpful assistance from anything that resembles promotion.

- The story may become a trust narrative rather than a bug report.

- Future features may be judged more harshly.

- Overcorrection could slow useful AI innovation.

- Partner integrations may face lingering skepticism.

- Enterprises may demand more controls before enabling new Copilot surfaces.

- Confusion between tips and ads could recur if labeling is weak.

- Developer goodwill is easy to lose and slow to rebuild.

What to Watch Next

The immediate question is whether GitHub makes any further product changes to clarify where Copilot suggestions can appear and how they are labeled. A permanent removal from pull requests is a strong response, but it may not be the final word if users continue to push for more transparency. The company will likely need to show that it has closed the door on this specific behavior, not just renamed it.

It will also be worth watching whether GitHub revisits other Copilot surfaces for similar issues. When products expand quickly, adjacent workflows often inherit the same design assumptions. A fix in pull requests does not automatically eliminate the risk elsewhere in the platform.

Key developments to monitor

- Whether GitHub publishes a more detailed explanation of the March 24 change.

- Whether other Copilot surfaces receive new labeling or disclosure language.

- Whether enterprise admins are given more control over product-tip behavior.

- Whether GitHub’s future changelogs emphasize non-promotional framing for assistant features.

- Whether competitors use the episode to sharpen their own trust-focused messaging.

GitHub can recover from this, but only if it treats the issue as more than a bug. The company has to show that Copilot’s value comes from helping developers ship better software, not from discovering new ways to nudge them toward adjacent products. In a market this crowded and this trust-sensitive, that distinction is not cosmetic; it is the business.

Source: Neowin, “GitHub Copilot ads in PRs were due to a ‘programming logic issue’, claims Microsoft”