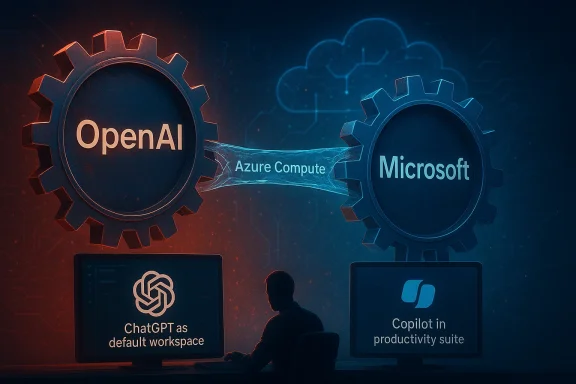

Behind OpenAI’s meteoric rise in generative AI, one strategic vulnerability stands out more than any model benchmark or product launch: the company is still deeply tied to Microsoft for capital, cloud capacity, and operational leverage. That dependence is increasingly awkward because the two firms are no longer just allies; they are now competing for the same AI workloads, the same enterprise customers, and the same future control points in productivity software. The result is a relationship that still looks mutually beneficial on paper, but increasingly resembles a high-stakes balancing act in which both sides are quietly preparing for a future with less reliance on the other.

Overview

OpenAI’s reported investor materials, as reflected in recent coverage, frame Microsoft dependence as a material business risk rather than a routine partnership issue. The concern is not simply that Microsoft is a large customer or investor; it is that Microsoft remains embedded in OpenAI’s most critical operating layers, from financing to compute to distribution. That makes the relationship foundational, but also brittle, because any change in terms could ripple through OpenAI’s business model almost immediately.

This matters more now than it did two years ago because OpenAI is no longer a single-product phenomenon. It is building ChatGPT into a broader desktop and workflow layer, while also pushing deeper into enterprise collaboration and developer tooling. The company’s ambition is shifting from “best chatbot” to “default interface for AI-native work,” and that kind of platform play requires more independence, more infrastructure optionality, and more control over the customer relationship.

Microsoft, meanwhile, appears to be working to reduce its reliance on OpenAI. Internal AI model work, including new Microsoft-branded model efforts, suggests Redmond wants more leverage over the stack rather than acting primarily as OpenAI’s distribution and cloud backbone. In other words, both companies are diversifying for the same reason: neither wants to be structurally trapped by the other.

The irony is that the closer the two firms move to the center of the AI economy, the more their partnership begins to look like competitive coexistence. Microsoft still gains from OpenAI’s success, but it also risks losing strategic uniqueness if OpenAI captures the primary user interface for AI work. OpenAI still needs Microsoft’s scale, but it also needs room to grow beyond a single-cloud, single-partner identity. That is what makes the current moment so delicate.

The Microsoft Dependency Problem

OpenAI’s dependence on Microsoft is not a side issue; it is part of the company’s operating model. Microsoft has invested heavily, supported OpenAI’s compute needs through Azure, and helped turn ChatGPT from a breakthrough demo into a global product. That support has been essential, but it also means OpenAI’s runway, margins, and resilience are tied to a partner that increasingly has its own AI ambitions.

The core risk is leverage. If one company controls the compute lifeline, it can influence pricing, deployment timing, and long-term negotiating power. OpenAI’s investor disclosures reportedly acknowledge that any disruption in Microsoft cooperation could materially affect financial condition and business prospects. That is exactly the kind of language investors notice, because it translates a strategic partnership into a concentration risk.

Why concentration risk matters

In AI, infrastructure is not just a backend detail. It shapes how fast models can be trained, how products can be served, and how aggressively a company can scale into new workloads. If a company like OpenAI grows too quickly on top of a single provider, the provider effectively becomes part of the business architecture, not merely a vendor.

That is why diversification is so important. OpenAI has reportedly been expanding relationships with other infrastructure players, including Oracle and others, broadening its cloud options in parallel with Microsoft. Even if those deals are not yet enough to eliminate dependence, they signal a strategic desire to reduce single-point-of-failure exposure.

- Capital dependence can limit strategic freedom.

- Cloud dependence can constrain pricing power.

- Compute dependence can slow product expansion.

- Partner dependence can weaken negotiations.

- Concentration risk becomes more serious as scale increases.

Why the Relationship Is Changing

The OpenAI-Microsoft relationship worked best when the two companies had clearly separated roles: OpenAI made the models and Microsoft supplied the cloud, capital, and enterprise channel. That arrangement was efficient because it let each side focus on what it did best. But AI is now moving up the stack, and the most valuable layer is no longer just model access; it is workflow ownership.

Once OpenAI started building more of the product surface itself, the partnership stopped being purely complementary. ChatGPT is now more than an API consumer; it is a branded workspace, a collaboration environment, and a potential front door to knowledge work. That means OpenAI and Microsoft are increasingly fighting over who owns the daily user habit, not just who owns the compute bill.

The shift from model supplier to platform contender

This is the most important strategic pivot. OpenAI is no longer merely a model supplier feeding other products; it is trying to become the place where work begins. That puts it in direct tension with Microsoft, whose Copilot strategy depends on being embedded in Microsoft 365, Teams, and other productivity surfaces.

In practical terms, that means the companies are competing both above and below the infrastructure layer. Below the layer, they still need each other. Above the layer, they are increasingly rivals. That is a classic platform tension, and it rarely stays tidy for long.

- OpenAI wants to own the interface.

- Microsoft wants to own the workspace.

- Both want the customer relationship.

- Both need the same compute economy.

- Both are hedging against overdependence.

The Infrastructure Diversification Play

OpenAI’s reported move toward other cloud partners is not just about bargaining power; it is about operational continuity. In a compute-hungry AI market, the ability to shift workloads across providers can become a survival trait. A company that can route demand flexibly is much harder to corner than one that is tied to a single capacity provider.

That strategy also changes investor perception. A company preparing for a potential public market debut needs to show that its supply chain is resilient, not just innovative. The more OpenAI can prove that it has options beyond Microsoft, the easier it becomes to argue that its growth is scalable, durable, and not hostage to one partner’s roadmap.

Cloud diversification as leverage

Diversification is often framed as insurance, but in OpenAI’s case it is also leverage. If the company can credibly say it has multiple cloud options, then no single provider can dictate terms as easily. That does not end the Microsoft relationship, but it changes the psychology of the negotiation.

It also helps OpenAI address the realities of massive compute demand. Analysts and reported coverage point to extraordinary infrastructure costs by 2030, which underscores how expensive frontier AI really is. In that environment, multi-cloud economics are less a luxury than a necessity.

- Risk reduction through provider diversity.

- Negotiation leverage against dominant partners.

- Capacity flexibility during demand spikes.

- Resilience against outages and policy changes.

- Market signaling ahead of IPO or fundraising events.

The Competitive Collision With Microsoft

The relationship became more complicated the moment Microsoft started competing more directly with OpenAI in public-facing AI. Microsoft’s own Copilot stack now occupies many of the same user moments ChatGPT wants to own, especially inside productivity software and enterprise workflows. That overlap creates a strategic contradiction: Microsoft wants OpenAI to succeed, but not so much that OpenAI becomes the primary destination for work.

Microsoft’s strength is that it is already embedded where the work happens. Teams, Microsoft 365, Windows, and Azure give it a distribution advantage that most rivals would envy. But OpenAI’s advantage is that it can present a cleaner, more unified AI experience that may feel more natural than Microsoft’s layered ecosystem. That makes the competition feel less like product versus product and more like philosophy versus philosophy.

Copilot versus ChatGPT as strategic defaults

Copilot is strongest when it is invisible and ambient inside Microsoft software. ChatGPT is strongest when it becomes the starting point for research, drafting, coding, and collaboration. Those are not identical use cases, but they fight for the same attention, especially among knowledge workers.

This matters because default behavior drives long-term platform value. If users begin work in ChatGPT, Microsoft’s productivity stack becomes a downstream destination. If they begin work in Copilot, OpenAI becomes a supplier rather than a destination. That is the real battlefield, and it is far more consequential than any single model release.

- ChatGPT wants to be the first tab.

- Copilot wants to be the embedded assistant.

- ChatGPT favors workflow breadth.

- Copilot favors suite integration.

- The winner owns the habit loop.

The IPO and Valuation Angle

The reported possibility of a future listing changes the entire discussion. Public-market investors do not just buy growth; they buy narrative, control, and risk clarity. If OpenAI goes public or even signals that path more clearly, the Microsoft dependency issue will become a central underwriting question rather than a footnote.

A highly valued AI company needs to show it can scale without hidden fragility. That means investors will ask whether Microsoft remains a benign strategic partner or a structural bottleneck. They will also ask whether OpenAI can control its economics well enough to justify a premium valuation while facing massive compute costs and intense competitive pressure.

What public investors will care about

Wall Street will likely focus on a few key questions. How concentrated are OpenAI’s infrastructure commitments? How much bargaining power does it really have with Microsoft? Can it monetize consumer demand without giving away too much margin to cloud providers and model costs? Those questions are not abstract; they go straight to valuation durability.

The legal and operational environment also matters. OpenAI is navigating lawsuits, governance scrutiny, and public attention around its founders and structure. In a private-company world, those issues are manageable as long as capital remains abundant. In a public-company world, they become part of the risk premium.

- Investors want predictable compute access.

- Investors want cleaner governance.

- Public markets punish dependency risk.

- Any IPO would magnify partner conflicts.

- Margin pressure will remain a major concern.

Legal, Operational, and Regulatory Pressure

The dependency story cannot be separated from OpenAI’s broader risk profile. Investor materials reportedly point to legal disputes, including conflicts involving Elon Musk, and to other operational pressures that are individually less prominent but still relevant in aggregate. Those risks matter because they compound the effects of any infrastructure vulnerability.

When a company is already dealing with litigation, governance questions, and massive compute obligations, partner stability becomes even more important. A fragile operational base can be tolerated when a company is smaller, but at OpenAI’s scale it becomes a strategic exposure. Investors do not just ask whether the company can grow; they ask whether it can keep growing without accumulating hidden liabilities.

The cost of frontier AI

One of the most sobering parts of the reported risk discussion is the sheer scale of projected compute costs. The implied figures, reaching into the hundreds of billions over time, underscore that frontier AI is no longer a software-only business. It is a capital-intensive infrastructure business that earns software margins only if everything goes right.

That creates an uncomfortable truth: the more successful OpenAI gets, the more expensive it may become to sustain its lead. High demand drives compute usage, which increases dependency on supply, which reinforces the need for a broader infrastructure strategy. The success loop is also a cost loop.

- Litigation can distract management.

- Governance issues can slow strategic moves.

- Compute cost inflation can compress margins.

- Infrastructure concentration raises operational risk.

- Regulatory scrutiny can complicate expansion.

What Microsoft Wants Next

Microsoft is not standing still while OpenAI tries to diversify. Its own strategy increasingly signals a desire to own more of the model layer, tighten product cohesion, and reduce the risk of being treated as a mere host for someone else’s breakout success. That is a rational response to OpenAI’s rise, but it also changes the emotional tone of the alliance.

The Copilot and model strategy suggests Microsoft wants to be judged on more than distribution. It wants to be seen as a serious AI product company with its own identity, not just the company that made OpenAI mainstream. That may be why Microsoft is investing in internal model families and refining Copilot’s organizational structure.

The logic of ownership

If Microsoft can own more of the model stack, it gets more freedom to tune safety, pricing, and product behavior without waiting on external priorities. That matters because AI companies increasingly win by controlling the full loop from model to interface to distribution. Microsoft knows this, and it is behaving accordingly.

The company also knows that enterprise buyers prefer stability. If Microsoft can present Copilot as the safe, predictable, deeply integrated option, it can defend its core franchise even if OpenAI remains the more exciting brand. That is a classic Microsoft play: make the product boring in the best possible way.

- More in-house model control.

- Stronger Copilot coherence.

- Better enterprise governance.

- Reduced reliance on a single AI supplier.

- A clearer platform identity.

Strengths and Opportunities

OpenAI’s position is still exceptionally strong, even with the dependency risk. It has a globally recognized brand, strong developer mindshare, and a product portfolio that reaches from consumer chat to enterprise workflows. The company’s challenge is not weakness in demand; it is turning demand into a durable, less fragile platform business.

- Brand gravity keeps ChatGPT at the center of the AI conversation.

- Developer momentum creates ecosystem stickiness.

- Workflow breadth supports enterprise expansion.

- Diversification efforts reduce single-partner exposure.

- Desktop integration can increase daily usage frequency.

- Collaboration features improve retention and stickiness.

- Multi-cloud strategy improves negotiating leverage.

Risks and Concerns

The main concern is that OpenAI’s success has created the exact kind of structural dependence that can become dangerous at scale. If Microsoft decides to change terms, tighten access, or prioritize its own stack more aggressively, OpenAI could feel the impact quickly. The company is trying to diversify before that happens, which is sensible, but it is not yet clear how quickly that diversification can mature.

The second concern is strategic entanglement. OpenAI and Microsoft are partners, but they are also competing for the same interface layer. That means partnership friction is not hypothetical; it is built into the business model. When two companies compete and cooperate at the same time, the tension tends to surface in product decisions, pricing, and channel strategy.

- Overreliance on Microsoft remains a real weakness.

- Compute costs could pressure margins for years.

- Litigation and governance issues add noise and risk.

- Multi-cloud diversification may be expensive and complex.

- Partner conflict could slow product execution.

- Public-market scrutiny would intensify these issues.

Looking Ahead

The next phase will likely be defined by three questions: how fast OpenAI can diversify its infrastructure, how aggressively Microsoft pushes its own model independence, and whether either company can preserve the partnership without ceding strategic ground. None of those answers will arrive all at once. Instead, the market will see a series of incremental signals in product updates, cloud deals, and organizational changes.

The most important thing to watch is whether OpenAI keeps converting product momentum into control over the workflow layer. If ChatGPT becomes the default starting point for work, Microsoft’s role shifts downward in the stack. If Microsoft keeps embedding Copilot more deeply into business operations, OpenAI may remain brilliant but strategically subordinate. That contest will shape not only their partnership but the wider AI market.

Key signals to monitor

- New cloud or compute partnerships beyond Microsoft.

- Changes in Microsoft’s Copilot branding and product structure.

- Enterprise adoption of OpenAI collaboration tools.

- Disclosure around capital expenditure and infrastructure commitments.

- Signs that either company is reducing strategic dependence on the other.

In the end, this is not just a story about one company relying too heavily on another. It is a story about the AI industry maturing from exuberant scaling into hard questions about leverage, ownership, and control. OpenAI still has enormous opportunity, but its long-term value will depend on whether it can turn Microsoft from an indispensable crutch into one partner among many — before the market decides that distinction for it.

Source: VOI.id OpenAI Microsoft Dependency, Big Risks Revealed!