The leaked files published this week show that U.S. Immigration and Customs Enforcement dramatically increased its use of Microsoft’s Azure cloud during the second half of 2025 — more than tripling stored volumes on Azure in six months and expanding the agency’s consumption of Microsoft productivity and AI tools — raising hard questions about how commercial cloud platforms, resellers and third‑party integrations are shaping a sweeping interior‑enforcement campaign.

Source: The Guardian, “ICE reliance on Microsoft technology surged amid immigration crackdown, documents show”

Background

In 2025 the federal government sharply increased funding and operational emphasis on interior immigration enforcement. Public and independent analyses put ICE’s available enforcement resources in 2025 in the high‑$20 billions when regular appropriations and later reconciliation supplements are counted, and legislative packages discussed publicly included multi‑year allocations that advocacy groups and some analysts described as a $75 billion program of enforcement spending spread over several years. Those headline numbers have been widely reported and debated; they reflect a combination of annual appropriations and larger reconciliation or supplemental allocations rather than a single, immediate cash transfer to the agency.

At the same time, reporting and contract records show a large uptick in ICE purchases of cloud services and software licenses from major vendors and resellers. Public federal contracting documents and investigative reporting show tens of millions of dollars of new cloud purchases from Microsoft and Amazon during 2025. Those procurement moves occurred alongside an aggressive enforcement posture that produced record levels of detentions and at‑large arrests, according to independent analyses and major newsroom investigations.

The new Guardian reporting, built on the documents at the center of this article, was published alongside partner outlets and appears to derive from leaked internal records describing ICE’s Azure consumption and related technical configurations. Because the files are leaked material, some specific claims cannot be independently corroborated in public contract logs; that distinction matters and is flagged below where relevant.

What the leaked files say — the headline technical claims

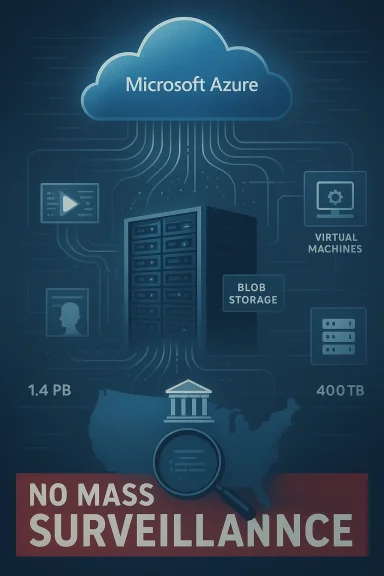

The documents published by the Guardian and partners state the following core technical claims:

- ICE increased the amount of data held on Microsoft Azure from roughly 400 terabytes in July 2025 to about 1,400 terabytes in January 2026. The files describe Azure “blob” storage as a primary mechanism for raw data storage and suggest the growth was driven by the agency ramping up intake and processing of multimedia and records.

- ICE is reported to be using virtual machines (VMs) on Azure — effectively renting high‑performance compute — and to be running some agency applications on Microsoft infrastructure. The files name Azure services for image/video analysis and automated translation as being used for analytics.

- The documents imply that ICE’s expanded workforce and operational tempo were accompanied by increased access to Microsoft productivity suites and an AI chatbot made available through those productivity bundles. They also indicate third‑party reseller arrangements (for example, license buys via Dell and others) were part of how ICE procured some services.

Why the storage numbers matter (and what they really mean)

The jump from 400 TB to 1,400 TB is alarming in plain terms: if that capacity were filled purely with image files of a few megabytes each, it would hold hundreds of millions of photos. But raw terabyte figures can mask how data is used and structured.

- Blob storage on Azure is a general‑purpose object store used for backups, video and image archives, logs, and dataset lakes. A rapid increase in blob usage can reflect bulk ingestion of video, body‑cam footage, phone extractions, scanned documents, or large‑scale exports from other databases. The leaked files explicitly reference blob usage as a repository for raw data.

- Compute costs and VM use matter for analytics. Running virtual machines at scale lets an agency not only store data but actively process it: transcoding footage and running machine‑learning models against incoming datasets. The files indicate ICE rented compute instances, which is consistent with the agency processing material in‑house, for example through automated image and video analytics, rather than simply archiving it.

- Interpretation caveat: terabytes alone do not reveal what data was stored, for how long, or whether it was encrypted at rest with keys controlled by ICE. The leak does not publicly disclose dataset labels or retention policies, so claims about specific uses (for example, programmatic mass surveillance or targeted investigative analytics) should be judged carefully and flagged as not fully verifiable without access to the raw files or accounts from officials.
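The “hundreds of millions of photos” comparison is simple arithmetic, sketched below in Python. Only the 400 TB and 1,400 TB totals come from the leaked files; the 3 MB average photo size and the use of decimal units (1 TB = 10^12 bytes) are assumptions for illustration, and the helper names are hypothetical.

```python
# Back-of-envelope check on the reported Azure storage growth.
# The 400 TB and 1,400 TB totals come from the leaked files; the average
# photo size below is an illustrative assumption, not a reported figure.

TB = 10**12  # decimal terabyte, in bytes
MB = 10**6   # decimal megabyte, in bytes

STORED_JUL_2025_TB = 400
STORED_JAN_2026_TB = 1_400
ASSUMED_PHOTO_SIZE_MB = 3  # assumption: a typical compressed phone photo


def growth_factor(before_tb: float, after_tb: float) -> float:
    """Ratio of the later storage volume to the earlier one."""
    return after_tb / before_tb


def photo_equivalent(capacity_tb: float, photo_size_mb: float) -> int:
    """Number of whole photos of the given size that would fill the capacity."""
    return int(capacity_tb * TB // (photo_size_mb * MB))


if __name__ == "__main__":
    factor = growth_factor(STORED_JUL_2025_TB, STORED_JAN_2026_TB)
    photos = photo_equivalent(STORED_JAN_2026_TB, ASSUMED_PHOTO_SIZE_MB)
    print(f"growth over six months: {factor:.1f}x")
    print(f"photo equivalent at {ASSUMED_PHOTO_SIZE_MB} MB each: {photos:,}")
```

At the assumed 3 MB per photo, 1,400 TB works out to roughly 467 million photos, and the stored volume grew about 3.5 times over the six months. Halving or doubling the assumed photo size doubles or halves the count, which is why the comparison is an order-of-magnitude illustration rather than a description of what ICE actually stored.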

How ICE’s cloud consumption fits into the broader enforcement surge

ICE’s technology purchases and cloud use did not happen in a vacuum. Two parallel facts help explain the context:

- Congress and the administration increased enforcement resources in 2025, creating both hiring and mission expansion pressure on ICE’s operations. Analyses that combine regular appropriations with reconciliation/supplemental appropriations place ICE’s accessible enforcement funding in 2025 near $28.7–$29.9 billion, and some reporting framed multi‑year packages as up to $75 billion over several years for enforcement priorities, figures that are best read as funding envelopes rather than instant, fungible cash sitting in a single account. Those increases gave the agency the means to expand personnel and purchases.

- Independent contract records reviewed by reporters show a fresh wave of purchases for cloud infrastructure, licenses and services from resellers during 2025, including significant buys routed through established government resellers (for example, Dell federal channels) and direct AWS and Microsoft purchases. This mirrors the leaked files’ timeline and supports the broader assertion that ICE’s cloud footprint grew substantially in late 2025.

Microsoft’s public position and internal employee concern

Microsoft’s public response, as reported in the Guardian coverage, was that the company provides productivity and collaboration tools to DHS and ICE through partners, that its terms prohibit mass surveillance of civilians, and that the company does not believe ICE is engaged in mass surveillance. The company has also told some employees that it “does not presently maintain AI services contracts tied specifically to enforcement activities.” Those statements, as presented to staff and press, attempt to draw a line between selling baseline cloud and productivity services and actively enabling targeted enforcement workflows.

At the same time, multiple internal Microsoft sources, along with contemporaneous worker organizing documented in internal threads and memos, show persistent employee unease about government contracts that touch biometric processing, AI analytics and law‑enforcement integrations. These materials include employee organizing documents, petitions and risk assessments arguing that tech firms must adopt moratoria on new enforcement‑related sales and strengthen whistleblower protections. The discussions are consistent with similar movements that have pressured other cloud vendors in recent years.

Two points are important:

- Corporate public statements that a vendor “does not presently maintain AI services contracts tied specifically to enforcement” can be technically accurate while still leaving room for substantial indirect exposure: reseller sales, system integrators, platform hosting, managed services and bespoke engineering support can enable downstream enforcement workflows without any explicit “AI enforcement” line item appearing in a vendor’s contract list. Public declarations must therefore be examined alongside procurement data and third‑party integrations.

- Employees raising ethics concerns are a significant indicator of reputational and operational risk. The internal threads detail worker campaigns, petitions and policy recommendations that map directly onto the public controversy; when a company faces sustained internal pressure on public‑policy grounds, it signals governance blind spots that boards and regulators should note.

Procurement channels, resellers and the messy reality of cloud governance

The Guardian’s leak and contract reporting reveal that resellers and integrators have been an important pathway for ICE to acquire Microsoft and Amazon cloud products. This matters for two reasons:

- Reseller sales can be less visible in public cloud vendor press releases yet still grant agencies enterprise licenses, engineering support or deployment assistance. Public contract registries often show resellers such as large federal integrators (for example, Dell federal channels) as the contracting vehicle even when the underlying product is Azure or AWS. That pattern complicates transparency and auditing.

- Many cloud deployments involve layered responsibilities: the hyperscaler provides infrastructure, a systems integrator configures and sometimes manages the environment, and the agency consumes the end product. That chain increases the number of actors who need to be covered by legal safeguards (data residency, access controls, logging, redaction) and increases the risk that governance gaps will be exploited or go unmonitored. The employee documents repeatedly call for clearer contractual limits on downstream use and stricter audit rights, exactly the governance levers that matter in layered procurements.
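A toy example shows why reseller-routed purchases can be hard to see in a vendor-by-vendor view. The records below are invented for illustration (the awardee names, platforms and dollar amounts are hypothetical, not drawn from any contract registry); the sketch simply totals the same awards two ways, by the contracting vehicle and by the underlying cloud platform.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Award:
    awardee: str            # who signed the contract (the contracting vehicle)
    underlying_vendor: str  # whose cloud platform is actually being bought
    amount_usd: int


# Hypothetical records, for illustration only.
AWARDS = [
    Award("Reseller A", "Microsoft", 4_000_000),
    Award("Reseller A", "Amazon", 2_500_000),
    Award("Reseller B", "Microsoft", 1_500_000),
    Award("Microsoft", "Microsoft", 1_000_000),
]


def totals_by(awards, key: str) -> dict:
    """Sum award amounts grouped by the named Award attribute."""
    totals = defaultdict(int)
    for award in awards:
        totals[getattr(award, key)] += award.amount_usd
    return dict(totals)


if __name__ == "__main__":
    print("by contracting vehicle:", totals_by(AWARDS, "awardee"))
    print("by underlying platform:", totals_by(AWARDS, "underlying_vendor"))
```

With these made-up figures, Microsoft shows up as a $1 million awardee but sits underneath $6.5 million of purchases once the reseller awards are attributed to the platform being bought. In practice the `underlying_vendor` field is exactly the information that is hard to recover when a reseller is the contracting vehicle, which is the transparency problem described above.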

Technical risks and failure modes

Putting significant enforcement workloads onto a public cloud raises distinct technical and security risks that should be assessed explicitly:

- Access control and insider threat: More vendors, partners and resellers touching an environment create more privileged access points. Without strict key management and zero‑trust enforcement, sensitive PII and biometrics can be exposed to a larger set of human actors. The leaked files do not publicly disclose key management practices, which is a material omission for risk assessment.

- Data residency and cross‑border transfers: Cloud providers operate multi‑region storage and may move or replicate data across geographies for resilience. That has legal consequences where data protection regimes or third‑party subpoenas differ across jurisdictions. The Guardian’s prior reporting on Microsoft and Israeli military use underscores how cross‑border storage can become an accountability vector in high‑stakes deployments.

- Model‑based amplification and false positives: If ICE is using automated image‑and‑video analytics, the risk of misidentification grows when models are applied at scale to low‑quality footage or biased datasets, because even a small per‑comparison error rate produces a large absolute number of false matches across millions of comparisons. False positives in face recognition or inaccurate entity linking can cascade into wrongful arrests or other harms; these are not hypothetical but a documented failure mode of current algorithms. Cloud vendors and integrators have a responsibility to require human‑in‑the‑loop controls and transparent error‑rate disclosures.

- Supply‑chain and software risk: Many agency applications are built on open‑source components and third‑party modules; undocumented or poorly audited dependencies can introduce vulnerabilities into law‑enforcement systems. Running such workloads on shared cloud infrastructure increases the blast radius if an application is exploited.
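The scale effect behind the false-positive concern is largely base-rate arithmetic. The sketch below uses assumed numbers only (one million images searched, 100 genuine targets among them, a 99% true-match rate and a 0.1% false-match rate); none of these figures come from the leaked files or from any vendor's published accuracy data, and the function name is hypothetical.

```python
def expected_flags(population: int, true_targets: int,
                   true_match_rate: float, false_match_rate: float):
    """Expected true flags, false flags and precision for one large search.

    population       -- number of images or records searched
    true_targets     -- how many of them really match the person sought
    true_match_rate  -- chance a real match is flagged (sensitivity)
    false_match_rate -- chance a non-match is wrongly flagged
    """
    non_targets = population - true_targets
    true_flags = true_targets * true_match_rate
    false_flags = non_targets * false_match_rate
    precision = true_flags / (true_flags + false_flags)  # share of flags that are correct
    return true_flags, false_flags, precision


if __name__ == "__main__":
    # Assumed, illustrative parameters only.
    tp, fp, precision = expected_flags(
        population=1_000_000,
        true_targets=100,
        true_match_rate=0.99,
        false_match_rate=0.001,
    )
    print(f"true flags ~{tp:.0f}, false flags ~{fp:.0f}, precision {precision:.1%}")
```

Under those assumptions the search yields about 99 correct flags and roughly 1,000 false ones, so only about 9% of flags point at a genuine target even though the model looks highly accurate per comparison. This is why human review and published error rates matter more, not less, as the volume of data grows.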

Civil‑liberties and legal implications

The expansion of cloud‑backed analytics for immigration enforcement implicates several legal and democratic risk vectors:

- Mass surveillance vs. targeted investigation: The distinction is legal and moral: targeted investigative use (with warranted data collection and judicial oversight) is different from blanket, frictionless ingestion of people’s data for profiling. Leaked terabyte figures and AI‑tool references raise legitimate concerns that cloud scale could be used to operationalize broad surveillance if adequate constraints are not codified and enforced. Even where the leak cannot conclusively show an intent to conduct mass surveillance, it does create an evidentiary basis to demand clarity from vendors and agencies.

- Due process and remediation: Automated flags and prioritization systems can shape who is arrested, detained or deported — and those systems are often opaque. Civil‑liberties organizations and courts have repeatedly warned that algorithmic tools require transparency, explainability and meaningful avenues for people to contest automated decisions. The recent surge in arrests and detentions heightens the stakes for ensuring these protections are in place.

- Corporate legal exposure: Vendors and integrators could face reputational, shareholder and regulatory risk if their products are shown to materially enable rights violations. Employee activism and regulator inquiries following Microsoft’s earlier Israel‑related service restrictions show the reputational damage vendors can incur when public policy lines are perceived to be crossed.

What independent reporting and contract records confirm (and what remains unverified)

Cross‑referencing the Guardian’s leaked documents with public contract data and investigative reporting yields the following verified picture and gaps:

- Verified: Federal procurement records and reporting show a meaningful increase in cloud and software spending by ICE and CBP in 2025, including significant reseller‑facilitated purchases from Microsoft and AWS. That confirmation makes the leak’s procurement timeline plausible. (forbes.com)

- Verified: Independent analyses document a surge in ICE enforcement activity and detentions in 2025, which aligns temporally with increased operational spending and staffing. Those operational pressures plausibly explain increased demand for storage and computing.

- Not independently verified from public records: The leak’s exact internal architecture diagrams, the precise contents of stored datasets, and operational directives that would prove whether Azure storage was used specifically to support particular surveillance streams (for example, phone intercepts, drone feeds, or cellphone extraction pipelines). The public contract logs do not reveal dataset labels or internal engineering details; therefore, the most sensitive technical claims remain anchored to the leaked files themselves and to the Guardian’s reporting. Readers should treat those claims as important but not yet independently audited evidence.

Practical steps vendors and policymakers can take now

The risks above are real, and they are fixable only with concrete technical and legal remedies. Based on the evidence available, these are immediate, implementable steps worth considering:

- Vendors and resellers should adopt explicit contractual clauses for law‑enforcement customers that:

  - Require granular, auditable logging and proof of judicial authorization for sensitive analytic workloads.

  - Prevent downstream sharing of raw biometric identifiers except under narrow, audited legal processes.

  - Mandate independent human‑rights and privacy impact assessments before scaled deployment of AI analytics.

- Agencies should publish non‑classified system architecture summaries and data‑minimization policies that specify:

  - Data sources, retention windows, and key‑management arrangements (who holds encryption keys).

  - Human‑in‑the‑loop thresholds for automated flags leading to arrests or detention.

- Congress and oversight bodies should require:

  - A public audit of major procurement pathways for federal enforcement agencies (including reseller flows).

  - Regular reporting on algorithmic use in enforcement, with redress channels for affected people.

  - Strengthened whistleblower protections for vendor and contractor employees who raise concerns about misuse.

- Companies should strengthen internal governance:

  - Create rapid review teams for government contracts involving biometrics or AI.

  - Publish redacted contract summaries and the policy rationale for any exceptions to standard privacy safeguards.
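To make “auditable logging” and “human-in-the-loop thresholds” concrete, the sketch below gates every automated flag behind a named human reviewer and a recorded authorization reference, and writes each decision to a hash-chained log so that later edits to a record are detectable. All names (`AuditLog`, `escalate_flag`, `authorization_ref`) are hypothetical; this illustrates the pattern under those assumptions and does not describe any ICE, Microsoft or reseller system.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON encoding of the record."""
    body = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the hash of the one before it."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = _entry_hash(prev_hash, record)
        self.entries.append({"prev": prev_hash, "record": record, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain and compare it with the stored hashes.

        Editing a record in place, or reordering or deleting any entry other
        than the last, is detected.
        """
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _entry_hash(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def escalate_flag(log: AuditLog, flag_id: str, model_score: float,
                  reviewer: Optional[str], authorization_ref: Optional[str]) -> bool:
    """Permit escalation only when a named reviewer and an authorization reference exist.

    The model score is logged for later audit but is never sufficient on its own.
    """
    allowed = bool(reviewer) and bool(authorization_ref)
    log.append({
        "flag_id": flag_id,
        "model_score": model_score,
        "reviewer": reviewer,
        "authorization_ref": authorization_ref,
        "escalated": allowed,
    })
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    print(escalate_flag(log, "flag-001", 0.97, reviewer=None, authorization_ref=None))
    print(escalate_flag(log, "flag-002", 0.91, reviewer="analyst-7", authorization_ref="auth-123"))
    print(log.verify())
    log.entries[0]["record"]["escalated"] = True  # simulate after-the-fact tampering
    print(log.verify())
```

The design choice is that the model score is recorded but never sufficient by itself. A hash chain only makes tampering evident if the latest hash is also stored somewhere the log's operators cannot rewrite, such as with an independent auditor; otherwise the whole chain could simply be recomputed.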

What to watch next

- Will Microsoft and other cloud providers produce a transparent accounting of reseller‑channel sales and the extent to which they offer managed services or engineering support to enforcement agencies? Public clarity here would materially reduce uncertainty.

- Will independent auditors be given access to ICE deployments on Azure to verify that encryption, access controls and human oversight meet the public standards vendors claim they enforce?

- Will Congress use hearings and appropriations oversight to tighten policy guardrails on the downstream use of cloud and AI services by enforcement agencies, or will procurement continue to outpace governance?

Conclusion

The Guardian’s leaked documents add a new, granular dimension to a debate we have been following for years: the extent to which global cloud firms and integration partners can be said to enable state power. The technical data points (a rapid rise in stored terabytes, expanded virtual‑machine use, and widespread productivity access) are plausible and are corroborated in part by procurement records and the broader enforcement expansion of 2025. But the most sensitive claims about precise datasets and operational use remain tied to the leaked files and require independent audit to be fully validated.

That uncertainty is not an excuse for inaction. The combination of high enforcement budgets, rising agency headcount, and easy access to scalable cloud AI creates real potential for rights‑eroding outcomes. The appropriate response is not ideological; it is technical and legal: require auditable constraints, publish independent assessments, limit downstream sharing of biometric and location data, and strengthen whistleblower channels for both vendor and agency staff. The public, Congress and the courts must insist on those safeguards now, before cloud scale turns into institutionalized opacity with human costs that are harder to reverse.
