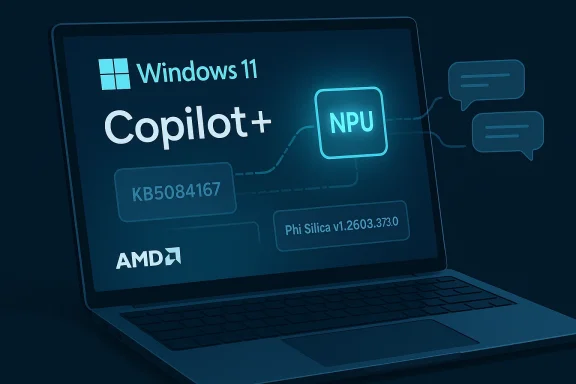

Microsoft has quietly shipped another targeted Phi Silica update for Copilot+ PCs, this time for AMD-powered systems running Windows 11 versions 24H2 and 25H2. The package, KB5084167, delivers Phi Silica version 1.2603.373.0 and continues Microsoft’s steady expansion of on-device AI as a first-class Windows component rather than a bundled app feature. It is a small release on the surface, but it says a lot about how Microsoft now thinks Windows should evolve: in modular pieces, in hardware-specific lanes, and with the NPU as a core platform requirement rather than a niche accelerator. Microsoft’s own support materials make clear that the update arrives automatically through Windows Update and depends on the latest cumulative update already being installed.

Overview

Phi Silica sits at the center of Microsoft’s local AI strategy for Windows Copilot+ PCs. Microsoft describes it as a Transformer-based local language model and, in its own words, “Microsoft’s most powerful NPU-tuned local language model,” optimized for efficiency and performance on Copilot+ hardware while still delivering many capabilities associated with larger language models. That positioning matters because it tells us Phi Silica is not just a feature add-on; it is part of the operating system’s new AI substrate.

The current KB5084167 package fits the same pattern Microsoft used with earlier Phi Silica refreshes. The company has been publishing separate AI component updates for different processor families, including AMD, Intel, and Qualcomm, rather than collapsing everything into one monolithic monthly rollup. That makes the servicing model more precise, but also more fragmented. For admins and power users, it means Windows Update is increasingly managing a stack of specialized models and dependencies rather than merely patching the kernel and desktop shell.

This release also reinforces the idea that Microsoft wants AI functionality to be closely tied to specific silicon capabilities. Phi Silica is available only on Copilot+ PCs with NPU support, and Microsoft’s developer documentation explicitly frames the model as a local language service for Windows AI APIs. In other words, the model is both a user-facing capability and a developer platform primitive. That dual identity is why even a minor version bump matters.

The practical consequence is straightforward: if you are tracking Windows 11 servicing, KB5084167 is not a flashy feature drop, but it is part of the infrastructure that keeps Microsoft’s local AI promises credible. If you are not on the right hardware, you never see it. If you are on AMD Copilot+ hardware, the update is another reminder that Microsoft intends local AI to be maintained the same way it maintains security and reliability fixes: automatically, quietly, and continuously.

What KB5084167 Actually Is

KB5084167 is a Phi Silica AI component update for AMD-powered systems. Microsoft says the release is a new version of the Phi Silica component for Windows 11 24H2 and 25H2, and that it installs automatically from Windows Update once the required cumulative update is present. That means users should not expect a standalone installer, a feature dialog, or a separate setup experience. The update is intended to happen in the background.

The servicing model matters

This is not the old Windows update model where one patch meant one binary and one reboot. Instead, Microsoft is using specialized component packages for AI workloads, which suggests tighter control over model versioning and hardware targeting. That is useful when the underlying workload is compute-intensive, device-specific, and dependent on NPU behavior. It is also a sign that Windows is being serviced more like a managed AI platform than a traditional desktop OS.

A few details stand out immediately:

- The package is processor-specific, not universal.

- It is delivered through Windows Update, not manual download.

- It requires the latest cumulative update first.

- It applies to Windows 11 24H2 and 25H2 only.

- It is part of a broader family of Copilot+ AI components.
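
The targeting rules in the list above can be read as a simple applicability gate. The following is a minimal sketch in Python, not Microsoft's actual detection logic: the `Device` record, its field names, and the `applicability_blockers` helper are hypothetical names introduced for illustration. Only the AMD target and the 24H2/25H2 versions come from the support article.

```python
from __future__ import annotations

from dataclasses import dataclass

# Values taken from the article; everything else here is illustrative.
TARGET_VENDOR = "AMD"
SUPPORTED_VERSIONS = {"24H2", "25H2"}


@dataclass
class Device:
    """Hypothetical inventory record; the field names are assumptions."""
    cpu_vendor: str                    # e.g. "AMD", "Intel", "Qualcomm"
    windows_version: str               # e.g. "24H2"
    is_copilot_plus: bool              # Copilot+ PC with an NPU
    latest_cumulative_installed: bool  # prerequisite named by Microsoft


def applicability_blockers(device: Device) -> list[str]:
    """Return the reasons KB5084167 would not apply; an empty list means eligible."""
    blockers = []
    if device.cpu_vendor.upper() != TARGET_VENDOR:
        blockers.append("package targets AMD-powered systems only")
    if device.windows_version.upper() not in SUPPORTED_VERSIONS:
        blockers.append("requires Windows 11 24H2 or 25H2")
    if not device.is_copilot_plus:
        blockers.append("requires a Copilot+ PC with an NPU")
    if not device.latest_cumulative_installed:
        blockers.append("latest cumulative update must be installed first")
    return blockers
```

Returning the list of reasons rather than a single boolean makes it easier to explain to a help desk why a given machine never shows the update.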

Why version bumps matter

Phi Silica versioning is not cosmetic. A model update can alter response quality, latency, memory behavior, and how well the NPU is utilized. Even if Microsoft doesn’t spell out every internal change, the fact that it ships separate builds indicates active tuning behind the scenes. In the AI era, a component version bump can be just as operationally important as a driver update.

The Bigger Phi Silica Strategy

Microsoft has been remarkably consistent in how it frames Phi Silica. In developer docs, it is described as a local language model that can be integrated into Windows apps through the Windows AI APIs in the Windows App SDK. The company also emphasizes speculative decoding and other runtime techniques that help improve performance on-device. The story is not just “Windows has AI”; the story is “Windows can host AI locally, efficiently, and repeatedly across supported hardware.”

Local AI, not cloud-only AI

That distinction matters because it changes the trust model. Local inference reduces dependence on network connectivity, can lower latency, and may help with privacy-sensitive tasks that users or enterprises prefer to keep on-device. Microsoft’s documentation makes clear that Phi Silica is intended for Copilot+ PCs with NPUs, which means the model is designed to exploit hardware that was specifically bought for AI acceleration.

At the same time, local AI is not a free lunch. It consumes device resources, needs servicing, and must be kept aligned with Windows components and app APIs. Microsoft’s packaging approach reflects that reality. Rather than letting the model drift, the company is treating it like an operating system dependency. That is a sensible move, but it also raises the maintenance burden across the fleet.

The AMD-specific KB5084167 release is especially important because it shows Microsoft is no longer viewing Copilot+ AI as a single SKU story. Instead, the company is managing an ecosystem of hardware-specific AI assets. That implies different optimization paths for different chip vendors, which is exactly the kind of complexity you would expect when AI is bound to NPUs instead of simply running in the cloud.

What this means for users

For consumers, this mostly translates into a quieter experience. They get better on-device features without needing to know model names or version numbers. For enterprise users, though, the implications are deeper because AI behavior becomes part of the device baseline. That is both powerful and operationally awkward.

AMD-Powered Copilot+ PCs as a Strategic Segment

AMD-powered Copilot+ PCs are not a side note in Microsoft’s AI push; they are a major validation point. By issuing a separate Phi Silica update for AMD systems, Microsoft is signaling that AMD NPUs are part of the same premium AI class as Intel and Qualcomm equivalents. That is good for AMD because it reinforces parity. It is also good for Microsoft because it reduces the risk that Copilot+ becomes perceived as a vendor-specific showcase.

Hardware-specific AI is the new normal

The hardware targeting here is not accidental. Microsoft has already published separate Phi Silica and other AI component updates for different processor families, including Intel and Qualcomm. KB5084167 simply extends that pattern to AMD, which confirms that Microsoft’s AI servicing pipeline is now segmented by silicon platform. That segmentation likely helps with tuning and validation, but it also underscores how far Windows has moved from the old “one OS image for everything” ideal.

For AMD, the upside is credibility in the Copilot+ market. If Microsoft treats AMD NPUs as worthy of their own Phi Silica branch, then those systems are not being left behind. That matters in a market where buyers increasingly look for meaningful on-device AI differentiation rather than marketing labels.

For Microsoft, the larger strategic benefit is flexibility. Separate packages allow the company to adjust performance, resolve hardware-specific issues, and push targeted improvements without waiting for a single unified release cycle. The trade-off is that the servicing matrix becomes more complex for support teams, imaging workflows, and documentation. That complexity is the price of precision.

Competitive implications

This matters beyond AMD and Microsoft. If on-device models become a core feature of premium PCs, then AI differentiation will increasingly depend on the quality of NPU support, model efficiency, and how well the OS can distribute updates. That gives Microsoft a strong platform advantage, but it also raises expectations for rivals shipping AI-capable laptops and local assistants.

Windows 11 24H2 and 25H2: Why the Build Numbers Matter

Microsoft’s support page ties KB5084167 specifically to Windows 11 version 24H2 and version 25H2. That kind of pairing reflects the company’s broader servicing strategy: keep AI components aligned to the latest supported Windows branches and their cumulative updates. The result is tighter integration, but also a narrower support envelope.

The dependency chain is deliberate

The requirement for the latest cumulative update is not a footnote. It tells administrators that the Phi Silica package is not meant to float independently from the core servicing stack. The model depends on a known baseline, and Microsoft wants that baseline in place before the AI component is applied. That is a classic enterprise-friendly move, even if it adds friction for individuals who prefer to control update timing manually.

This dependency chain also suggests Microsoft is prioritizing stability over convenience. AI components can be brittle if they are installed on mismatched builds, especially when the OS, NPU drivers, and AI runtime pieces all need to cooperate. By forcing the cumulative update first, Microsoft is reducing the chance of inconsistent state. That is the right call, even if it is less elegant for users who dislike forced sequencing.

Enterprise and consumer impact differ

For consumers, the process is mostly invisible. Windows Update handles the installation, and the only user-facing sign may be a line in Update history. For enterprises, the story is operationally heavier because admins have to account for model updates in compliance, imaging, and device-readiness workflows. The distinction is becoming increasingly important as AI components behave more like platform code than like optional apps.

Update History, Verification, and IT Operations

Microsoft says users can verify the presence of the update by checking Settings > Windows Update > Update history. That is a simple enough instruction, but it also reveals how Microsoft expects these packages to be consumed: not through a dedicated control panel, but as part of normal Windows maintenance. Which Phi Silica entry appears in the history list depends on the device’s processor type.

What admins should be watching

Admins should think of this update as part of the device AI baseline, not as an optional enhancement. If a Copilot+ PC is expected to provide local AI behaviors, then missing or delayed Phi Silica refreshes could result in inconsistent user experiences across a fleet. That is especially relevant in mixed-vendor environments where AMD, Intel, and Qualcomm devices all receive distinct component packages.

It is also a reminder that Windows servicing now includes semantic components, not just security and reliability fixes. AI behavior can shift from release to release in ways that are visible to users but invisible to traditional patch reporting. That creates a new category of change management headache: the patch may not look important in traditional inventory tools, yet it can materially affect assistant quality and responsiveness.
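
One way to make that kind of drift visible is to bucket devices by vendor and by whether their installed Phi Silica version meets an expected baseline. The sketch below is illustrative only: the device names, the older version strings in the usage note, and the `fleet_report` helper are hypothetical, and the only baseline taken from the article is the AMD version 1.2603.373.0. Vendors without a known baseline are reported as "unknown" rather than guessed.

```python
from collections import defaultdict

# Only the AMD baseline comes from the article; other vendors would need
# their own values filled in from their respective support pages.
BASELINES = {"AMD": "1.2603.373.0"}


def version_key(version):
    """Convert '1.2603.373.0' to (1, 2603, 373, 0) so comparison is numeric."""
    return tuple(int(part) for part in version.split("."))


def fleet_report(devices):
    """Group device names per vendor into 'current', 'behind', and 'unknown'.

    `devices` is an iterable of (name, vendor, installed_version_or_None).
    """
    report = defaultdict(lambda: {"current": [], "behind": [], "unknown": []})
    for name, vendor, installed in devices:
        baseline = BASELINES.get(vendor)
        if baseline is None or installed is None:
            bucket = "unknown"
        elif version_key(installed) >= version_key(baseline):
            bucket = "current"
        else:
            bucket = "behind"
        report[vendor][bucket].append(name)
    return dict(report)
```

Called with a made-up inventory such as `[("pc-01", "AMD", "1.2603.373.0"), ("pc-02", "AMD", "1.2602.100.0")]`, the report would place `pc-01` under "current" and `pc-02` under "behind" for AMD.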

A practical verification workflow would usually include:

- Confirm the latest cumulative update is installed.

- Check whether the device is a Copilot+ PC with NPU support.

- Inspect Windows Update history for KB5084167.

- Validate the installed Phi Silica version against expected baselines.

- Test any workflows that rely on local AI features.
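
The first four steps of that checklist can be sketched as a small per-device check. This is a rough illustration under stated assumptions, not a supported tool: it expects the update-history titles to have been collected already (for example, copied from Settings > Windows Update > Update history), and the exact title wording used in the test data is an assumption. The helper names are hypothetical. The final step, testing real workflows, stays manual.

```python
import re

KB_ID = "KB5084167"
EXPECTED_VERSION = "1.2603.373.0"

# Matches a four-part dotted version such as 1.2603.373.0.
VERSION_RE = re.compile(r"\b(\d+(?:\.\d+){3})\b")


def parse_version(text):
    """Turn '1.2603.373.0' into (1, 2603, 373, 0) for numeric comparison."""
    return tuple(int(part) for part in text.split("."))


def find_phi_silica_version(history_titles):
    """Return the version found in the first entry mentioning KB_ID, else None."""
    for title in history_titles:
        if KB_ID in title:
            match = VERSION_RE.search(title)
            return match.group(1) if match else None
    return None


def verify_device(history_titles, cumulative_ok, is_copilot_plus):
    """Return a pass/fail result for each automatable checklist step."""
    installed = find_phi_silica_version(history_titles)
    return {
        "cumulative_update": cumulative_ok,
        "copilot_plus_npu": is_copilot_plus,
        "kb_in_history": any(KB_ID in title for title in history_titles),
        "version_at_baseline": installed is not None
        and parse_version(installed) >= parse_version(EXPECTED_VERSION),
    }
```

Versions are compared as integer tuples rather than strings because a plain string comparison would rank "1.2603.99.0" above "1.2603.373.0".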

How This Fits Microsoft’s Broader AI Platform

KB5084167 should be read alongside Microsoft’s broader effort to make Windows a platform for local LLMs and AI-backed features. Microsoft’s documentation now explicitly groups Phi Silica with other local model options under Microsoft Foundry on Windows, which means this is not just a special feature for inbox apps. It is a general-purpose model layer intended for developers, OEMs, and Microsoft itself.

From feature to foundation

That change is significant. A feature can be removed, replaced, or ignored. A platform layer becomes part of the architecture. By servicing Phi Silica through Windows Update, Microsoft is effectively declaring that local AI belongs in the same maintenance category as other foundational Windows components. That raises the importance of reliability, compatibility, and telemetry-driven iteration.

There is also a developer angle here. Microsoft’s Windows AI APIs allow apps to use Phi Silica and other on-device models. That means an improved Phi Silica build can ripple outward into third-party applications, not just Microsoft’s own Copilot experiences. In practical terms, the update potentially benefits a wider ecosystem than the support page alone suggests.

Why this is important for Windows’ identity

Windows has long been defined by compatibility, breadth, and backward support. Phi Silica pushes it toward something more opinionated: a system where the hardware, model, and OS are co-designed for AI. That is a strategic bet, and it may pay off if local AI becomes a standard user expectation. But it also narrows the definition of a “full-featured” Windows PC to systems that can participate in Microsoft’s NPU-centric vision.

The Business Case Behind Incremental AI Updates

The business logic behind KB5084167 is easy to miss because the update itself is so small. But the pattern is revealing. Microsoft is investing in a distributed AI delivery model that lets it refine the local experience continually, in place, and without major release drama. That helps keep Copilot+ PCs feeling current even when the user is not installing a headline feature.

Why incremental updates are valuable

Incremental updates are valuable because they let Microsoft tune the model independently of the UI surface. If a response quality issue, performance regression, or hardware quirk appears, Microsoft can address the underlying component without reworking the whole OS. That is a mature servicing posture and one that mirrors how cloud AI products are maintained.

It also supports Microsoft’s platform lock-in in a subtle way. Once users and developers start relying on the local AI stack, the hardware and Windows update cadence become part of the product experience. Competitors can copy features, but not easily replicate the combination of OS integration, model delivery, and silicon-targeted optimization that Microsoft is assembling. That integration is the moat.

The AMD-specific release adds one more layer: it helps ensure the Copilot+ ecosystem does not fragment into “best on vendor X” narratives. By shipping platform-specific model refreshes across CPU vendors, Microsoft can preserve a sense of shared capability while still respecting hardware differences. That is a delicate balancing act, but an important one.

Strengths and Opportunities

The release may look routine, but it reinforces several strategic strengths in Microsoft’s Windows AI plan. The biggest opportunity is not the version number itself; it is the consistency of the servicing model and the way it normalizes local AI as part of Windows maintenance. That makes the platform more predictable for both users and app developers.

- Automatic delivery through Windows Update reduces deployment friction.

- Hardware-specific targeting lets Microsoft tune the model for AMD NPUs.

- Copilot+ alignment keeps the AI story tied to premium Windows hardware.

- Developer exposure via Windows AI APIs broadens the update’s impact.

- Versioned model servicing supports faster iteration than monolithic OS releases.

- Local inference can improve responsiveness and reduce cloud dependence.

- Enterprise control improves because the model is part of a managed baseline.

Risks and Concerns

The same structure that makes this strategy powerful also creates friction. Once AI components are treated like first-class Windows packages, update failures, version mismatches, and hardware fragmentation become more consequential. That is manageable, but it is not trivial, especially in mixed-device fleets.

- Fragmented servicing across AMD, Intel, and Qualcomm increases complexity.

- Dependency chains can complicate troubleshooting and rollback.

- Model behavior drift may confuse users when responses change after updates.

- Enterprise imaging becomes harder when AI components are device-specific.

- NPU reliance narrows the eligible hardware pool.

- Support visibility may be weaker if admins do not track AI packages separately.

- User expectations may rise faster than local AI can consistently deliver.

Looking Ahead

The most important thing to watch is whether Microsoft keeps widening the number of Windows AI components that are serviced independently in this way. If Phi Silica continues to receive vendor-specific updates, that would confirm the broader trend toward componentized AI servicing on Windows. If other model families and AI features follow the same pattern, the update stack could become substantially more complex over the next few Windows release cycles.

The second thing to watch is whether Microsoft begins to explain more about what actually changes inside these model updates. Right now, the support pages mostly tell users that a version changed, not how it changed. For most consumers, that is enough. For enterprises, developers, and analysts, it would be helpful if Microsoft eventually exposed more detail about performance, capability shifts, and compatibility implications. Transparency will matter more as these models become part of the platform baseline.

What to watch next:

- Additional AMD Phi Silica refreshes with new version numbers.

- Similar updates for Intel and Qualcomm Copilot+ systems.

- Changes in Windows AI API behavior tied to model updates.

- More explicit enterprise documentation for AI component inventorying.

- Whether Microsoft folds more local AI features into managed servicing channels.

Source: Microsoft Support KB5084167: Phi Silica AI component update (version 1.2603.373.0) for AMD-powered systems - Microsoft Support