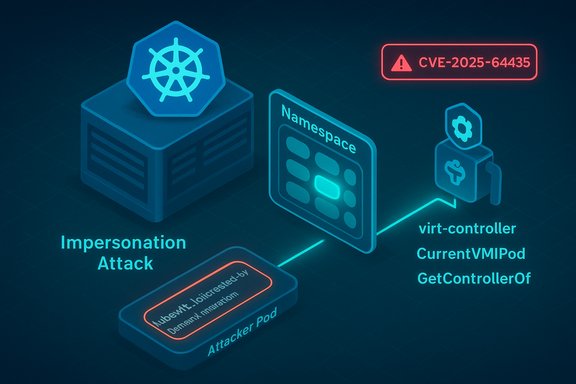

A logic flaw in KubeVirt’s virt-controller allows an attacker who can create pods in a target namespace to impersonate the legitimate virt-launcher pod for a running VirtualMachineInstance (VMI). The controller then binds lifecycle operations to the attacker-controlled pod, producing sustained denial‑of‑service (DoS) effects. The bug is tracked as CVE‑2025‑64435 and is fixed in KubeVirt 1.7.0‑beta.0.

Background and overview

KubeVirt extends Kubernetes by running virtual machines (VMs) as first‑class workloads inside the cluster. Each VMI is managed by a small set of KubeVirt components: the virt-controller, which reconciles VMI state and orchestrates lifecycle changes; virt‑handler, which runs per‑node to manage QEMU and host integrations; and virt‑launcher, a pod that hosts the QEMU process and is treated as the immediate “controller” for the VMI on a node.

CVE‑2025‑64435 is a logic‑flaw vulnerability in the virt-controller’s pod‑to‑VMI association logic. The controller falls back to using the kubevirt.io/created‑by label when a pod lacks an OwnerReference, and selects the most recently created matching pod when multiple candidates are present. A pod that an attacker creates in the same namespace, carrying the expected label and the VMI’s UID, can therefore be mistaken for the real virt‑launcher; the controller may then issue updates or migration operations against the attacker’s pod instead of the legitimate launcher. This can disrupt VMI control and result in a DoS for the VMI.

The project published advisory GHSA‑9m94‑w2vq‑hcf9, which references the corrective commit. The flaw affects KubeVirt releases prior to 1.7.0‑beta.0; project maintainers published a targeted patch that changes how the controller determines the owning pod to avoid relying on label‑only fallbacks. Independent vulnerability trackers and distributors (NVD, OSV, SUSE, CVE aggregators) list the issue as a medium‑severity availability impact (CVSS v3.1 ≈ 5.3) and classify the underlying weakness as improper handling of exceptional conditions (CWE‑703).

How the bug works — technical anatomy

The vulnerable code path

At reconciliation time the virt‑controller needs to answer a simple question: "Which pod is the current virt‑launcher for this VMI?" The controller enumerates pods in the VMI namespace and checks ownership references. If controller OwnerReferences are absent, it falls back to interpreting the kubevirt.io/created‑by label and the DomainAnnotation to synthesize a controller ref. The implementation then chooses the most recently created candidate when multiple pods match. The fallback and "pick most recent" behavior is the root cause: it permits an attacker‑created pod to masquerade as the VMI’s controlling pod if the attacker knows the VMI name and UID and can create pods in that namespace. The official advisory shows the affected functions (CurrentVMIPod, GetControllerOf, IsControlledBy) and the exact lines that were changed in the fix.

Exploitation model and prerequisites

- Required capability: the attacker must be able to create pods in the same namespace as the target VMI (a low‑privilege capability in many multi‑tenant clusters or in misconfigured namespaces).

- Knowledge needed: the attacker needs the VMI name and/or UID in order to craft labels/annotations that match the expected created‑by metadata. These values are frequently discoverable in same‑namespace contexts (via list/read permissions) or by inspecting cluster manifests in GitOps workflows and CI pipelines.

- Attack complexity: moderate to high. The attacker must place a pod with matching metadata in the right namespace at the right time, so exploitation is not a single universal network call, but it is straightforward for an attacker who already has pod‑create privileges in the namespace. The initial vulnerability listings use CVSS metrics of Network attack vector, High attack complexity, and Low privileges required.
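As a rough cross‑check of the ≈ 5.3 score, the CVSS v3.1 base‑score arithmetic can be worked through in a few lines. This is only a sketch: the article states the Network, High‑complexity, and Low‑privilege metrics and an availability impact, while the remaining values used here (no user interaction, unchanged scope, no confidentiality or integrity impact, high availability impact) are assumptions chosen because they reproduce the published number. Only the scope‑unchanged formula is implemented.

```python
import math

# Metric weights from the public CVSS v3.1 specification (scope unchanged).
ATTACK_VECTOR = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
ATTACK_COMPLEXITY = {"L": 0.77, "H": 0.44}
PRIVILEGES_REQUIRED = {"N": 0.85, "L": 0.62, "H": 0.27}
USER_INTERACTION = {"N": 0.85, "R": 0.62}
IMPACT = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(value):
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_scope_unchanged(av, ac, pr, ui, c, i, a):
    """Compute the CVSS v3.1 base score for a scope-unchanged vector."""
    iss = 1 - (1 - IMPACT[c]) * (1 - IMPACT[i]) * (1 - IMPACT[a])
    impact = 6.42 * iss
    exploitability = (
        8.22
        * ATTACK_VECTOR[av]
        * ATTACK_COMPLEXITY[ac]
        * PRIVILEGES_REQUIRED[pr]
        * USER_INTERACTION[ui]
    )
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


# AV:N / AC:H / PR:L come from the article; UI:N, C:N, I:N, A:H are assumed.
score = base_score_scope_unchanged("N", "H", "L", "N", "N", "N", "H")
print(score)
```

Under these assumed metrics, the High attack complexity weight is what keeps the exploitability term low and holds an availability‑only issue in the medium band.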

Practical effect on VMIs

When the controller is tricked into associating the VMI with the attacker pod, it may:

- Apply updates or hotplug operations to the wrong pod, leaving the real virt‑launcher unsynchronized and the VMI in an inconsistent state.

- Misreport VMI status, causing lifecycle operations to stall or be rejected.

- Influence migration decisions by pointing the VMI’s migration target to a node controlled by or favorable to the attacker, effectively bypassing nodeSelector/nodeAffinity constraints relied upon for placement security.

- Ultimately block management operations (start/stop/migrate/hotplug), producing a sustained or persistent DoS of the VMI until the controller and resources are corrected.
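The pod‑selection flaw behind these effects can be illustrated with a small model. The sketch below is not KubeVirt's Go code; the `Pod` class and both selection functions are hypothetical simplifications of the behavior described in this article (label fallback plus "pick the most recent"), and the second function only illustrates the stated principle of not trusting a label when no controller OwnerReference exists, not the actual patch.

```python
from dataclasses import dataclass
from typing import Optional

CREATED_BY_LABEL = "kubevirt.io/created-by"  # label named in the advisory text


@dataclass
class Pod:
    """Hypothetical, minimal stand-in for a Kubernetes pod object."""
    name: str
    created_at: int                              # creation time in seconds
    labels: dict
    controller_owner_uid: Optional[str] = None   # UID from a controller OwnerReference


def label_fallback_select(pods, vmi_uid):
    """Model of the flawed behavior: fall back to the label, then pick the newest."""
    candidates = []
    for pod in pods:
        owner = pod.controller_owner_uid or pod.labels.get(CREATED_BY_LABEL)
        if owner == vmi_uid:
            candidates.append(pod)
    return max(candidates, key=lambda p: p.created_at, default=None)


def owner_ref_only_select(pods, vmi_uid):
    """Model of the hardened principle: ignore pods without a controller OwnerReference."""
    candidates = [p for p in pods if p.controller_owner_uid == vmi_uid]
    return max(candidates, key=lambda p: p.created_at, default=None)


vmi_uid = "uid-1234"
launcher = Pod("virt-launcher-vm1-abcde", 100, {CREATED_BY_LABEL: vmi_uid}, vmi_uid)
attacker = Pod("innocuous-looking-pod", 200, {CREATED_BY_LABEL: vmi_uid})

print(label_fallback_select([launcher, attacker], vmi_uid).name)
print(owner_ref_only_select([launcher, attacker], vmi_uid).name)
```

In this model the attacker pod wins the first selection simply because it is newer and carries the right label, which is the confusion the list above describes.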

Exploitation scenarios and attack surface

Tenant‑adjacent and CI/CD abuse

Multi‑tenant clusters, shared namespaces, or over‑permissive CI/CD runners that allow pipeline jobs to create pods in application namespaces are the highest‑risk environments. An attacker can weaponize standard pod creation rights to impersonate virt‑launcher pods. Because many operators deploy KubeVirt VMIs in the same namespaces used by application teams or automation, the blast radius is broad if RBAC is not constrained.

Supply chain and repo exposure

VMI names and UIDs may be present in manifests checked into Git repositories, Helm charts, or Kubernetes object stores. A compromised pipeline that pushes malicious pods into the same namespace can act as an orchestrated impersonation vector. Hardened pipelines and least‑privilege runners reduce this risk.

Management plane compromises

If an attacker already controls a management host or has stolen credentials able to create namespaced pods, they can exploit this flaw without touching the control plane directly. This makes the vulnerability a compelling follow‑on for other post‑compromise actions and a useful way to deny availability of VMs used for recovery or forensic tasks. The operational cost of a single VMI DoS can be high in production or stateful workloads.

Detection, telemetry, and indicators of exploitation

Detecting a successful impersonation is non‑trivial because the controller operates at the API level and the symptoms are behavioral, not obviously malicious.

Look for these signals:

- Unexpected VMI status transitions that do not align with node‑side events.

- Reconciliation loops in virt‑controller logs where the controller repeatedly selects a pod that is not the expected virt‑launcher (logs around CurrentVMIPod / GetControllerOf are especially informative).

- Newly created pods in VMI namespaces bearing kubevirt.io/created‑by labels or DomainAnnotation values that correspond to running VMIs.

- Audit‑log entries for pod creation in namespaces hosting VMIs, especially when those creators are non‑standard service accounts or CI runners.

- Discrepancies between the pod that the controller treats as the launcher and the actual pod process tree on the node (i.e., virt‑launcher vs attacker pod).

Preserving controller logs, API server audit logs, and node‑level process snapshots is critical to triage.
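One of the signals above, pods that carry the created‑by label for a running VMI without being owned by it, can be checked offline against a pod listing. The following is a heuristic sketch only: it assumes input shaped like the output of `kubectl get pods -n <namespace> -o json`, the function name `suspicious_pods` is hypothetical, and a match is a lead for investigation rather than proof of exploitation.

```python
CREATED_BY_LABEL = "kubevirt.io/created-by"  # label named in the advisory text


def suspicious_pods(pod_list, running_vmi_uids):
    """Return "namespace/name" for pods that claim a running VMI by label
    but have no controller OwnerReference pointing at that VMI's UID."""
    flagged = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        claimed_uid = (meta.get("labels") or {}).get(CREATED_BY_LABEL)
        if claimed_uid not in running_vmi_uids:
            continue  # pod makes no claim on a VMI we care about
        owners = meta.get("ownerReferences") or []
        controlled = any(
            ref.get("controller") and ref.get("uid") == claimed_uid
            for ref in owners
        )
        if not controlled:
            flagged.append(f'{meta.get("namespace")}/{meta.get("name")}')
    return flagged


# Illustrative data only; real input would come from the cluster API.
example = {
    "items": [
        {"metadata": {
            "name": "virt-launcher-vm1-abcde", "namespace": "vms",
            "labels": {CREATED_BY_LABEL: "uid-1"},
            "ownerReferences": [
                {"kind": "VirtualMachineInstance", "uid": "uid-1", "controller": True}
            ],
        }},
        {"metadata": {
            "name": "innocuous-looking-pod", "namespace": "vms",
            "labels": {CREATED_BY_LABEL: "uid-1"},
        }},
    ]
}
print(suspicious_pods(example, {"uid-1"}))
```

The set of running VMI UIDs would need to be collected separately, for example from a listing of VMI objects in the same namespace.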

Confirmed fixes and verification steps

KubeVirt maintainers published a fix that changes the controller logic to avoid trusting labels when a proper OwnerReference is absent, and to make the association deterministic and robust in migration scenarios. The upstream commit referenced by advisories (commit 9a6f4a3…) is the authoritative patch. Administrators should verify that their KubeVirt installation contains that commit or runs version 1.7.0‑beta.0 or later.

How to verify a patch or backport:

- Check the commit history of the source that your installed KubeVirt package or container image was built from and confirm the presence of commit 9a6f4a3a7079….

- Where you rely on vendor builds or OS package channels, confirm the vendor’s package changelog explicitly lists CVE‑2025‑64435 or references the same upstream commit id. Many distributors and security trackers (NVD, SUSE, Chainguard) have already recorded the CVE — use those listings to cross‑check your vendor mapping.

- In a staging cluster, simulate the controller behavior (a safe proof‑of‑concept reproducer is published in the advisory) to ensure the controller no longer elects attacker pods when you create a misleading pod; always run PoC tests in isolated test environments only.
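For the version side of this check, a small comparison helper can act as a first filter. It is a sketch that assumes KubeVirt version strings follow semantic‑versioning precedence (so 1.7.0‑alpha.0 sorts before 1.7.0‑beta.0, which sorts before 1.7.0); the helper names are hypothetical. It cannot see vendor backports, which may carry the fix under an older version number, so the commit and changelog checks above remain necessary.

```python
import re

FIXED_VERSION = "1.7.0-beta.0"  # first fixed release according to the advisory

_VERSION_RE = re.compile(
    r"v?(\d+)\.(\d+)\.(\d+)(?:-([0-9A-Za-z.-]+))?(?:\+.*)?"
)


def sort_key(version):
    """Build a tuple that orders versions by semantic-versioning precedence."""
    match = _VERSION_RE.fullmatch(version.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    core = tuple(int(part) for part in match.group(1, 2, 3))
    prerelease = match.group(4)
    if prerelease is None:
        return core, (1,)  # a final release sorts after all of its pre-releases
    identifiers = tuple(
        # Numeric identifiers compare as numbers and sort before alphanumeric ones.
        (0, int(part), "") if part.isdigit() else (1, 0, part)
        for part in prerelease.split(".")
    )
    return core, (0, identifiers)


def is_affected(version):
    """True when the version sorts strictly before the first fixed release."""
    return sort_key(version) < sort_key(FIXED_VERSION)


for candidate in ["v1.6.2", "1.7.0-alpha.0", "1.7.0-beta.0", "1.7.0"]:
    print(candidate, is_affected(candidate))
```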

Immediate mitigations and long‑term hardening

If you cannot upgrade immediately, apply compensating controls to narrow the attack surface.

Short‑term mitigations (compensating controls)

- Enforce strict RBAC: restrict who and what service accounts can create pods in namespaces with VMIs. Prefer separate namespaces for VMIs and applications.

- Use Admission Controllers / Validating Webhooks: block pod creations whose labels/annotations contain kubevirt.io/created‑by or DomainAnnotation unless created by trusted controller accounts.

- Harden CI/CD runners: prevent pipeline jobs from creating pods in production namespaces; require dedicated service accounts with minimal privileges.

- Network policy and Pod Security admission: isolate management plane and restrict who can reach control components.

- Audit and alert: enable API server audit logging for pod create/update events in VMI namespaces and trigger alerts for anomalous creators.

Long‑term hardening

- Upgrade KubeVirt to 1.7.0‑beta.0 or newer as soon as vendor‑supported releases are available. This is the definitive fix.

- Adopt namespace separation: run VMIs in dedicated, tightly controlled namespaces with minimal external write access.

- Treat labels and annotations as unauthenticated metadata: avoid making controller trust decisions that rely solely on user‑controlled labels. The KubeVirt fix reflects this engineering principle.

- Implement least‑privilege provisioning for tenants and pipelines: avoid granting pod create rights in production namespaces to untrusted or third‑party services.

- Increase CI/CD manifest secrecy: avoid publishing UIDs or deployment‑specific secrets in public repos; ensure ephemeral credentials and environment segregation for pipeline artifacts.

Operational checklist

- Inventory: enumerate namespaces containing VMIs and identify which actors can create pods there.

- Patch planning: schedule KubeVirt upgrade windows and test in staging with representative VMI workloads.

- Apply compensating controls: Admission Controllers, RBAC locks, and API audit filters.

- Monitor: enable alerts for pods created with the kubevirt.io/created‑by label or with misaligned ownership.

- Verify: confirm the fix via commit id and run a non‑destructive reconciliation test in staging.
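The admission‑control mitigation mentioned above can be sketched as the decision function of a validating webhook. This is a minimal illustration under stated assumptions: the annotation key `kubevirt.io/domain` for DomainAnnotation and the service account name in `TRUSTED_USERS` are assumptions to confirm against your own installation, and `review_pod` is a hypothetical name. The request and response shapes follow the Kubernetes `admission.k8s.io/v1` AdmissionReview object; serving it over HTTPS and registering it is left out.

```python
CREATED_BY_LABEL = "kubevirt.io/created-by"   # label named in the advisory text
DOMAIN_ANNOTATION = "kubevirt.io/domain"      # assumed key for DomainAnnotation

# Assumed identity of the component that legitimately creates launcher pods;
# verify the real service account name in your cluster before relying on it.
TRUSTED_USERS = {"system:serviceaccount:kubevirt:kubevirt-controller"}


def review_pod(admission_review):
    """Deny pod creation that carries KubeVirt ownership metadata
    unless the request comes from a trusted controller account."""
    request = admission_review["request"]
    meta = (request.get("object") or {}).get("metadata") or {}
    username = (request.get("userInfo") or {}).get("username", "")

    labels = meta.get("labels") or {}
    annotations = meta.get("annotations") or {}
    claims_vmi = CREATED_BY_LABEL in labels or DOMAIN_ANNOTATION in annotations

    allowed = (not claims_vmi) or username in TRUSTED_USERS
    response = {"uid": request["uid"], "allowed": allowed}
    if not allowed:
        response["status"] = {
            "code": 403,
            "message": "pods carrying KubeVirt ownership metadata may only "
                       "be created by trusted controller accounts",
        }
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }
```

Keying the decision on the presence of the metadata rather than on its value keeps the rule simple and avoids having to look up live VMI UIDs at admission time.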

Operational trade‑offs and residual risks

Strengths of KubeVirt’s response

- Focused patch: the upstream fix is surgical and targets the controller’s logic fallback rather than reworking the whole controller; this reduces regression risk.

- Public advisory and PoC: maintainers published an advisory and a controlled proof‑of‑concept, so operators can validate and test mitigations in labs.

Residual risks and caveats

- Attack surface remains where pod creation privileges are too broad: even with the fix, poor RBAC and namespace hygiene will continue to amplify risk across other logic decisions.

- Patch lifecycle for vendor backports: downstream distributors may take time to ship fixed packages for operator‑managed platforms or appliance images; coordinate with vendors. SUSE and other vendors have recorded the CVE and their own assessments, but administrators must verify vendor package mappings for their specific builds.

- Detection difficulty: controller confusion manifests as availability and misbehavior rather than a binary exploit footprint. Operators must tune behavioral telemetry and audits to reliably spot incidents.

- Public exploitation in the wild: as of the advisory and the public records aggregated by NVD and OSV, there is no confirmed widespread mass exploitation report; however, the advisory contains a practical PoC, so the lack of observed exploitation does not imply immaterial risk. Treat claims about "in‑the‑wild" exploitation as unverified until authoritative incident reports appear.

Broader context: why controller logic flaws matter

Controller components in Kubernetes (and in virtualization overlays like KubeVirt) act as the authoritative orchestrators of state. When controllers make trust assumptions about object metadata that can be controlled by tenants or pipelines (labels, annotations, syntactic fallbacks), a surprisingly powerful confusion primitive is introduced. Attackers who can influence cluster metadata can force controllers into erroneous decisions that have high operational cost, ranging from misconfiguration and drift to full workload denial. Similar availability‑centric vulnerabilities have previously caused hypervisor instability, guest hangs, and host reboots when controllers or drivers trusted unverified inputs; operators must treat availability‑first CVEs with as much urgency as confidentiality issues because service continuity is often the dominant business impact.

Final assessment and recommended action plan

CVE‑2025‑64435 is a credible availability risk for organizations using KubeVirt in environments where pod creation rights are not tightly controlled. The vulnerability is fixed upstream in KubeVirt 1.7.0‑beta.0 and by the commit referenced in the advisory; the straightforward remediation is to upgrade KubeVirt to an unaffected release or apply the vendor backport.

Priority actions (ranked)

- Confirm whether your cluster runs affected KubeVirt versions (any version < 1.7.0‑beta.0). If yes, prioritize remediation tickets.

- If you can upgrade immediately, plan a staged KubeVirt upgrade to a version containing the upstream commit; test migrations, hotplug, and lifecycle operations in staging first.

- If you cannot upgrade immediately, implement strict RBAC and admission controls to block pod creations that could impersonate virt‑launcher pods (label/annotation validation), and harden CI/CD runners.

- Improve auditing and alerting for pod creation events in VMI namespaces and capture controller logs for forensic analysis if anomalies appear.
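The auditing action above can be prototyped offline against an API server audit log before wiring it into an alerting system. The sketch below assumes the log is written as one JSON `audit.k8s.io/v1` event per line; the function name, the namespace set, and the allowlist of expected creators are hypothetical inputs you would supply.

```python
import json


def flag_pod_creations(audit_lines, vmi_namespaces, expected_creators):
    """Return (namespace, pod name, username) for pod creations in VMI
    namespaces whose requesting user is not in the expected set."""
    findings = []
    for line in audit_lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        # Count each request once: only look at the completed stage.
        if event.get("stage") != "ResponseComplete":
            continue
        ref = event.get("objectRef") or {}
        if event.get("verb") != "create" or ref.get("resource") != "pods":
            continue
        if ref.get("subresource"):
            continue  # skip pods/exec, pods/binding and similar
        if ref.get("namespace") not in vmi_namespaces:
            continue
        user = (event.get("user") or {}).get("username", "")
        if user not in expected_creators:
            findings.append((ref.get("namespace"), ref.get("name"), user))
    return findings
```

A typical use would read the audit log file line by line and pass in the namespaces identified during the inventory step; the pod name may be empty for requests that rely on generated names.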

This CVE is a reminder that availability attacks no longer require memory corruption or exotic kernel tricks to be operationally devastating — a small logic oversight in a controller accepting label‑only fallbacks can let an attacker with basic pod creation rights deny VMI availability. Combining immediate patching with robust RBAC, admission policies, and observability will both close the specific window and raise the bar for similar controller‑level attacks in the future.

KubeVirt vulnerability details and the upstream fix were verified against the KubeVirt security advisory and the patch commit published by the project, as well as corroborated against NVD/OSV and vendor advisories; operators should validate vendor package mappings and test the fix in staging before broad rollout. Additional operational context on virtualization availability risks and host‑level DoS scenarios is consistent with prior kernel/hypervisor availability advisories and community playbooks for responding to virtualization outages.

Source: MSRC Security Update Guide - Microsoft Security Response Center