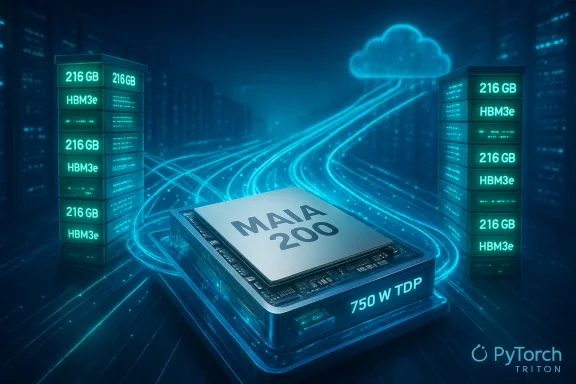

Microsoft’s Maia 200 announcement marks a decisive escalation in the hyperscaler silicon arms race: an inference‑first accelerator built on TSMC’s 3 nm process that Microsoft says is already in Azure racks and is explicitly tuned to lower the per‑token cost of running large language models like OpenAI’s GPT‑5.2. The Maia 200 promises a radical rebalancing of the memory, compute and networking trade‑offs that define inference economics, a design that Microsoft frames as delivering roughly 30% better performance‑per‑dollar for production inference while offering industry‑leading narrow‑precision throughput in FP4 and FP8 formats. These are vendor assertions, but they’re supported by an unusually detailed system pitch: a 750 W SoC envelope, over 140 billion transistors, 216 GB of HBM3e memory at roughly 7 TB/s, hundreds of megabytes of on‑die SRAM, and a bespoke two‑tier Ethernet scale‑up fabric exposing 2.8 TB/s of bidirectional scale‑up bandwidth per accelerator. Microsoft’s official blog lays out these claims and positions Maia 200 as the engine for Microsoft Foundry, Microsoft 365 Copilot, and hosted OpenAI models running on Azure. (blogs.microsoft.com/blog/2026/01/26/maia-200-the-ai-accelerator-built-for-inference/)

Background

Microsoft’s Maia program began as an internal experiment to co‑design silicon, rack architecture and the software stack. Maia 100 was an earlier, proof‑of‑concept step; Maia 200 is the productionized, inference‑first follow‑on that reframes the problem: inference, the repeated work of generating tokens, is the recurring cost center for cloud AI services, not the episodic cost of training. With Maia 200, Microsoft has explicitly traded some of the generality prized by training GPUs for density, deterministic latency, memory locality and quantization‑centric compute that purports to deliver more tokens per dollar and per watt. The company says early deployments are live in Azure’s US Central datacenter with US West 3 planned next, and a Maia SDK preview is being offered to accelerate model porting. (blogs.microsoft.com)

Why this matters now: hyperscalers increasingly treat custom silicon as a means to control operating margins, secure capacity, and tune product experiences. Microsoft’s narrative places Maia 200 at the center of that strategy, as a way to lower marginal inference costs for services that generate billions of tokens a day.

What Microsoft announced — specs and claims

Microsoft’s public technical claims are precise and system‑level rather than single‑parameter marketing. Here are the key vendor figures they published:

- Fabrication: TSMC 3 nm process; die transistor budget cited as over 140 billion transistors. (blogs.microsoft.com)

- Compute: native tensor cores for FP4 (4‑bit) and FP8 (8‑bit) datatypes, with per‑chip vendor‑stated peaks of >10 petaFLOPS (FP4) and >5 petaFLOPS (FP8). (blogs.microsoft.com)

- Memory: 216 GB HBM3e on package delivering ~7 TB/s of memory bandwidth, together with ~272 MB of on‑die SRAM for staging and caching. (blogs.microsoft.com)

- Power & thermal: a ~750 W SoC TDP (packaged, designed into liquid‑cooled trays at the rack level). (blogs.microsoft.com)

- Interconnect: a two‑tier scale‑up network using standard Ethernet plus a Maia AI transport protocol, with 2.8 TB/s of dedicated bidirectional scale‑up bandwidth per accelerator and predictable collective operations across clusters of up to 6,144 accelerators. (blogs.microsoft.com)

- Economics & comparisons: Microsoft claims 3× FP4 performance over Amazon’s Trainium Gen‑3 and FP8 performance that exceeds Google’s TPU v7; the company also reports ~30% improvement in performance‑per‑dollar over its current fleet for inference workloads. (blogs.microsoft.com)
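
To put the headline peaks in context, a roofline-style back-of-the-envelope calculation shows how many operations per byte of HBM traffic a kernel would need before the vendor-stated FLOPS, rather than memory bandwidth, become the limit. The sketch below uses only the figures quoted above and treats the ">" and "~" values as exact; the function names and the 4 FLOP/byte example kernel are illustrative assumptions, not Microsoft data.

```python
# Roofline-style estimate from the vendor-stated figures quoted above.
# Assumption: the ">10 PFLOPS", ">5 PFLOPS" and "~7 TB/s" values are treated as exact.

PEAK_FP4_FLOPS = 10e15   # >10 petaFLOPS (FP4), vendor-stated
PEAK_FP8_FLOPS = 5e15    # >5 petaFLOPS (FP8), vendor-stated
HBM_BANDWIDTH = 7e12     # ~7 TB/s, vendor-stated


def ridge_point(peak_flops: float, bandwidth: float) -> float:
    """FLOPs per byte needed before compute, not memory, is the bottleneck."""
    return peak_flops / bandwidth


def attainable_flops(intensity: float, peak_flops: float, bandwidth: float) -> float:
    """Classic roofline bound: min(peak, intensity * bandwidth)."""
    return min(peak_flops, intensity * bandwidth)


if __name__ == "__main__":
    print(f"FP4 ridge point: {ridge_point(PEAK_FP4_FLOPS, HBM_BANDWIDTH):.0f} FLOP/byte")
    print(f"FP8 ridge point: {ridge_point(PEAK_FP8_FLOPS, HBM_BANDWIDTH):.0f} FLOP/byte")
    # Hypothetical memory-bound kernel at 4 FLOP/byte (an assumed value).
    low = attainable_flops(4.0, PEAK_FP4_FLOPS, HBM_BANDWIDTH)
    print(f"Attainable at 4 FLOP/byte: {low / 1e12:.0f} TFLOPS")
```

Under these assumptions the FP4 ridge point sits above 1,400 FLOP/byte, which is why memory behavior, not peak arithmetic, tends to decide real token throughput.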

Deep dive: the architectural choices that matter

Microsoft’s pitch rests on three tightly coupled levers: narrow‑precision compute, a memory‑centric SoC/package, and a deterministic, Ethernet‑based scale‑up fabric. Each deserves unpacking.

Narrow‑precision compute (FP4 / FP8)

- Why narrow precision? Modern inference workloads can tolerate aggressive quantization without large quality degradation, particularly when models and tokenizers are designed for it. Quantization reduces memory footprint and arithmetic cost, enabling more model state to remain “close” to compute and reducing cross‑device communication. Microsoft designed Maia 200 with native FP4 and FP8 tensor units to exploit that trade. (blogs.microsoft.com)

- Caveat: FP4 quantization is not universally lossless. The effectiveness depends on model architecture, training/fine‑tuning workflows (quantization‑aware training or post‑training calibration), and the nature of the workload (reasoning vs. numeric fidelity). Organizations will need to measure accuracy and hallucination characteristics carefully when migrating critical inference flows. Independent reporting and industry analysts underscore that peak FLOPS are theoretical, and real token throughput depends on memory behavior and software optimizations.
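
The fidelity caveat can be explored without any Maia hardware by simulating a 4-bit round-trip in software. The sketch below is a minimal illustration, assuming the E2M1 value grid (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, 6) and simple per-block max scaling; the source does not say which FP4 encoding or scaling scheme Maia 200 uses, and the sample weights are made up.

```python
# Simulated FP4 round-trip on a block of weights (pure Python, no hardware needed).
# Assumption: the E2M1 value grid and per-block max scaling are illustrative;
# the source does not state which FP4 encoding or scaling scheme Maia 200 uses.

E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
# Negative values first, then zero and the positive values, in ascending order.
FP4_GRID = [-m for m in reversed(E2M1_MAGNITUDES[1:])] + E2M1_MAGNITUDES


def quantize_block(values: list[float]) -> list[float]:
    """Scale so the largest magnitude maps to 6.0, snap to the grid, rescale."""
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return [0.0] * len(values)
    scale = max_abs / 6.0
    return [min(FP4_GRID, key=lambda g: abs(g - v / scale)) * scale for v in values]


def mean_abs_error(original: list[float], restored: list[float]) -> float:
    """Average absolute difference between original and round-tripped values."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


if __name__ == "__main__":
    weights = [0.02, -0.75, 0.31, 1.20, -0.05, 0.66, -1.10, 0.09]  # made-up sample
    restored = quantize_block(weights)
    for w, r in zip(weights, restored):
        print(f"{w:+.2f} -> {r:+.2f}")
    print(f"mean abs error: {mean_abs_error(weights, restored):.4f}")
```

Small-magnitude weights collapse toward zero or the nearest coarse step, which is the kind of effect that calibration or quantization-aware training is meant to contain.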

Memory subsystem: HBM3e + on‑die SRAM + DMA/NoC

- Microsoft puts memory and data movement at the center of Maia 200. By pairing 216 GB HBM3e with a substantial on‑die SRAM buffer and a hierarchical DMA/NoC fabric, the design aims to minimize off‑package traffic and maximize token throughput. For inference, keeping large swaths of weights and key/value caches local is a major advantage. (blogs.microsoft.com)

- Practical implication: a single Maia 200 can host larger contiguous model shards and KV caches, reducing the number of accelerators participating in each token generation — and that directly improves latency and utilization. But the memory strategy also increases package complexity and raises thermal/packaging requirements at the rack level. (blogs.microsoft.com)
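
The capacity argument comes down to simple arithmetic: weight bytes scale with bits per parameter, so halving precision halves the number of devices needed just to hold a model. The sketch below assumes hypothetical parameter counts and decimal gigabytes, and it ignores KV cache, activations and runtime overhead, so real headroom would be smaller.

```python
# Weight footprint versus the 216 GB of HBM3e quoted above.
# Assumptions: the parameter counts are hypothetical examples; KV cache,
# activations, and runtime overhead are ignored, so real headroom is smaller.
import math

HBM_CAPACITY_BYTES = 216e9  # 216 GB, vendor-stated (decimal GB assumed)
BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}


def weight_bytes(num_params: float, fmt: str) -> float:
    """Bytes needed to store the weights alone in the given format."""
    return num_params * BITS_PER_PARAM[fmt] / 8


def min_accelerators(num_params: float, fmt: str) -> int:
    """Lower bound on devices needed just to hold the weights."""
    return math.ceil(weight_bytes(num_params, fmt) / HBM_CAPACITY_BYTES)


if __name__ == "__main__":
    for params in (70e9, 400e9, 1e12):  # hypothetical model sizes
        for fmt in BITS_PER_PARAM:
            gb = weight_bytes(params, fmt) / 1e9
            print(f"{params / 1e9:>6.0f}B params @ {fmt}: {gb:>6.0f} GB "
                  f"-> at least {min_accelerators(params, fmt)} accelerator(s)")
```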

Two‑tier Ethernet scale‑up fabric and the Maia transport

- Microsoft intentionally chose a standard Ethernet foundation rather than relying exclusively on proprietary fabrics like InfiniBand. The two‑tier design — with direct, non‑switched links among four accelerators per tray and an Ethernet‑based Maia transport for inter‑rack collectives — prioritizes predictable latency and leverages existing datacenter switching ecosystems. Microsoft claims this yields cost advantages and scalability up to 6,144 accelerators for collective operations. (blogs.microsoft.com)

- This is also a bet on software: to realize the promise, collective libraries and the SDK must hide complexity and ensure low‑latency collectives comparable to what tightly coupled fabrics provided previously. Early SDK features (PyTorch integration, Triton compiler, low‑level NPL) indicate Microsoft is addressing that challenge, but ecosystem maturity will take time. (blogs.microsoft.com)
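
To see why per-accelerator link bandwidth matters for collectives, a bandwidth-only cost model is a useful first approximation. The sketch below assumes a ring all-reduce, 1.4 TB/s usable per direction (half of the quoted 2.8 TB/s bidirectional figure) and zero per-hop latency; the source does not say which collective algorithm the Maia transport uses, and the message size is a made-up example.

```python
# Bandwidth-only cost model for a ring all-reduce.
# Assumptions: ring algorithm, 1.4 TB/s usable per direction (half of the
# vendor-stated 2.8 TB/s bidirectional figure), zero per-hop latency.
# The source does not say which collective algorithm Maia's transport uses.

PER_DIRECTION_BW = 2.8e12 / 2  # bytes per second


def ring_allreduce_seconds(message_bytes: float, num_devices: int,
                           bandwidth: float = PER_DIRECTION_BW) -> float:
    """Each device sends 2*(N-1)/N of the message in a ring all-reduce."""
    if num_devices < 2:
        return 0.0
    traffic = 2 * (num_devices - 1) / num_devices * message_bytes
    return traffic / bandwidth


if __name__ == "__main__":
    message = 64e6  # hypothetical 64 MB tensor
    for n in (4, 64, 6144):
        micros = ring_allreduce_seconds(message, n) * 1e6
        print(f"{n:>5} devices: {micros:.1f} microseconds (bandwidth term only)")
```

The bandwidth term flattens out as device count grows; in practice the omitted latency term grows with the number of hops, which is where transport and library quality would show up.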

Independent corroboration and what’s still vendor‑claimed

Multiple independent publications repeated Microsoft’s numbers and placed them against Trainium and TPU v7 specifications. Outlets such as The Verge, Tom’s Hardware and CRN cited the same headline figures and emphasized Microsoft’s memory and networking innovations as the defining differentiator. Those sources are consistent with Microsoft’s blog but do not replace independent benchmarking.

Important caution points:

- Peak FP4 and FP8 FLOPS are vendor‑stated maxima. Real token throughput depends on quantization efficiency, cache hit rates, model partitioning, and software stack maturity. Treat vendor FLOPS claims as engineering targets, not guaranteed application performance. (blogs.microsoft.com)

- Transistor counts and package bandwidth figures are difficult to independently verify without teardown and lab benchmarks. Microsoft’s detailed system story is compelling, but objective performance and TCO will come from workload‑level tests run by independent labs or large customers.

Why Maia 200 could matter — practical benefits

If Microsoft’s claims hold up in representative workloads, Maia 200 offers several concrete advantages for organizations running large‑scale inference:

- Cost efficiency: Microsoft asserts ~30% better performance‑per‑dollar for inference versus its prior fleet, which, at hyperscaler scale, compounds into material margin improvements for services and lower costs for customers. This is the core commercial case for first‑party silicon. (blogs.microsoft.com)

- Lower latency and higher utilization: larger on‑package memory and predictable scale‑up collectives can reduce the number of devices involved per token, trimming tail latency and increasing accelerator utilization.

- Improved capacity control: by owning the inference stack, Microsoft can manage supply, scheduling and pricing with less dependence on third‑party GPU market dynamics.

- A cloud‑native developer experience: the Maia SDK (PyTorch support, Triton compiler, NPL) aims to smooth migration and optimization for models, which matters for adoption across enterprises and research groups. (blogs.microsoft.com)
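
One point worth making explicit: a 30% gain in performance‑per‑dollar is not a 30% cut in cost per token. If the same spend serves 1.3 times the tokens, each token costs 1/1.3 of the baseline, roughly 23% less. The sketch below assumes "performance" means tokens served; the baseline price is a hypothetical placeholder, not an Azure price.

```python
# Converting a performance-per-dollar gain into a cost-per-token change.
# Assumption: "performance" is read as tokens served; the baseline price below
# is a hypothetical placeholder, not an Azure price.

def cost_per_token_ratio(perf_per_dollar_gain: float) -> float:
    """A gain of 0.30 (30%) means 1.3x tokens per dollar, so cost scales by 1/1.3."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


if __name__ == "__main__":
    ratio = cost_per_token_ratio(0.30)
    print(f"cost per token: {ratio:.3f}x baseline ({(1 - ratio) * 100:.1f}% lower)")
    baseline_usd_per_million = 2.00  # hypothetical price per 1M tokens
    print(f"${baseline_usd_per_million:.2f} -> "
          f"${baseline_usd_per_million * ratio:.2f} per 1M tokens")
```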

Risks, caveats and open questions

Maia 200 is strategically significant, but it also raises technical and operational risks enterprises should weigh carefully.

1) Model compatibility and quality risk from FP4 quantization

Aggressive quantization can break model behavior for certain tasks. Enterprises must validate:

- Accuracy loss and failure modes under FP4, particularly for safety‑sensitive or instruction‑following behaviors.

- Toolchain maturity for quantization‑aware training or mixed‑precision fallbacks.

- Whether third‑party models (and their licensing terms) permit the required retraining or calibration workflows.

2) Software and ecosystem maturity

The Maia SDK includes attractive primitives, but the heavy lifting is in integration:

- Distributed collective libraries, kernel libraries, Triton backends and orchestration must all reach parity with existing GPU stacks to avoid friction.

- Tooling for debugging numerical fidelity under FP4 is essential. Microsoft’s preview SDK addresses these but widespread production readiness will take time. (blogs.microsoft.com)

3) Thermal, power and datacenter ops complexity

A 750 W SoC per package, large HBM stacks and new liquid cooling arrangements increase operational complexity:

- Datacenter operators must provision liquid cooling or enhanced heat‑exchanger units and rethink power distribution at the rack level.

- Higher density increases failure domain and maintenance complexity; operational playbooks need updating. (blogs.microsoft.com)

4) Supply chain and vendor concentration risk

Maia 200 depends on TSMC’s 3 nm node and specialized packaging capability:

- TSMC capacity constraints at advanced nodes create ramp risk and may limit broader availability in the near term.

- The industry moves toward more custom silicon; supply chain diversification and contractual clarity will become critical negotiation points for cloud consumers and partners.

5) Portability and potential lock‑in

Running production models on Maia‑specific optimizations can create migration friction:

- Proprietary transport and NPL language, if used heavily, may hinder easy movement between clouds or back to commodity GPUs. Microsoft is promoting portability via PyTorch and Triton support, but the practical cost of re‑optimization matters. (blogs.microsoft.com)

Competitive and market implications

Maia 200 is a direct answer to the economics of large‑scale inference and a strategic electric fence around Azure’s AI services. Its arrival has several likely market effects:

- Pressure on AWS and Google to accelerate their own inference‑centric roadmap or expand Trainium/TPU capabilities and pricing. Analysts already position Maia 200 as a move to reduce reliance on Nvidia for inference, though Nvidia remains dominant for training and retains a robust software ecosystem.

- Downward pressure on inference pricing: if Maia‑backed Azure SKUs deliver genuine tokens‑per‑dollar improvements at scale, competing clouds may adjust prices or accelerate in‑house silicon efforts.

- Peripheral winners in networking, switch gear and packaging supply chains: Microsoft’s Ethernet‑scale approach increases demand for high‑performance Ethernet switching and NICs tailored to scale‑up topologies, benefiting vendors that support that stack.

Practical guidance for IT leaders and developers

For enterprise teams and platform owners evaluating Maia‑backed offerings, here are pragmatic next steps to separate promises from production truth:

- Run representative pilots. Choose 2–3 production workloads that reflect your heaviest inference patterns and measure accuracy, latency, cost and failure modalities under Maia‑targeted quantization.

- Quantization validation. Test both post‑training quantization and quantization‑aware training flows to measure fidelity loss and the operational overhead of retraining.

- Measure tail latency and SLAs. High percentile latency under load is the practical limiter for many user‑facing services — verify that Maia‑backed deployments meet your SLAs.

- Evaluate portability. Ensure you can fall back to GPU‑backed instances if necessary; invest in model abstractions (ONNX, Triton) that reduce rework.

- Prepare datacenter ops. If you are an Azure customer running private racks or ExpressRoute connections, confirm cooling, power and reliability implications with your account team.

- Negotiate contract terms. If Maia‑backed instances become strategic, include testing‑based performance clauses and migration support in contractual commitments.
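
The pilot steps above can be reduced to a few numbers worth tracking per workload: median and tail latency against the SLA, and tokens per dollar. The following is a minimal sketch, assuming a nearest-rank percentile definition; the sample latencies, SLA threshold, token count and hourly price are all hypothetical.

```python
# Pilot summary: tail latency and tokens per dollar from measured requests.
# Assumptions: the sample data, SLA threshold, and hourly price are hypothetical;
# nearest-rank is one of several common percentile definitions.
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def tokens_per_dollar(total_tokens: int, hours: float, usd_per_hour: float) -> float:
    """Tokens served per dollar of instance time."""
    return total_tokens / (hours * usd_per_hour)


if __name__ == "__main__":
    latencies_ms = [112.0, 98.0, 105.0, 240.0, 101.0, 99.0, 130.0, 97.0, 410.0, 103.0]
    sla_ms = 300.0  # hypothetical p99 target
    p50 = percentile(latencies_ms, 50)
    p99 = percentile(latencies_ms, 99)
    print(f"p50 = {p50:.0f} ms, p99 = {p99:.0f} ms, SLA met: {p99 <= sla_ms}")
    # Hypothetical run: 18M tokens served over 2 hours at $40/hour.
    print(f"tokens per dollar: {tokens_per_dollar(18_000_000, 2.0, 40.0):,.0f}")
```

Running the same script against GPU‑backed and Maia‑backed deployments of the same workload gives a like-for-like comparison that vendor peak figures cannot.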

What to watch next

- Independent benchmarks and lab reports that measure token‑per‑dollar across representative production models (instruction following, summarization, code generation). Those will be the clearest validators of Maia 200’s economic claims.

- SDK and runtime maturity: Triton integration, NPL adoption patterns, and the completeness of Maia’s optimized kernel library will determine how fast customers can move critical workloads.

- Regional availability and capacity ramps: Microsoft’s initial public deployment in US Central followed by US West 3 is only the start — availability in additional regions and public SKUs for customers will shape adoption timelines. (blogs.microsoft.com)

- Competitive responses from AWS, Google and Nvidia: expect pricing moves, new hardware announcements or software incentives that seek to blunt Maia’s market impact.

Final assessment

Maia 200 is a credible, system‑level engineering statement: Microsoft has packaged compute, memory and networking choices around the economics of inference rather than the flexibility of training. The publicly shared numbers, including 216 GB HBM3e, ~7 TB/s, >10 PFLOPS (FP4), >5 PFLOPS (FP8), 2.8 TB/s scale‑up bandwidth and a multi‑thousand accelerator collective, describe a tightly integrated, inference‑centric platform with the potential to change token economics inside Azure and for Microsoft’s cloud partners. Independent reporting repeats the major claims from Microsoft’s own documentation, while also underscoring that workload‑level validation is essential. (blogs.microsoft.com)

For WindowsForum readers and IT leaders, Maia 200 is a development to pilot aggressively but adopt cautiously. The upside (lower operating costs, improved throughput and better capacity control) is real if quantitative, real‑world tests confirm Microsoft’s claims. The downsides (model fidelity risks under extreme quantization, software maturity hurdles, and operational complexity at the datacenter level) are equally real and worth measuring before production migrations. Microsoft’s launch is a competitive win for cloud customers in the long run: more vendor options and innovation in inference infrastructure should drive better price‑performance and cloud services that are more tailored to production AI realities.

In short: Maia 200 raises the stakes in inference infrastructure. It is a bold systems play by Microsoft — technically coherent, strategically timed, and practically significant — but the ultimate verdict will be decided in labs, pilot programs and the first independent workload benchmarks that measure true tokens‑per‑dollar under real application conditions.

Source: Quantum Zeitgeist Microsoft Unveils Maia 200: New AI Inference Accelerator For GPT-5.2