Microsoft’s Maia 200 has moved from lab talk to production racks, and CEO Satya Nadella was explicit that the move won’t end long-standing partnerships with Nvidia or AMD, even as Microsoft touts aggressive performance claims for its new inference accelerator.

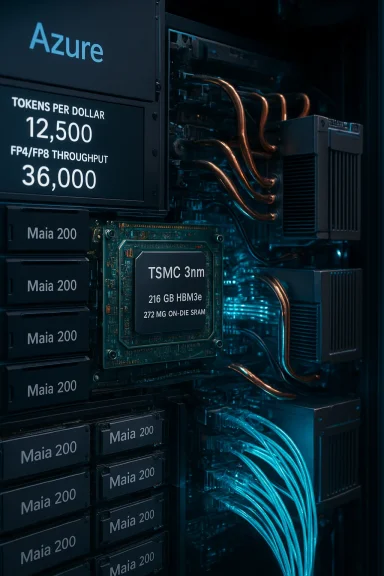

Background / Overview

Microsoft announced Maia 200 as a purpose-built AI inference accelerator designed to reduce per-token cost and improve throughput for production large language model (LLM) workloads. The company says Maia 200 is fabricated on TSMC’s 3 nm process, features a memory-centric design with large HBM3e capacity, and is tuned for low-precision tensor math (FP4 and FP8), the operating point many vendors now favour for high-volume inference. Those product claims are laid out in Microsoft’s official blog and echoed by multiple independent outlets.

At the same time, Microsoft made a strategic point: building first‑party silicon is about capacity, cost and systems integration, not exclusivity. Nadella said Microsoft will continue buying from Nvidia and AMD while developing its own chips, and Microsoft has already begun deploying Maia 200 inside Azure data centres (US Central first, US West 3 next). That message, doubling down on custom silicon without abandoning partners, is central to Microsoft’s positioning.

What Microsoft says Maia 200 is (and the numbers it published)

Microsoft’s public materials and blog list a precise technical story. Cross-checking Microsoft’s announcement against contemporary press reporting shows consistent headline figures; however, many of those figures are vendor-provided and should be treated accordingly.

Key published claims:

- Built on TSMC 3 nm node with a transistor count described in the hundreds of billions.

- Native FP4 and FP8 tensor cores, with peak chip-level throughput claimed at ~10 petaFLOPS (FP4) and ~5 petaFLOPS (FP8).

- 216 GB HBM3e on-package memory with around 7 TB/s of memory bandwidth, and ~272 MB of on‑die SRAM to reduce off-chip movement.

- A 750 W SoC thermal envelope per chip, with rack and tray designs that connect multiple Maia accelerators with direct links and an Ethernet-based, two-tier scale-up transport.

- Microsoft’s claim of ~30% better performance-per-dollar for inference compared with the “latest generation hardware in our fleet today.”
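To put the published figures in perspective, here is a minimal back-of-envelope sketch. It takes Microsoft's claimed peak throughput, HBM capacity and bandwidth at face value and derives three rough quantities: the roofline ridge point, how many parameters fit in HBM (weights only), and a bandwidth-bound ceiling for single-stream decoding. The 70B-parameter model and all function names are illustrative assumptions, and the decode ceiling ignores KV-cache traffic, batching, on-die SRAM reuse and interconnect overheads.

```python
# Back-of-envelope figures derived from the vendor-published Maia 200 numbers.
# Inputs are Microsoft's claims as quoted above; the model size is hypothetical.

PEAK_FLOPS = {"fp4": 10e15, "fp8": 5e15}   # ~10 and ~5 petaFLOPS (claimed peak)
HBM_BYTES = 216e9                           # 216 GB HBM3e
HBM_BANDWIDTH = 7e12                        # ~7 TB/s
BYTES_PER_PARAM = {"fp4": 0.5, "fp8": 1.0}  # storage per weight at each precision


def ridge_point(precision: str) -> float:
    """FLOPs per byte needed before compute, not memory, becomes the limit."""
    return PEAK_FLOPS[precision] / HBM_BANDWIDTH


def max_params_in_hbm(precision: str) -> float:
    """Upper bound on parameter count whose weights alone fit in HBM."""
    return HBM_BYTES / BYTES_PER_PARAM[precision]


def decode_tokens_per_second_bound(params: float, precision: str) -> float:
    """Bandwidth-bound ceiling for single-stream decoding on one chip.

    Assumes every weight is read from HBM once per generated token.
    """
    weight_bytes = params * BYTES_PER_PARAM[precision]
    return HBM_BANDWIDTH / weight_bytes


if __name__ == "__main__":
    hypothetical_params = 70e9  # illustrative dense model, not from the source
    for p in ("fp4", "fp8"):
        print(p, "ridge point (FLOPs/byte):", round(ridge_point(p)))
        print(p, "params that fit, billions:", max_params_in_hbm(p) / 1e9)
        print(p, "decode ceiling (tokens/s):",
              round(decode_tokens_per_second_bound(hypothetical_params, p)))
```

Under these assumptions the ridge point is roughly 1,429 FLOPs/byte at FP4 and 714 at FP8, which is why low-batch token generation tends to be limited by memory bandwidth rather than by the headline PFLOPS figure.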

How Maia 200 is positioned against Trainium, TPU and GPUs

Microsoft explicitly compared Maia 200’s FP4 and FP8 performance to Amazon’s Trainium and Google’s TPU v7 in its launch materials, and independent reporting reproduced those comparisons. Microsoft claims Maia 200 delivers roughly 3× the FP4 performance of Trainium Gen‑3 and FP8 performance above Google’s TPU v7.

Important context and caveats:

- Vendor-to-vendor comparisons are useful signalling — they highlight architectural intent and target workloads — but they are not a substitute for independent, workload-level benchmarking. Microsoft’s blog and public figures give only part of the test configuration; independent tests will be necessary to validate real‑world tokens-per-dollar and latency under sustained production traffic.

- The Maia 200 is marketed as an inference-first accelerator. That focus matters: many high-precision training workloads still favour Nvidia’s latest Blackwell class GPUs for raw training throughput and for the maturity of the CUDA ecosystem. Maia’s elevator pitch is cheaper, denser inference at cloud scale rather than wholesale replacement of GPU training clusters.

The business case: why Microsoft builds Maia 200

Microsoft’s rationale is straightforward and multi‑layered:

- Capacity and supply resilience. Advanced GPUs are scarce and expensive; owning a portion of the silicon stack reduces reliance on external suppliers and gives Microsoft leverage in procurement and product design.

- Inference economics. Serving tokens at scale is a recurring line item. A 30% perf/$ gain on inference — if realized in practice — materially lowers operating costs for services like Microsoft 365 Copilot, Foundry and hosted models.

- Systems-level optimization. Microsoft is selling Maia as silicon plus software plus racks plus Ethernet fabric, a combined systems play that promises predictable collective operations and easier integration into Azure’s control plane. That system view is a differentiator vs. purely chip-focused vendors.
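A performance-per-dollar gain is not the same as an equal cut in spend, so the 30% claim is worth translating. The sketch below assumes constant token volume; the function names, the spend figure in the usage note, and the `realized` discount factor are illustrative assumptions, not anything Microsoft has published.

```python
def cost_reduction_from_perf_per_dollar(gain: float) -> float:
    """Fractional drop in cost per unit of work for a perf-per-dollar gain.

    A gain of 0.30 means 1.30x the work per dollar, so each unit of work
    costs 1 / 1.30 of what it did before.
    """
    if gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 - 1.0 / (1.0 + gain)


def annual_savings(baseline_spend: float, gain: float, realized: float = 1.0) -> float:
    """Savings at constant token volume if only `realized` of the gain shows up."""
    return baseline_spend * cost_reduction_from_perf_per_dollar(gain * realized)


if __name__ == "__main__":
    print(f"{cost_reduction_from_perf_per_dollar(0.30):.1%}")
    print(round(annual_savings(1_000_000, 0.30, realized=0.5)))
```

If the full 30% gain were realized, cost per token would fall by about 23%, not 30%. On a hypothetical $1,000,000 annual inference bill, realizing only half of the claimed gain would save roughly $130,000.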

Strengths: what Maia 200 could deliver, if the claims hold up

- Lowered per-token costs for inference-heavy services. Microsoft projects a 30% perf-per-dollar advantage; even if real-world gains are smaller, the direction is meaningful for high-volume services.

- Memory-first architecture that addresses real bottlenecks. Large HBM3e capacity and on-die SRAM reduce the bandwidth and latency limitations that often throttle token generation on large models. That’s a pragmatic engineering choice for inference economics.

- Integration with Azure tooling. A Maia SDK with PyTorch and Triton support, a Maia simulator and cost calculator could ease porting for developers and reduce migration friction over time. Integration with Azure’s control plane also helps operations teams manage heterogeneous fleets.

- Strategic leverage in supply negotiations. Building viable first-party silicon changes Microsoft’s bargaining position with external vendors and may unlock long-term cost and capacity benefits even before Maia becomes the default hosting option.

Risks, unknowns and technical caveats

No silicon announcement is free of execution risk. Here are the primary concerns WindowsForum readers and IT decision-makers should track carefully.

1. Vendor benchmarks vs. independent validation

Microsoft’s performance comparisons are company-published claims and early press reproductions; independent third‑party benchmarks (measuring metrics like tokens-per-dollar, tail latency, quantization fidelity under load, and sustained throughput) are essential before any large migration. Treat vendor PFLOPS numbers as directional until neutral tests appear.

2. Software maturity and portability

Nvidia’s long-standing advantage is not just silicon but a massive, mature software ecosystem (CUDA, cuDNN, Triton, third-party libraries and tuned kernels). Microsoft’s Maia SDK and Triton compiler support are critical, but real-world model porting, especially to FP4/FP8 quantized formats, requires engineering effort and validation of model quality at low precision. Adoption will depend heavily on tooling quality and community uptake.

3. HBM3e supply, TSMC yield constraints and rollout speed

Maia relies on advanced packaging (HBM3e) and TSMC’s 3 nm process. Advanced nodes can suffer initial yield and supply constraints, which affects how fast Microsoft can scale Maia across regions. Early deployment is U.S.-centric (Iowa, Phoenix); broad global availability will take more time and depends on foundry capacity.

4. Operational and datacenter realities

A 750 W chip TDP with dense racks mandates robust cooling and power provisioning. Microsoft’s tray and sidecar cooling designs mitigate those constraints, but power and cooling limits, field serviceability and regional power cost differences will shape where Maia racks make economic sense. Enterprises should not assume Maia support will automatically suit every Azure region or tenant configuration. (tomshardware.com)

5. Market fragmentation and migration complexity

Heterogeneous fleets (GPUs, TPUs, Trainium, Maia) benefit the market but create migration complexity. Enterprises will need multi-backend orchestration strategies, portability layers and fallbacks. Without careful architectural planning, fragmentation can become operational risk rather than a competitive advantage.

What the Nadella quote really signals for Nvidia and AMD partnerships

Nadella’s line, that Microsoft will continue buying from Nvidia and AMD while innovating with first‑party silicon, is both practical and strategic. It signals:

- A pragmatic hedging strategy: Microsoft will diversify rather than substitute entirely. Suppliers remain necessary for training and for customers who require GPU interoperability.

- A negotiating posture: owning credible in-house silicon gives Microsoft leverage on price and supply, but it is not a commitment to exclusivity. This balance reduces procurement risk and preserves choice for customers that depend on specific ecosystems.

- A hybrid reality: expect continued coexistence — GPUs for training and flexible workloads, Maia-class accelerators for highly-optimized, high-volume inference inside Azure. That is the likely equilibrium over the next 12–36 months.

Practical advice for WindowsForum readers, IT architects and cloud buyers

If you manage AI infrastructure, here are practical steps to evaluate Maia‑era opportunities without exposing production risk.

- Start with representative pilots:

  - Pick one or two inference-heavy, non-critical services and validate tokens-per-dollar, latency percentiles and model fidelity when quantized to FP8 or FP4.

- Preserve portability:

  - Use containerized runtimes and abstraction layers (Triton, ONNX Runtime, or internal orchestrators) to avoid long-term lock-in to any single accelerator.

- Require workload-level metrics:

  - Ask cloud providers for real performance numbers on your workloads, not just vendor PFLOPS, specifically tokens-per-dollar under sustained traffic and 99th percentile tail latencies.

- Budget for toolchain work:

  - Plan engineering time to adapt models to FP8/FP4 quantization and to test edge cases where numerical precision affects results.

- Design hybrid infrastructure:

  - Keep training on proven GPU fleets and move inference to optimized Maia-like instances only where tests show compelling cost or latency wins.
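The workload-level metrics named in the steps above are simple to compute once a pilot has produced raw measurements. The sketch below is one possible way to do it, not an Azure or Maia API: `PilotRun`, its fields and the helper names are hypothetical, and the percentile uses the nearest-rank method on whole-number percentiles.

```python
from dataclasses import dataclass


@dataclass
class PilotRun:
    """Measurements from one sustained pilot run (all fields are hypothetical)."""
    tokens_generated: int
    instance_hours: float
    hourly_price_usd: float
    latencies_ms: list[float]


def tokens_per_dollar(run: PilotRun) -> float:
    """Tokens served per dollar of instance cost over the whole run."""
    cost = run.instance_hours * run.hourly_price_usd
    if cost <= 0:
        raise ValueError("run cost must be positive")
    return run.tokens_generated / cost


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile; pct is a whole number from 1 to 100."""
    if not values:
        raise ValueError("no latency samples")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(values)
    rank = -(-pct * len(ordered) // 100)  # integer ceiling division
    return ordered[rank - 1]


def relative_gain(candidate: PilotRun, baseline: PilotRun) -> float:
    """Fractional tokens-per-dollar improvement of candidate over baseline."""
    return tokens_per_dollar(candidate) / tokens_per_dollar(baseline) - 1.0
```

Comparing `relative_gain` and `percentile(run.latencies_ms, 99)` for the same workload on a GPU instance and on a Maia-backed instance gives a like-for-like view that vendor PFLOPS figures cannot.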

What to watch next (short list)

- Independent benchmarks from reputable labs (MLPerf-style or neutral research labs) that report tokens-per-dollar, tail-latency and quantized model accuracy on common LLMs.

- Availability of Maia-backed Azure SKUs, pricing, and regional rollouts beyond US Central and US West 3.

- Maia SDK availability and the quality of Triton/PyTorch integrations and compilers. Strong developer tooling will determine adoption speed.

- Supply signals from TSMC: yields, HBM3e supply pricing and lead times will directly affect how quickly Microsoft can scale Maia deployments.

- Competitive responses from Nvidia, AWS and Google: pricing changes, new instance types, or improved software portability could reshape the calculus quickly.
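Of the items above, quantized model accuracy is the one teams can start probing before any Maia hardware is available to them. The sketch below simulates a 4-bit floating-point round trip and reports the resulting relative error. It assumes the E2M1 value grid with a single per-tensor scale, which is an illustrative choice: Microsoft's exact FP4 encoding and scaling scheme are not described in the source, and the function names are hypothetical.

```python
import math

# Magnitudes representable in a 4-bit E2M1 float (sign handled separately).
# Assumption: this grid stands in for whatever FP4 format the hardware uses.
FP4_E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_fp4(values: list[float]) -> list[float]:
    """Round each value to the nearest grid point using one per-tensor scale.

    The scale maps the largest magnitude in the tensor onto 6.0, the top of
    the grid. Ties resolve to the smaller magnitude, not round-to-even.
    """
    peak = max((abs(v) for v in values), default=0.0)
    scale = peak / 6.0 if peak > 0 else 1.0
    quantized = []
    for v in values:
        target = abs(v) / scale
        nearest = min(FP4_E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        quantized.append(math.copysign(nearest * scale, v))
    return quantized


def relative_error(original: list[float], quantized: list[float]) -> float:
    """L2 norm of the quantization error divided by the L2 norm of the input."""
    num = math.sqrt(sum((a - b) ** 2 for a, b in zip(original, quantized)))
    den = math.sqrt(sum(a * a for a in original))
    return num / den if den > 0 else 0.0


if __name__ == "__main__":
    weights = [5.0, 2.4, -0.3, 0.05]  # hypothetical weight values
    approx = quantize_fp4(weights)
    print(approx, relative_error(weights, approx))
```

A per-tensor scale is the crudest option; finer-grained block scaling generally lowers the error, and the end-to-end test that matters is task accuracy on the real model rather than weight-level error.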

Final analysis and verdict

Maia 200 is a consequential, coherent step in Microsoft’s long‑term strategy to control more of the AI inference stack: chip, memory, network, rack and software. The architectural choices (large HBM3e, on‑die SRAM, native FP4/FP8 tensor math and an Ethernet-based scale‑up fabric) are well aligned with the economic realities of large-scale inference and token generation. Microsoft’s narrative is credible and the company’s early deployment into Azure production racks is meaningful proof of intent.

But the story is not yet a fait accompli. The most important load‑bearing claims (PFLOPS, perf-per-dollar and real-world tokens-per-dollar) come from Microsoft and have not yet been validated at scale by neutral third parties. The software and ecosystem challenges (porting to FP4/FP8, maintaining model fidelity, and matching Nvidia’s mature tooling) are real hurdles that determine whether Maia will translate from “promising architecture” into pervasive operational advantage.

For enterprise IT teams and WindowsForum readers, the right posture is pragmatic experimentation: run representative pilots, validate workload-level economics, preserve portability, and avoid wholesale migration until independent benchmarks and broad tooling maturity arrive. If Microsoft’s claims are broadly confirmed, Maia-backed Azure SKUs will materially lower inference costs for many services; if not, Maia will still have delivered value to the market by forcing greater competition and faster iteration across hyperscalers and GPU vendors. Either outcome is a net win for customers — provided architects do the necessary validation work before committing critical workloads.

In short: Maia 200 intensifies the hyperscaler silicon arms race and gives Microsoft leverage — but Nvidia and AMD remain essential partners for the foreseeable future. The era ahead will be heterogeneous; success will go to teams that plan for portability, measure empirically, and exploit the new hardware selectively where it demonstrably improves production economics.

Conclusion: Maia 200 is a major milestone for Microsoft’s inference strategy and for the cloud AI market. The claims are bold and technically plausible; the risks are real and measurable. As the hardware arrives in more regions and independent benchmarks appear, IT leaders should move from passive interest to active testing — because the next wave of AI economics will be decided by tokens-per-dollar, not press‑release PFLOPS.

Source: The Tech Buzz https://www.techbuzz.ai/articles/microsoft-doubles-down-on-nvidia-amd-despite-maia-200-launch/