Microsoft’s new Maia 200 accelerator signals a clear strategic pivot toward the economics of inference rather than raw training horsepower. The chip, unveiled by Microsoft on January 26, 2026, is a purpose‑built inference SoC fabricated on TSMC’s 3 nm node. It concentrates memory bandwidth and low‑precision math where token throughput and operating cost matter most. Microsoft positions Maia 200 as a cloud‑scale answer to the token‑generation bottleneck, trading some training flexibility for a notable improvement in performance‑per‑dollar and power efficiency in production serving.

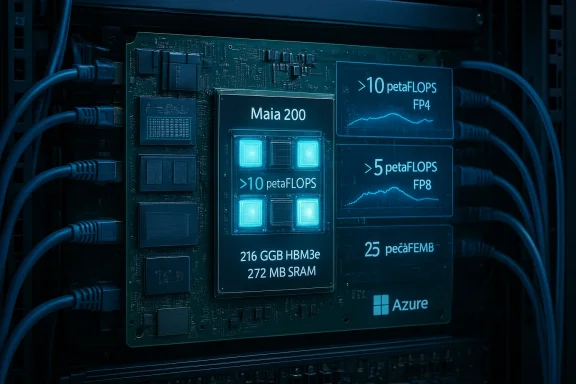

Background

The cloud AI infrastructure market has been dominated by general‑purpose training GPUs for several generations. Those devices, notably Nvidia’s Hopper and Blackwell families, were designed around raw, mixed‑precision peak compute and extremely high memory bandwidth to support both training and inference. But the economics of AI are increasingly dominated by inference: every user interaction, every API call, every enterprise feature in production translates to tokens generated and therefore recurring cost.

Inference workloads have different system constraints than training. Where training benefits from high precision and dense compute, inference is far more sensitive to memory bandwidth, on‑chip memory capacity and transport efficiency. For each generated token, a large language model typically needs to access a significant portion of active model weights and the KV cache. That makes streaming data from memory the gating factor on tokens‑per‑second and interactive latency.

Microsoft’s Maia 200 is explicitly designed against that bottleneck. Rather than building another universally capable training GPU, Microsoft optimized the SoC, memory subsystem and datacenter fabric around token throughput, energy use, and cost at scale.

Maia 200: what Microsoft says it is

Key technical claims (company brief)

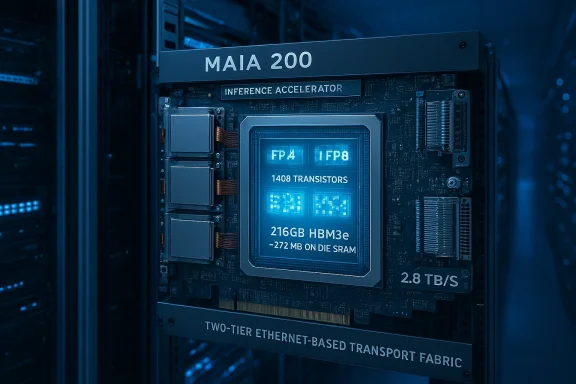

- Fabrication: TSMC N3 (3 nm).

- Transistors: over 140 billion (marketing materials cite “over 140B”; some outlets report 144B).

- Peak inference throughput: >10 petaFLOPS at 4‑bit precision (FP4); >5 petaFLOPS at 8‑bit (FP8).

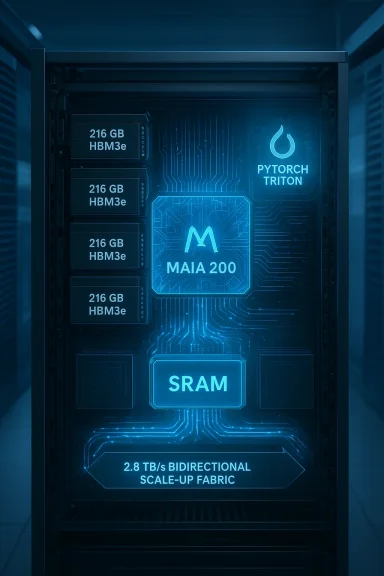

- Memory: 216 GB HBM3e on‑package with a claimed 7 TB/s memory bandwidth.

- On‑chip SRAM: 272 MB for tiled/cache use and collective communications buffering.

- TDP: 750 W SoC envelope.

- Inter‑accelerator scale‑up connect: 2.8 TB/s bidirectional dedicated scale‑up bandwidth (1.4 TB/s per direction), enabling clusters up to 6,144 accelerators.

- Fabric: integrated Ethernet NoC and a Microsoft AI transport layer; two‑tier scale‑up topology using packet switches.

- Software: Maia SDK (preview) with PyTorch integration, a Triton compiler, optimized kernel library and a low‑level programming language (NPL).

- Deployment: initial rollout to Azure US Central (Des Moines, Iowa), with US West 3 (Phoenix, Arizona) to follow.

What’s different about this chip

- Maia 200’s tensor units are optimized for ultra‑low precision (FP8, FP6, FP4) in hardware. Higher precisions (BF16/FP16/FP32) must fall back to vector processors on the chip, which reduces training speed for high‑precision workloads.

- A redesigned memory subsystem focuses on narrow‑precision datatypes, a specialized DMA engine and large on‑die SRAM pools exposed to the runtime for caching and collective operations, specifically to reduce trips to HBM and thereby increase effective token throughput.

- The inter‑chip fabric uses standard Ethernet with a custom transport layer and dedicated NIC integration, rather than specialized fabrics such as NVLink Fusion or InfiniBand. Microsoft argues this reduces cost and increases deployability in standard datacenter topologies.
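A textbook model of a tiled matrix multiply helps show why large on‑die SRAM pools reduce trips to HBM. The sketch below is a generic output‑tile model and makes no claim about Maia's actual dataflow or compiler behavior. The matrix shape, tile sizes and 1‑byte (FP8) element width are illustrative assumptions. The model also ignores whether a given tile actually fits in on‑chip memory.

```python
def gemm_hbm_bytes(m: int, n: int, k: int, tile: int, bytes_per_elem: float) -> float:
    """Approximate HBM traffic for C = A @ B (A is m x k, B is k x n) when each
    tile x tile block of C is computed on chip in one pass.

    Generic textbook model (an assumption, not Maia's real dataflow): every
    output tile streams its full row-panel of A and column-panel of B from HBM,
    and each element of C is written back once.
    """
    tiles_m = -(-m // tile)          # ceiling division
    tiles_n = -(-n // tile)
    a_reads = tiles_n * m * k        # A is re-read once per column of output tiles
    b_reads = tiles_m * k * n        # B is re-read once per row of output tiles
    c_writes = m * n
    return (a_reads + b_reads + c_writes) * bytes_per_elem


if __name__ == "__main__":
    # Illustrative 4096^3 GEMM with FP8 (1-byte) operands.
    for tile in (128, 256, 512):
        gb = gemm_hbm_bytes(4096, 4096, 4096, tile, 1) / 1e9
        print(f"tile={tile}: ~{gb:.2f} GB of HBM traffic")
```

In this model, the operand traffic falls roughly in proportion to the tile edge. Larger on‑chip buffers therefore pay off directly in fewer HBM bytes per useful FLOP.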

A technical deep dive

Process, transistor budget and peak math

Building on TSMC’s N3, Maia 200 sits in the same advanced‑node class as the latest bespoke accelerators. Microsoft’s claim of “over 140 billion transistors” positions Maia 200 in the flagship silicon tier. Independent reportage has repeated both that figure and slightly different rounded numbers, so treat the exact transistor count as vendor‑stated rather than independently measured.

Where Maia draws attention is in its FP4 and FP8 dense throughput. Microsoft advertises >10 petaFLOPS at FP4 and >5 petaFLOPS at FP8 inside a 750 W envelope. That is a deliberate design point: deliver high effective token throughput under constrained datacenter power and cooling budgets.
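Dividing the advertised peak figures by the 750 W envelope gives a rough efficiency ratio. The sketch below only restates vendor numbers. The ">10 PF" and ">5 PF" lower bounds are used as exact values, and TDP is an envelope rather than measured draw. Real efficiency also depends on utilization, which peak figures do not capture.

```python
# Vendor-stated Maia 200 peak figures (lower bounds: ">10 PF", ">5 PF").
FP4_PFLOPS = 10.0
FP8_PFLOPS = 5.0
TDP_WATTS = 750.0


def tflops_per_watt(pflops: float, watts: float) -> float:
    """Peak dense TFLOPS divided by the SoC power envelope."""
    return pflops * 1_000 / watts


if __name__ == "__main__":
    for name, pf in (("FP4", FP4_PFLOPS), ("FP8", FP8_PFLOPS)):
        print(f"{name}: {tflops_per_watt(pf, TDP_WATTS):.2f} TFLOPS/W (peak)")
```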

Memory system: capacity and bandwidth for inference

Maia 200 carries 216 GB of on‑package HBM3e with a claimed 7 TB/s of memory bandwidth. In inference, entire active weight subsets or KV caches are streamed per token. That large capacity, combined with high aggregate bandwidth, is the critical lever for lowering token latency and improving interactivity.

The chip also exposes 272 MB of on‑chip SRAM that can be dynamically partitioned:

- CSRAM (cluster‑level SRAM) as a buffer to ease collective operations across Maia clusters, minimizing the need to stage data via HBM.

- TSRAM (tile‑level SRAM) used as a fast scratchpad for intermediate matrix multiplications and attention kernels.
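A roofline‑style back‑of‑envelope estimate shows why bandwidth, not peak FLOPs, gates token generation. The sketch below uses the vendor‑stated 7 TB/s and >10 PF (FP4) figures as ceilings. The 70B‑parameter dense model is a hypothetical example. The decode bound ignores KV‑cache reads, SRAM reuse and all runtime overheads, so it is an optimistic upper limit rather than a prediction.

```python
# Vendor-stated Maia 200 peak figures; treat both as optimistic ceilings.
HBM_BYTES_PER_S = 7e12     # 7 TB/s HBM3e (decimal units)
FP4_FLOPS_PER_S = 10e15    # ">10 PF" dense FP4


def ridge_point(flops_per_s: float = FP4_FLOPS_PER_S,
                bytes_per_s: float = HBM_BYTES_PER_S) -> float:
    """Roofline ridge: FLOPs per HBM byte a kernel needs to become compute-bound."""
    return flops_per_s / bytes_per_s


def decode_tokens_per_s_bound(active_params: float, bytes_per_param: float,
                              bytes_per_s: float = HBM_BYTES_PER_S) -> float:
    """Upper bound for single-stream decode if every active weight is read from
    HBM once per token. Ignores KV-cache reads, SRAM reuse and all overheads."""
    return bytes_per_s / (active_params * bytes_per_param)


if __name__ == "__main__":
    print(f"ridge point: ~{ridge_point():.0f} FLOPs/byte at FP4")
    # Hypothetical 70B-parameter dense model, weights at FP4 vs FP8.
    for label, bpp in (("FP4", 0.5), ("FP8", 1.0)):
        print(f"{label} weights: <= {decode_tokens_per_s_bound(70e9, bpp):.0f} tokens/s")
```

Single‑stream decode performs roughly 2 FLOPs per weight (a multiply and an add), or about 4 FLOPs per byte with FP4 weights. That is far below the ridge of roughly 1,400 FLOPs per byte. This is why decode is bandwidth‑bound, and why batching is the main lever for putting the compute to work, since it reuses each weight read across many sequences.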

Tensor units, datatypes and the training tradeoff

Maia’s hardware tensor core (tile tensor unit, TTU) supports FP8, FP6 and FP4 natively. That is enough for many modern inference stacks, which increasingly quantize weights to 4‑bit block floating‑point formats (e.g., NVFP4, MXFP4). These stacks typically use 8‑bit for activations or KV caches, where accuracy is more sensitive.

But training, and many research workflows, still prefer BF16/FP16 and higher. On Maia, workloads requiring 16‑ or 32‑bit precision must use the tile vector processors (TVPs), which reduces peak throughput. Microsoft acknowledges Maia 200 is an inference SoC, not a universal training workhorse. That tradeoff is by design: optimized inference math and memory vs. full‑spectrum training capability.
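Block floating‑point formats like MXFP4 store small 4‑bit elements that share one scale per block. The sketch below is a simplified illustration in the spirit of the OCP Microscaling (MX) FP4 format and makes no claim about how Maia's TTU or the Maia SDK implement FP4. It uses 32‑element blocks, a power‑of‑two shared scale and E2M1 element values. Real MXFP4 encodes the scale as an 8‑bit E8M0 exponent and rounds ties to nearest‑even. This sketch keeps the exponent as a Python int and breaks ties toward the smaller magnitude. NVFP4 uses a different block size and scale encoding.

```python
import math

# Representable magnitudes of the FP4 E2M1 element type used by OCP MXFP4.
E2M1_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
E2M1_EMAX = 2      # exponent of the largest E2M1 value: 6.0 = 1.5 * 2**2
BLOCK_SIZE = 32    # MX blocks: 32 elements share one power-of-two scale


def quantize_block(block):
    """Return (shared_exp, fp4_values) for one block of floats."""
    amax = max(abs(v) for v in block)
    if amax == 0.0:
        return 0, [0.0] * len(block)
    shared_exp = math.floor(math.log2(amax)) - E2M1_EMAX
    scale = 2.0 ** shared_exp
    fp4_values = []
    for v in block:
        mag = min(abs(v) / scale, E2M1_GRID[-1])              # saturate at 6.0
        nearest = min(E2M1_GRID, key=lambda g: abs(g - mag))  # ties -> smaller
        fp4_values.append(math.copysign(nearest, v))
    return shared_exp, fp4_values


def dequantize_block(shared_exp, fp4_values):
    scale = 2.0 ** shared_exp
    return [q * scale for q in fp4_values]


def mx_round_trip(values):
    """Quantize and dequantize a flat list block by block."""
    out = []
    for start in range(0, len(values), BLOCK_SIZE):
        block = values[start:start + BLOCK_SIZE]
        out.extend(dequantize_block(*quantize_block(block)))
    return out


if __name__ == "__main__":
    print(mx_round_trip([100.0, 1.0]))  # the small value is flushed to zero
```

The last line illustrates the accuracy risk the article mentions. A single large outlier sets the shared scale for its whole block, which can flush small neighbors to zero. This is one reason outlier‑sensitive tensors often stay at 8 bits.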

Interconnect, scale‑up and the Ethernet approach

Each Maia 200 is rated for 2.8 TB/s of bidirectional scale‑up bandwidth (1.4 TB/s per direction), exposed via an integrated NoC and high‑speed SerDes. Microsoft says this fabric can link clusters of up to 6,144 Maia chips. By Microsoft's arithmetic, such a cluster yields roughly 61 exaFLOPS of FP4 compute and ~1.3 PB of HBM3e.

Rather than NVLink or native GPU mesh links, Microsoft places Ethernet at the center of the scale‑up fabric and runs a custom AI transport layer on top. That design trades tightly coupled, coherent interconnects for a packetized, standardized fabric. The company believes this fabric is cheaper, easier to operate and sufficiently performant for inference patterns when coupled with careful design: CSRAM for collective buffering, deterministic packet transport, and a two‑tier scale‑up topology.
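The cluster totals follow directly from the per‑chip figures. The sketch below reproduces that arithmetic in decimal units, treating the ">10 PF" FP4 figure as exactly 10 PF. It sums peak numbers only, so it does not account for communication efficiency.

```python
# Vendor-stated per-chip Maia 200 figures.
FP4_PFLOPS_PER_CHIP = 10      # ">10 PF" at FP4, so totals are lower bounds
HBM_GB_PER_CHIP = 216
SCALE_UP_TB_S_BIDIR = 2.8
MAX_CLUSTER_CHIPS = 6_144


def cluster_totals(chips: int = MAX_CLUSTER_CHIPS) -> dict:
    """Aggregate peak FP4 compute and HBM for a cluster (decimal units)."""
    return {
        "fp4_exaflops": chips * FP4_PFLOPS_PER_CHIP / 1_000,
        "hbm_petabytes": chips * HBM_GB_PER_CHIP / 1_000_000,
        "per_chip_scale_up_tb_s_each_way": SCALE_UP_TB_S_BIDIR / 2,
    }


if __name__ == "__main__":
    print(cluster_totals())
```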

Where Maia 200 shines

- Inference economics: The chip’s most persuasive pitch is lower cost per token and better performance per dollar and per watt for production serving. Microsoft claims a ~30% performance‑per‑dollar advantage over the latest‑generation hardware in its fleet. This is a vendor‑supplied figure that should be verified with real‑world, workload‑matched benchmarks, but the architectural choices strongly favor inference TCO improvements.

- Power envelope: A 750 W TDP sits well below some high‑power training GPUs that exceed 1,000 W and typically require liquid cooling. That lowers rack power density and eases cooling complexity and cost in existing datacenters.

- Memory architecture for inference: 216 GB of HBM3e plus substantial on‑die SRAM is a pragmatic balance for large model serving, letting Maia hold larger models per device and reducing the penalty of repeated off‑chip streaming during generation.

- Scale‑up for huge models: The cluster design and CSRAM/TSRAM partitioning are clearly intended to run massive multi‑trillion‑parameter models without excessive network hops, which is key for low‑latency, multi‑tenant cloud serving.

- Software accessibility: Early PyTorch and Triton support in the Maia SDK (preview) lowers the barrier to porting and should accelerate adoption for cloud customers who rely on these ecosystems.
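How much of the 216 GB is left for KV cache once the weights are loaded? A simple budget answers that question. The model shape below is a hypothetical assumption, not a specific published model: 80 layers, grouped‑query attention with 8 KV heads of dimension 128, 70B parameters at FP4, and an FP8 KV cache. The 10% headroom for activations and runtime buffers is also an arbitrary assumption.

```python
# Hypothetical model shape (assumption), roughly a 70B-class dense transformer.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128
MAIA_HBM_BYTES = 216e9          # 216 GB HBM3e, decimal units


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_elem: float) -> float:
    """Key and value vectors stored for one token across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


def max_cached_tokens(hbm_bytes: float, weight_bytes: float,
                      per_token_bytes: float, headroom: float = 0.10) -> int:
    """Tokens of KV cache (summed over all concurrent sequences) that fit after
    the weights and an arbitrary headroom fraction for activations/runtime."""
    usable = hbm_bytes * (1.0 - headroom) - weight_bytes
    return max(0, int(usable // per_token_bytes))


if __name__ == "__main__":
    per_tok = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM, 1)   # FP8 KV cache
    weights = 70e9 * 0.5                                           # 70B params at FP4
    print(per_tok, max_cached_tokens(MAIA_HBM_BYTES, weights, per_tok))
```

Under these assumptions, roughly 970,000 cached tokens fit on one device, or about seven concurrent 128K‑token contexts. Real deployments shard models and caches across chips, so this is only a single‑device sanity check.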

Important comparisons and caveats

Maia 200 vs. Nvidia Blackwell (B200/GB200)

Nvidia’s Blackwell B200 and its GB200 superchip variants are the current high‑end general‑purpose accelerators, optimized for both training and inference. Key differences to bear in mind:

- HBM capacity & bandwidth: Blackwell B200 GPUs typically ship with 192 GB HBM3e and up to ~8 TB/s bandwidth per GPU. Some Blackwell variants and system configurations push capacity toward 288 GB, with bandwidth remaining at similar peaks. Maia’s 216 GB and 7 TB/s put it close to Blackwell in capacity and bandwidth for inference, but Blackwell still has higher peak bandwidth per device in many configurations.

- Power & versatility: Blackwell GPUs can exceed 1,000 W TDP in some deployments; Maia’s 750 W target reduces rack power density and cooling needs but also signals a narrower performance envelope for training and mixed‑precision workloads.

- Precision support: Nvidia’s tensor cores cover a broader precision range (including BF16, FP16 and TF32 alongside FP8 and NVFP4). Training and high‑precision research workloads therefore remain better suited to Nvidia hardware. Maia’s native tensor core support focuses on FP8/FP6/FP4, favoring inference efficiency.

- Interconnect & ecosystem: Nvidia’s NVLink and NVSwitch families provide tightly coupled, high‑bandwidth chip‑to‑chip and node fabrics optimized for training sharding and coherence. Microsoft replaces that with an Ethernet‑based, packetized scale‑up fabric designed around cost and standardized datacenter operational models.
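One way to combine the capacity and bandwidth figures above is to compute the time needed to stream a device's entire HBM once at peak bandwidth. This is a lower bound on per‑step latency for a model that fills memory. The sketch below uses only the peak figures quoted in this article and takes the B200's "up to ~8 TB/s" at face value. It is a derived illustration, not a benchmark.

```python
# Per-device figures as quoted in this article (peak, decimal units).
DEVICES = {
    "Maia 200": (216, 7.0),          # (HBM GB, TB/s)
    "B200 (typical)": (192, 8.0),
}


def full_sweep_ms(hbm_gb: float, tb_per_s: float) -> float:
    """Milliseconds to read the entire HBM once at peak bandwidth."""
    return hbm_gb / (tb_per_s * 1_000) * 1_000


if __name__ == "__main__":
    for name, (gb, bw) in DEVICES.items():
        print(f"{name}: {full_sweep_ms(gb, bw):.1f} ms per full HBM sweep")
```

By this measure, Maia trades a slightly longer sweep for more capacity per device. Which matters more depends on whether a deployment is limited by model and KV‑cache size or by per‑token latency.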

Marketing claims vs. independent verification

Microsoft’s announced “30% better performance per dollar” and other cost claims are plausible given Maia’s design, but they are vendor statements. Independent, workload‑specific benchmarks are necessary to validate the claimed gains, ideally third‑party measurements at matched model sizes, context windows and software stacks. Likewise, transistor counts reported in press pieces (e.g., 144B) are close to Microsoft’s “over 140B” statement. Still, exact counts and die photos are not independently verifiable outside vendor disclosures.

Strategic risks and open questions

- Inference‑only specialization limits flexibility. Maia 200 is tuned for serving models. Hyperscalers and enterprise customers that want a single accelerator family for both training and inference may still rely heavily on Nvidia Blackwell and the upcoming Rubin platform for training workloads and research. Microsoft’s fleet will become more heterogeneous — a win for targeted economics but a complexity cost for software and operations.

- Software and model portability. Even with PyTorch and Triton support, porting large, heavily optimized model kernels between Blackwell, Rubin and Maia requires engineering. Subtle differences in quantization support, kernel libraries, and transport behaviors can complicate portability and require substantial validation.

- Network latency/packetization tradeoffs. Ethernet with custom transport is cheaper and operationally familiar, but packetization can add jitter and tail latency issues if not engineered tightly. Microsoft’s CSRAM/TSRAM approach mitigates some of this, yet the ultimate performance of very large collective communications under bursty, multi‑tenant load remains to be proven at scale.

- Supply chain and node economics. Maia 200 is made at TSMC N3. Fabrication capacity, wafer allocation and geopolitics can affect supply. Similarly, Microsoft must sustain production volumes and packaging yields to make its cost claims real across its Azure footprint.

- Competitive reaction and cadence. Nvidia’s Rubin platform, an integrated system unveiled at CES 2026 that promises multi‑fold gains for inference, is the next major competitor on the horizon. Rubin aims to be a platform (GPU + CPU + networking + DPU), not just a single die, and Nvidia cites up to 5× inference improvements over Blackwell. That raises the bar for Maia’s next generations and forces Microsoft to iterate quickly if it wants to further close the training/inference gap.

What this means for customers and cloud operators

- For production serving at scale: Enterprises and cloud customers focused primarily on inference economics — delivering interactive AI features at scale — should watch Maia 200 closely. Microsoft’s internal TCO claims and the chip’s thermals point to lower operational costs for certain serving workloads, particularly those using heavy low‑precision quantization.

- For model developers and researchers: Maia is not a one‑stop shop for training and research. Teams that iterate models at higher precisions or require heterogeneous acceleration for training will continue to depend on GPUs optimized for training. Expect hybrid strategies: train on high‑precision GPU clusters and serve on Maia‑optimized inference fleets after quantization and validation.

- For cloud strategy: Organizations that rely on Azure will gain incremental negotiating leverage. Microsoft’s first‑party silicon reduces some hyperscaler dependence on external GPU vendors and offers a specialized platform for serving Microsoft’s own services (Foundry, Copilot) and Azure customers. However, cross‑cloud portability of optimized stacks will remain a challenge.

Practical recommendations for IT teams

- Benchmark before committing. Do not assume vendor performance claims match your workload. Run production‑representative inference traces (context windows, top‑k sampling modes, KV cache sizes) through Maia preview access when possible.

- Validate quantization and accuracy. Moving weights to FP4 or other 4‑bit block formats can cut TCO substantially. However, accuracy and quality checks are essential to ensure the quantized model still behaves correctly and safely.

- Design a hybrid compute strategy:

  - Use high‑precision GPUs (Blackwell, Rubin when available) for training and model exploration.

  - Use Maia 200 or similar inference accelerators for production serving after careful conversion and profiling.

- Instrument and monitor at scale. Maia’s Ethernet‑based fabric and CSRAM/TSRAM dynamics mean that network behavior at scale affects latency and tail performance. Invest early in telemetry, tracing and SLAs for token latency.

- Plan for heterogeneity in orchestration. Kubernetes, model serving platforms and schedulers must be adapted to place workloads on the right hardware class automatically. Containerized runtimes should expose precision profiles and cost metrics to schedulers.

- Audit cost claims internally. Take Microsoft’s 30% performance‑per‑dollar figure as directional; build cost models using your own token traces and pricing to estimate ROI.
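The benchmarking, monitoring and cost‑audit steps above can share one small analysis over your own request traces. The sketch below is a hypothetical starting point. The `Request` schema, the accelerator‑hour accounting and the hourly price are assumptions to replace with your own telemetry and contract pricing, and no real Azure prices are implied. Percentiles use the nearest‑rank method. The perf‑per‑dollar helper turns two measured cost‑per‑token figures into a number directly comparable with the 30% claim.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """One served request from your own trace (hypothetical schema)."""
    output_tokens: int
    decode_seconds: float   # wall time spent generating the output tokens


def percentile(values, pct):
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(trace, accelerator_hours, price_per_hour):
    """Per-token latency percentiles and cost per million output tokens,
    assuming all billed accelerator-hours are attributable to this trace."""
    per_token_ms = [r.decode_seconds / r.output_tokens * 1_000 for r in trace]
    total_tokens = sum(r.output_tokens for r in trace)
    cost = accelerator_hours * price_per_hour
    return {
        "p50_ms_per_token": percentile(per_token_ms, 50),
        "p99_ms_per_token": percentile(per_token_ms, 99),
        "usd_per_million_tokens": cost / total_tokens * 1_000_000,
    }


def perf_per_dollar_gain(candidate_usd_per_m, baseline_usd_per_m):
    """Fractional perf-per-dollar improvement of candidate over baseline
    (0.30 would match a "30% better" claim)."""
    return baseline_usd_per_m / candidate_usd_per_m - 1


if __name__ == "__main__":
    trace = [Request(100, 2.0), Request(200, 2.0), Request(50, 5.0), Request(150, 3.0)]
    print(summarize(trace, accelerator_hours=0.5, price_per_hour=10.0))
```

In practice, run the same trace on each hardware class, feed both cost figures into `perf_per_dollar_gain`, and track the p99 number over time. Tail latency is where the fabric effects described earlier would surface first.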

Longer‑term implications and outlook

Maia 200 is significant not because it dethrones Nvidia overnight, but because it marks a mature hyperscaler move into specialized, production‑focused silicon. Microsoft is weaponizing integrated datacenter design (silicon, memory, network and orchestration) to extract better economics for the most frequent workloads in AI: inference and token generation.

The sizable on‑die SRAM, Ethernet‑centric fabric and narrow‑precision tensor focus are pragmatic choices for the production phase of generative AI. They reflect a broader industry trend: specialize where the money is recurring. Nvidia’s Rubin platform promises larger leaps for inference and is expected to be available later in 2026. It will raise the performance bar again and force suppliers to double down on software, compilers and system integration.

Two realities will shape the next 12–24 months:

- Ecosystem momentum matters. Hardware wins when the compiler, kernel libraries and model runtimes make it trivial to reach expected performance and fidelity. Microsoft’s SDK preview, with PyTorch and Triton support, is therefore as important as die specs.

- Operational economics will decide adoption. Power, cooling, rack space and interconnect costs are making inference economics a board‑level consideration. Maia 200 is designed to compete there; whether it becomes the preferred inference fabric for Azure customers depends on sustained deliveries of the stated TCO benefits under real workloads.

Final assessment

Maia 200 is a credible, well‑engineered inference accelerator that tightens Microsoft’s control over the cost of deploying large language models at cloud scale. Its architecture shows deep awareness of what limits modern token generation: memory bandwidth, on‑chip buffering and deterministic collective operations. Microsoft optimized for low‑precision tensor math, a large HBM budget, substantial on‑die SRAM and an Ethernet‑based scale‑up network. The result is a platform that can materially lower the cost of serving generative AI features across Azure.

That said, Maia 200 is not a one‑size‑fits‑all replacement for the general‑purpose, training‑oriented GPUs that currently dominate AI research and large‑scale model training. The chip’s narrow precision focus and reliance on vector fallback for higher precisions mean training workloads will still favor other platforms. Marketing claims about a 30% cost advantage and precise transistor counts should be treated with caution until independent, workload‑matched benchmarks are published.

For enterprises and architects, the prudent path is multi‑pronged. Continue to rely on purpose‑built training accelerators for model development, while planning to exploit Maia‑class inference silicon for production serving once validated. Microsoft’s push increases options and should help drive more competitive pricing and a faster innovation cycle for AI infrastructure, which is ultimately the outcome customers want most.

Source: theregister.com Microsoft looks to drive down AI infra costs with Maia 200