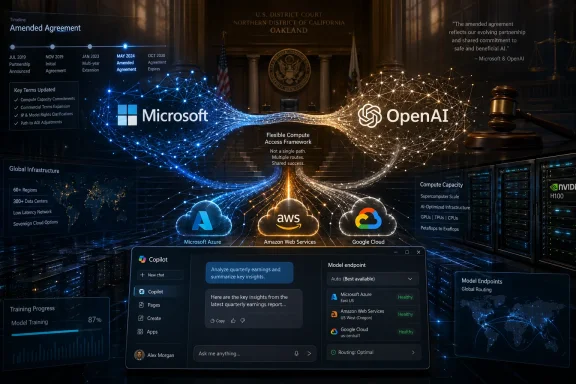

Microsoft and OpenAI have loosened one of the most important technology alliances of the AI era, turning an exclusive cloud-and-IP arrangement into a more flexible partnership just as Elon Musk’s lawsuit against OpenAI, Sam Altman, and Microsoft reaches a federal courtroom in Oakland. The amended agreement gives OpenAI the ability to serve all of its products across any cloud provider, while Microsoft keeps a non-exclusive license to OpenAI model and product IP through 2032. The timing is impossible to ignore: as jury selection begins, the companies are trying to show that their relationship is evolving from dependency into a broader, more conventional strategic alliance.

Background

The Microsoft-OpenAI partnership began as a bet on scarce compute, frontier research, and the idea that large-scale AI systems would need industrial-grade infrastructure long before the market understood why. Microsoft’s early investment gave OpenAI access to Azure capacity, while Microsoft gained privileged access to the models that would later power Bing Chat, Microsoft 365 Copilot, GitHub Copilot enhancements, and Azure OpenAI Service.

That arrangement became a defining feature of the generative AI boom after ChatGPT turned OpenAI from a research lab into a household name. Microsoft’s integration of OpenAI models across Windows, Office, developer tools, security products, and cloud services gave Redmond a dramatic lead in enterprise AI branding. At the same time, it made Microsoft appear unusually dependent on one partner for its most visible AI strategy.

OpenAI’s own structure has always been more complicated than a standard startup story. Founded in 2015 as a nonprofit research organization, it later created a capped-profit structure, raised billions, and pursued commercial scale in a market where training frontier models requires extraordinary spending. That evolution is now central to Musk’s case, which alleges that the organization abandoned its original mission and that Microsoft benefited from the shift.

The new amendment should be read against that entire history. It does not end the partnership, but it does change the optics and mechanics of control. The companies are no longer presenting exclusivity as the foundation of the alliance; instead, they are emphasizing flexibility, predictability, and the ability for each side to pursue growth on its own terms.

What Changed in the Deal

The headline change is simple: OpenAI can now serve all of its products on any cloud provider. That includes API-based services that had previously been tied more tightly to Azure, opening the door for OpenAI to work directly with Amazon Web Services, Google Cloud, Oracle, CoreWeave, and other infrastructure partners without the same level of Azure exclusivity.

The new operating model

Microsoft remains OpenAI’s primary cloud partner, and OpenAI products are still expected to ship first on Azure unless Microsoft cannot or chooses not to support the necessary capabilities. That clause matters because it preserves Azure’s first-look advantage while acknowledging a practical reality: no single cloud provider can easily satisfy all frontier AI capacity demands.

The revised terms also make Microsoft’s license to OpenAI IP non-exclusive through 2032. Microsoft keeps access to OpenAI models and products, but OpenAI can license and deliver its technology more broadly. For a company reportedly pursuing enormous capital needs, that broader distribution path may be essential.

The revenue-share structure also changes. Microsoft will no longer pay a revenue share to OpenAI, while OpenAI’s payments to Microsoft continue through 2030 at the same percentage, now subject to a total cap. That turns a complex two-way economic relationship into something closer to a defined commercial obligation.

Key changes include:

- OpenAI can serve all products across any cloud provider

- Microsoft’s OpenAI IP license continues through 2032

- That license is now non-exclusive

- Microsoft stops paying revenue share to OpenAI

- OpenAI continues paying Microsoft through 2030, subject to a cap

- Azure remains the primary cloud partner and first launch venue

- Microsoft remains a major OpenAI shareholder

Why Cloud Exclusivity Became a Problem

Exclusivity made sense when OpenAI needed a single deep-pocketed infrastructure partner and Microsoft needed a breakthrough AI story. But in 2026, frontier AI is no longer a single-cloud business. The industry is constrained by GPUs, power, data center construction, networking, cooling, and sovereign cloud requirements.

Compute is now strategy

For OpenAI, being able to run products across multiple clouds gives it leverage and resilience. It can chase capacity wherever it exists, negotiate better terms, and meet customers that may already be committed to a different cloud ecosystem. That flexibility is not cosmetic; it is operational survival in a compute-constrained market.

For Microsoft, loosening exclusivity may reduce pressure from customers, regulators, and investors who worry that Azure is too entangled with one AI supplier. Redmond can still monetize OpenAI demand through Azure, but it can also argue that the market is becoming more open. That argument may prove useful in courtrooms, boardrooms, and competition policy discussions.

Enterprises also dislike architectural lock-in when workloads become mission-critical. If a bank, hospital network, manufacturer, or government agency wants OpenAI models but has standardized on AWS or Google Cloud for parts of its AI stack, the new agreement lowers friction. It gives customers more deployment choices without necessarily eliminating Azure’s advantage.

The cloud implications are substantial:

- Azure keeps priority access without absolute control

- AWS and Google Cloud gain a clearer path to OpenAI workloads

- Oracle and specialty GPU clouds may compete for capacity

- Enterprise buyers gain more negotiating leverage

- Regulators may see a less closed AI infrastructure market

Microsoft’s Strategy After Exclusivity

Microsoft has spent the past year making clear that its AI future is bigger than one supplier. The company has expanded model choice in Azure AI Foundry, integrated rival models into enterprise offerings, and pushed its own teams to develop more internal AI capability. The amended OpenAI deal fits that pattern.

From dependency to portfolio

The old perception was that Microsoft had effectively outsourced its frontier AI future to OpenAI. That was always too simplistic, but it contained enough truth to worry investors. Copilot’s success depends not only on model quality but also on distribution, identity, security, compliance, data grounding, workflow integration, and trust.

Microsoft’s strongest assets are still the enterprise layers around AI. Microsoft 365, Windows, Azure, GitHub, Defender, Entra, Purview, and Dynamics give the company unmatched channels into corporate work. Even if the underlying model mix changes, Microsoft can remain the company that packages AI into daily work.

The non-exclusive license lets Microsoft keep building with OpenAI technology while reducing the impression that it owns or controls OpenAI’s entire future. That distinction matters in antitrust analysis and in the Musk litigation. It also matters for customers who want assurance that Microsoft can support multiple models if OpenAI’s roadmap, pricing, or governance becomes controversial.

Microsoft’s strategic priorities now appear to be:

- Keep OpenAI access as a premium ingredient

- Build stronger first-party model capabilities

- Support rival models through Azure AI Foundry

- Turn Copilot into an orchestration layer, not a single-model wrapper

- Use security, identity, and compliance as differentiators

- Reduce investor concern over OpenAI concentration risk

OpenAI’s Business Case for Freedom

OpenAI’s need for flexibility is even more obvious. The company’s ambitions require staggering amounts of compute, capital, talent, energy, and global distribution. A single exclusive cloud relationship can become a bottleneck when the business is trying to serve consumers, developers, enterprises, governments, and future device ecosystems simultaneously.

The road to a broader platform

OpenAI has moved beyond ChatGPT as a single consumer app. It now operates APIs, enterprise products, multimodal tools, coding systems, agent frameworks, safety infrastructure, and partnerships across industries. Each of those markets has different latency, compliance, data residency, and integration requirements.

A multi-cloud model allows OpenAI to meet customers where they are. Developers may want API access close to existing workloads. Large enterprises may require regional hosting or cloud-specific compliance controls. Governments may demand procurement pathways that do not depend exclusively on Azure.

The financial logic is equally important. By broadening its commercial surface area, OpenAI can present itself less as Microsoft’s captive model lab and more as an independent AI platform. That matters for future fundraising, potential public-market ambitions, and negotiations with chip suppliers and data center partners.

The amended arrangement gives OpenAI:

- More bargaining power over infrastructure

- More freedom to partner with Microsoft rivals

- A clearer independent identity

- A broader path to enterprise and developer revenue

- Reduced friction for global customers

- A more IPO-friendly governance narrative

The Trial Timing Is More Than Coincidence

The announcement landed as jury selection began in Musk’s lawsuit, and that timing gives the agreement a second meaning beyond commercial strategy. Musk’s case centers on whether OpenAI betrayed its founding nonprofit mission and whether Microsoft aided or benefited from that shift. The amended deal gives both Microsoft and OpenAI fresh evidence that their relationship is not frozen in the most restrictive version of the past.

Optics in Oakland

Legally, the amendment will not erase years of prior conduct. The court will evaluate specific claims, documents, testimony, and the evolution of OpenAI’s structure. But optics matter, especially in a case where the jury may hear competing narratives about control, mission, profit, and influence.

Microsoft can point to the new structure as evidence that it is not the exclusive gatekeeper of OpenAI technology. OpenAI can argue that it remains capable of independent commercial decision-making. Musk, meanwhile, may argue that the amendment confirms how important Microsoft’s prior influence was and that the changes come only after legal pressure and public scrutiny.

The courtroom narrative is likely to revolve around several themes:

- Whether OpenAI’s nonprofit mission retained real authority

- Whether Microsoft’s investment distorted that mission

- Whether exclusivity created improper private benefit

- Whether OpenAI’s restructuring fairly served its charitable goals

- Whether Musk’s own AI ventures affect his credibility

- Whether monetary remedies or governance remedies are appropriate

Enterprise Impact: Choice Without Simplicity

For enterprise customers, the most immediate benefit is optionality. Companies that want OpenAI models but have strategic commitments to AWS, Google Cloud, Oracle, or hybrid infrastructure may soon have cleaner procurement and deployment paths. That could make OpenAI easier to adopt in industries where cloud architecture is already politically and technically complex.

CIOs gain leverage

The new deal also strengthens the hand of CIOs negotiating AI contracts. If OpenAI can serve workloads across multiple clouds, buyers can compare price, compliance posture, latency, support models, and data residency commitments more directly. Microsoft will still compete strongly, but the conversation becomes less binary.

However, more choice does not automatically mean less complexity. Running AI workloads across clouds introduces questions about logging, identity, encryption, observability, cost controls, and incident response. Enterprises may discover that the model endpoint is portable, while governance remains painfully specific to each cloud environment.

For business buyers, the likely impacts include:

- More flexibility in cloud procurement

- Better alignment with existing enterprise architecture

- Potentially improved regional deployment options

- Stronger negotiating leverage on price and capacity

- More pressure on internal AI governance teams

- Greater need for vendor-risk management
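
The point that an endpoint can be portable while governance stays cloud-specific can be illustrated with a small selection routine. This is a minimal sketch under stated assumptions, not a description of any vendor's tooling: the `Deployment` type, the `pick_deployment` function, the control names, the regions, and the latency figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """A hypothetical model endpoint hosted on one cloud."""
    cloud: str
    region: str
    latency_ms: float
    # Governance controls the buyer has verified on this specific cloud.
    controls: frozenset = frozenset()


def pick_deployment(deployments, required_controls, allowed_regions):
    """Return the lowest-latency deployment that meets every requirement, or None."""
    eligible = [
        d for d in deployments
        if d.region in allowed_regions and required_controls <= d.controls
    ]
    return min(eligible, key=lambda d: d.latency_ms, default=None)


# Illustrative data only; none of these values come from the article.
candidates = [
    Deployment("azure", "eu-west", 40.0,
               frozenset({"audit-logging", "customer-managed-keys"})),
    Deployment("aws", "eu-west", 25.0, frozenset({"audit-logging"})),
    Deployment("gcp", "us-east", 20.0,
               frozenset({"audit-logging", "customer-managed-keys"})),
]

choice = pick_deployment(
    candidates,
    required_controls=frozenset({"audit-logging", "customer-managed-keys"}),
    allowed_regions={"eu-west"},
)
print(choice.cloud if choice else "no compliant deployment")
```

In this made-up scenario the fastest endpoints are ruled out by a missing control or a disallowed region, which is the kind of trade-off governance teams would have to encode once for each cloud.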

Consumer and Developer Impact

For consumers, the change may not be visible immediately. ChatGPT will still work, Copilot will still appear across Microsoft products, and most users will not know which cloud handled a given request. But beneath the surface, the amendment could influence product speed, availability, pricing, and reliability.

Developers may feel it first

Developers are more likely to see near-term effects. API availability across clouds could reduce latency, simplify integration, and support organizations that already deploy applications outside Azure. If OpenAI offers more direct deployment options, developers may gain flexibility without having to re-architect around a single provider.

The consumer impact depends on whether multi-cloud capacity helps OpenAI roll out new features faster. If the company can tap more infrastructure, it may reduce waitlists, improve performance during peak demand, and experiment with richer multimodal services. That said, multi-cloud operations also create new consistency challenges.

Potential developer and consumer changes include:

- More deployment paths for OpenAI APIs

- Lower latency in some regions

- Faster feature scaling if capacity improves

- More cloud-specific enterprise integrations

- Possible pricing pressure across AI platforms

- Less obvious dependence between ChatGPT and Azure

Competitive Implications for AWS, Google, and Others

The amendment gives Microsoft’s rivals a rare opening. AWS, Google Cloud, Oracle, and specialized AI infrastructure companies can now compete more directly for OpenAI workloads and customer deployments. That does not mean they will win the largest share, but they are no longer structurally excluded in the same way.

The cloud AI race widens

AWS has long argued that customers want model choice, not a single AI stack. Google has its own Gemini models and deep TPU infrastructure, but it also benefits if OpenAI’s availability becomes more cloud-neutral. Oracle and CoreWeave can pitch specialized capacity, while NVIDIA remains central across much of the hardware ecosystem.

The bigger competitive story is that AI platforms are becoming less vertically locked. Model developers need chips and data centers from multiple sources. Cloud providers need multiple models to satisfy customers. Enterprises want portability because they fear both technical lock-in and strategic dependency.

Likely competitive consequences include:

- AWS gains a stronger OpenAI partnership opportunity

- Google Cloud can compete on AI infrastructure and data tooling

- Oracle can pitch high-performance AI capacity

- Specialized GPU clouds may capture overflow demand

- Anthropic, Google, Meta, and xAI face a more open OpenAI

- Microsoft must defend Azure on execution, not contract structure alone

Governance, Mission, and Public Trust

The case against OpenAI is not just a commercial dispute; it is a test of how society should understand AI organizations that begin with public-interest language and later require enormous private capital. The Microsoft amendment does not resolve that tension. If anything, it makes the governance question more urgent.

The mission problem

OpenAI’s original promise was unusually ambitious: develop advanced AI in a way that benefits humanity broadly. That kind of mission becomes harder to evaluate when the organization operates through complex corporate structures, billion-dollar investments, and product lines used by governments and enterprises. Good intentions are not a governance system.

Microsoft’s role adds another layer. A public company has fiduciary duties, competitive goals, and shareholder expectations. When such a company becomes deeply embedded in a mission-driven AI lab, outsiders naturally ask whether the mission is protected or diluted.

The governance questions now include:

- Who decides when commercial scale conflicts with safety?

- How much independence does OpenAI’s nonprofit oversight truly retain?

- What safeguards prevent investor influence from shaping model deployment?

- How should courts value charitable purpose in frontier AI?

- Can public-benefit structures satisfy both markets and society?

- What transparency is owed when AI systems become infrastructure?

What This Means for Windows and Copilot

For Microsoft’s Windows ecosystem, the partnership change could be healthy. It forces Copilot to stand on product quality rather than privileged OpenAI access alone. That should push Microsoft toward better integration, faster local AI features, clearer privacy controls, and more useful enterprise administration.

Copilot must become more than a chatbot

Windows users have already seen Microsoft move from cloud-only assistant experiences toward hybrid AI, local models, and device-level capabilities. As AI PCs mature, Microsoft will need to decide which features run locally, which run through Azure, and which use partner models. The OpenAI amendment gives Microsoft room to diversify that stack.

Copilot’s real advantage is not merely the model behind a text box. It is access to files, apps, calendars, Teams meetings, developer repositories, security signals, and device context. If Microsoft handles that responsibly, Copilot can remain compelling even when OpenAI models are available elsewhere.

Windows and Copilot priorities should include:

- Clear user controls over data access

- Transparent distinction between local and cloud processing

- Faster on-device AI for common tasks

- Better integration with Microsoft 365 and Windows settings

- Support for multiple model providers where appropriate

- Enterprise-grade auditing for AI actions

The Revenue Share Shift

The financial changes may be as important as the cloud changes. Ending Microsoft’s revenue-share payments to OpenAI simplifies the economics and removes a peculiar arrangement in which the investor, infrastructure provider, and product partner also owed revenue back to the AI lab. OpenAI still pays Microsoft through 2030, but now with a cap.

Why the cap matters

A capped payment structure gives OpenAI more financial predictability. That matters for investors who want to model future margins, especially if OpenAI is preparing for eventual public-market scrutiny. Uncapped or open-ended obligations are difficult to explain when a company is spending heavily on compute and still scaling toward durable profitability.

For Microsoft, ending its own payments to OpenAI may improve the economics of Copilot and other AI products. It also reduces the perception that every success built on OpenAI IP creates a complicated transfer back to the lab. That could make internal product planning cleaner.

The financial reset likely serves several purposes:

- Simplifies Microsoft’s AI product economics

- Makes OpenAI’s future obligations more predictable

- Improves investor readability ahead of possible public-market steps

- Reduces contractual friction around new products

- Separates cloud payments from broader strategic upside

- Helps both companies explain the partnership to regulators
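
To see why a total cap makes obligations easier to model, consider a toy calculation of a fixed-percentage payment that stops once a cumulative ceiling is reached. This is only a sketch: the article does not disclose the actual percentage or cap, so the `rate`, `total_cap`, and revenue figures below are invented for illustration, and the `CappedRevenueShare` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CappedRevenueShare:
    """Hypothetical model: a fixed share of revenue, paid until a total cap is hit."""
    rate: float               # fraction of revenue paid each period (assumed)
    total_cap: float          # maximum cumulative payment (assumed)
    paid_so_far: float = 0.0

    def payment_for(self, revenue: float) -> float:
        """Return the payment owed on `revenue`, limited to what remains under the cap."""
        remaining = max(self.total_cap - self.paid_so_far, 0.0)
        payment = min(revenue * self.rate, remaining)
        self.paid_so_far += payment
        return payment


# Illustrative numbers only, in arbitrary units.
share = CappedRevenueShare(rate=0.25, total_cap=100.0)
payments = [share.payment_for(r) for r in (100.0, 200.0, 400.0)]
print(payments)  # [25.0, 50.0, 25.0]
```

Without the cap, the third period in this example would owe 100.0 rather than 25.0. Under the cap, the worst-case total is known in advance however fast revenue grows, which is the predictability the section describes.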

Strengths and Opportunities

The amended partnership gives both companies a chance to mature beyond the first phase of the generative AI boom. Microsoft retains access to a leading AI platform while reducing concentration risk, and OpenAI gains room to become a broader infrastructure-neutral provider. If executed well, this could create a healthier AI market with more customer choice and fewer single-vendor bottlenecks.

Opportunity checklist

- OpenAI can scale faster by tapping multiple cloud providers

- Microsoft can strengthen Azure by competing on quality rather than exclusivity

- Enterprise customers gain more architectural freedom

- Developers may get lower-latency and more flexible API deployments

- Regulators may view the AI cloud market as less closed

- Copilot can evolve into a model-agnostic productivity layer

- The companies can reduce friction before deeper courtroom scrutiny

Risks and Concerns

The risks are just as significant. Multi-cloud freedom can produce fragmentation, inconsistent performance, confusing procurement, and new security questions. The legal timing also means every contractual change will be interpreted through the lens of the Musk trial, whether the companies want that or not.

Concern checklist

- The amendment may be portrayed as a litigation-driven repositioning

- Azure could lose high-value OpenAI workloads over time

- OpenAI may face operational complexity across too many infrastructure partners

- Customers may struggle with governance across multi-cloud AI deployments

- Copilot differentiation may weaken if OpenAI access becomes ubiquitous

- Revenue-share caps may raise questions about long-term margin pressure

- The broader mission debate around OpenAI remains unresolved

Looking Ahead

The next phase will unfold on two tracks: commercial execution and courtroom testimony. On the commercial side, watch how quickly OpenAI announces deeper deployments with other cloud providers and whether Azure continues to receive the earliest or best-supported product launches. On the legal side, watch whether Musk’s team uses the amendment as evidence of prior overreach or whether Microsoft and OpenAI successfully frame it as normal evolution in a fast-moving market.

The signals to monitor

- New OpenAI cloud announcements will show whether the amendment is symbolic or immediately operational.

- Azure OpenAI feature timing will reveal whether Microsoft’s first-launch advantage remains meaningful.

- Copilot model diversity will indicate how aggressively Microsoft is reducing dependence on OpenAI.

- Trial evidence and testimony may expose details about influence, governance, and internal negotiations.

- Enterprise procurement changes will show whether customers treat multi-cloud OpenAI as a real buying advantage.

Microsoft and OpenAI are not ending their partnership; they are trying to make it survivable for the next decade of AI competition. The first era was defined by exclusivity, urgency, and the shock of ChatGPT’s rise. The next will be defined by choice, governance, infrastructure scale, and whether the companies can convince courts, customers, and the public that the most powerful AI systems are being built with enough independence and accountability to match their influence.

Source: GeekWire, “Microsoft and OpenAI revamp partnership, with trial in Elon Musk suit set to begin”