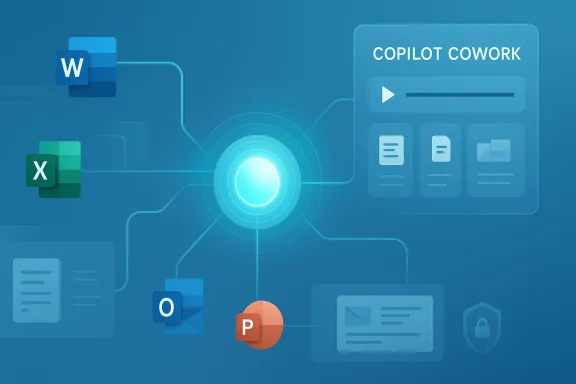

Microsoft’s Copilot strategy has crossed a meaningful threshold: the company is no longer positioning its assistant as a tool that merely drafts, summarizes, or answers questions, but as a long-running execution layer for enterprise work. The new Copilot Cowork preview, built in close collaboration with Anthropic, is designed to handle multi-step tasks that unfold over time, across Microsoft 365 apps and data sources, with visible progress and enterprise controls. Microsoft says the feature is being tested with a limited set of customers now and will expand through the Frontier program during March 2026, making this one of the clearest signs yet that the productivity suite is evolving into an agentic operating layer rather than a chat interface.

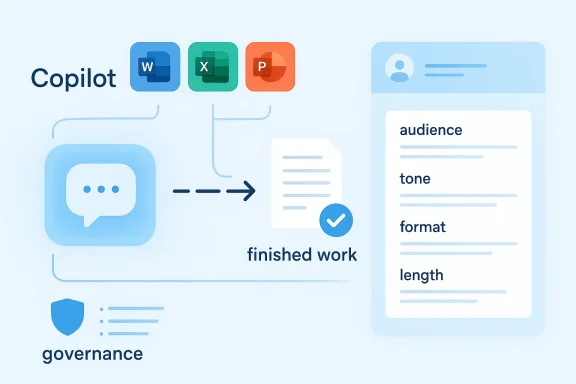

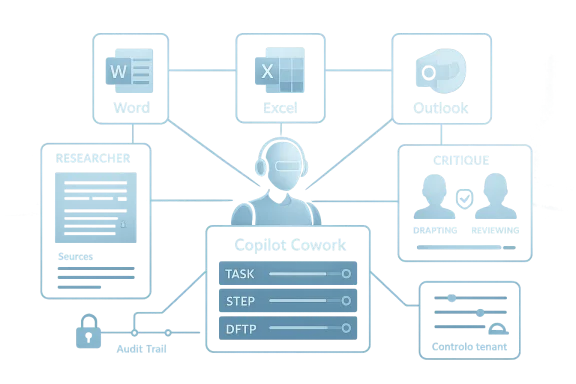

The second thing to watch is how Microsoft balances model diversity with platform coherence. The more models and agents it supports, the more critical orchestration becomes. Enterprises will want a simple answer to a hard question: not just which model is used, but who governs the agent, how it is logged, and what happens when it makes a mistake.

Source: Mix Vale https://www.mixvale.com.br/2026/03/...technology-to-automate-business-tasks-en/amp/

Background

Microsoft’s push into AI-assisted productivity began with a familiar promise: make Word, Excel, Outlook, Teams, and PowerPoint faster by embedding generative models into the flow of work. Early Copilot experiences were, in effect, very capable accelerators for drafting and summarizing, but they still required users to orchestrate the workflow manually. That meant the human remained the conductor, and the model stayed closer to a responsive assistant than an active participant. Over time, Microsoft layered in more reasoning capabilities and more integration across the Microsoft 365 ecosystem, laying the groundwork for a broader move toward agents.

The shift to agentic work has been visible in stages. Microsoft has already experimented with reasoning agents such as Researcher and Analyst, and it has been widening model choice inside Copilot surfaces by adding Anthropic’s Claude family to selected experiences. That earlier step matters because it signaled a broader architectural change: Microsoft was willing to move away from a single-model worldview and toward a managed, multi-model platform. Copilot Cowork is the next logical step in that progression, because it is not just about model diversity; it is about granting the system permission to carry work forward over time.

At the center of the announcement is the idea that AI should not merely answer one prompt at a time. Instead, it should understand a task, break it down, use the right sources, and continue working until it produces something usable. Microsoft describes Cowork as a way to handle tasks that can run for minutes or hours, and the company emphasizes that progress can be reviewed, guided, or stopped along the way. That framing is important because it addresses one of the biggest obstacles to enterprise AI adoption: trust is not just about accuracy, but about control, observability, and governance.

This launch also lands in a broader commercial context. Microsoft has been steadily packaging Copilot as a core enterprise platform, and the company’s recent messaging makes clear that it sees AI as a durable operating model for knowledge work, not a bolt-on feature. In parallel, it has been investing in security, identity, governance, and agent management to make enterprise customers more comfortable with autonomous or semi-autonomous systems. Copilot Cowork is therefore not simply a product demo; it is a statement about where Microsoft believes the next generation of workplace software is headed.

What Microsoft Actually Announced

The headline feature is Copilot Cowork, now in research preview. Microsoft says it is being developed closely with Anthropic and built on the technology that powers Claude Cowork, bringing that agentic capability into Microsoft 365 Copilot for long-running, multi-step work. In plain terms, this means the system is not limited to a single response cycle; it can reason through a task, act across tools, and continue until the job is done or the user intervenes.

The company is also tying the launch to the Frontier program, which serves as its access path for experimental enterprise AI features. Microsoft says Cowork will be available through Frontier in March 2026 after a limited research preview with selected customers. That phased release is consistent with how Microsoft has handled other advanced Copilot capabilities: test in controlled environments first, gather feedback, and only then widen access.

Why the research preview matters

A research preview is not the same thing as broad commercial availability. It signals that Microsoft believes the capability is promising, but still needs hardening for real-world use. That distinction matters in enterprise software, where a flashy demo is easy and dependable execution under governance is much harder.

The use of a research preview also tells buyers something subtle but important: Microsoft wants early adopters, but it is not yet promising that every workflow will be safe, optimized, or repeatable. In other words, this is the beginning of a product category, not the end state.

The practical implication is that companies should treat Cowork as a sandbox for workflow design rather than a finished replacement for human oversight. That is a sensible posture for a feature that is expected to operate over long durations and across sensitive data sources.

Among the key claims Microsoft makes are that Cowork can work across documents, email, calendars, and other Microsoft 365 data, and that it can remain active while a user does other work. That is a significant step beyond generating a document draft or summarizing a meeting transcript, because it starts to resemble an operational colleague that keeps a project moving. Microsoft’s own messaging frames this as a move from assistance to execution, and that distinction is the core of the announcement.

- Research preview first, broad rollout later

- Anthropic technology integrated into Microsoft 365 Copilot

- Long-running tasks across multiple apps and data sources

- Human review and interruption built into the workflow

- Frontier program access for selected customers

The Anthropic Partnership

One of the most notable parts of the announcement is the collaboration with Anthropic. Microsoft says it is bringing the technology that powers Claude Cowork into Microsoft 365 Copilot, while also continuing to offer Claude in mainline Copilot Chat through the Frontier program. That makes Microsoft’s AI stack look less like a locked garden and more like a model-diverse platform that can select the best engine for the task.

This is strategically important because Anthropic has developed a strong reputation around reasoning-heavy enterprise use cases. By incorporating that capability into Microsoft 365, Microsoft can claim that it is delivering not just broad functionality, but a reasoning layer optimized for long-horizon tasks. The partnership also reflects a pragmatic reality: in enterprise AI, the best model for a job may not be the one built in-house. That is a notable change in tone for a company historically associated with deep platform control.

Model choice as a competitive weapon

Microsoft’s willingness to mix OpenAI and Anthropic models is more than a technical detail. It is a competitive signal to enterprises that the platform can adapt to different workloads without forcing a single-model dependency. That flexibility could matter to buyers who worry about model drift, vendor concentration, or performance gaps between use cases.

It also raises the stakes for Microsoft’s own orchestration layer. If the platform can route work to different models intelligently, then the value shifts upward into governance, routing, and policy enforcement rather than raw model identity.

The challenge, of course, is integration consistency. A multi-model platform is powerful, but it can also become harder to explain, harder to debug, and harder to govern if the boundaries are not cleanly defined.

Microsoft’s public materials now frame Anthropic not as a side experiment, but as part of a broader enterprise strategy that combines intelligence and trust. That phrasing is deliberate. Microsoft is trying to reassure customers that model diversity will not weaken security posture, and that enterprise controls will remain in place even as the system becomes more autonomous. For large organizations, that reassurance may be as important as the feature set itself.

How Copilot Cowork Changes the Workflow Model

The biggest conceptual change is that Copilot Cowork is designed to manage work over time, not just generate output in one shot. Microsoft says the system can break down complex requests into steps, reason across tools and files, and carry work forward with visible progress. That is a direct challenge to the old software assumption that a user must explicitly trigger each micro-task.

In practical terms, this could change how teams handle recurring business processes. Meeting prep, market research, spreadsheet creation, status updates, and cross-functional report assembly all become more amenable to delegation if the system can hold context and preserve intent across days or hours. The result is not a full replacement for human work, but a reduction in the amount of repetitive coordination humans must perform.

From chat assistant to execution agent

The step from chat to agent is bigger than it sounds. A chat assistant is reactive: it waits for a prompt, responds, and stops. An execution agent is proactive: it can plan, continue, and potentially notify the user only when something meaningful changes. That shift creates both value and risk, because the system becomes more useful precisely when it becomes more independent.

This also changes the psychological contract inside the workplace. Employees are no longer just asking an AI for help; they are assigning it work and expecting it to remember the assignment. That is a much heavier trust burden.

The commercial opportunity is obvious. If a system can reliably handle boring but time-consuming work, enterprises may justify higher Copilot adoption, higher seat attachment, and deeper integration into core processes. But the more the system is allowed to act, the more companies will demand auditability and rollback controls.

Microsoft’s emphasis on visible progress, steering, and stop controls is therefore not cosmetic. It is the mechanism that makes long-running automation palatable to enterprises that cannot afford black-box behavior. A system that can be paused or corrected is more likely to survive in regulated and high-stakes environments than one that simply “does the task” in the background.

- Handles multi-step tasks instead of isolated prompts

- Works across apps and files, not just one interface

- Keeps state over time for longer workflows

- Exposes progress so users can intervene

- Targets routine coordination work, not just content generation
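The review-and-stop pattern described above can be illustrated with a small sketch. This is not Microsoft's implementation or any Copilot API; the function `run_with_checkpoints`, the `review` callback, and the stubbed meeting-prep steps are all hypothetical names chosen for illustration. The point is only to show how a multi-step task might expose progress after every step and let a human halt it.

```python
from typing import Callable, List, Tuple

# A step is a (name, action) pair; both are hypothetical stand-ins.
Step = Tuple[str, Callable[[], str]]


def run_with_checkpoints(
    steps: List[Step],
    review: Callable[[List[str]], bool],
) -> Tuple[str, List[str]]:
    """Run steps in order, exposing a progress log after each one.

    `review` stands in for the human: it receives the log so far and
    returns False to stop the task before the next step starts.
    """
    log: List[str] = []
    for index, (name, action) in enumerate(steps, start=1):
        output = action()
        log.append(f"{index}/{len(steps)} {name}: {output}")
        if not review(log):
            log.append("stopped by reviewer")
            return "stopped", log
    return "done", log


# Hypothetical meeting-prep workflow; each step is a stub.
steps: List[Step] = [
    ("collect notes", lambda: "3 prior notes found"),
    ("scan email", lambda: "2 relevant threads"),
    ("draft agenda", lambda: "agenda drafted"),
]

# The reviewer approves after the first step and stops after the second.
status, log = run_with_checkpoints(steps, review=lambda entries: len(entries) < 2)
print(status)
for entry in log:
    print(entry)
```

In this sketch the third step never runs, and the log records both the completed steps and the stop itself, which is the kind of trace an auditor would want from a long-running task.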

Enterprise Use Cases Microsoft Is Targeting

Microsoft is clearly aiming Copilot Cowork at knowledge work that is repetitive, cross-functional, and expensive in human time. The most obvious examples are executive meeting prep, project tracking, market research, and status reporting. These tasks are attractive because they involve synthesizing information from many places, which is exactly where a well-governed agent can add value.

Meeting preparation is a good illustration. An executive assistant or operations lead often spends hours collecting prior notes, scanning email threads, verifying attendee availability, and assembling documents. Cowork is intended to compress that process into a more continuous workflow, gathering context from Microsoft 365 and producing a draft agenda or briefing pack. That does not eliminate judgment, but it could shrink the amount of manual stitching required before a meeting begins.

Market research and synthesis

Microsoft also highlighted market research as a use case. That matters because research work can benefit from breadth, but it still requires careful verification. If Cowork can ingest news, public filings, and other business sources, then it could accelerate the first pass of competitive intelligence substantially.

The opportunity here is less about replacing analysts than about compressing the time to insight. A team that gets a strong initial synthesis faster can spend more time on interpretation, scenario planning, and decision-making.

Still, analysts will remain essential because synthesis is not the same as truth. AI can gather and organize, but humans will need to validate assumptions and check for missing context.

There is also a potential benefit for internal operations. HR, finance, procurement, and legal teams all deal with long-running administrative workflows that follow recognizable patterns. A permissioned agent that can monitor inputs and prepare outputs could reduce bottlenecks, especially in organizations already standardized on Microsoft 365. The enterprise value proposition is therefore not just productivity in the abstract, but workflow compression inside systems companies already use every day.

Enterprise vs consumer impact

For enterprise customers, the value is obvious: better throughput, fewer handoffs, and more automation inside governed systems. For consumers, the picture is less immediate because the feature is being framed around organizational data, permissions, and enterprise controls rather than personal productivity alone. That makes Copilot Cowork feel more like a workplace platform than a general-purpose consumer assistant.

- Executive meeting prep

- Market and competitive research

- Project coordination and status tracking

- Drafting internal reports and briefings

- Scheduling and calendar-based orchestration

Governance, Security, and Trust

If Copilot Cowork is going to matter in the enterprise, it will succeed or fail on governance as much as on model quality. Microsoft has made trust a central theme, saying that work is observable, actions are transparent, and progress can be reviewed, guided, or stopped. That design language is meant to reassure security teams that autonomy does not mean opacity.

Microsoft also says the system operates within its security, identity, and governance framework, and that enterprise documents are treated as protected knowledge. That is a critical point because enterprise buyers do not just ask whether an AI can do the work; they ask whether the AI can do the work without leaking data, violating policy, or creating unauthorized access pathways. The answer, at least on paper, is that Cowork is designed to respect existing permission structures rather than bypass them.

Why permissions matter more than prompts

The strongest AI models in the world are not useful for enterprise work if they cannot be safely constrained. In practice, the real product is often the control system around the model: identity, logging, policy enforcement, and data boundaries. Microsoft understands that better than most, and that is why its launch messaging leans so heavily on enterprise controls.

That does not eliminate risk, but it changes the nature of the risk from “Can the model answer?” to “Can the model act safely?” Those are very different questions.

For regulated sectors, that distinction will determine whether the product is deployable at all. Financial services, healthcare, government contractors, and legal organizations need more than capability; they need proof of compliance and evidence of control.

Microsoft’s broader agent strategy suggests that Copilot Cowork is part of a larger governance story, not an isolated product experiment. The company has also been talking about Agent 365 and security controls for observing and managing agents across an organization, which reinforces the idea that Microsoft sees governance as a platform layer. If that architecture holds up, it could become one of Microsoft’s biggest advantages over smaller AI vendors.

- Observable actions

- Reviewable progress

- Stop-and-steer controls

- Security and identity integration

- Permission-aware access to enterprise data

- Enterprise data not used to train public models
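To make "permission-aware access" concrete, here is a minimal sketch of the general idea: an agent acting for a user only sees documents that user could already open, and every allow or deny decision is logged. The `Document` type, the `allowed_users` field, and `gather_context` are hypothetical and do not correspond to any Microsoft 365 or Copilot API; real systems rely on the platform's own identity and access controls.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    """Hypothetical document with an explicit access list."""
    doc_id: str
    allowed_users: FrozenSet[str]
    text: str


def gather_context(
    user: str,
    documents: List[Document],
    audit_log: List[str],
) -> List[str]:
    """Return text only from documents the delegating user may read.

    Every decision is appended to `audit_log` so the access pattern
    can be reviewed afterwards.
    """
    context: List[str] = []
    for doc in documents:
        if user in doc.allowed_users:
            audit_log.append(f"allow {user} {doc.doc_id}")
            context.append(doc.text)
        else:
            audit_log.append(f"deny {user} {doc.doc_id}")
    return context


documents = [
    Document("agenda", frozenset({"alice", "bob"}), "Q3 agenda draft"),
    Document("payroll", frozenset({"carol"}), "Salary table"),
]
audit_log: List[str] = []
context = gather_context("alice", documents, audit_log)
print(context)
print(audit_log)
```

The design choice worth noting is that the filter runs on the user's existing permissions rather than on anything granted to the agent itself, so delegation does not widen access.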

Commercial Strategy and Pricing Power

Although Microsoft is presenting Cowork as a feature preview, the strategic direction is unmistakable: this is part of a larger monetization path for enterprise AI. The company has been expanding Copilot as a platform, and it has repeatedly signaled that advanced capabilities will be tied to premium commercial packaging. That means more of the value is likely to accrue through enterprise subscriptions, upgrades, and higher attachment to Microsoft 365.

The timing is important. Microsoft has been emphasizing Copilot adoption growth and the increasing scale of deployments, which suggests the company is trying to convert usage into sustained commercial momentum. By adding agentic work, Microsoft can justify a stronger value proposition to CFOs and CIOs: not just a helpful assistant, but a labor-amplifying platform. That framing is far more monetizable than a generic productivity chatbot.

Why premium bundles matter

A premium bundle works when customers believe the added value is tied to measurable output. If Cowork reduces the time spent on recurring workflows, then the business case becomes easier to sell. That is especially true for large organizations where small per-seat improvements can translate into major annual savings.

But premium pricing only works if the system earns trust fast enough. Enterprises are willing to pay for control and capability, not just novelty.

Microsoft appears to be engineering exactly that equation. By combining model diversity, agent management, and security architecture, it is trying to make agentic AI look less like an experiment and more like an enterprise tier of Microsoft 365.

That approach may also help Microsoft defend against competitors that want to position themselves as the “best AI layer” for work. If customers can get model choice, governance, and workflow execution inside one familiar suite, the switching cost becomes much higher. In that sense, Copilot Cowork is not only a product launch; it is a platform retention strategy.

- Supports Microsoft 365 monetization

- Strengthens seat expansion logic

- Creates a premium enterprise value story

- Raises switching costs

- Makes AI adoption easier to justify internally

Competitive Implications

Copilot Cowork raises the bar for rivals because it combines three difficult things at once: enterprise context, long-running task execution, and governance. Many AI vendors can do one of those well, but fewer can do all three inside a sprawling productivity suite with identity, email, calendar, files, and admin controls already in place. That integration moat is one of Microsoft’s most important assets.

The move also puts pressure on other productivity and collaboration platforms to show that they can offer more than copilots that generate text on demand. The next battleground is not whether AI can write a memo. It is whether AI can take responsibility for a workflow, preserve context, and finish the job without a human supervising every step. That is a much harder bar, but it is the bar Microsoft is now setting.

The broader market shift

The market is moving from prompt response to workflow execution. That transition favors companies that control the surrounding stack: identity, storage, collaboration, admin policy, and app integration. Microsoft fits that description almost perfectly, which is why this announcement matters beyond Microsoft alone.

It also means smaller AI-first firms may need to specialize more aggressively. General-purpose assistant functionality is becoming table stakes, while enterprise-grade orchestration is becoming the premium differentiator.

Anthropic benefits too. Even if the company is not the consumer-facing brand in this story, its technology is now being embedded into one of the largest productivity ecosystems in the world. That is a powerful distribution win, and it could strengthen Anthropic’s profile as the reasoning engine of choice for enterprise agentic work.

At the same time, Microsoft’s multi-model posture may put pressure on OpenAI to differentiate more clearly inside Copilot and adjacent services. A platform that can select among models based on task fit is harder to commoditize, but it also raises expectations across the stack. Competitors will need to respond not only with better models, but with better enterprise workflow design.

Sequential adoption path

- Pilot with low-risk, high-repeatability workflows.

- Validate permissions, logging, and output quality.

- Expand into meeting prep, research, and reporting.

- Add more sensitive internal processes only after controls mature.

- Measure time saved, error rates, and user override frequency.
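The measurement step at the end of that path can be made concrete with a small sketch. Everything here is an assumption for illustration: the `PilotTask` record shape, its field names, and the sample numbers are hypothetical and do not come from Microsoft or any Copilot API. The code shows only one plausible way a pilot team might tally time saved, error rate, and override frequency from its own review notes.

```python
from dataclasses import dataclass


@dataclass
class PilotTask:
    """One agent-run task observed during a pilot (hypothetical record shape)."""
    baseline_minutes: float  # estimated human time to do the task manually
    agent_minutes: float     # human time actually spent when the agent ran it
    had_error: bool          # a reviewer found a factual or procedural mistake
    was_overridden: bool     # the user stopped or redid the agent's work


def summarize_pilot(tasks: list[PilotTask]) -> dict[str, float]:
    """Aggregate the three pilot metrics over a list of task records."""
    if not tasks:
        raise ValueError("need at least one task record")
    n = len(tasks)
    return {
        # Total human minutes saved across all observed tasks.
        "minutes_saved": sum(t.baseline_minutes - t.agent_minutes for t in tasks),
        # Share of tasks where a reviewer flagged a mistake.
        "error_rate": sum(t.had_error for t in tasks) / n,
        # Share of tasks where the user overrode the agent.
        "override_rate": sum(t.was_overridden for t in tasks) / n,
    }


# Made-up sample data, purely illustrative.
sample = [
    PilotTask(60, 15, False, False),
    PilotTask(45, 20, True, False),
    PilotTask(30, 30, False, True),
    PilotTask(90, 25, False, False),
]
summary = summarize_pilot(sample)
print(summary)
```

Tracking the override rate alongside the error rate is a deliberate choice in this sketch: a low error rate means little if users are quietly redoing the agent's work.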

Strengths and Opportunities

Microsoft’s launch has real strategic force because it combines the strengths of the Microsoft 365 footprint with a more ambitious AI operating model. If the feature works as advertised, it could meaningfully improve productivity while giving enterprise customers a new reason to deepen their Copilot investment. The opportunity is not just automation; it is the creation of a new default way to move work through an organization.

- Huge installed base across Microsoft 365 environments

- Native access to email, calendar, documents, and collaboration data

- Enterprise-grade controls that make adoption more realistic

- Model diversity that reduces dependence on a single AI engine

- Clear productivity narrative for CIOs and business leaders

- Strong fit for repetitive, multi-step workflows

- Potential for workflow standardization across departments

Risks and Concerns

The promise of agentic AI is compelling, but the operational risks are equally real. Long-running systems can drift, misinterpret intent, overstep permissions, or produce outputs that look polished while hiding factual or procedural mistakes. In an enterprise setting, those errors can be expensive, which is why Microsoft’s safeguards will be tested as hard as the model itself.

- Hallucinated or incomplete outputs in long workflows

- Permission mistakes if governance is misconfigured

- Overreliance by employees who may trust polished results too quickly

- Audit complexity when tasks span multiple apps and days

- Security concerns around sensitive internal data

- Workflow fragility if agents depend on inconsistent source material

- User resistance if autonomy feels too intrusive

Looking Ahead

The most important thing to watch next is whether Microsoft can convert a promising research preview into a dependable enterprise capability. If Cowork proves itself in early customer environments, it could become a template for a wider class of Microsoft agents that handle increasingly complex business processes. If it stumbles, the company may still have the right strategic idea, but the timeline for broad adoption could stretch out considerably.

The second thing to watch is how Microsoft balances model diversity with platform coherence. The more models and agents it supports, the more critical orchestration becomes. Enterprises will want a simple answer to a hard question: not just which model is used, but who governs the agent, how it is logged, and what happens when it makes a mistake.

What to watch next

- Frontier rollout timing and customer eligibility

- Whether Microsoft expands Cowork beyond initial pilot use cases

- How strong the governance and audit tooling proves in practice

- Whether Copilot adoption accelerates after agentic features arrive

- How Anthropic’s role evolves across the Microsoft 365 stack

Source: Mix Vale https://www.mixvale.com.br/2026/03/...technology-to-automate-business-tasks-en/amp/
